Analyzing Noise Robustness of Cochleogram and Mel Spectrogram Features in Deep Learning Based Speaker Recognition

Abstract

1. Introduction

2. Related Works

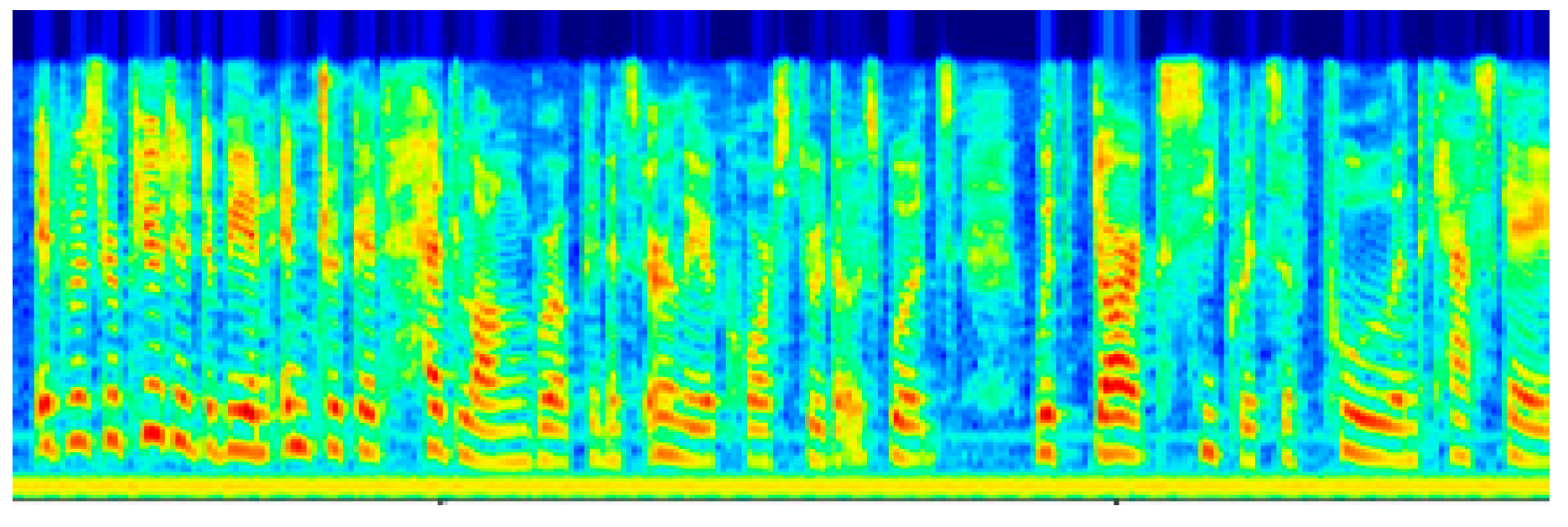

3. Cochleogram and Mel Spectrogram Generation

4. CNN Architectures

5. Experiment and Result

5.1. Dataset

5.2. Implementation Details and Training

5.3. Results

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Beigi, H. Speaker Recognition. In Encyclopedia of Cryptography, Security and Privacy; Springer: Berlin, Germany, 2021. [Google Scholar]

- Liu, J.; Chen, C.P.; Li, T.; Zuo, Y.; He, P. An overview of speaker recognition. Trends Comput. Sci. Inf. Technol. 2019, 4, 1–12. [Google Scholar]

- Nilu, S.; Khan, R.A.; Raj, S. Applications of Speaker Recognition; Elsevier: Arunachal Pradesh, India, 2012. [Google Scholar]

- Paulose, S.; Mathew, D.; Thomas, A. Performance Evaluation of Different Modeling Methods and Classifiers with MFCC and IHC Features for Speaker Recognition. Procedia Comput. Sci. 2017, 115, 55–62. [Google Scholar] [CrossRef]

- Tamazin, M.; Gouda, A.; Khedr, M. Enhanced Automatic Speech Recognition System Based on Enhancing Power-Normalized Cepstral Coefficients. Appl. Sci. 2019, 9, 2166. [Google Scholar] [CrossRef]

- Liang, H.; Sun, X.; Sun, Y.; Gao, Y. Text feature extraction based on deep learning: A review. EURASIP J. Wirel. Commun. Netw. 2017, 2017, 211. [Google Scholar] [CrossRef] [PubMed]

- Zhao, X.; Wang, D. Analyzing noise robustness of MFCC and GFCC features in speaker identification. In Proceedings of the 2013 IEEE International Conference on Acoustics, Speech and Signal Processing, Vancouver, BC, Canada, 26–31 May 2013. [Google Scholar]

- Gu, J.; Wang, Z.; Kuen, J.; Ma, L.; Shahroudy, A. Recent Advances in Convolutional Neural Networks. arXiv 2017, arXiv:1512.07108v6. [Google Scholar] [CrossRef]

- Desplanques, B.; Thienpondt, J.; Demuynck, K. ECAPA-TDNN: Emphasized Channel Attention, Propagation and Aggregation in TDNN Based Speaker Verification. arXiv 2020, arXiv:2005.07143v3. [Google Scholar]

- Koluguri, N.R.; Park, T.; Ginsburg, B. TitaNet: Neural model for speaker representation with 1D depth-wise separable convolutions and global context. arXiv 2021, arXiv:2110.04410v1. [Google Scholar]

- Shao, Y.; Wang, D. Robust speaker identification using auditory features and computational auditory scene analysis. In Proceedings of the 2008 IEEE International Conference on Acoustics, Speech and Signal Processing, Las Vegas, NV, USA, 31 March–4 April 2008. [Google Scholar]

- Zhao, X.; Wang, Y.; Wang, D. Robust speaker identification in noisy and reverberant conditions. In Proceedings of the 2014 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Florence, Italy, 4–9 May 2014. [Google Scholar]

- Jeevan, M.; Dhingra, A.; Hanmandlu, M.; Panigrahi, B. Robust Speaker Verification Using GFCC Based i-Vectors. Lect. Notes Electr. Eng. 2017, 395, 85–91. [Google Scholar]

- Mobiny, A.; Najarian, M. Text Independent Speaker Verification Using LSTM Networks. arXiv 2018, arXiv:1805.00604v3. [Google Scholar]

- Torfi, A.; Dawson, J.; Nasrabadi, N.M. Text-independent speaker verification using 3D convolutional neural network. In Proceedings of the 2018 IEEE International Conference on Multimedia and Expo (ICME), San Diego, CA, USA, 23–27 July 2018. [Google Scholar]

- Salvati, D.; Drioli, C.; Foresti, G.L. End-to-End Speaker Identification in Noisy and Reverberant Environments Using Raw Waveform Convolutional Neural Networks. Interspeech 2019, 2019, 4335–4339. [Google Scholar]

- Khdier, H.Y.; Jasim, W.M.; Aliesawi, S.A. Deep Learning Algorithms based Voiceprint Recognition System in Noisy Environment. J. Phys. 2021, 1804, 012042. [Google Scholar] [CrossRef]

- Bunrit, S.; Inkian, T.; Kerdprasop, N.; Kerdprasop, K. Text-Independent Speaker Identification Using Deep Learning Model of Convolution Neural Network. Int. J. Mach. Learn. Comput. 2019, 9, 143–148. [Google Scholar] [CrossRef]

- Meftah, A.H.; Mathkour, H.; Kerrache, S.; Alotaibi, Y.A. Speaker Identification in Different Emotional States in Arabic and English. IEEE Access 2020, 8, 60070–60083. [Google Scholar] [CrossRef]

- Nagrani, A.; Chung, J.S.; Xie, W.; Zisserman, A. Voxceleb: Large-scale speaker verification in the wild. Comput. Speech Lang. 2019, 60, 101027. [Google Scholar] [CrossRef]

- Ye, F.; Yang, J. A Deep Neural Network Model for Speaker Identification. Appl. Sci. 2021, 11, 3603. [Google Scholar] [CrossRef]

- Tjandra, A.; Sakti, S.; Neubig, G.; Toda, T.; Adriani, M.; Nakamura, S. Combination of two-dimensional cochleogram and spectrogram features for deep learning-based ASR. In Proceedings of the 2015 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), South Brisbane, QLD, Australia, 19–24 April 2015. [Google Scholar]

- Ahmed, S.; Mamun, N.; Hossain, M.A. Cochleagram Based Speaker Identification Using Noise Adapted CNN. In Proceedings of the 2021 5th International Conference on Electrical Engineering and Information & Communication Technology (ICEEICT), Dhaka, Bangladesh, 18–20 November 2021. [Google Scholar]

- Tabibi, S.; Kegel, A.; Lai, W.K.; Dillier, N. Investigating the use of a Gammatone filterbank for a cochlear implant coding strategy. J. Neurosci. Methods 2016, 277, 63–74. [Google Scholar] [CrossRef] [PubMed]

- Nagrani, A.; Chung, J.S.; Zisserman, A. VoxCeleb: A large-scale speaker identification dataset. arXiv 2018, arXiv:1706.08612v2. [Google Scholar]

- Ellis, D. Noise. 26 October 2022. Available online: https://www.ee.columbia.edu/~dpwe/sounds/noise/ (accessed on 9 July 2022).

- Salehghaffari, H. Speaker Verification using Convolutional Neural Networks. arXiv 2018, arXiv:1803.05427v2. [Google Scholar]

- Kim, S.-H.; Park, Y.-H. Adaptive Convolutional Neural Network for Text-Independent Speaker Recognition. Interspeech 2021, 2021, 66–70. [Google Scholar]

- Cai, W.; Chen, J.; Li, M. Exploring the Encoding Layer and Loss Function in End-to-End Speaker and Language Recognition System. arXiv 2018, arXiv:1804.05160v1. [Google Scholar]

| Layer No. | Layer | Description | Output Size |
|---|---|---|---|
| 1 | input (224 × 224 × 3) | Cochleogram or Mel Spectrogram | |
| 2 | Conv2D | f = 64, k = 3 × 3, p = same, a = ReLU | (None, 112, 112, 64) |
| 3 | BatchNormalization | - | (None, 112, 112, 64) |
| 4 | Max pooling | Pool size = 2 × 2, s = 2 × 2 | (None, 56, 56, 64) |
| 5 | Conv2D | f = 128, k = 3 × 3, p = same, a = ReLU | (None, 56, 56, 128) |
| 6 | BatchNormalization | - | (None, 56, 56, 128) |
| 7 | Max pooling | Pool size = 2 × 2, s = 2 × 2 | (None, 28, 28, 128) |
| 8 | Conv2D | f = 256, k = 3 × 3, p = same, a = ReLU | (None, 28, 28, 256) |
| 9 | BatchNormalization | - | (None, 28, 28, 256) |
| 10 | Max pooling | Pool size = 2 × 2, s = 2 × 2 | (None, 14, 14, 256) |
| 11 | Conv2D | f = 512, k = 3 × 3, p = same, a = ReLU | (None, 14, 14, 512) |
| 12 | BatchNormalization | - | (None, 14, 14, 512) |
| 13 | Max pooling | Pool size = 2 × 2, s = 2 × 2 | (None, 7, 7, 512) |
| 14 | Flatten | - | (None, 25088) |
| 15 | FC | units = 512, a = ReLU | (None, 512) |
| 16 | BatchNormalization | - | (None, 512) |
| 17 | Dropout | Probability = 0.5 | (None, 512) |
| | FC | | |
| | Softmax | | |
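
The output sizes in the layer table above (which appears to describe the basic 2D CNN) can be checked with a few lines of shape arithmetic. The sketch below is not the authors' code. It assumes a stride of 2 on the first Conv2D, because a "same"-padded convolution with stride 1 would keep the 224 × 224 input size rather than produce the listed 112 × 112. The helper names are hypothetical, and BatchNormalization is omitted because it does not change shapes.

```python
import math


def conv_same(size, stride=1):
    """Output length of a 'same'-padded convolution: ceil(size / stride)."""
    return math.ceil(size / stride)


def pool_valid(size, pool=2, stride=2):
    """Output length of an unpadded pooling layer."""
    return (size - pool) // stride + 1


def trace_basic_cnn(input_size=224, input_channels=3):
    # (kind, filters, stride). The stride of 2 on the first Conv2D is an
    # assumption inferred from the 224 -> 112 step listed in the table.
    layers = [
        ("conv", 64, 2), ("pool", None, 2),
        ("conv", 128, 1), ("pool", None, 2),
        ("conv", 256, 1), ("pool", None, 2),
        ("conv", 512, 1), ("pool", None, 2),
    ]
    size, channels, shapes = input_size, input_channels, []
    for kind, filters, stride in layers:
        if kind == "conv":
            size, channels = conv_same(size, stride), filters
        else:
            size = pool_valid(size, pool=2, stride=stride)
        shapes.append((size, size, channels))
    return shapes


shapes = trace_basic_cnn()
height, width, depth = shapes[-1]
flat = height * width * depth  # length of the Flatten output
print(shapes)
print(flat)
```

Under that stride assumption the trace ends at 7 × 7 × 512, and flattening gives 7 · 7 · 512 = 25,088, which matches the Flatten row.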

| Layer No. | Layer | Description | Output Size |
|---|---|---|---|
| 1 | input (224 × 224 × 3) | Cochleogram or Mel Spectrogram | |
| 2 | Conv2D | f = 64, k = 3 × 3, p = same, a = ReLU | (None, 224, 224, 64) |
| 3 | Conv2D | f = 64, k = 3 × 3, p = same, a = ReLU | (None, 224, 224, 64) |
| 4 | Maxpool | pool = 2 × 2, s = 2 × 2 | (None, 112, 112, 64) |
| 5 | Conv2D | f = 128, k = 3 × 3, p = same, a = ReLU | (None, 112, 112, 128) |
| 6 | Conv2D | f = 128, k = 3 × 3, p = same, a = ReLU | (None, 112, 112, 128) |
| 7 | Maxpool | pool = 2 × 2, s = 2 × 2 | (None, 56, 56, 128) |
| 8 | Conv2D | f = 256, k = 3 × 3, p = same, a = ReLU | (None, 56, 56, 256) |
| 9 | Conv2D | f = 256, k = 3 × 3, p = same, a = ReLU | (None, 56, 56, 256) |
| 10 | Conv2D | f = 256, k = 3 × 3, p = same, a = ReLU | (None, 56, 56, 256) |
| 11 | Maxpool | pool = 2 × 2, s = 2 × 2 | (None, 28, 28, 256) |
| 12 | Conv2D | f = 512, k = 3 × 3, p = same, a = ReLU | (None, 28, 28, 512) |
| 13 | Conv2D | f = 512, k = 3 × 3, p = same, a = ReLU | (None, 28, 28, 512) |
| 14 | Conv2D | f = 512, k = 3 × 3, p = same, a = ReLU | (None, 28, 28, 512) |
| 15 | Maxpool | pool = 2 × 2, s = 2 × 2 | (None, 14, 14, 512) |
| 16 | Conv2D | f = 512, k = 3 × 3, p = same, a = ReLU | (None, 14, 14, 512) |
| 17 | Conv2D | f = 512, k = 3 × 3, p = same, a = ReLU | (None, 14, 14, 512) |
| 18 | Conv2D | f = 512, k = 3 × 3, p = same, a = ReLU | (None, 14, 14, 512) |
| 19 | Maxpool | pool = 2 × 2, s = 2 × 2 | (None, 7, 7, 512) |
| | Flatten | | |
| | Fully Connected | | |
| | Fully Connected | | |
| | Fully Connected | | |
| | Softmax | | |
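
To give a sense of the size of the convolutional stack listed above (13 Conv2D layers, matching the VGG-16 layout), the sketch below counts its trainable weights. It assumes every convolution carries a bias term, as in the standard VGG-16 design, and it leaves out the fully connected layers because the table does not give their widths. The function and constant names are illustrative only.

```python
# Filter counts of the 13 Conv2D layers, in the order listed in the table.
VGG16_CONV_FILTERS = [64, 64, 128, 128, 256, 256, 256,
                      512, 512, 512, 512, 512, 512]


def conv_params(kernel, c_in, c_out):
    """Weights plus biases of one k x k convolution (bias assumed present)."""
    return kernel * kernel * c_in * c_out + c_out


def conv_stack_params(filters, in_channels=3, kernel=3):
    """Total parameter count of a chain of k x k convolutions."""
    total, c_in = 0, in_channels
    for c_out in filters:
        total += conv_params(kernel, c_in, c_out)
        c_in = c_out  # the next layer consumes this layer's output channels
    return total


total_conv_params = conv_stack_params(VGG16_CONV_FILTERS)
print(total_conv_params)
```

For a 3-channel input and 3 × 3 kernels this comes to roughly 14.7 million parameters in the convolutional part alone. Pooling layers add none.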

| Layer | Description | Output | Iteration |
|---|---|---|---|
| input (224 × 224 × 3) | Cochleogram or Mel Spectrogram | - | |
| ZeroPadding2D | Size = 3 × 3 | (None, 70, 70, 3) | 1× |
| Conv2D | f = 64, k = 7 × 7, strides = 2 × 2, a = ReLU | (None, 32, 32, 128) | |
| BatchNormalization | - | (None, 32, 32, 128) | |
| MaxPooling2D | Pool size = 3 × 3, strides = 2 × 2 | (None, 15, 15, 128) | |
| Conv2D | f = 64, k = 1 × 1, s = 1 × 1, p = valid, a = ReLU | (None, 15, 15, 64) | 3× |
| BatchNormalization | - | (None, 15, 15, 64) | |
| Conv2D | f = 64, k = 3 × 3, s = 1 × 1, p = same, a = ReLU | (None, 15, 15, 64) | |
| BatchNormalization | - | (None, 15, 15, 64) | |
| Conv2D | f = 256, k = 1 × 1, s = 1 × 1, p = valid, a = ReLU | (None, 15, 15, 256) | |
| BatchNormalization | - | (None, 15, 15, 256) | |
| Conv2D | f = 128, k = 1 × 1, s = 2 × 2, p = valid, a = ReLU | (None, 8, 8, 128) | 4× |
| BatchNormalization | - | (None, 8, 8, 128) | |
| Conv2D | f = 128, k = 3 × 3, s = 1 × 1, p = same, a = ReLU | (None, 8, 8, 128) | |
| BatchNormalization | - | (None, 8, 8, 128) | |
| Conv2D | f = 512, k = 1 × 1, s = 1 × 1, p = valid, a = ReLU | (None, 8, 8, 512) | |
| BatchNormalization | - | (None, 8, 8, 512) | |
| Conv2D | f = 256, k = 1 × 1, s = 2 × 2, p = valid, a = ReLU | (None, 4, 4, 256) | 6× |
| BatchNormalization | - | (None, 4, 4, 256) | |
| Conv2D | f = 256, k = 3 × 3, s = 1 × 1, p = same, a = ReLU | (None, 4, 4, 256) | |
| BatchNormalization | - | (None, 4, 4, 256) | |
| Conv2D | f = 1024, k = 1 × 1, s = 1 × 1, p = valid, a = ReLU | (None, 4, 4, 1024) | |
| BatchNormalization | - | (None, 4, 4, 1024) | |
| Conv2D | f = 512, k = 1 × 1, s = 2 × 2, p = valid, a = ReLU | (None, 2, 2, 512) | 3× |
| BatchNormalization | - | (None, 2, 2, 512) | |
| Conv2D | f = 512, k = 3 × 3, s = 1 × 1, p = same, a = ReLU | (None, 2, 2, 512) | |
| BatchNormalization | - | (None, 2, 2, 512) | |
| Conv2D | f = 2048, k = 1 × 1, s = 1 × 1, p = valid, a = ReLU | (None, 2, 2, 2048) | |
| BatchNormalization | - | (None, 2, 2, 2048) | |
| AveragePooling | | | |
| Flatten | | | |
| Fully Connected | | | |
| Softmax | | | |
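
The Iteration column above explains where the "50" in ResNet-50 comes from. The short sketch below does the count, using the usual convention that only the stem convolution, the three convolutions in each repeated bottleneck block, and the final fully connected layer are counted; shortcut projections, batch normalization, and pooling are not. The names are illustrative.

```python
# Repeat counts of the four bottleneck stages, read from the Iteration column.
STAGE_REPEATS = [3, 4, 6, 3]
CONVS_PER_BOTTLENECK = 3  # 1x1 reduce, 3x3, 1x1 expand


def weighted_layer_count(stage_repeats, convs_per_block=CONVS_PER_BOTTLENECK,
                         stem_convs=1, fc_layers=1):
    """Stem convolution + bottleneck convolutions + final classifier layer."""
    return stem_convs + convs_per_block * sum(stage_repeats) + fc_layers


depth = weighted_layer_count(STAGE_REPEATS)
print(depth)
```

The four stages contribute 3 · (3 + 4 + 6 + 3) = 48 convolutions. Adding the 7 × 7 stem convolution and the fully connected layer gives 50.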

| Task | Dataset Split | Number of Speakers | Number of Utterances |
|---|---|---|---|
| Identification | Dev | 1251 | 145,265 |
| Identification | Test | 1251 | 8251 |
| Verification | Dev | 1211 | 148,642 |
| Verification | Test | 40 | 4874 |

Accuracy (%) with additive noise at each SNR (dB) and without additive noise.

| Model Type | Feature Type | SNR = −5 | SNR = 0 | SNR = 5 | SNR = 10 | SNR = 15 | SNR = 20 | Without Additive Noise |
|---|---|---|---|---|---|---|---|---|
| Basic 2DCNN | Mel Spectrogram | 46.78 | 67.78 | 81.07 | 87.74 | 89.44 | 92.16 | 93.61 |
| Basic 2DCNN | Cochleogram | 73.56 | 87.16 | 91.98 | 93.66 | 94.65 | 95.47 | 95.62 |
| ResNet-50 | Mel Spectrogram | 48.89 | 69.91 | 83.22 | 89.91 | 91.63 | 94.37 | 96.97 |
| ResNet-50 | Cochleogram | 74.14 | 87.88 | 92.87 | 94.63 | 95.63 | 96.22 | 97.85 |
| VGG-16 | Mel Spectrogram | 51.96 | 70.82 | 85.30 | 91.64 | 92.81 | 95.77 | 96.93 |
| VGG-16 | Cochleogram | 75.77 | 89.38 | 93.94 | 95.96 | 96.79 | 97.32 | 98.04 |
| ECAPA-TDNN | Mel Spectrogram | 53.98 | 72.25 | 86.94 | 92.54 | 93.67 | 96.59 | 96.72 |
| ECAPA-TDNN | Cochleogram | 76.42 | 89.75 | 94.25 | 96.39 | 97.15 | 97.61 | 97.89 |
| TitaNet | Mel Spectrogram | 55.25 | 73.03 | 87.61 | 93.15 | 94.19 | 97.17 | 97.55 |
| TitaNet | Cochleogram | 78.37 | 89.97 | 94.51 | 96.55 | 97.34 | 97.81 | 98.02 |
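
The gap between the two features is easier to see as a difference per condition. The sketch below copies the accuracy values from the table above and subtracts the mel spectrogram accuracy from the cochleogram accuracy for each model and SNR. It is plain arithmetic on the reported numbers, not a re-run of any experiment. The variable names are illustrative, and "clean" stands for the column without additive noise.

```python
SNR_LABELS = ["-5", "0", "5", "10", "15", "20", "clean"]

# Accuracy (%) copied from the table: (mel spectrogram, cochleogram).
ACCURACY = {
    "Basic 2DCNN": ([46.78, 67.78, 81.07, 87.74, 89.44, 92.16, 93.61],
                    [73.56, 87.16, 91.98, 93.66, 94.65, 95.47, 95.62]),
    "ResNet-50": ([48.89, 69.91, 83.22, 89.91, 91.63, 94.37, 96.97],
                  [74.14, 87.88, 92.87, 94.63, 95.63, 96.22, 97.85]),
    "VGG-16": ([51.96, 70.82, 85.30, 91.64, 92.81, 95.77, 96.93],
               [75.77, 89.38, 93.94, 95.96, 96.79, 97.32, 98.04]),
    "ECAPA-TDNN": ([53.98, 72.25, 86.94, 92.54, 93.67, 96.59, 96.72],
                   [76.42, 89.75, 94.25, 96.39, 97.15, 97.61, 97.89]),
    "TitaNet": ([55.25, 73.03, 87.61, 93.15, 94.19, 97.17, 97.55],
                [78.37, 89.97, 94.51, 96.55, 97.34, 97.81, 98.02]),
}


def accuracy_gains(table):
    """Cochleogram minus mel spectrogram accuracy, in percentage points."""
    return {
        model: [round(coch - mel, 2) for mel, coch in zip(mel_row, coch_row)]
        for model, (mel_row, coch_row) in table.items()
    }


gains = accuracy_gains(ACCURACY)
for model, row in gains.items():
    print(model, dict(zip(SNR_LABELS, row)))
```

For every model the difference is largest at SNR = −5 dB (between about 22 and 27 percentage points) and falls to roughly 2 points or less without additive noise.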

EER (%) with additive noise at each SNR (dB) and without additive noise.

| Model Type | Feature Type | SNR = −5 | SNR = 0 | SNR = 5 | SNR = 10 | SNR = 15 | SNR = 20 | Without Additive Noise |
|---|---|---|---|---|---|---|---|---|
| Basic 2DCNN | Mel Spectrogram | 22.97 | 18.18 | 13.37 | 10.45 | 9.12 | 8.46 | 8.11 |
| Basic 2DCNN | Cochleogram | 17.83 | 14.59 | 10.82 | 8.72 | 7.19 | 6.54 | 6.47 |
| ResNet-50 | Mel Spectrogram | 19.12 | 16.05 | 12.23 | 10.04 | 8.70 | 6.15 | 5.64 |
| ResNet-50 | Cochleogram | 16.06 | 13.23 | 9.41 | 8.21 | 7.92 | 5.41 | 4.28 |
| VGG-16 | Mel Spectrogram | 18.83 | 15.71 | 11.92 | 9.74 | 8.37 | 5.86 | 5.28 |
| VGG-16 | Cochleogram | 15.42 | 12.86 | 9.10 | 7.95 | 6.61 | 4.55 | 4.16 |
| ECAPA-TDNN | Mel Spectrogram | 13.83 | 10.72 | 6.93 | 3.75 | 2.06 | 1.16 | 0.91 |
| ECAPA-TDNN | Cochleogram | 11.15 | 9.68 | 5.84 | 2.61 | 1.30 | 0.64 | 0.61 |
| TitaNet | Mel Spectrogram | 11.36 | 8.72 | 4.92 | 2.70 | 1.34 | 0.83 | 0.75 |
| TitaNet | Cochleogram | 10.82 | 7.64 | 3.83 | 2.53 | 1.24 | 0.62 | 0.54 |
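
The equal error rate (EER) reported above is the operating point at which the false acceptance rate equals the false rejection rate. The sketch below shows one simple way to estimate it from verification scores by sweeping a decision threshold. The scores are made-up toy values, not data from this study, and this excerpt does not describe how the authors score trials, so the function is only an illustration of the metric.

```python
def equal_error_rate(genuine_scores, impostor_scores):
    """Return (eer, threshold) at the threshold where FAR and FRR are closest.

    A trial is accepted when its score is >= the threshold.
    """
    candidates = sorted(set(genuine_scores) | set(impostor_scores))
    best_gap, best_eer, best_threshold = None, None, None
    for threshold in candidates:
        # False acceptance rate: impostor trials that pass the threshold.
        far = sum(s >= threshold for s in impostor_scores) / len(impostor_scores)
        # False rejection rate: genuine trials that fall below it.
        frr = sum(s < threshold for s in genuine_scores) / len(genuine_scores)
        gap = abs(far - frr)
        if best_gap is None or gap < best_gap:
            best_gap, best_eer, best_threshold = gap, (far + frr) / 2, threshold
    return best_eer, best_threshold


# Toy similarity scores (hypothetical): higher means "more likely same speaker".
genuine = [0.9, 0.8, 0.7, 0.4]
impostor = [0.6, 0.3, 0.2, 0.1]

eer, threshold = equal_error_rate(genuine, impostor)
print(f"EER = {eer:.2%} at threshold {threshold}")
```

With these toy scores, one of four impostor trials is accepted and one of four genuine trials is rejected at a threshold of 0.6, so both error rates are 25%. A lower EER therefore means fewer errors of both kinds at the balanced operating point.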

| Model Type | Feature Type | Dataset | Identification Accuracy (%) | Verification EER (%) |
|---|---|---|---|---|
| CNN-256 + Pair [27] | Mel Spectrogram | VoxCeleb1 | - | 10.5 |
| CNN [24] | Mel Spectrogram | VoxCeleb1 | 92.10 | 7.80 |
| Adaptive VGG-M [28] | Mel Spectrogram | VoxCeleb1 | 95.31 | 5.68 |
| CNN-LDE [29] | Mel Spectrogram | VoxCeleb1 | 95.70 | 4.56 |
| ECAPA-TDNN [9] | Mel Spectrogram | VoxCeleb1 | - | 0.87 |
| TitaNet [10] | Mel Spectrogram | VoxCeleb1 | - | 0.68 |
| 2DCNN (Ours) | Cochleogram | VoxCeleb1 | 95.62 | 5.33 |
| ResNet-50 (Ours) | Cochleogram | VoxCeleb1 | 97.85 | 4.06 |
| VGG-16 (Ours) | Cochleogram | VoxCeleb1 | 98.04 | 3.81 |
| ECAPA-TDNN (Ours) | Cochleogram | VoxCeleb1 | 97.89 | 0.61 |
| TitaNet (Ours) | Cochleogram | VoxCeleb1 | 98.02 | 0.54 |


© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Lambamo, W.; Srinivasagan, R.; Jifara, W. Analyzing Noise Robustness of Cochleogram and Mel Spectrogram Features in Deep Learning Based Speaker Recognition. Appl. Sci. 2023, 13, 569. https://doi.org/10.3390/app13010569
