A Unified Mixed Deep Neural Network for Fatigue Damage Detection in Components with Different Stress Concentrations

Abstract

1. Introduction

2. Experimental Method

2.1. Specimen Design

2.2. Fatigue Testing

2.3. Ultrasonic Signals

2.4. Binary Classification Using the Confocal Microscope

3. Data Analysis Methodology

3.1. Dataset Bifurcation Strategy, Training of Baseline DNNs, and Transductive Analysis

3.2. The Mixed Learning Approach

3.3. Performance Metrics

4. Results and Discussion

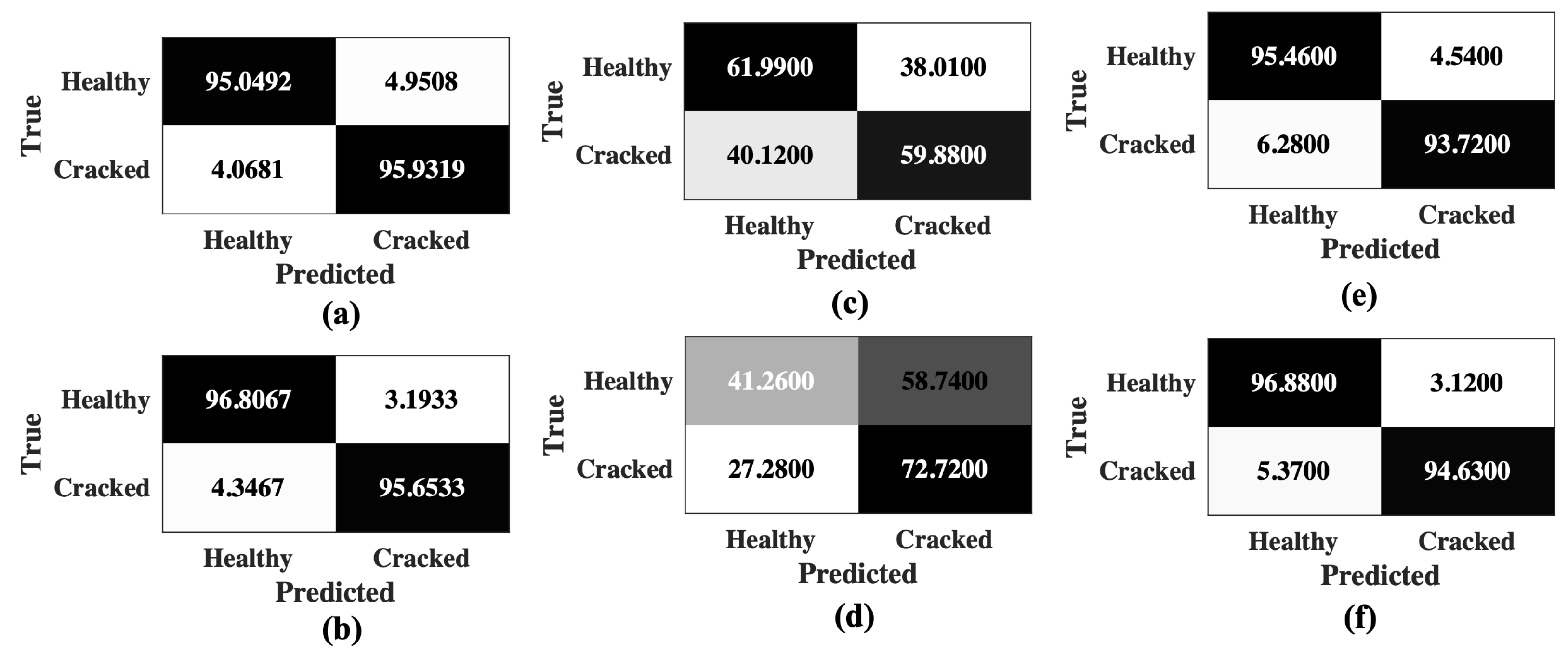

4.1. Performance of the Baseline DNNs and Transductive Analysis

4.2. Performance of the Mixed Learning Framework

5. Conclusions and Future Work

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Specimen Type | Total Data | Healthy Data | Cracked Data |
|---|---|---|---|
| | 448,939 | 204,571 | 244,368 |
| | 458,655 | 196,175 | 262,480 |
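Each row of the sample-count table is internally consistent: the healthy and cracked counts sum exactly to the total, and both specimen types lean slightly toward the cracked class. The short Python sketch below checks those sums and computes the class proportions from the transcribed counts. Because the specimen-type labels did not survive extraction, the rows are identified only by position, and the pooled line is an illustrative assumption about how a model trained on both specimen types together would see the data, not a description of the authors' pipeline.

```python
# Sample counts transcribed from the table above. The specimen-type labels
# were lost in extraction, so rows are identified only by position.
COUNTS = [
    # (total, healthy, cracked)
    (448_939, 204_571, 244_368),
    (458_655, 196_175, 262_480),
]


def class_fractions(total: int, healthy: int, cracked: int) -> tuple[float, float]:
    """Return (healthy_fraction, cracked_fraction) after checking that the
    two classes account for every sample in the row."""
    if healthy + cracked != total:
        raise ValueError(
            f"classes do not sum to total: {healthy} + {cracked} != {total}"
        )
    return healthy / total, cracked / total


if __name__ == "__main__":
    for i, row in enumerate(COUNTS, start=1):
        h, c = class_fractions(*row)
        print(f"row {i}: healthy {h:.1%}, cracked {c:.1%}")

    # Pooled counts over both rows (illustrative assumption: a single model
    # trained on data from both specimen types at once).
    total = sum(r[0] for r in COUNTS)
    healthy = sum(r[1] for r in COUNTS)
    cracked = sum(r[2] for r in COUNTS)
    h, c = class_fractions(total, healthy, cracked)
    print(f"pooled: {total:,} samples, healthy {h:.1%}, cracked {c:.1%}")
```

Run as a script, it prints one line per row plus a pooled line; the first row comes out near 46% healthy / 54% cracked and the second near 43% / 57%.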

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Dharmadhikari, S.; Raut, R.; Ray, A.; Basak, A. A Unified Mixed Deep Neural Network for Fatigue Damage Detection in Components with Different Stress Concentrations. Appl. Sci. 2023, 13, 1542. https://doi.org/10.3390/app13031542

Dharmadhikari S, Raut R, Ray A, Basak A. A Unified Mixed Deep Neural Network for Fatigue Damage Detection in Components with Different Stress Concentrations. Applied Sciences. 2023; 13(3):1542. https://doi.org/10.3390/app13031542

Chicago/Turabian Style: Dharmadhikari, Susheel, Riddhiman Raut, Asok Ray, and Amrita Basak. 2023. "A Unified Mixed Deep Neural Network for Fatigue Damage Detection in Components with Different Stress Concentrations" Applied Sciences 13, no. 3: 1542. https://doi.org/10.3390/app13031542

APA Style: Dharmadhikari, S., Raut, R., Ray, A., & Basak, A. (2023). A Unified Mixed Deep Neural Network for Fatigue Damage Detection in Components with Different Stress Concentrations. Applied Sciences, 13(3), 1542. https://doi.org/10.3390/app13031542