Image-Based Radical Identification in Chinese Characters

Abstract

Featured Application

Abstract

1. Introduction

2. Materials and Methods

2.1. Indexing Radical Identifier

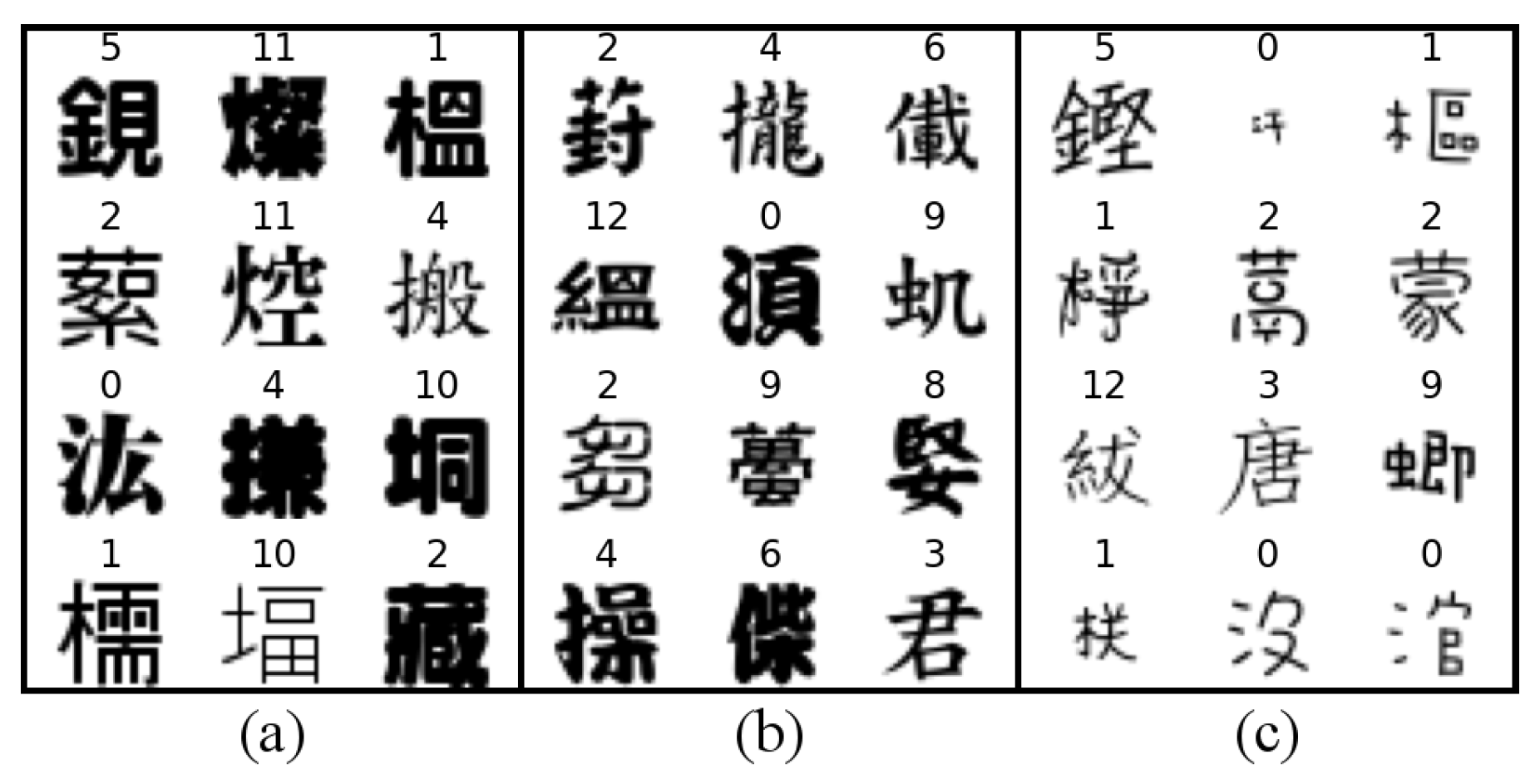

2.2. Training Dataset

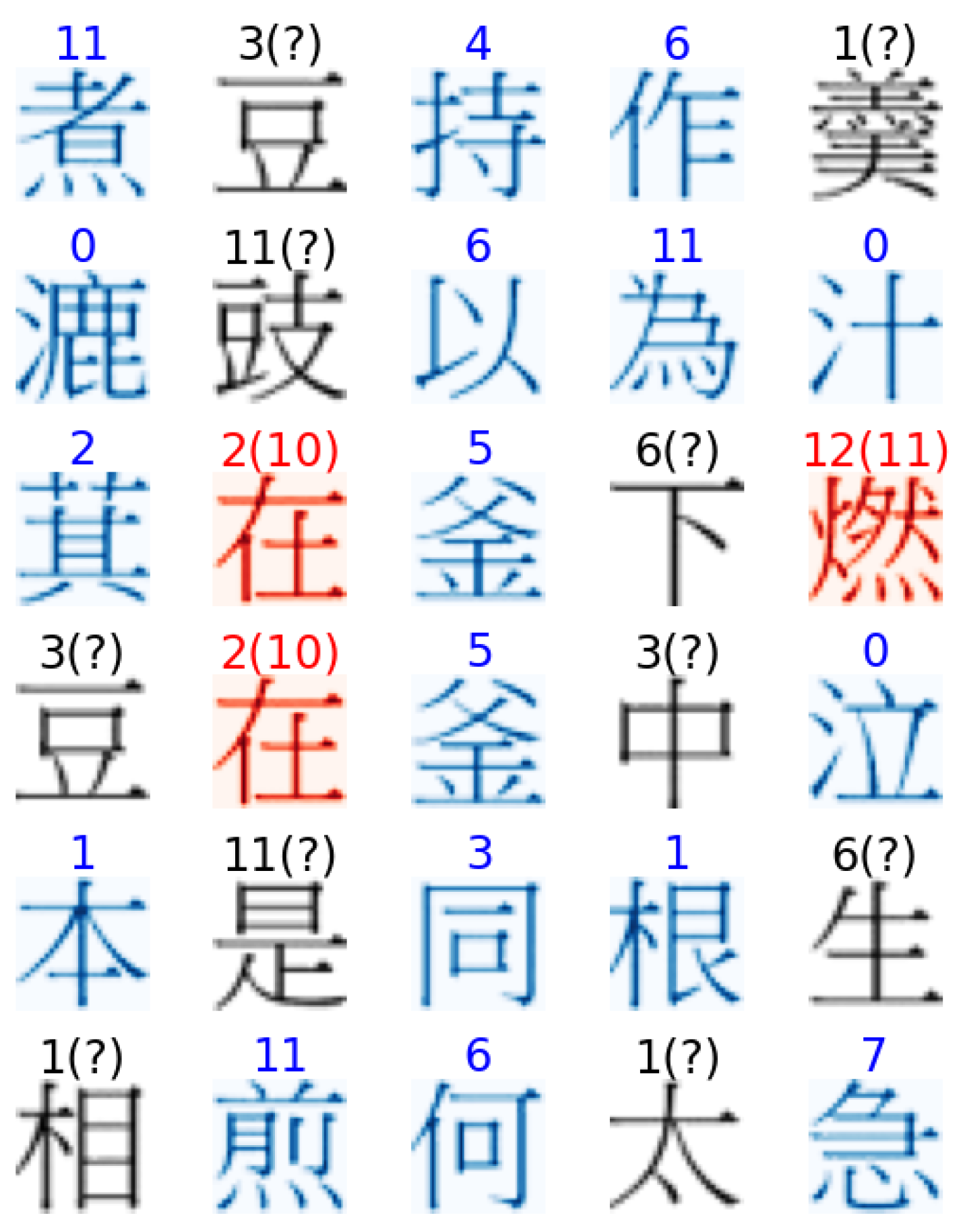

2.3. General Test

- (1)

- It must contain foreground pixels;

- (2)

- Its total area is within the 30% range of the average size of all loci ().

3. Results

3.1. Indexing Radical Identification Models

3.2. Model Validation for the 15 Indexing Radicals

3.3. Performance in General Test

4. Discussion

4.1. Performance of the Proposed Model

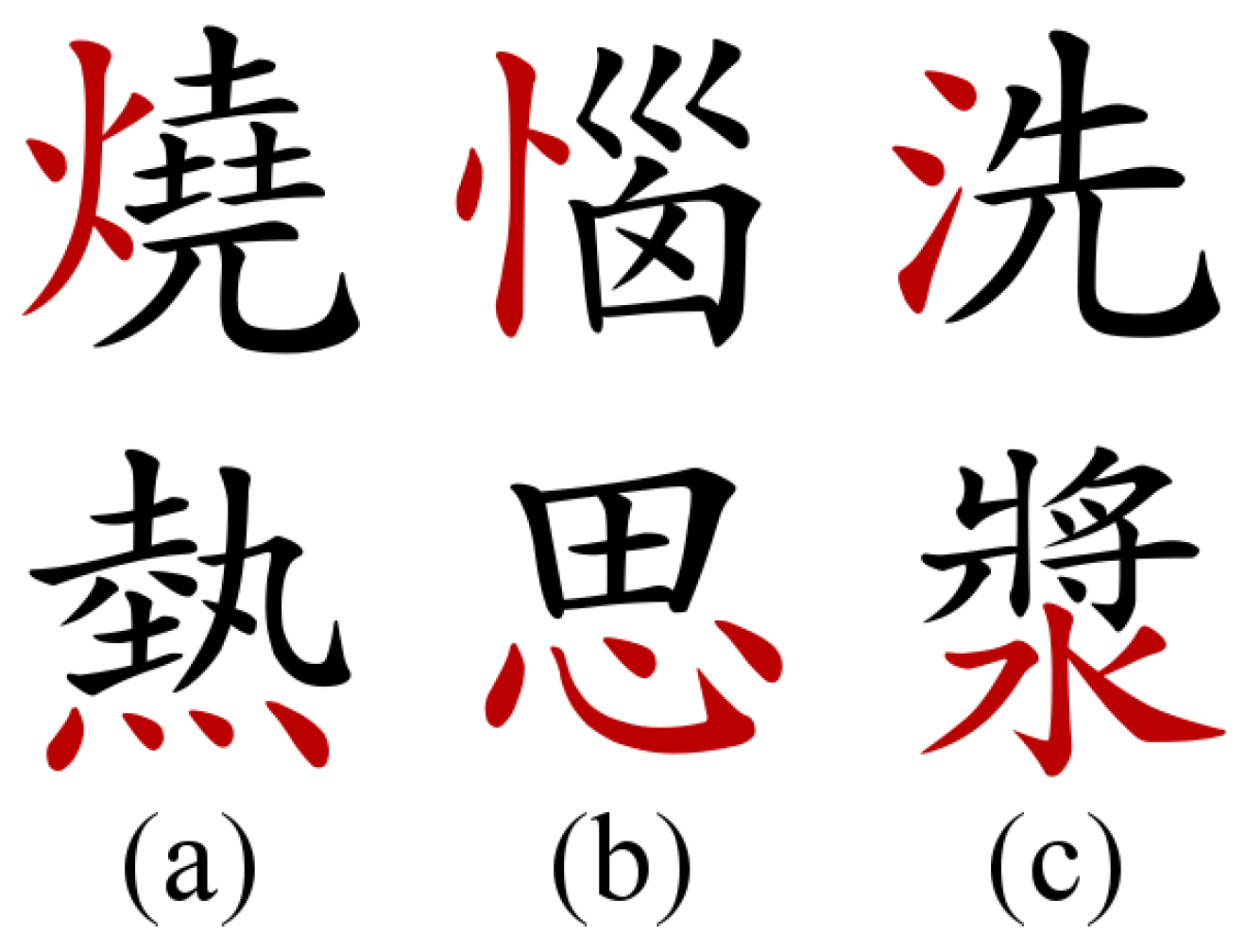

- (1)

- The bùjiàn component of the hanzi is an indexing radical itself. For instance, “沐” is formed by the indexing radical “water” (on the left side) and the bùjiàn “wood” (on the right side), which is also an existing indexing radical.

- (2)

- The indexing radical has multiple forms, but one is much rarer than the others among the entry set. For example, “person” (人) is more common in the standing form (e.g., 何) than the regular form (e.g., 企).

- (3)

- The same form of the indexing radical assumes different positions inside a hanzi, but one is more common than the other. For example, “fire” in its traditional form (火) is more common at the left side (e.g., 燒) than at the bottom of a hanzi (e.g., 災).

4.2. Related and Future Works

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| CJK | Chinese-Japanese-Korean |

| CNN | Convolutional neural networks |

| FN | False negative |

| FP | False positive |

| OCR | Optical character recognition |

| TP | True positive |

References

- Lindqvist, C. China: Empire of Living Symbols; Addison-Wesley: Reading, MA, USA, 1991. [Google Scholar]

- Yamasaki, T.; Hattori, T. Computer calligraphy—Brush written Kanji formation based on the brush-touch movement. In Proceedings of the 1996 IEEE International Conference on Systems, Man and Cybernetics. Information Intelligence and Systems (Cat. No. 96CH35929), Beijing, China, 14–17 October 1996; Volume 3, pp. 1736–1741. [Google Scholar] [CrossRef]

- Xu, S. Shuowen Jiezi [Discussing Writing and Explaining Characters]. (Eastern Han). 100–121. Available online: https://ctext.org/shuo-wen-jie-zi/zh (accessed on 11 May 2022).

- Zhan, H.; Cheng, H.J. The role of technology in teaching and learning Chinese characters. Int. J. Technol. Teach. Learn. 2014, 10, 147–162. [Google Scholar]

- Tao, H.; Tong, S.; Zhao, H.; Xu, T.; Jin, B.; Liu, Q. A radical-aware attention-based model for Chinese text classification. In Proceedings of the AAAI Conference on Artificial Intelligence, Honolulu, HI, USA, 27 January–1 February 2019; Volume 33, pp. 5125–5132. [Google Scholar] [CrossRef]

- Li, H.; Chen, H.C. Processing of radicals in Chinese character recognition. In Cognitive Processing of Chinese and Related Asian Languages; Chen, H.C., Ed.; Chinese University Press: Hong Kong, China, 1997; Chapter 8; pp. 141–160. [Google Scholar]

- Ho, C.S.H.; Ng, T.T.; Ng, W.K. A “radical” approach to reading development in Chinese: The role of semantic radicals and phonetic radicals. J. Lit. Res. 2003, 35, 849–878. [Google Scholar] [CrossRef]

- Tong, X.; Tong, X.; McBride, C. Radical sensitivity is the key to understanding Chinese character acquisition in children. Read. Writ. 2017, 30, 1251–1265. [Google Scholar] [CrossRef]

- Lü, C.; Koda, K.; Zhang, D.; Zhang, Y. Effects of semantic radical properties on character meaning extraction and inference among learners of Chinese as a foreign language. Writ. Syst. Res. 2015, 7, 169–185. [Google Scholar] [CrossRef]

- Shen, H.H.; Ke, C. Radical Awareness and Word Acquisition Among Nonnative Learners of Chinese. Mod. Lang. J. 2007, 91, 97–111. [Google Scholar] [CrossRef]

- Wong, Y.K. The role of radical awareness in Chinese-as-a-second-language learners’ Chinese character reading development. Lang. Aware. 2017, 26, 211–225. [Google Scholar] [CrossRef]

- Li, J.; Zhou, J. Chinese character structure analysis based on complex networks. Phys. A Stat. Mech. Appl. 2007, 380, 629–638. [Google Scholar] [CrossRef]

- Tong, X.; Yip, J.H.Y. Cracking the Chinese character: Radical sensitivity in learners of Chinese as a foreign language and its relationship to Chinese word reading. Read. Writ. 2015, 28, 159–181. [Google Scholar] [CrossRef]

- Chen, H.C.; Hsu, C.C.; Chang, L.Y.; Lin, Y.C.; Chang, K.E.; Sung, Y.T. Using a radical-derived character e-learning platform to increase learner knowledge of Chinese characters. Lang. Learn. Technol. 2013, 17, 89–106. [Google Scholar]

- Chen, M.P.; Wang, L.C.; Chen, H.J.; Chen, Y.C. Effects of type of multimedia strategy on learning of Chinese characters for non-native novices. Comput. Educ. 2014, 70, 41–52. [Google Scholar] [CrossRef]

- Liu, H.C. Using eye-tracking technology to explore the impact of instructional multimedia on CFL learners’ Chinese character recognition. Asia-Pac. Educ. Res. 2021, 30, 33–46. [Google Scholar] [CrossRef]

- Hu, H.; Du, X. Radical features for Chinese text classification. In Proceedings of the 2012 9th International Conference on Fuzzy Systems and Knowledge Discovery, Chongqing, China, 29–31 May 2012; pp. 720–724. [Google Scholar] [CrossRef]

- Tan, H.; Yang, Z.; Ning, J.; Ding, Z.; Liu, Q. Chinese Medical Named Entity Recognition Based on Chinese Character Radical Features and Pre-trained Language Models. In Proceedings of the 2021 International Conference on Asian Language Processing (IALP), Singapore, 11–13 December 2021; pp. 121–124. [Google Scholar] [CrossRef]

- The Unicode Consortium. The Unicode Standard; Technical Report Version 14.0.0; Unicode Consortium: Mountain View, CA, USA, 2021. [Google Scholar]

- Ke, Y.; Hagiwara, M. Radical-level Ideograph Encoder for RNN-based Sentiment Analysis of Chinese and Japanese. In Proceedings of the 9th Asian Conference on Machine Learning, Seoul, Republic of Korea, 15–17 November 2017; Volume 77, pp. 561–573. [Google Scholar]

- Huang, Y.; He, M.; Jin, L.; Wang, Y. RD-GAN: Few/Zero-shot Chinese character style transfer via radical decomposition and rendering. In Proceedings of the Computer Vision—ECCV 2020, Glasgow, UK, 23–28 August 2020; Vedaldi, A., Bischof, H., Brox, T., Frahm, J.M., Eds.; Springer International Publishing: Cham, Switzerland, 2020; pp. 156–172. [Google Scholar] [CrossRef]

- Xue, M.; Du, J.; Zhang, J.; Wang, Z.R.; Wang, B.; Ren, B. Radical Composition Network for Chinese Character Generation. In Proceedings of the International Conference on Document Analysis and Recognition, Lausanne, Switzerland; 5–10 September 2021; Springer: Berlin/Heidelberg, Germany, 2021; pp. 252–267. [Google Scholar] [CrossRef]

- Wang, T.; Xie, Z.; Li, Z.; Jin, L.; Chen, X. Radical aggregation network for few-shot offline handwritten Chinese character recognition. Pattern Recognit. Lett. 2019, 125, 821–827. [Google Scholar] [CrossRef]

- Zhang, J.; Du, J.; Dai, L. Radical analysis network for learning hierarchies of Chinese characters. Pattern Recognit. 2020, 103, 107305. [Google Scholar] [CrossRef]

- Yin, Y.; Zhang, W.; Hong, S.; Yang, J.; Xiong, J.; Gui, G. Deep learning-aided OCR techniques for Chinese uppercase characters in the application of Internet of Things. IEEE Access 2019, 7, 47043–47049. [Google Scholar] [CrossRef]

- Kim, C.; Kim, J.S.; Kim, U.J. A study on features for improving performance of Chinese OCR by machine learning. In Proceedings of the 2019 3rd High Performance Computing and Cluster Technologies Conference, Guangzhou, China, 22–24 June 2019; pp. 51–55. [Google Scholar] [CrossRef]

- Chollet, F.; Scott Zhu, Q.; Rahman, F.; Lee, T.; de Marmiesse, G.; Zabluda, O.; Jin, H.; Watson, M.; Chao, R.; Pumperla, M. Keras. 2015. Available online: https://keras.io (accessed on 10 March 2022).

- Tian, Y. zi2zi: Master Chinese Calligraphy with Conditional Adversarial Networks. 2017. Available online: https://kaonashi-tyc.github.io/2017/04/06/zi2zi.html (accessed on 10 March 2022).

- Yan, M.; Richter, E.M.; Shu, H.; Kliegl, R. Readers of Chinese extract semantic information from parafoveal words. Psychon. Bull. Rev. 2009, 16, 561–566. [Google Scholar] [CrossRef] [PubMed]

- Fawcett, T. An introduction to ROC analysis. Pattern Recognit. Lett. 2006, 27, 861–874. [Google Scholar] [CrossRef]

- Wu, Y.T.; Fujiwara, E.; Suzuki, C.K. Identification of the radical component from images of Chinese characters. In Proceedings of the 2022 35th SIBGRAPI Conference on Graphics, Patterns and Images (SIBGRAPI), Natal, Brazil, 24–27 October 2022; pp. 55–60. [Google Scholar] [CrossRef]

- Li, S.P.D.; Law, S.P.; Lau, K.Y.D.; Rapp, B. Functional orthographic units in Chinese character reading: Are there abstract radical identities? Psychon. Bull. Rev. 2021, 28, 610–623. [Google Scholar] [CrossRef] [PubMed]

- Zhang, C.; Liu, X. Feature extraction of ancient Chinese characters based on deep convolution neural network and big data analysis. Comput. Intell. Neurosci. 2021, 2021, 2491116. [Google Scholar] [CrossRef] [PubMed]

- Yalin, M.; Li, L.; Yichun, J.; Guodong, L. Research on denoising method of Chinese ancient character image based on Chinese character writing standard model. Sci. Rep. 2022, 12, 19795. [Google Scholar] [CrossRef]

| Radical | English Translation | Number of Hanzi | Class Label |

|---|---|---|---|

| 水 | Water | 1079 | 0 |

| 木 | Wood | 1016 | 1 |

| 艸 | Grass | 981 | 2 |

| 口 | Mouth | 756 | 3 |

| 手 | Hand | 740 | 4 |

| 金 | Gold, metal | 692 | 5 |

| 人 | Person | 645 | 6 |

| 心 | Heart | 581 | 7 |

| 女 | Girl | 477 | 8 |

| 虫 | Insect | 469 | 9 |

| 土 | Earth | 460 | 10 |

| 火 | Fire | 447 | 11 |

| 糸 | Thread | 423 | 12 |

| 言 | Word, to talk | 416 | 13 |

| 魚 | Fish | 395 | 14 |

| Font Category | Number of Samples |

|---|---|

| Regular | 140,423 |

| Brush | 220,642 |

| Handwriting | 93,738 |

| Validation Dataset | |||

|---|---|---|---|

| Model | Regular | Brush | Handwriting |

| Regular-Based | ∼98.6% | ∼69.4% | ∼30.9% |

| Brush-Based | ∼83.0% | ∼96.8% | ∼60.4% |

| Handwriting-Based | ∼41.8% | ∼71.7% | ∼91.8% |

| Poem | Total of Hanzi | Known Radical Hanzi |

|---|---|---|

| A | 30 | 20 |

| B | 35 | 23 |

| C | 80 | 36 |

| D | 28 | 16 |

| E | 28 | 13 |

| Poem | Only for Known Radical Hanzi | For All Hanzi |

|---|---|---|

| A | 85.0% | 68.0% |

| B | 100.0% | 79.3% |

| C | 83.3% | 51.7% |

| D | 75.0% | 54.5% |

| E | 84.6% | 53.7% |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content. |

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Wu, Y.T.; Fujiwara, E.; Suzuki, C.K. Image-Based Radical Identification in Chinese Characters. Appl. Sci. 2023, 13, 2163. https://doi.org/10.3390/app13042163

Wu YT, Fujiwara E, Suzuki CK. Image-Based Radical Identification in Chinese Characters. Applied Sciences. 2023; 13(4):2163. https://doi.org/10.3390/app13042163

Chicago/Turabian StyleWu, Yu Tzu, Eric Fujiwara, and Carlos Kenichi Suzuki. 2023. "Image-Based Radical Identification in Chinese Characters" Applied Sciences 13, no. 4: 2163. https://doi.org/10.3390/app13042163

APA StyleWu, Y. T., Fujiwara, E., & Suzuki, C. K. (2023). Image-Based Radical Identification in Chinese Characters. Applied Sciences, 13(4), 2163. https://doi.org/10.3390/app13042163