1. Introduction

The media appeal of table tennis, measured in television (TV) hours, appears to be declining, at least outside Asia. One reason is that the game is now so fast that spectators find it very hard to follow the ball [1,2]. Possible countermeasures to slow the game down include bigger balls or higher nets.

One intention of possible rule changes is to reduce the impact of spin on the game. Another goal is to reduce the speed of the balls to allow better visual tracking during the rallies [2]. Some rule changes, such as the use of a larger ball, a different counting system, stricter limits for rubbers, or new service rules, have already been implemented, and new modifications are under discussion [2]. For the players, all rule or technical changes have a strong impact on their techniques and strategies, usually requiring adaptations of their individual training programs. Therefore, players are rather hesitant to accept new rules.

The 40-mm ball played today is 2 mm larger and 0.2 g heavier than the 38-mm ball used before. Its larger cross-sectional area increases the air drag, which reduces the maximum velocities [3]. The mass of the larger ball is distributed further away from the center than in the 38-mm ball. This creates a larger moment of inertia and reduces the spin. The larger 40-mm ball results in a velocity and spin reduction of about 5% to 10% [4,5]. However, the larger ball had practically no impact on the characteristics of table tennis, because the players compensated for the effects of the size increase by exerting larger forces [4,6]. Because of the modified technique, the fitness of the individual player became more important. In modern table tennis, the forces for a stroke are created not only by the arms but also with support from the whole body. A stronger athletic body allows more pronounced use of the legs, producing the larger forces on the ball that are needed to compensate for the size increase. In addition, the wrist has to be used more effectively to produce spin. For the larger ball, using only the forearm is no longer sufficient for spin, as it was for the 38-mm ball. The need for larger forces amplifies possible technical mistakes, because the individual movement execution gets extended [7].

One obvious strategy for reducing the maximum velocity in table-tennis rallies is to increase the net height. However, such a change would have a severe impact on the characteristics of table tennis, because it would very directly limit fast spins, shots, and services. Therefore, this rule change has been avoided until now, and ball size has been the preferred corrective measure. Nevertheless, a scientific database on which to base such a decision is still missing.

Usually, an empirical approach is followed to study the effect of such changes on the players and the game. Rather short and limited tests with some players are evaluated [8]. These tests are biased, because the players use the technique optimized for the existing situation (ball size, ball weight, and net height), and the modifications needed for the new situation take too long to become automatic in training to be considered. In this work, the impact of larger balls or higher nets on table-tennis trajectories was studied using computer simulations. A database was created to quantify the influence of such changes. Modifications in technique, tactics, strength, and fitness were not considered in this analysis. For a huge number of initial conditions, the effect on successful strokes was studied. This delivers the maximum number of possible strokes for different conditions in terms of statistical distributions. It already represents the best possible adaptation to the changes, independent of what this would mean for the players in terms of changes in their training. Half a billion different initial conditions involving hitting location, initial spin, and velocities were analyzed to reach sufficient statistical significance.

In our previous work [9], the distribution functions of successful hits were empirically compared for different cases with respect to their variations for the different variables, summing up the distributions with respect to all other variables. In this work, a more rigorous statistical analysis of the database is presented to identify the most significant variables. The multi-dimensional distribution functions of these variables were then used to identify the major differences for the different cases, thereby avoiding a possible bias in the analysis caused by correlation. An obvious example of a direct correlation between variables is that between the starting velocity and the initial kinetic energy.

After a short discussion of the effects of larger balls and higher nets as measures to slow down table tennis, the forces acting on a moving ball are introduced. The very fast computer code solving the equation of motion, written for graphics processing units (GPUs) with the Compute Unified Device Architecture (CUDA) [10], is described, and a statistical analysis of trajectory distribution functions for different balls and net heights is presented. Finally, the results are summarized and discussed.

2. Materials and Methods

For a quantitative analysis of the effects of ball size and net height, a computational approach was followed. The basic element of the simulation was the solution of the equation of motion for table-tennis balls, which requires a mathematical description of the acting forces. The gravitational force of the earth and the aerodynamic forces determine the flight trajectory of a table-tennis ball. The gravitational force,

$$F_G = m g,$$

alone results in a parabolic trajectory. This force acts toward the center of the earth and depends on the mass m of the ball and the gravitational acceleration g (9.81 m/s²). The aerodynamic forces modify the simple parabola by air drag and lift. Air drag acts as a friction force against the direction of the movement of the ball. A simple example of this force is the push felt on a hand held out of the window of a moving car. A higher speed gives a stronger force acting against the direction of travel. The force also gets larger if the full hand, rather than only a part of it, is held out: it scales with the cross-sectional area. The mathematical expression is as follows:

$$F_D = \frac{1}{2} \rho A C_D v^2,$$

where ρ is the density of air, A is the cross-sectional area for a ball with radius r (A = πr²), v is the ball velocity, and CD is an air drag coefficient, which can be measured, e.g., in wind-tunnel experiments.

The second important aerodynamic force is the airlift. The so-called “Magnus effect”, named after its discoverer Heinrich Gustav Magnus (1802–1870), is the reason that a rotating ball experiences a deviation from its flight path. The Magnus effect is a surface effect, because a co-rotating air layer is formed at the surface of the spinning ball. The flying and spinning ball induces a pressure imbalance, because, on one side, the surface of the ball rotates with the airflow created by the movement of the ball through the air, while, on the other side, it rotates against it. On the counter-rotating side, the total velocity of the airflow is reduced, because the two velocities partly cancel each other. On the co-rotating side, a larger flow velocity is created, because the two velocities add up. Higher velocity in a flow means lower pressure, and the pressure difference between the two sides leads to the deviating Magnus force, mathematically expressed with an airlift coefficient CL as

$$F_L = \frac{1}{2} \rho A C_L v^2.$$

The airlift force acts perpendicular to the axis of rotation $\vec{\omega}$ and to the velocity $\vec{v}$. Air drag and lift coefficients of a rotating ball (Figure 1) as a function of the ratio of spinning velocity to translational velocity were implemented into the computer code as a fit of experimental data [11,12,13,14,15].
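The three forces can be combined into a single acceleration routine. The following is a minimal sketch, not the authors' code. It assumes the standard magnitudes ½ρA·C·v² for drag and lift, takes the lift direction along ω × v, uses an assumed air density of 1.2 kg/m³, and treats CD and CL as constants supplied by the caller instead of the fitted curves of Figure 1. The function name `acceleration` and its parameters are hypothetical.

```python
import numpy as np

RHO_AIR = 1.2   # air density in kg/m^3 (assumed typical value)
G = 9.81        # gravitational acceleration in m/s^2 (value from the text)


def acceleration(v, spin_axis, mass, radius, c_d, c_l):
    """Acceleration of a ball from gravity, air drag, and Magnus lift.

    v         : velocity vector in m/s
    spin_axis : unit vector along the axis of rotation
    c_d, c_l  : drag and lift coefficients (constants in this sketch; the
                paper uses fits depending on the spin-to-speed ratio)
    """
    v = np.asarray(v, dtype=float)
    speed = np.linalg.norm(v)
    gravity = np.array([0.0, 0.0, -mass * G])
    if speed == 0.0:
        return gravity / mass

    area = np.pi * radius**2
    q = 0.5 * RHO_AIR * area * speed**2        # common factor (1/2) rho A v^2

    drag = -q * c_d * v / speed                # opposes the direction of motion

    lift_dir = np.cross(spin_axis, v)          # perpendicular to axis and velocity
    norm = np.linalg.norm(lift_dir)
    lift = q * c_l * lift_dir / norm if norm > 0.0 else np.zeros(3)

    return (gravity + drag + lift) / mass
```

With the velocity along +x and the spin axis along +y (topspin in a right-handed frame with z pointing up), the lift term points downward, in line with the behavior described for topspin.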

During a topspin shot with forward rotation, the lift force acts downward; during a backspin with backward rotation, it acts upward.

Swirling balls, often quoted in soccer and volleyball, can be created when the ball is hit with a critical velocity that brings it into the regime of the inverse Magnus effect. This regime shows up in Figure 1 for low spinning velocities as a negative value of the airlift coefficient. It can also lead to swirling balls in table tennis, because the ball can change between the regimes of positive and negative airlift coefficients during its flight, resulting in swirling. However, for table-tennis balls, negative airlift coefficients exist only where the coefficient itself is already quite small. Therefore, the effect exists, but gives only deviations of some millimeters. The frequently quoted swirling balls with long pimples are, therefore, more a psychological effect than one due to physics: the pre-programmed movement of the player anticipates the flight path of a strongly rotating ball from a normal rubber sponge. The balls from long pimples with reduced rotation have a different flight path with less lift and fall down earlier, such that the player misses the ball and complains that the ball was swirling.

The computer code solves the equation of motion of table-tennis balls for given initial positions, velocities, and spins (see one example in Figure 2). An Euler solver was used, because its algorithmic simplicity allowed an easy transfer onto the GPU with CUDA.

The implicit assumption of the Verlet algorithm (with or without velocity calculation) is that the driving force is conservative, i.e., on a closed path, no work is done. For our system studied here, this was not the case, since the drag and the Magnus force both influence the trajectory depending on the actual velocity. If a particle on a closed path comes back to its origin, the drag will slow it down. Energy is not conserved. In the standard Verlet scheme, the second force evaluation depends only on the position, whereas the force on the table-tennis ball is velocity-dependent. Thus, valid options are the Euler and Runge–Kutta (RK) methods. The RK fourth-order method requires four force calculations compared to one for the Euler method. Each force evaluation calls a function to calculate the drag coefficient and another one for the lift coefficient, because these depend on the actual translational velocity. These are rational functions where the numerator and denominator are polynomials of the third order. This makes every force evaluation costly.

For a very long flight of 2 s, a test trajectory with the standard time step of the code (10⁻⁴ s) led to an end position even beyond the table. The final positions obtained with the Euler and RK4 methods differed by 0.115 mm. This was well below the precision of 1 mm we were looking for. However, the RK4 method was 4.37 times slower. Increasing the time step by a factor of five to compensate for the reduced calculation speed compared with the Euler method increased the error for the RK4 method beyond 1 mm, which we defined as our resolution limit.
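This kind of integrator comparison can be sketched in a few lines. The example below is an illustration under stated assumptions, not the code used in the study: drag and lift coefficients are fixed constants (0.5 and 0.2, chosen arbitrarily), the air density is assumed to be 1.2 kg/m³, the spin axis is fixed for pure topspin, and the time steps are coarser than the 10⁻⁴ s of the paper so that the example runs quickly. All function names are hypothetical.

```python
import numpy as np

RHO, G = 1.2, 9.81                      # air density (assumed) and gravity
MASS, RADIUS = 0.0027, 0.020            # 40-mm ball: 2.7 g, 20 mm radius
C_D, C_L = 0.5, 0.2                     # assumed constants, not the fitted curves
SPIN_AXIS = np.array([0.0, 1.0, 0.0])   # topspin for a flight in the x-z plane


def deriv(state):
    """Time derivative of the state (x, y, z, vx, vy, vz)."""
    v = state[3:]
    speed = np.linalg.norm(v)
    k = 0.5 * RHO * np.pi * RADIUS**2 * speed / MASS
    # The spin axis stays perpendicular to v here, so |axis x v| equals the speed.
    acc = -k * C_D * v + k * C_L * np.cross(SPIN_AXIS, v)
    acc[2] -= G
    return np.concatenate([v, acc])


def euler_step(state, dt):
    return state + dt * deriv(state)          # one force evaluation per step


def rk4_step(state, dt):
    k1 = deriv(state)                          # four force evaluations per step
    k2 = deriv(state + 0.5 * dt * k1)
    k3 = deriv(state + 0.5 * dt * k2)
    k4 = deriv(state + dt * k3)
    return state + dt / 6.0 * (k1 + 2.0 * k2 + 2.0 * k3 + k4)


def fly(step, state, dt, t_end):
    for _ in range(int(round(t_end / dt))):
        state = step(state, dt)
    return state


def integrator_gap(dt, t_end=0.5):
    """Distance between the Euler and RK4 end positions for one test shot."""
    start = np.array([0.0, 0.0, 0.3, 15.0, 0.0, 3.0])
    end_euler = fly(euler_step, start, dt, t_end)
    end_rk4 = fly(rk4_step, start, dt, t_end)
    return np.linalg.norm(end_euler[:3] - end_rk4[:3])


gap_coarse = integrator_gap(1e-3)
gap_fine = integrator_gap(5e-4)
```

Because the Euler method is first order, halving the time step should roughly halve the gap to the RK4 result, which is the trade-off between step size and cost discussed above.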

A more extensive comparison of the Euler and RK4 algorithms was done for 10⁵ random test trajectories with identical start conditions for both integrators. The differences are listed in Table 1.

The results confirm the single test trajectory results. The number of successful trajectories and their distribution is nearly indistinguishable for the two cases and the 4.4-times-faster Euler integrator can be used.

One important factor for the limits of the time step for the integrator is the additional dependence of the drag and lift coefficients on the velocity (see Figure 1). These are non-linear terms in velocity, and changes in velocity in one time step need to be small enough to linearize this term during the time integration. Adaptive time-stepping could solve the problem, but this counteracts the optimized usage of GPUs, which is only effective if the calculation effort for the different trajectories calculated in parallel is balanced. A strong variation of calculation time would strongly reduce the efficiency of this parallelization, because the slowest trajectory then defines the limit. Therefore, the Euler method was chosen as a fast method for the calculation of half a billion trajectories for each case within the precision limit of 1 mm for the time step of 10⁻⁴ s.

For a statistical analysis of the effects of ball sizes and net heights on trajectories of table-tennis balls, a Monte Carlo procedure was used.

Many different initial conditions were sampled: x was varied between 0.3 m and −3 m, representing hitting locations from 30 cm over the table to 3 m behind the table; y was kept constant at 0.381 m, which is ¼ of the width of the table-tennis table. This was chosen as a representative position; the exact location of the hitting point in y (forehand or backhand position) is not important for this numerical test. The initial height z was sampled from 0.4 m to −0.4 m. The direction of the initial velocity was determined in the following way: the horizontal angle was sampled between the limiting angles of the starting point to the net posts, and the elevation angle was chosen randomly. The spin axis (expressed as a normalized spin vector) was also sampled randomly, which means topspin, backspin, and sidespin were included. The spinning of the ball is constant during the flight, as proven experimentally [16].
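The sampling of start position, launch direction, and spin axis can be illustrated as follows. This is a sketch under several assumptions that are not stated in the text: x = 0 is taken as the player's end line with the net at x = 1.37 m, y = 0 is one side line, the net posts sit 15.25 cm outside the side lines, and the elevation angle is drawn uniformly between −90° and +90°. The function name is hypothetical.

```python
import numpy as np

NET_X = 1.37                  # net distance from the end line in m (half of 2.74 m)
POST_Y_MIN = -0.1525          # assumed post positions: 15.25 cm outside
POST_Y_MAX = 1.525 + 0.1525   # the side lines of the 1.525-m-wide table


def sample_initial_conditions(n, rng):
    """Draw n start positions, launch directions, and spin axes."""
    x0 = rng.uniform(-3.0, 0.3, n)      # 3 m behind to 30 cm over the table
    y0 = np.full(n, 0.381)              # fixed at 1/4 of the table width
    z0 = rng.uniform(-0.4, 0.4, n)

    # Horizontal angle limited by the lines of sight to the two net posts.
    dx = NET_X - x0
    phi = rng.uniform(np.arctan2(POST_Y_MIN - y0, dx),
                      np.arctan2(POST_Y_MAX - y0, dx))
    # Elevation angle: range assumed here, the text only says "random".
    theta = rng.uniform(-np.pi / 2, np.pi / 2, n)
    direction = np.column_stack([np.cos(theta) * np.cos(phi),
                                 np.cos(theta) * np.sin(phi),
                                 np.sin(theta)])

    # Isotropic unit vectors cover topspin, backspin, and sidespin.
    axis = rng.normal(size=(n, 3))
    axis /= np.linalg.norm(axis, axis=1, keepdims=True)

    return np.column_stack([x0, y0, z0]), direction, axis
```

Normalizing Gaussian samples is one common way to obtain uniformly distributed directions on the unit sphere; whether the original code used this or another scheme is not stated.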

Monte Carlo studies using random numbers were done for the 38-mm ball with a weight of 2.5 g, used in tournaments until the end of 2000, the current 40-mm ball with 2.7 g, and a 44-mm ball with a weight of 2.3 g, which was previously tested in Japan. For the 40-mm ball, an increase in net height of 1 and 3 cm was also analyzed.

The analysis was aimed particularly at fast shots. Therefore, only balls passing the net within 30-cm height distance were accepted. The absolute values of the translational velocities were limited from 20 to 200 km/h, the spinning velocities from 0 to 150 turns/s (which is equal to 9000 turns/min). These values were previously determined empirically as limits for 38-mm balls [17]. A ball is counted as a successful ball if it passes the net within the height limit and hits the other side of the table-tennis table.
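The success criterion can be expressed as a check on a computed trajectory. The sketch below is one possible reading, with assumptions labeled: the table is 2.74 m × 1.525 m with a 15.25-cm net at x = 1.37 m, z = 0 is the table surface, and the 30-cm limit is interpreted as clearance above the top of the net. The helper names and the two test curves are made up for illustration and are not physical trajectories from the study.

```python
import numpy as np

TABLE_LENGTH, TABLE_WIDTH = 2.74, 1.525     # table size in m
NET_X, NET_HEIGHT = TABLE_LENGTH / 2, 0.1525
MAX_CLEARANCE = 0.30                         # assumed: measured from the net top


def crossing(points, axis, level):
    """Interpolated point where coordinate `axis` first crosses `level`."""
    c = points[:, axis] - level
    idx = np.nonzero(c[:-1] * c[1:] <= 0.0)[0]
    if idx.size == 0:
        return None
    i = idx[0]
    span = c[i] - c[i + 1]
    t = 0.0 if span == 0.0 else c[i] / span
    return points[i] + t * (points[i + 1] - points[i])


def is_successful(points, net_height=NET_HEIGHT):
    """True if the ball clears the net within the limit and lands on the far half."""
    at_net = crossing(points, 0, NET_X)
    if at_net is None:
        return False
    clearance = at_net[2] - net_height
    if not 0.0 < clearance <= MAX_CLEARANCE:
        return False
    # Only the part of the flight beyond the net is searched for the landing.
    beyond = np.vstack([at_net, points[points[:, 0] >= NET_X]])
    landing = crossing(beyond, 2, 0.0)
    if landing is None:
        return False
    return bool(NET_X < landing[0] <= TABLE_LENGTH
                and 0.0 <= landing[1] <= TABLE_WIDTH)


# Two made-up test curves (x, y, z) that differ only in forward speed.
t = np.linspace(0.0, 1.0, 201)
height = 0.3 + t - 2.0 * t**2
good = np.column_stack([2.5 * t, np.full_like(t, 0.381), height])
long_ball = np.column_stack([4.0 * t, np.full_like(t, 0.381), height])
```

Passing a larger `net_height` to `is_successful` is enough to model the higher-net cases, since the rest of the criterion stays the same.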

The sampling of such a large number of initial conditions guarantees covering all possible combinations of initial parameters (positions, and translational and spinning velocities) for the different cases creating a successful stroke. Clearly, for different balls and net heights, the parameter space of initial conditions leading to successful strokes will be different. The database created in this study allowed also an analysis of this effect.

For each case, 5 × 10⁸ initial conditions were sampled and trajectories calculated. Initially, this was done on a Linux cluster with 32 cores. The run time for each core was 20 h, resulting in a total of 640 core hours. Alternatively, GPU computing with CUDA was used on a Dell Precision T7500 desktop with an NVIDIA Quadro FX3800. Here, only 3 h was needed for the same calculation.

CUDA [8] is a programming interface using the parallel architecture of NVIDIA GPUs for general-purpose computing. CUDA library functions are provided as extensions of the C language, which allows for a convenient and rather natural mapping of algorithms from C to CUDA. A compiler generates executable code for the CUDA device. The central processing unit (CPU) identifies a CUDA device as a multi-core coprocessor. For the programmer, CUDA consists of a collection of threads running in parallel. A collection of threads, which is called a block, runs on a multiprocessor at a given time. The blocks form a so-called grid and divide the common resources, such as registers and shared memory, equally among them. All threads of the grid execute a single program called the kernel. All memory available on the device can be accessed using CUDA with no restrictions on its representation. However, the access times vary for different types of memory. Shared and register memory are the fastest, as they are located on the multiprocessor (on chip). The shared memory has the lifetime of the block and is accessible by any thread of the block for which it was created. This enhancement in the memory model allows programmers to better exploit the parallel power of the GPU for general-purpose computing. Additionally, the texture memory, which is off-chip, allows for faster reading compared to global memory due to caching.

Our implementation consists of two main procedures [8]. Firstly, a predefined number of trajectories were initialized on the CPU side. Thereafter, the ball movements were implemented on the GPU. One step of the equation of motion for the ball’s trajectory, which includes the speed and the position of the ball, was computed in a kernel. The input parameter of the kernel function was the previous trajectory point. The calculations ran for a maximal number of iterations. In each iteration step, the updates of the ball’s position and velocity were computed if the trajectory was not stopped earlier, e.g., when the ball flew beyond the table. The algorithm was optimized for GPU coding by minimizing the control flow, which slows down the parallel process. All trajectories were followed for the same number of iterations and, if the ball flew beyond the table, a flag identifying this was set. This procedure guaranteed optimum performance, because all trajectories required the same calculation time and the threads were nearly perfectly balanced. At the end of the GPU calculation, the results of the trajectories were pushed back to the CPU; further diagnostics, such as identifying successful hits, were done there.
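The idea of following every trajectory for the same number of iterations and marking finished ones with a flag can be illustrated with NumPy arrays, where each row plays the role of one GPU thread. This is an analogy in Python, not the CUDA kernel of the study, and it assumes gravity as the only force to keep the example short. The function name and the stopping conditions are hypothetical.

```python
import numpy as np

G = 9.81  # gravitational acceleration in m/s^2


def advance_batch(pos, vel, dt, n_steps, x_max):
    """Advance all trajectories for the same number of Euler steps.

    Finished trajectories are not removed from the batch. A boolean flag
    freezes them, so every row performs identical work in every step.
    """
    pos = np.array(pos, dtype=float)
    vel = np.array(vel, dtype=float)
    stopped = np.zeros(len(pos), dtype=bool)
    gravity = np.array([0.0, 0.0, -G])      # drag and lift omitted in this sketch

    for _ in range(n_steps):
        active = (~stopped)[:, None]        # mask instead of per-row branching
        pos += dt * vel * active
        vel += dt * gravity * active
        # Flag balls that flew beyond the table end or dropped below table level.
        stopped |= (pos[:, 0] > x_max) | (pos[:, 2] < 0.0)

    return pos, vel, stopped


start_pos = [[0.0, 0.381, 0.3], [0.0, 0.381, 0.3]]
start_vel = [[5.0, 0.0, 1.0], [50.0, 0.0, 1.0]]
end_pos, end_vel, flags = advance_batch(start_pos, start_vel, 1e-3, 1000, 2.74)
```

Multiplying by a mask costs a few wasted operations for stopped balls, but it keeps the work per row uniform, which is the balance argument made for the GPU threads above.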

For each case, half a billion initial conditions were sampled and trajectories were calculated on a GPU with CUDA [8]. This also defines the uniqueness of this work, because a sufficient resolution of the phase space was possible only with this optimized coding and the usage of a GPU. Calculations of trajectories in table tennis mostly concentrate on individual cases without statistical analysis [18]. New interest in the fast calculation of table-tennis trajectories is motivated by research on robots [19,20] and by the programming of computer games [21]. The large modeling database established by this procedure was analyzed in this paper using statistical methods to identify, in more detail, the major differences between the different cases and their major dependencies.

3. Results

Datasets of successful hits for the different cases (38-mm ball, 40-mm ball, 44-mm ball, and 40-mm ball with 1- and 3-cm-higher net) were created. Table 2 shows that, in terms of successful strokes, the 38-mm and 40-mm balls varied only marginally. The larger but lighter 44-mm ball allowed for more successful strokes, whereas the higher net reduced this number.

The list of dataset variables is presented in Table 3.

The data of the different cases were loaded into a Pandas [22] table. The trajectory data were normalized, i.e., the mean was set to zero and the standard deviation to one. The two variables y0 and ze had to be removed from the original data, since their standard deviation was practically zero: the initial y-position y0 was not varied, and ze was the height at the end of the trajectory, which was, by definition, zero for successful trajectories. For these datasets, the principal component analysis (PCA) from the Python package scikit-learn [23] was applied to the 21-dimensional parameter space. Table 4 shows the results for the 40-mm ball reference case; the other PCA results can be found in Appendix A.
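The preprocessing and PCA steps can be sketched with plain NumPy. The study itself used Pandas and scikit-learn; the eigendecomposition of the covariance matrix of standardized data shown here is a stand-in that yields the same explained-variance ratios. The data below are synthetic, and the column meanings in the comments are only illustrative.

```python
import numpy as np


def pca_explained_variance(data, min_std=1e-12):
    """Drop constant columns, standardize, and return PCA variance ratios.

    Returns the boolean mask of kept columns, the explained-variance ratio
    of each principal component (descending), and the eigenvectors.
    """
    data = np.asarray(data, dtype=float)
    std = data.std(axis=0)
    keep = std > min_std                        # e.g. removes y0 and ze
    z = (data[:, keep] - data[:, keep].mean(axis=0)) / std[keep]

    cov = np.cov(z, rowvar=False)
    eigvals, eigvecs = np.linalg.eigh(cov)      # ascending order
    order = np.argsort(eigvals)[::-1]
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    return keep, eigvals / eigvals.sum(), eigvecs


rng = np.random.default_rng(1)
a = rng.normal(size=2000)
b = rng.normal(size=2000)
synthetic = np.column_stack([
    a,                                           # an independent variable
    2.0 * a + 0.01 * rng.normal(size=2000),      # strongly correlated with it
    b,                                           # a second independent variable
    np.full(2000, 0.381),                        # a constant column
])
kept, ratio, vectors = pca_explained_variance(synthetic)
```

In this toy example the two correlated columns collapse onto one component, which mirrors how redundant variables show up as negligible eigenvalues in the analysis.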

Many components were superfluous, and their contribution was negligible. The first strong drop of eigenvalues can be seen in Figure 3 after four components, and a second step appeared after 10 components. The first four components accounted for about 68% of the variance. This subspace could be used to identify the major differences. An optimum set for the four remaining variables was found with vstart, ω0, ωy, and ωz. This combination led to a nearly equal distribution of eigenvalues, as shown in Table 5.

A further reduction would result in a severe loss of variance in the data. Using more variables would lead to a strong drop of the next eigenvalue, demonstrating the reasonable limit of variables to this subset.

This reduction in the number of variables to four was plausible because the start velocity, together with the rotation velocity and the rotation axis, determines most of the dataset variation. Only two components of the rotation axis were needed, because this was a unit vector and, from two components, the third component was pre-determined. The influence of starting positions and initial angles of the start velocity was much weaker and was reflected by the second drop in eigenvalues after the 10th component.

Using this subset of variables, a more detailed statistical analysis was then done. Comparing the results for eigenvalues and eigenvectors of the 38-mm ball and 40-mm ball databases gave nearly identical results (Figure 4). The first eigenvector was dominated by the contribution from the spin velocity, the second one by the translational velocity, the third one by the topspin component, and the fourth by the sidespin.

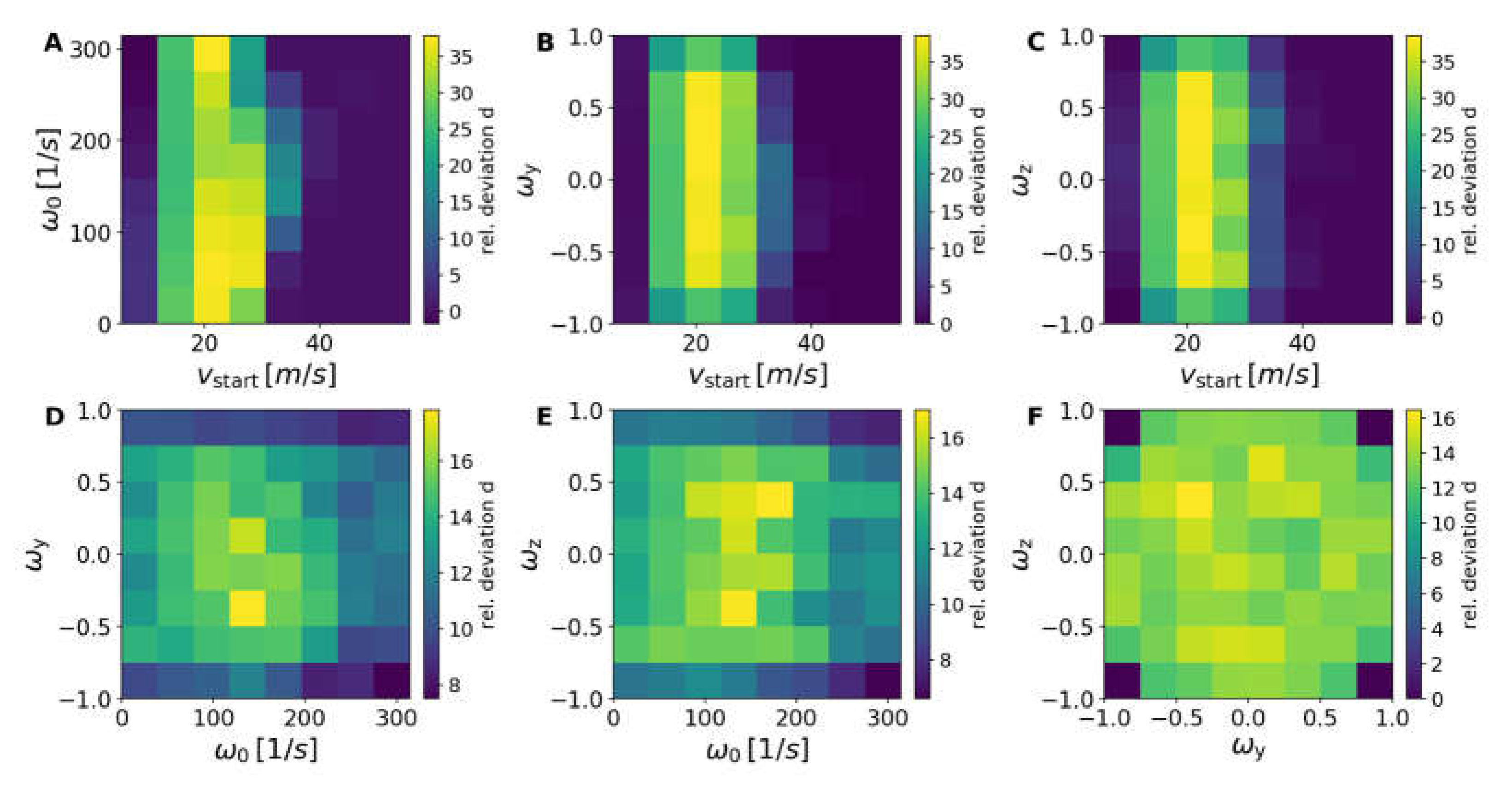

To analyze the differences between the different cases, a four-dimensional histogram of each case was calculated with the NumPy “histogramdd” routine [24]. The relative deviation d was calculated with respect to the case of the 40-mm ball using the following equation:

$$d = \frac{\Phi^{\text{case}} - \Phi^{40\,\text{mm}}}{\Phi^{40\,\text{mm}}},$$

where Φ is the number of trajectories within the four-dimensional bin with the coordinates (i, j, k, l) of the histogram; Φ is an abbreviation for $\Phi_{i,j,k,l}$. Two dimensions from vstart, ω0, ωy, and ωz were selected for the plots, denoted by i and j, whereas the relative deviation was summed up over the remaining dimensions, k and l. In order to include only statistically meaningful data, differences in the numerator of less than 10 were neglected. Plots were then generated with the Python packages Matplotlib [25] and HoloViews [26].
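The histogram comparison can be sketched with `numpy.histogramdd`. The per-bin definition used below, (Φ_case − Φ_ref)/Φ_ref, and the treatment of empty reference bins (set to zero) are assumptions made for this illustration. The function name, the bin count, and the synthetic uniform data are likewise hypothetical.

```python
import numpy as np


def relative_deviation(case, reference, bins, ranges, axes=(0, 1), min_diff=10):
    """Relative deviation of a case from the reference, shown on two axes.

    case, reference : arrays of shape (n, 4), e.g. (vstart, w0, wy, wz)
    axes            : the two dimensions kept for plotting (i and j)
    min_diff        : count differences below this value are neglected
    """
    h_case, _ = np.histogramdd(case, bins=bins, range=ranges)
    h_ref, _ = np.histogramdd(reference, bins=bins, range=ranges)

    diff = h_case - h_ref
    diff[np.abs(diff) < min_diff] = 0.0          # suppress statistical noise

    # Empty reference bins are set to zero deviation (assumption).
    d = np.divide(diff, h_ref, out=np.zeros_like(diff), where=h_ref > 0)

    summed = tuple(ax for ax in range(d.ndim) if ax not in axes)
    return d.sum(axis=summed)                    # sum over k and l


rng = np.random.default_rng(2)
ref = rng.uniform(0.0, 1.0, size=(20000, 4))
unit_ranges = [(0.0, 1.0)] * 4
d_same = relative_deviation(ref.copy(), ref, bins=4, ranges=unit_ranges)
d_double = relative_deviation(np.vstack([ref, ref]), ref, bins=4, ranges=unit_ranges)
```

The returned two-dimensional array can be passed directly to a plotting routine such as Matplotlib's `imshow` or `pcolormesh`.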

The 38-mm ball and the 40-mm ball were practically identical (Figure 5); very small differences showed up close to statistical variation limits.

The larger and lighter 44-mm ball showed clear deviations from the 40-mm reference database (Figure 6 and Figure 7).

The first eigenvector had an even stronger weighting of the spin compared to the 40-mm reference case. Also, the second and third eigenvectors increased the dominant weights for the translational velocity and the topspin, whereas the fourth eigenvector stayed unchanged.

This change in eigenvectors can be easily understood. The global increase of successful strokes for the 44-mm ball compared to the 40-mm ball resulted mainly from the fact that the 40-mm ball was heavier (increasing the gravitational force).

One needs larger spins to influence the trajectories of the 44-mm ball. For higher initial velocity, a larger number of successful shots was possible with the 44-mm ball, because the effect of drag forces increased with larger size.

Deviations for the cases with 1- and 3-cm-higher nets for the 40-mm balls compared with the normal 40-mm scenario were more pronounced.

With an increased net height of 1 cm (see Figure 8 and Figure 9) and 3 cm (see Figure 10 and Figure 11) for the 40-mm ball, a strong reduction in the number of successful strokes was detected. As expected, the reduction increased with the net height.

The first eigenvector had larger contributions from spin, but now also significant contributions from translational velocity. The second eigenvector had a reduced weighting of the translational velocity, but a larger weighting of the sidespin. The third eigenvector increased also the influence of sidespin, whereas the fourth eigenvector showed an increase of the spin contribution with a further reduction of the translational velocity. This was the result of the increased net height. If the starting velocity was too low, the ball was not able to pass the higher net successfully. Therefore, a reduction of successful strokes appeared for low starting velocities compared to the standard net. The higher net needed stronger spins and reduced velocities for successful strokes. For very low velocities, the impact of the air drag was not yet important, resulting in a smaller reduction compared to higher velocities of about 30 m/s (or higher), where a strong reduction is observed. This reduction in the number of successful trajectories is equivalent to a slowing-down of the game.

The strong reduction in the number of successful strokes was linked with rather small top spin components ωy, but rather larger sidespin components ωz.

In addition, cluster analysis was applied to the data in order to dissect the properties of the most interesting cases. The datasets of the trajectories included roughly three million entries. A naive approach with an agglomerative clustering algorithm would easily exceed the memory of most computer systems. Agglomerative clustering without constraints scales quadratically in memory, such that a crude estimate amounts to (3 × 10⁶)² × 4 B = 36 TB.

Therefore, balanced iterative reducing and clustering using hierarchies (BIRCH) clustering from the Python scikit-learn library [23] was used to reduce the number of data points to about 1100 points. This tool is rather memory-efficient and designed for big datasets. The method generates a tree structure which can also be passed to other clustering algorithms. In our case, this was the agglomerative clustering from the same library [23]. The metric used was Ward linkage with Euclidean affinity. This linkage minimizes the variance of the clusters being merged.

From these reduced datasets, dendrograms were generated with the Python SciPy library [27]. A dendrogram is a plot of a hierarchical binary tree. The height of each line represents the distance between different data points with the given metric.

From the dendrograms (see Figure 12), one can visually deduce the number of clusters for each case. Cutting the tree at a given height with maximum variation of the metrics delivered the minimum number of clusters required for this case.

For all cases, one can find four clusters as sufficient to represent the largest metric variations. The reduced datasets for all cases were further diminished with K-means clustering from scikit-learn [23] until four clusters remained. The centers of the clusters contained the condensed properties of the different systems and could be compared easily. The sizes of the symbols also represent the number of members of the clusters, showing that they were nearly identical.
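The reduction chain of BIRCH, Ward-linkage agglomerative clustering, and K-means can be sketched with scikit-learn as follows. The data are four synthetic, well-separated blobs in four dimensions, and the BIRCH threshold of 0.5 is an arbitrary value chosen for this toy data set. Neither reflects the settings of the study, and the dendrogram step is omitted.

```python
import numpy as np
from sklearn.cluster import AgglomerativeClustering, Birch, KMeans

# Synthetic stand-in for the four-dimensional trajectory data.
rng = np.random.default_rng(0)
true_centers = np.array([[0.0, 0.0, 0.0, 0.0],
                         [5.0, 0.0, 0.0, 0.0],
                         [0.0, 5.0, 0.0, 0.0],
                         [0.0, 0.0, 5.0, 0.0]])
data = np.vstack([c + 0.3 * rng.normal(size=(1000, 4)) for c in true_centers])

# Step 1: BIRCH compresses the data into a small set of subcluster centers.
# n_clusters=None skips BIRCH's own final clustering step.
birch = Birch(threshold=0.5, n_clusters=None).fit(data)
reduced = birch.subcluster_centers_

# Step 2: agglomerative clustering with Ward linkage on the reduced set.
ward_labels = AgglomerativeClustering(n_clusters=4, linkage="ward").fit_predict(reduced)

# Step 3: K-means condenses the reduced set to four cluster centers.
kmeans = KMeans(n_clusters=4, n_init=10, random_state=0).fit(reduced)
cluster_centers = kmeans.cluster_centers_
```

Working on `reduced` instead of `data` is what keeps the quadratic memory cost of the hierarchical step manageable, since only the subcluster centers enter the pairwise distance computation.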

Figure 13, Figure 14, and Figure 15 show the most significant differences for the cluster centers of the four clusters for the different cases. The larger 44-mm ball showed higher initial velocities for all four cluster centers compared to the 40-mm ball, as also shown in previous work [8], because the 40-mm ball was heavier, as discussed before (Figure 13).

The cluster analysis in Figure 14 also shows that, even for the higher initial velocities in the 44-mm case, a clear slowing-down of the end velocity appeared. The same was obtained for the 40-mm case with increased net height (the case with a 3-cm-higher net is shown).

Larger ωz and ωy are visible in Figure 15 for the centers of the 40-mm case with increased net height and even stronger effects for the 44-mm ball, which had a smaller weight than the 40-mm ball. One needed larger spins to influence the trajectories of the 44-mm ball, because the effect of drag forces increased with larger size. The higher-net case needed stronger spins and reduced velocities for successful strokes. The ωz values were larger than the ωy contributions, meaning that stronger sidespin appeared, as discussed before.

4. Discussion

The databases for the 38- and 40-mm balls showed only variations within statistical fluctuations for the multi-dimensional distribution of successful shots, whereas the 44-mm ball results were already significantly different compared to the 40-mm ball, as discussed also in Reference [8]. The analysis confirmed the empirical observation that the change of the ball in the year 2000 from a 38-mm to a 40-mm ball could be compensated for such that the resulting trajectory distribution functions were nearly identical. This was achieved in reality by adaptations of technique and material. One needed larger spins to influence the trajectories of the 44-mm ball with smaller weight compared with the 40-mm ball. For higher initial velocity, a larger number of successful shots was possible with the 44-mm ball, because the effect of drag forces increased with larger size. Very high velocities above 35 m/s were suppressed earlier for the 44-mm ball. A larger ball of 44 mm with small weight is one option for suppressing high velocities; however, the players could compensate for this by improving their physical fitness to perform stronger shots.

Most of the results of the previous empirical analysis are supported. A clear influence was visible for the 40-mm ball upon increasing the net height. For smaller initial and end velocities of about 10 m/s, a reduction in the number of successful trajectories showed up, being equivalent to a slowing-down of the game. For very low velocities, the impact of the air drag was not yet important, resulting in a larger number of successful trajectories. As a new result, the importance of stronger sidespin for the cases of higher nets was identified, which would change the characteristics of long rallies in table tennis. For these cases, not only are reduced velocities important, but the tactical possibilities will be modified by reducing the number of spinless short trajectories. The strong reduction in the number of successful strokes was linked with rather small top spin components ωy, but rather larger sidespin components ωz. This means that the game will not only slow down, but also diagonal play with longer reaction times for the opponent will get more important than fast parallel balls. One can also expect from this longer and more attractive rallies. However, the characteristics of the game will change strongly, because the possibilities for successful trajectories are limiting technical and tactical alternatives, reducing especially the influence of service.

Modifications of basic rules of table tennis, such as ball size and net height, can reduce the maximum velocities; however, such modifications will be linked to severe changes in the characteristics of table tennis: dynamics, technique, and strategy will change strongly. The question is if a possible gain in attractiveness of table tennis for TV upon implementing such changes is worth the loss of key elements of existing table tennis.