1. Introduction

Road pavements are transport infrastructures built with different types of hot mix asphalt (HMA), namely mixtures made of aggregates and bitumen, mixed at temperatures higher than 150 °C. In order to withstand traffic loads and environmental conditions, such infrastructures have to be properly designed in terms of their thickness and material properties. With respect to the composition and mechanical characteristics of HMAs, experimental methods are currently used to design and optimize such bituminous mixtures [

1,

2,

3,

4,

5,

6]. Particularly, for what concerns the bitumen content identification and the mixtures’ performance evaluation, quite onerous laboratory tests are necessary; moreover, such experimental procedures require experienced and skilled technicians. Any modification of the HMAs composition, in terms of type or quantity of bitumen, rather than of aggregates, requires new laboratory tests. A numerical model of HMAs’ mechanical behavior that could quickly elaborate a reliable prediction of the material’s response would allow a reduction in time and costs for the design of the mixture itself.

Typically, a mathematical model of the material’s behavior is based on constitutive equations, expressed in analytical terms [

7,

8], eventually implemented in a computational system for finite element analysis [

9,

10].

One of the milestone studies on the modeling of the mechanical behavior of bituminous mixtures aimed to investigate the elastic–viscoelastic correspondence principle, which allowed the elastic continuum damage model to extend to a corresponding viscoelastic damage model, characterized by an evolution law able to properly describe the growth of damage within asphalt concrete, under uniaxial tension tests at different strain rates [

11]. The following developments of such a constitutive approach have included cyclic loading conditions [

12,

13] and an analysis of the healing phenomenon [

14]. On the basis of the elastic–viscoelastic correspondence principle and continuum damage mechanics, the constitutive framework has been further elaborated to obtain a fatigue prediction model, which has also been compared to a classic phenomenological fatigue model [

15,

16,

17]. It has been demonstrated that the regression coefficients of the phenomenological model can be expressed as functions of viscoelastic and damage characteristics of asphalt concretes. More recently, the modeling approach has been modified to consider the viscoplastic rate-dependent hardening–softening response of bituminous mixtures under compression [

18,

19,

20,

21]. A viscoelastoplastic formulation of the constitutive approach has also been developed [

22,

23].

Linear viscoelastic, rather than thermo-viscoplastic laws have also been studied by Di Benedetto et al. [

24,

25] to model the mechanical response of bituminous mixtures.

A fundamental constitutive approach proposed by Masad et al. [

26] to model the permanent deformation of asphalt concretes at high temperatures was characterized by an anisotropic non-associated flow rule, on the basis of the Drucker–Prager yield surface. Laboratory data obtained by triaxial tests, carried out at different confining stress levels and strain rates, were used to validate the proposed model, with respect to three different asphalt concretes. Another relevant aspect of such an approach is the relationship that has been established between the model parameters and some significant aggregate properties (shape, surface energy, anisotropic distribution), particularly with regard to the optimization of the mixture.

The research group of the Texas A & M University, after a series of studies [

27,

28,

29,

30], achieved significant advances, leading up to a temperature-dependent viscodamage model coupled to the Schapery’s nonlinear viscoelasticity and the Perzyna’s viscoplasticity [

31]. Such a model has allowed the ability to distinguish between the compressive and tensile response of asphalt concretes with respect to the damage development phenomenon. Advanced numerical algorithms were used to implement the proposed model in a general-purpose finite element code. An effective method to obtain the model parameters through the creep test carried out at various stress levels and temperatures was also developed.

Further improvements have been accomplished over the years with more refined models based on the mechanics of materials [

32,

33,

34,

35,

36,

37,

38,

39,

40] to acquire a deeper understanding about the complex relationship between asphalt mixtures’ microstructure and their mechanical behavior. Particular emphasis has been given to the possibility of using such constitutive approaches to properly select asphalt concretes’ components and their optimal proportions to ensure the required design properties and performance of the mixture. Furthermore, advanced material characterization methods have also been used to support such modeling approaches, for instance, techniques based on X-ray computed tomography image analysis.

A coupled constitutive model, still based on Schapery nonlinear viscoelasticity and Perzyna-type viscoplasticity, but properly modified to take into account the hardening–relaxation phenomena, represents the current state of the art achieved by such a research group [

41]. One of the key characteristics of the last approach is given by the possibility to calibrate and validate the model on the basis of conventional tests, namely dynamic modulus tests and repeated creep-recovery tests. The model has shown the capacity to properly describe the complex behavior of asphalt concretes under different loading conditions.

Other studies, based on continuum thermomechanics, have allowed constitutive equations suitable for modelling the mechanical response of asphalt concretes to be obtained. Krishnan et al. [

42] developed a nonlinear rate type viscoelastic model, by means of thermodynamic principles. A coupled temperature-dependent viscoelastic, viscoplastic, and viscodamage model was obtained by Darabi et al. [

43], on the basis of a thermodynamic framework.

Introducing the Helmholtz free energy and the concept of internal state variables, a viscoelastic–viscoplastic damage model, congruent from the thermodynamics point of view, was developed by Zhu and Sun [

44]. A deep discussion on the capacity of the model to reliably predict the volumetric deformation was provided; the contraction and dilation response of the material was successfully associated to the viscoelastic component and viscoplastic damage component, respectively.

Within the ambit of thermomechanics, Chen et al. [

45] recently derived a constitutive formulation in finite strains and characterized by a viscoelastic dissipation potential to take into account the deviatoric and volumetric response, a modified Perzyna viscoplastic law with a non-associated flow rule, to model the inelastic deformation by means of a Drucker–Prager type plastic dissipation potential and a damage model, specifically introduced to represent the different behavior of the material in tension and compression.

The environmental as well as the physical and chemical behaviors of the bituminous mixtures, related to ageing, healing, or moisture-induced damage, have also been introduced in some constitutive formulations [

46,

47,

48,

49].

Other mathematical and numerical methods are based on fractional models [

50,

51,

52,

53], rather than the distinct element method [

54,

55,

56,

57].

The brief discussion just presented represents a partial overview of the total amount of studies conducted by different research groups on the modeling of asphalt concretes’ behavior, based on the mechanics of materials. Nevertheless, it can be stated that the main and very important characteristic of such methods is the mechanistic framework of the numerical or mathematical expressions, which allows a rational analysis and deep understanding of the bituminous mixtures’ response under different testing conditions. For this reason, the mechanistic constitutive methods also represent a really useful tool to support the mix design phase.

Alternative approaches rely on methods that are not physically based, for instance, statistical rather than artificial neural networks (ANNs) models.

Statistical regressions of experimental data sets could be an alternative approach to develop predictive equations of the HMA’s property analyzed [

58,

59,

60,

61]. Nevertheless, it has also been reported that ANNs can produce more accurate predictions with respect to multiple linear regression [

62,

63]. Indeed, in recent years, ANNs have arisen in scientific research as a powerful numerical technique for data analysis [

64], even in the pavement-engineering field. Tapkın et al. [

65] and Baldo et al. [

66] verified the possibility of fitting Marshall test results of bituminous mixes to provide predictive equations that can be used to quicken the empirical Marshall mix design; nevertheless, such neural models are not mechanistically based and for this reason, they are considered as “black box” methods. However, the so called “black box” principle is basically based on a nonlinear fitting approach, as it has previously been widely discussed [

66]. Furthermore, even without a physical basis, such approaches can be useful to reduce the number of laboratory tests required by the Marshall mix design, which is still widely adopted in many asphalt laboratories [

67,

68,

69,

70,

71].

ANNs have also been used in other studies to model different mechanical parameters of bituminous mixtures for road pavement; such researches have reported the use of a basic network architecture, a standard approach for the data sampling and adoption of just one type of transfer function, namely the hyperbolic tangent one [

65,

72,

73,

74,

75,

76,

77]. This type of methodological approach has been followed primarily because the data sets were large enough to avoid the use of more complex neural network structure; however, it has been mentioned that more sophisticated ANNs could be useful to improve the prediction performance of neural models [

64].

Therefore, the main objective of the current paper was to verify the effectiveness of an ANN modeling approach based on multiple layer structures, a more reliable data sampling technique and a more efficient transfer function, for accurate and fast prediction of mechanical parameters of bituminous materials to enhance the mix design phase in the laboratory. The ANNs models developed in this study were trained and tested on the basis of laboratory data of HMAs for road pavements, characterized by different types of aggregate and bitumen.

2. Theory and Calculation

2.1. Artificial Neural Network Modeling

An artificial neural network is a computational approach that is increasingly used in the development of predictive models; its fundamental unit is the artificial neuron [

78,

79]. The function of an artificial neuron, like a biological one, is to process input signals and modulate its own response through an activation function, called the transfer function, which determines the interruption or transmission of the outgoing impulse. The computing power of a biological brain depends on the number of connections between neurons and the same goes for ANNs. In a feedforward ANN, neurons are organized into layers and since information flows only in one direction without any recurrent cycle, connections are established between neurons belonging to different layers so that none of the possible pathways touch the same neuron twice. Within an ANN, each neuron (

) computes a weighted sum (

) of elements (

) in the input vector through weights (

) associated with each connection. Then, it calculates an output value (

) by applying an assigned transfer function to

[

80]:

where

is the number of elements in the input vector and

is the neuron bias. The transfer function may take different analytical expressions. In the current study, the positive linear (Equation (2)) and hyperbolic tangent (Equation (3)) functions were used:

Connections’ weights and neuron bias are adjustable scalar parameters and their values determine the network function. The central idea of neural networks is to adjust such parameters, through an iterative process (called “training” or “learning”), so that the ANN is able to perform a desired task [

78,

79,

81]. Given an experimental data set, the learning of a feedforward network is based on a supervised approach: It consists of iteratively adjusting weights’ values to minimize the difference between experimental targets and calculated output by using an optimization algorithm [

78,

79,

81]. The supervised learning is divided into two phases (

Figure 1): After the initialization of connection’s weights, the forward pass begins, which consists of processing the incoming information through the parallel processing of many neurons to compute net outputs. Then, the backward pass carries out a comparison between the experimental targets and calculated outputs so that a backpropagation algorithm can evaluate a weight’s correction. With such an approach, the artificial network learns to recognize the implicit relationship between the input and target and can provide a solution to new input data. A detailed description of the structure of feedforward networks, the computational process performed by the neuron, and the supervised learning has previously been discussed [

66].

2.2. The K-Fold Cross Validation

In machine learning, the available data set is usually divided into subsets for training, validation, and testing. After the model’s hyperparameters have been set up (for example, the number of hidden layers, number of neurons in each layer, learning rate, etc.), the training data set, generally made up of 60% to 80% of the data, is used to train the model itself. If the model performs satisfactorily on the training data, then it is run on the validation data set, which usually ranges from 5% to 20% of the data. The validation data set helps to provide an assessment of the model’s capability. If the error (i.e., the mean squared difference between the predicted and experimental data, ) on the validation data set significantly increases (or decreases) compared to the training error, then an overfitting (rather than an underfitting) condition has occurred. The test data set, typically consisting of 5% to 20% of the data, is employed to complete the analysis and assessment of the model prediction capacity.

The procedure described above, considered as “conventional”, poses two issues: On the one hand, the subdivision of the data into subsets with different purposes involves the risk of leaving out some relevant trends from the training data set and, on the other hand, a model can lead to a lower prediction error on training data but at the same time to high test error due to data sample variability [

82].

An alternative to the conventional approach is the so-called k-fold cross validation (CV). It is a statistical technique that allows a more accurate estimation of a model’s performance and then to compare and select a model for a given predictive modeling problem [

83,

84,

85,

86]. It is strongly recommended to follow such a procedure in the case of a relatively small data set [

78,

81]. It involves a random division of data into k folds of equal sizes: One of these folds is used as a test set to evaluate the model’s performance and others (

k−1) are grouped to form the training data set (

Figure 2). The training and test phases are performed k times and each time a fold is assumed as a test set while the remaining ones are used to train the model. Performing multiple rounds of CV with different subsets from the same data allows a significant reduction in sample variability: All data are included in a training set (

k−1) times, and in a test set once. Usually, the value of k is set to 5 or 10, as these values have been shown to result in more accurate estimations of a model’s capacity [

82,

86]. By calculating the average of the testing error,

, over these multiple rounds, an estimation of the model’s predictive performance is obtained:

where

is the number of samples in the

i-th fold,

is the experimental targets, and

is the ANN-calculated outputs. Including a measure of the variance of the skill scores is also recommended [

82,

83], such as the standard deviation (i.e., the standard deviation is the square root of the variance) of

:

However, to find the hyperparameters of the model that best fit the experimental data and that satisfactorily generalize the problem, it is necessary to define a model selection procedure. This consists of designing several neural networks by assigning numerical values, within a range, to one of the hyperparameters and by fixing those of the remaining ones. This operation is repeated by varying at least one pair of hyperparameters. Resulting models have to adapt well to the data used for training. Subsequently, a k-fold CV is performed on each of the models thus defined. The network that shows the best predictive performance is initialized and then trained on the entire data set available; this is the final model that is used for predictions.

2.3. ANN Structure

In the current study, ANN models of the selected mechanical parameters of the bituminous mixtures investigated were developed using the software MATLAB

® (R2014b, The MathWorks Inc., academic use). The designed ANNs are of the feedforward type and are characterized by multiple layers, which make use of the positive linear rather than the hyperbolic tangent transfer function. The structure of all the ANNs is completed by an output layer, adopting a linear transfer function. The use of the positive linear transfer function (also known as ReLu, rectified linear unit [

64,

87]) has been shown empirically that it leads to better performance than the use of the hyperbolic tangent, which is prone to problems of rapid saturation [

64,

87]. Each hidden layer has the same number of neurons. The backpropagation algorithm, used to train the networks and improve their generalization, is the Bayesian regularization, which updates the weight values according to the Levenberg–Marquardt optimization [

88].

The network was set up with random initial weights and biases, whereas the learning rate (i.e., the parameter that controls changes of weight and bias during learning) was 0.001, the number of epochs (i.e., is the maximum number of presentations of the set of training vectors to a network and updates of weights and errors) was 3000, the error goal was 0.01, and the minimum gradient (i.e., the magnitude of the gradient of performance used to stop the training) was 0.0000001. In order to train networks more efficiently, a pre-processing function was assigned to normalize the data set, so that the input variables and the target parameter had a mean equal to zero and standard deviation equal to one. The same function, as a post-processing function, also reconverts normalized outputs into the targets’ original units. The following equation illustrates the normalization process performed on the training and test data sets:

where

is the

i-th component of the input vector or one of the three mechanical parameters considered in the current study;

and

are respectively the average and the standard deviation of the parameter,

; and finally,

is the normalized input or target variable treated (

Table S1). Each new data that will be used by the network has to be pre-processed by the mean of Equation (6).

The number of hidden layers and the number of neurons in each hidden layer were selected as hyperparameters to be optimized in the model selection procedure to search for the best predictive capabilities on the basis of the selected machine learning method (i.e., the Bayesian regularization).

2.3.1. ANN-Model’s Equation

Referring to the computational process performed by neurons (

Section 2.1) and to the architecture of multiple-layer ANNs, the vector equation of a one-hidden layer model (Equation (7)), two-hidden layer model (Equation (8)), and three-hidden layer model (Equation (9)) are reported in the following:

where

is the

length input vector and

is the network scalar output associated with the considered mechanical parameter. All ANN models were elaborated on the basis of four numerical input variables, namely the bitumen content (% by weight of mix), filler to bitumen ratio (%), air voids (%), and voids in the mineral aggregates (%), and two categorical input variables, namely the bitumen type and aggregate type. Such input data have been considered as effective in properly representing the composition of HMA mixes, which were designed and produced with different aggregate (limestone or diabase) and bitumen (standard 50/70 or modified 25-55/75) types. Predictive models characterized by such types of input data allow modifications of the mixture composition to be numerically simulated, obtaining an estimation of the mechanical parameter under analysis without the necessity of performing further experimental tests, thus representing an extremely useful design tool during the optimization of the material.

The parameters’ meaning of Equation (8) is explained in the following, while a graphical representation is reported in

Figure 3. Vector and scalar variables are indicated in bold and italics type, respectively. Each layer is characterized by a connections’ weight matrix,

, adds a vector,

, of neurons’ bias, and returns an output vector,

. To distinguish one layer from another, the number of the layer is added to the variable of interest; furthermore, a distinction between the weight matrix that is connected to inputs and ones that are connected between layers is made by associating letters,

and

, to

, respectively. A pair of indexes is also assigned to each weight matrix to identify the destination (first index) and the origin (second index) of represented connections. Thus, in a three-layer feedforward network (

Figure 3), the

neurons in the first hidden layer add an

bias vector,

, to the dot product of

(i.e., the

weight matrix of connections between

inputs and

neurons) and the input vector,

. The sum of the bias,

, and the product,

, is the so-called net input,

, with the dimension,

. This sum is processed by the transfer function,

, to get the neurons’

output vector,

, of the first hidden layer, which is also the input vector of the second hidden layer. This layer, made of

neurons, elaborates the incoming information by the

bias vector,

, the

layer weight matrix,

(having a source 1, neurons’ outputs of the first layer, and a destination 2, neurons of the second layer), and the transfer function,

, to produce the

output vector,

. This process is repeated for all the hidden layers that belong to the architecture of the ANN. The last layer, which produces the network output, is called the output layer; function-fitting neural networks often have just one output neuron, because most of the time the prediction needed regards only one target value associated with a specific mechanical or physical parameter. Thus, the sum of the scalar bias,

, and the product,

, is elaborated by the transfer function,

, typically of the linear type, to obtain the scalar network output, labelled as

. The concepts discussed above can also be applied to the other equations’ variables.

2.4. Model Selection Procedure and Error Estimation

Quantities and functions illustrated in

Section 2.3 represent the networks’ hyperparameters that are set in the model selection procedure in order to ensure better efficiency of the training process. As mentioned, the number of hidden layers (

) and the number of neurons in each hidden layer (

) were modified to obtain 15 case studies for each selected transfer function (positive linear or hyperbolic tangent):

was changed from 1 to 3,

from 6 to 14, by increments of 2 neurons; such research design is reported in

Table 1. In the literature, the use of a lower number of neurons and a greater number of hidden layers is recommended in order to increase the capacity of an ANN and avoid overfitting problems [

72].

The hyperparameters’ selection is based on the evaluation of the models’ prediction capacity [

83,

89], which is obtained by means of statistical indicators. In fact, the aim of the model evaluation is to estimate the generalization error of the selected model, calculated on the basis of a defined test set. The term “generalization” means the ability of a model to return a good prediction on unseen data, i.e., that have not been used for training. The most used statistical indicators, especially in the “conventional” procedure, are the mean squared error (

) and the correlation coefficient (

). The first one (whose analytical expression is reported in the last side of Equation (4)) calculates the average squared difference between the experimental targets (

) and ANN-computed outputs (

); it is also employed as performance function (also called the “loss” function) to optimize the ANN training process [

78]. In general, the lower the mean square error, the closer the ANN-calculated data are to the experimental ones (i.e., calculated values are dispersed closely to experimental ones). Instead, the correlation coefficient,

, measures the linear relationship between

and

, and it is expressed by the ratio between the covariance of the two considered variables (

and

) and the product of the respective standard deviations:

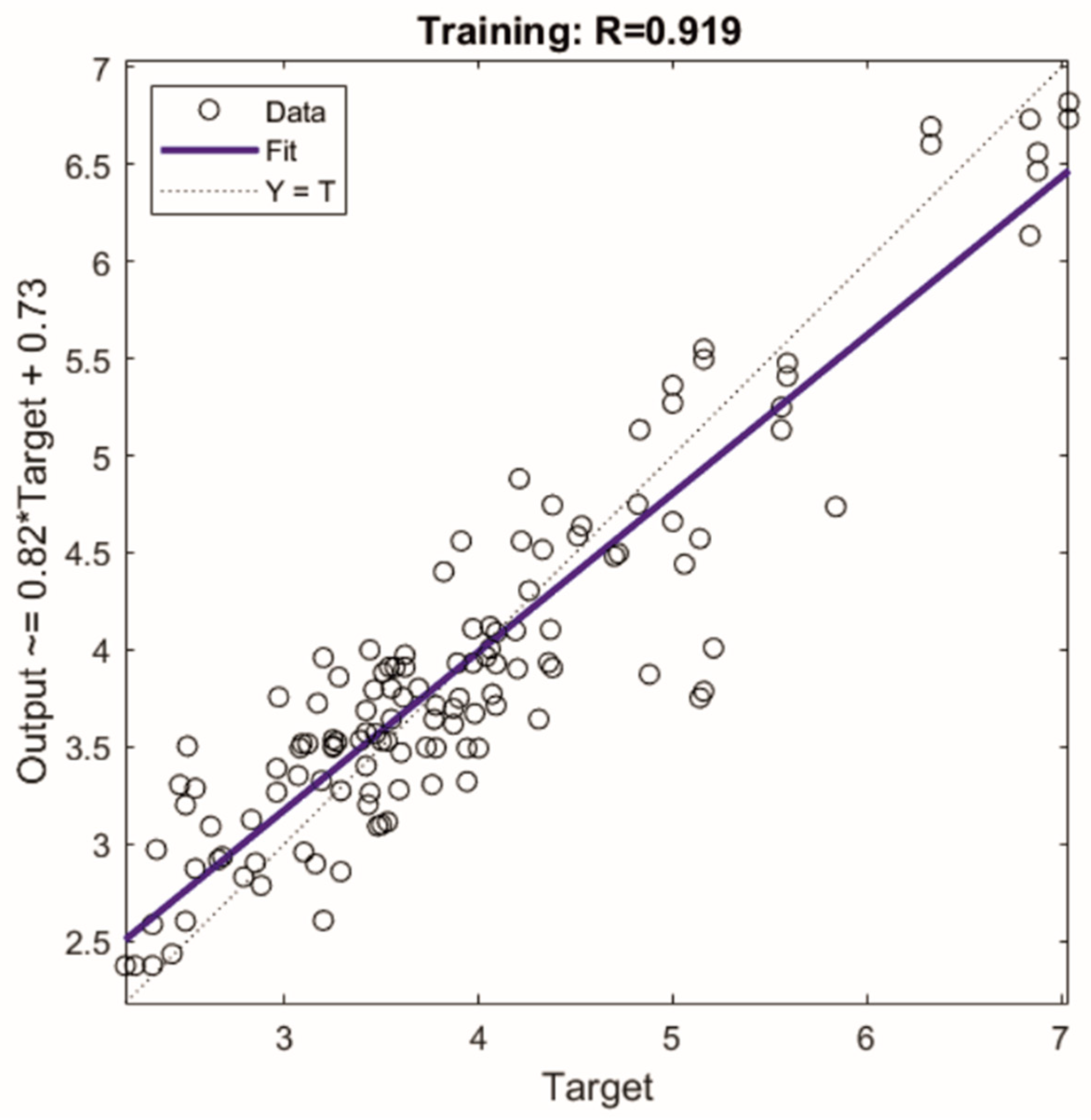

where

and

are the mean value of the targets and of network outputs, respectively. In general, the closer the value of

is to the unity, the stronger the results of the linear relation between

and

, thus confirming that the training has been completed successfully (if

for the training data set) and that the degree of generalization achieved can be considered optimal (if

for the testing data set).

The mean squared error and the correlation coefficient have already been used in previous performance analysis of some ANNs designed to predict the mechanical parameters of HMA mixtures [

65,

66,

72,

73,

74,

75,

76,

90].

On the contrary, when a k-fold CV is performed, the ANN performance evaluation takes place through three statistical indicators [

82,

83,

84,

85,

86], namely the CV mean squared error (

), the MSEs’ standard deviation (

), and the CV correlation coefficient (

), which is the average of the test correlation coefficient (

) over k rounds. A fourth indicator is given by the mean difference (

) between the training and test MSEs over k-folds, which allows the occurrence of an overfitting problem to be assessed. In fact, when a model over-adapts to the training data set, the difference between training and test errors increases [

82,

85]. Therefore, the hyperparameters that allow the lowest values of

(i.e., higher

),

, and

to be obtained can be selected to identify the final model, which has to be trained on the entire data set available.

2.5. Standard Approach

Five ANNs with a different number of neurons were also developed for each mechanical parameter of interest using the MATLAB® ANN toolbox; these networks were trained with 80% of the available data set and tested on the remaining 20%. This configuration was chosen to match the data subdivision performed by the five-fold CV. The sampling process was completely random and carried out just one time by the standard approach implemented in the MATLAB® ANN toolbox. If such an initial subdivision of the data set, with the following training and testing of the model, gives an unsatisfactory performance, it is suggested, on the basis of the experience of the researcher, that the whole procedure is repeated with a new sampling of the data set; however there is no automatic procedure aimed at optimizing model performance. In order to evaluate the performance of the standard approach with respect to the sample variability, in this study, random sampling was repeated 10 times to obtain 10 random subdivisions of the available data set.

The k-fold cross validation involves partitioning the available data set into k non-overlapping groups of equal size that represent the partition’s classes of the initial data set [

78,

81]. Therefore, given that the performance of a model is evaluated on k test sets (which by definition cannot contain common elements), the average of test errors across k trials represents a more reliable estimation of the model’s predictive capacity [

78,

81]. Indeed, in the standard approach, the subdivision of the data set in the training and test subsets is randomly performed and by repeating this operation, the identified test sets can contain common elements, which leads to a noisy estimation of the predictive performance for small-size data sets [

78,

81]. Furthermore, the MATLAB

® ANN toolbox carries out the data sampling as a “black box” and consequently it does not allow the performed subdivision of the data set to be easily identified; thus, the variability of the training and test set is difficult to establish.

Therefore, this study of the standard-ANN’s aimed to verify the wide variability of the models’ predictive capacity that could be caused by data sample variability.

Standard ANN Structure

The designed standard-ANNs are characterized by one hidden layer with a hyperbolic tangent transfer function, and one output layer with a linear transfer function; this architecture is set as the default in the MATLAB

® ANN toolbox. The number of neurons in the hidden layer ranges from 6 to 14 by increments of 2 neurons; as a result, five different standard-ANNs were obtained for each mechanical parameter considered. The seven types of input data already mentioned above (

Section 2.3.1) were considered. Moreover, all the hyperparameters fixed in the model selection procedure for k-fold analysis were set to the same values (

Section 2.3). In the following, these ANNs are simply called “standard”.