1. Introduction

The term Internet of Things (IoT) was introduced by the British scientist Kevin Ashton in 1999 to describe a system in which physical objects are connected to the Internet via sensors [1]. The IoT can be defined as a network of interconnected devices that can send and receive data while they are in a static or dynamic state [2]. IoT devices collect data through components such as sensors and radio-frequency identification (RFID) tags for a specific event or environment, in order to provide intelligent solutions to different challenges. This has become possible because of the rapid development of technologies such as cloud computing, advanced data analysis algorithms, and wireless communication [1]. Therefore, the IoT has been used in various applications, such as smart vehicles, smart homes, healthcare, and industry, to connect them to a network and digitize them. The collected data can be exchanged among all parties in the IoT; for example, between a human and a device, between two devices, and between a human and other real-world environments [3].

Edge computing is an emerging technology that aims to deliver various services and applications close to IoT devices [4]. Edge computing aims to minimize the high latency in IoT applications, improve network performance, reduce operational cost, ensure the appropriate use of energy and resources, and efficiently manage data. It has been implemented by many researchers to detect malicious activities in IoT devices. For example, Eskandari et al. [5] proposed an intelligent anomaly intrusion detection system for IoT devices. The proposed method analyzes the network traffic by using an edge device to detect malicious behavior.

Various researchers have proposed different techniques to detect malicious activities in IoT devices. These techniques range from tools running on edge computing to tools running on cloud computing; examples include [6,7]. These tools extract one or more features of the network traffic and then apply machine learning techniques to classify the requests as malicious or benign. However, some of these tools have high latency, as they depend on fog computing to capture and analyze requests. Moreover, some of the existing works consider only a few types of IoT attacks in their detection systems.

This paper presents CoLL-IoT, a collaborative system that detects malicious activities in IoT devices. CoLL-IoT consists of the following four main layers: IoT layer, network layer, fog layer, and cloud layer. All of the layers work collaboratively by monitoring and analyzing all the network traffic that is generated and received by IoT devices. The first layer, namely the IoT layer, consists of all the IoT devices and sensors that are connected to the system. The second layer contains intelligent edge computing devices that observe all of the network traffic generated by the IoT devices in the previous layer. Managing and maintaining a list of all the malicious activities is the responsibility of the fog layer. The last one is the cloud layer; it contains high computational resources to train and update the detection system in the edge computing devices.

In summary, the main contributions of this research are as follows:

Present CoLL-IoT, a collaborative system that detects malicious activities that are targeting IoT devices.

Implement different machine learning algorithms to achieve the best results in terms of time and space complexities.

Evaluate the proposed system on the UNSW-NB15 [8] dataset, which was recently generated using the data of real traffic.

Deploy and execute CoLL-IoT on a low-powered device and effectively detect most of the malicious activities with a low type II error rate.

Achieve a better detection rate than existing tools by using the same benchmark dataset.

The remainder of this paper is organized as follows: Section 2 discusses the background of the Internet of Things (IoT) and the edge computing paradigm. Section 3 discusses the related work. Section 4 presents the system design of CoLL-IoT. Section 5 presents the results of the proposed system and a discussion of the results. Finally, Section 6 presents the conclusion of this paper.

3. Related Work

Researchers have proposed a variety of tools to detect different attacks targeting IoT devices. These attacks are classified into the following types: physical attacks, network attacks, software attacks, and data attacks. Ibrahim et al. [6] proposed a detection tool called AD-IoT (anomaly detection of IoT), which analyzes the network traffic and uses a machine learning algorithm to detect malicious traffic in IoT devices. The proposed system consists of the following three layers: IoT layer, fog layer, and cloud layer. Moreover, they applied different machine learning algorithms to evaluate the proposed system by using the UNSW-NB15 dataset [8]. However, their results did not show the binary classification performance of the proposed system. Furthermore, the work does not describe the process of capturing the network traffic.

Kasongo and Sun [13] conducted a study to analyze the performance of intrusion detection systems using UNSW-NB15 [8]. They applied the extreme gradient boosting (XGBoost) [14] algorithm with a filter-based feature reduction technique. Subsequently, they applied different machine learning algorithms to evaluate the proposed feature reduction technique and achieved an accuracy of 90.85% for the binary classification part. Therefore, applying such a technique can improve the classification results by selecting the optimal features for the classification. Moreover, Moustafa and Slay [15] evaluated different network anomaly detection systems by using two datasets, namely UNSW-NB15 [8] and KDD99 [16]. Their statistical analysis revealed that the use of the UNSW-NB15 dataset in anomaly detection systems led to better performance than that of the KDD99 dataset, as the former contains more than 40 features that are composed of the network flow between hosts. In the case of the UNSW-NB15 dataset, the accuracy reached 85.56%; in contrast, when the KDD99 dataset was used, the highest accuracy was 92.30%. However, the study revealed that the UNSW-NB15 dataset can be considered to be more complex than the KDD99 dataset, as it contains more behavioral traffic of modern attacks.

Papamartzivanos et al. [17] proposed new detection rules that were based on a decision tree (DT) algorithm to classify network attacks and zero-day attacks that target IoT devices. The proposed tool, called Dendron, was tested on different datasets, namely KDD99, UNSW-NB15, and NSL-KDD [18]. It achieved an accuracy of 98.85% on the KDD99 dataset, while achieving an accuracy of 97.55% and 84.33% on the NSL-KDD and UNSW-NB15 datasets, respectively.

Furthermore, Parker et al. [7] utilized a deep learning detection technique to improve IoT intrusion detection systems. The proposed model, called DEMISe, combined deep extraction and mutual information selection elements with a radial basis function classifier. The Aegean WiFi impersonation attack detection (AWID) dataset was used to evaluate the proposed system [19]. It achieved a detection rate of

with the top 10 features using logistic regression (LR) classifier. However, this method takes a long time for the classification as compared to the previously discussed methods.

Zhou et al. [20] proposed another detection tool based on machine learning by using the random forests (RF) algorithm. The proposed method was tested on the KDD99 and NSL-KDD datasets and achieved an accuracy of

on the KDD99 dataset. However, despite the high accuracy, the proposed method could not detect attacks from the network traffic [21].

Anthi et al. [22] proposed a three-layer intrusion detection system using a supervised machine learning approach. The proposed system classifies network attacks on IoT devices in three phases, as follows: (i) profile the normal behavior of each IoT device connected to the network, (ii) detect malicious packets in the network on the basis of the attack behavior, and (iii) classify the attack’s type once it has been detected. The detection of malicious packets in the network achieved an F-score of

.

Nguyen et al. [23] proposed an anomaly detection system for IoT, called DÏoT, based on a federated learning (FL) approach, in which multiple devices jointly train a machine learning model without sharing their data [24]. The proposed system was trained on devices using unlabeled data to detect malicious behavior in the network traffic. The proposed system achieved a detection accuracy rate of

. However, despite the high accuracy rate achieved by DÏoT, some potential vulnerabilities exist in the federated learning approach, such as model poisoning and inference attacks [25].

Ferhat and Ahmet [26] proposed a hybrid malware detection technique that uses an autoencoder and deep neural networks (ANN). The proposed system uses the UNSW-NB15 dataset, which depicts a recent network flow of multiple attacks. The main contribution of the proposed tool is the use of an autoencoder, which allows the neural network model to learn in an unsupervised manner. The evaluation of the proposed system shows that the best detection accuracy rate achieved is

using the relu activation function.

Table 2 shows a comparison of the existing works in terms of the security threat, detection method, evaluation, utilized datasets, applied machine learning, and limitations.

Limitations of Existing Works: as malware is becoming a severe problem in the IoT paradigm, a comprehensive solution is needed to protect users’ data and safeguard the resources of IoT devices. Unfortunately, existing solutions suffer from several limitations. For example, the system proposed by Ibrahim et al. [6] analyzes network traffic on the fog computing layer, which results in high latency. Moreover, the solutions proposed in [13,15,17] produce a low detection rate and might therefore not detect malicious behavior accurately. In addition, the tool proposed in [19] takes a long time to classify a new sample as benign or malicious traffic. Additionally, the tool proposed in [22] was developed to detect only five types of IoT attacks, while CoLL-IoT is designed to detect more types of attacks. Finally, some of the existing tools, such as the one proposed in [20], do not consider typical network attacks; that tool also relies on the KDD99 dataset, which does not contain recent attacks.

Therefore, CoLL-IoT overcomes the limitations of existing tools by introducing a collaborative system that detects malicious activities that are targeting IoT devices. Furthermore, it applies several machine learning algorithms to achieve the best results in terms of time and space complexities while using a recent benchmark dataset.

4. Proposed Method

The goal of this research was to design and implement a collaborative system that detects malicious activities in IoT devices; the proposed system was named CoLL-IoT. CoLL-IoT consists of four main layers, as follows: IoT layer, network layer, fog layer, and cloud layer, as shown in Figure 4. All of the layers work collaboratively by monitoring and analyzing all of the network traffic generated and received by the IoT devices. Figure 5 shows the basic steps of the proposed system.

The first layer, the IoT layer, consists of all the IoT devices and sensors that are connected to the system. Therefore, all of the network traffic generated or received by the IoT devices in this layer will be analyzed by an intelligent system in the upper layer.

The network layer is the second layer. This layer contains intelligent edge computing devices that observe all of the network traffic generated by the IoT devices in the lower layer. All of the traffic is captured as raw packets to extract the features that allow machine learning models to distinguish abnormal traffic. Figure 6 shows the packet capturing process in this layer and the architecture of the edge computing device.

This layer consists of two detectors, called the pre-detector and the primary detector. The pre-detector uses a scanning technique to analyze all samples and detect abnormal activities. This is achieved by sending a query for each incoming and outgoing connection to VirusTotal [27] in order to classify each sample as benign or malicious. Both incoming and outgoing traffic are considered in order to protect the devices from communicating with attackers or malicious destinations. If a device initiates a new communication or receives an incoming one, the pre-detector interrupts the request for further investigation and queries VirusTotal to check the destination or source IP address of the request. If the results from the pre-detector do not indicate any malicious activity, the extracted features are passed to the primary detector, which classifies them as malicious or benign using the main model that is trained on the cloud layer. The goal of the primary detector is to detect zero-day attacks that are not yet detected by VirusTotal. If the sample is flagged as abnormal by either detector, the traffic is blocked and the sample is sent to the upper layer for further analysis to confirm the malicious activity. Moreover, the detected sample is broadcast to all other primary detector nodes as a zero-day attack. The primary detector model is stored in the internal storage of the edge device and is updated automatically on the basis of the notification received from the upper layer. There are two reasons for using VirusTotal to scan all packets in the pre-detector: (1) VirusTotal is a free service that can be accessed online through the website or the pre-designed API; and (2) VirusTotal allows users to analyze files or URLs using different antivirus and scanner systems. Algorithm 1 shows the detection procedures for CoLL-IoT.

Algorithm 1 CoLL-IoT Detection Procedures

Input: NT: Captured Network Traffic
Output: Result: 0-Normal; 1-Malicious

1: …
2: for … do
3:     …
4:     if … then
5:         …
6:     else
7:         …
8: …
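The two-stage flow described in the text above (a reputation lookup in the pre-detector first, and the primary detector only for traffic that the lookup does not flag) can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the `detect` function, the `remote_ip` packet field, and the three callables passed to it are hypothetical names, and the reputation lookup here is a local stub that does not call the VirusTotal API.

```python
from typing import Callable, Iterable, List, Sequence

NORMAL, MALICIOUS = 0, 1  # result codes as listed in Algorithm 1


def detect(
    traffic: Iterable[dict],
    is_flagged: Callable[[str], bool],                      # hypothetical pre-detector lookup
    extract_features: Callable[[dict], Sequence[float]],    # hypothetical feature extractor
    primary_predict: Callable[[Sequence[float]], int],      # hypothetical trained model
) -> List[int]:
    """Return one result code per captured packet."""
    results = []
    for packet in traffic:
        # Stage 1: pre-detector checks the remote address against a reputation source.
        if is_flagged(packet["remote_ip"]):
            results.append(MALICIOUS)
            continue
        # Stage 2: primary detector classifies the extracted features.
        features = extract_features(packet)
        label = primary_predict(features)
        results.append(MALICIOUS if label == MALICIOUS else NORMAL)
    return results


# Toy usage with stubbed components (documentation-range IP addresses).
blocklist = {"203.0.113.7"}
packets = [
    {"remote_ip": "203.0.113.7", "bytes": 120},
    {"remote_ip": "198.51.100.2", "bytes": 90000},
    {"remote_ip": "198.51.100.3", "bytes": 300},
]
results = detect(
    packets,
    is_flagged=lambda ip: ip in blocklist,
    extract_features=lambda p: [float(p["bytes"])],
    primary_predict=lambda f: int(f[0] > 10000),  # stand-in for a trained classifier
)
print(results)
```

In this toy run, the first packet is caught by the stubbed lookup, the second only by the stand-in model, and the third passes both stages. Blocking the traffic and forwarding the sample to the fog layer are omitted for brevity.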

The upper layer that is responsible for managing and maintaining a list of all the malicious activities is the fog layer, as shown in Figure 4. In this layer, a list of all the detected abnormal traffic will be aggregated from the different edge computing devices in the previous layer. Subsequently, the detected samples will be analyzed again to reduce the error rates. This step confirms the abnormal behavior of the captured packets in the lower layer. Figure 7 shows the steps to confirm and broadcast the confirmed malicious samples in the fog layer.

The cloud layer is the top layer in the CoLL-IoT detection system. This layer is composed of high computational resources. Therefore, all of the new abnormal samples detected by the connected nodes in the network layer and confirmed by the fog layer are sent to this layer. The machine learning model will be trained on all of the samples in the datasets, including the newly detected samples. Once the machine learning model is trained, the newly trained model will be published to all the nodes to update the primary detector and clear the pre-detector model. This approach helps to reduce the hardware resources consumed by the pre-detector model.

4.1. Machine Learning

Machine learning is a technique that takes large sets of data and attempts to predict a value for a new sample after discovering patterns in the previous data. In many complicated problems, designing a specific algorithm in computer science is extremely difficult. Therefore, machine learning is often used to solve these complex problems. This section discusses machine learning algorithms that have been applied to detect suspicious network traffic.

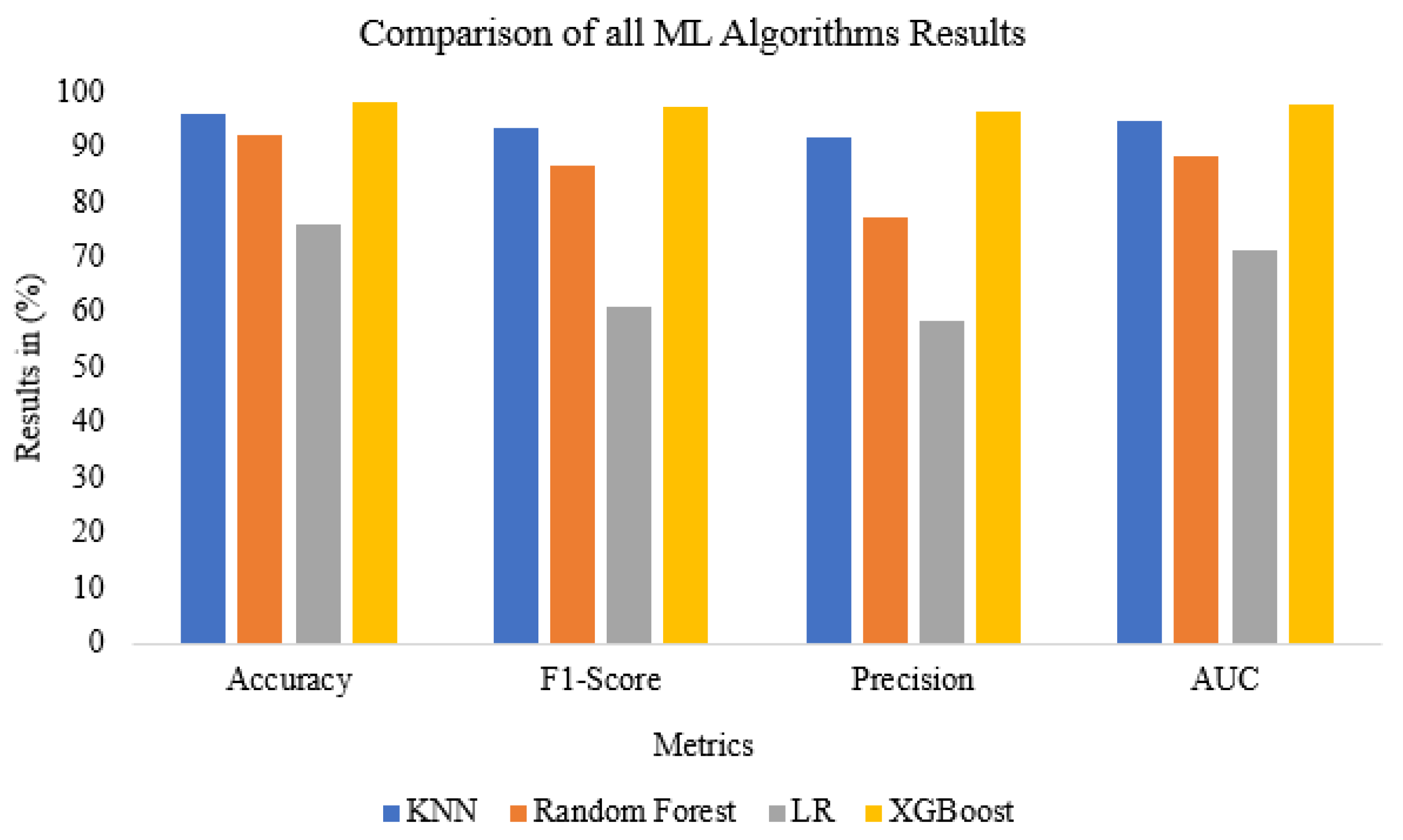

In this research, several supervised machine learning algorithms for classification were investigated and tested: K-nearest neighbors (K-NN), logistic regression (LR), random forests, and extreme gradient boosting (XGBoost). All of the ML algorithms were trained on the top 15, 20, 25, and 30 features that were selected by the F-test [28] and chi-square [29] feature selection algorithms from the 49 considered features. After training and testing all of the algorithms, the algorithm that provides the best results is saved in a pickled format to be used to classify new samples.

4.1.1. K-Nearest Neighbors (K-NN)

K-nearest neighbors (K-NN) is a machine learning technique that classifies a new sample by determining the most similar samples in the training dataset. To do so, it represents each sample as a point in an n-dimensional feature space [30]. The classification of the new sample depends on its distance to the training samples in that space and on the labels of its nearest neighbors.
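As a rough illustration of the idea, the following pure-Python sketch classifies a sample by majority vote among its k nearest training points. The function name `knn_predict`, the Euclidean distance, the choice of k = 3, and the toy two-feature data are assumptions made for this example; the paper does not state which distance metric or k was used.

```python
import math
from collections import Counter


def knn_predict(train_X, train_y, sample, k=3):
    """Label `sample` by majority vote among its k nearest training points."""
    # Euclidean distance from the new sample to every training sample.
    distances = sorted(
        (math.dist(x, sample), label) for x, label in zip(train_X, train_y)
    )
    # Count the labels of the k closest neighbors and return the most common one.
    votes = Counter(label for _, label in distances[:k])
    return votes.most_common(1)[0][0]


# Toy data: label 0 = benign, label 1 = malicious (two features per sample).
train_X = [(0.1, 0.2), (0.0, 0.1), (0.2, 0.0), (5.0, 5.1), (5.2, 4.9), (4.8, 5.0)]
train_y = [0, 0, 0, 1, 1, 1]

near_malicious = knn_predict(train_X, train_y, (4.9, 5.0))
near_benign = knn_predict(train_X, train_y, (0.1, 0.1))
print(near_malicious, near_benign)
```

Because every prediction scans the whole training set, this naive form grows linearly in cost with the number of training samples, which matters on a resource-constrained edge device.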

4.1.2. Random Forests

The random forests algorithm consists of several decision trees, each of which sorts new samples on the basis of the values of their features [30]. The classification result of a tree is given by its leaf nodes. Each tree in the forest is built on a randomly selected subset of the input data. Once a testing data item is labeled by a tree (also called a vote), the forest gives the classification result based on the most votes among all of the trees.

Extreme gradient boosting (XGBoost) [14] is another ensemble algorithm that utilizes decision trees to build a robust learning algorithm. To predict a new sample, XGBoost uses an arbitrary differentiable loss function for the result prediction. XGBoost is known for its efficiency in terms of computing time and memory utilization.

4.1.3. Logistic Regression (LR)

Logistic regression classifies the data on the basis of an equation that separates the data points from each other. To predict a new sample, it takes all of the features as input and multiplies each individual feature by a weight. The weighted sum of the features is then passed through the sigmoid function, and the result is used for the classification decision.
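The prediction step described above can be sketched in a few lines of Python. The weights, bias term, and 0.5 decision threshold below are illustrative assumptions and are not values from the paper; in practice the weights would be learned during training.

```python
import math


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def lr_predict(features, weights, bias=0.0, threshold=0.5):
    """Return (probability of the malicious class, predicted label)."""
    # Weighted sum of the features, then squashed into (0, 1) by the sigmoid.
    z = bias + sum(w * x for w, x in zip(weights, features))
    p = sigmoid(z)
    return p, int(p >= threshold)


# Hypothetical weights for a two-feature model.
weights, bias = [1.5, -0.5], -1.0
p_a, label_a = lr_predict([2.0, 1.0], weights, bias)  # z = 1.5
p_b, label_b = lr_predict([0.0, 4.0], weights, bias)  # z = -3.0
print(round(p_a, 4), label_a, round(p_b, 4), label_b)
```

Lowering the threshold trades a higher type I error for a lower type II error, which may be preferable when missed attacks are costlier than false alarms.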

4.2. Feature Selection

Applying a feature selection algorithm to the extracted features is an essential step to find the best set of features that can be used to distinguish benign traffic from malicious traffic. In this research, two feature selection algorithms were applied, namely the F-test [28] and chi-square [29].

The F-test feature selection algorithm is used by CoLL-IoT to reduce the number of extracted features. It is a filter method that computes a score for each feature by considering the relationship between the feature and the target variable [28]. The F-test is a statistical test that compares models and checks whether there are any significant differences between them. The score for each feature is calculated using Equation (1) [31], which is expressed in terms of the following quantities for class j, where j is equal to 1 or 2 and denotes the class index: the mean of the i-th feature for each class, the sizes of the groups of first-class and second-class samples, and the standard deviation of the i-th feature for each class.

Figure 8 shows the top 20 selected features after applying the F-test feature selection.

Table 3 shows the description of the top 20 features that were selected by the F-test feature selection algorithm.

Moreover, the chi-square feature selection algorithm is also considered to find the best features. It measures the dependence between the label and each feature, as shown in Equation (2).

$$\chi^2(t, c) = \frac{N\,(AD - CB)^2}{(A + C)(B + D)(A + B)(C + D)} \tag{2}$$

where t and c are the feature dimension and label to be evaluated, respectively; N represents the number of samples; A represents the number of times that t and c co-occur; B represents the number of times t occurs without c; C represents the number of times c occurs without t; and D represents the number of times neither t nor c occurs.
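The chi-square score can be computed directly from the four co-occurrence counts A, B, C, and D defined above. The sketch below assumes the standard 2x2 contingency form of the statistic, N(AD - CB)^2 / ((A + C)(B + D)(A + B)(C + D)) with N = A + B + C + D; the function name `chi_square_score` and the example counts are hypothetical.

```python
def chi_square_score(A: int, B: int, C: int, D: int) -> float:
    """Chi-square statistic for a feature t and a label c.

    A: t and c co-occur      B: t occurs without c
    C: c occurs without t    D: neither t nor c occurs
    """
    N = A + B + C + D
    denominator = (A + C) * (B + D) * (A + B) * (C + D)
    if denominator == 0:
        # A row or column of the contingency table is empty,
        # so the feature carries no usable information.
        return 0.0
    return N * (A * D - C * B) ** 2 / denominator


# A feature that perfectly tracks the label scores high ...
perfect = chi_square_score(A=10, B=0, C=0, D=10)
# ... while a feature that is independent of the label scores zero.
independent = chi_square_score(A=5, B=5, C=5, D=5)
print(perfect, independent)
```

Ranking all features by this score and keeping the k highest is one way to obtain a top-k feature set of the kind used in the experiments.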

4.3. Dataset

CoLL-IoT was evaluated using the UNSW-NB15 [8] dataset, which was recently generated using the data of real traffic. The dataset was created by the Cyber Range Lab of the Australian Centre for Cyber Security in 2015. It contains nine types of attacks, as shown in Table 4: denial-of-service (DoS), fuzzers, backdoors, exploits, analysis, generic, worms, shellcode, and reconnaissance. These types were analyzed based on 49 features. There were 175,341 records in the training set and 82,332 records in the testing set.

4.4. Evaluation Metrics

To evaluate the performance of the detection model, the following metrics were considered:

Accuracy: the ratio of the number of correctly classified samples to the total number of samples. Accuracy was calculated using Equation (3):

$$\mathrm{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN} \tag{3}$$

where TP refers to true positive, which means that the model correctly classifies a malicious sample as malicious; TN refers to true negative, which means that the model correctly classifies a benign sample as benign; FP refers to false positive, which means that the model incorrectly classifies a benign sample as malicious; and FN refers to false negative, which means that the model incorrectly classifies a malicious sample as benign.

Type I Error or FP Rate: the ratio of the number of benign samples that are not classified correctly to the total number of benign samples. This was calculated using Equation (4):

$$\mathrm{FP\ Rate} = \frac{FP}{FP + TN} \tag{4}$$

Type II Error or FN Rate: the ratio of the number of malicious samples that are not classified correctly to the total number of malicious samples. This was calculated using Equation (5):

$$\mathrm{FN\ Rate} = \frac{FN}{FN + TP} \tag{5}$$

F1-Score: this refers to how discriminative the model is, and it was calculated using Equation (6):

$$F_1 = 2 \times \frac{\mathrm{precision} \times \mathrm{recall}}{\mathrm{precision} + \mathrm{recall}} \tag{6}$$

where precision represents the ratio of the malicious samples that are classified correctly to the total number of samples that are classified as malicious; and recall represents the ratio of the malicious samples that are correctly classified to the total number of malicious samples.

Additionally, sensitivity and specificity metrics were considered to evaluate the performance of the detection model. Therefore, sensitivity represents the percentage of malicious samples that were correctly classified as malicious; and specificity represents the percentage of benign samples that were classified correctly as benign.
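To make the metric definitions concrete, the following sketch computes them from the four confusion-matrix counts. The function name `evaluate` and the example counts are made up for illustration and are not results from the paper.

```python
def _ratio(numerator: float, denominator: float) -> float:
    """Safe division that returns 0.0 when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def evaluate(tp: int, tn: int, fp: int, fn: int) -> dict:
    """Compute the evaluation metrics from confusion-matrix counts."""
    precision = _ratio(tp, tp + fp)
    recall = _ratio(tp, tp + fn)  # identical to sensitivity
    return {
        "accuracy": _ratio(tp + tn, tp + tn + fp + fn),
        "fp_rate": _ratio(fp, fp + tn),      # type I error
        "fn_rate": _ratio(fn, fn + tp),      # type II error
        "precision": precision,
        "recall": recall,
        "f1": _ratio(2 * precision * recall, precision + recall),
        "sensitivity": recall,
        "specificity": _ratio(tn, tn + fp),
    }


# Hypothetical counts: 100 malicious and 100 benign test samples.
metrics = evaluate(tp=90, tn=80, fp=20, fn=10)
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")
```

Note that sensitivity equals recall and equals one minus the FN rate, while specificity equals one minus the FP rate, so a low type II error directly implies high sensitivity.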

6. Conclusions

This paper presented CoLL-IoT, a collaborative detection system to detect malicious activities in IoT devices. The proposed system consisted of the following four main layers: the IoT layer, network layer, fog layer, and cloud layer. All of the layers worked collaboratively to analyze the network traffic in order to detect malicious activities. First, different machine learning algorithms were implemented to achieve the best results in terms of time and space complexities. Second, CoLL-IoT was deployed and executed on a resource-constrained device and effectively detected most of the malicious activities with a low type II error rate. Third, CoLL-IoT was evaluated on the UNSW-NB15 dataset, which contains recent IoT attacks, namely: denial-of-service (DoS), fuzzers, backdoors, exploits, analysis, generic, worms, shellcode, and reconnaissance. The evaluation results showed that CoLL-IoT achieved up to accuracy with a low type II error rate of . Finally, the proposed tool achieved a better detection rate than existing tools by using the same benchmark dataset.

As future work, we plan to implement a deep learning algorithm using the LITNET-2020 benchmark dataset [36]. This dataset has more features of the network flow than UNSW-NB15. Moreover, it has 12 types of attacks, which will help to build a model that can detect recent attacks in this area.