1. Introduction

Natural language generation (NLG) based on neural network models has developed remarkable progress recently. Large pretrained language models with an encoder–decoder structure, such as BERT2BERT [

1], BART [

2], and T5 [

3], are more than capable of generating fluent and coherent text, gaining high performance on various NLG tasks. However, such developments are mainly made on the surface realization level: how to “say” the words. For what to “say”, formally called content planning, many problems remain unsolved, one of which is low fidelity. Generation fidelity refers to how faithful the generated target is to the underlying facts, data, knowledge, or meaning representation, i.e., for the data-to-text task, the generated text should only describe what the data explicitly describe or imply. Hence, the concept of fidelity can be further interpreted as (1) the surface-level factual correctness (explicit) and (2) the logical correctness (implicit) of the generated target. Such a concept was brought up by a recent study of LogicNLG [

4].

For the first type of fidelity, a series of recent studies adopt the copy mechanism [

5,

6] or model content planning as an individual stage [

7,

8,

9] to gain higher surface-level fidelity, though recent encoder–decoder-based deep neural network models tend to be trained in an end-to-end fashion [

10,

11,

12] instead of a cascade of two stages. Most of these approaches simply copied or restated the underlying facts from the input data and gained considerable results on factual correctness [

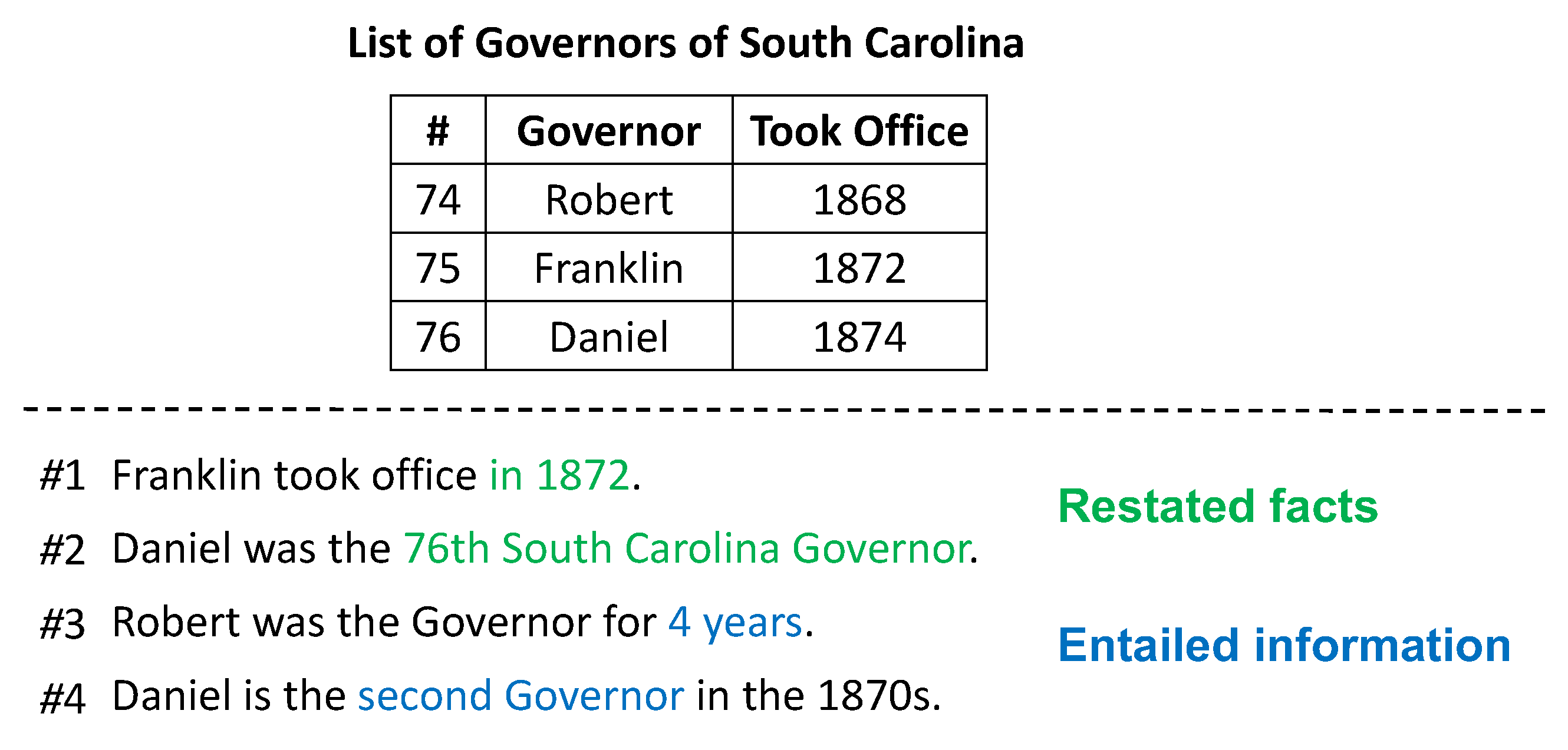

13]. However, humans are capable of discovering implicit information by inspecting the data and inferring from the underlying facts (e.g., “Daniel was the Governor for 4 years.” in

Figure 1). We believe such entailed information is important for a deeper level of understanding and generating more valuable targets. Therefore, the main focus of this work is high-fidelity logical content generation. Some factual and logical statements are shown in

Figure 1.

A logical natural language generation (logical NLG) task was already brought up by LogicNLG [

4]. The task definition is to generate natural language statements that can be logically inferred from the given data (i.e., the table). To avoid confusion, the logic here refers to the inferences with symbolic operations over the input data [

14], which is mainly numerical reasoning. The generated text is then automatically evaluated on three different metrics specifically designed for logical fidelity evaluation: the parsing-based, NLI-based, and adversarial evaluation. More details of the evaluation metrics can be found in their original paper. Results of recent studies on the logical NLG task are still primitive, which is mainly due to that (1) it is challenging for existing NLG models to perform reasoning and symbolic calculations, and (2) the search space of logically entailed statements is exponentially large due to a vast number of combinations of different operations and arguments from the table. To partially address these challenges, LogicNLG proposes a model using the coarse-to-fine generation scheme: the model generates a template that determines the logical structure globally first and fills the slots conditioned on the template. The coarse-to-fine generation schema is shown in

Figure 2. To construct a logical template, the numbers and entities are replaced by a placeholder “[ENT]”.

The decoder-based model predicts both the the template and the slot-filled target Y using a left-to-right decoding style. The template and the final text is separated with the special token “[SEP]”, denoted by . The goal is to maximize the overall likelihood of , where is a sequence of table headers, table captions, and table content.

Though such a coarse-to-fine generation scheme could partially improve the logical fidelity by constraining the generated target to a specific logical structure, we argue that the slot-filling stage remains uncontrolled. The model lacks reasoning abilities to fill the correct values that fit the logical template. Suppose that the logical template “[ENT] was [ENT] for [ENT] years” in

Figure 2 is correctly generated. The model may not fully understand the logic information implied by the word “for” and predict the wrong slot value: Robert was actually the governor for “4” years instead of “3” years, based on the given table. A recent work, TAPAS [

15], suggests that pretrained models are fairly accurate in “guessing” numbers that are close to the target. Even the state-of-the-art large language model ChatGPT [

16] may make mistakes in solving math equations, which is a natural problem for data-driven probabilistic models. We argue that surface-realization (how to say it) can be flexible, but the logic behind the words should be absolutely correct. To address the “guessing” problem, we suggest that the logic verification should be completed by a computer program, as models are “creative writers” while computer programs are deterministic. Hence, we propose to guide the slot-filling stage with semantic parsing techniques.

Another prior work, Logic2Text [

17], formulates the logical NLG task as a logic-form-to-text problem. The model is provided with both the source table and a logic form, which represents the semantics of the target text. The training objective is

where

Y is the final target,

is a sequence of table headers, table captions, and the table content, and

S is the logic form. With the logic form included, the logic behind the word “for” from “Robert was the governor for 4 years” can now be represented by a subfunction

belonging to the logic form.

,

denote the two parameters of the logic function, and

calculates the difference between two arguments. The main problem for Logic2Text is the approach they propose to leverage the logic forms, which is to (1) consider the logic form as a known variable, and (2) feed the model with both the source data (table) and the logic form linearly as pure text. Such an approach poses great challenges for the model’s ability to understand the semantics behind the logic forms, as the logic forms are mainly diversified graph structures. The limited amount of annotated logic-form-to-text pairs is insufficient for fully training a model to understand the semantics.

To address the aforementioned problems and further encourage research in this direction, we propose SP-NLG: a

semantic-

parsing-

guided

natural

language

generation framework to generate content with high logical fidelity. The framework consists of three modules: (1) logical template generation, (2) semantic parsing, and (3) target ranking.

Figure 3 is an overview of our framework. The modules are presented from bottom to top.

Similar to the coarse part of LogicNLG, we generate a logical template based on the source data (table) , which can be formally described as , where is the learnable parameter. Instead of removing entities and numbers from the target to construct the logical templates, we tie the parameters of the logic form with the slots of the logical template using heuristic rules. We repurpose the annotated logic-form–text pairs from Logic2Text train a semantic parser with text–logic-form pairs. The training objective is to maximize the likelihood of , where S is the logic form with slot-tied parameters to the logical template , and is the the learnable parameter. Different from Logic2Text, instead of requiring the model to understand the logic form, we transform the understanding problem into a translation problem: the model only needs to “translate” the logical template into a logic form S. Such transformation significantly reduces the difficulty of model learning. Different from LogicNLG, which predicts the slot-filled target next to the template in sequence, we design a slot-tied back-search algorithm on the logic form to find correct arguments in real time. As the the parameters of the logic form are tied to the slots of the logical template in one-to-one correspondence, these arguments are also slot values for the template. The correct argument for a single logic form (also a single template) may not be distinct. We rank all slot-filled templates (candidate targets) and consider the one with the highest ranking score as the final generated target. The ranking label is based on the BLEU score of the candidate target against the golden target. With SP-NLG, the logical correctness of the generated target can be guaranteed.

To conclude, the contribution of this work can be summarized as follows:

(1) A semantic-parsing-guided natural language generation framework to generate logical content. The design of slot-tied back-searching allows a generation model to leverage the arguments and results of the logic form in real time, which guarantees the logical correctness of the output.

(2) Experiment results of a model built on SP-NLG demonstrates the effectiveness of our framework on generating text with high logical fidelity.

5. Experiments and Results

We examine the SP-NLG framework with BART [

2] (except the ranking module, which is based on BERT [

52]), a pretrained language model targeted on NLG tasks with both encoder–decoder structures. It should be noted that our framework can be built on any pretrained language model with a decoder, such as BERT2BERT [

1] and T5 [

3].

For the baseline model, we use the pretrained model “GPT-TabGen” and a nonpretrained model “Field-Infusing + Trans”. Both methods are from the original work of LogicNLG [

4]. The motivation of this work is to propose a new perspective of logical natural language generation. The idea of combining semantic parsing techniques with generation approaches provides new insights and possibilities on generating logical inferred content instead of simply restating facts. Thus, comparing the performance of a specific model built on our framework with other models is not a priority of this work. The BART-based model we built on the SP-NLG framework should be considered as a baseline. In fact, each module of this framework can be further optimized with additional model designs or a larger amount of annotated data. We hope the SP-NLG framework may encourage further endeavors on the logical NLG task. As only the Logic2Text dataset provides annotated logic forms, we use the LogicNLG dataset here for data augmentation purposes. The experiments are conducted on the Logic2Text dataset.

5.1. Setup

We follow the same data preprocessing steps adopted by Chen et al. [

4]. First, the target is aligned with the given table with a entity parser to build a logical template. Then, we identify the arguments matching the same aligned entities of the target and replace them with unique placeholders. We save the correspondence between the slots of the logical template and the placeholders in order to fill the arguments searched from Algorithm 1.

Both the logical template generation module and the semantic parsing module are initialized with BART, and the embedding weights of the two modules can be either shared or not shared. We conduct experiments on both settings and compare the difference. The target ranking model is built on BERT. The max input and output tokens are set to 1024 and 128, respectively based on the top 90% token length of the dataset examples. We pad the input tokens to the max length with a special “” token. Tokens that exceed the maximum length will be truncated. The learning rate is set to , combined with a linear learning rate scheduler. We further employ the Adam optimizer with the epsilon set to . Decoding is conducted via beam search with a beam size of four. Due to limited computing resources, we train our model with eight NVIDIA Tesla V100 32G GPUs. The final batch size combined with gradient accumulation and multiple GPUs is 64. We evaluate both the baseline and our model on the following metrics: BLEU-1/2/3, SP-Acc, and NLI-Acc. The SP-Acc and NLI-Acc are two automatic metrics proposed by LogicNLG to evaluate the logical fidelity of the generated text from the aspect of parsing and entailment. The metrics are computed with their official released scripts.

5.2. Results

Table 6 shows the comparison between different settings of our approach and the baseline. We observe that (1) the SP-NLG model with back-search outperforms all methods on almost all metrics, (2) shared embedding weights does not further improve the model performance, and (3) due to the best model checkpoint being selected based on the best BLEU-3, the SP-NLG model tends to be optimized for maximizing the BLEU-3 score.

Based on the results of the oracle setting, which is evaluated on the golden target, we discover that the upper bounds for SP-Acc and NLI-Acc are 64.3 and 72.8, respectively. The SP-NLG (with back-search) setting assumes that the logical template and the corresponding logic form with slots are given, and only the slot-tied back-search algorithm and the target ranking module are employed for SP-NLG. Comparison between these results with the oracle results suggests that the slot-tied back-search algorithm is more than capable of searching correct arguments (template slot values), which contributes greatly to the logical fidelity of the final generated target. Target ranking is also an effective design to determine the best arguments for the logical template in terms of surface-level fidelity.

Almost all metrics except SP-Acc dropped when sharing the embedding weights of the logical template module with the semantic parser, which suggests that the logical template module and the semantic parser should be considered as individual components. In fact, this could be an advantage. As each module is replaceable, the model is not required to be trained jointly. All modules can be trained offline separately and plugged in/out of the framework.

We choose the best checkpoint of our model based on the BLEU-3 score. To achieve a better result on SP-Acc or NLI-Acc, the evaluation strategy can be changed to select the best checkpoint based on SP-Acc or NLI-Acc. As these metrics are model-based, which is time-costly, we did not try these strategies. Another approach is to optimize the semantic parser. The original work of Logic2Text annotated around 10,000 logic forms; while this may be sufficient for their approach by considering the logic form as a given input, the scale of annotated data is far from enough for fully training a semantic parser. To address this problem, the following methods should be considered: