Reliable Multi-View Deep Patent Classification

Abstract

1. Introduction

- (1)

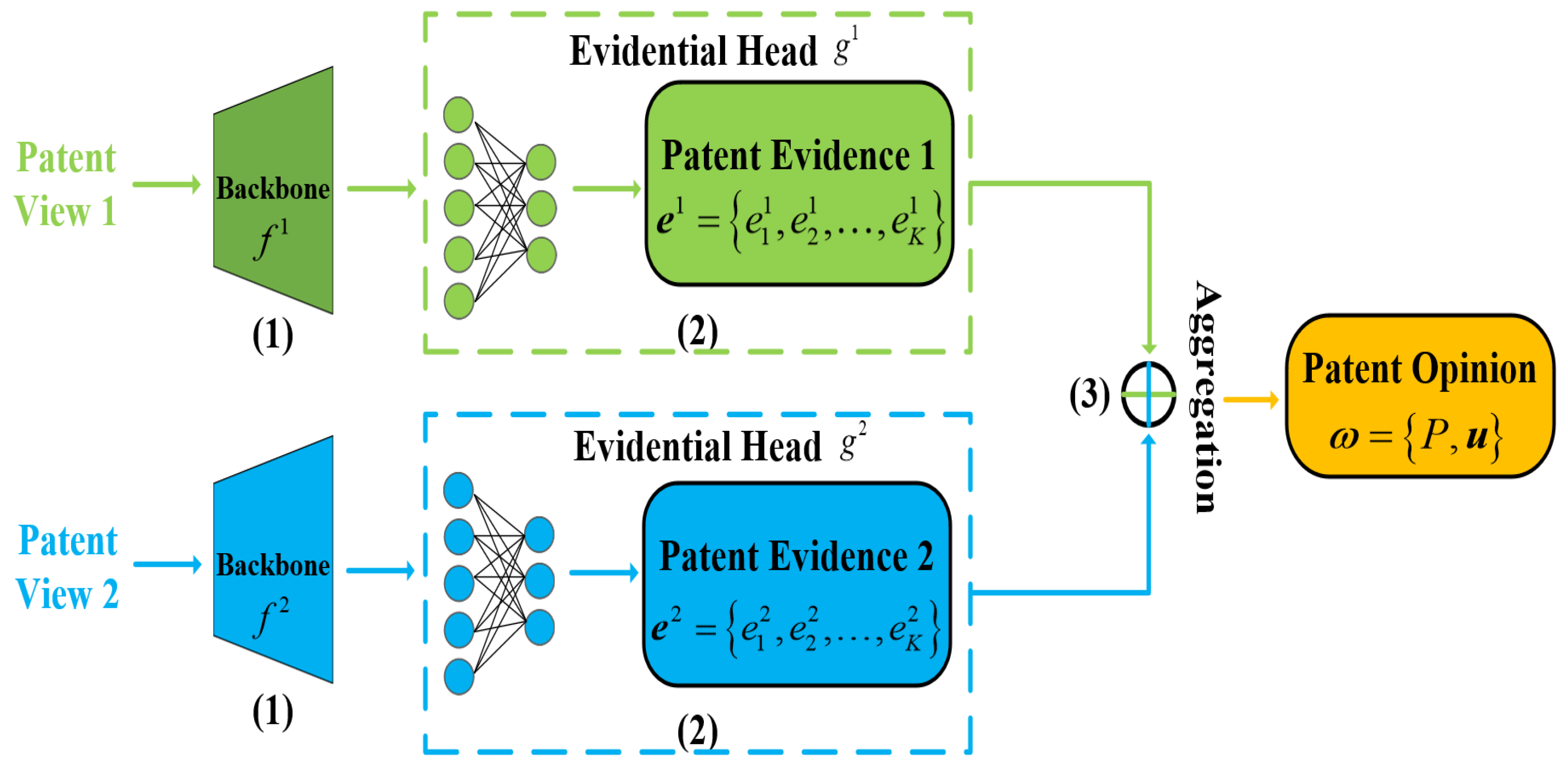

- We first propose a reliable multi-view deep patent classification (RMDPC) method that integrates the complementary information from each view in the latent patent feature space to improve classification performance and guarantee the reliability of the patent classification results.

- (2)

- By constructing a simple and effective fusion strategy using evidence theory, RMDPC can provide a theoretical reliability guarantee that reduces the overall uncertainty of the patent classification results while integrating each patent view effectively.

- (3)

- The experimental results on a large-scale real-world patent multi-view dataset including 759,809 data validate the superiority of our method in terms of accuracy, reliability, and robustness.
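The evidence-theoretic fusion strategy in contribution (2) is not spelled out in this section. As a hedged illustration only, the sketch below implements the reduced Dempster's rule for combining subjective opinions that is used in trusted multi-view classification (Han et al.). Whether RMDPC uses exactly this rule is an assumption.

Each view contributes an opinion made of per-class belief masses $b_k$ and an uncertainty mass $u$, with $\sum_k b_k + u = 1$. Two opinions are combined through the conflict $C = \sum_{i \ne j} b^1_i b^2_j$. The helper names `combine_opinions` and `fuse_views` are hypothetical.

```python
from typing import List, Tuple

# An opinion is (belief masses b_k over K classes, uncertainty mass u).
Opinion = Tuple[List[float], float]


def combine_opinions(op1: Opinion, op2: Opinion) -> Opinion:
    """Fuse two subjective opinions with the reduced Dempster's rule."""
    b1, u1 = op1
    b2, u2 = op2
    if len(b1) != len(b2):
        raise ValueError("opinions must cover the same classes")
    # Conflict: belief that the two views assign to different classes.
    conflict = sum(
        b1[i] * b2[j]
        for i in range(len(b1))
        for j in range(len(b2))
        if i != j
    )
    norm = 1.0 - conflict
    if norm <= 0.0:
        raise ValueError("total conflict: opinions cannot be combined")
    # Agreement on class k, plus each view's belief discounted by the other's uncertainty.
    b = [(b1[k] * b2[k] + b1[k] * u2 + b2[k] * u1) / norm for k in range(len(b1))]
    # Uncertainty survives only where both views are uncertain.
    u = u1 * u2 / norm
    return b, u


def fuse_views(opinions: List[Opinion]) -> Opinion:
    """Fold the pairwise rule over any number of views (e.g. title, abstract)."""
    if not opinions:
        raise ValueError("need at least one opinion")
    fused = opinions[0]
    for op in opinions[1:]:
        fused = combine_opinions(fused, op)
    return fused
```

Consider a title-view opinion `([0.6, 0.1], 0.3)` and an abstract-view opinion `([0.5, 0.2], 0.3)`. The conflict is $C = 0.17$, and the fused uncertainty is $0.09 / 0.83 \approx 0.108$, below either input. This holds in general for this rule. Expanding gives $1 - C = \sum_k b^1_k b^2_k + u^2 \sum_k b^1_k + u^1 \sum_k b^2_k + u^1 u^2 \ge \max(u^1, u^2)$. The fused $u$ therefore never exceeds the smaller input uncertainty, which is the kind of uncertainty reduction described in contribution (2).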

2. Related Works

2.1. Deep Patent Classification

2.2. Multi-View Deep Learning

2.3. Uncertainty in Deep Learning

2.4. Uncertainty in Evidence Theory

3. Method

3.1. Overview

3.2. Reliable Multi-View Deep Patent Classification

Algorithm 1. Reliable Multi-View Deep Patent Classification (RMDPC)

3.3. Theoretical Study

4. Experiments

4.1. Datasets

4.2. Implementations

4.3. Comparative Methods

- DCPC: The CNN-based deep patent classification method extracts features from the patent data using a conventional convolutional neural network.

- DRPC: The ResNet-based deep patent classification method uses a ResNet to extract features from the patent data and produce the classification results.

- DBPC: The BERT-based deep patent classification method uses a BERT model as the feature extractor to classify the patent data.

- MCDO: The Monte Carlo dropout method [32] interprets training a network with dropout as approximate inference in a Bayesian neural network and estimates uncertainty by keeping dropout active at test time.

- DE: The deep ensemble method [33] trains multiple deep sub-models and combines their predictions.

- EDL: The evidential deep learning method [14] treats the network outputs as evidence, places a Dirichlet distribution on the class probabilities, and forms a subjective opinion within evidence theory to obtain predictions together with their uncertainty.

- DCCA: Deep canonical correlation analysis [25] uses deep neural networks to learn nonlinear transformations of the views whose outputs are maximally correlated.

- DCCAE: Deep canonically correlated autoencoders [27] combine the canonical correlation objective with autoencoder reconstruction to learn a common representation.

- MVTCAE: The multi-view total correlation autoencoder [28] uses an autoencoder to capture the correlations among multiple views and learn a complete representation.

- ETMC: Enhanced trusted multi-view classification [29] addresses uncertainty estimation in multi-view classification to produce reliable classification results.
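To make the EDL baseline concrete, the sketch below shows how evidential deep learning (Sensoy et al. [14]) turns raw network outputs into a subjective opinion:

- non-negative evidence $e_k$ gives Dirichlet parameters $\alpha_k = e_k + 1$;
- the Dirichlet strength is $S = \sum_k \alpha_k$;
- the belief masses are $b_k = e_k / S$ and the uncertainty is $u = K / S$ for $K$ classes.

Using softplus as the evidence activation is an assumption; ReLU and exponential activations are also common. `evidence_to_opinion` is a hypothetical helper, not the baselines' released code.

```python
import math
from typing import List, Tuple


def softplus(x: float) -> float:
    # Numerically stable log(1 + exp(x)); always non-negative.
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def evidence_to_opinion(logits: List[float]) -> Tuple[List[float], float, List[float]]:
    """Map raw outputs to (belief masses, uncertainty, expected class probabilities)."""
    evidence = [softplus(z) for z in logits]   # e_k >= 0
    alpha = [e + 1.0 for e in evidence]        # Dirichlet parameters alpha_k = e_k + 1
    strength = sum(alpha)                      # S = sum_k alpha_k
    num_classes = len(alpha)
    belief = [e / strength for e in evidence]  # b_k = e_k / S
    uncertainty = num_classes / strength       # u = K / S, so sum(b) + u = 1
    expected_prob = [a / strength for a in alpha]  # mean of Dir(alpha)
    return belief, uncertainty, expected_prob
```

Uniformly strongly negative outputs yield almost no evidence, so $u$ approaches 1. This is the behaviour one would want from an evidential model on an uninformative view, such as the garbled inputs in Cases 3 and 4 of the example table.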

4.4. Ablation Study

4.5. Comparison with the Deep Patent Classification Methods Using the Best View

4.6. Comparison with the Uncertainty-Based Classification Methods Using the Best View

4.7. Comparison with the State-of-the-Art Multi-View Deep Classification Methods

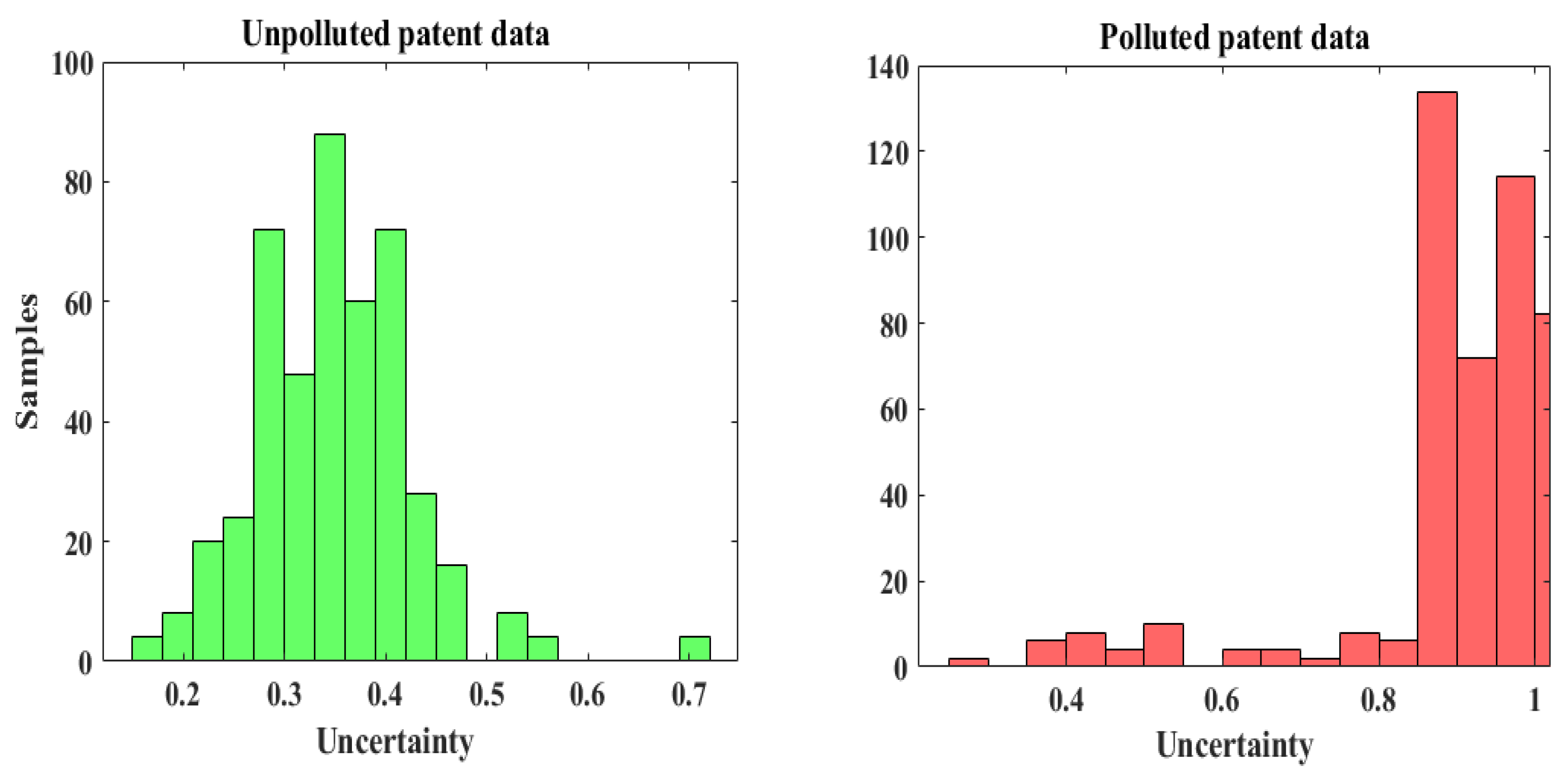

4.8. Reliability Estimation

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Li, Z.; Tate, D.; Lane, C.; Adams, C. A framework for automatic TRIZ level of invention estimation of patents using natural language processing, knowledge-transfer and patent citation metrics. Comput. Aided Des. 2012, 44, 987–1010.

- Li, S.; Hu, J.; Cui, Y.; Hu, J. DeepPatent: Patent classification with convolutional neural networks and word embedding. Scientometrics 2018, 117, 721–744.

- Zhang, L.; Li, L.; Li, T. Patent mining: A survey. ACM SIGKDD Explor. Newsl. 2015, 16, 1–19.

- Larkey, L. Some issues in the automatic classification of US patents. In Proceedings of the Working Notes for the AAAI-98 Workshop on Learning for Text Categorization, Madison, WI, USA, 27 July 1998; pp. 87–90.

- Liu, W.; Yue, X.; Chen, Y.; Denoeux, T. Trusted Multi-View Deep Learning with Opinion Aggregation. Proc. AAAI Conf. Artif. Intell. 2022, 36, 7585–7593.

- Denœux, T.; Younes, Z.; Abdallah, F. Representing uncertainty on set-valued variables using belief functions. Artif. Intell. 2010, 174, 479–499.

- Shafer, G. A Mathematical Theory of Evidence; Princeton University Press: Princeton, NJ, USA, 1976.

- Dempster, A.P. A generalization of Bayesian inference. J. R. Stat. Soc. Ser. B (Methodol.) 1968, 30, 205–232.

- MacKay, D.J.C. A practical Bayesian framework for backpropagation networks. Neural Comput. 1992, 4, 448–472.

- Neal, R.M. Bayesian Learning for Neural Networks; Springer Science and Business Media: Berlin/Heidelberg, Germany, 2012.

- Graves, A. Practical variational inference for neural networks. Adv. Neural Inf. Process. Syst. 2011, 24, 2348–2356.

- Ranganath, R.; Gerrish, S.; Blei, D. Black box variational inference. In Proceedings of the Seventeenth International Conference on Artificial Intelligence and Statistics, Reykjavik, Iceland, 22–25 April 2014; pp. 814–822.

- Blundell, C.; Cornebise, J.; Kavukcuoglu, K.; Wierstra, D. Weight uncertainty in neural networks. In Proceedings of the 32nd International Conference on Machine Learning, Lille, France, 6–11 July 2015; pp. 1613–1622.

- Sensoy, M.; Kaplan, L.; Kandemir, M. Evidential deep learning to quantify classification uncertainty. Adv. Neural Inf. Process. Syst. 2018, 31, 3179–3189.

- Krestel, R.; Chikkamath, R.; Hewel, C.; Risch, J. A survey on deep learning for patent analysis. World Pat. Inf. 2021, 65, 102035.

- Yoo, Y.; Lim, D.; Heo, T.S. Solar cell patent classification method based on keyword extraction and deep neural network. arXiv 2021, arXiv:2109.08796.

- Haghighian Roudsari, A.; Afshar, J.; Lee, W.; Lee, S. PatentNet: Multi-label classification of patent documents using deep learning based language understanding. Scientometrics 2022, 127, 207–231.

- Roudsari, A.H.; Afshar, J.; Lee, S.; Lee, W. Comparison and analysis of embedding methods for patent documents. In Proceedings of the 2021 IEEE International Conference on Big Data and Smart Computing (BigComp), Jeju Island, Republic of Korea, 17–20 January 2021; IEEE: Piscataway, NJ, USA, 2021; pp. 152–155.

- Hu, J.; Li, S.; Hu, J.; Yang, G. A hierarchical feature extraction model for multi-label mechanical patent classification. Sustainability 2018, 10, 219.

- Abdelgawad, L.; Kluegl, P.; Genc, E.; Falkner, S.; Hutter, F. Optimizing neural networks for patent classification. In Joint European Conference on Machine Learning and Knowledge Discovery in Databases; Springer: Cham, Switzerland, 2019; pp. 688–703.

- Kucer, M.; Oyen, D.; Castorena, J.; Wu, J. DeepPatent: Large scale patent drawing recognition and retrieval. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, Waikoloa, HI, USA, 4–8 January 2022; pp. 2309–2318.

- Roudsari, A.H.; Afshar, J.; Lee, C.C.; Lee, W. Multi-label patent classification using attention-aware deep learning model. In Proceedings of the 2020 IEEE International Conference on Big Data and Smart Computing (BigComp), Busan, Republic of Korea, 19–22 February 2020; IEEE: Piscataway, NJ, USA, 2020; pp. 558–559.

- Tang, P.; Jiang, M.; Xia, B.N.; Pitera, J.W.; Welser, J.; Chawla, N.V. Multi-label patent categorization with non-local attention-based graph convolutional network. Proc. AAAI Conf. Artif. Intell. 2020, 34, 9024–9031.

- Fang, L.; Zhang, L.; Wu, H.; Xu, T.; Zhou, D.; Chen, E. Patent2Vec: Multi-view representation learning on patent-graphs for patent classification. World Wide Web 2021, 24, 1791–1812.

- Andrew, G.; Arora, R.; Bilmes, J.; Livescu, K. Deep canonical correlation analysis. In Proceedings of the 30th International Conference on Machine Learning, Atlanta, GA, USA, 16–21 June 2013; pp. 1247–1255.

- Zhang, C.; Cui, Y.; Han, Z.; Zhou, J.T.; Fu, H.; Hu, Q. Deep partial multi-view learning. IEEE Trans. Pattern Anal. Mach. Intell. 2020; early access.

- Wang, W.; Arora, R.; Livescu, K.; Bilmes, J. On deep multi-view representation learning. In Proceedings of the 32nd International Conference on Machine Learning, Lille, France, 6–11 July 2015; pp. 1083–1092.

- Han, Z.; Zhang, C.; Fu, H.; Zhou, J.T. Trusted Multi-View Classification with Dynamic Evidential Fusion. IEEE Trans. Pattern Anal. Mach. Intell. 2022.

- Xu, J.; Li, W.; Liu, X.; Zhang, D.; Liu, J.; Han, J. Deep embedded complementary and interactive information for multi-view classification. Proc. AAAI Conf. Artif. Intell. 2020, 34, 6494–6501.

- Xu, S.; Chen, Y.; Ma, C.; Yue, X. Deep evidential fusion network for medical image classification. Int. J. Approx. Reason. 2022, 150, 188–198.

- Gal, Y.; Ghahramani, Z. Dropout as a Bayesian approximation: Representing model uncertainty in deep learning. In Proceedings of the 33rd International Conference on Machine Learning, New York, NY, USA, 20–22 June 2016; pp. 1050–1059.

- Gal, Y.; Ghahramani, Z. Bayesian convolutional neural networks with Bernoulli approximate variational inference. arXiv 2015, arXiv:1506.02158.

- Lakshminarayanan, B.; Pritzel, A.; Blundell, C. Simple and scalable predictive uncertainty estimation using deep ensembles. Adv. Neural Inf. Process. Syst. 2017, 30, 6402–6413.

- Zhou, X.; Yue, X.; Xu, Z.; Denoeux, T.; Chen, Y. PENet: Prior evidence deep neural network for bladder cancer staging. Methods 2022, 207, 20–28.

- Zhou, X.; Yue, X.; Xu, Z.; Denoeux, T.; Chen, Y. Deep Neural Networks with Prior Evidence for Bladder Cancer Staging. In Proceedings of the 2021 IEEE International Conference on Bioinformatics and Biomedicine (BIBM), Houston, TX, USA, 9–12 December 2021; pp. 1221–1226.

- Jøsang, A. Subjective Logic: A Formalism for Reasoning under Uncertainty; Springer: Berlin/Heidelberg, Germany, 2018.

- Jøsang, A.; Cho, J.H.; Chen, F. Uncertainty characteristics of subjective opinions. In Proceedings of the 21st International Conference on Information Fusion (FUSION), Cambridge, UK, 10–13 July 2018; IEEE: Piscataway, NJ, USA, 2018; pp. 1998–2005.

- Guo, C.; Pleiss, G.; Sun, Y.; Weinberger, K.Q. On calibration of modern neural networks. In Proceedings of the 34th International Conference on Machine Learning, Sydney, Australia, 6–11 August 2017.

- Kingma, D.P.; Ba, J. Adam: A method for stochastic optimization. arXiv 2014, arXiv:1412.6980.

- Geng, Y.; Han, Z.; Zhang, C.; Hu, Q. Uncertainty-aware multi-view representation learning. Proc. AAAI Conf. Artif. Intell. 2021, 35, 7545–7553.

| Case | Patent Title View | Patent Abstract View | Category |
|---|---|---|---|
| 1 | Eco-board | Steel plate with a layer of an inorganic material fireproof composite board glued on each side. | B32B3/18 |
| 2 | Eco-board | A protective layer of an inorganic nano waterproof fireproof material. | E04F13/075 |
| 3 | @&$%@% | A new environmentally friendly aluminum alloy material. | Inconsistency sources |
| 4 | @&$%@% | (*@$&*)(*$%%@!$&% | Unknown sources |

| Fusion | KL Divergence | Accuracy (%) |
|---|---|---|
| - | - | 82.93 ± 0.60 |
| - | ✔ | 83.12 ± 0.01 |
| ✔ | - | 84.31 ± 0.20 |
| ✔ | ✔ | 85.21 ± 0.50 |
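The ablation table does not define its "KL Divergence" component in this section. Assuming it is the regularizer from evidential deep learning (Sensoy et al. [14]), the sketch below computes $\mathrm{KL}[\mathrm{Dir}(\mathbf{p}\mid\tilde{\boldsymbol\alpha}) \,\|\, \mathrm{Dir}(\mathbf{p}\mid\mathbf{1})]$. Here $\tilde{\boldsymbol\alpha}$ is the Dirichlet parameter vector with the true class's entry reset to 1, so that only misleading evidence is penalized:

$$\mathrm{KL} = \ln\frac{\Gamma\big(\sum_k \tilde\alpha_k\big)}{\Gamma(K)\prod_k \Gamma(\tilde\alpha_k)} + \sum_k (\tilde\alpha_k - 1)\Big[\psi(\tilde\alpha_k) - \psi\big(\textstyle\sum_j \tilde\alpha_j\big)\Big]$$

The digamma function $\psi$ is implemented by hand (recurrence plus asymptotic series) to keep the example dependency-free. The helper names are hypothetical.

```python
import math
from typing import List


def digamma(x: float) -> float:
    """Digamma psi(x) for x > 0 via recurrence and an asymptotic series."""
    if x <= 0.0:
        raise ValueError("digamma is only implemented for x > 0")
    result = 0.0
    # Shift x upward with psi(x) = psi(x + 1) - 1/x until the series is accurate.
    while x < 6.0:
        result -= 1.0 / x
        x += 1.0
    f = 1.0 / (x * x)
    # ln x - 1/(2x) - 1/(12x^2) + 1/(120x^4) - 1/(252x^6) + 1/(240x^8) - 1/(132x^10)
    result += math.log(x) - 0.5 / x - f * (
        1.0 / 12 - f * (1.0 / 120 - f * (1.0 / 252 - f * (1.0 / 240 - f / 132)))
    )
    return result


def remove_true_class_evidence(alpha: List[float], label: int) -> List[float]:
    """alpha_tilde = y + (1 - y) * alpha: reset the true class's parameter to 1."""
    return [1.0 if k == label else a for k, a in enumerate(alpha)]


def kl_to_uniform_dirichlet(alpha_tilde: List[float]) -> float:
    """KL[Dir(alpha_tilde) || Dir(1, ..., 1)] over K classes."""
    k = len(alpha_tilde)
    s = sum(alpha_tilde)
    # Log ratio of the two Dirichlet normalizing constants.
    log_norm = math.lgamma(s) - math.lgamma(k) - sum(math.lgamma(a) for a in alpha_tilde)
    psi_s = digamma(s)
    return log_norm + sum((a - 1.0) * (digamma(a) - psi_s) for a in alpha_tilde)
```

In EDL this term is added to the per-sample classification loss with a weight that is annealed from 0 toward 1 during training. This way the model is not pushed toward uniform opinions before it has learned to fit the data.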

| Method | Accuracy (%) |
|---|---|
| DCPC | 76.75 ± 0.02 |
| DRPC | 78.50 ± 0.12 |
| DBPC | 79.55 ± 0.32 |
| RMDPC | 85.21 ± 0.50 |

| Method | Accuracy (%) |
|---|---|
| MCDO | 80.95 ± 0.12 |
| DE | 82.00 ± 0.01 |
| EDL | 82.93 ± 0.60 |
| RMDPC | 85.21 ± 0.50 |

| Method | Accuracy (%) |
|---|---|
| DCCA | 78.65 ± 0.01 |
| DCCAE | 79.00 ± 0.00 |
| MVTCAE | 81.16 ± 0.21 |
| ETMC | 83.79 ± 0.43 |
| RMDPC | 85.21 ± 0.50 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Zhang, L.; Liu, W.; Chen, Y.; Yue, X. Reliable Multi-View Deep Patent Classification. Mathematics 2022, 10, 4545. https://doi.org/10.3390/math10234545
