Abstract

X-ray tomography is an effective non-destructive testing method for industrial quality control. Limited-angle tomography can be used to reduce the amount of data that need to be acquired and thereby speed up the process. In some industrial applications, however, objects are flat and layered, and laminography is preferred. It can deliver 2D images of the structure of a layered object at a particular depth from very limited data. An image at a particular depth is obtained by summing those parts of the data that contribute to that slice. This produces a sharp image of that slice while superimposing a blurred version of structures present at other depths. In this paper, we investigate an Optimal Experimental Design (OED) problem for laminography that aims to determine the optimal source positions. Not only can this be used to mitigate imaging artifacts, it can also speed up the acquisition process in cases where moving the source and detector is time-consuming (e.g., in robotic arm imaging systems). We investigate the imaging artifacts in detail through a modified Fourier Slice Theorem. We address the experimental design problem within the Bayesian risk framework using empirical Bayes risk minimization. Finally, we present numerical experiments on simulated data.

MSC:

94A08

1. Introduction

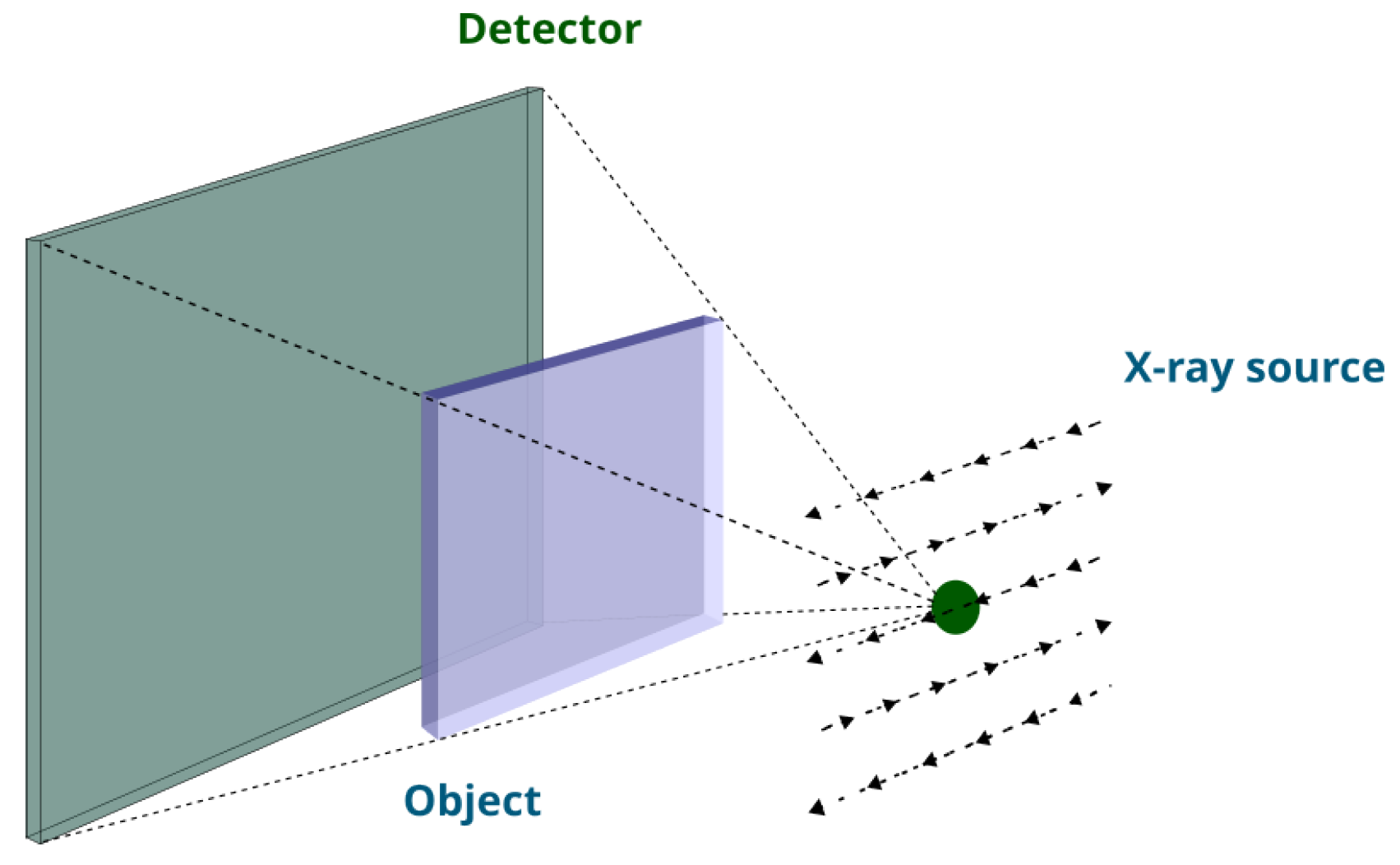

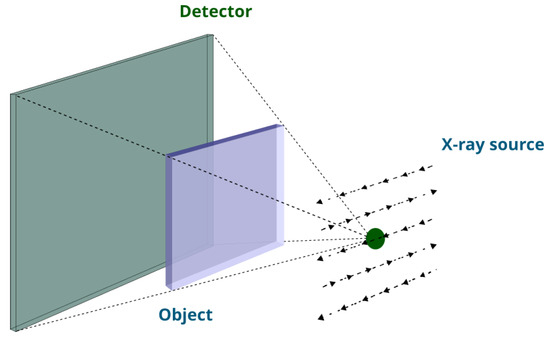

Non-destructive testing is essential in industrial quality control [1]. X-ray imaging in particular is a highly effective non-destructive testing technique that can deliver detailed 3D images of the internal structure of products. It is widely employed across various industries for quality control [2,3]. Conventional tomographic acquisition requires a full rotation around the object, limiting its applicability to the off-line inspection of small-to-medium-sized objects. To overcome this limitation, several approaches to tomographic reconstruction from limited-angle data have been developed. These methods often rely on additional prior knowledge of the object to fill in information that is missing in the measurements. A prime example is discrete tomography, where objects consisting of a few distinct materials can be reconstructed from severely under-sampled data. In certain applications where objects are wide and flat, tomographic data can only be collected over a very limited angular range. This pushes the limits of conventional limited-angle tomography and we have to resort to another class of methods [4]. Laminography offers a method to reconstruct an image of a specific slice of a flat layered object. As shown in Figure 1, it needs a flexible source and detector that can move and collect data from several directions such that during scanning the X-ray tube is located on the same side of the flat object and the detector is on the opposite side of it [5].

Figure 1.

A laminographic setup designed for scanning flat objects, featuring a flexible source that moves along a raster trajectory. Note that dashed arrows specify the source trajectory.

In this paper, we consider a particular setup for laminographic imaging which requires the acquisition of X-ray projection images from N source positions on a fixed grid, while the detector is fixed on the opposite side of the object. Our aim is to be able to optimally design acquisition protocols with sources for a given set of objects. By optimally designing the setup, we can reduce imaging artifacts as well as potentially speed up the data acquisition process, particularly in scenarios where adjusting the source position is time-consuming, such as in robotic arm imaging systems. Bayesian Optimal Experimental Design (OED) is a mathematical approach that facilitates the design of experimental parameters while minimizing experimental costs. It utilizes prior knowledge and Bayesian inference to refine the selection of experimental settings, thereby improving data collection efficiency and decision-making effectiveness [6]. Various criteria, including D-optimality and A-optimality, are frequently employed to assess and compare the efficacy of experimental designs [6,7]. D-optimality focuses on maximizing the Kullback–Leibler divergence between the posterior and prior distributions, whereas A-optimality aims to minimize the expected error between reconstructions and actual ground truths [8,9]. In [10], the authors proposed the optimization of projection angles in X-ray imaging. They incorporated empirical A-optimal design in constrained problems based on calibration data. Specifically, they obtained a sparse design out of a predetermined set of angles and implemented it with a gradient-based optimization method. Similarly, we pose the OED problem for laminography as a bilevel optimization based on the A-optimality criterion. Additionally, we employ gradient-based methods to facilitate implementation in high-dimensional settings.

Our main contributions are the following:

- We analyze the 3D and 2D (slice-based) image reconstruction process for laminography, present an analog of the Fourier Slice Theorem specific to this setup, and analyze the image artifacts present in slice images.

- We pose an experimental design problem for selecting the K most informative source positions for laminography across a specific class of objects. Additionally, we formulate a bilevel optimization within the Bayes risk minimization framework to solve it.

The remainder of this paper is organized as follows: In Section 2, we present the forward and backward operators in the continuous domain for the depth imaging procedure. We study depth reconstruction in the discrete domain in Section 3. In Section 4, we propose a bilevel optimization problem to find a source design with K sources chosen from a fixed array of sources. In Section 5, we propose an algorithm to solve the proposed bilevel optimization problem and present its pseudocode in Section 6. In Section 7, we show the results obtained by our method and compare them to optimal source designs obtained by exhaustive search. Finally, Section 8 and Section 9 discuss the limitations and future directions of our research and conclude the paper, respectively.

Notation

Throughout this paper, scalars and vector elements are denoted by lower-case letters, vectors by lower-case boldface letters, and matrices by upper-case boldface letters. The notation stands for a transpose of either a vector or matrix. Moreover, # denotes the cardinality of a vector and denotes the norm of a vector.

2. Forward and Backward Operators in the Continuous Domain

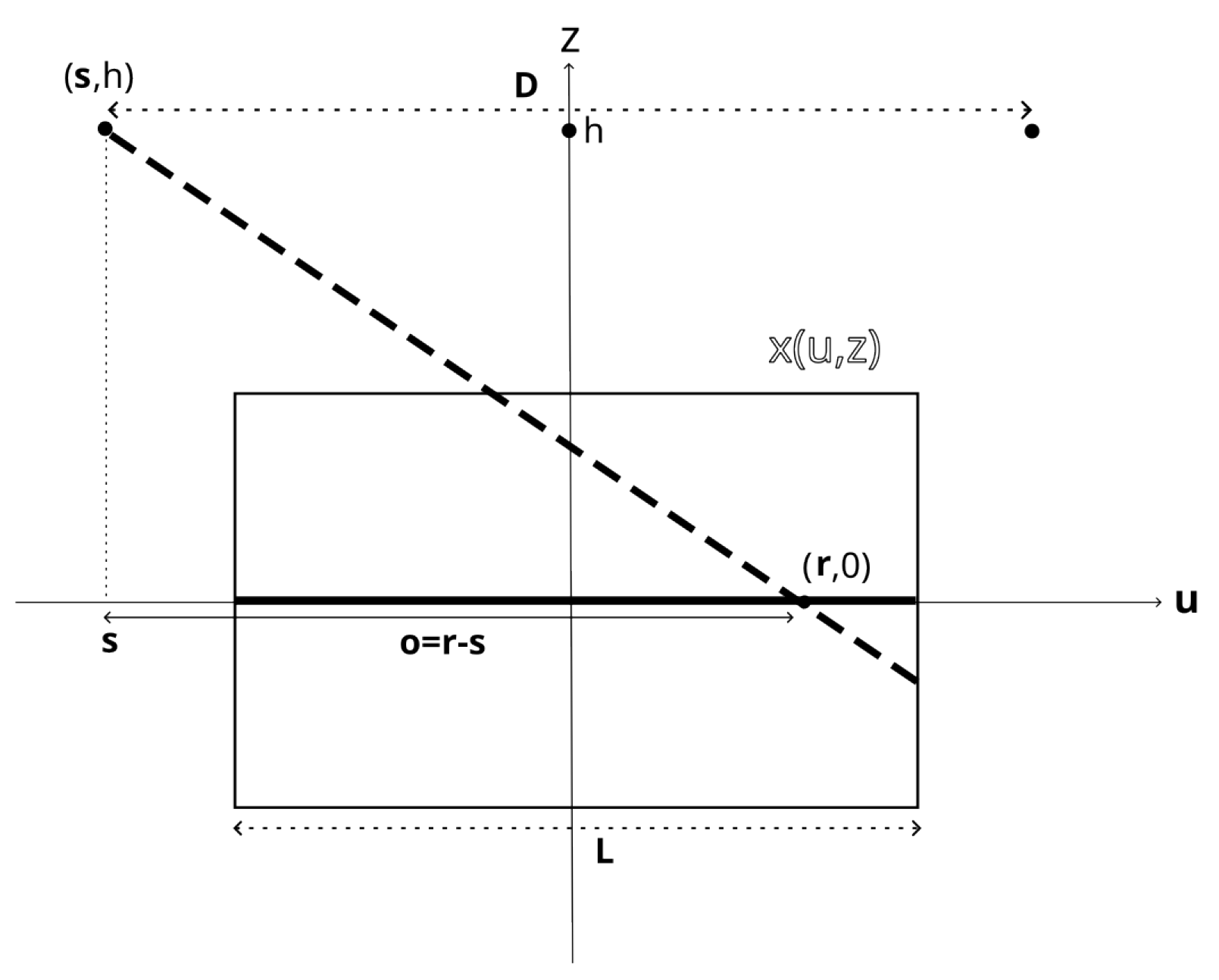

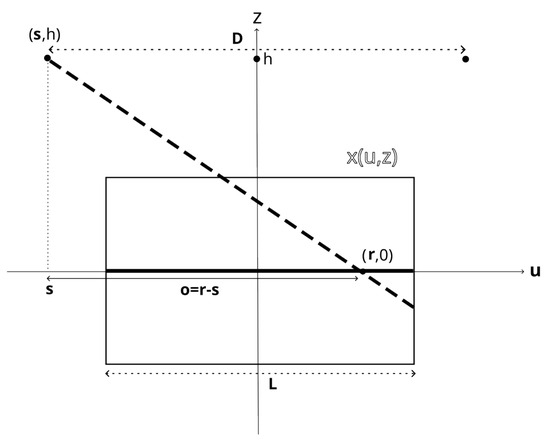

In this section, we first review the linear X-ray projection model. Subsequently, we introduce the forward and backward operators as depicted in Figure 2. Finally, we detail the depth imaging process for laminography in the continuous domain, utilizing these operators.

Figure 2.

Two-dimensional view of the acquisition setup. The source is located at lateral position and height h; the detector is parameterized without loss of generality by . The object is characterized by x as a function of lateral coordinate and vertical coordinate z. The ray from the source to the detector is shown by the dashed line.

Briefly, the forward projection operator transforms the object’s internal features into the collected projection data, effectively modeling the imaging process. Conversely, the backward projection operator transforms the projection data back into a representation of the object’s internal features, serving as the basis for reconstructing the original image [11].

2.1. Linear Projection Model

During the X-ray imaging process, an object is exposed to a beam of X-ray photons emitted by an X-ray tube. Some of these photons are absorbed by the object, while others reach the detector. The captured measurements can be modeled using the Beer–Lambert law, which describes the exponential relationship between the intensity of the X-ray beam and the distance it travels through the object:

where denotes the number of photons that reach the detector from starting point in the direction of , where . is the initial intensity of the beam (i.e., the number of photons emitted by the source), and x is the function representing the attenuation coefficient of the object. This relation is usually written as

with representing the measured data for a single ray. In the following, we present the forward and backward operators for the X-ray setup shown in Figure 2. To study the depth imaging procedure within this specific setup, we need to understand forward and backward operators for both 2D and 3D objects.

2.2. Forward and Backward Operators for the Slice Imaging Setup

The proposed imaging setup is schematically depicted in Figure 2. X-rays are emitted by a source at lateral position and height h. Without loss of generality, we parameterise the detector position by . The forward operator, , is now given by

as shown in Figure 2. Here, denotes the attenuation coefficient of the object in terms of its lateral () and vertical () coordinates. In fact, it integrates along the ray from the source at to the detector located at .

2.2.1. The Fourier Slice Theorem

The Fourier Slice Theorem illustrates the relationship between the collected measurements and the Fourier transform of the object. In traditional computed tomography, a complete sampling process enables the straightforward inversion of the Radon transform using the filtered back projection method [12]. Due to the limited acquisition view in laminography, the Fourier Slice Theorem shows that some parts of the object spectrum are missing. The absence of complete data leads to the appearance of artifacts within the reconstructed image at specific depths. According to the setup shown in Figure 2, we define the measurements in receiver-offset coordinates as and find the following relation between the 2D Fourier transform of g and the 3D Fourier transform of x. Let and represent the spatial frequency coordinates. Then, it reads as follows (see the derivation in Appendix A.2):

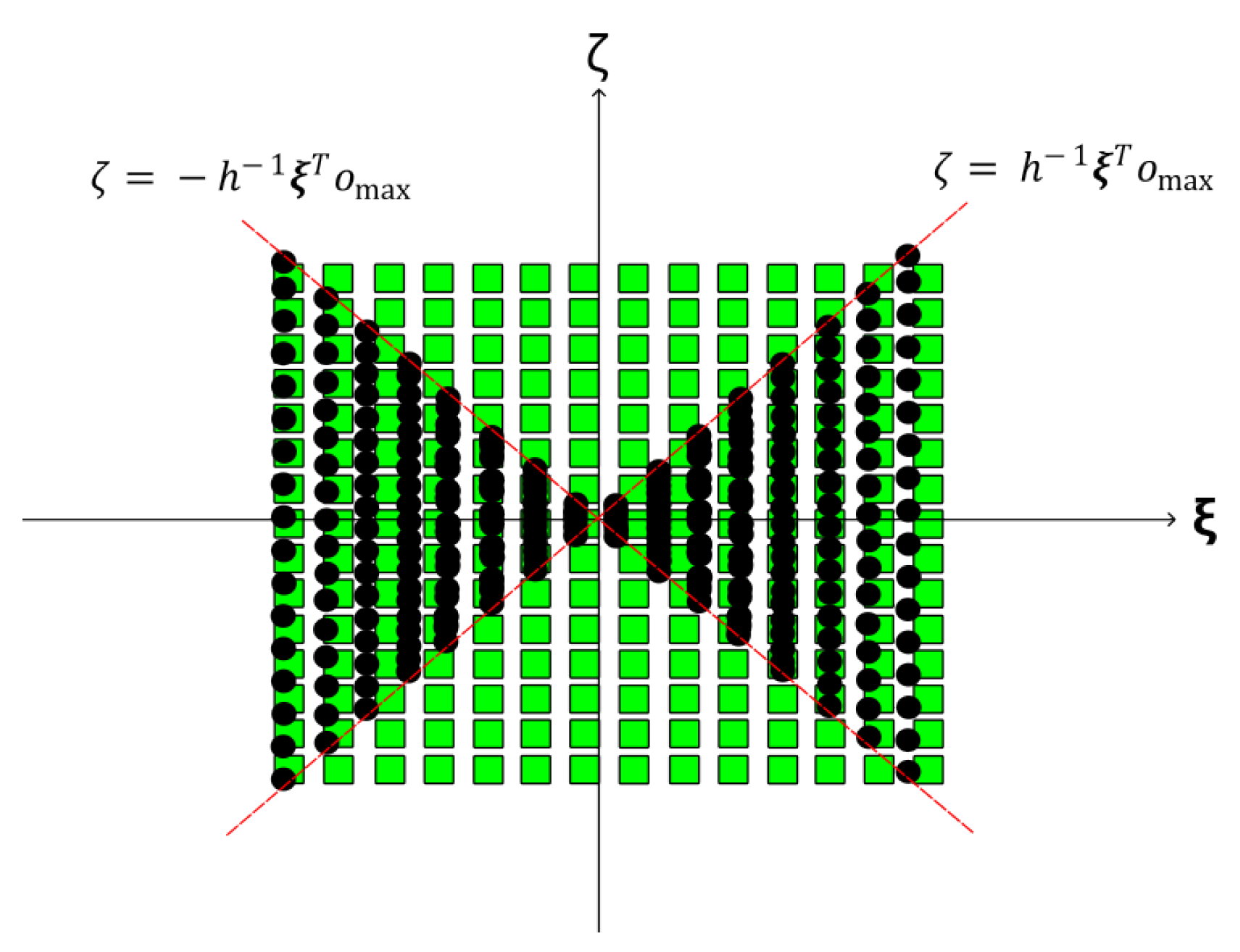

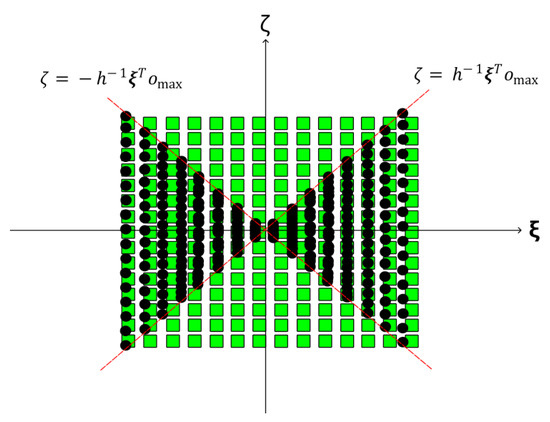

where and denote the 2D and 3D Fourier transforms, respectively, and signifies the vector difference between the detector position and the source position . Figure 3 depicts sampled elements (black circles) with respect to the Fourier object samples (shown by green squares). Obviously, the sampling procedure is missing a portion of the object spectrum due to the limited values of , i.e., the forward operator samples from . Since the range of is finite, this leads to a missing wedge in the frequency domain. This missing wedge leads to an ill-posed inverse problem of recovering x from g (or, equivalently, y).

Figure 3.

Schematic depiction of the missing wedge in the Fourier domain sampling of by the measurements . The green squares represent and black circles represent , which are limited in by . The limited aperture in gives rise to the missing wedge.

2.2.2. The 2D Slice at Depth z

To study the depth imaging procedure, we introduce the corresponding forward operator which maps a 2D image at depth z, denoted by , to the corresponding projection data (see Appendix A.3):

the corresponding adjoint operator is given by (see Appendix A.3):

we then find that is given by

so that for a limited range of , and hence is trivially inverted. Depth imaging of an object consisting of a single thin layer can thus be achieved by a simple back projection. When multiple layers are present we expect artifacts, which we study next.

2.3. Depth Image Reconstruction

Here, we are interested in studying the artifacts that arise when imaging a slice from measurements of a 3D layered object. In the remainder of this section, we let for ease of notation. As argued above, we can form a depth image by back projection, so it suffices to study the operator (see Appendix A.4). In particular, we expect to image layered objects, which we can model as

using a finite number of sources , the depth image of the layer is then given by

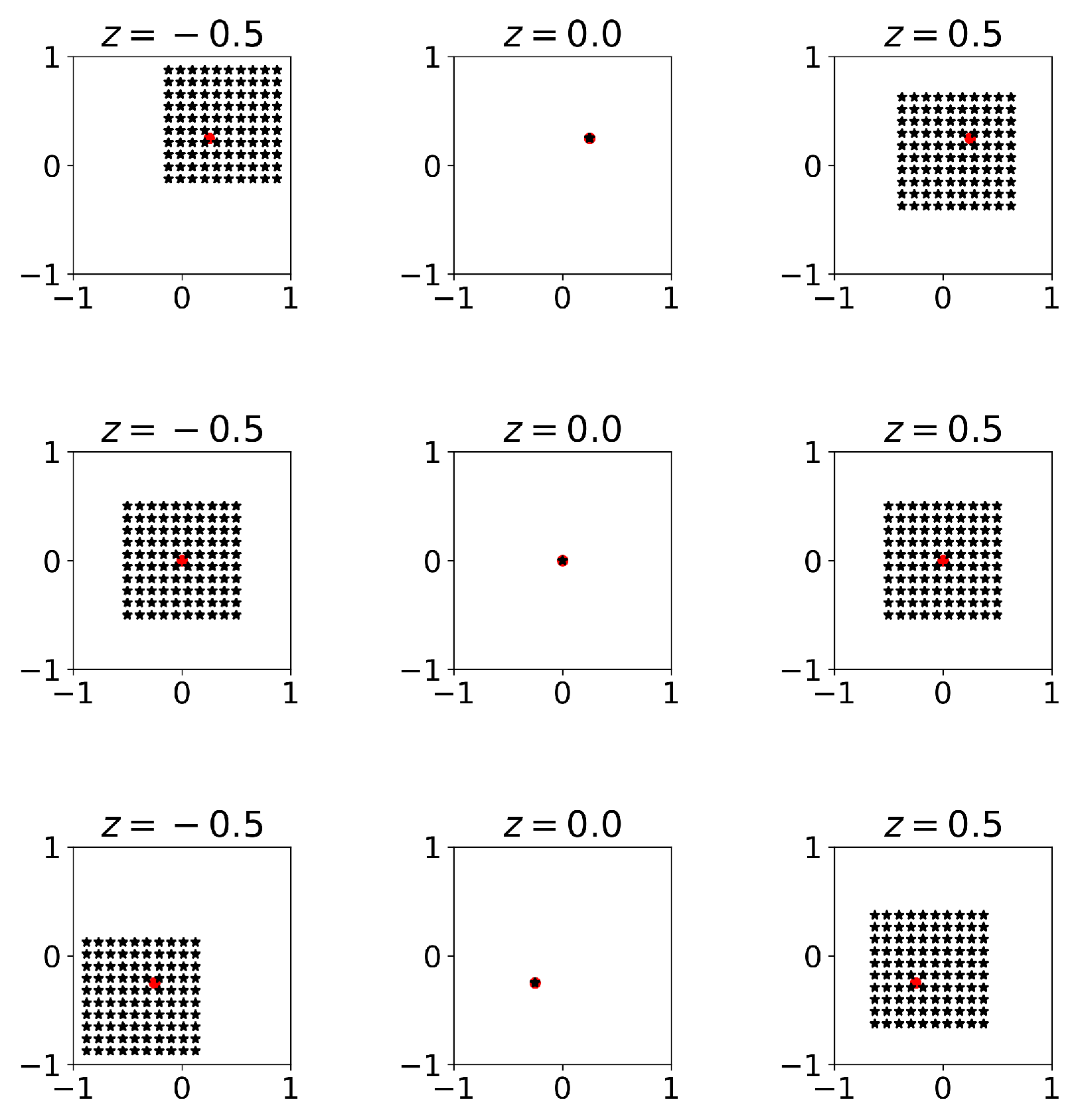

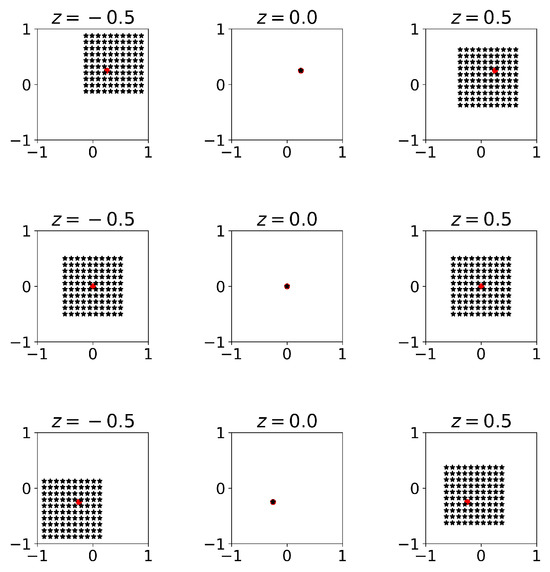

Hence, the error in the depth image of the layer is a superposition of spatially averaged versions of the other layers. An illustration of the resulting spatial sampling patterns is shown in Figure 4.

Figure 4.

Example of the spatial sampling patterns when imaging the middle layer (at ) of an object with layers and regularly spaced sources in at . The red circle indicates the point at which we evaluate the output.

So far, it is evident that imaging a specific depth of a flat object leads to artifacts, as demonstrated by the derived depth image in Equation (9). Furthermore, these artifacts are influenced by the positions of the X-ray sources. The source design problem is now to identify a configuration of source positions, , that minimize unwanted features in the depth image. As we do not expect to be able to find a closed-form solution to the source design problem in general, we discretize the problem and resort to numerical optimization.

3. Discrete Problem

In this section, we explore depth imaging in the discrete domain, discuss the challenges that arise from it, and present the algebraic reconstruction technique for addressing these challenges.

3.1. Forward and Back Projection

We discretize the forward model in (3) and express it as

where , , and . Note that the number of rows in is the product of the number of detector pixels and the number of sources. Every block of rows corresponds to a particular X-ray source.

Let and denote the forward matrix and the slice object at depth z, respectively. In general, will not be a multiple of the identity as in the continuous setting (7), so we consider solving . We can view this as an approximation of 3D reconstruction through the Schur complement [13]. To see this, let and represent the forward operator and the object for all pixels except the ones contained in . The linear equations for the forward projection (10) can now be expressed as follows:

By multiplying , it reads

By utilizing the Schur complement, one can re-write this in terms of as

where

However, it is not computationally feasible to compute the corresponding Schur complements, and we can interpret depth imaging as neglecting the additional terms involving .

3.2. Algebraic Reconstruction

Although the operator is unitary (up to a scaling factor), the corresponding discrete operator is generally not. Moreover, it is desirable to stabilize the reconstruction with additional regularization. We therefore pose depth imaging as a regularized least-squares problem:

where controls the trade-off between the two terms. While the problem is not extremely ill-posed, we utilize as a safety factor to ensure the uniqueness of the reconstructed depth image. The above optimization can be solved using iterative algorithms such as LSQR [14]. Various other regularization techniques, such as Total Variation regularization and sparse regularization, introduce different penalty functions that promote desired properties in the reconstructed image.

4. Efficient Source Design for Sources

Here, we address the OED problem in laminography through a bilevel optimization framework. Bilevel optimization addresses a specific class of problems characterized by one problem being nested within another. This hierarchical structure comprises two levels of optimization tasks: the upper level (also referred to as the leader problem) and the lower level (known as the follower problem). The solution to the upper-level problem typically depends on the solution to the lower-level problem. Below, we outline the bilevel optimization strategy for the OED problem in laminography [15].

Let denote the design parameter, where the components of determine the influence of each source in the reconstruction process. The problem of finding the optimal design parameter for a fixed number of sources in the reconstruction of a specific depth plane can be formulated as a bilevel optimization problem whose upper level involves

where represents the ground truth and the hyper-parameter serves as a scaling factor ensuring that is on the same scale as . Also, is obtained by solving the lower-level optimization as below:

where denotes the data corresponding to and is a linear operator which applies the weights to each source’s corresponding measurements. The optimization problem (15) minimizes the Euclidean distance between the reconstructed image (scaled by ) and the ground truth subject to certain constraints on to enforce its binary nature with K elements. The binary constraint on in (15) can be relaxed using bound constraints [16], and the bilevel optimization is then formulated as

while the optimal value of the scalar parameter in (15) is substituted in the above optimization with . Mathematically speaking, this problem seeks a that minimizes the Euclidean distance of and and lies on the intersection of a hyperplane and the hyperbox . Note that we cannot guarantee that this will produce binary solutions. In such cases, we convert the solution to a binary one by setting the K largest elements to one and assigning zeros to the remaining elements.

In practice, the ground truth is unknown; however, a set of calibration data of representative images may be available. This can then be utilized to compute an efficient source design that minimizes the empirical Bayes risk. More precisely, for a given set of calibration data with corresponding measurements , we can generalize (17) to

where is the solution of (16) for the i-th calibration data .

In the next section, we will present an algorithm for solving the bilevel optimization problem (17) using a gradient-based method.

5. Implementation: Projected Gradient Approach

To address the implementation of the Efficient K-Source Design (EKSD) method, it is important to note that the non-linear optimization problem specified in Equation (17) requires the use of numerical optimization algorithms that begin with an initial guess and iteratively refine the solution. This section discusses the solution of (17) employing a projected gradient method.

In general, the projected gradient (PG) method solves

where is a smooth function and is a nonempty closed convex set. The projection onto is the mapping defined by

The PG algorithm is defined by

where is the step size, L is the Lipschitz constant of , and is the gradient of f at . The stopping criteria for the PG method is determined by the Euclidean norm of the difference between consecutive iterates, denoted as , being less than or equal to a specified threshold value, denoted as . This condition ensures that the optimization process is halted at an approximate solution point where further iterations do not significantly alter the solution [16]. In the following subsection, we will outline the gradient calculation for the objective function and describe a method for projecting onto a simplex.

5.1. Gradient of the Objective Function

To implement the non-linear optimization of (17) using the PG algorithm, the gradient calculation of is required. Here, it reads,

where , and with . (See the derivation in Appendix A.5).

5.2. Projection onto the Simplex Constraint

Here, we investigate the projection operator presented in (21) which maps each point onto the simplex set of . (See the derivation in Appendix A.6)

where and can be obtained by solving the following optimization problem:

which can be solved using the Newton method. So far, we have examined the implementation of the OED method via a projected gradient approach. The following section presents the pseudocode for our proposed algorithm.

6. EKSD Algorithm

In this section, we outline the pseudocode in (Algorithm 1) for implementing the proposed bilevel optimization in (17) through the projected gradient method.

| Algorithm 1: Pseudocode for Efficient K-Source Design algorithm. |

|

7. Numerical Experiments

Here, we present several results related to our proposed algorithm. Our goal is to evaluate the algorithm’s performance across two distinct initialization scenarios for the design parameters, focusing on convergence behavior. The performance is assessed based on the following criteria:

i: Normalized mean square error (NMSE): it can be defined as the error between the reconstructed image and ground truth

ii: Structural similarity index measure (SSIM): Unlike the NMSE, the SSIM takes into account the structural information and perceived quality of the images. It compares three aspects of the images, luminance, contrast, and structure, and computes a similarity index between 0 and 1, where 1 indicates perfect similarity [17].

7.1. Implementation Details

We define the X-ray setup as shown in Figure 2. The distance, h, between the center of the array and the detector is equal to . Here, L represents the width of the phantom. Moreover, we consider (discretized uniformly with 250 × 250 pixels) and (discretized uniformly with source positions), where D is the fixed array length. The projection data are generated using the ASTRA toolbox [11]. For illustration, we present the projection images from all sources in a array of sources in Figure 5. It is challenging to inspect individual layers of the phantom with a single projection, as overlapping them hinders a proper investigation.

Figure 5.

Different views from sources in a array of sources.

We chose a small regularization parameter to ensure the solution’s stability and uniqueness without significantly biasing the reconstruction. We carefully tuned the step size, , to be sufficiently small, reducing the risk of algorithmic overshooting during optimization. We included additional plots in Appendix A.1 to illustrate the sensitivity of the algorithm to variations in the parameters. Additionally, we manually adjusted the stopping threshold, , to ensure that the algorithm stops at approximately consistent convergence points. The initial value of the parameter vector is initialized either with a vector or with i.i.d. uniform random values in . We refer to these methods as EKSD(K) and EKSDR(K), respectively. In several experiments, we will compare the results to completely random designs of K out of N source positions. We refer to this as the RANDOM method.

7.2. Data Set

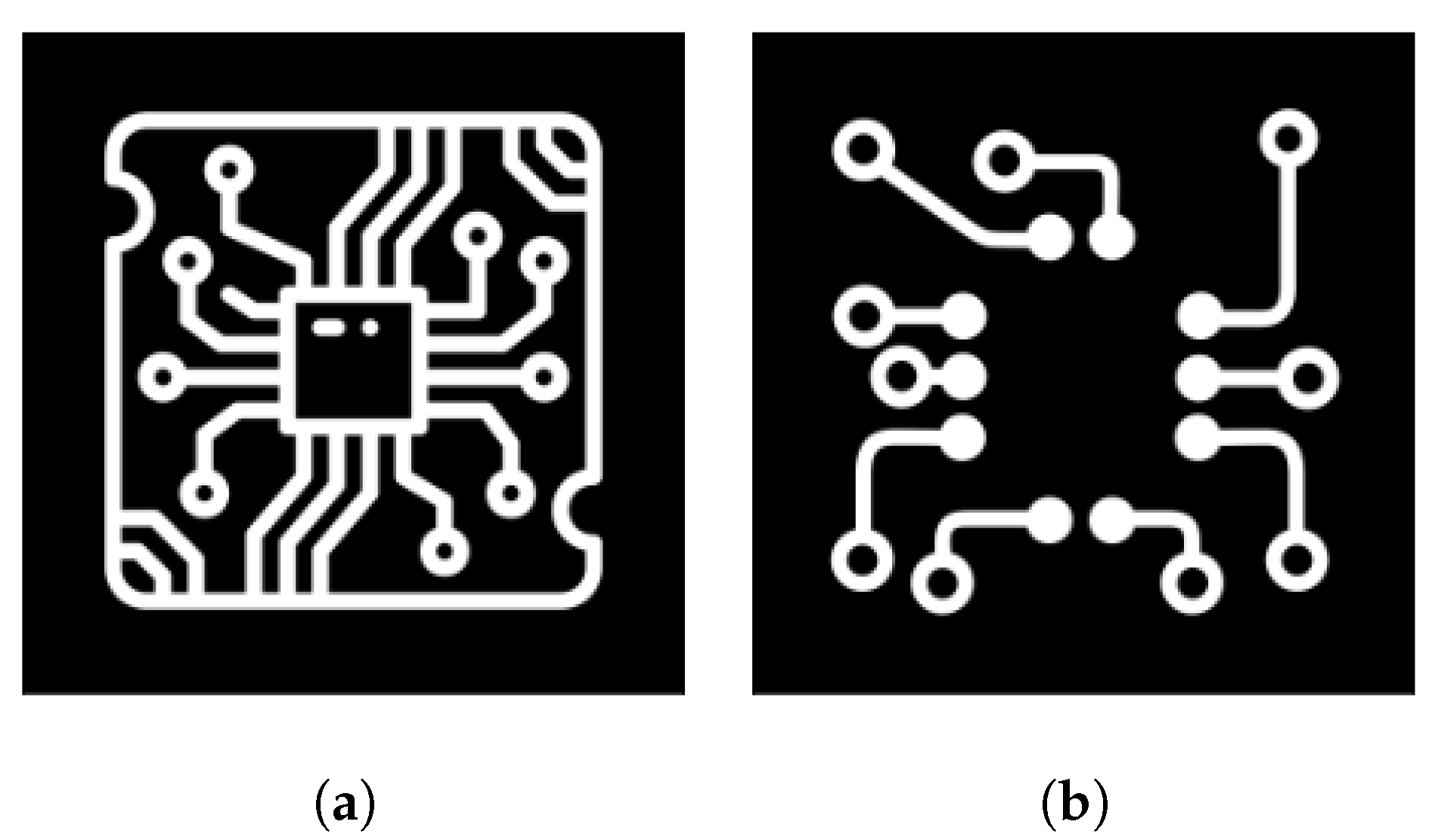

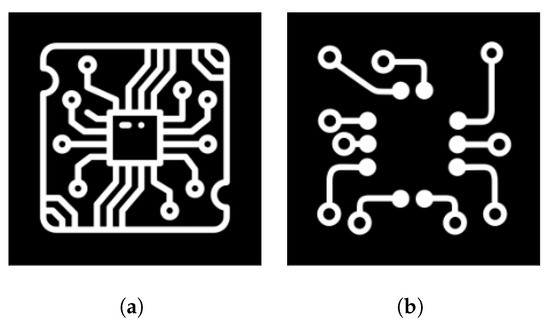

As a benchmark example, we consider a two-layer PCB of size pixels. It consists of two distinct wire designs (as illustrated in Figure 6) with a thickness of 25 pixels each. These designs are separated by a gap of 20 pixels. The layer of interest is the one depicted in Figure 6a.

Figure 6.

The wire designs with a size of . (a) Bottom layer of the PCB. (b) Top layer of the PCB.

We create 200 defect shapes in various locations, forming a data set of defective wire design for the bottom layer. A few samples of this data set are depicted in Figure 7. We use half of this data set for source design (calibration) and half for validation.

Figure 7.

Various defects in different locations on the wire design.

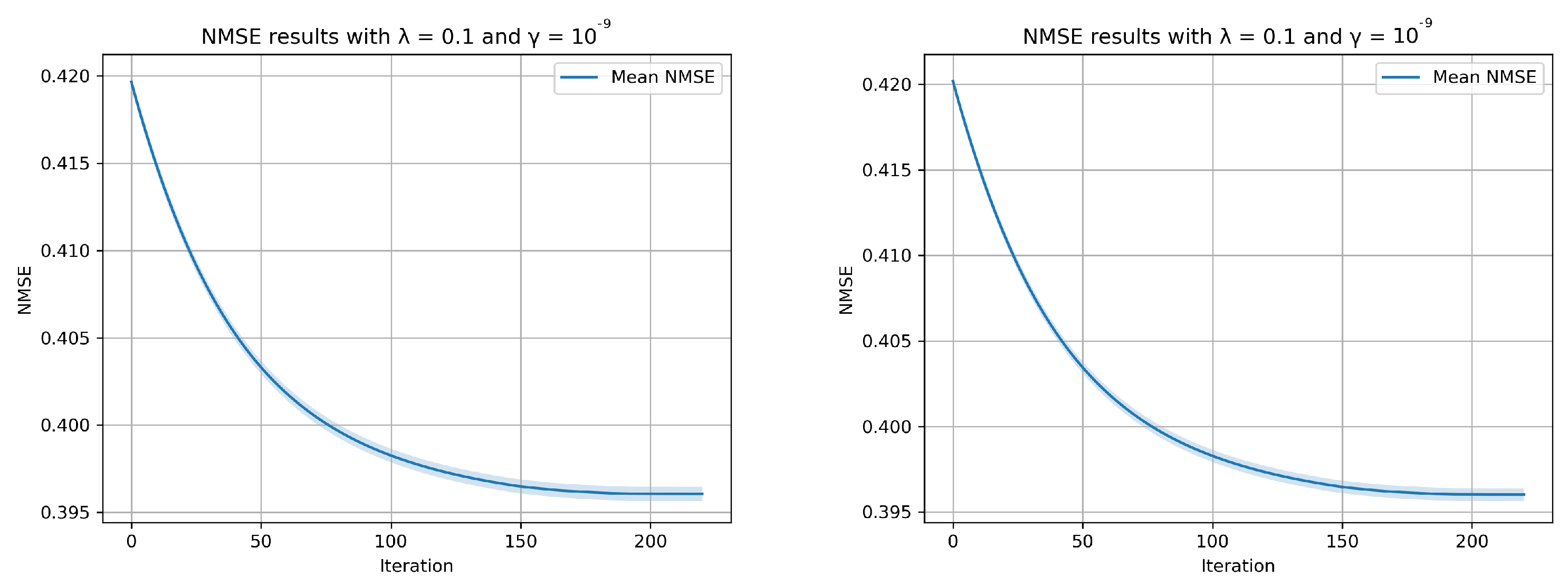

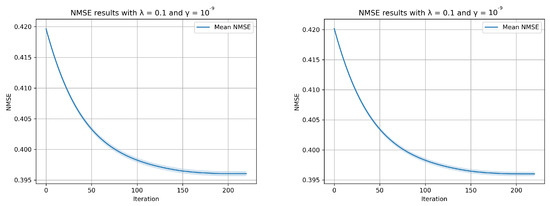

7.3. Convergence

We evaluate the convergence behavior of the EKSD algorithm across various iterations. Here, we let , , and . In Figure 8, we depict the NMSE at each iteration as evaluated on the calibration (left) and validation (right) data. This figure shows that the design is not overfitted on the calibration data.

Figure 8.

The EKSD algorithm’s convergence as applied to a calibration data set is depicted. The blue line indicates the mean values, while the black dots highlight the variance in the NMSE at each iteration.

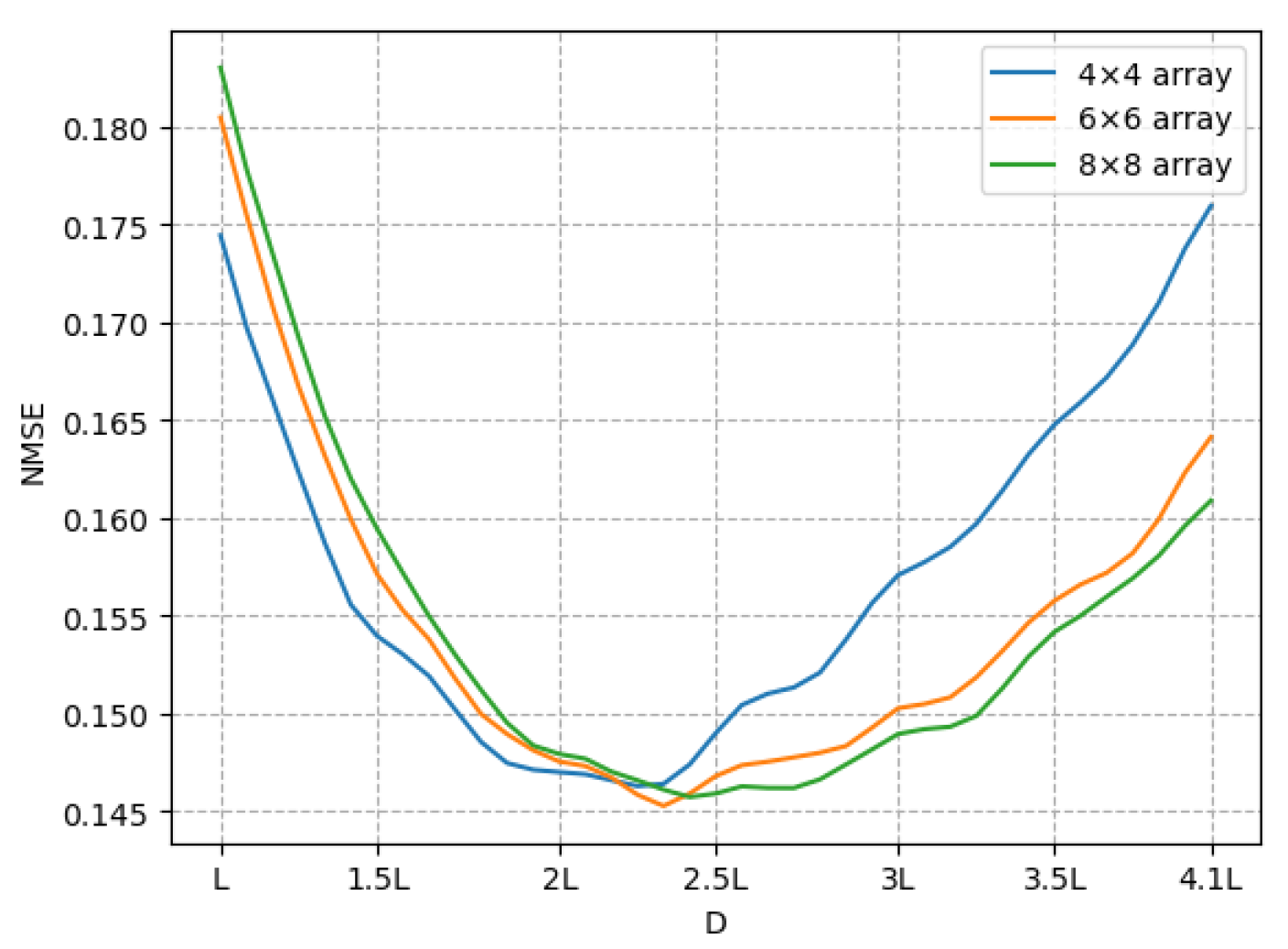

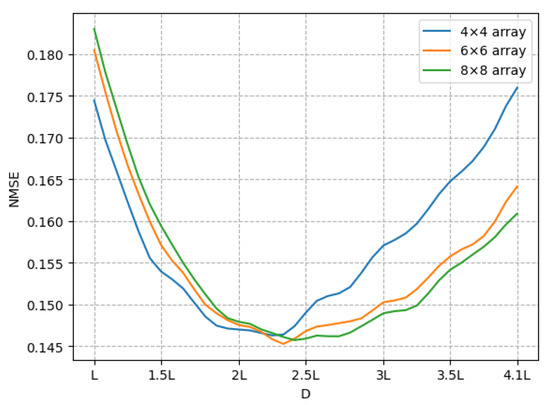

7.4. Exploring the Effects of Array Length

Here, we study the influence of the array length D on the reconstruction error for . Hence, we compare the average NMSE over the validation data across various array lengths, as depicted in Figure 9. The heightened errors observed for both smaller and larger array lengths indicate that certain source positions within the array contribute to artifacts in the depth image, leading to an elevated NMSE. Next, we will examine the results for array lengths of and , which serve as examples of optimal and suboptimal array lengths, respectively.

Figure 9.

The reconstruction error for various ranges of the area occupied by the source.

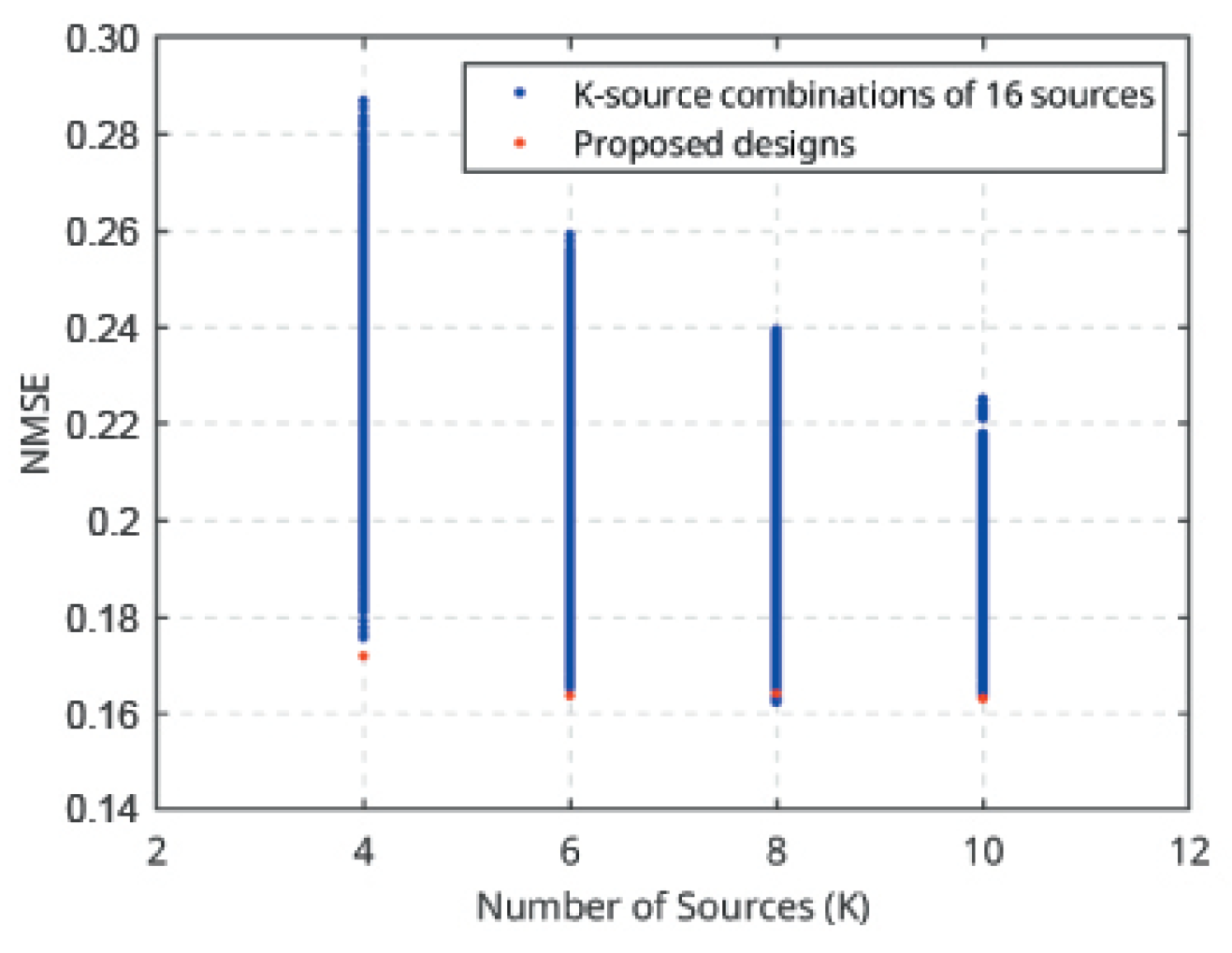

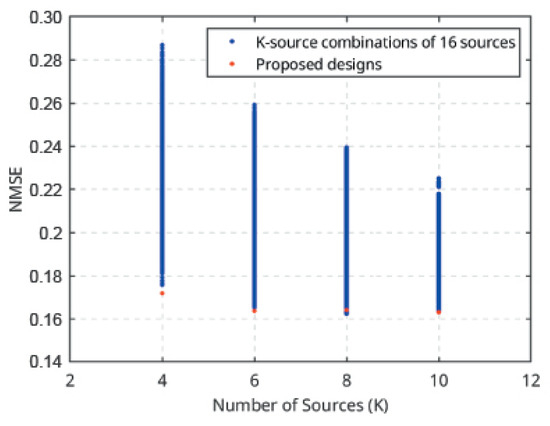

7.5. Exhaustive Search

Before evaluating the OED method for a large number of source positions, we aimed to assess its efficiency by comparing it with a combinatorial optimization method. We carried out an exhaustive search to assess the performance of EKSD. We considered source positions with . Figure 10 shows the average NMSE over the validation data for all possible K-source designs for each K, as well as the average NMSE achieved by the EKSD-design. We see the designs found by EKSD are nearly optimal.

Figure 10.

The error range applies to any K-source positions within the array of 16 positions, where K can be 4, 6, 8, or 10.

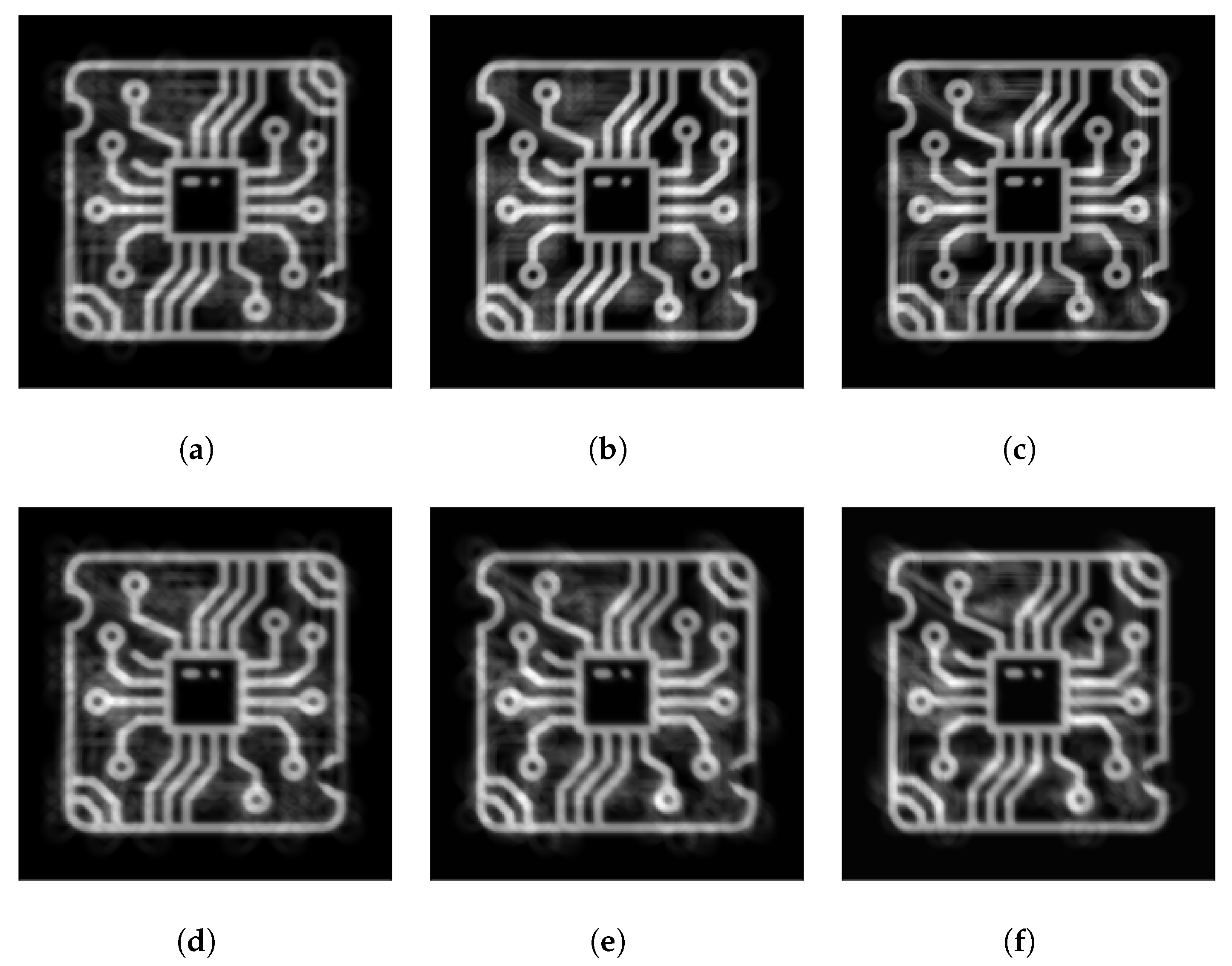

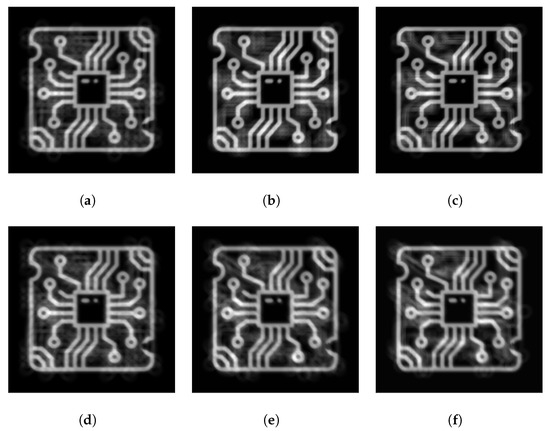

7.6. Source Design 1

We consider three configurations with at , and we show the NMSE and SSIM results in Table 1. Initially, we display results for depth-reconstructed images utilizing all source positions within the array. Subsequently, we derive efficient source designs with EKSD and EKSDR and present the corresponding NMSE and SSIM results. For each value of N, we observe that EKSD and EKSDR outperform the case using all source positions. This enhancement is largely attributed to the reduction in imaging artifacts, which likely results from the selective measurement approach inherent in EKSD and EKSDR strategies. As a comparison, we show the average NMSE and SSIM of random designs with sources, which significantly underperform compared to the other methods.

Table 1.

NMSE and SSIM results with the occupied array length of . For randomized methods, we present the average and standard deviation over 50 runs.

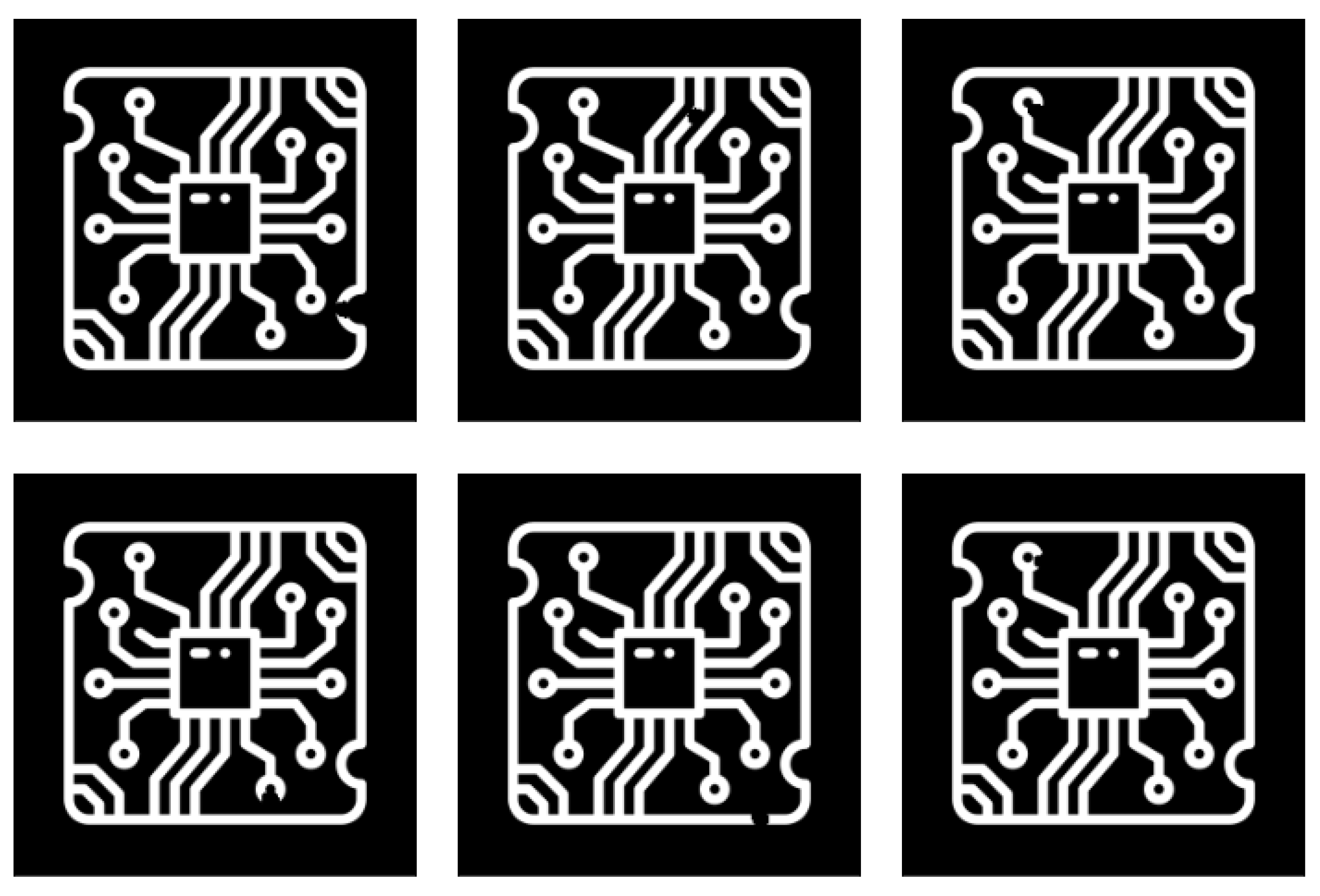

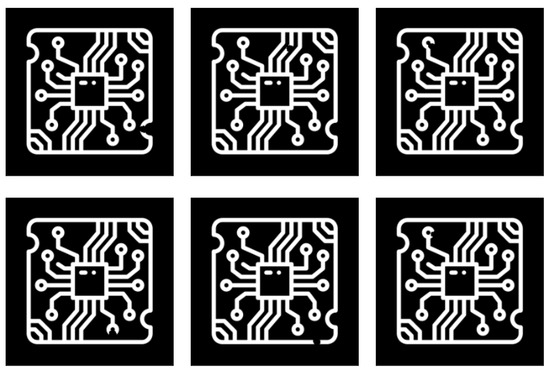

Figure 11 shows the corresponding depth images of the bottom layer reconstructed with different source positions. As demonstrated in Section 2.3, artifacts in these images are attributed to the superposition of features from different layers. The lack of rotational acquisition in laminography leads to this artifacts. Clearly, the designs proposed by EKSD minimize artifacts in depth images compared to random designs, facilitating accurate defect detection within the depth images.

Figure 11.

Depth images in (a–c) are reconstructed using 10 proposed source positions from the total of 16, 36, and 64 positions, respectively. Conversely, the depth images in (d–f) are derived from 10 random source positions out of the same total positions.

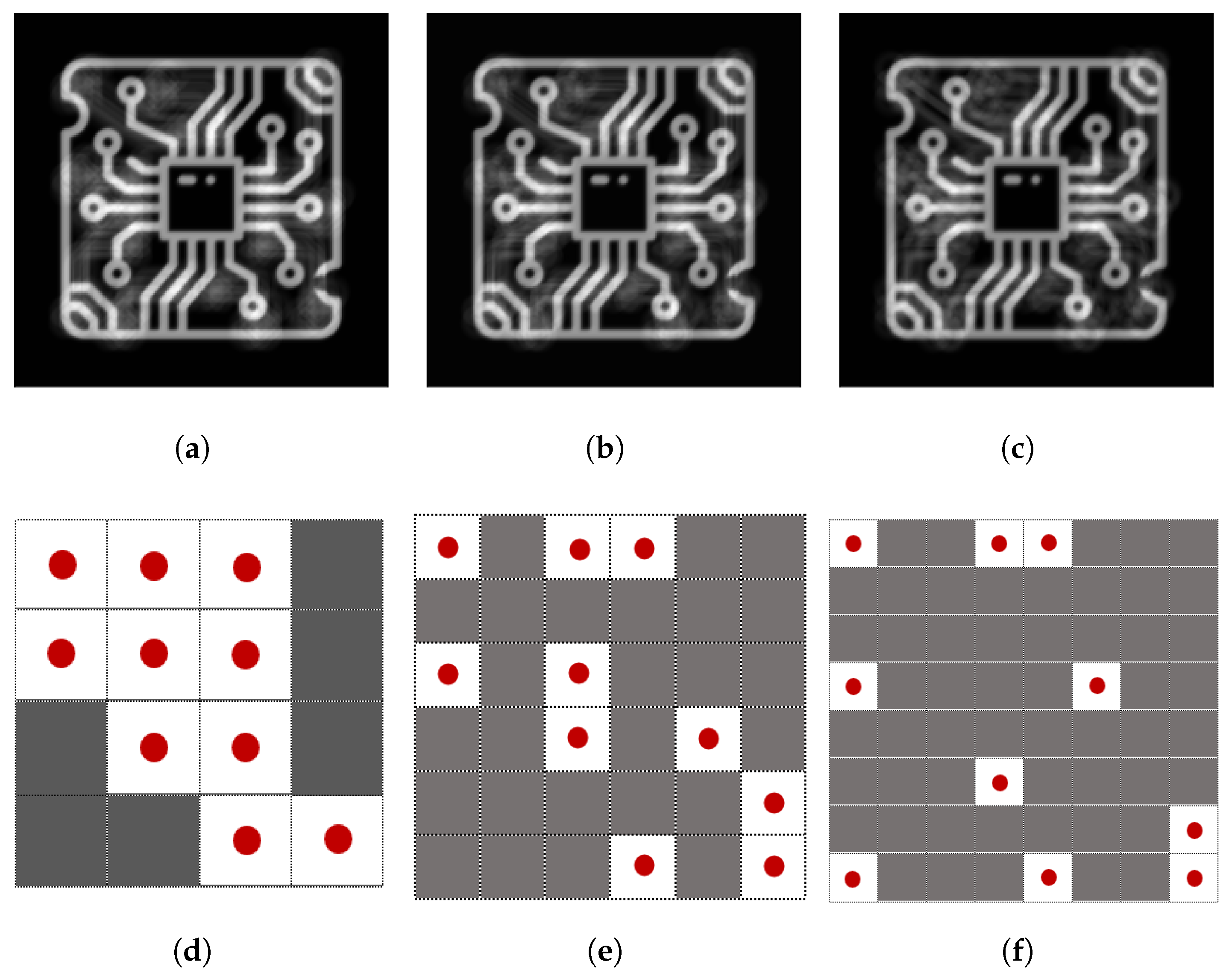

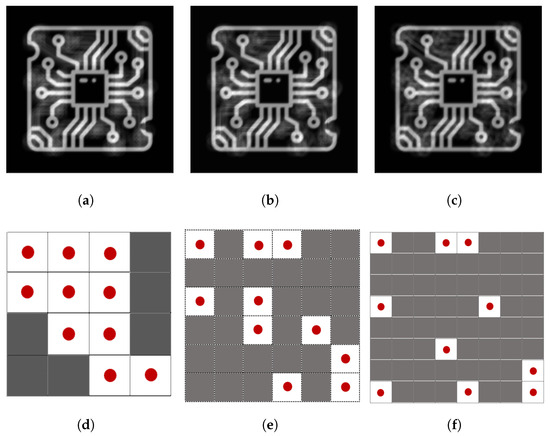

7.7. Source Design 2

We repeat the previous experiment with the array length optimally chosen according to Figure 9; for different N values. The results are summarized in Table 2. As the full designs are already optimal in a sense, there is less dramatic improvement when optimizing the design with fewer source positions. However, the results for suggest that increasing the number of positions while maintaining a fixed array length, thereby reducing the distance between sources, may result in a non-binary solution. Then, converting the solution into a sparse vector with elements results in a less optimal outcome. The proposed source designs and corresponding depth images are shown in Figure 12.

Table 2.

NMSE and SSIM results with the occupied array length of . For randomized methods, we present the average and standard deviation over 50 runs.

Figure 12.

Depth images shown in (a–c) are reconstructed by 10 proposed source positions shown in (d–f), respectively.

8. Discussion

In this research, we explore the methodology of experimental design for a laminographic setup, acknowledging potential challenges that may arise in real-world applications. Here, we outline several significant challenges with our proposed method: (i) As observed in the differences in EKSD and EKSDR results, the non-convex nature of the objective function may lead to entrapment in local minima. To mitigate this issue, one could explore the use of optimization methods that thoroughly explore the entire search space, such as the Genetic Algorithm [18], which can potentially provide more robust solutions by avoiding suboptimal local minima. However, it is worth mentioning that these methods are restricted to low-dimensional problems. (ii) Our method optimizes the positions of sources within a specified grid. However, this grid may not represent the most efficient set of source positions for optimization. An alternative approach could involve optimizing within a continuous domain of positions, leveraging the closed-form formulation for depth image reconstruction presented in this paper. (iii) While our current investigation of the experimental design problem uses noise-free measurements, this assumption may not hold in real-world scenarios characterized by noisy data. This can be addressed by incorporating noise directly into the optimization process to enhance real-world applicability.

9. Conclusions

In real-world scenarios, imaging a particular depth of a planar object can yield valuable information with less effort than a full 3D reconstruction. In a depth image, the information corresponding to the chosen layer appears sharp, while the details of other layers appear blurred. We argue that source design plays a role in mitigating these unwanted artifacts and pose the experimental design problem as a bilevel optimization problem. To solve this problem, we employed bilevel optimization and implemented it using gradient-based methods. The suggested framework can be tailored more specifically to a particular application by incorporating different regularization terms in the lower-level optimization problem or by adopting the alternate loss function in the upper-level optimization problem to more effectively capture the characteristics of the image.

Author Contributions

Conceptualization, T.v.L.; methodology, H.F. and T.v.L.; software, H.F.; formal analysis, H.F.; writing—original draft, H.F.; writing—review & editing, T.v.L.; visualization, H.F.; supervision, T.v.L. All authors have read and agreed to the published version of the manuscript.

Funding

Our work was supported by funding from the European Union’s Horizon 2020 research and innovation program under the Marie Skłodowska-Curie grant agreement No. 956172 (xCTing).

Data Availability Statement

Data are contained within the article.

Conflicts of Interest

The authors declare no conflict of interest.

Appendix A

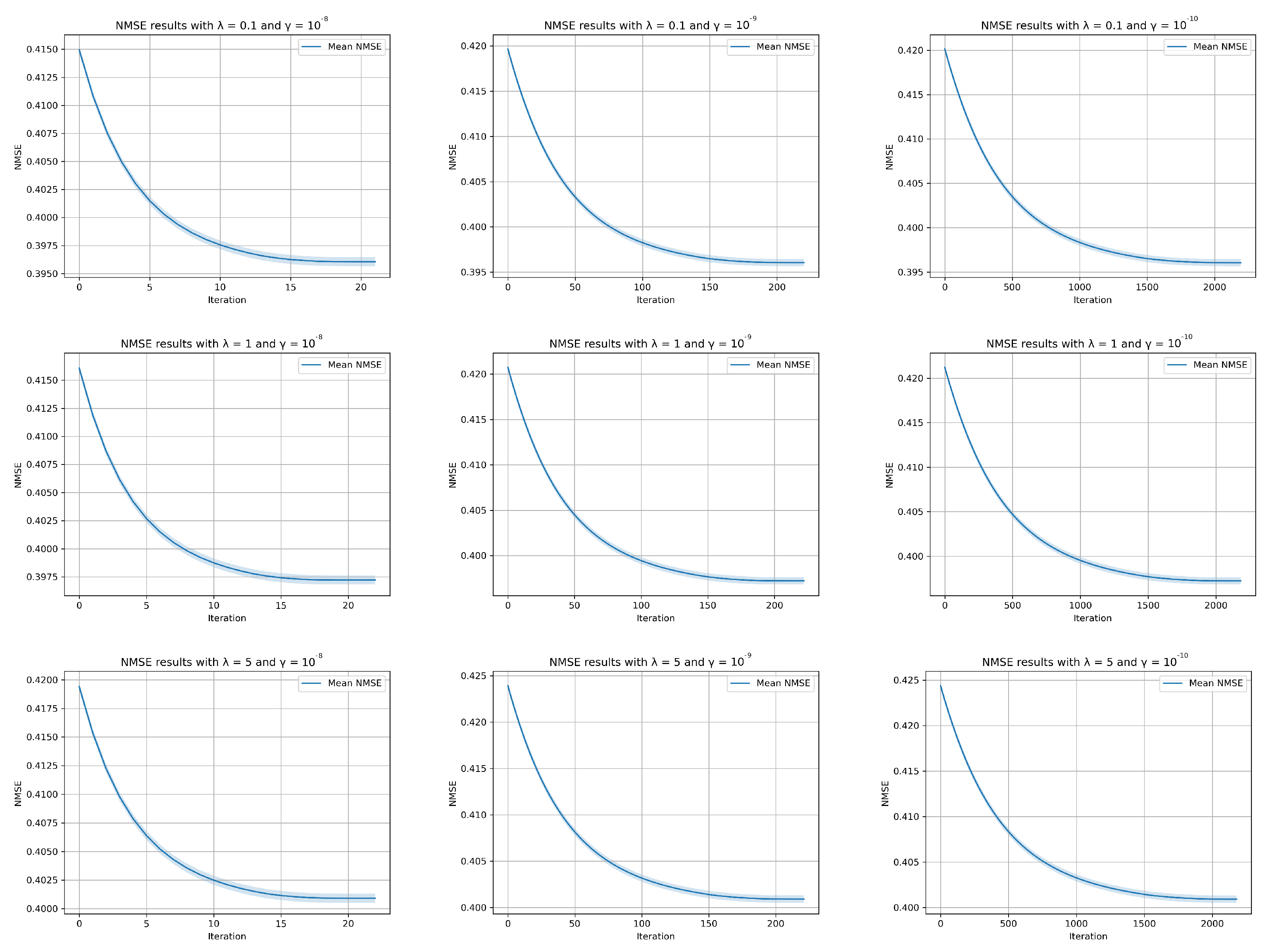

Appendix A.1. Parameter Tuning Plots

In Figure A1, we provide the convergence behavior of our method for distinct values of and , presenting both the average values (represented by solid points) and the standard deviations (illustrated as shaded areas), with respect to the normalized mean square error (NMSE) in each iteration.

Figure A1.

Various and applied to the Algorithm 1 and results in different convergence speed.

Figure A1.

Various and applied to the Algorithm 1 and results in different convergence speed.

Appendix A.2. Forward Operator for the 3D Object

Figure 2 presents the 2D view of the acquisition setup for a planar object where is the lateral coordinate and the depth coordinate. The line integral of from to for a fixed h can be expressed as follows:

where and denote the source and detector positions, respectively. Next, we substitute with its representation in the Fourier domain :

Using (A2) in (A1), it yields

Using , it follows that

and then

Using , the following can be written:

which means we can link the 3D Fourier transform of x to the 2D Fourier transform of the data as

Appendix A.3. Forward Operator for a 2D Slice at Depth z

We introduce as a slice of the object x at depth z, i.e.,

We then define the forward operator as projecting a 2D object to the detector at via

or, equivalently, as

The adjoint operator can be obtained by considering the definition . First, we have

Now, let ; then, and , and it follows that

We thus find that is defined as

By using (A9) in (A12), the composition can be written as below:

Appendix A.4. Back Projection for a Depth Image

Appendix A.5. Gradient Calculation of the Objective Function

To find the gradient of with respect to , by the chain roll it reads

where the second term is equal to zero because is the optimal point of . Then, the first term can be written as

To obtain an expression for the derivative of with respect to , we exploit the fact that the lower-level optimization problem (16) has a closed-form solution:

We define , and then the derivative follows:

Recall that ; therefore,

Then, (A19) can be rewritten as

where is equal to in (A18) and is denoted by . Then, it can be rewritten:

Appendix A.6. Projection onto the Simplex Constraint

Let be an operator that maps each point onto the simplex set of . In fact, the orthogonal projection of is the unique solution of

to solve the above optimization via its dual problem, we introduce the Lagrangian [16]:

where is a Lagrangian multiplier. The dual problem can now be written as

where is the dual function as the minimum value of the Lagrangian over . Regarding strong duality, the optimal solutions of (A25) and (A27) are equal if there exists a dual variable of that makes (A27) a tight lower bound for (A25). The solution of the dual problem of (A27) can be written as [19]

where is obtained by solving . Let us define the function , which is non-increasing and can be solved using the Newton method. The derivative is given by

References

- Medici, V.; Martarelli, M.; Paone, N.; Pandarese, G.; van de Kamp, W.; Verhoef, B.; Sipsas, K.; Broechler, R.; Besada, L.B.; Alexopoulos, K.; et al. Integration of Non-Destructive Inspection (NDI) systems for Zero-Defect Manufacturing in the Industry 4.0 & IoT (MetroInd4.0&IoT). In Proceedings of the 2023 IEEE International Workshop on Metrology for Industry 4.0 & IoT (MetroInd4.0&IoT), Brescia, Italy, 6–8 June 2023; pp. 439–444. [Google Scholar] [CrossRef]

- Wang, B.; Zhong, S.; Lee, T.L.; Fancey, K.S.; Mi, J. Non-destructive testing and evaluation of composite materials/structures: A state-of-the-art review. Adv. Mech. Eng. 2020, 12, 1687814020913761. [Google Scholar] [CrossRef]

- Hassler, U.; Oeckl, S.; Bauscher, I. Inline ct methods for industrial production. In Proceedings of the International Symposium on NDT in Aerospace 2008, Fürth, Germany, 3–5 December 2008; pp. 123–131. [Google Scholar]

- Gondrom, S.; Zhou, J.; Maisl, M.; Reiter, H.; Kröning, M.; Arnold, W. X-ray computed laminography: An approach of computed tomography for applications with limited access. Nucl. Eng. Des. 1999, 190, 141–147. [Google Scholar] [CrossRef]

- O’Brien, N.; Mavrogordato, M.; Boardman, R.; Sinclair, I.; Hawker, S.; Blumensath, T. Comparing cone beam laminographic system trajectories for composite NDT. Case Stud. Nondestruct. Test. Eval. 2016, 6, 56–61. [Google Scholar] [CrossRef][Green Version]

- Chaloner, K.; Verdinelli, I. Bayesian Experimental Design: A Review. Stat. Sci. 1995, 10, 273–304. [Google Scholar] [CrossRef]

- Haber, E.; Magnant, Z.; Lucero, C.; Tenorio, L. Numerical methods for A-optimal designs with a sparsity constraint for ill-posed inverse problems. Comput. Optim. Appl. 2012, 52, 293–314. [Google Scholar] [CrossRef]

- Haber, E.; Horesh, L.; Tenorio, L. Numerical methods for experimental design of large-scale linear ill-posed inverse problems. Inverse Probl. 2008, 24, 055012. [Google Scholar] [CrossRef]

- Haber, E.; Horesh, L.; Tenorio, L. Numerical methods for the design of large-scale nonlinear discrete ill-posed inverse problems. Inverse Probl. 2009, 26, 025002. [Google Scholar] [CrossRef]

- Ruthotto, L.; Chung, J.; Chung, M. Optimal Experimental Design for Inverse Problems with State Constraints. SIAM J. Sci. Comput. 2018, 40, B1080–B1100. [Google Scholar] [CrossRef]

- van Aarle, W.; Palenstijn, W.J.; Cant, J.; Janssens, E.; Bleichrodt, F.; Dabravolski, A.; Beenhouwer, J.D.; Batenburg, K.J.; Sijbers, J. Fast and flexible X-ray tomography using the ASTRA toolbox. Opt. Express 2016, 24, 25129–25147. [Google Scholar] [CrossRef] [PubMed]

- Jørgensen, J.S.; Lionheart, W.R.B. Chapter 6: Filtered Back-Projection. In Computed Tomography: Algorithms, Insight, and Just Enough Theory; Society for Industrial and Applied Mathematics: Philadelphia, PA, USA, 2021; pp. 73–103. [Google Scholar] [CrossRef]

- Zhang, F. The Schur Complement and Its Applications; Springer Science & Business Media: Berlin/Heidelberg, Germany, 2006; Volume 4. [Google Scholar]

- Paige, C.C.; Saunders, M.A. LSQR: An algorithm for sparse linear equations and sparse least squares. ACM Trans. Math. Softw. (TOMS) 1982, 8, 43–71. [Google Scholar] [CrossRef]

- Sinha, A.; Malo, P.; Deb, K. A Review on Bilevel Optimization: From Classical to Evolutionary Approaches and Applications. IEEE Trans. Evol. Comput. 2018, 22, 276–295. [Google Scholar] [CrossRef]

- Boyd, S.; Vandenberghe, L. Convex Optimization; Cambridge University Press: Cambridge, UK, 2004. [Google Scholar]

- Wang, Z.; Bovik, A.; Sheikh, H.; Simoncelli, E. Image quality assessment: From error visibility to structural similarity. IEEE Trans. Image Process. 2004, 13, 600–612. [Google Scholar] [CrossRef] [PubMed]

- Srinivas, M.; Patnaik, L. Genetic algorithms: A survey. Computer 1994, 27, 17–26. [Google Scholar] [CrossRef]

- Kadu, A.; van Leeuwen, T.; Batenburg, K.J. CoShaRP: A convex program for single-shot tomographic shape sensing. Inverse Probl. 2021, 37, 105005. [Google Scholar] [CrossRef]

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content. |

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).