Abstract

Episodic memory training plays a crucial role in cognitive enhancement, particularly in addressing age-related memory decline and cognitive disorders. Accurately assessing the effectiveness of such training requires reliable methods to capture changes in memory function. Electroencephalography (EEG) offers an objective way of evaluating neural activity before and after training. However, EEG classification in episodic memory assessment remains challenging due to the variability in brain responses, individual differences, and the complex temporal–spatial dynamics of neural signals. Traditional EEG classification methods, such as Support Vector Machines (SVMs) and Convolutional Neural Networks (CNNs), face limitations when applied to episodic memory training assessment, struggling to extract meaningful features and handle the inherent uncertainty in EEG signals. To address these issues, this paper introduces DU-former, which improves feature extraction and enhances the model’s robustness against noise. Specifically, data uncertainty (DU) explicitly handles data uncertainty by modeling input features as Gaussian distributions within the reparameterization module. One branch predicts the mean through convolution and normalization, while the other estimates the variance via average pooling and normalization. These values are then used for Gaussian reparameterization, enabling the model to learn more robust feature representations. This approach allows the model to remain stable when dealing with complex or noisy data. To validate the method, an episodic memory training experiment was designed with 17 participants who underwent 28 days of training. Behavioral data showed a significant reduction in task completion time. Object recognition accuracy also improved, as indicated by the higher proportion of correctly identified target items in the episodic memory testing game. Furthermore, EEG data collected before and after the training were used to evaluate the DU-former’s performance, demonstrating significant improvements in classification accuracy. This paper contributes by introducing uncertainty learning and proposing a more efficient and robust method for EEG signal classification, demonstrating superior performance in episodic memory assessment.

1. Introduction

Episodic memory refers to recalling specific times, locations, and events, typically involving multi-dimensional information such as people, places, and time [1]. With the global aging population and the rising prevalence of cognitive disorders, research and applications in episodic memory training have increasingly become a focus of attention. Episodic memory training aims to enhance memory capabilities by simulating or reconstructing specific situations. Standard training methods include scenario reconstruction, virtual environment training, and multi-sensory stimulation. By activating the sensory nervous system, these methods help strengthen the associations and visualization of information, thereby promoting the recovery and improvement of cognitive functions in the brain.

In recent years, video games and virtual reality (VR) technologies in episodic memory training have advanced. Video games effectively engage participants’ interests with their interactivity and variable task structures. Studies have shown that this training approach significantly improves memory abilities in elderly individuals [2,3,4]. By offering immersive virtual environments, virtual reality allows participants to engage in memory training in situations resembling the real world. Some studies have found that active navigation and moderate interaction within virtual environments can significantly enhance the episodic memory abilities of elderly individuals [5,6].

In assessing episodic memory, traditional neuropsychological tests typically involve tasks such as story recall or word listing [7,8,9]. Nevertheless, these methods correlate poorly with individuals’ subjective memory abilities and daily life memory functions. In contrast, VR environments offer a more comprehensive and function-oriented type of assessment, allowing for better testing of episodic memory by simulating daily activities [10]. For example, in a virtual town task, participants were asked to remember the location of specific events or places or assess spatial memory and time perception through virtual navigation tasks [11].

By analyzing brain electrical activity patterns, electroencephalogram (EEG) can be used to effectively evaluate memory states. EEG classification in episodic memory-related research is particularly challenging due to the variability in brain responses, individual differences, and the complex temporal–spatial dynamics of neural signals. Traditional EEG classification methods, such as Support Vector Machines (SVMs) [12,13] and Linear Discriminant Analysis (LDA) [14,15], rely on manual feature extraction and preprocessing. Even though these methods are effective, there exist limitations when handling complex signals [16,17,18]. In recent years, deep learning algorithms, particularly Convolutional Neural Networks (CNNs) [19] and Long Short-Term Memory Networks (LSTMs) [20,21], have gradually become the mainstream approach for EEG signal classification. Centroid-Guided Domain Incremental Learning (CGER) [22] introduces a centroid-based regularization mechanism combined with experience replay to enhance incremental adaptation in EEG classification models. By maintaining class-wise centroids in feature space, CGER facilitates domain adaptation while preserving past knowledge. The advancement of deep learning, particularly data uncertainty (DU) using Transformer models [23], opens up new possibilities for more accurate and reliable episodic memory assessment.

This paper introduces the DU-former model, designed to enhance the accuracy and reliability of EEG signal classification for episodic memory assessment by overcoming the limitations of traditional methods. By incorporating DU into the Transformer architecture, the model improves feature extraction and robustness against noise. Specifically, the proposed architecture achieves synergistic enhancement through DU-former processing: the separable multi-head self-attention (SMHSA) mechanism first extracts spatiotemporal contextual features via its projection matrices, while the reparameterization module subsequently models channel-wise uncertainty distributions, enabling joint optimization of feature discriminability and noise robustness in end-to-end learning. The paper is structured to systematically demonstrate the DU-former’s effectiveness: First, this paper elucidates the model’s dual-path architecture integrating temporal–spatial convolution with uncertainty modeling, detailing core components including the multi-scale convolution module, separable multi-head self-attention blocks, and probabilistic reparameterization layers. Subsequently, rigorous experimental protocols are established, encompassing VR-based episodic memory tasks with 17 subjects undergoing longitudinal training, followed by an evaluation comparing classification metrics against baseline models.

2. DU-Former: An EEG Classification Model

2.1. Model Architecture Design

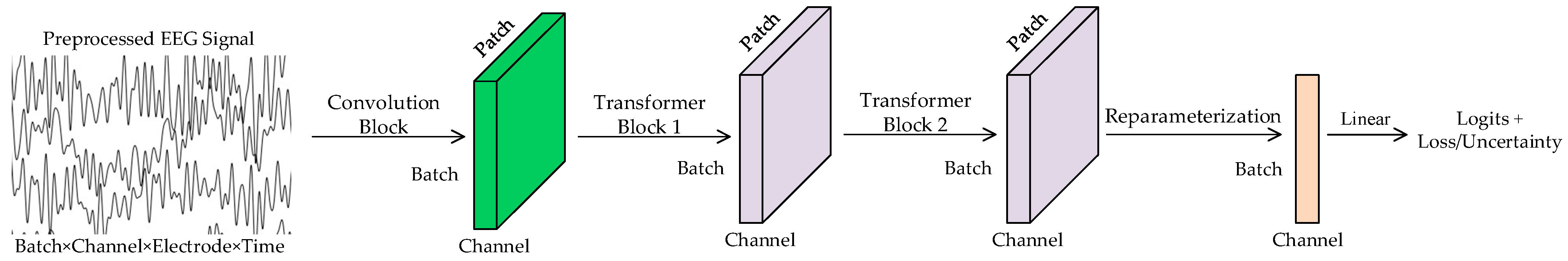

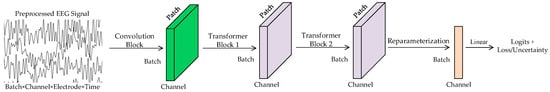

The architecture of the DU-former model designed in this paper is shown in Figure 1. It is designed to enhance the accuracy and robustness of EEG signal classification, particularly in the context of episodic memory training assessment. The model comprises three core components: a convolutional module, dual Transformer encoder modules, and a reparameterization module. The convolutional module employs parallel large-kernel and small-kernel convolutional branches to extract both local and global features from EEG signals, ensuring comprehensive feature representation across temporal and spatial dimensions. Subsequently, the dual Transformer encoder modules utilize an SMHSA mechanism, which effectively models the temporal dynamics of EEG signals while reducing computational complexity. Finally, the reparameterization module incorporates Gaussian distribution modeling to capture the uncertainty in input features. By separately learning the principal feature components through the mean branch and the noise components through the variance branch, this module enhances classification robustness and prevents the model from overfitting to the inherent variability of EEG signals.

Figure 1.

DU-former model.

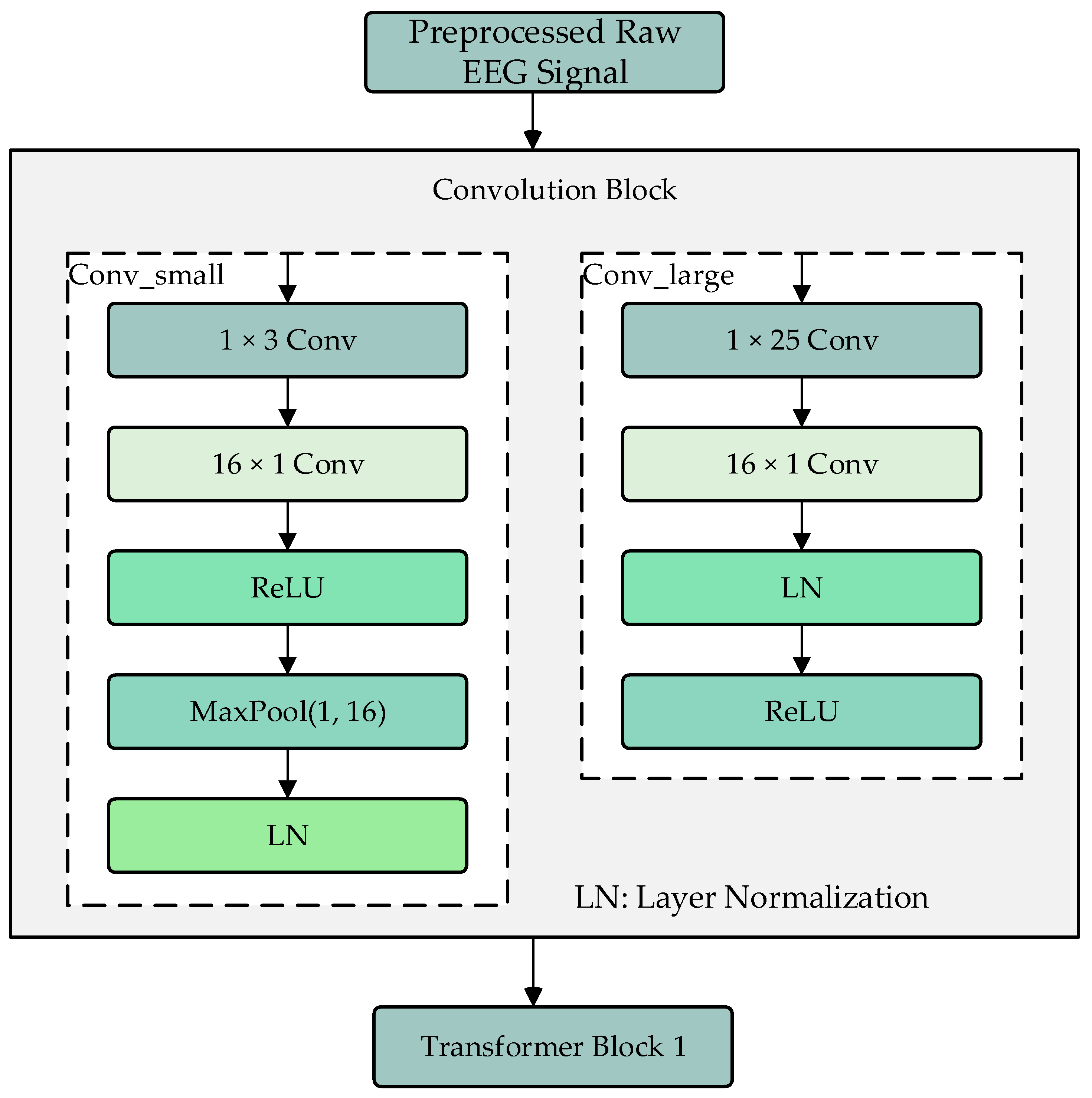

2.2. Convolution Module

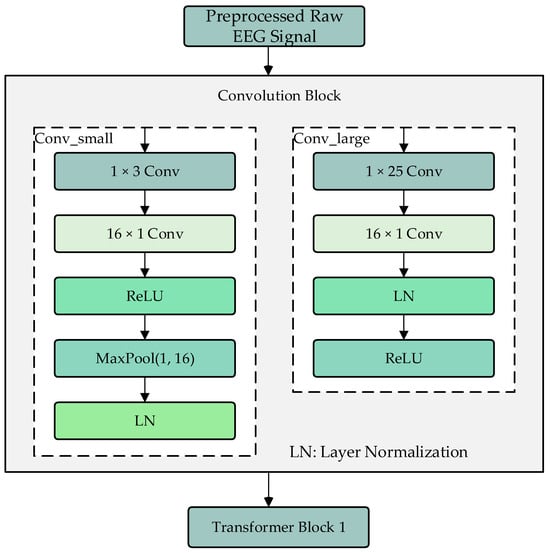

The convolution module consists of two branches, Conv_large and Conv_small, which are used to extract local and global features of the processed EEG signals, as shown in Figure 2. The Conv_large branch first uses a 1 × 25 convolution kernel to extract features along the time dimension, followed by a 16 × 1 convolution kernel to extract features along the electrode channel dimension. The results are then normalized and activated using ReLU. The Conv_small branch also uses a 1 × 25 convolution kernel to extract features along the time dimension, followed by a 16 × 1 convolution kernel to extract features along the electrode channel dimension. After ReLU activation, a max-pooling operation is performed to adjust the data dimensions to match those of the Conv_large branch, followed by layer normalization. The two branches use different sizes of convolution kernels to extract local and global features, and their outputs are fused as input to the Transformer encoder modules.

Figure 2.

Convolution module.

Compared to traditional EEG feature extraction approaches, the convolution module in DU-former introduces significant improvements in capturing both short-term fluctuations and long-range dependencies in EEG signals. Classical methods such as Short-Time Fourier Transform (STFT) and Wavelet Transform (WT) primarily focus on frequency domain analysis, while statistical feature-based approaches rely on handcrafted measures such as power spectral density and event-related potential amplitudes. Although these methods have demonstrated effectiveness in certain EEG applications, they often fail to generalize across varying recording conditions due to their reliance on manually defined features. In contrast, early deep learning-based models, such as CNNs, leverage convolutional operations to automatically extract informative representations, but they typically apply uniform kernel sizes, which may limit the ability to capture multi-scale dependencies. EEGNet, a widely used CNN-based architecture for EEG classification, employs depth wise and separable convolutions to improve computational efficiency while maintaining spatial–temporal representations. However, EEGNet’s reliance on a single convolutional pipeline restricts its adaptability to complex EEG signals.

The convolution module in DU-former addresses these limitations by incorporating dual-branch convolutional processing, allowing the simultaneous extraction of fine-grained and high-level EEG features. The Conv_large branch ensures that long-range temporal dependencies are captured, making the model more robust to variations in brain activity across time. Meanwhile, the Conv_small branch enhances sensitivity to local changes in neural patterns while maintaining computational efficiency. This dual-branch structure significantly improves the model’s ability to generalize across subjects and recording sessions, which is critical for EEG-based cognitive assessments. Furthermore, by normalizing features at multiple stages and leveraging distinct convolutional scales, the module mitigates the risk of overfitting, which is a common challenge in EEG deep learning models. These architectural enhancements ultimately contribute to improved classification performance, as validated in experimental comparisons against baseline models.

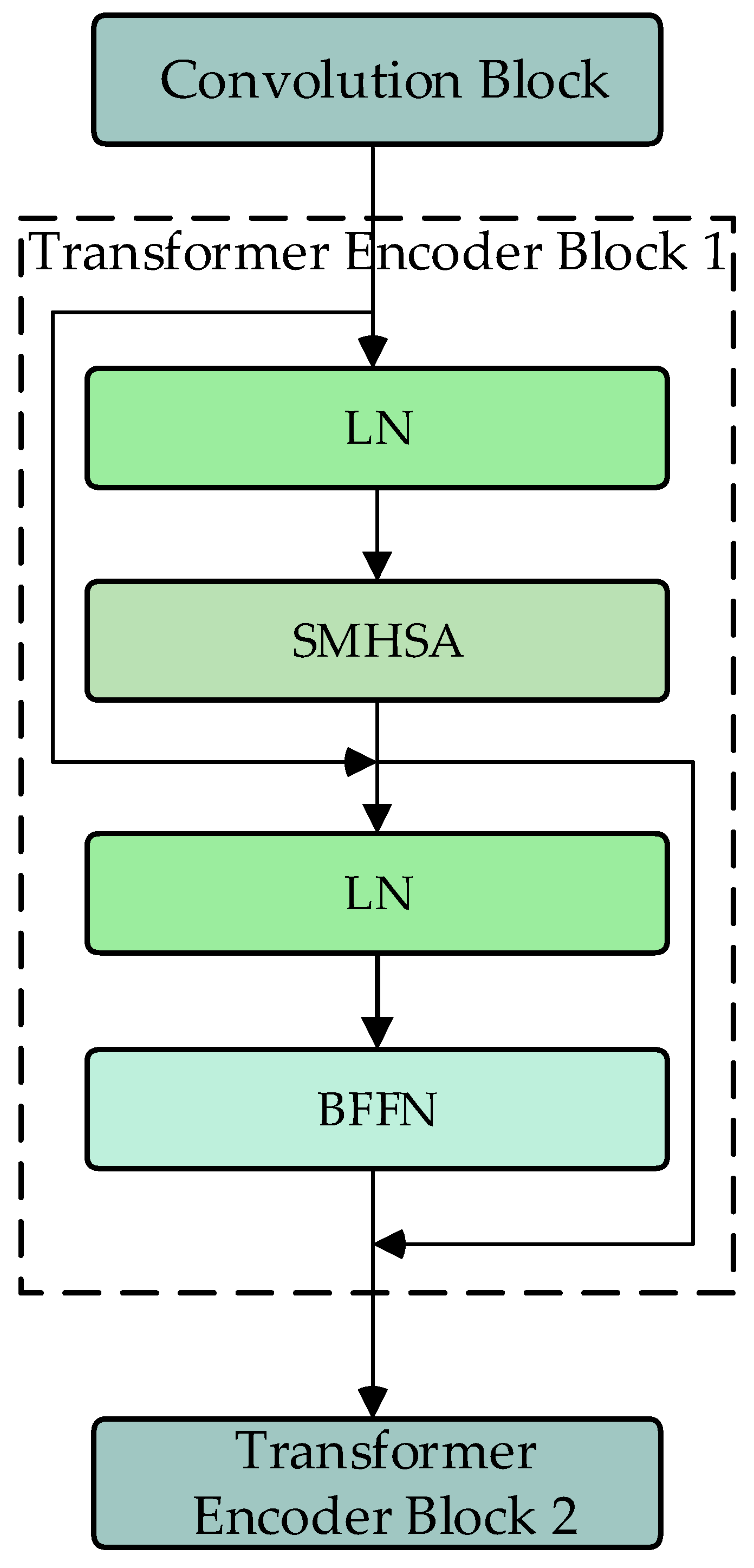

2.3. Transformer Encoder Modules

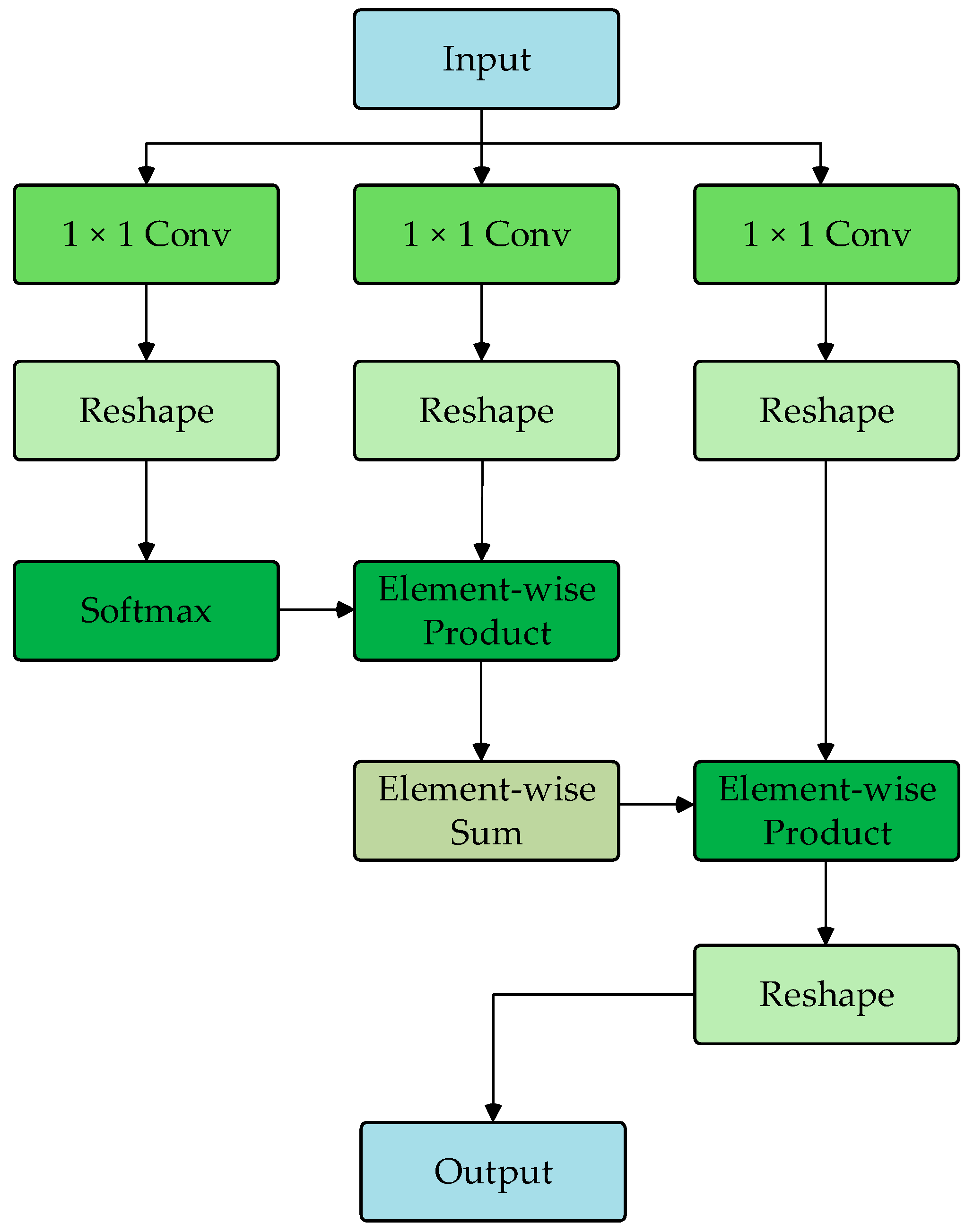

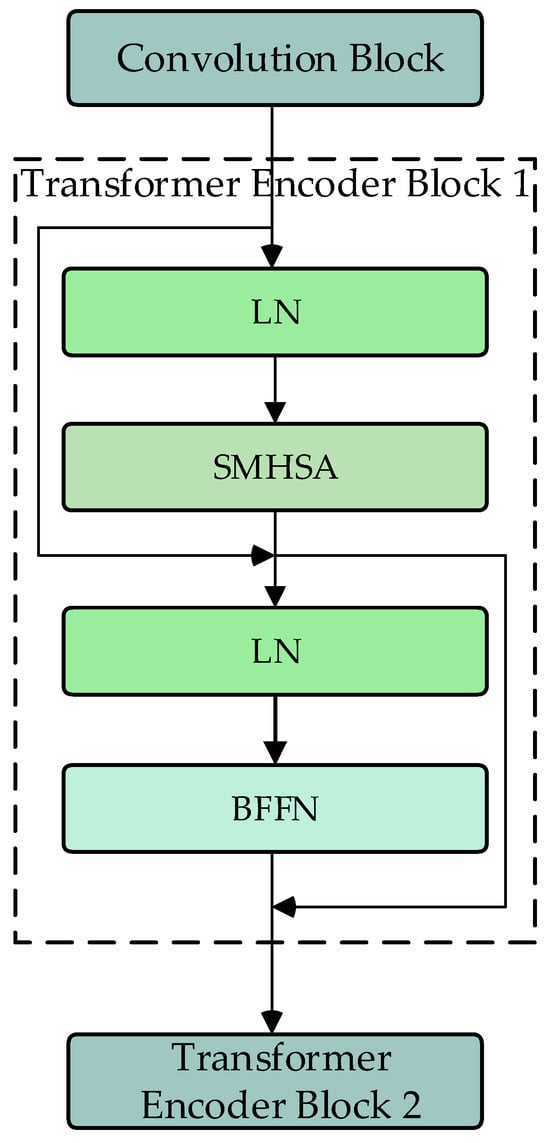

The DU-former model employs two Transformer encoder modules to encode the input sequence layer by layer, capturing contextual information and deep feature representations. Figure 3 shows the process of Transformer encoder block 1. The process of Transformer encoder block 2 is consistent with block 1. Each Transformer encoder module comprises an SMHSA and a feed-forward network (FFN). The attention mechanism is the core component of the Transformer encoder module, as it calculates the correlation between positions within the sequence, assigning different weights to each position, thus enhancing the model’s ability to capture related information within the sequence. As shown in Figure 4, this paper uses the separable multi-head self-attention mechanism from the MobileViT model to improve computational efficiency, which reduces computational complexity.

Figure 3.

Flowchart of Transformer encoder block (SMHSA: separable multi-headed self-attention; BFFN: feed-forward network).

Figure 4.

Flowchart of SMHSA.

First, the input features are mapped to , , and matrices. Then, the matrix computes the inner product with the matrix via the softmax operation to obtain the context scores. Finally, these scores are weighted by the matrix to generate the attention vector, as shown in Equation (1).

FFN consists of two linear layers. The input data are first mapped to a higher-dimensional space and then mapped back to a lower-dimensional space, allowing the model to learn richer feature representations.

Compared to conventional Transformer-based EEG classification models, the DU-former’s Transformer encoder module introduces architectural improvements tailored for efficient neural signal processing. Standard Transformer models rely on traditional self-attention mechanisms, where each query interacts with all key–value pairs across the sequence. While effective in modeling long-range dependencies, this approach incurs substantial computational complexity, making it less practical for applications involving high-dimensional EEG signals with limited training data. Recent adaptations, such as EEG–Transformer and Vision Transformers (ViTs), have sought to address these limitations by incorporating patch-wise attention or hybrid convolutional–attention architectures. However, these methods still suffer from quadratic complexity in relation to sequence length, limiting their scalability.

The DU-former model refines this approach by integrating SMHSA, inspired by MobileViT, which decomposes the attention mechanism into spatially separable operations. This design reduces redundant computations while preserving the model’s capacity to extract salient EEG features. Additionally, the use of separable attention enhances the model’s ability to focus on relevant temporal and spatial patterns within EEG signals, making it more robust to noise and inter-subject variability. Furthermore, by maintaining dual Transformer encoder modules, the model ensures a hierarchical feature extraction process, where initial layers capture broad contextual dependencies, while deeper layers refine and specialize the learned representations.

The architectural contributions of the Transformer encoder module in DU-former directly improve EEG classification performance by balancing computational efficiency with representational power. The combination of SMHSA and FFN enables the model to capture multi-scale dependencies in EEG signals while maintaining a lower computational footprint. This makes DU-former particularly suited for episodic memory assessment, where EEG data exhibit complex temporal structures and high inter-subject variability.

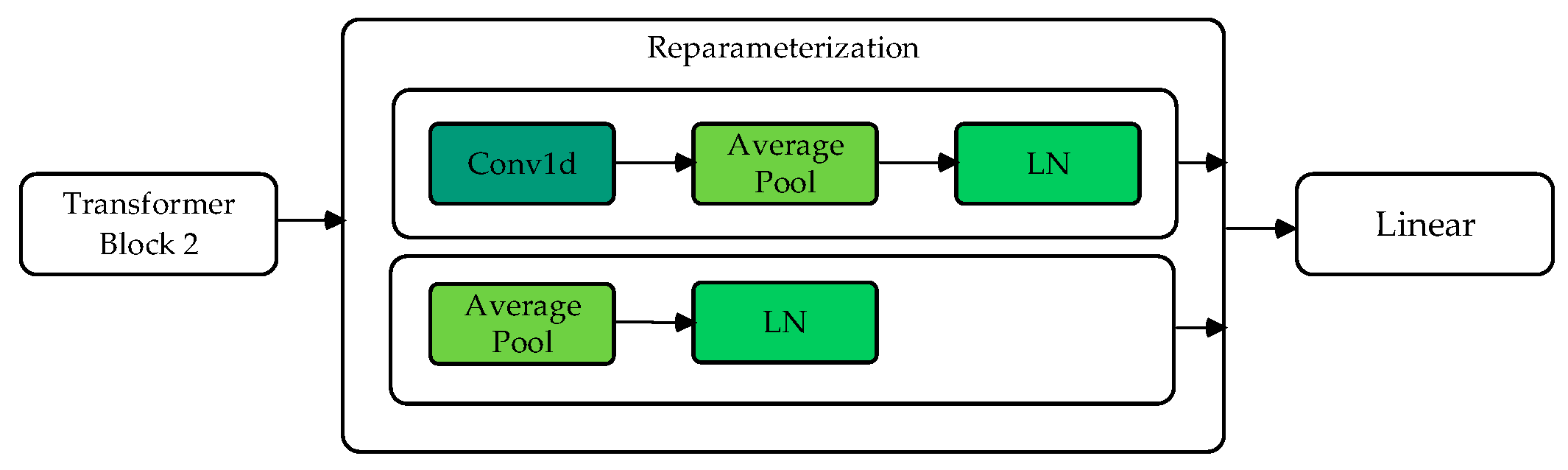

2.4. Reparameterization Module

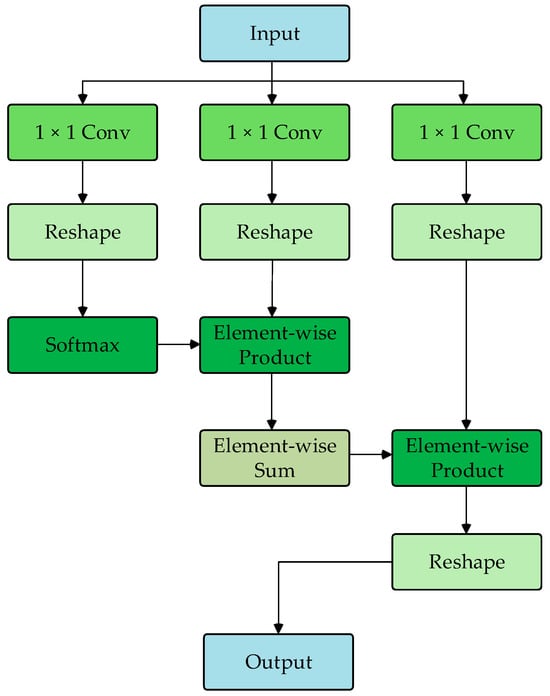

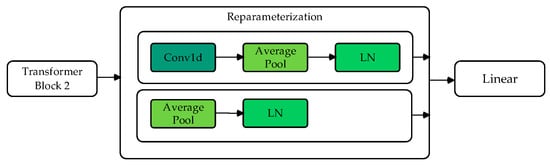

The reparameterization module, which consists of two branches, is inspired by methods used in other studies [24]. Branch 1 performs convolution, average pooling, and normalization operations to obtain the model’s distribution mean (). Branch 2 directly performs average pooling and layer normalization to obtain the distribution variance (). Through Gaussian reparameterization, the original features are reparametrized as embedded features with a Gaussian distribution.

As shown in Figure 5, after the model backbone, two branches predict the mean and variance . At each iteration, a random noise is sampled, and a new sample feature is generated using the following formula:

Figure 5.

Flowchart of reparameterization module.

This ensures that the reparametrized feature follows a Gaussian distribution with mean and variance , effectively simulating a stochastic feature representation. This simple resampling technique enhances the model’s robustness during training.

EEG signals inherently exhibit variability due to inter-trial fluctuations, individual differences, and external noise, posing significant challenges to classification models. Research on wayfinding uncertainty using EEG has demonstrated that cognitive uncertainty is reflected in neural activity patterns, affecting the reliability and confidence of classification outputs. Conventional EEG classification models, including CNNs and Transformers, typically treat extracted features as deterministic representations, which may lead to suboptimal generalization, particularly in scenarios with high neural variability. To address this challenge, the proposed DU-former model incorporates a reparameterization module that explicitly captures uncertainty by modeling feature distributions as Gaussian variables. This probabilistic representation enables the system to learn robust feature embeddings, mitigating the impact of ambiguous or noisy EEG patterns and enhancing classification stability.

Existing uncertainty-aware approaches, such as Bayesian Neural Networks and Monte Carlo dropout, attempt to estimate predictive uncertainty by performing multiple stochastic forward passes. However, these methods often incur high computational costs. The reparameterization trick employed in DU-former offers a computationally efficient alternative by enabling the model to learn feature distributions without requiring multiple forward passes. Unlike traditional Bayesian deep learning frameworks, such as Bayes-by-Backprop, which rely on complex prior distributions and additional optimization constraints, DU-former directly integrates Gaussian reparameterization within the classification pipeline, allowing feature distributions to be optimized in an end-to-end manner. Additionally, while variational autoencoders (VAEs) have been employed for probabilistic EEG feature extraction, they often struggle to balance latent space regularization and reconstruction fidelity. The DU-former model circumvents this issue by leveraging reparameterization to refine feature representations while maintaining computational efficiency.

By incorporating uncertainty-aware learning, DU-former effectively enhances the reliability of EEG classification, particularly in scenarios where EEG signal quality may be compromised by noise, motion artifacts, or subject-specific variability. The ability to sample features from a learned distribution not only improves robustness but also stabilizes decision boundaries, reducing the likelihood of overconfident misclassifications. Experimental results validate the effectiveness of this approach, demonstrating that the reparameterization module significantly contributes to superior classification performance compared to baseline methods. Through this probabilistic feature learning strategy, DU-former advances the state-of-the-art in EEG-based cognitive assessment by offering a more resilient and adaptive framework for modeling complex neural dynamics.

3. Episodic Memory Training and Testing Games Based on VR

To validate the effectiveness of the proposed DU-former model, it is essential to design a controlled experimental paradigm that can elicit distinct episodic memory-related EEG patterns before and after training. The combination of an episodic memory training game and a testing game in a VR environment allows for standardized memory tasks, providing both behavioral data and EEG signals to evaluate model performance. Effective episodic memory assessment scales provide objective, quantitative data that help researchers analyze changes in memory abilities with greater precision. This paper designs and implements an episodic memory training game and an episodic memory testing game based on VR technology to facilitate the paper of participants’ episodic memory functions.

Compared to real-world training settings, VR provides a controlled and repeatable environment that minimizes external distractions, thereby improving the reliability of EEG classification. This paper designs and implements an episodic memory training game and an episodic memory testing game based on VR technology to facilitate the assessment of participants’ episodic memory functions while systematically analyzing the impact of immersive environments on EEG signal variability.

3.1. The Design and Implementation of the Episodic Memory Training Game

- (1)

- Training Scene

This paper adopts a virtual room as the training scene to enhance the sense of immersion and real-life experience in episodic memory training. The scene includes a living room, two bedrooms, a bathroom, and a kitchen. As shown in Figure 6, the scene’s layout strives to replicate a real family environment, thereby improving realism and immersion. During the training process, the items to be memorized are everyday objects, such as a pot, hanger, slippers, towels, etc. These items are randomly generated and placed in the scene before each training session.

Figure 6.

An overhead view of the training game scene.

- (2)

- Training Task Design

The design of the episodic memory training tasks draws from the methods in Fajnerová et al.’s research, which demonstrated that such task designs effectively improve episodic memory abilities [25]. The training tasks are divided into three phases, the memory phase, the object recognition phase, and the recall phase, all of which involve the core elements of episodic memory—time, place, and objects. The specific task designs for each phase are as follows.

Memory Phase: As shown in Figure 7, participants must follow the blue directional arrows on the floor to move to designated locations. The items that participants must remember will be highlighted, and their names will be displayed when the mouse hovers over them. The primary task in this phase is to remember the location and arrangement of the objects.

Figure 7.

Memory phase training scene.

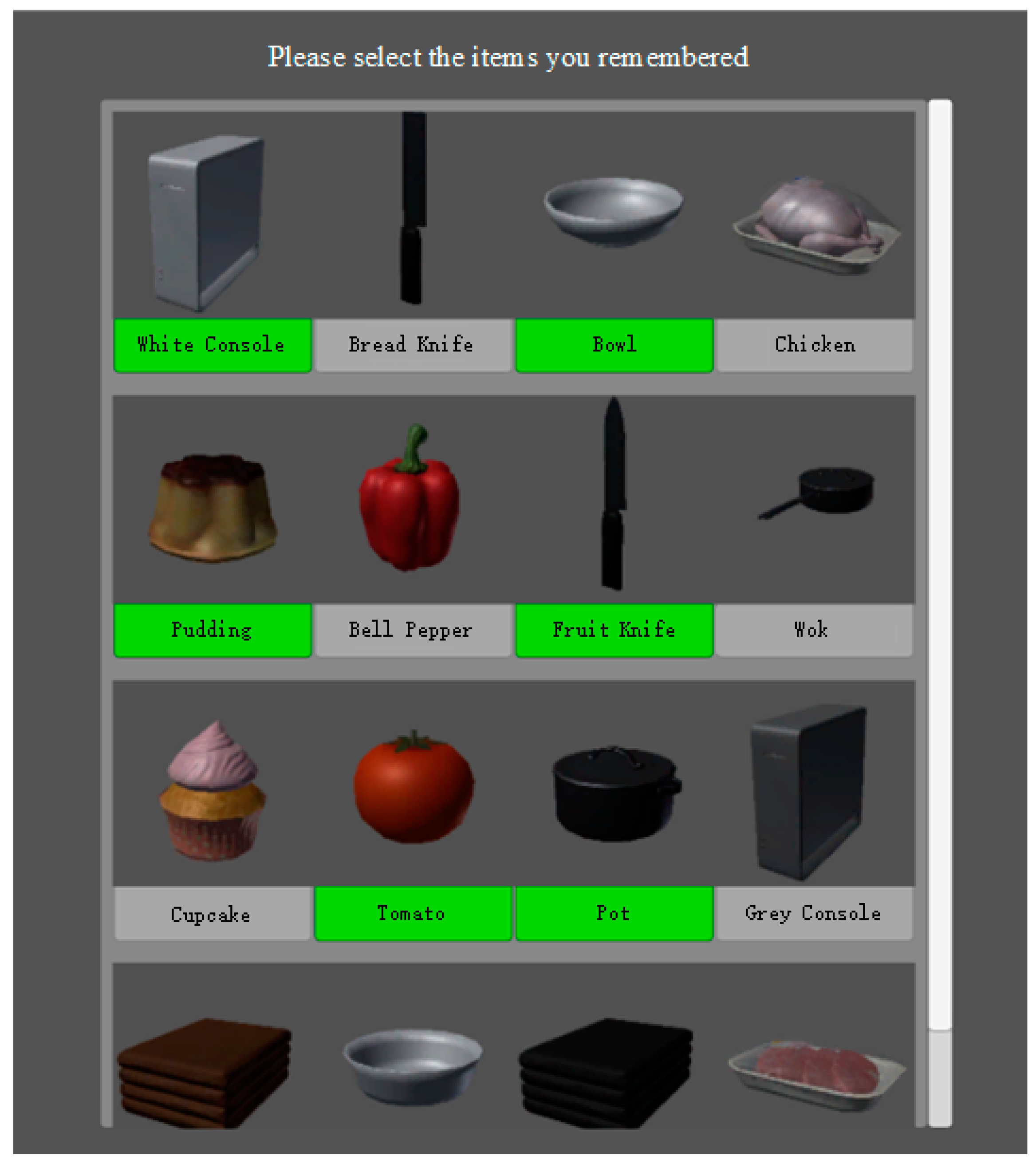

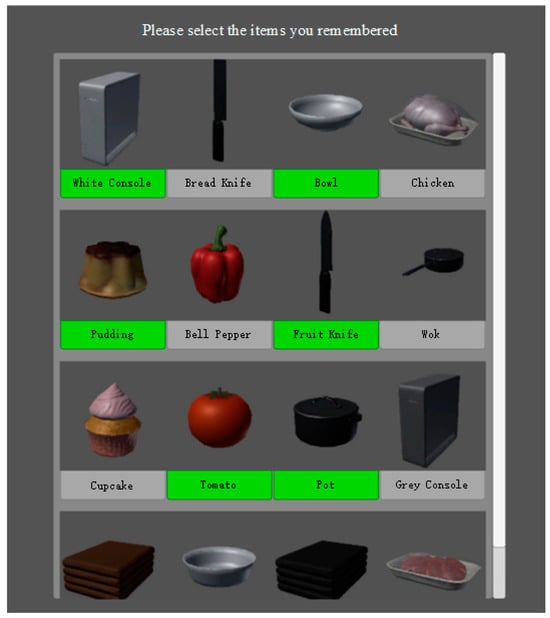

Object Recognition Phase: Figure 8 shows that this phase displays a series of object images, including target objects (those shown in the memory phase) and non-target objects (distractor objects). The ratio of target to distractor objects is 1:1, and the distractor objects are visually similar to the target objects. For example, if the target item is a blue towel, the distractor could be a red towel. Participants must identify the objects in the memory phase and mark the selected objects with a green icon. This phase does not require participants to recall the sequence of objects. The phase records the accuracy of the participants’ selections and the time taken.

Figure 8.

Object recognition phase training interface.

Recall Phase: Participants must return the objects to their original positions in the order they remember. The left side of the screen displays the icons of the objects from the memory phase in a randomized order. Participants drag and hold the icons to generate the objects and place them in the correct positions (a deviation of no more than half a meter is considered correct). This phase records the number of objects placed correctly by the participants.

- (3)

- Task Difficulty Design

This system incorporates multiple difficulty levels to increase the training’s challenge effectively. By adjusting the number of objects to be remembered, the training difficulty can gradually increase according to the participant’s progress, ensuring that the tasks progressively deepen and match the participant’s learning and memory abilities.

3.2. Design and Implementation of Episodic Memory Testing Game

- (1)

- Testing Game Scene

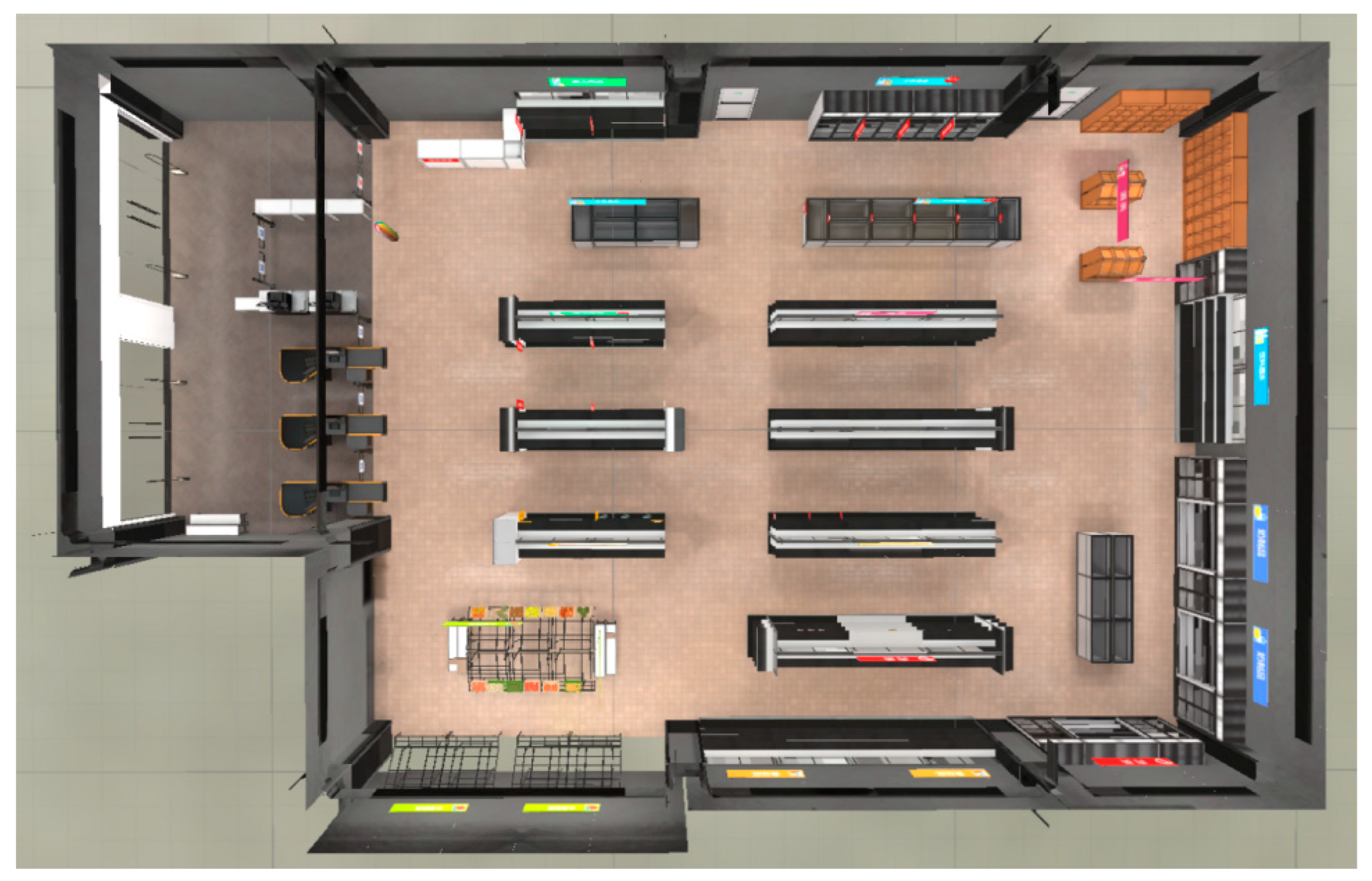

The testing game scene is a virtual supermarket, with the layout shown in Figure 9.

Figure 9.

Overhead view of testing game scene.

The scene is divided into five main areas: daily necessities, food, decorations, beverages, and fruits and vegetables. All food items are displayed on 16 shelves, with 5 items per shelf totaling 80. The five items on each shelf belong to the same category and share certain similarities; for instance, the fruit items on the same shelf are visually similar. Before the test begins, the system randomly selects one item from each shelf as the target object, while the other four items serve as distractors. Ultimately, the system randomly selects 16 target items from the 16 shelves.

- (2)

- Testing Task Design

Both Lecavalier [26] and Parsons [27] used virtual reality-based grocery stores for episodic memory testing, demonstrating that this approach is comparable to traditional paper-and-pencil tests in assessing episodic memory. This paper’s testing game design is based on these two studies.

At the beginning of the test, the system prompts the player to memorize a shopping list containing 16 items, which are sequentially displayed on the screen in both image and text formats, with each item shown for 5 s. After the memorization phase, the player engages in a 2 min conversation with a virtual shop assistant, introducing a delayed interference effect. After the conversation, the player can freely move around the virtual supermarket scene and search for the items. There is no restriction on the number of items that the player can select, and the selection time is set between 2 and 10 min. The test process records the items selected by the player, the time spent, and the player’s movement trajectory within the scene.

4. Experimental Design

This study assumes that episodic memory training induces measurable changes in EEG patterns, and that these changes can be captured and classified by deep learning models.

4.1. Participant Demographics

A total of 17 participants were recruited for this experiment, including 7 males and 10 females, with an average age of 22 ± 2.17 years. This paper was approved by the Ethics Committee of the First People’s Hospital of Qinhuangdao (Approval No.: 2018B006).

4.2. Experimental Procedure

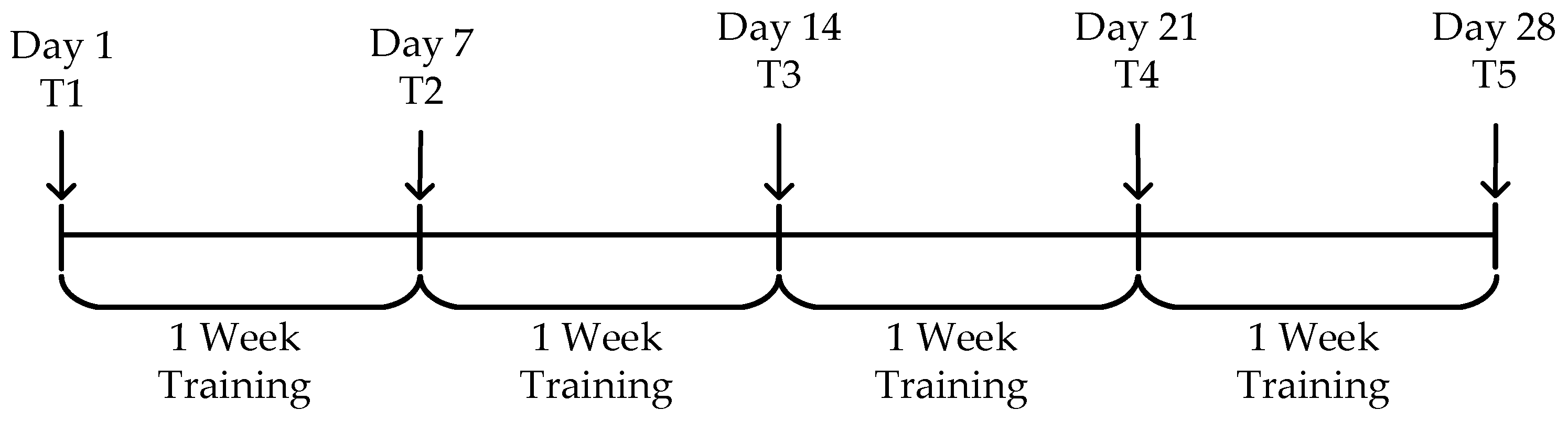

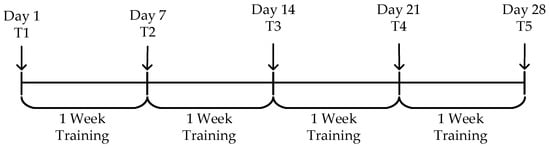

As shown in Figure 10, to evaluate the effectiveness of the episodic memory training system, a 28-day experiment was conducted. The episodic memory testing sessions were performed on Day 1 (T1) and at the end of each week (T2 to T5). The overall task schedule is displayed, where “Day” indicates the specific date, “T1” refers to the first testing session, and “Training” refers to the regular training sessions.

Figure 10.

Experimental procedure.

4.3. Data Collection

EEG signals and behavioral data from the participants were collected during each testing session. The data collection process is as follows:

- (1)

- EEG Signal Collection

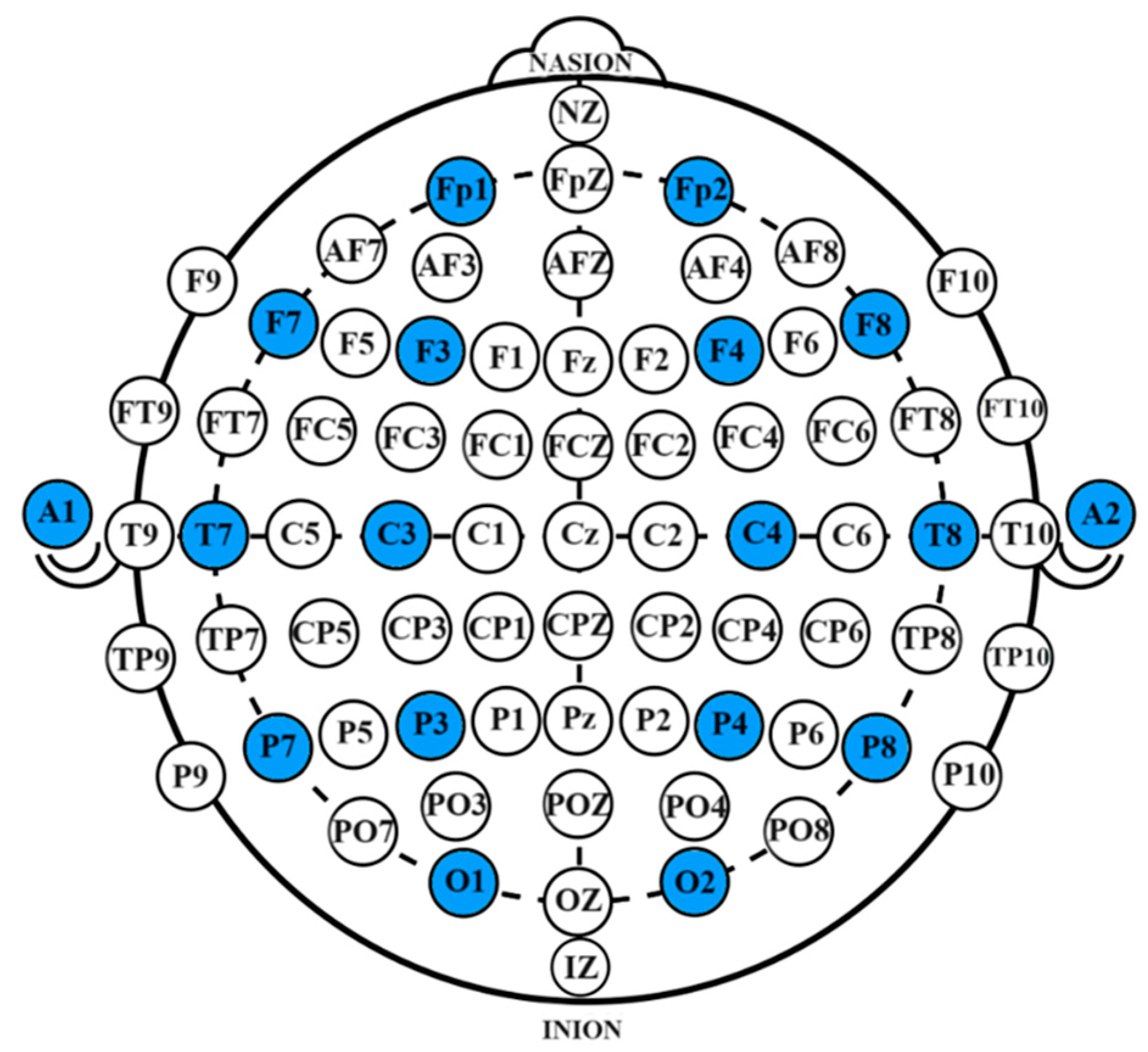

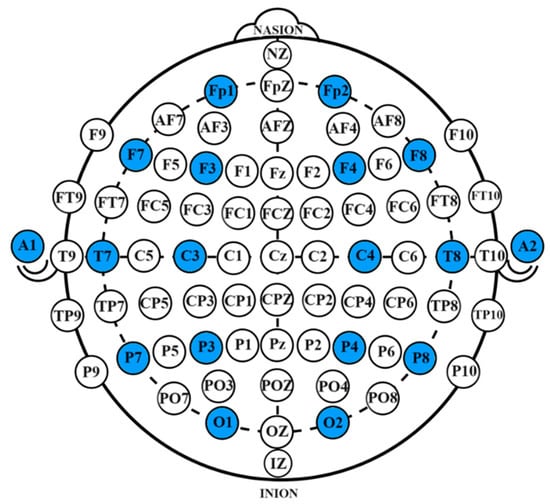

The system employed a 16-channel OpenBCI EEG acquisition device for data collection. During the collection process, the impedance of each channel was maintained below 10 kΩ. The collected EEG signals transmitted in real-time via Wi-Fi and were stored on a desktop computer. A Greentek EEG cap with semi-dry electrodes was used, with data being collected from 16 electrode sites, which include Fp1, Fp2, F7, F8, F3, F4, Fz, FCz, C3, C4, Cz, P7, P8, Pz, O1, and O2. The electrode configuration followed the international 10-10 system, with bilateral earlobe areas as reference electrodes. The specific electrode locations are shown in Figure 11, with the selected channels highlighted in blue.

Figure 11.

Electrode placement diagram. The specific electrode locations are shown in Figure 11, with the selected channels highlighted in blue.

- (2)

- Behavioral Data Collection

The training game was divided into three phases: the memory phase, the object recognition phase, and the recall phase. Behavioral data were primarily collected during the object recognition phase, which included the participant’s accuracy and execution time for each test.

4.4. Data Preprocessing

This paper analyzes EEG signals from participants before and after episodic memory training, which corresponds to the testing sessions T1 and T5. The acquired EEG data are first divided into frequency bands, including Delta (1–4 Hz), Theta (4–8 Hz), Alpha1 (8–10.5 Hz), Alpha2 (10.5–13 Hz), Beta1 (13–20 Hz), Beta2 (20–30 Hz), and Gamma (30–50 Hz). Afterward, each frequency band is further divided into time slices, specifically by a sliding window technique. A 2 s window with a 1 s step size (50% overlap) segments the original EEG data into multiple 16-channel × 2000-sample-point segments.

Preprocessing constitutes the initial phase in EEG signal processing, aiming to eliminate artifacts, noise, and motion-induced interference while enhancing signal reliability. The implementation of this paper involves three sequential steps.

Bandpass Filtering: Digital filters (Butterworth 4th-order) are employed to remove high-frequency noise (>50 Hz) and low-frequency drift (<0.5 Hz), with cutoff frequencies consistent with subsequent frequency band division.

Artifact Removal: A hybrid approach combining Independent Component Analysis (ICA) and regression methods is applied. Ocular artifacts (EOG) are identified through correlation analysis (threshold: r > 0.8) with reference channels, while myogenic artifacts (EMG) are suppressed using template matching with predefined muscle activation patterns.

Spatial Interpolation: Bad channels (impedance >50 kΩ) are reconstructed via spherical spline interpolation, ensuring spatial continuity across the 16-channel montage.

4.5. Statistical Analysis

The Mann–Whitney U test was applied for statistical analysis of accuracy and execution time data. According to the Mann–Whitney U test, if the p-value between two data groups is less than 0.01, it indicates a significant statistical difference.

4.6. Hardware Environment

The algorithms were executed in the hardware environment detailed in Table 1, ensuring consistent and reliable performance across all models.

Table 1.

Hardware environment.

5. Results

5.1. Behavioral Results of the Testing Game

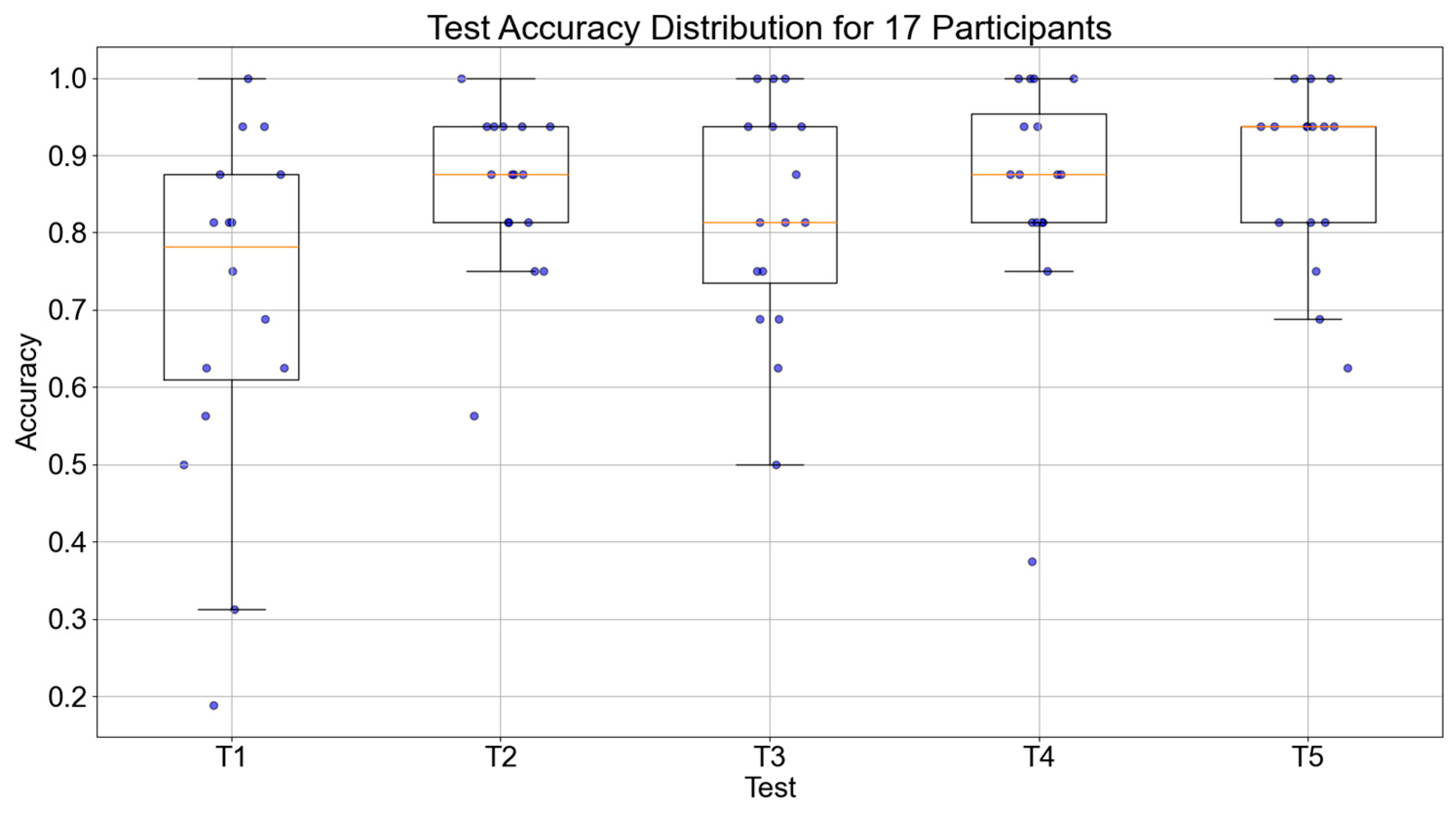

The accuracy of object recognition and average execution time were used as the primary evaluation metrics for behavioral data. By comparing the results of the five behavioral sessions during the experiment, the changes in participants’ episodic memory abilities were analyzed to determine whether the experiment improved their episodic memory.

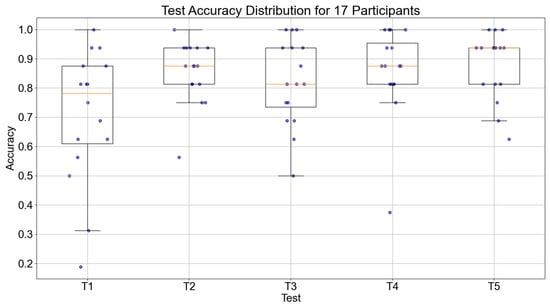

The accuracy of object recognition and execution times of the 17 participants during the five testing sessions were collected. The trend of accuracy for all 17 participants is shown in Figure 12. The median values of the participants for the five tests were 0.726, 0.859, 0.867, 0.871, and 0.883, respectively. Overall, there was an increasing trend in accuracy, with a significant improvement from the first test, which demonstrates the effectiveness of the episodic memory training system.

Figure 12.

Boxplot of object recognition accuracy across five tests for 17 participants.

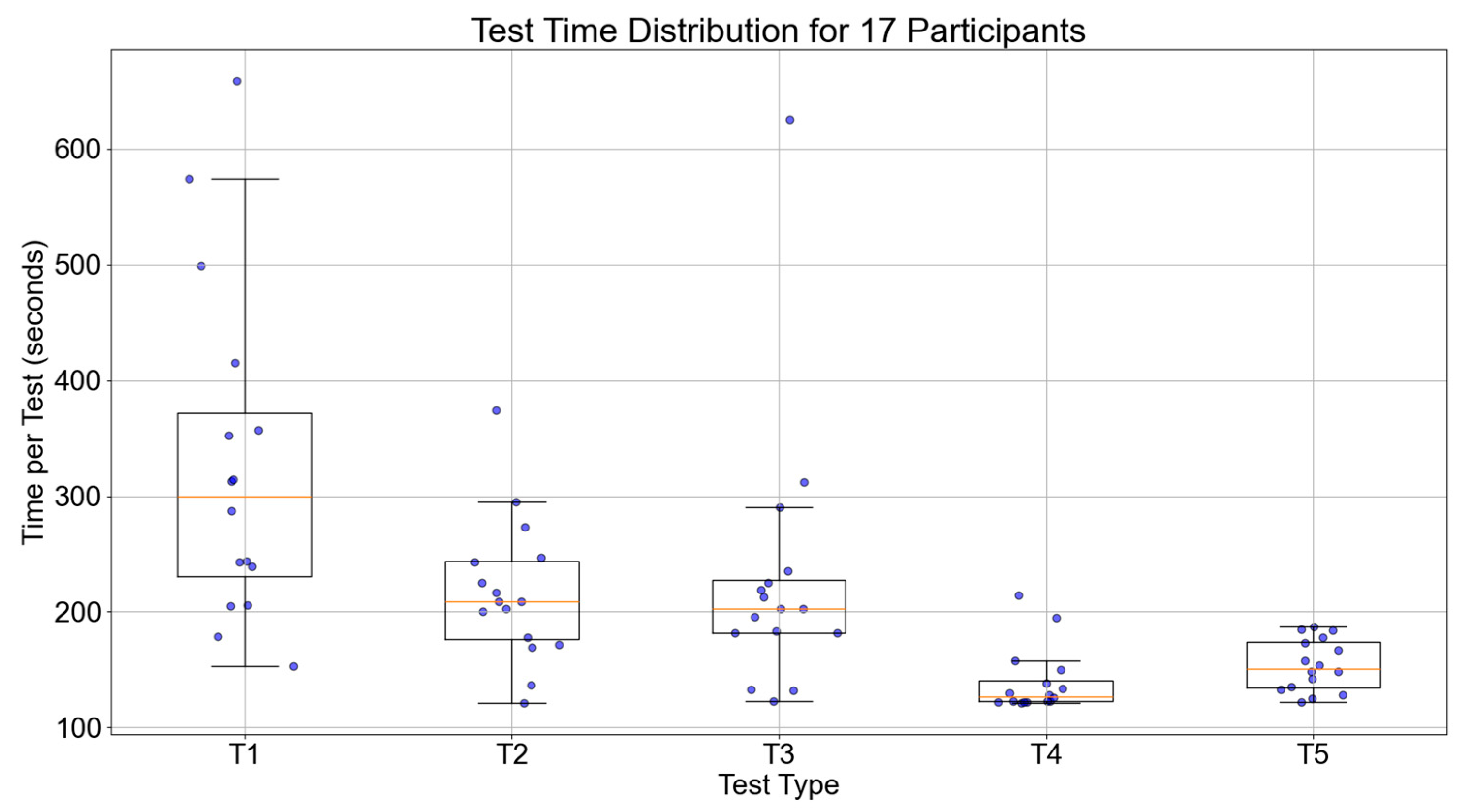

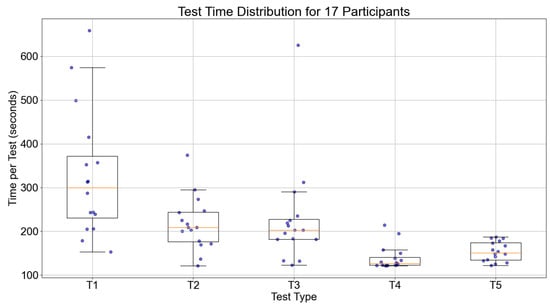

Figure 13 shows the trend of execution times for the 17 participants during the five tests. The median value of the participants for the five tests were 271.25, 209.50, 174.50, 171.00, and 138.00 s, respectively. Overall, the execution times decreased for all participants, indicating that the time and routes taken to locate the items were optimized compared to pre-training. This further proves the effectiveness of episodic memory training.

Figure 13.

Boxplot of execution time across five tests for 17 participants.

The results of statistical analysis shown in Table 2 revealed no significant differences between the first and second tests regarding accuracy and execution time, with p-values greater than 0.01. However, as the number of training sessions increased, the differences between the data became increasingly significant, especially between T1 and T5, where the p-value was less than 0.01, indicating a significant difference. This finding suggests that increasing the number of training sessions plays a crucial role in improving test performance, further validating the effectiveness of the episodic memory training method.

Table 2.

Results of statistical analysis.

5.2. DU-Former Classification Results

The model was evaluated using ten-fold cross-validation, with metrics such as accuracy, precision, recall, F1-score, and AUC. The uncertainty of the classification results was used to assess the reliability of the model’s classification. In addition, DU-former was compared with Transformer, EEGNet, and CNN models to demonstrate its superior performance in classifying EEG signals related to episodic memory.

5.2.1. Model Training Results

Table 3 shows the classification results of the DU-former model across seven frequency bands. As observed, the model’s classification performance improved from the low- to high-frequency bands. The classification performance was weakest in the Alpha2 band, with accuracy, precision, recall, F1, and AUC values of 0.850, 0.833, 0.809, 0.820, and 0.817, respectively. The best performance was observed in the Gamma band, with accuracy, precision, recall, F1, and AUC values of 0.975, 0.954, 0.989, 0.971, and 0.977. All frequency bands showed classification results above 0.85, demonstrating that DU-former has a strong classification ability for EEG signals related to episodic memory.

Table 3.

Classification results of DU-former model in seven frequency bands.

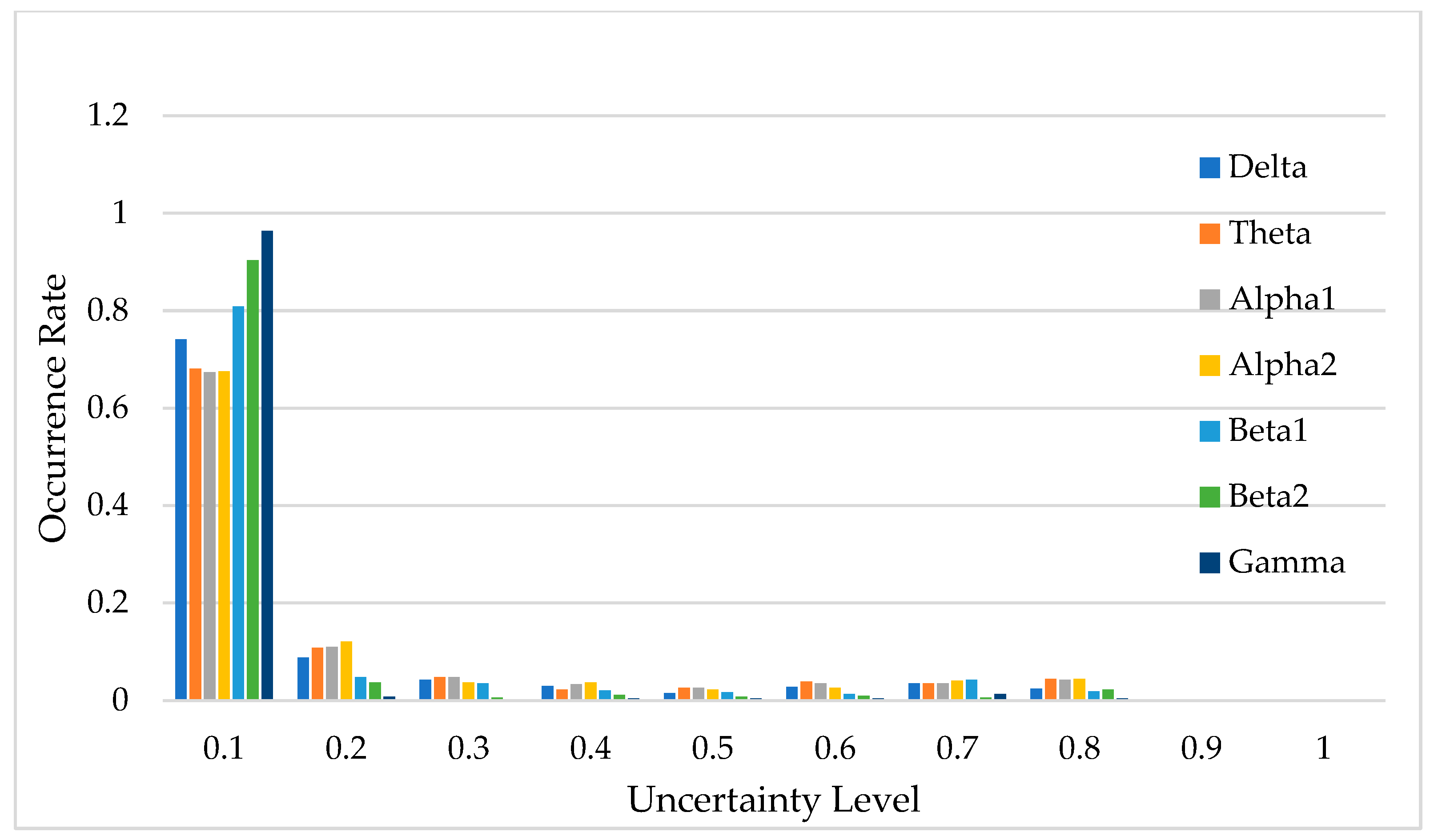

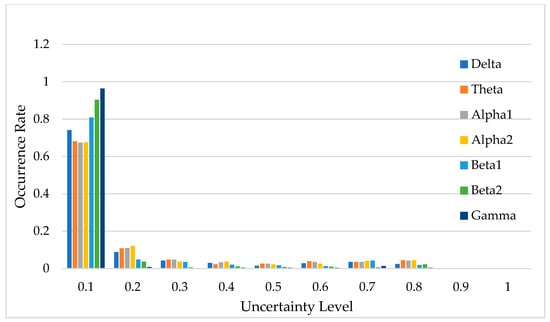

Figure 14 illustrates the uncertainty distribution of classification results across the seven frequency bands. In this context, uncertainty refers to the degree of confidence the DU-former model has in its classification predictions, with lower uncertainty indicating higher confidence and more reliable decisions. The horizontal axis, labeled “Uncertainty Level”, represents the degree of uncertainty in the model’s classification predictions, while the vertical axis, labeled “Occurrence Rate”, indicates the proportion of predictions that fall within each uncertainty level.

Figure 14.

Uncertainty distribution of model classification results.

Uncertainty plays a crucial role in EEG classification, as high uncertainty may indicate ambiguous neural patterns, signal noise, or overlapping feature distributions between different classes [22,23]. By quantifying uncertainty, researchers can assess the reliability of the model’s decisions and determine the proportion of classifications that can be considered trustworthy. As shown in Figure 14, predictions with uncertainty values less than 0.1 account for over 65% of the total data, suggesting that the model is highly confident in most cases.

If an uncertainty threshold of ≤0.3 is considered acceptable, then the acceptable classification results for each frequency band account for 83.0%, 83.2%, 89.1%, 94.5%, 87.1%, 97.3%, and 83.6% of the total data, respectively. This demonstrates that the DU-former provides highly reliable classification results for EEG signals related to episodic memory.

5.2.2. Model Comparison Results

To evaluate whether DU-former outperforms existing models in classifying episodic memory EEG signals, this paper compares it with EEGNet, Transformer, and CNN.

- (1)

- Delta Band Model Comparison Results

Table 4 presents the classification results of the four models in the Delta frequency band. As shown in Table 4, the DU-former model has the best overall performance, with accuracy, precision, recall, F1, and AUC scores of 0.883, 0.868, 0.856, 0.861, and 0.839, respectively. CNN has the worst performance, with an accuracy score of only 0.772.

Table 4.

Classification results of different models in the Delta band.

- (2)

- Theta Band Model Comparison Results

Table 5 shows the classification results of the four models in the Theta frequency band. As presented in Table 5, the DU-former model performs the best, with accuracy, precision, recall, F1, and AUC scores of 0.986, 0.986, 0.983, 0.984, and 0.986, respectively. CNN performs the worst, with an accuracy score of only 0.908.

Table 5.

Classification results of different models in the Theta band.

- (3)

- Alpha1 Frequency Band Model Comparison Results

Table 6 shows the classification results of the four models in the Alpha1 frequency band. Table 6 shows that DU-former outperforms the other three models in all metrics, with accuracy, precision, recall, F1, and AUC values of 0.852, 0.819, 0.838, 0.827, and 0.828, respectively. CNN performed the worst, with an accuracy of only 0.758.

Table 6.

Classification results of four models in the Alpha1 frequency band.

- (4)

- Alpha2 Band Model Comparison Results

Table 7 shows the classification results of the four models in the Alpha2 frequency band. As shown in Table 7, the DU-former model outperforms the other models overall, with accuracy, precision, recall, F1, and AUC scores of 0.850, 0.833, 0.809, 0.820, and 0.817, respectively. In contrast, CNN yields the worst performance, with accuracy, precision, recall, F1, and AUC scores of 0.800, 0.804, 0.787, 0.795, and 0.788, respectively.

Table 7.

Classification results of different models in the Alpha2 band.

- (5)

- Beta1 Band Model Comparison Results

Table 8 shows the classification results of the four models in the Beta1 frequency band. As seen from Table 8, the DU-former model achieves the best performance, with accuracy, precision, recall, F1, and AUC scores of 0.908, 0.909, 0.872, 0.889, and 0.882, respectively. CNN performs the worst, with an accuracy score of only 0.826.

Table 8.

Classification results of different models in the Beta1 band.

- (6)

- Beta2 Band Model Comparison Results

Table 9 shows the classification results of the four models in the Beta2 frequency band. According to Table 9, the DU-former model performs the best, with accuracy, precision, recall, F1, and AUC scores of 0.928, 0.921, 0.909, 0.914, and 0.936, respectively. CNN performs the worst, with an accuracy score of only 0.886.

Table 9.

Classification results of different models in the Beta2 band.

- (7)

- Gamma Band Model Comparison Results

Table 10 presents the classification results of the four models in the Gamma frequency band. As indicated in Table 10, the DU-former model performs the best, with accuracy, precision, recall, F1, and AUC scores of 0.975, 0.954, 0.989, 0.971, and 0.977, respectively. CNN performs the worst, with accuracy, precision, recall, F1, and AUC scores of 0.817, 0.748, 0.834, 0.789, and 0.820, respectively.

Table 10.

Classification results of different models in the Gamma band.

5.2.3. Ablation Study Results

This paper conducted ablation experiments to analyze the impact of various modules on the performance of the DU-former model in classifying EEG signals across different frequency bands. The results shown in Table 11 demonstrate that the exclusion of key modules, such as the SMHSA and the reparameterization module, significantly degrades the model’s classification performance.

Table 11.

Classification performance of DU-former with excluded modules across frequency bands.

In the case of the Alpha1 band, the model’s accuracy dropped from 0.852 to 0.536 when the SMHSA module was excluded, showing a stark decline in the model’s ability to capture critical temporal and spatial dependencies. This sharp reduction highlights the importance of the self-attention mechanism in learning relevant features from the EEG signals. In contrast, excluding the reparameterization module led to a less severe decline, with accuracy dropping to 0.780. This indicates that while the reparameterization module plays a critical role in handling uncertainty and improving model stability, the absence of SMHSA has a more pronounced impact on performance.

Similar trends were observed in other frequency bands such as Alpha2, Beta1, and Beta2. For instance, when the SMHSA module was excluded, the accuracy decreased substantially in the Alpha2 band from 0.850 to 0.555, and in the Beta2 band from 0.928 to 0.582. This consistent degradation underscores the pivotal role of the self-attention mechanism in maintaining classification performance across various EEG frequency bands.

Compared to the removal of the SMHSA module, the removal of the reparameterization module generally caused smaller reductions in performance, as seen in the Beta1 band, where accuracy dropped from 0.908 to 0.860, and in the Gamma band, where accuracy decreased from 0.975 to 0.971.

The results of these ablation studies reinforce the significance of both the SMHSA and reparameterization modules in the DU-former model. The SMHSA module is essential for efficiently learning the complex relationships within the data, while the reparameterization module helps the model handle data uncertainty and noise effectively. Thus, removing either module results in a notable drop in classification accuracy, particularly when the SMHSA module is excluded. These findings confirm that the DU-former’s architecture is highly sensitive to the presence of these modules, further emphasizing their integral role in enhancing the model’s robustness and performance.

6. Discussion

With the in-depth research on episodic memory, classification models for EEG signals related to episodic memory have gradually gained attention. However, due to the relatively small number of models designed explicitly for episodic memory and the fact that some existing models have not made their source code publicly available, it is impossible to thoroughly compare the classification performance of various models on episodic memory datasets. Existing research primarily uses machine learning methods to classify EEG signals for episodic memory. For example, Soroush et al. conducted a comparative study of four models—LASSO, Naive Bayes, SVM, and logistic regression [28]. The paper showed that the LASSO model performed best in classification accuracy, achieving an accuracy of 72%, while Naive Bayes had an advantage in classification speed, processing at 2.3 s per instance. Damir et al. explored the application of SVM, CNN2D, and EEGNet models in episodic memory EEG signals [29]. Their results indicated that while CNNs required longer training times, they excelled in accuracy. The 1D Convolutional Neural Network applied to raw data achieved a classification accuracy of 82%. In contrast, the SVM algorithm had slightly lower classification accuracy but was faster [28].

Despite the existing classification models achieving specific classification outcomes, there is still a trade-off between feature extraction and classification performance. The DU-former model proposed in this paper demonstrates significant advantages in episodic memory EEG signal classification by combining convolutional modules, Transformer modules, and data uncertainty learning. The experimental results show that the classification performance for high-frequency bands outperforms low-frequency bands. This phenomenon suggests that as the EEG signal frequency band increases, the model can better capture features related to episodic memory. This finding is consistent with traditional EEG signal analysis methods and provides a new perspective for EEG signal classification in episodic memory.

Further comparison with other existing models further validates the superiority of the DU-former model. For example, the intracranial EEG classification model based on CNN and Transformer proposed by Yu et al. [30] uses CNN to extract local features, similar to the proposed model’s convolutional module design. The study also showed that introducing spatiotemporal convolutions effectively enhanced the performance of the Transformer in EEG signal classification. In contrast, DU-former improved the model’s classification accuracy and robustness by integrating convolutional modules, Transformer encoder modules, and data uncertainty learning.

Additionally, the work by Deng et al. [23], which uses data uncertainty learning in epilepsy EEG prediction, inspired this paper. Through Gaussian reparameterization, the DU-former model achieved data uncertainty learning. The training results demonstrated that the model maintained low uncertainty across all frequency bands, thereby enhancing the credibility of the classification results. This approach also significantly improved the model’s robustness, particularly in handling high-noise and complex signals, where the model showed good stability and interpretability. To further evaluate uncertainty modeling strategies, this paper implemented a Bayesian CNN using the same backbone as the CNN model used in Section 5.2. However, the Bayesian CNN achieved only around 50% classification accuracy in each frequency band, which was significantly lower than that of DU-former. This result suggests that the proposed Gaussian reparameterization-based uncertainty learning provides a more stable and effective approach for EEG classification.

Furthermore, the impact of EEG signal uncertainty on classification performance is evident in the variability of feature representations across different cognitive states. Previous research on wayfinding uncertainty suggests that neural uncertainty can introduce ambiguity in classification tasks, leading to reduced model confidence and increased misclassification rates. The DU-former model addresses this challenge by leveraging Gaussian reparameterization to represent uncertainty states, allowing the model to differentiate between stable and uncertain neural patterns. As shown in our experimental results, the inclusion of uncertainty modeling significantly enhances classification accuracy, particularly in EEG frequency bands with higher signal variability, such as the Beta and Gamma bands. This demonstrates that explicitly accounting for cognitive uncertainty in EEG signals contributes to more reliable and interpretable classification outcomes.

While this study primarily focuses on the impact of training on spatial information encoding in episodic memory, other cognitive factors—such as sleep [31], time perception [32], and statistical learning [33]—were not explicitly considered. Specifically, sleep has been shown to facilitate memory consolidation through characteristic EEG patterns during slow-wave and REM stages [31]; time perception, influenced by attention and cognitive load, modulates EEG dynamics during memory tasks [32]; and statistical learning may give rise to predictable EEG patterns that affect classification accuracy [33].

Our VR-based training and testing paradigm was designed to emphasize spatial navigation and object–location associations, which are well-established components of episodic memory. Prior research has highlighted the involvement of the hippocampus and parietal regions in spatial memory representation, supporting our spatially oriented approach [34]. Nevertheless, future work may benefit from integrating these additional cognitive factors to further enhance the robustness and generalizability of EEG classification models.

Although these aspects fall beyond the scope of our current experimental design, they offer promising directions for extending DU-former. Future studies could explore how integrating sleep EEG, temporal modeling, and statistical regularities can improve the robustness and interpretability of memory-related EEG classification.

7. Conclusions

This paper proposes a virtual reality-based episodic memory training and assessment system combined with the DU-former model for EEG signal classification analysis. The experimental results indicate that episodic memory training significantly improved the memory abilities of participants in the experimental group, especially in tasks involving object recognition and recall.

The DU-former model successfully enhanced the classification accuracy and robustness of episodic memory EEG signals by incorporating data uncertainty learning and Gaussian reparameterization. Compared to traditional methods, the model outperformed classification accuracy across all frequency bands and showed high stability and interpretability in handling complex signals.

This research provides an innovative solution for episodic memory training and EEG signal assessment. Future studies can further optimize the algorithm’s real-time performance and personalized training strategies, advancing application development in this field.

This study focuses on spatial aspects of episodic memory through a VR-based design, aligning with established findings on spatial encoding. While factors like sleep, time perception, and statistical learning may also affect EEG patterns, they were beyond our current scope. Future work may incorporate these variables to further enhance model generalization and interpretability.

Author Contributions

Conceptualization, D.W.; Funding Acquisition, X.W. and D.W.; Investigation, Z.L.; Methodology, X.W. and D.W.; Project Administration, X.W. and D.W.; Supervision, W.Z.W.H. and D.W.; Validation, Y.Y.; Visualization, Z.L. and Y.Y.; Writing—Original Draft, X.W. and Z.L.; Writing—Review and Editing, X.W., Z.L., Y.Y., T.L., D.D., X.X., and W.Z.W.H. All authors have read and agreed to the published version of the manuscript.

Funding

This research was funded in part by the Natural Science Foundation of China (62206014 and 62276022), and in part by the National Key Research and Development Program of China (2023YFF1203702).

Institutional Review Board Statement

The study was conducted in accordance with the Declaration of Helsinki and approved by the Ethics Committee of the First People’s Hospital of Qinhuangdao, Hebei Province (protocol code 2018B006 and date of approval is 6 March 2018).

Informed Consent Statement

Written informed consent was obtained from the patients to publish this paper.

Data Availability Statement

The datasets presented in this article are not readily available due to restrictions related to ongoing analyses and further research development. For any inquiries, please contact the corresponding author.

Conflicts of Interest

The authors declare no potential conflicts of interest with respect to the research, authorship, and publication of this article.

References

- Tulving, E. Episodic memory: From mind to brain. Annu. Rev. Psychol. 2002, 53, 1–25. [Google Scholar] [CrossRef] [PubMed]

- Ashford, J.W.; Gere, E.; Bayley, P.J. Measuring Memory in Large Group Settings Using a Continuous Recognition Test. J. Alzheimers Dis. 2011, 27, 885–895. [Google Scholar] [CrossRef] [PubMed]

- Green, C.S.; Bavelier, D. Exercising Your Brain: A Review of Human Brain Plasticity and Training-Induced Learning. Psychol. Aging 2008, 23, 692–701. [Google Scholar] [CrossRef] [PubMed]

- Salthouse, T.A.; Somberg, B.L. Skilled performance: Effects of adult age and experience on elementary processes. J. Exp. Psychol. Gen. 1982, 111, 176. [Google Scholar] [CrossRef]

- Jebara, N.; Orriols, E.; Zaoui, M.; Berthoz, A.; Piolino, P. Effects of enactment in episodic memory: A pilot virtual reality study with young and elderly adults. Front. Aging Neurosci. 2014, 6, 338. [Google Scholar] [CrossRef]

- Sauzeon, H.; N’Kaoua, B.; Pala, P.A.; Taillade, M.; Guitton, P. Age and active navigation effects on episodic memory: A virtual reality study. Br. J. Psychol. 2016, 107, 72–94. [Google Scholar] [CrossRef]

- Dunlosky, J.; Kubat-Silman, A.K.; Hertzog, C. Training monitoring skills improves older adults’ self-paced associative learning. Psychol. Aging 2003, 18, 340–345. [Google Scholar] [CrossRef]

- Rebok, G.W.; Carlson, M.C.; Langbaurn, J.B.S. Training and maintaining memory abilities in healthy older adults: Traditional and novel approaches. J. Gerontol. Ser. B-Psychol. Sci. Soc. Sci. 2007, 62, 53–61. [Google Scholar] [CrossRef]

- Verhaeghen, P.; Marcoen, A.; Goossens, L. improving memory performance in the aged through mnemonic training—A meta-analytic study. Psychol. Aging 1992, 7, 242–251. [Google Scholar] [CrossRef]

- Vicario, C.M.; Martino, G. Psychology and technology: How Virtual Reality can boost psychotherapy and neurorehabilitation. AIMS Neurosci. 2022, 9, 454–459. [Google Scholar] [CrossRef]

- Burgess, N.; Maguire, E.A.; Spiers, H.J.; O’Keefe, J. A temporoparietal and prefrontal network for retrieving the spatial context of lifelike events. Neuroimage 2001, 14, 439–453. [Google Scholar]

- Li, Q.; Shi, K.; Gao, N.; Li, J.; Bai, O. Training set extension for SVM ensemble in P300-speller with familiar face paradigm. Technol. Health Care 2018, 26, 469–482. [Google Scholar] [CrossRef]

- Nicolaou, N.; Georgiou, J. Detection of epileptic electroencephalogram based on Permutation Entropy and Support Vector Machines. Expert Syst. Appl. 2012, 39, 202–209. [Google Scholar] [CrossRef]

- Nkengfack, L.C.D.; Tchiotsop, D.; Atangana, R.; Louis-Door, V.; Wolf, D. EEG signals analysis for epileptic seizures detection using polynomial transforms, linear discriminant analysis and support vector machines. Biomed. Signal Process. Control. 2020, 62, 102141. [Google Scholar] [CrossRef]

- Vidaurre, C.; Kawanabe, M.; von Buenau, P.; Blankertz, B.; Mueller, K.R. Toward Unsupervised Adaptation of LDA for Brain-Computer Interfaces. IEEE Trans. Biomed. Eng. 2011, 58, 587–597. [Google Scholar] [CrossRef] [PubMed]

- Orhan, U.; Hekim, M.; Ozer, M. EEG signals classification using the K-means clustering and a multilayer perceptron neural network model. Expert Syst. Appl. 2011, 38, 13475–13481. [Google Scholar] [CrossRef]

- Polat, K.; Gunes, S. A novel data reduction method: Distance based data reduction and its application to classification of epileptiform EEG signals. Appl. Math. Comput. 2008, 200, 10–27. [Google Scholar] [CrossRef]

- Potapov, P. On the loss of information in PCA of spectrum-images. Ultramicroscopy 2017, 182, 191–194. [Google Scholar] [CrossRef]

- Jia, Z.; Lin, Y.; Wang, J.; Yang, K.; Liu, T.; Zhang, X. MMCNN: A Multi-branch Multi-scale Convolutional Neural Network for Motor Imagery Classification. In Proceedings of the European Conference on Machine Learning and Principles and Practice of Knowledge Discovery in Databases (ECML PKDD), Electr Network, Grenoble, France, 14–18 September 2021; pp. 736–751. [Google Scholar]

- Luo, T.-j.; Zhou, C.-l.; Chao, F. Exploring spatial-frequency-sequential relationships for motor imagery classification with recurrent neural network. BMC Bioinform. 2018, 19, 344. [Google Scholar] [CrossRef]

- Wang, P.; Jiang, A.; Liu, X.; Shang, J.; Zhang, L. LSTM-Based EEG Classification in Motor Imagery Tasks. IEEE Trans. Neural Syst. Rehabil. Eng. 2018, 26, 2086–2095. [Google Scholar] [CrossRef]

- Deng, Z.; Li, C.; Song, R.; Liu, X.; Qian, R.; Chen, X. Centroid-Guided Domain Incremental Learning for EEG-Based Seizure Prediction. IEEE Trans. Instrum. Meas. 2024, 73, 2500613. [Google Scholar] [CrossRef]

- Deng, Z.W.; Li, C.; Song, R.C.; Liu, X.; Qian, R.B.; Chen, X. EEG-based seizure prediction via hybrid vision transformer and data uncertainty learning. Eng. Appl. Artif. Intell. 2023, 123, 106401. [Google Scholar] [CrossRef]

- Chang, J.; Lan, Z.; Cheng, C.; Wei, Y.; IEEE. Data Uncertainty Learning in Face Recognition. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Electr Network, Seattle, WA, USA, 14–19 June 2020; pp. 5709–5718. [Google Scholar]

- Fajnerova, I.; Oravcova, I.; Plechata, A.; Hejtmanek, L.; Sahula, V.; Vlcek, K.; Nekovarova, T.; IEEE. The Virtual Episodic Memory Task: Towards remediation in neuropsychiatric disorders. In Proceedings of the International Conference on Virtual Rehabilitation (ICVR), Montreal, BC, Canada, 19–22 June 2017. [Google Scholar]

- Corriveau Lecavalier, N.; Ouellet, E.; Boller, B.; Belleville, S. Use of immersive virtual reality to assess episodic memory: A validation study in older adults. Neuropsychol. Rehabil. 2020, 30, 462–480. [Google Scholar] [CrossRef] [PubMed]

- Parsons, T.D.; Barnett, M. Validity of a Newly Developed Measure of Memory: Feasibility Study of the Virtual Environment Grocery Store. J. Alzheimers Dis. 2017, 59, 1227–1235. [Google Scholar] [CrossRef]

- Mirjalili, S.; Powell, P.; Strunk, J.; James, T.; Duarte, A. Evaluation of classification approaches for distinguishing brain states predictive of episodic memory performance from electroencephalography. Abbreviated Title: Evaluating methods of classifying memory states from EEG. Neuroimage 2022, 247, 118851. [Google Scholar] [CrossRef]

- Basic Knezevic, D.; Heimerson, A. Statistical and machine learning methods for classification of episodic memory. In Master’s Theses in Mathematical Sciences; 2018; Available online: https://lup.lub.lu.se/student-papers/search/publication/8949940 (accessed on 26 March 2025).

- Yu, M.; Zhang, Y.; Liu, H.; Wu, X.; Du, M.; Liu, X. IEEG-CT: A CNN and Transformer Based Method for Intracranial EEG Signal Classification. In Proceedings of the 30th International Conference on Neural Information Processing (ICONIP) of the Asia-Pacific-Neural-Network-Society (APNNS), Changsha, China, 20–23 November 2023; pp. 549–563. [Google Scholar]

- Brodt, S.; Inostroza, M.; Niethard, N.; Born, J. Sleep—A brain-state serving systems memory consolidation. Neuron 2023, 111, 1050–1075. [Google Scholar]

- Fountas, Z.; Sylaidi, A.; Nikiforou, K.; Seth, A.K.; Shanahan, M.; Roseboom, W. A Predictive Processing Model of Episodic Memory and Time Perception. Neural Comput. 2022, 34, 1501–1544. [Google Scholar] [CrossRef]

- Forest, T.A.; McCormick, S.A.; Davel, L.; Mlandu, N.; Zieff, M.R.; Amso, D.; Donald, K.A.; Gabard-Durnam, L.J.; Khula South Africa Data Collection, T. Early Caregiver Predictability Shapes Neural Indices of Statistical Learning Later in Infancy. Developmental Science 2024, 28, e13570. [Google Scholar] [CrossRef]

- Torres-Morales, C.; Cansino, S. Brain representations of space and time in episodic memory: A systematic review and meta-analysis. Cogn. Affect. Behav. Neurosci. 2024, 24, 1–18. [Google Scholar] [CrossRef]

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content. |

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).