1. Introduction

The honey bee (Apis mellifera) is a species native to Africa, Europe, and western Asia; its management has contributed to the presence of this species in all continents except Antarctica and some oceanic islands [

1]. They are vital as pollinators, visiting more than 90% of the world’s principal crops [

2]. They also generate essential products such as honey, pollen, propolis, and royal jelly, producing jobs mainly in rural areas [

3]. Hence, reducing their population could affect the production and quality of food whose crops depend on insect pollination [

4]. Despite its relevance, a decline in bee diversity has been recorded due to climate change, pesticide use, and loss of natural habitats [

5]. In addition, diseases associated with fungi, bacteria, viruses, and invertebrate parasites threaten the health of bees [

2]. These diseases tend to spread to nearby bee populations due to commercial management, mass breeding, transport, trade, and physical contact between bees, especially during harvesting, representing a problem for conserving wild bee species.

The worldwide spread of the ectoparasitic mite Varroa destructor is the main factor behind the increase in viral infections. Furthermore, the mite inoculates viruses into larvae and adults, causing the death of hives [

6]. A decrease in production of

is estimated in a swarm of domestic bees infested with Varroa, which causes economic losses due to sanitary treatments, the repopulation of the packs, the treatment of secondary diseases, and labor [

7]. Currently, different chemicals and application methods keep the mite population under control. However, to achieve an optimal effect, these control methods must consider the climate, the conditions inside the hive, and the mode of application [

6].

Nowadays, diagnostic tools are an essential component of integrated pest management. Monitoring the level of infestation requires washing, separating, and counting the number of bees and mites. Therefore, proposals have been made to evaluate and monitor different factors that allow the health of bee colonies to be tracked with minimal human interaction through computer vision systems. For example, Rodríguez et al. [

8] detect pollen by analyzing the hive entrance, comparing images of pollen-bearing and non-pollen-bearing bees with different types of neural networks. Sledevič [

9] presents image classification with pollen-bearing bees using a convolutional neural network. Marstaller et al. [

10] propose DeepBees, which is a non-invasive system to monitor hives using computer vision based on a Raspberry Pi by transferring cropped image sequences of each insect for pollen detection and bee pose.

On the other hand, the work presented by Tiwari [

11] uses deep learning to recognize bees in videos and monitor the behavior of the colonies through traffic analysis; this variable is essential to observe the availability and demand for food, the age structure of the colony, and the impact of pesticides. Rodríguez et al. [

12] develop a system for detecting, locating, and tracking bee body parts from a video at the entrance ramp of the colony. Tashakkori et al. [

13] implement a data collection and monitoring system called Beemon that captures sensor data (temperature, humidity, and weight) and sends video and audio recordings, as well as input to hives, for analysis and research.

Regarding pest detection, Bjerge et al. [

14] describe a portable computer vision system that performs infection detection and automatic counting of bees to determine the level of infestation and minimize damage to the colony. This system uses a multispectral camera and illumination with blue, red, and infrared LEDs to facilitate Varroa separation using machine vision algorithms based on deep learning. Sevin et al. [

15] develop the Var-Gor system using bee passage tunnels, an autofocus detection camera, and a solar-panel-generated power supply to detect Varroa mites. Bilik et al. [

16] present an object-detector-based method for monitoring the health status of bee colonies with a dataset of 600 images of healthy and infested bees in various scenes, and the detectors reach 87% in the detection of infested bees and up to 71% in the detection of the Varroa destructor mite itself. Schurischuster and Kampel [

17] classify bees into two classes, healthy and infested with the parasitic mite Varroa destructor, through images acquired at the hive’s entrance. They compare two classification methods based on AlexNet and ResNet and a semantic segmentation approach using DeepLabV3, with the latter achieving a classification of 90.8% with an F1 score of 95%.

The mentioned works use extensive databases because deep learning models require a large amount of information for their training and usually use data augmentation to improve their results [

14,

16]. In addition, these systems have trouble classifying Varroa correctly because of the bee’s orientation when the image is acquired and because the characteristic color of Varroa is confused with parts of the bee. Therefore, new strategies are required to deal with this type of problem. In this context, circular moments are a novel tool to represent information with minimal redundancy and invariance to geometric transformations [

18], which allows the classification of images with reduced databases without requiring data augmentation or image preprocessing.

Recently, the multichannel approach emerged as a new proposal to extract features from RGB images, showing higher efficiency than quaternion moments. Singh and Singh [

19] present the orthogonal multichannel moments based on Zernike moments (ZMs). Similarly, Hosny and Darwish [

20] present a new set of Chebyshev–Fourier multichannel moments (MMCFs), introducing new sets of multichannel moments [

21,

22,

23,

24,

25]. Furthermore, the moments distinguish the characteristic color of objects in images with a reduced number of descriptors [

19].

There is a large number of families of moments capable of characterizing images; however, studies have shown that Legendre–Fourier moments perform well with respect to other moments [

26,

27,

28,

29]. On the other hand, using different color models to analyze different image features is common. For example, Bolappa Gamage et al. [

30] evaluate the nutritional status of strawberry crop leaves using the RGB color model; this same color model is also used by Malgorzata et al. [

31] to analyze the health status of horses after exercise. Wan Yuanbin et al. [

32] perform image segmentation in RGB and YCbCr color models for forest fire image recognition. The works presented to identify the Varroa mite describe problems differentiating between the mite and the bee’s eye due to the color similarity [

16]. In addition, as they are living beings, it is difficult to keep the bee’s capture in a single position [

33], so descriptors that are robust to changes in position and orientation are required.

In this research work, an alternative method is proposed to classify bee images using Legendre–Fourier multichannel moments. The descriptors used have relevant qualities that allow the representation of digital images with a minimum number of descriptors; this characteristic is beneficial in cases where images of living beings that do not keep a static position are analyzed. The multichannel moments provide the information of each channel of the color space necessary to carry out the classification with greater accuracy and a lower computational cost. The proposed algorithm is also used with different color models to evaluate its effectiveness.

Deep-learning methods such as semantic segmentation and object detection have shown high performance in numerous computer vision challenges. Despite this performance, they have limitations, especially regarding computational demand and the need for large volumes of data. Our study addresses these problems using Legendre–Fourier multichannel moments, providing an alternative for handling images of living beings in various positions with fewer descriptors and less computational cost. One of the innovative approaches in object detection is provided by Li et al. [

34], who explore using single-vision transformers for object detection, demonstrating performance comparable to convolutional networks. In addition, Wang et al. [

35] present a new state-of-the-art real-time object detector, further improving the precision and speed of object detection in images. Although these methods are highly effective, they rely on complex network structures and large amounts of training data, which can be challenging in resource-constrained environments or where the data volume is insufficient. Improving image quality can be crucial to effective object detection, as shown by Liu et al. [

36]. This study uses an image enhancement guide to improve object detection in visually degraded scenes. However, this approach could be problematic since the image enhancement could alter the color characteristics critical for detecting the Varroa destructor. On the other hand, Su et al. [

37] introduce an efficient method of detecting objects without anchors, which could be relevant to our study. However, this method still requires a lot of training data, which can be problematic. Regarding semantic segmentation, Strudel et al. [

38] and Zhang et al. [

39] present efficient transformers for semantic segmentation. These approaches achieve high performance in segmentation tasks, but as with the method mentioned above, these detection methods require large amounts of training data and significant computational resources. Finally, Yan et al. [

40] introduce an approach to domain adaptation that could be relevant in our case if the training and test data came from different domains. However, this method may not be necessary if the training and test data are consistent regarding image quality and shooting conditions. Therefore, although these methods have shown significant performance in their respective fields, their limitations may make them less suitable for our Varroa destructor classification task. The proposal, based on Legendre–Fourier multichannel moments, seeks to address these limitations by providing an alternative that can handle images of living beings in various positions with fewer descriptors and less computational cost. The present approach can give results comparable or even superior to those obtained with deep-learning-based methods that classify Varroa destructor using semantic segmentation [

17] and object detection [

16], with the added advantages of lower data demand and computational cost.

The document is organized as follows.

Section 2 briefly describes the database to be used, the definition of multichannel Legendre–Fourier moments, and the different test color spaces.

Section 3 presents the strategy to improve the classification of the Varroa mite in bees.

Section 4 shows the results and discusses this work’s advantages compared to current techniques. Finally, we provide the conclusions of the work carried out.

3. Multichannel Legendre–Fourier Moments for the Varroa Detection

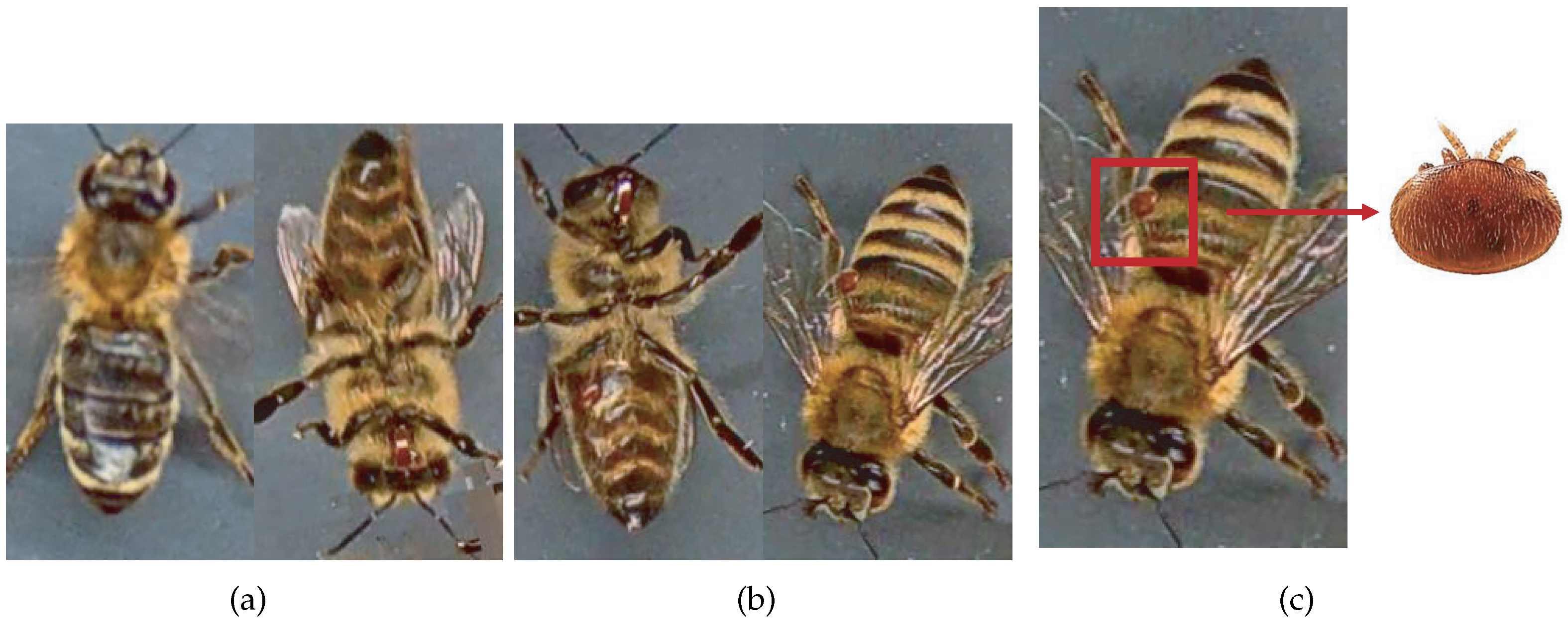

Because moments are naturally invariant to rotation and scale and are robust to noise, they eliminate the need for data augmentation or data reduction preprocessing. However, the dataset images are not acquired under static conditions: the bees and mites move actively, so they appear in different positions. The database contains images of the dorsal and ventral sides. Furthermore, both classes include information about the color and shape of the bee’s legs, wings, eyes, and mouth.

Table 3 shows examples of images according to this classification.

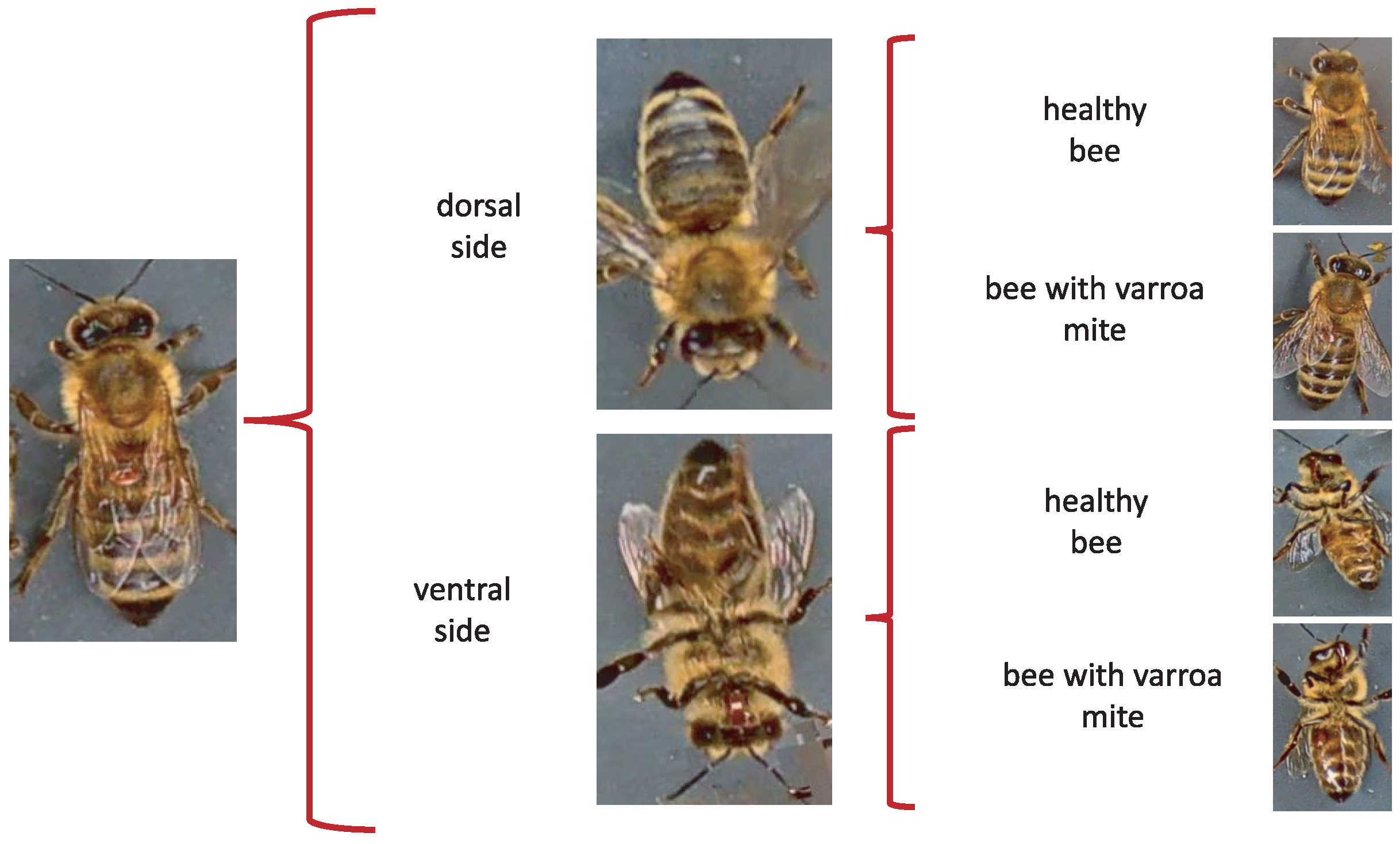

When evaluating different approaches to detect parasites in bees, we considered re-splitting the database to obtain four classes of 500 images each. The new sets respect the original labels of the database through a manual classification separating the images into four categories: bee dorsal side healthy, bee dorsal side with Varroa, bee ventral side healthy, and bee ventral side with Varroa.

The proposal consists of first determining the orientation of the bee (dorsal or ventral side), regardless of whether it is infested, and then identifying the presence of the Varroa mite.

Figure 2 shows the two stages of Varroa identification. First, the position is identified; subsequently, the Varroa mite is detected.

We use MATLAB R2021b (MathWorks, Natick, MA, USA) and the classification learner application in the model implementation with standard parameters. The computer hardware includes an Intel Core i7-9750H processor (Intel Corporation, Santa Clara, CA, USA) and 16 GB RAM. Additionally, a GeForce GTX 1050 graphics card (Nvidia Corporation, Santa Clara, CA, USA) is incorporated to achieve GPU acceleration, significantly enhancing the training processes of specific models.

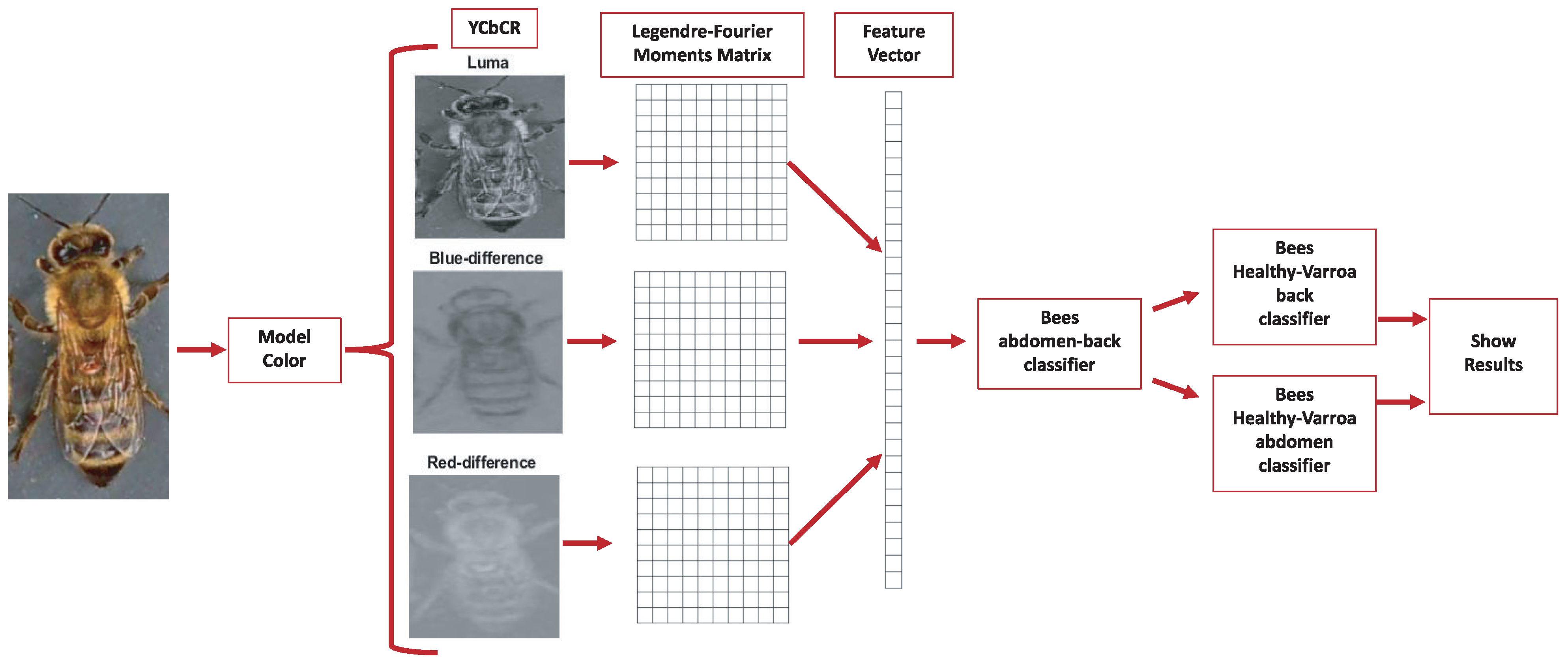

The multichannel moments are calculated from the databases under normal conditions without preprocessing, with order five in the RGB, HSV, and YCbCr color models. Consequently, 75 descriptors are obtained, i.e., 25 per color channel. In this context, “color channel” refers to each component of the color model. For example, in the RGB model, R (red), G (green), and B (blue) are each a color channel. For each channel, 25 descriptors are calculated, leading to 75 descriptors. Therefore, each channel’s individuality in a color space dictates the number of descriptors, not the color space. Layering this information by channel allows for a more detailed and granular analysis, maximizing the amount of information that can be extracted from each image. The analysis considers the classification accuracy using a k-fold cross-validation strategy with

. Furthermore, the study uses a held-out test set from the VarroaDataset [

17] to validate the robustness of our conclusions. This set was not used at any stage of training or cross-validation, thus ensuring its complete independence and allowing an unbiased assessment of the generalizability of our model. The final metrics we present in this paper, such as classification accuracy, are derived from this independent test set. This approach provides a more rigorous validation of the model, as it tests its ability to handle previously unseen data and ensures that the results are not inflated by overfitting during the training phase.
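The descriptor count stated above (25 per channel, 75 per image) can be illustrated with a minimal sketch. It assumes that the 25 descriptors of one channel correspond to a 5 × 5 grid of index pairs (n, m), which is one reading consistent with "order five"; the function names and the zero-valued placeholder descriptors are hypothetical and do not come from the paper.

```python
from itertools import product


def descriptor_indices(n_radial=5, n_angular=5):
    """Return the (n, m) index pairs for one color channel.

    Assumption: a 5 x 5 index grid, which yields the 25 descriptors
    per channel reported in the text.
    """
    return list(product(range(n_radial), range(n_angular)))


def feature_vector(per_channel):
    """Concatenate the per-channel descriptor lists into one vector."""
    vector = []
    for channel_descriptors in per_channel:
        vector.extend(channel_descriptors)
    return vector


channels = ("Y", "Cb", "Cr")  # any three-channel color model works the same way
indices = descriptor_indices()
# Placeholder values standing in for the real moment magnitudes.
per_channel = [[0.0] * len(indices) for _ in channels]
features = feature_vector(per_channel)

print(len(indices), len(features))
```

The total depends only on the number of channels and the number of index pairs, which is why RGB, HSV, and YCbCr all yield feature vectors of the same length.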

The proposal evaluates the performance of the descriptors in different color models to carry out the subclassification process. In the first stage, the features of the Legendre–Fourier moments are extracted for each channel of the selected color model.

Figure 3 shows an example of the color models used and their respective channels. The descriptors are obtained to enter the first classifier to detect the bee’s orientation (dorsal side or ventral side). According to the output received, the second classifier identifies the presence of the Varroa mite.

Figure 4 shows the proposed classification process for the YCbCr model.
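The two-stage decision described above can be sketched as a small cascade. This is only an illustration of the control flow, assuming each classifier is available as a callable that maps a feature vector to a label; the label strings and the toy stand-in classifiers are hypothetical, not the trained models used in this work.

```python
def classify_bee(features, orientation_clf, dorsal_clf, ventral_clf):
    """Run the two-stage cascade on one feature vector.

    Stage 1 decides the orientation; stage 2 uses the classifier
    trained for that orientation to decide healthy vs. Varroa.
    """
    side = orientation_clf(features)
    if side == "dorsal":
        status = dorsal_clf(features)
    elif side == "ventral":
        status = ventral_clf(features)
    else:
        raise ValueError(f"unexpected orientation label: {side!r}")
    return side, status


# Toy stand-ins (hypothetical threshold rules) so the sketch is runnable.
def toy_orientation(f):
    return "dorsal" if f[0] >= 0 else "ventral"


def toy_dorsal(f):
    return "varroa" if f[1] > 0.5 else "healthy"


def toy_ventral(f):
    return "varroa" if f[1] > 0.8 else "healthy"


print(classify_bee([1.0, 0.7], toy_orientation, toy_dorsal, toy_ventral))
print(classify_bee([-1.0, 0.7], toy_orientation, toy_dorsal, toy_ventral))
```

Routing each image to an orientation-specific second classifier lets that classifier learn only the variation within one view, which is the motivation given for subdividing the database.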

Model performance is commonly evaluated with the F1 score, defined as the harmonic mean of precision and recall, the two metrics most often used when classes are imbalanced. In addition, the confusion matrix (or error matrix) allows the model’s performance to be visualized. It makes it easy to check whether the system is confusing two classes, i.e., mislabeling one class as another. The accuracy and F1 score are obtained from the confusion matrix.

Table 4 shows the metrics used to evaluate the proposal.
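As a minimal sketch of how these metrics follow from a binary confusion matrix, the function below computes accuracy, precision, recall, and F1 from the four cell counts. The counts in the usage example are illustrative only and are not results from this study.

```python
def binary_metrics(tp, fp, fn, tn):
    """Compute accuracy, precision, recall, and F1 from confusion-matrix counts.

    tp/fp/fn/tn are the true-positive, false-positive, false-negative,
    and true-negative counts for the positive class (e.g., "Varroa").
    """
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total if total else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    # F1 is the harmonic mean of precision and recall.
    if precision + recall:
        f1 = 2 * precision * recall / (precision + recall)
    else:
        f1 = 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Illustrative counts only (not taken from the paper's tables).
metrics = binary_metrics(tp=90, fp=10, fn=5, tn=95)
print({name: round(value, 4) for name, value in metrics.items()})
```

Because F1 ignores true negatives, it can differ noticeably from accuracy when one class dominates, which is why both are reported.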

4. Results

The experiments explore the behavior of the MML-F as descriptors to classify the database with the subdivisions obtained. The first experiment organizes the database into two regular classes, healthy bees and bees infested with the Varroa parasite.

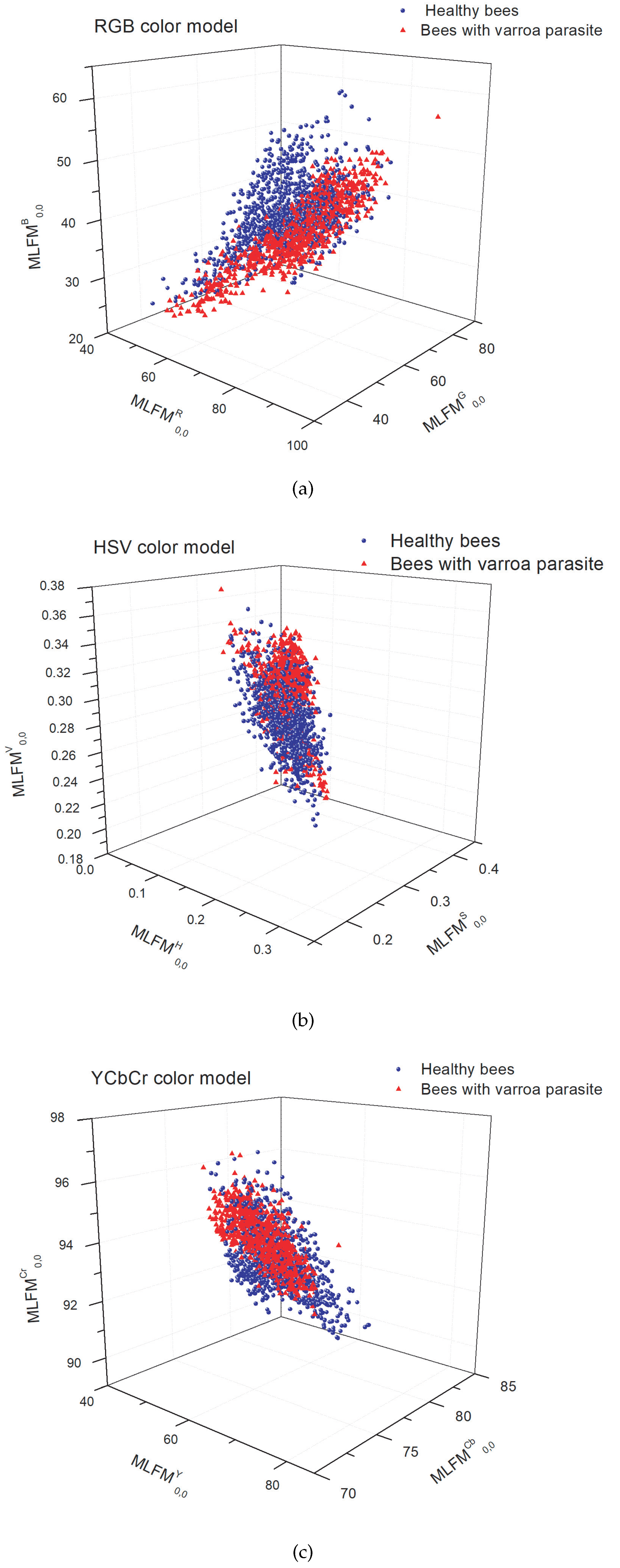

Figure 5 shows the scatter plot of the classification with the three moment-type descriptors in the RGB, HSV, and YCbCr color models. The plot shows an overlap between classes that makes classification difficult.

Table 5 shows an accuracy of

was reached in the YCbCr color model. However, since they are living beings, it is difficult to capture the image in a single position of the bee [

33]. Furthermore, other challenges are associated with distinguishing between the color of the mite and the bee’s eye. Therefore, it is crucial to consider the color information found on the bee’s dorsal and ventral sides [

16]. Furthermore, it is important to note that mites often conceal themselves under adult bees’ sternites, adding a layer of complexity to their detection [

6].

Using moment-type descriptors provides valuable information regarding shape and color characteristics. Therefore, the strategic subdivisions implemented in the database play a crucial role in distinguishing between various bee parts, such as the tongue, and the Varroa mite, even when they exhibit similar color patterns.

The following classification exercises consider the above issues to help determine the bee’s position and the Varroa parasite’s location on the host. The proposal uses the subdivision to perform the classification exercise. First, using 2000 images from the database, the classification determines the bee’s position, with the abdomen in a ventral or dorsal orientation. In the second part of the table, the accuracy in the classification achieved is 99.09% in the YCbCr color model.

The next step determines the presence of the Varroa parasite. Training is performed using data sets of bees, distinguishing between those with a healthy dorsal side and an infested dorsal side, and between those with a healthy ventral side and those with Varroa on the ventral side. The accuracy increases with respect to the first exercise and reaches 97.70% and 97.34%, respectively.

We use the SVM classifier with a cubic kernel because it gave good results with moment descriptors in [

47]. Furthermore, we perform the cross-validation with

to validate the proposed model in the different color models.

Table 5 shows the values obtained for the different metrics in each classification exercise.
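The two ingredients mentioned above, a cubic kernel and k-fold cross-validation, can be sketched in a few lines. This is an illustration under stated assumptions rather than the configuration used here: the kernel form (gamma * x·y + coef0)^3 with gamma = coef0 = 1 is the usual degree-3 polynomial kernel, and the value k = 4 in the usage example is a placeholder, since the number of folds is not given in this text.

```python
import random


def cubic_kernel(x, y, gamma=1.0, coef0=1.0):
    """Degree-3 polynomial kernel: (gamma * <x, y> + coef0) ** 3.

    gamma and coef0 are assumed defaults, not values from the paper.
    """
    dot = sum(a * b for a, b in zip(x, y))
    return (gamma * dot + coef0) ** 3


def k_fold_indices(n_samples, k, seed=0):
    """Yield (train, test) index lists for k-fold cross-validation."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Fold i takes every k-th shuffled index starting at position i.
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        test = folds[i]
        train = [j for fi, fold in enumerate(folds) if fi != i for j in fold]
        yield train, test


# Placeholder fold count; the paper's k is not stated in this text.
splits = list(k_fold_indices(n_samples=20, k=4))
for train, test in splits:
    print(len(train), len(test))

print(cubic_kernel([1.0, 2.0], [3.0, 4.0]))
```

Each sample appears in exactly one test fold, so every image contributes once to the validation estimate.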

Experiments were also performed with multichannel moments of higher orders; however, even though a higher order represents the images more accurately, it does not improve the results. In addition, the feature vector is larger, which increases computation time and introduces numerical instability in some cases. Lower-order multichannel moments provide both shape and color information; therefore, they are sufficient for high classification accuracy.

Finally, all the exercises performed are used to train the classification models of the proposed algorithm for the RGB, HSV, and YCbCr color models to identify the color space that provides the most information when extracting features with the Legendre–Fourier multichannel moments. The double classification carried out with the subdivision of the database allows specific features to be extracted during training, increasing the recognition rate of the parasite in bees.

The VarroaDataset has also been analyzed with different deep learning algorithms to identify Varroa in bees using semantic segmentation [

17] and object detection models [

16].

Table 6 shows the results obtained with different classification proposals and the proposed method.

5. Discussion

The results show an advantage for multichannel moments in the YCbCr color space over the other color spaces and over deep learning algorithms. The Varroa color group is more compact in YCbCr than in the other color spaces. Furthermore, it shows only a minor overlap between the Varroa and honey bee data under various lighting conditions. Y denotes the luminance component, and Cb and Cr represent the chrominance components. In particular, the Cr component highlights the characteristic red color of Varroa, thus facilitating the classification.
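The role of the Cr component can be illustrated with a standard RGB-to-YCbCr conversion. The sketch below uses the full-range BT.601 coefficients from the JPEG/JFIF convention; this is an assumption, since the exact conversion and scaling used in this work are not stated, and the pixel values are hypothetical examples rather than measurements from the dataset.

```python
def rgb_to_ycbcr(r, g, b):
    """Convert 8-bit RGB to full-range YCbCr (BT.601, JPEG/JFIF convention).

    Assumption: this variant is used for illustration only; other tools
    may apply a limited-range scaling instead.
    """
    y = 0.299 * r + 0.587 * g + 0.114 * b
    cb = 128 - 0.168736 * r - 0.331264 * g + 0.5 * b
    cr = 128 + 0.5 * r - 0.418688 * g - 0.081312 * b
    return y, cb, cr


# Hypothetical pixel colors, not sampled from the VarroaDataset.
pixels = {
    "reddish-brown": (150, 60, 40),
    "yellow-brown": (180, 140, 60),
    "neutral gray": (128, 128, 128),
}
for name, rgb in pixels.items():
    y, cb, cr = rgb_to_ycbcr(*rgb)
    print(f"{name}: Y={y:.1f} Cb={cb:.1f} Cr={cr:.1f}")
```

Cr grows with the red content relative to green and blue and is centered at 128 for neutral colors, so reddish regions separate from the rest largely independently of brightness, which is carried by Y.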

Furthermore, the difference between the classification results with the YCbCr and RGB spaces arises because the former represents color as brightness and two color-difference signals, whereas the latter represents it as red, green, and blue components. Composing color from the primary colors limits the classification of the Varroa. In the HSV color space, color is separated into hue, saturation, and brightness, so it performs better than the RGB space. There, the hue component must highlight the Varroa, unlike the Cr component of the YCbCr space.

On the other hand, the inherent characteristics of moments, such as their natural invariance to rotation and scale, allow classification with relatively small databases, unlike deep neural networks. With more extensive databases and transfer learning, deep neural networks can overcome most difficulties, such as the constant mobility of bees that causes Varroa to be confused with body parts; in that setting, deep neural networks could easily outperform the proposal. However, with the current dataset, the proposal presented is an excellent option for early detection of the Varroa destructor mite.