Abstract

Most unmanned aerial vehicle (UAV) ground control station (GCS) solutions today are either web-based or native applications, and they are primarily designed to support a single UAV. In this paper, we present an open, universal framework intended for rapid prototyping, realized as a Web Real-Time Communication (WebRTC)-based multi-UAV monitoring and control system for applications such as automated power line inspection (APOLI). The APOLI project focuses on identifying damage and faults in power line insulators through real-time image processing, video streaming, and flight data monitoring. The implementation is divided into three main parts. First, we configure UAVs for hardware-accelerated streaming using the GStreamer framework on the NVIDIA Jetson Nano companion board. Second, we develop the server-side application to receive hardware-encoded video feeds from the UAVs by utilizing a WebRTC media server. Lastly, we develop a web application that facilitates communication between clients and the server, allowing users with different authorization levels to access video feeds and control the UAVs. The system supports three user types: pilot/admin, inspector, and customer. Our research aims to leverage the WebRTC media server framework to develop a web-based GCS solution capable of managing multiple UAVs with low latency. The proposed solution enables real-time video streaming and flight data collection from multiple UAVs to a server, where they are displayed in a web application interface hosted on the GCS. This approach ensures efficient inspection for applications like APOLI while prioritizing UAV safety during critical scenarios. Further advantages of the solution are its compatibility with platforms such as cloud services and native applications and the modularity of the plugin-based architecture offered by the Janus WebRTC server, which supports future development.

1. Introduction

In recent years, unmanned aerial vehicles (UAVs) have gained significant popularity due to their adaptability and capacity to perform diverse tasks. However, as the number of UAVs in use continues to grow, the need for efficient monitoring and control of large multi-UAV systems has become increasingly urgent [1,2]. Existing methods for monitoring and controlling multiple UAVs are often hindered by slow response times, limited scalability, and complex communication protocols [2,3].

At the Professorship of Computer Engineering at Chemnitz University of Technology, a key area of research is the development of autonomous inspection missions using UAVs, such as automated power line inspection (APOLI) [4,5], which is part of the Adaptive Research Multicopter Platform (AREIOM) [6]. The primary goal of the APOLI project is to assess damage to insulators, electric power poles, and transmission lines. The research focuses on creating new navigation and mission control concepts, utilizing vision-based flight control to detect inspection targets and unforeseen obstacles in real time. This is essential because conventional positioning and orientation sensors, such as the Global Positioning System (GPS), Inertial Measurement Unit (IMU), and compass, can be unreliable near high-voltage systems that emit strong electromagnetic radiation. The on-board mission control system must continuously monitor the flight path and safely maneuver the UAV around poles and along transmission lines. Furthermore, the system should be capable of performing on-board damage assessment of towers, cables, and insulators [4].

In inspection applications, the UAV’s onboard mission control system must continuously monitor its flight path and safely navigate around obstacles such as poles and transmission lines. Failures in system components or environmental factors, such as strong electromagnetic interference or high winds, can create significant challenges during missions. A centralized ground control station (GCS) provides operators with a comprehensive view of the UAV’s operational environment, including real-time telemetry, video feeds, and sensor data. This enables quick, informed decision making and enhances situational awareness, especially in complex scenarios involving multiple UAVs. Real-time monitoring and control are therefore essential, with the GCS playing a critical role in ensuring mission success. For example, in [7], a set of logic processing rules is defined to manage situations such as obstacle detection, low battery voltage, or loss of control signal, improving the flexibility of autonomous UAV inspections. When the UAV detects an obstacle either in front of it or within two meters above, its obstacle avoidance system halts the UAV and alerts the operator. At this point, control is transferred to the operator, who can decide whether to land the UAV or guide it over the obstacle. If the operator chooses to fly over, they must manually navigate the UAV to the next waypoint. Once the UAV reaches the waypoint, it checks its position for accuracy before continuing the inspection.
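The obstacle rule summarized from [7] can be sketched as a small decision function. This is only an illustration of the rule as described above, not the implementation in [7]: the `ObstacleReading` fields and the `Action` names are hypothetical, and treating "within two meters" as an inclusive bound is an assumption.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Action(Enum):
    CONTINUE_MISSION = "continue_mission"
    HALT_AND_ALERT_OPERATOR = "halt_and_alert_operator"


# "within two meters above", as summarized from [7]
OVERHEAD_CLEARANCE_M = 2.0


@dataclass
class ObstacleReading:
    # Hypothetical sensor summary; field names are illustrative only.
    obstacle_in_front: bool
    overhead_distance_m: Optional[float]  # None when nothing is detected above


def decide(reading: ObstacleReading) -> Action:
    """Halt and hand control to the operator when an obstacle blocks the path."""
    overhead_blocked = (
        reading.overhead_distance_m is not None
        and reading.overhead_distance_m <= OVERHEAD_CLEARANCE_M
    )
    if reading.obstacle_in_front or overhead_blocked:
        return Action.HALT_AND_ALERT_OPERATOR
    return Action.CONTINUE_MISSION
```

After a halt, the decision to land or to fly over the obstacle remains with the operator, so it is deliberately not modeled here.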

Today, several streaming protocols are used in various applications. In [8], video streaming communication is categorized into push-based and pull-based protocols, with a primary focus on two widely used protocols: Real-Time Streaming Protocol (RTSP) and Web Real-Time Communication (WebRTC). The research demonstrates that WebRTC delivers better Quality of Experience (QoE) and Quality of Service (QoS) compared with other streaming protocols, based on the experiments conducted. Additionally, researchers in [9] categorized the latency requirements of streaming media applications into four categories: ultra-low-latency (less than 1 s), low-latency live (less than 10 s), non-low-latency live (10 s to a few minutes), and on-demand (hours or more). The study highlights that many ultra-low-latency applications running on IP networks rely on the Real-Time Transport Protocol (RTP) or WebRTC. Specifically, WebRTC employs RTP as its media transport protocol, alongside other protocols essential for secure browser functionality.

This is where WebRTC technology comes into play, and it is the focus of our paper. WebRTC is widely used for real-time communication and multimedia streaming over the internet. It provides a secure, low-latency, and high-quality communication channel, making it an ideal choice for real-time applications. WebRTC is open-source and simplifies the development of high-quality real-time communication (RTC) applications that can run on web browsers and mobile devices. By utilizing standardized communication protocols, devices can engage in real-time interaction. WebRTC also offers different channels for audio, video, and data [10]. The WebRTC data channel, in particular, enables the transmission of text or binary data to a peer during real-time video streaming. This feature is especially beneficial in UAV inspection applications, as it allows for the parallel transmission of data, such as flight information and mission details, alongside the real-time video stream, ensuring synchronized data transfer [2].
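As a minimal sketch of what a flight data message on the data channel might look like, the following example serializes one telemetry sample as JSON text. The `FlightData` schema and all of its field names are assumptions made for illustration; the message format actually used in this work is not specified here.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class FlightData:
    # All field names are illustrative assumptions, not a schema from the paper.
    uav_id: str
    timestamp_ms: int
    latitude_deg: float
    longitude_deg: float
    altitude_m: float
    battery_v: float


def encode_flight_data(sample: FlightData) -> str:
    """Serialize one telemetry sample as a compact JSON text message."""
    return json.dumps(asdict(sample), separators=(",", ":"))


def decode_flight_data(message: str) -> FlightData:
    """Rebuild the sample on the receiving side (for example, at the GCS)."""
    return FlightData(**json.loads(message))
```

The encoded string could be handed to the send call of whichever data channel implementation is in use. Carrying a capture timestamp in each message is one way a receiver could align telemetry with video frames.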

In [1], the authors explore the feasibility and performance of using WebRTC for video streaming on resource-constrained platforms, such as the Raspberry Pi. They show that optimizing WebRTC settings makes it possible to balance video quality and power efficiency, which suggests that WebRTC is well suited to managing and overseeing multi-UAV applications.

2. Motivation

The combination of WebRTC and UAVs is highly suitable for scenarios where UAVs are used to identify defects and provide comprehensive control in real time [2]. WebRTC ensures the seamless flow of operations, allowing for real-time communication and data transmission.

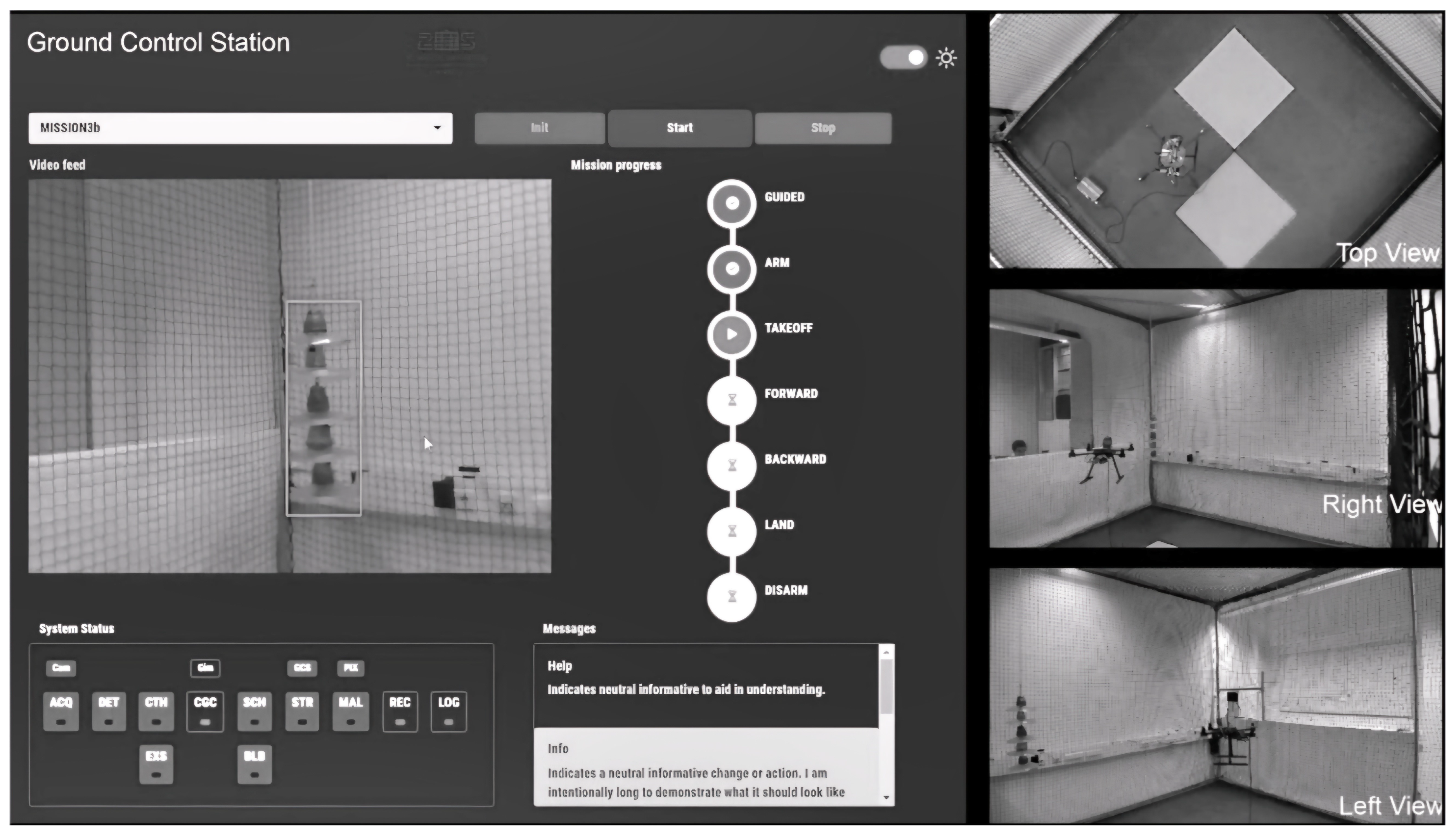

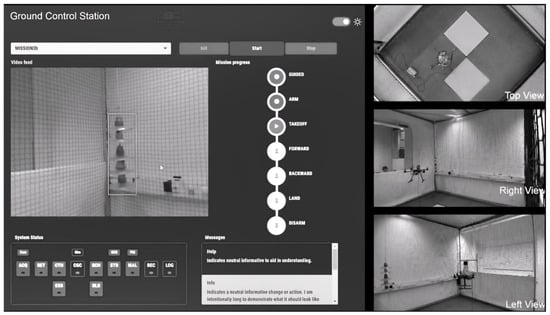

Figure 1 illustrates the GCS user interface and the indoor flight cage, where developments are tested and validated before executing actual missions in outdoor environments. In this test scenario, a WebRTC-based GCS is used in the APOLI project, and the UAV and the GCS have a point-to-point connection. The camera feed, which is processed on the UAV in real time for insulator detection using advanced image processing techniques, is streamed to the GCS using the WebRTC protocol, while flight data are transmitted to the GCS over the WebRTC data channel. The left side of the image shows the user interface of the GCS developed for the AREIOM project, displaying the video feed and rendering detection results in real time during the flight. The right side shows the indoor flight cage.

Figure 1.

Vision subsystem test in AREIOM Platform [11].

Additionally, using multiple UAVs for inspection applications offers several advantages over using a single UAV. These advantages can be summarized as follows [12]:

- Enhanced Data Collection Quality: Multiple UAVs improve the overall accuracy and comprehensiveness of the inspection.

- Reduced Operation Time: This is particularly beneficial for large-scale inspections, where a single UAV would take significantly longer to complete the task.

- Reliability: The redundancy provided by multiple UAVs ensures that if one UAV fails, others can continue the inspection, thereby increasing the overall reliability of the operation.

- Flexibility and Adaptability: Multiple UAVs can be programmed to follow complex trajectories and cover intricate structures more effectively than a single UAV. This adaptability is crucial for inspecting complex infrastructures such as bridges, wind turbines, and industrial buildings.

Given the aforementioned benefits of using multiple UAVs in inspection applications, it becomes necessary to expand the GCS implementation accordingly. This raises the following important research questions:

- How can a GCS efficiently collect and monitor real-time video streams and flight data from multiple UAVs?

- What are the key requirements for developing a WebRTC-based multi-UAV monitoring system, and how can it be successfully implemented?

Problem Statement

Most current WebRTC-based UAV applications are available as web-based or native applications, primarily designed to support a single UAV. In this research, our goal is to propose a solution that offers integration possibilities across various platforms, including web-based applications, native applications, and cloud-based systems. By achieving these objectives, we can significantly enhance the overall functionality and accessibility of a multi-UAV monitoring system.

This article focuses on exploring and optimizing the following features:

- The ability to send video feeds to the GCS from various sources, such as shared memory, USB Video Device Class (UVC), and Camera Serial Interface (CSI), with support for both hardware- and software-encoded video.

- Interoperability, meaning the provision of an adaptable solution that supports integration with native apps, cloud-based systems, and web apps.

- Support for multiple UAVs by utilizing a WebRTC media server to receive real-time video streams and flight data from multiple UAVs.

- Support for multiple client sessions, enabling clients with different authorizations—such as Pilots and Inspectors—to access the GCS. The proposed solution must be capable of relaying multiple WebRTC streams to different user sessions with high performance in real time.

By addressing these features, we aim to enhance the capabilities and effectiveness of WebRTC-based UAV GCS solutions, supporting multi-UAV applications for real-time monitoring and control. It is important to note that, in our application, each UAV is assumed to maintain a direct point-to-point communication link with the GCS, as illustrated in the proposed architecture in Section 4.1. Further investigation and optimization are required if the developed solution is intended for use in different network topologies, such as flying ad hoc networks or mesh networks, because real-time data sharing and video streaming depend heavily on the communication technology used (e.g., Wi-Fi, 4G, 5G), the network topology, and the communication protocols.

3. Related Work

In recent years, there has been growing interest in the development of WebRTC-based monitoring and control systems. These systems have the potential to revolutionize real-time UAV monitoring and control, offering significant advantages over traditional approaches. Numerous related works and research studies have explored the use of WebRTC for UAV applications, highlighting its effectiveness and potential to enhance UAV operations. In order to achieve the research objectives outlined in the problem statement, we explored available approaches from both academic and commercial sources.

There is limited literature available that specifically addresses the integration of UAVs with WebRTC technology. Table 1 provides a comparison of video streaming and data collection solutions in the literature, focusing on the articles most relevant to this research.

Table 1.

Overview of Relevant Available Solutions in Literature.

3.1. Literature Review of Related Solutions

Based on the applications presented in Table 1, it can be clearly seen that most existing solutions focus on a single UAV and rely on a single point-to-point video stream. To the best of our knowledge, there is currently no application available that supports multi-UAV video and flight data streaming to the GCS using WebRTC technology. In contrast, our approach aims to collect one or more video streams and flight data from multiple UAVs at a GCS for real-time monitoring. Additionally, we aim to provide access to this GCS for multiple users with varying levels of authorization, functioning similarly to a UAV conferencing platform. This setup requires the video streams to be relayed from the GCS to user sessions with high quality and low latency.

The paper in [1] highlights the potential for optimizing WebRTC settings to extend battery life in power-constrained scenarios without compromising video quality significantly. The work is particularly relevant for UAVs and other IoT devices that require energy-efficient, real-time multimedia communication. However, it does not consider the use of multiple UAVs.

The framework in [2] provides a versatile platform named “WebRTC-based flying monitoring system” for collecting data through various IoT sensors and a camera with a single UAV, facilitating a wide range of monitoring applications. It is well-suited for scenarios that require rapid deployment and adaptability to different monitoring needs. WebRTC is utilized to transmit various sensor data and a single video stream to ensure real-time communication. However, it does not adequately address the collection and transmission of multiple video streams and data from multiple UAVs to a centralized GCS, nor does it effectively facilitate sharing this information with multiple user groups.

In [3], security issues were discussed for IoT applications using UAVs. The paper offers a well-rounded approach to incorporating UAVs into the IoT landscape while addressing significant security and privacy challenges. Its framework aims to transform UAVs into reliable and secure IoT nodes, crucial for sensitive applications like surveillance and healthcare. However, a concrete framework for multi-UAV monitoring and control has not been proposed.

The proposed architecture in [13] includes several components, such as Kafka, a Kafka connector, a MongoDB database, and a video streaming server for data transmission from the UAV to the GCS. A Python API named imagezmq [21] was developed based on the ZeroMQ framework for video streaming. This implementation transmits JPEG-compressed images via a Transmission Control Protocol (TCP) port. While this approach ensures reliable video stream transmission, it does not achieve the low-latency performance required for real-time video streaming, which is provided by protocols like WebRTC.

The paper in [14] describes the development and testing of a UAV-based pollution monitoring system that uses a WebRTC-based platform for real-time video, IoT data, and spatiotemporal metadata transmission. The system, named “Kestrel”, integrates a variety of low-cost environmental sensors, including gas sensors and particulate matter sensors, with a 4K camera and a positioning module. The data collected by the UAV are transmitted to a ground station using WebRTC, where they are visualized and analyzed in real time. However, this framework likewise does not consider collecting such data from multiple UAVs at the GCS.

Ref. [17] introduces a remote monitoring and navigation system designed for multiple UAV swarm missions utilizing a Real-Time Kinematic Global Positioning System (RTK-GPS) for precise positioning, 4G for long-range communication with ground stations, and a Wi-Fi-Mesh network for inter-UAV communication. The proposed system demonstrates a comprehensive approach to managing UAV swarm missions, addressing challenges in real-time communication and precise positioning. Mission Planner and QGroundControl are used as real-time monitoring software in [17], where the UAV is controlled through Dronekit combined with MAVSDK as external mission control, communicating with PX4 via the Micro Air Vehicle Link (MAVLink) communication architecture. However, as noted in [22], the Image Transmission Protocol in MAVLink is not designed for general image transmission; it was originally intended to transfer small images over a low-bandwidth channel from an optical flow sensor to a GCS. Therefore, it is unsuitable for applications requiring low-latency, high-quality real-time video streaming.

The paper in [18] presents a platform for air quality monitoring with potential for scaling and customization. However, it relies on QGroundControl. As noted above for [17], the Image Transmission Protocol in MAVLink is not designed for general image transmission [22]; it was originally intended to transfer small images over a low-bandwidth channel.

The framework in [20] simplifies the development and rapid prototyping of distributed IoT applications by utilizing WebRTC for direct device-to-device communication. It provides a web-based interface with interactive virtual IO ports and a runtime environment for emulating IoT devices, enabling the creation of distributed applications that follow a “computation follows data” model. This approach prioritizes local processing near the data source, thereby enhancing privacy and reducing latency. The framework’s network architecture supports both virtual and physical devices, utilizing WebRTC data channels for direct communication, eliminating the reliance on cloud services. However, the current implementation only leverages the WebRTC Data Channel API for communication and does not provide any benchmark results.

A platform called the Flying Communication Server is proposed in [19], designed to share information among different rescue teams via a WebRTC server using private smart devices in large-scale disaster situations. The WebRTC server was developed using Node.js, but latency-related benchmark results are not provided in the paper to evaluate its suitability for our use case, as our primary focus is on achieving very low-latency video streaming and data collection from multiple UAVs. Additionally, the solution proposed in this study does not fully address the objectives outlined in our problem statement.

The paper in [16] focuses on the integration of IoT and telemedicine communication, presenting a robust model for IoT-driven remote interaction. Specifically, it leverages the Kurento WebRTC Media Server to enable multimedia communication, such as video streaming and data collection. The model facilitates remote monitoring and telemedicine, particularly aimed at enhancing pediatric care in rural areas, providing access to specialist healthcare services for populations with limited medical resources.

In [15], a low-latency, multi-purpose end-to-end video streaming system is presented, supporting applications such as surveillance, event coverage, and object detection. The system is built using WebRTC for real-time video transmission and integrates several modules, including a custom UAV, a backend control platform, and a media gateway. Considering the potential benefits of this approach, as well as the use of the Kurento WebRTC Media Server mentioned in [16], we also explored further research into WebRTC media servers, which is discussed in the following sections.

3.2. Commercial WebRTC Solutions

There are also several commercial implementations of WebRTC-based server-side components, primarily used for high-scale conferencing or broadcasting. Some example commercial WebRTC applications include the following:

- WOWZA [23]: Interactive broadcast, drone streaming, customer management.

- VIDIZMO [24]: IP camera streaming, drone streaming, over-the-top (OTT) broadcast, Virtual Reality (VR) streaming.

- UgCS [25]: Drone-based video streaming software for tablets and computers.

- nanoCOSMOS [26]: Cross-platform WebRTC-based video streaming.

- Liveswitch [27]: WebRTC-based video and audio streaming platform.

- Ant Media [28]: WebRTC-based streaming engine with cloud support.

To the best of our knowledge, no performance analysis of these commercial solutions is currently available, and they are not open-source for performance testing. Therefore, we conducted research on open-source WebRTC-based media servers to gain insights into the development and implementation of a multipeer and multiuser platform using the WebRTC framework. This research helped us identify the requirements for our desired solution, enabling its implementation using open-source tools.

3.3. Open-Source WebRTC Solutions

Several open-source WebRTC media server implementations exist, including Jitsi, Janus, Medooze, Kurento, and Mediasoup. The paper in [29] provides a comparative study of WebRTC Selective Forwarding Units (SFUs) and offers a comprehensive performance analysis. The results are classified into four categories: failure rate, streaming bitrate, latency, and video quality. Under target load conditions:

- Janus [30] and Mediasoup [31] maintain the maximum bitrate under high load, with the bitrate starting to decrease only above 280 peers. On average, Janus achieves a sending bitrate of 1.18 Mbps and a receiving bitrate of nearly 1 Mbps.

- Janus has the highest image quality score.

- Medooze and Mediasoup maintain latency below 50 ms. For Janus, latency rises to 268 ms after surpassing 150 participants, while for Kurento, latency exceeds one second. However, Janus, Mediasoup, and Medooze show similar latency results up to 150 participants. Therefore, Janus remains suitable for multi-UAV applications as long as the number of participants stays below this threshold.

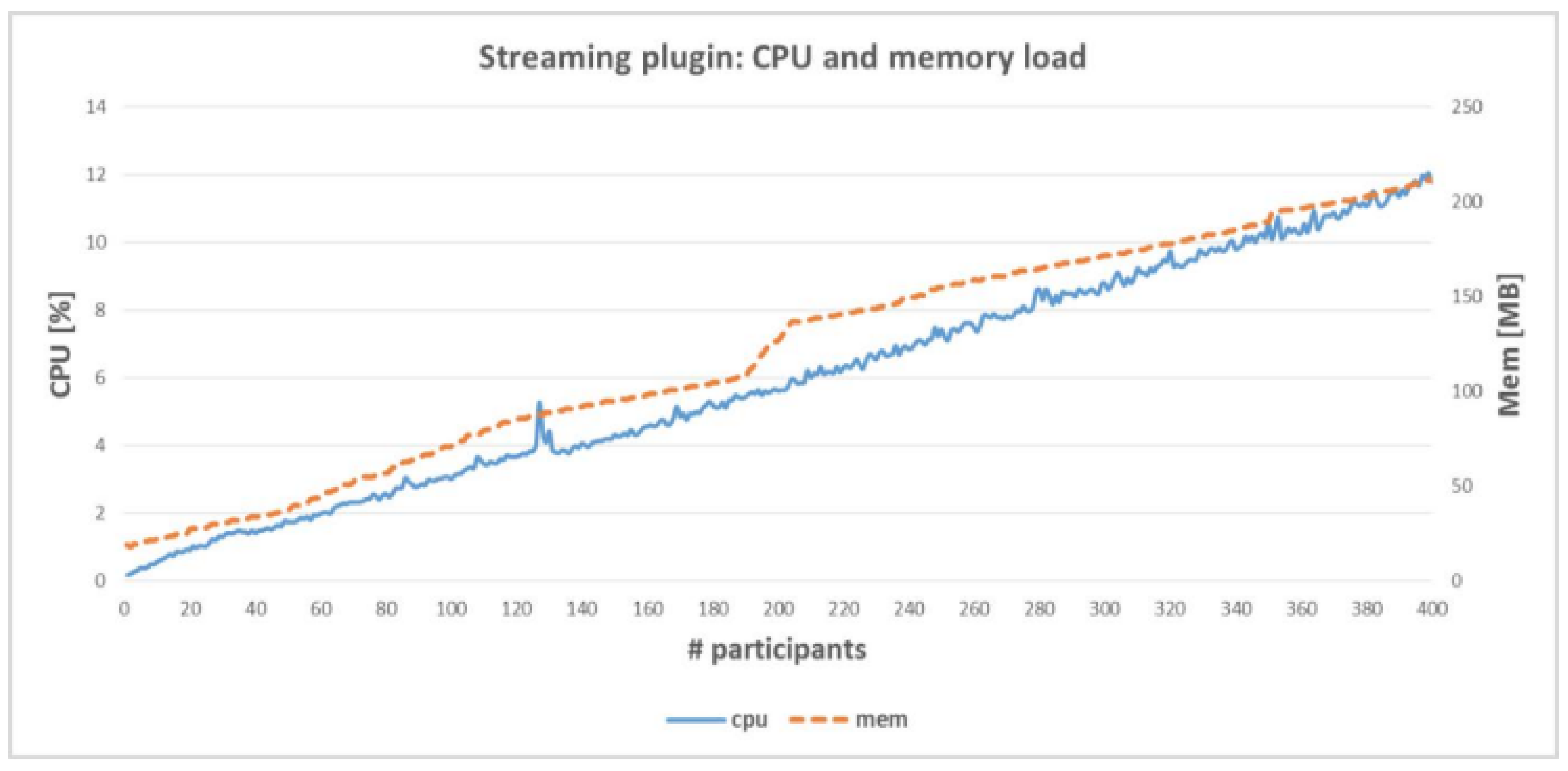

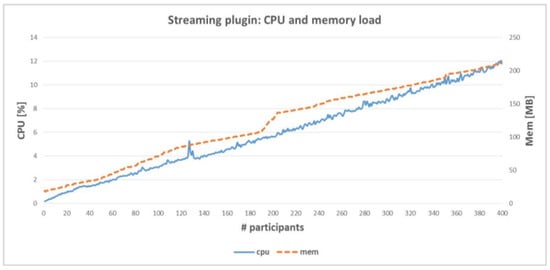

In [32], the Janus WebRTC server was evaluated regarding CPU and memory usage. It was found that the streaming plugin test yielded similar results to the SIP plugin test, where 330 participants used approximately 22% of the CPU. CPU and memory usage increased linearly as the number of participants increased.

3.4. Why the Janus WebRTC Server?

Both Mediasoup and Janus maintain similar latency performance up to 150 participants. Beyond this threshold, Mediasoup demonstrates slightly better latency and failure rates, but Janus offers higher bitrates and better video quality, which is crucial for inspection applications. For scenarios with up to 150 participants, either option is viable, though Janus’s superior image quality makes it particularly well-suited for inspection tasks [32].

Janus is a general-purpose WebRTC server that provides scalable, high-performance video streaming by managing the complexities of WebRTC technology and abstracting them from developers [30]. It supports a wide range of use cases, including one-to-one, one-to-many, and many-to-many communication, and offers compatibility with various protocols and codecs. Janus provides several advantages, as outlined below:

- Scalability: Janus can handle a large number of simultaneous connections, making it suitable for large-scale video streaming applications [32].

- Flexibility: Janus is highly extensible, thanks to its modular plugin-based architecture, and it can be easily integrated into existing systems, offering a versatile solution for video streaming [30,33].

- Performance: Janus is optimized for high performance, with a focus on low latency and minimal overhead [32,33].

- Reliability: Janus is designed to be reliable, with a number of built-in fail-safes to ensure that video streams continue even in the event of network issues [30].

While limited benchmarking data are available comparing Janus against other WebRTC tools, performance analyses of the Janus WebRTC gateway demonstrate its scalability and efficiency [32]. Figure 2 shows the CPU and memory usage of the Janus WebRTC streaming plugin, which is also used in our approach.

Figure 2.

Streaming plugin: CPU and memory [32].

As mentioned earlier, both Mediasoup and Janus maintain similar latency up to a certain number of participants. However, Janus offers a higher bitrate and superior video quality [32]. Based on this information, we believe that it is especially well-suited for inspection tasks.

4. Concept and Methods

In this section, we present a detailed description of the proposed platform, outlining its various components and explaining how they function together to achieve the desired outcomes.

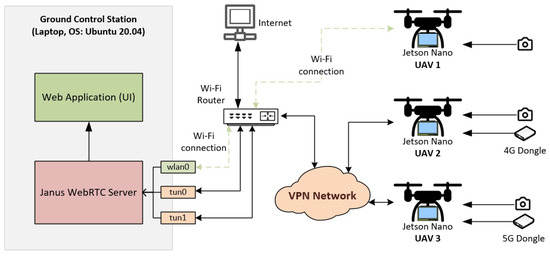

4.1. The Proposed Architecture

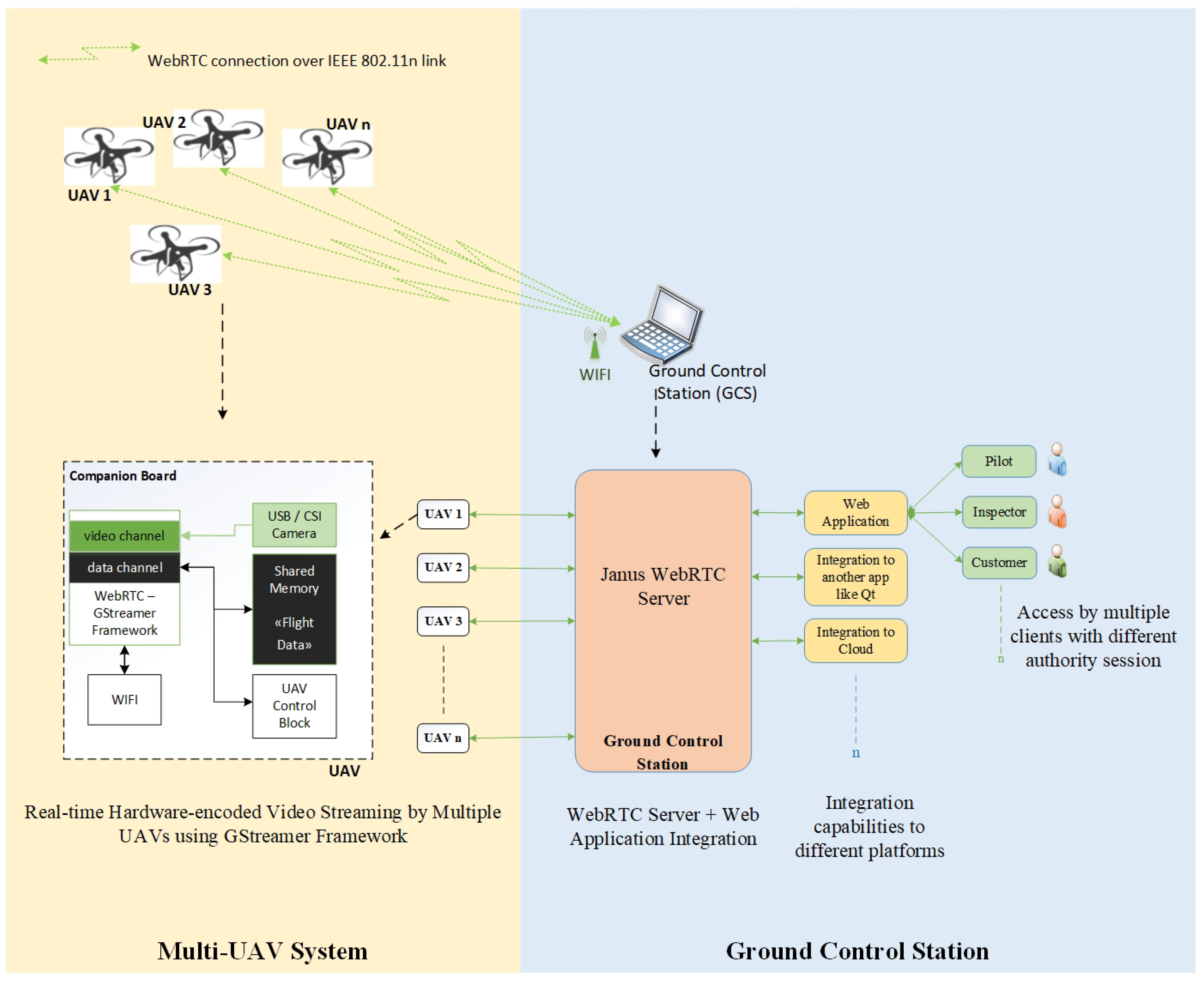

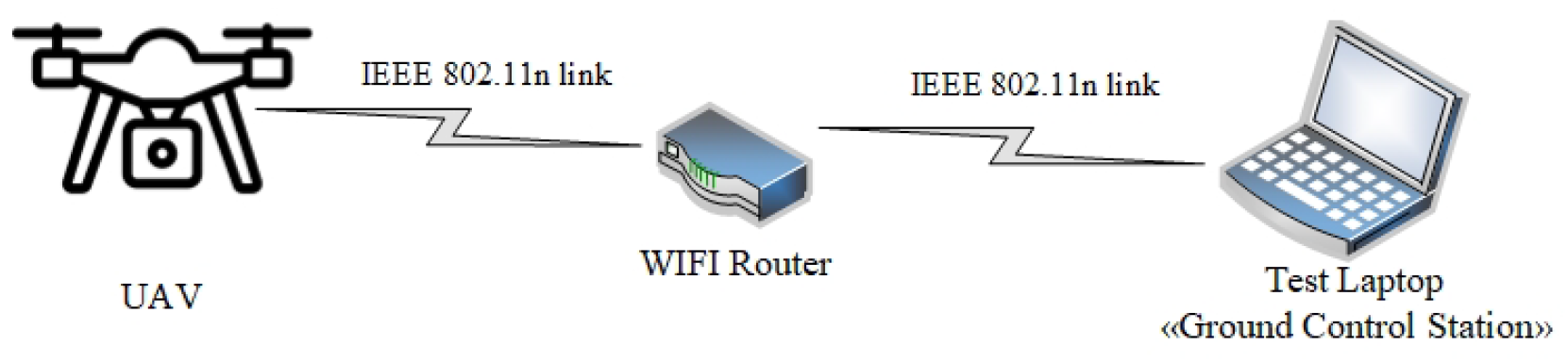

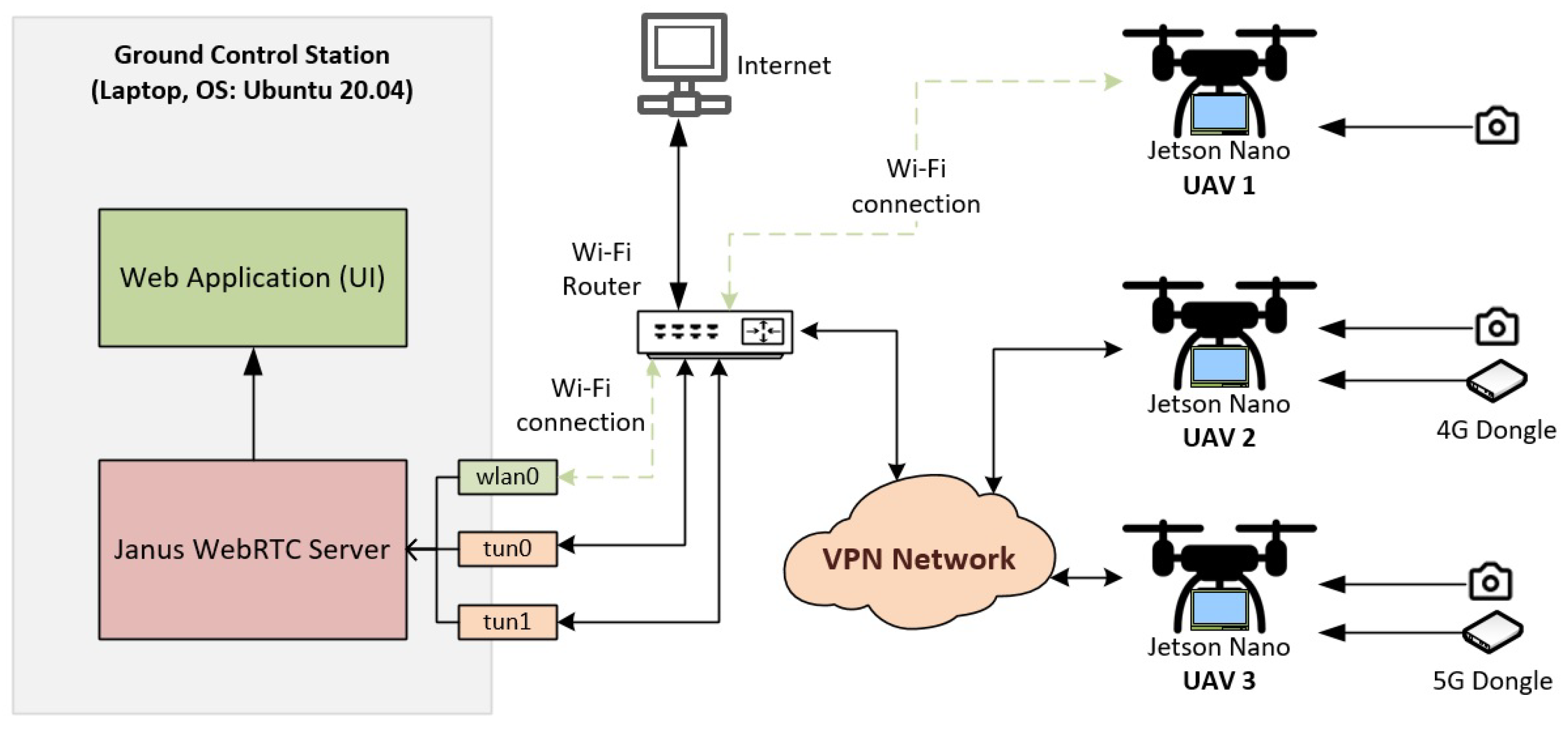

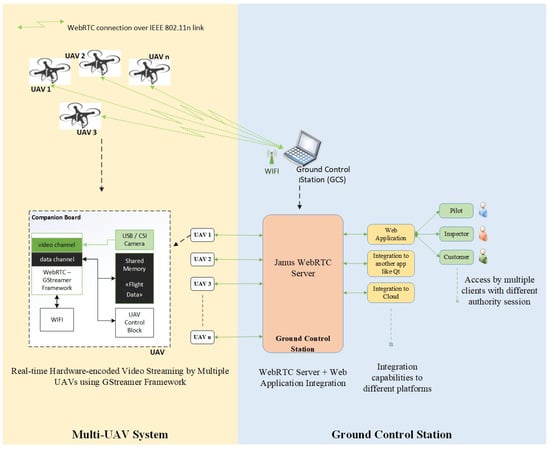

The diagram in Figure 3 illustrates the proposed system architecture, which is divided into two parts: the UAV (WebRTC using the GStreamer Framework [34]) and the GCS (WebRTC Server + User-Side Web Application). The UAV component consists of a companion board, such as the NVIDIA Jetson Nano, connected to an IEEE 802.11n network and transmitting a video feed from a camera (V4L2 USB or CSI) or shared memory. The companion board on the UAV facilitates WebRTC connections using the GStreamer framework for real-time video streaming and flight data transmission. In our use case, we employ the NVIDIA Jetson Nano, which supports hardware encoding via the GStreamer framework. Since the WebRTC data channel supports bidirectional communication, it can be used to control the UAV while simultaneously receiving flight data.

Figure 3.

Proposed architecture.

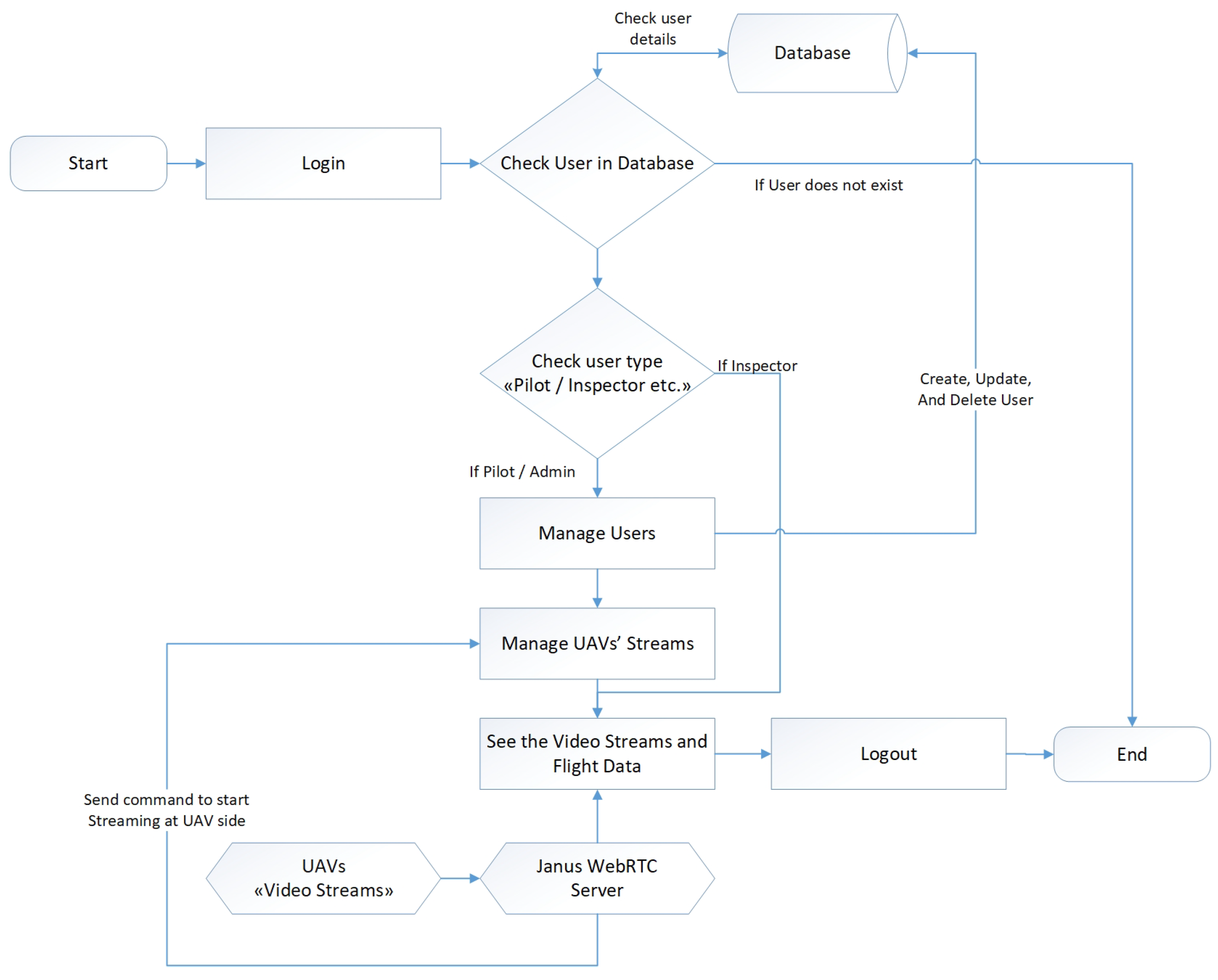

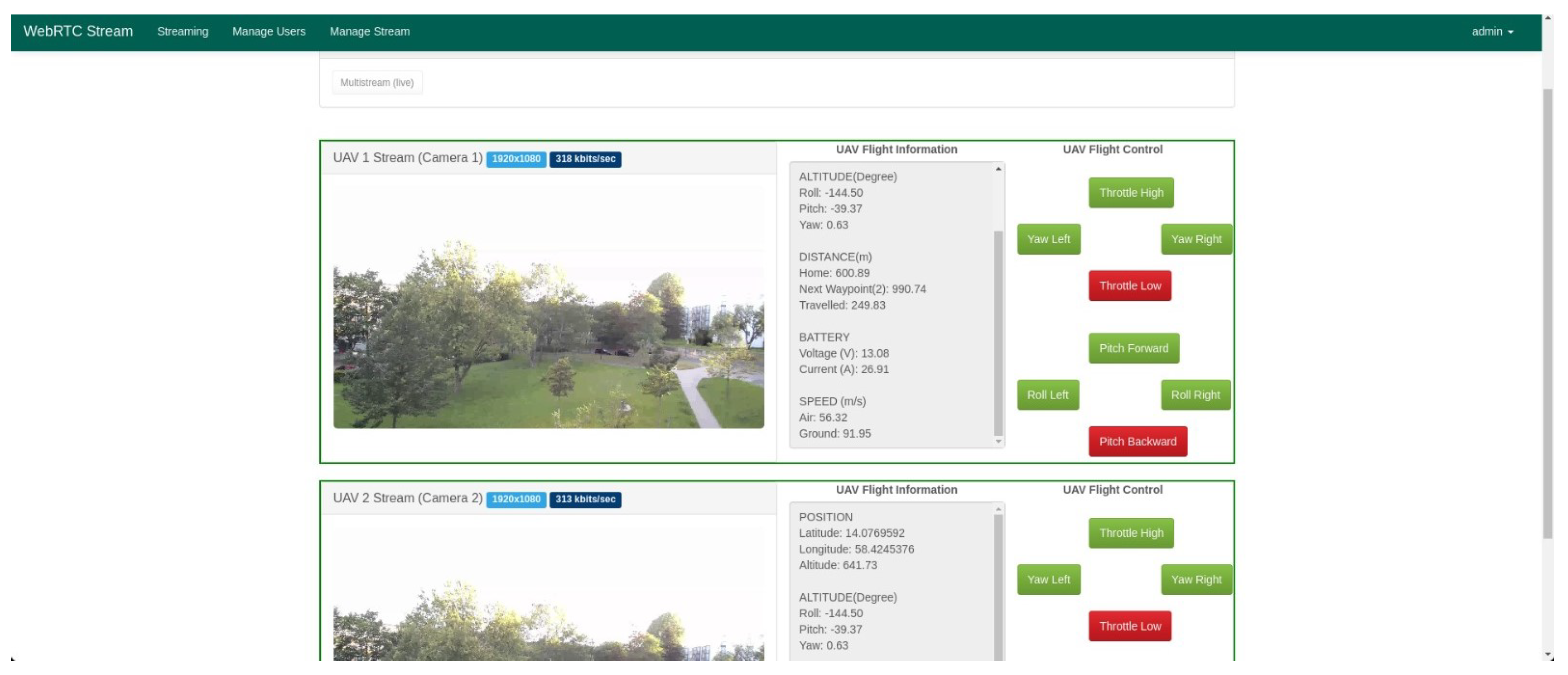

On the GCS side, the WebRTC server receives flight data and video stream(s) from the UAV(s) and relays them to the user(s). The user interface can be a web application, a native application (e.g., using Qt [35]), or a cloud application. The web application includes user management features and displays video streams with specific access restrictions based on user roles (e.g., customers, inspectors, and pilots). The pilot, acting as the administrator, controls the UAV and can create streams for other users. The inspector can select and view the video streams as needed, while customers and other users are limited to viewing the streams chosen by the admin/pilot.
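The role-based access rules described above can be sketched as follows. This is a simplified illustration under stated assumptions rather than the web application's actual logic: the `Role` enum, the function names, and the stream identifiers are hypothetical, and the inspector is assumed to be able to choose from all available streams.

```python
from enum import Enum
from typing import Iterable, List, Set


class Role(Enum):
    PILOT = "pilot"          # acts as the administrator
    INSPECTOR = "inspector"
    CUSTOMER = "customer"


def visible_streams(role: Role, all_streams: Iterable[str],
                    shared_by_pilot: Set[str]) -> List[str]:
    """Return the stream ids a user with the given role may view."""
    streams = list(all_streams)
    if role in (Role.PILOT, Role.INSPECTOR):
        # Assumption: pilots and inspectors can select any available stream.
        return streams
    # Customers only see what the pilot/admin has chosen for them.
    return [s for s in streams if s in shared_by_pilot]


def can_control_uav(role: Role) -> bool:
    """Only the pilot/admin controls the UAV."""
    return role is Role.PILOT
```

For example, with streams `["uav1-nav", "uav1-inspect", "uav2-nav"]` and only `"uav1-inspect"` shared by the pilot, a customer session would receive that single stream, while an inspector could select any of the three.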

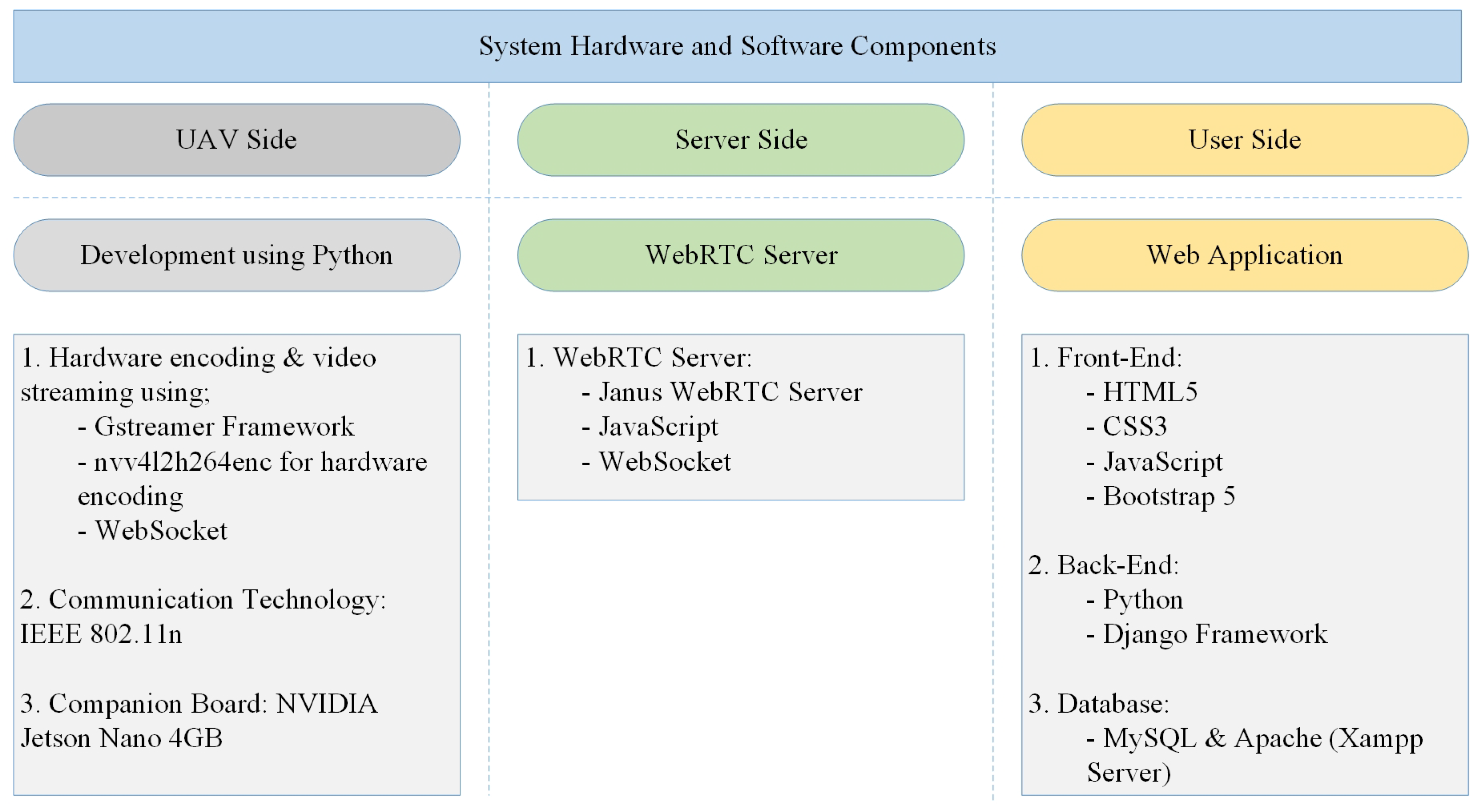

4.2. Utilized Technology

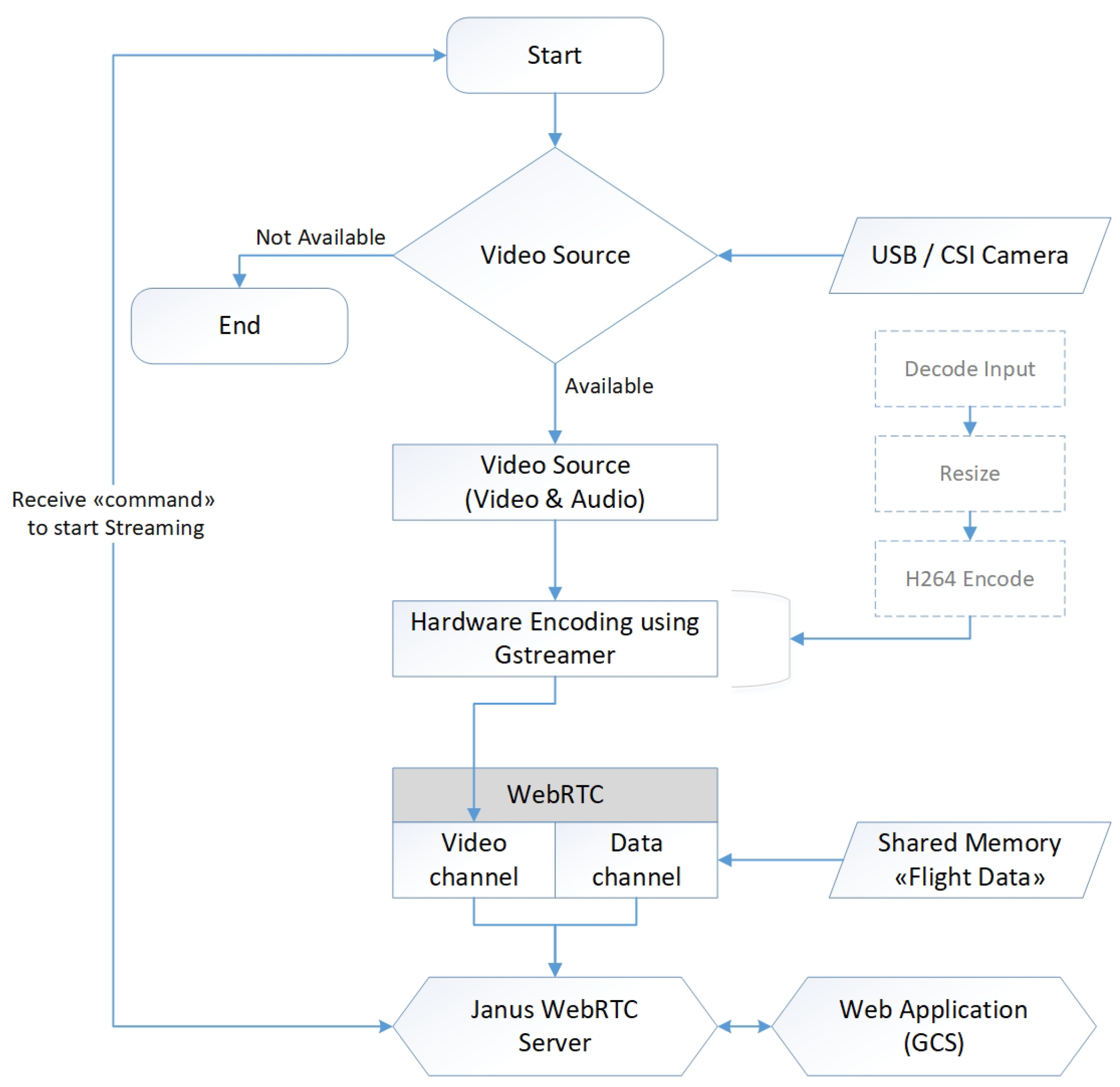

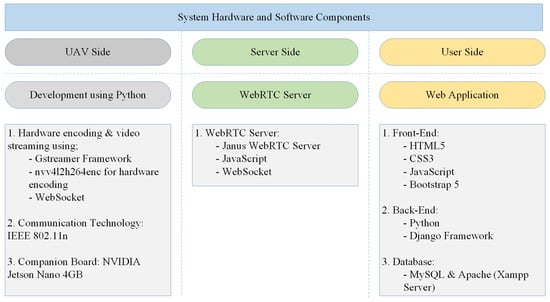

Figure 4 outlines the technology used in each section of our development, which consists of three main parts. On the UAV side, a Python script—accessible via SSH login—initiates video acquisition from different sources, encoding the video using a codec selected in the GCS interface, such as H.264 or H.265. The GStreamer framework on the companion board enables GPU-accelerated hardware encoding, minimizing latency and reducing CPU load to support multiple video streams. For example, two video feeds—one from the navigation camera and another from the inspection camera—can be streamed simultaneously. The encoded video is also recorded and stored onboard the UAV during streaming. Additionally, for companion boards without hardware encoding capabilities, a software-based video streaming pipeline is available, which can be selected in the GCS interface.

Figure 4.

Hardware and software components with utilized technology.
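To illustrate how the UAV-side script might switch between hardware and software encoding, the sketch below assembles a GStreamer pipeline description string of the kind accepted by `Gst.parse_launch`. It is not the pipeline used in this work: the device path, the shared-memory socket path, the default bitrate, and the function name are assumptions, and the final sink (for example, a WebRTC element) is intentionally left out. CSI cameras are omitted because on Jetson boards they typically deliver buffers in NVMM memory and need different caps.

```python
# Sources that deliver raw frames in system memory (paths are hypothetical).
RAW_SOURCES = {
    "usb": "v4l2src device=/dev/video0",
    "shm": "shmsrc socket-path=/tmp/uav-video is-live=true",
    "test": "videotestsrc is-live=true",
}


def build_pipeline(source="usb", codec="h264", hardware=True,
                   width=1920, height=1080, fps=30, bitrate_bps=4_000_000):
    """Return a pipeline description ending at the RTP payloader."""
    if source not in RAW_SOURCES:
        raise ValueError(f"unknown source: {source}")
    if codec not in ("h264", "h265"):
        raise ValueError(f"unsupported codec: {codec}")

    # Caps may need adjusting to what a given camera or shm writer produces.
    caps = f"video/x-raw,width={width},height={height},framerate={fps}/1"

    if hardware:
        # Jetson hardware encoders expect NVMM buffers; bitrate is in bit/s.
        encoder = ("nvvidconv ! video/x-raw(memory:NVMM),format=NV12 ! "
                   f"nvv4l2{codec}enc bitrate={bitrate_bps}")
    else:
        # x264enc/x265enc take the bitrate in kbit/s.
        sw_encoder = "x264enc" if codec == "h264" else "x265enc"
        encoder = (f"videoconvert ! {sw_encoder} tune=zerolatency "
                   f"bitrate={bitrate_bps // 1000}")

    packetize = f"{codec}parse ! rtp{codec}pay config-interval=1 pt=96"
    return " ! ".join([RAW_SOURCES[source], caps, encoder, packetize])
```

Calling `build_pipeline(source="test", codec="h265", hardware=False)` yields a software-encoded test stream, which mirrors the fallback for companion boards without hardware encoders.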

The encoded stream(s) are transmitted via the WebRTC video channel, while flight data are sent through the WebRTC data channel to the Janus WebRTC server. The server is configured with a streaming plugin tailored to our application needs for receiving video streams. The graphical user interface (GUI) is a web application integrated with the Janus WebRTC server to display video streams and flight data, as well as to relay this information to remote users.

In summary, the UAV-side development enables hardware or software encoding of video feeds from different sources, providing live video streaming through the video channel and transmitting flight data via the data channel to the WebRTC server. The server-side development involves receiving multiple video streams and flight data from UAVs and distributing them to the web application interface. In the web application, three main tasks are handled: managing and relaying video streams to users, managing user access, and configuring UAVs. A local MySQL database is used to store user configurations and video stream information for each UAV setup. Additionally, a configuration tool was developed to simplify the setup of the streaming plugin according to specific use case requirements.

4.3. Implementation Flowchart

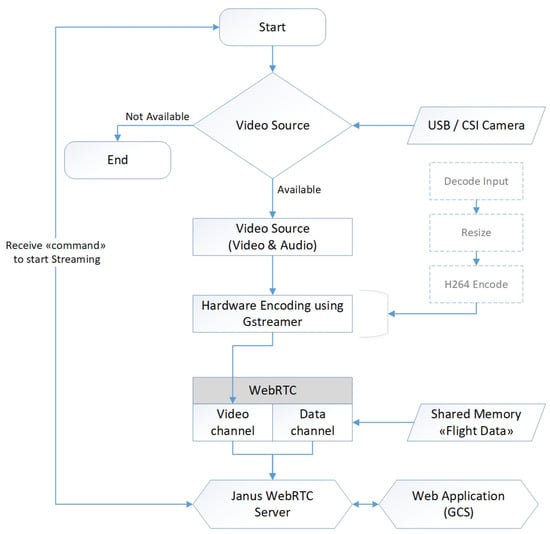

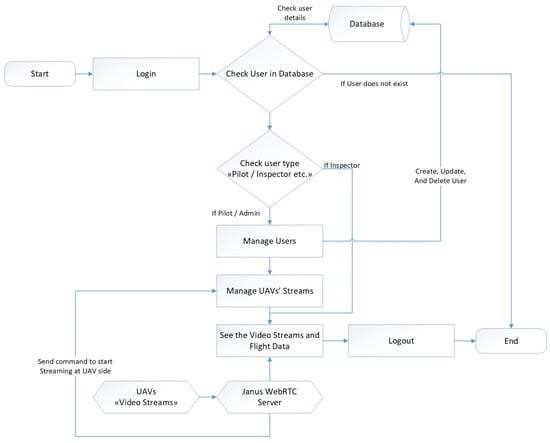

Figure 5 shows the flowchart of the UAV-side code interacting with the Janus WebRTC server at the GCS, while Figure 6 illustrates the flowchart of the GCS-side web application code managing video streams from UAVs across different user sessions.

Figure 5.

Flowchart of the UAV side development.

Figure 6.

Flowchart of the GCS side development.

5. Results

The performance of WebRTC-based real-time video streaming and data transmission is influenced by several key factors, including video encoding latency (both software and hardware), video resolution, video bitrate, communication technology (Wi-Fi, 4G, 5G, etc.), network topology, and user management. Ensuring that these parameters meet the necessary requirements is crucial for the effective operation of a UAV monitoring and control system.

5.1. Evaluation Criteria

Different types of video codecs, such as VP8, VP9, and H.264, are supported in WebRTC technology. The choice of codec depends on the specific application requirements, with H.264 and H.265 being the most commonly used due to their high quality and efficient compression. Table 2 presents the video stream characteristics for different WebRTC-supported codecs, including their data rates and resolutions. It also provides the frame sizes for various resolutions, offering insights into how many video streams can theoretically be supported using different codecs, based on the available communication bandwidth and the codec’s compression efficiency.

Table 2.

Video stream characteristics for different WebRTC video codecs [36,37].
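The relationship between available bandwidth, per-stream data rate, and the theoretical number of simultaneous streams can be made concrete with a short calculation. The function below is a rough estimate only; the `usable_fraction` parameter, which reserves headroom for protocol overhead and flight data, and the example numbers in the note that follows are assumptions rather than values from Table 2.

```python
import math


def max_streams(link_bandwidth_mbps: float, stream_bitrate_mbps: float,
                usable_fraction: float = 0.7) -> int:
    """Estimate how many streams of a given bitrate fit into a link."""
    if stream_bitrate_mbps <= 0:
        raise ValueError("stream bitrate must be positive")
    if not 0 < usable_fraction <= 1:
        raise ValueError("usable_fraction must be in (0, 1]")
    usable_mbps = link_bandwidth_mbps * usable_fraction
    return math.floor(usable_mbps / stream_bitrate_mbps)
```

For instance, a hypothetical 50 Mbps link with 4 Mbps per stream and the default headroom gives `max_streams(50, 4)`, that is, eight streams. A codec with better compression lowers the per-stream bitrate and raises this count accordingly.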

WebRTC technology can adapt to various video resolutions, offering the flexibility to adjust video quality based on bandwidth and other constraints. It is important to note that video resolution plays a key role in determining the data rate, which, in turn, influences the number of simultaneous streams that can be supported. The results of the proposed solution are evaluated based on the following parameters:

- Video transmission latency must be less than 300 ms for each stream from multiple UAVs to support remote UAV piloting [38].

- The minimum resolution of the video stream should be Full High Definition (Full HD, 1920 × 1080).

- Flight data transmission must be handled through the WebRTC data channel.

- Support for incoming video streams and flight data transmission from multiple UAVs.

- Capability to relay video streams and flight data to multiple user connections with different authorization levels such as Pilot (Admin), Inspectors, and Customers.
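The two quantitative criteria in this list (latency below 300 ms and at least Full HD resolution) lend themselves to an automated check over per-stream measurements. The following sketch assumes a hypothetical `StreamMeasurement` record; it is meant as an illustration of the criteria, not as part of the evaluation tooling used in this work.

```python
from dataclasses import dataclass
from typing import Iterable, List

MAX_LATENCY_MS = 300.0            # latency criterion for remote piloting [38]
MIN_WIDTH, MIN_HEIGHT = 1920, 1080  # Full HD criterion


@dataclass
class StreamMeasurement:
    # Hypothetical record of one measured stream.
    stream_id: str
    latency_ms: float
    width: int
    height: int


def meets_criteria(m: StreamMeasurement) -> bool:
    """Check the latency and resolution criteria for one stream."""
    return (m.latency_ms < MAX_LATENCY_MS
            and m.width >= MIN_WIDTH
            and m.height >= MIN_HEIGHT)


def failing_streams(measurements: Iterable[StreamMeasurement]) -> List[str]:
    """Return the ids of all streams that violate at least one criterion."""
    return [m.stream_id for m in measurements if not meets_criteria(m)]
```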

5.2. Evaluation Results

In this section, we discuss the evaluation results of our research work.

5.2.1. Test Results of Proposed System

Latency in a video streaming application refers to the time it takes for a video frame to be captured, encoded, transmitted, decoded, and displayed on the receiving end. It is a critical metric because high latency can result in a poor user experience, such as a noticeable lag between video and audio. The latency in a WebRTC-based video streaming application can vary due to factors such as communication technology, network conditions, encoder and decoder quality, and the processing power of both the UAV companion board and the GCS computer.

Generally, a latency of less than 300 ms is considered acceptable for remote UAV piloting [38], while a latency exceeding 500 ms can cause significant delays. In our tests, we measure transmission (network) latency, which differs from “glass-to-glass” latency: the latter additionally includes the capture, encoding, decoding, and display stages described above. To estimate the transmission latency of our application, we use Wireshark [39], a network protocol analyzer.

As shown in Figure 7, our transmission latency results capture the total transmission time from the UAV to the Wi-Fi router and from the Wi-Fi router to the test laptop. The transmitted packets from the Jetson Nano to the GCS are recorded using Wireshark and saved as a CSV file. The estimated latency is then calculated using a Python script.

Figure 7.

Testbed for latency measurements.
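The latency calculation from the exported CSV files can be sketched as follows. The original script is not listed in the paper, so this is only one possible approach under stated assumptions: two captures (sender and receiver) with synchronized clocks, Wireshark's default CSV columns with the Time column set to absolute seconds, and RTP packets decoded so that the Info column contains `Seq=<n>`. The function names and the sample rows are hypothetical.

```python
import csv
import io
import re
from statistics import mean
from typing import Dict, TextIO

# RTP sequence number as it appears in Wireshark's Info column.
SEQ_RE = re.compile(r"Seq=(\d+)")


def read_rtp_times(csv_file: TextIO) -> Dict[int, float]:
    """Map RTP sequence number -> capture timestamp in seconds."""
    times: Dict[int, float] = {}
    for row in csv.DictReader(csv_file):
        match = SEQ_RE.search(row.get("Info") or "")
        if match:
            # Keep the first occurrence in case a packet shows up twice.
            times.setdefault(int(match.group(1)), float(row["Time"]))
    return times


def mean_latency_ms(sender_csv: TextIO, receiver_csv: TextIO) -> float:
    """Average one-way delay of packets present in both captures."""
    sent = read_rtp_times(sender_csv)
    received = read_rtp_times(receiver_csv)
    common = sent.keys() & received.keys()
    if not common:
        raise ValueError("no matching RTP packets in the two captures")
    return mean((received[seq] - sent[seq]) * 1000.0 for seq in common)


# Hypothetical two-packet captures for illustration.
SENDER = '''"No.","Time","Source","Destination","Protocol","Length","Info"
"1","100.000","10.0.0.2","10.0.0.1","RTP","1214","PT=DynamicRTP-Type-96, SSRC=0x1, Seq=1, Time=0"
"2","100.033","10.0.0.2","10.0.0.1","RTP","1214","PT=DynamicRTP-Type-96, SSRC=0x1, Seq=2, Time=3000"
'''
RECEIVER = '''"No.","Time","Source","Destination","Protocol","Length","Info"
"1","100.100","10.0.0.2","10.0.0.1","RTP","1214","PT=DynamicRTP-Type-96, SSRC=0x1, Seq=1, Time=0"
"2","100.153","10.0.0.2","10.0.0.1","RTP","1214","PT=DynamicRTP-Type-96, SSRC=0x1, Seq=2, Time=3000"
'''

latency = mean_latency_ms(io.StringIO(SENDER), io.StringIO(RECEIVER))
print(f"{latency:.1f} ms")
```

This sketch ignores RTP sequence number wrap-around (after 65,535) and multiple SSRCs, both of which a longer capture would need to handle.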

Test 1: Video transmission latency for multiple video streams from a single UAV.

- Hardware Setup:

- Device: NVIDIA Jetson Nano

- Connection: Wi-Fi IEEE 802.11n dongle

- Cameras: Logitech USB camera (Logitech C920 HD Pro)

- Video Sources: 1 camera source and 8 video test sources in GStreamer

- Resolution: Full HD: 1920 × 1080

- Codec: H.264

- Latency: approximately 140 ms.

Test 2: Video transmission latency for a single video stream from multiple UAVs.

- Hardware Setup:

- Devices: NVIDIA Jetson Nano and Raspberry Pi 4 Model B (8 GB RAM)

- Connection: Wi-Fi IEEE 802.11n dongle for both devices

- Cameras: 2 × Logitech USB cameras, one for each device

- Resolution: Full HD (1920 × 1080) for both streams

- Codec: H.264

- Latency: approximately 130 ms for each UAV.

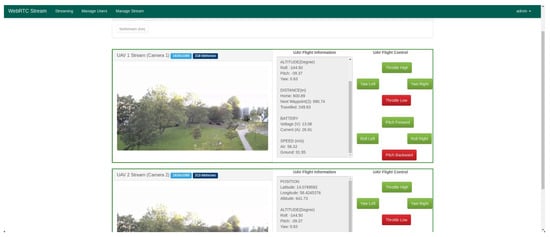

Figure 8 shows the GCS web application we developed for our research work. The screenshot was taken during Test 2.

Figure 8.

Application interface of GCS.

Test 3: Video transmission latency for hardware-encoded video stream from one UAV.

- Hardware Setup:

- Device: NVIDIA Jetson Nano

- Connection: Wi-Fi IEEE 802.11n dongle

- Camera: CSI camera (Model: Waveshare IMX219-160 Camera Module)

- Resolution: Full HD (1920 × 1080)

- Codec: Hardware encoded H.264

- Latency: approximately 85 ms to 105 ms, depending on network conditions.

Test 4: Video transmission latency from multiple devices with software/hardware encoded streams using different Wi-Fi (IEEE 802.11n) frequency (2.4 GHz or 5 GHz) links.

- Hardware Setup:

- Devices: 3 × NVIDIA Jetson Nano

- Connections: Device1 and Device2 = 5 GHz 802.11n, Device3 = 2.4 GHz

- Cameras: 2 × Logitech USB camera, 1 × CSI camera for hardware-encoded stream (Device1)

- Testing Variables: Different resolutions and bitrates to observe their impact on latency.

- Latency: The latency measurement results are presented in Table 3 below.

Table 3. Video transmission latency for different resolutions and bitrates.


The results indicate that reducing the resolution and bitrate typically results in lower latency. High resolutions, when paired with suitable bitrates, offer a balance between video quality and transmission latency.

Test 5: Performance analysis for different network scenarios.

Figure 9 below shows the testbed for network performance analysis across various network scenarios.

Figure 9.

Testbed for Test 5 measurements.

The hardware and system setup details are provided as follows.

- Laptop running the Janus WebRTC server:

- CPU: Intel Core i7

- Memory: 16 GB RAM

- Network: Wi-Fi (IEEE 802.11n)

- Operating System: Ubuntu 20.04

- Janus Server: The streaming plugin has been configured to handle multiple video streams while simultaneously managing flight data.

- Interface 1: Wi-Fi (wlan0)

- Interface 2: 4G (tun0)

- Interface 3: 5G (tun1)

- Wi-Fi Router:

- Model: TP-Link Archer C7

- Standard: IEEE 802.11n/ac (2.4 GHz and 5 GHz)

- Max Throughput: Up to 1.75 Gbps

- Configuration of the Devices:

- Device 1: Wi-Fi Connectivity (IEEE 802.11n)

- Connected to the Wi-Fi network using an 802.11n USB dongle.

- Device 2: 4G Connectivity

- 4G USB Dongle: A 4G USB dongle was used to enable cellular connectivity.

- Model: Huawei E3372h-320

- Network Compatibility: 4G Long-Term Evolution (LTE) “Cat4” with fallback to 3G/2G.

- Supported Bands: Various global LTE bands supporting download speeds of up to 150 Mbps.

- Connection Type: USB 2.0.

- Driver Compatibility: Plug-and-play on many Linux distributions, including Ubuntu (drivers often available via usb-modeswitch).

- Setup: The 4G USB dongle was plugged into a USB port of the NVIDIA Jetson Nano or Raspberry Pi.

- Configuration: The necessary drivers were installed, and the cellular interface on the UAVs was configured using NetworkManager [40]. Static IPs were assigned through a cloud-based Virtual Private Network (VPN) to ensure consistent routing between the UAVs and the Janus server.

      $ sudo apt-get install modemmanager usb-modeswitch
      $ nmcli con add type gsm ifname '*' con-name '4G-Connection' apn 'web.vodafone.de'

  Assign static IPs to UAVs:

      $ sudo apt-get install openvpn
      $ sudo openvpn --config /opt/vpnconfig.ovpn

- Device 3: 5G Connectivity

- 5G Modem: A 5G modem was used for high-speed data transmission.

- Model: Netgear Nighthawk M5 (MR5200)

- Network Compatibility: 5G NR and 4G LTE.

- Supported Bands: Provides comprehensive support for global 5G bands, enabling download speeds of up to 2 Gbps.

- Connection Type: USB-C.

- Driver Compatibility: Additional configuration and firmware support may be required, depending on the Linux distribution. The modem is often used in tethering mode via USB and is connected to the companion board (Jetson Nano), using a USB-C to USB-A adapter if necessary. If native driver support is unavailable, the modem can be configured in tethering mode so that it appears as a network interface (usb0).

- Configuration: Similar to the 4G setup, NetworkManager was used to establish the 5G connection. The modem settings were optimized for high bandwidth and low latency.

- Configuration at Janus Server (GCS):

- Janus Server Setup: Installed and configured Janus WebRTC server on the laptop running Ubuntu 20.04.

- Streaming Plugin: Configured janus.plugin.streaming.jcfg to receive multiple video streams from different UAVs.

The data collection and measurement process involved using various tools and methods to assess network performance. Latency was measured by capturing data packets with Wireshark and tcpdump on both the UAVs and the Janus server, and calculating the time difference between a packet’s transmission from the UAV and its reception at the server. Jitter was evaluated by monitoring the variance in consecutive packet reception times using Janus WebRTC performance metrics and tools like iftop [41]. Packet loss was calculated by comparing the number of packets sent by the UAVs to those successfully received at the Janus server, using Wireshark and the WebRTC-internals dump from Chrome (“chrome://webrtc-internals” (accessed on 14 September 2024)). It was defined as the percentage of packets not received by the server relative to the total packets sent by the UAVs. Bandwidth usage was monitored on the Janus server with iftop and vnStat [42], tracking the total data rate of incoming streams for each resolution and network scenario.
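The jitter and packet loss definitions above can be made concrete with a short sketch. Packet loss follows the percentage definition given in the text. For jitter, the sketch uses the interarrival jitter estimator from RFC 3550, which is the definition used for RTP statistics; the tools named above may compute jitter differently, so this is an illustration rather than the authors' exact method. The function names and sample values are hypothetical.

```python
from typing import Sequence


def packet_loss_percent(sent: int, received: int) -> float:
    """Percentage of sent packets that never reached the server."""
    if sent <= 0:
        raise ValueError("sent must be positive")
    if not 0 <= received <= sent:
        raise ValueError("received must be between 0 and sent")
    return 100.0 * (sent - received) / sent


def interarrival_jitter_ms(send_times: Sequence[float],
                           recv_times: Sequence[float]) -> float:
    """RFC 3550 running jitter estimate: J += (|D| - J) / 16.

    Both sequences hold timestamps in seconds for the same packets, in order.
    D is the change in transit time between consecutive packets.
    """
    if len(send_times) != len(recv_times):
        raise ValueError("timestamp lists must have the same length")
    jitter = 0.0
    for i in range(1, len(send_times)):
        d = (recv_times[i] - recv_times[i - 1]) - (send_times[i] - send_times[i - 1])
        jitter += (abs(d) - jitter) / 16.0
    return jitter * 1000.0


# Hypothetical values for illustration.
print(packet_loss_percent(1000, 985))                              # 1.5
print(interarrival_jitter_ms([0.0, 0.25, 0.5], [1.0, 1.25, 1.75]))  # 15.625
```

Because the estimator only uses differences between consecutive packets, a constant clock offset between sender and receiver cancels out, unlike in the one-way latency measurement.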

Table 4 below presents the network performance measurements across various network scenarios.

Table 4.

Network performance measurements for different network scenarios.

Based on the results in Table 4, 5G has by far the lowest latency, ranging from 20 to 30 ms, which is well suited to real-time applications. 4G LTE exhibits higher latency (70–90 ms), partly due to network variability and the additional overhead introduced by the VPN connection. Wi-Fi (802.11n) has the highest latency among the three, ranging from 90 to 120 ms. 5G also demonstrates minimal jitter, between 1 and 3 ms, ensuring a stable and smooth video stream, whereas 4G LTE experiences jitter of 8 to 12 ms and Wi-Fi shows jitter of up to 10 ms.

When it comes to packet loss, 5G once again leads with a very low rate of 0.2 to 0.5%, providing superior reliability for high-quality video streams. In contrast, 4G LTE has a slightly higher packet loss of 1.3 to 1.8%, and Wi-Fi can experience up to 1.5%, especially in environments with interference. Network bandwidth is also highest with 5G, reaching up to 50 Mbps for high-resolution video streaming, while Wi-Fi supports up to 40 Mbps and 4G LTE lags behind at 35 Mbps.

Overall, while Wi-Fi and 4G LTE can deliver moderate performance for UAV monitoring and control, 5G stands out as the best choice. It offers the optimal combination of low latency, minimal jitter, negligible packet loss, and high bandwidth, making it the ideal solution for high-quality real-time video streaming and UAV operations.

5.2.2. Janus WebRTC Server Performance

The following measurements were taken during Test 1, in which multiple video streams (9 × Full HD) were transmitted to the GCS.

- CPU Usage Analysis: The CPU usage of the Janus server during video streaming can be monitored using the “top” command, which provides real-time system statistics. While handling multiple video streams from UAVs, the Janus WebRTC server process (running under root) consumed 23.9% CPU and 0.4% memory over a period of 17 min and 80 s.

- Memory Usage Analysis: Memory usage during video streaming can be tracked using the “free” command, which provides detailed information about system memory. In this test, the system had 5933.6 MiB of memory in total, of which 774.3 MiB was free, 2346.2 MiB was used, and 2813.1 MiB was buffered/cached. The swap space totaled 2048.0 MiB, all of which was free, and 2986.4 MiB of memory was available. Monitoring memory usage ensures sufficient resources are available and helps detect potential memory-related issues during the streaming process.

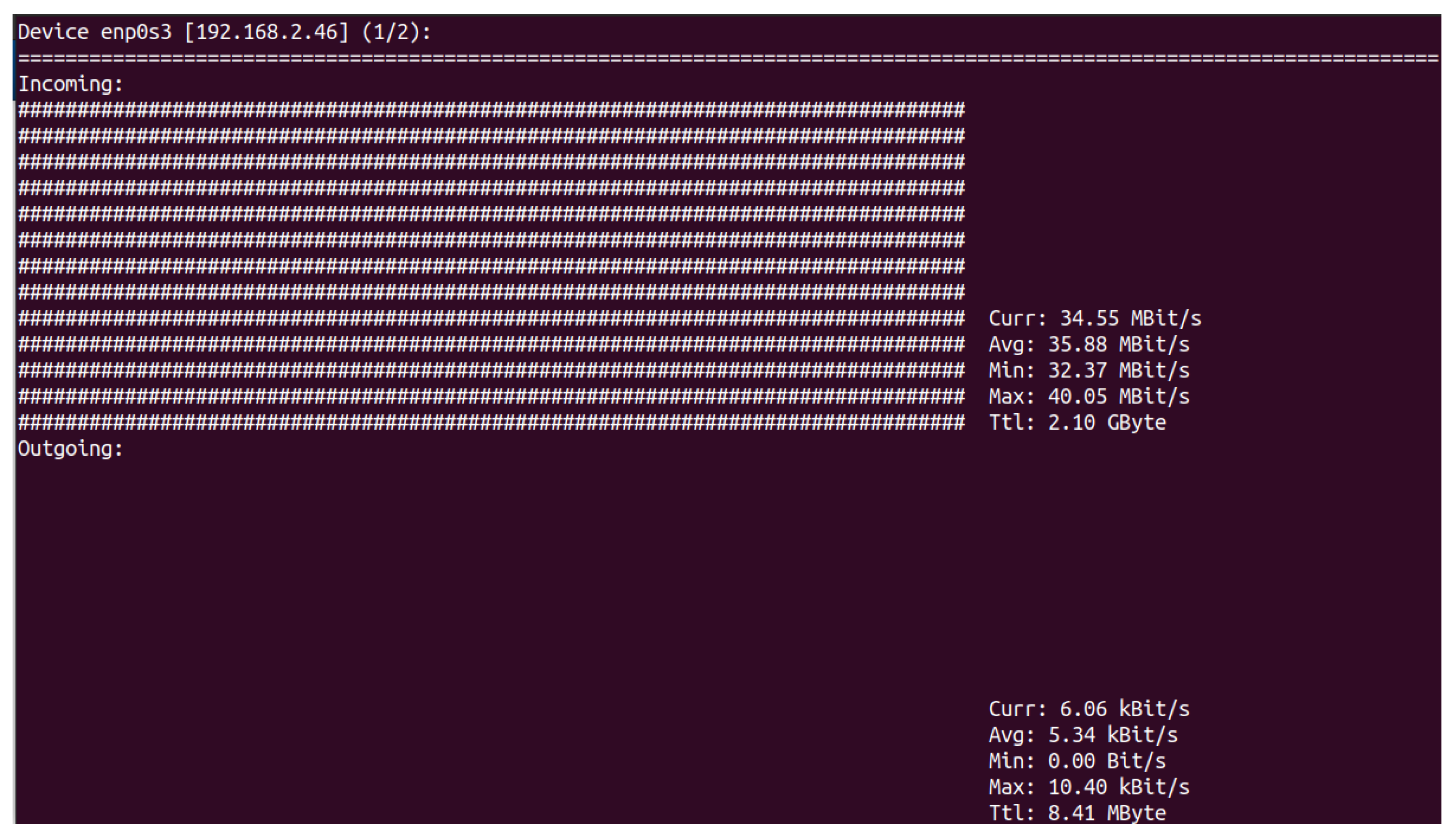

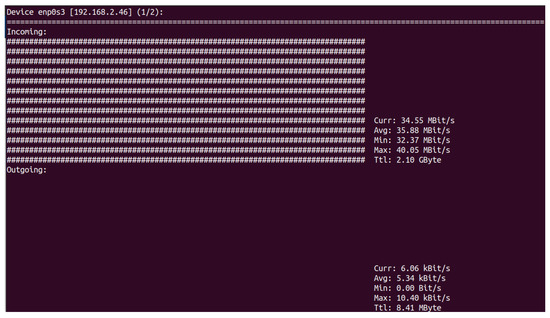

- Network Bandwidth Usage Analysis: To monitor network bandwidth usage during video streaming, tools like “iftop” or “nload” can be used. These tools provide real-time information about incoming and outgoing traffic. In Figure 10, the incoming network bandwidth had a current rate of 34.55 Mbit/s during multiple video streams from UAVs, with an average of 35.88 Mbit/s, a minimum of 32.37 Mbit/s, and a maximum of 40.05 Mbit/s, totaling 2.10 GBytes. Outgoing traffic had a current rate of 6.06 kbit/s, with an average of 5.34 kbit/s, a minimum of 0.00 bit/s, and a maximum of 10.40 kbit/s, totaling 8.41 MBytes.

Figure 10. Network bandwidth during multiple streaming.


- Logging and Debugging: Enabling logging and debugging in the Janus server allows for monitoring system performance and diagnosing issues during video streaming. Logs can be configured to output to a file or console, offering insights into the system’s behavior and helping to resolve potential problems.
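As a plausibility check on the bandwidth figures reported above, the average incoming rate can be divided by the number of streams. The sketch assumes that the nine Test 1 streams share the link evenly, which the measurements do not state explicitly; the function name is hypothetical.

```python
def per_stream_rate_mbps(total_mbps: float, num_streams: int) -> float:
    """Average bitrate per stream, assuming the streams share the link evenly."""
    if num_streams <= 0:
        raise ValueError("num_streams must be positive")
    return total_mbps / num_streams


AVG_INCOMING_MBPS = 35.88  # average incoming rate reported for Test 1
NUM_STREAMS = 9            # Full HD streams in Test 1

rate = per_stream_rate_mbps(AVG_INCOMING_MBPS, NUM_STREAMS)
print(f"{rate:.2f} Mbit/s per stream")  # 3.99 Mbit/s per stream
```

Under this assumption, each stream averages roughly 4 Mbit/s, which lies within the 3000 to 5000 Kbits range reported in Section 5.2.3.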

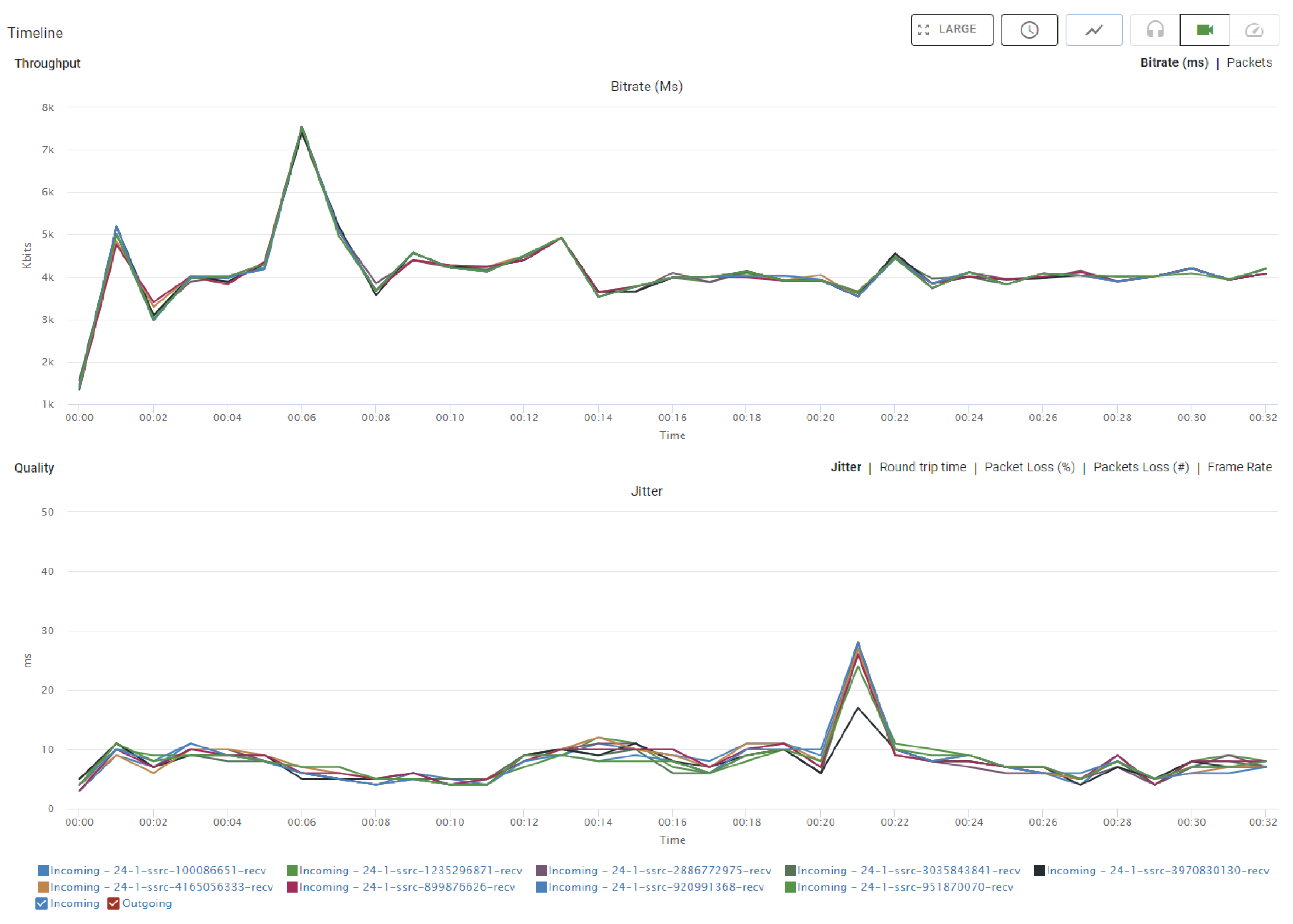

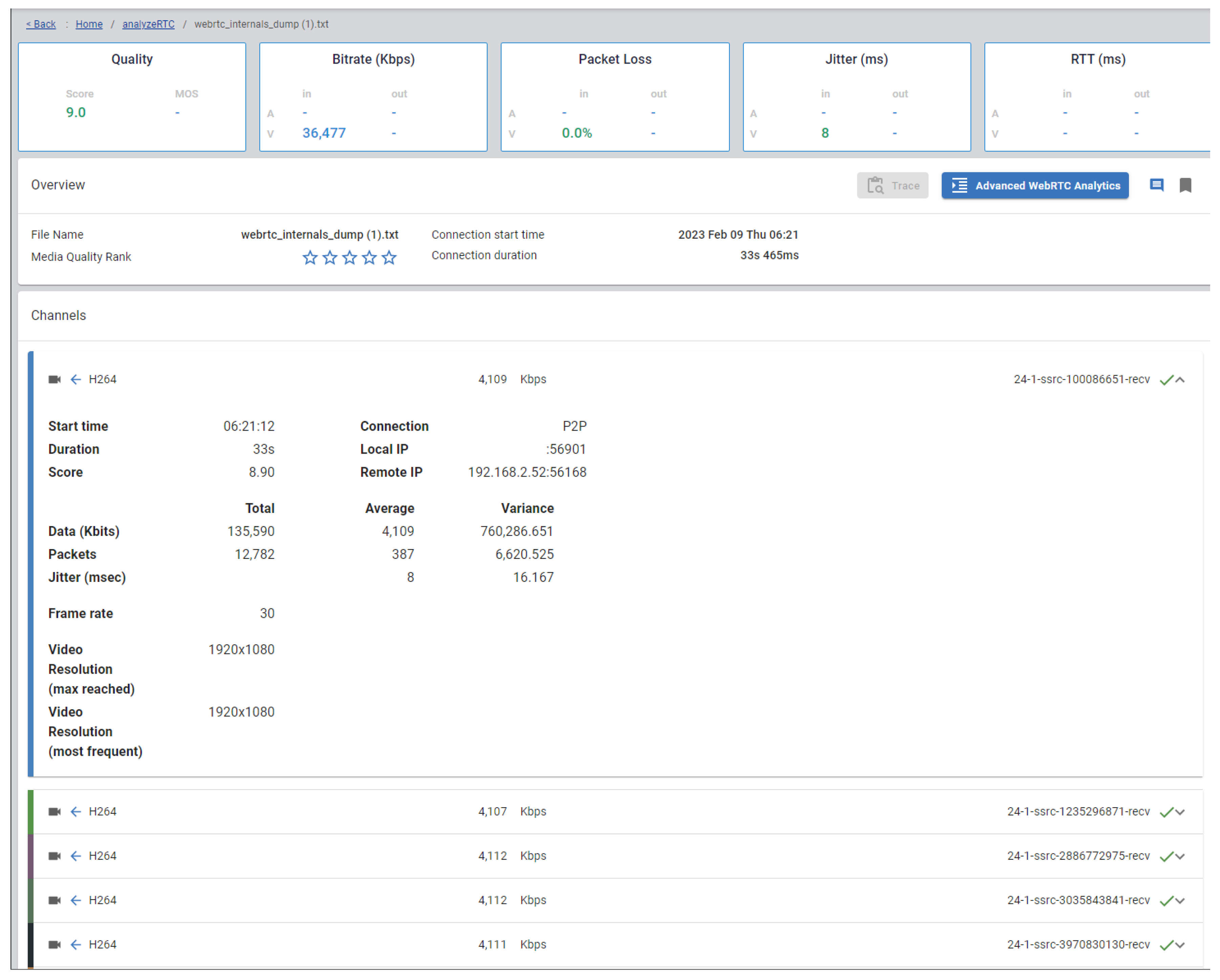

5.2.3. Janus WebRTC Benchmark Results

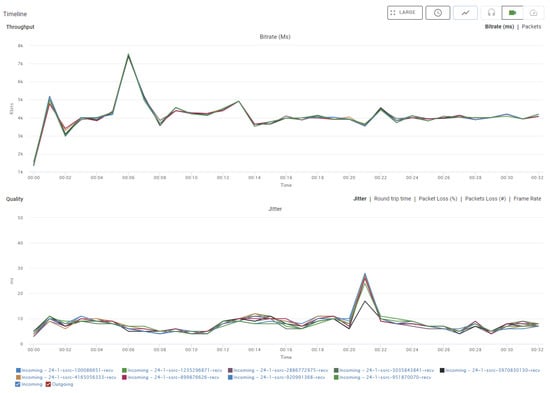

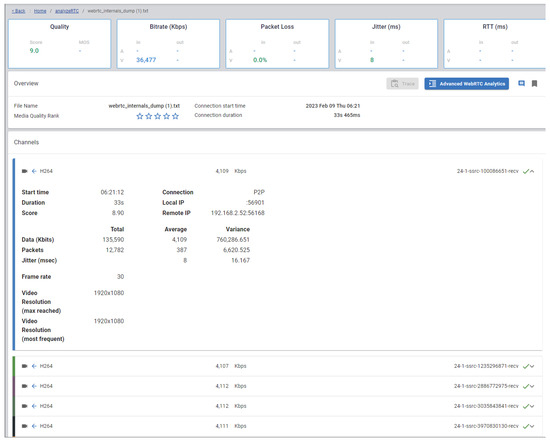

An important aspect of WebRTC performance is the quality of audio and video streams, which can be assessed through tests measuring signal quality, latency, and jitter. For testing, we used testRTC [43], uploading a WebRTC internals dump from Chrome (“chrome://webrtc-internals” (accessed on 14 September 2024)) for analysis. Figure 11 and Figure 12 display the Janus WebRTC benchmark results for our application during Test 1, covering a 9 video stream session over approximately 32 min. These figures are explained in two main sections below: Throughput (bitrate) and Quality (jitter).

Figure 11.

Janus WebRTC benchmark results (9 streams).

Figure 12.

Janus WebRTC benchmark results: webrtc-internals dump (9 streams).

- Throughput: The upper section of the graph shows the bitrate for each stream in kilobits per second (Kbits). At the 00:01 mark, there is a spike reaching approximately 7000 Kbits, likely corresponding to the initial synchronization of the streams. After this peak, the bitrate fluctuates until around the 00:06 mark, after which it stabilizes between 3000 and 5000 Kbits for the remainder of the session. Despite some variability, the streams generally follow a consistent pattern, indicating stable data transmission rates.

- Quality: The lower section of the graph illustrates jitter, measured in milliseconds (ms), which reflects the variability in packet arrival times. At the 00:01 mark, there is a small peak in jitter (around 10 ms) that coincides with the bitrate peak, possibly indicating initial instability as the streams are established. Afterward, jitter remains low, fluctuating between 5 and 10 ms, with a notable peak to 25 ms at the 00:18 mark, possibly due to temporary network instability. Following this peak, jitter returns to stable levels for the rest of the session.

Overall, the benchmark shows that after the initial synchronization period, the streams maintain a relatively stable throughput and quality, with only minor fluctuations. This suggests that the Janus WebRTC server handles the 9-stream session effectively in our Test 1 case, ensuring consistent performance throughout.

5.3. Comparative Analysis

While existing studies provide valuable insights into specific aspects of UAV monitoring systems, none fully address the combined requirements of interoperability across multiple platforms, multi-UAV support for video streaming and flight data collection, UAV control, and multiclient session management with high-performance real-time data relaying as outlined in our problem statement. This gap highlights the need for a new solution that integrates these features into a cohesive system.

The framework described in [2] presents a “WebRTC-based flying monitoring system” that incorporates IoT sensors with a single UAV, enabling real-time communication using WebRTC for both video and data transmission. However, it does not support multiple video streams from multiple UAVs. Solutions, such as the one in [20], use WebRTC data channels for direct device-to-device communication, offering some flexibility. However, this implementation focuses solely on the Data Channel API without providing benchmarks or addressing integration with various platforms. Another example, [19], introduces a Flying Communication Server for sharing information, including video and text, among rescue teams via a WebRTC server, but does not present test results regarding video streaming latency.

For interoperability, a flexible solution is needed that can integrate with native apps, cloud-based systems, and web applications. Although commercial solutions like WOWZA [23] and VIDIZMO [24] provide cross-platform streaming capabilities, they are proprietary, and their performance metrics are not publicly available for evaluation.

Support for multiple UAVs is a critical requirement that is largely unmet by existing solutions. Many studies, such as [13,14], focus on single-UAV applications and lack the capability to handle multiple UAVs simultaneously. The system proposed in [17] manages multiple UAV swarm missions but relies on MAVLink for communication, which is unsuitable for low-latency, high-quality real-time video streaming, essential for our application. Additionally, support for multiple client sessions with different authorization levels is not comprehensively addressed in the existing literature, except in [15].

Our system, developed using the Janus WebRTC media server, shares methodological similarities with the approach presented in [15], which implements a low-latency, end-to-end video streaming system from a UAV using the Janus WebRTC server. However, our application significantly extends the capabilities of the previous work in several key areas. Firstly, our system supports simultaneous flight data collection and control alongside real-time video streaming from multiple UAVs, whereas the previous study focuses solely on a single-UAV use case and on relaying data to multiple users. These features address a critical need identified in the literature for multi-UAV monitoring systems. Secondly, we provide comprehensive test results covering latency, network bandwidth, jitter, video bitrates, and resolutions across different communication technologies. These evaluations offer valuable insights into the system’s performance under different configurations, which were not explored in the prior work. By examining the impact of different test scenarios and communication technologies, we contribute to a deeper understanding of how to optimize WebRTC-based systems built on the Janus WebRTC server. Furthermore, our paper discusses several WebRTC media servers and explains why Janus was chosen over alternatives like Mediasoup and Kurento. This comparative analysis is supported by performance studies, as referenced in [29,32]. By detailing the features and advantages of the Janus WebRTC server, we provide a broader perspective and contribute ideas for its application in diverse use cases, creating opportunities for future research in multi-UAV monitoring-control systems and real-time communication frameworks.

In conclusion, our research not only improves the capabilities of current systems but also offers experimental results and analysis that add valuable insights to this field. These advancements and findings expand the understanding and practical use of multi-UAV systems with the Janus WebRTC server, filling existing gaps in the literature and laying the groundwork for future developments.

6. Discussion

WebRTC supports communication through audio, video, and data channels [9], and the data channel is suitable for various purposes, such as streaming point cloud data [44] and remote control [45]. Since the data channel allows bidirectional communication [46], it can be used to control the UAV while simultaneously receiving flight data for specific use cases. Sending data from the GCS to the UAV has been tested for functionality but is not yet fully implemented and is still under development. The current GCS setup therefore provides real-time multi-UAV video streaming and flight data collection with low latency.

The proposed solution serves as a foundational framework for designing an advanced system that can efficiently manage extensive UAV fleets for comprehensive monitoring applications. By leveraging a WebRTC media server capable of handling hundreds of simultaneous point-to-point connections, this system can facilitate robust and scalable UAV operations. For example, each UAV group within a specific location can be considered a cluster, and multiple clusters can collectively cover expansive geographic areas. Within this framework, UAVs in each cluster are assigned to distinct video rooms of the Janus media server, which can be securely accessed by different user groups. This approach not only enhances operational efficiency but also ensures secure, real-time monitoring and control, making it well suited to large-scale UAV deployment.
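The cluster idea described above can be sketched as a small data structure. This is an illustration only: it assumes one room per cluster, and the `Cluster` type, the room identifiers, the UAV names, and the role-to-room assignments are all hypothetical. Only the role names (pilot, inspector, customer) come from the system description.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class Cluster:
    """A group of UAVs at one location, mapped to one media-server room."""
    name: str
    room_id: int
    uav_ids: List[str] = field(default_factory=list)
    allowed_roles: Set[str] = field(default_factory=set)


def rooms_for_role(clusters: List[Cluster], role: str) -> Dict[int, List[str]]:
    """Return {room_id: uav_ids} for every cluster the given role may access."""
    return {c.room_id: c.uav_ids for c in clusters if role in c.allowed_roles}


# Hypothetical deployment with two clusters.
clusters = [
    Cluster("site-north", 1001, ["uav-1", "uav-2"], {"pilot", "inspector"}),
    Cluster("site-south", 1002, ["uav-3"], {"pilot", "inspector", "customer"}),
]
print(rooms_for_role(clusters, "customer"))  # {1002: ['uav-3']}
```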

The proposed system design provides a prototype for video and data streaming from multiple UAVs to a web application. The system utilizes WebRTC to achieve low latency and high performance in video transmission, as well as hardware encoding on the UAV side to improve latency. In our WebRTC implementation, the video channel is used to stream hardware-encoded video, and the data channel is used to transfer randomly generated test flight data to test the implemented solution.
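Randomly generated test flight data of the kind mentioned above could be produced as follows. This is a minimal sketch: the message fields, their value ranges, and the JSON encoding are assumptions for illustration, since the paper does not specify the payload format sent over the data channel.

```python
import json
import random
import time


def random_flight_data(uav_id: str, rng: random.Random) -> str:
    """Build one JSON-encoded test flight data message (hypothetical fields)."""
    sample = {
        "uav_id": uav_id,
        "timestamp": time.time(),
        "lat": round(rng.uniform(-90.0, 90.0), 6),
        "lon": round(rng.uniform(-180.0, 180.0), 6),
        "alt_m": round(rng.uniform(0.0, 120.0), 1),
        "battery_pct": rng.randint(0, 100),
        "heading_deg": round(rng.uniform(0.0, 360.0), 1),
    }
    return json.dumps(sample)


# A seeded generator makes test runs repeatable.
rng = random.Random(42)
message = random_flight_data("uav-1", rng)
print(message)
```

On the receiving side, the web application would decode each message with a JSON parser before displaying it.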

One of the key accomplishments of this system is the capacity to manage multiple video streams and multiple users with different roles. This makes the system suitable for a wide range of UAV monitoring applications. However, the system has some limitations, including network-related issues, hardware compatibility, and processing power demands. Despite these limitations, incorporating a WebRTC media server in GCS development shows promising results for multi-UAV video streaming applications. As WebRTC, UAV, and communication technologies continue to advance, the system is expected to become even more effective and versatile. Future work could focus on the following areas:

- Improving network reliability and performance: The system’s success depends heavily on the stability and speed of the network connection. Tests under challenging network conditions should be investigated to ensure high-quality video transmission.

- Enhancing user interface and control options: Improving the user interface and offering more advanced control features will enhance the system’s usability. Furthermore, automating the connection process between the UAVs and the GCS will minimize the need for manual configuration.

- Adding features and capabilities: The system currently provides only real-time video and data streaming and control features. Expanding the system’s capabilities with features such as flight data analysis, mission status reporting, visualizing the current locations of the UAVs on a map, and advanced remote piloting would transform it into a complete GCS dashboard.

7. Conclusions

The measurements reported in [47] clearly show that latency is influenced not only by the communication technology but also by environmental factors and the quality of the connection. The test results indicate that, in some locations, latency was lower with radio (Wi-Fi) connectivity than with cellular connectivity, due to varying cellular connection quality. The specifics of communication technology, its equipment, and network topologies are beyond the scope of this research. Our primary objective was to develop a low-latency, real-time monitoring system that efficiently connects multiple UAVs to a web-based GCS. WebRTC was selected for its superior performance on resource-constrained platforms [1] and its advantages over traditional streaming protocols [8]. Several studies have analyzed the use of WebRTC in resource-constrained systems. For instance, a recent paper [48] compares the performance of web-based and native WebRTC applications and examines the application of the WebRTC framework in resource-constrained environments, such as mobile devices and companion boards like the Raspberry Pi. These findings suggest that WebRTC shows great promise for real-time monitoring involving a large number of peers.

This paper’s contribution lies in utilizing a WebRTC media server framework to develop a web-based GCS solution. The development and test results show that the Janus WebRTC media server can be effectively used for multi-UAV applications, enabling real-time video streaming and flight data collection. The GCS software solution presented in this work has demonstrated practicality and functionality. However, it is introduced as an initial version, leaving room for improvements to enhance its features and broaden its applications. For example, in applications with complex network topologies, where video quality may occasionally be compromised but the reliability of control commands is critical, one possible enhancement could be the use of a low-latency and reliable protocol for transmitting control commands and mission data to UAVs, replacing the current implementation that relies on a WebRTC data channel for this purpose.

To summarize, the proposed system enables efficient video streaming and data collection from multiple UAVs to web applications. By leveraging WebRTC technology and implementing hardware encoding on the UAV side, the system achieves low-latency and high-performance video transmission. A notable achievement of this system is its ability to handle multiple video streams and flight data collection while accommodating various users with distinct roles. This versatility makes it suitable for a wide range of UAV applications, especially multi-UAV inspection applications in which the UAVs and the mission must be monitored and the UAVs controlled.

Author Contributions

Conceptualization, F.K. and M.H.; methodology, F.K. and M.H.; software, M.H. and F.K.; validation, M.H.; formal analysis, F.K. and M.H.; investigation, test, and results, M.H.; writing—original draft preparation, F.K. and M.H.; writing—review and editing, F.K.; supervision, F.K. and W.H.; project administration, W.H. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding. The research work was supported by the Professorship of Computer Engineering, Chemnitz University of Technology.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

The original contributions presented in the study are included in the article; further inquiries can be directed to the corresponding author(s).

Conflicts of Interest

The authors declare no conflicts of interest.

Abbreviations

The following abbreviations are used in this manuscript:

| Abbreviation | Definition |
|---|---|
| APOLI | Automated Power Line Inspection |
| AREIOM | Adaptive Research Multicopter Platform |
| CSV | Comma Separated Values |
| CSI | Camera Serial Interface |
| CPU | Central Processing Unit |
| GCS | Ground Control Station |
| GPS | Global Positioning System |
| GPU | Graphics Processing Unit |
| HD | High Definition |
| H.264 | MPEG-4 AVC (Advanced Video Coding) |
| H.265 | High-Efficiency Video Coding |
| IMU | Inertial Measurement Unit |
| LTE | Long-Term Evolution |
| MAVLink | Micro Air Vehicle Link |
| MCU | Multipoint Control Unit |
| N.A. | Not Available |
| OTT | Over-the-top |
| QoE | Quality of Experience |
| QoS | Quality of Service |
| RTC | Real Time Communication |
| RTK-GPS | Real-time Kinematic Global Positioning System |
| RTP | Real-time Transport Protocol |
| SD | Standard Definition |
| SFU | Selective Forwarding Unit |
| SQL | Structured Query Language |
| TCP | Transmission Control Protocol |
| UAVs | Unmanned Aerial Vehicles |
| UVC | USB Video Device Class |
| V4L2 | Video for Linux 2 |
| VPN | Virtual Private Network |
| VR | Virtual Reality |
| WebRTC | Web Real-Time Communication |
| WoT | Web of Things |

References

- Bacco, M.; Catena, M.; De Cola, T.; Gotta, A.; Tonellotto, N. Performance analysis of WebRTC-based video streaming over power constrained platforms. In Proceedings of the 2018 IEEE Global Communications Conference (GLOBECOM), Abu Dhabi, United Arab Emirates, 9–13 December 2018; pp. 1–7. [Google Scholar]

- Chodorek, A.; Chodorek, R.R.; Sitek, P. UAV-based and WebRTC-based open universal framework to monitor urban and industrial areas. Sensors 2021, 21, 4061. [Google Scholar] [CrossRef] [PubMed]

- Lagkas, T.; Argyriou, V.; Bibi, S.; Sarigiannidis, P. UAV IoT framework views and challenges: Towards protecting drones as “Things”. Sensors 2018, 18, 4015. [Google Scholar] [CrossRef] [PubMed]

- Tudevdagva, U.; Battseren, B.; Hardt, W.; Blokzyl, S.; Lippmann, M. Unmanned Aerial Vehicle-Based Fully Automated Inspection System for High Voltage Transmission Line. In Proceedings of the 12th International Forum on Strategic Technology IEEE Conference, IFOST2017, Ulsan, Republic of Korea, 31 May–2 June 2017; pp. 300–305. [Google Scholar]

- Automated Power Line Inspection. Available online: https://www.tu-chemnitz.de/informatik/ce/projects/projects.php.en#apoli (accessed on 14 September 2024).

- AREIOM: Adaptive Research Multicopter Platform. Available online: https://www.tu-chemnitz.de/informatik/ce/research/areiom-adm.php.en (accessed on 14 September 2024).

- Haohan, H.; Hangfan, Z.; Junhai, L.; Kuanrong, L.; Biao, P. Automatic and intelligent line inspection using UAV based on beidou navigation system. In Proceedings of the 2019 6th International Conference on Information Science and Control Engineering (ICISCE), Shanghai, China, 20–22 December 2019; pp. 1004–1008. [Google Scholar]

- Santos-González, I.; Rivero-García, A.; Molina-Gil, J.; Caballero-Gil, P. Implementation and Analysis of Real-Time Streaming Protocols. Sensors 2017, 17, 846. [Google Scholar] [CrossRef]

- Holland, J.; Begen, A.; Dawkins, S. Operational Considerations for Streaming Media; RFC 9317; RFC Editor: Marina del Rey, CA, USA, 2022. [Google Scholar]

- WebRTC. Available online: https://webrtc.org/ (accessed on 14 September 2024).

- Battseren, B. Software Architecture for Real-Time Image Analysis in Autonomous MAV Missions. Ph.D. Thesis, Chemnitz University of Technology, Chemnitz, Germany, 2024. [Google Scholar]

- Gao, C.; Wang, X.; Chen, X.; Chen, B.M. A hierarchical multi-UAV cooperative framework for infrastructure inspection and reconstruction. Control Theory Technol. 2024, 22, 394–405. [Google Scholar] [CrossRef]

- Liao, Y.H.; Juang, J.G. Real-time UAV trash monitoring system. Appl. Sci. 2022, 12, 1838. [Google Scholar] [CrossRef]

- Chodorek, A.; Chodorek, R.R.; Yastrebov, A. The prototype monitoring system for pollution sensing and online visualization with the use of a UAV and a WebRTC-based platform. Sensors 2022, 22, 1578. [Google Scholar] [CrossRef] [PubMed]

- Sacoto-Martins, R.; Madeira, J.; Matos-Carvalho, J.P.; Azevedo, F.; Campos, L.M. Multi-purpose Low Latency Streaming Using Unmanned Aerial Vehicles. In Proceedings of the 2020 12th International Symposium on Communication Systems, Networks and Digital Signal Processing (CSNDSP), Porto, Portugal, 20–22 July 2020; pp. 1–6. [Google Scholar] [CrossRef]

- Gueye, K.; DEGBOE, B.M.; Samuel, O.; Ngartabé, K.T. Proposition of health care system driven by IoT and KMS for remote monitoring of patients in rural areas: Pediatric case. In Proceedings of the 2019 21st International Conference on Advanced Communication Technology (ICACT), PyeongChang, Republic of Korea, 17–20 February 2019; pp. 676–680. [Google Scholar]

- Wu, C.; Tu, S.; Tu, S.; Wang, L.; Chen, W. Realization of Remote Monitoring and Navigation System for Multiple UAV Swarm Missions: Using 4G/WiFi-Mesh Communications and RTK GPS Positioning Technology. In Proceedings of the 2022 International Automatic Control Conference (CACS), Kaohsiung, Taiwan, 3–6 November 2022; pp. 1–6. [Google Scholar]

- Gu, Q.; Michanowicz, D.R.; Jia, C. Developing a modular unmanned aerial vehicle (UAV) platform for air pollution profiling. Sensors 2018, 18, 4363. [Google Scholar] [CrossRef] [PubMed]

- Kobayashi, T.; Matsuoka, H.; Betsumiya, S. Flying communication server in case of a largescale disaster. In Proceedings of the 2016 IEEE 40th Annual Computer Software and Applications Conference (COMPSAC), Atlanta, GA, USA, 10–14 June 2016; Volume 2, pp. 571–576. [Google Scholar]

- Janak, J.; Schulzrinne, H. Framework for rapid prototyping of distributed IoT applications powered by WebRTC. In Proceedings of the 2016 Principles, Systems and Applications of IP Telecommunications (IPTComm), Chicago, IL, USA, 19 October 2016; pp. 1–7. [Google Scholar]

- GitHub—Jeffbass/Imagezmq: A Set of Python Classes that Transport OpenCV Images from one Computer to Another Using PyZMQ Messaging.—github.com. Available online: https://github.com/jeffbass/imagezmq (accessed on 14 September 2024).

- Image Transmission Protocol; MAVLink Developer Guide. Available online: https://mavlink.io/en/services/image_transmission.html (accessed on 14 September 2024).

- WOWZA—The Embedded Video Platform for Solution Builders. Available online: https://www.wowza.com/ (accessed on 14 September 2024).

- VIDIZMO—Low Latency Live Streaming. Available online: https://www.vidizmo.com/low-latency-live-video-streaming/ (accessed on 14 September 2024).

- Application|Drone-Based Video Streaming with UgCS ENTERPRISE. Available online: https://www.ugcs.com/video-streaming-with-ugcs (accessed on 14 September 2024).

- nanoStream Webcaster. Available online: https://www.nanocosmos.de/v6/webrtc (accessed on 14 September 2024).

- Liveswitch SERVER. Available online: https://developer.liveswitch.io/liveswitch-server/index.html (accessed on 14 September 2024).

- Ultra Low Latency WebRTC Live Streaming Media Server—Ant Media. Available online: https://antmedia.io/ (accessed on 14 September 2024).

- André, E.; Le Breton, N.; Lemesle, A.; Roux, L.; Gouaillard, A. Comparative study of WebRTC open source SFUs for video conferencing. In Proceedings of the 2018 Principles, Systems and Applications of IP Telecommunications (IPTComm), Chicago, IL, USA, 16–18 October 2018; pp. 1–8.

- Janus—General Purpose WebRTC Server. Available online: https://janus.conf.meetecho.com/docs/index.html (accessed on 14 September 2024).

- Mediasoup. Available online: https://mediasoup.org/ (accessed on 14 September 2024).

- Amirante, A.; Castaldi, T.; Miniero, L.; Romano, S.P. Performance analysis of the Janus WebRTC gateway. In Proceedings of the 1st Workshop on All-Web Real-Time Systems (AWeS ’15), Bordeaux, France, 21 April 2015; pp. 1–7.

- Amirante, A.; Castaldi, T.; Miniero, L.; Romano, S.P. Janus: A general purpose WebRTC gateway. In Proceedings of the Conference on Principles, Systems and Applications of IP Telecommunications (IPTComm ’14), Chicago, IL, USA, 1–2 October 2014; pp. 1–8.

- GStreamer: Open source multimedia framework. Available online: https://gstreamer.freedesktop.org/ (accessed on 14 September 2024).

- IIT-RTC 2017 Qt WebRTC Tutorial (Qt Janus Client). 2017. Available online: https://www.slideshare.net/slideshow/iitrtc-2017-qt-webrtc-tutorial-qt-janus-client/86890694 (accessed on 14 September 2024).

- Jansen, B.; Goodwin, T.; Gupta, V.; Kuipers, F.; Zussman, G. Performance evaluation of WebRTC-based video conferencing. ACM SIGMETRICS Perform. Eval. Rev. 2018, 45, 56–68.

- Kostuch, A.; Gierłowski, K.; Wozniak, J. Performance analysis of multicast video streaming in IEEE 802.11 b/g/n testbed environment. In Proceedings of the Wireless and Mobile Networking: Second IFIP WG 6.8 Joint Conference, WMNC 2009, Gdańsk, Poland, 9–11 September 2009; Springer: Berlin/Heidelberg, Germany, 2009; pp. 92–105.

- Baltaci, A.; Cech, H.; Mohan, N.; Geyer, F.; Bajpai, V.; Ott, J.; Schupke, D. Analyzing real-time video delivery over cellular networks for remote piloting aerial vehicles. In Proceedings of the 22nd ACM Internet Measurement Conference, Nice, France, 25–27 October 2022; pp. 98–112.

- Wireshark · Go Deep. Available online: https://www.wireshark.org/ (accessed on 14 September 2024).

- NetworkManager—Linux network configuration tool suite. Available online: https://networkmanager.dev/ (accessed on 22 September 2024).

- iftop: Display Bandwidth Usage on an Interface. Available online: https://pdw.ex-parrot.com/iftop/ (accessed on 22 September 2024).

- vnStat—A Network Traffic Monitor for Linux and BSD. Available online: https://humdi.net/vnstat/ (accessed on 22 September 2024).

- testRTC Guide. Available online: https://support.testrtc.com/hc/en-us/categories/8260858196239-testRTC-Guide (accessed on 14 September 2024).

- Lee, Y.; Sim, J.; Kim, D.H.; You, D. A Comparison of Serialization Formats for Point Cloud Live Video Streaming over WebRTC. In Proceedings of the 2024 IEEE International Conference on Consumer Electronics (ICCE), Las Vegas, NV, USA, 6–8 January 2024; pp. 1–3.

- Welcome to RTCBot’s Documentation! Available online: https://rtcbot.readthedocs.io/en/latest/ (accessed on 19 September 2024).

- WebRTC Tutorial—Real-Time Data Transmitting with WebRTC. Available online: https://getstream.io/resources/projects/webrtc/basics/rtcdatachannel (accessed on 19 September 2024).

- Green, M.; Mann, D.D.; Hossain, E. Measurement of latency during real-time wireless video transmission for remote supervision of autonomous agricultural machines. Comput. Electron. Agric. 2021, 190, 106475.

- Diallo, B.; Ouamri, A.; Keche, M. A Hybrid Approach for WebRTC Video Streaming on Resource-Constrained Devices. Electronics 2023, 12, 3775.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).