Method for Concrete Structure Analysis by Microscopy of Hardened Cement Paste and Crack Segmentation Using a Convolutional Neural Network

Abstract

1. Introduction

- Creating an original set of images of hardened cement paste;

- Increasing the number of images to improve the generalization ability of the model by applying an original augmentation algorithm [45];

- Optimizing the parameters of an intelligent model based on a U-Net convolutional neural network;

- Calculating the area of segmented defects.

- Preparing a database of images of hardened cement paste using laboratory equipment;

- Justifying and describing the chosen CNN architecture;

- Performing augmentation to expand the training dataset;

- Implementing, optimizing, debugging and testing the algorithm based on the U-Net CNN architecture;

- Determining the quality metrics of the implemented model;

- Calculating the area of a segmented defect, taking into account the parameters of the laboratory equipment;

- Establishing the relationship between the mix formulation, the proportion of defects in the form of cracks in the microstructure of the samples and their compressive strength.

2. Materials and Methods

2.1. Characterization of Laboratory Samples

(1) Control: cement and water (25% by weight of cement);

(2) Control + GF: cement, water (26% by weight of cement) and glass fiber (GF, 3% by weight of cement);

(3) Control + GF + MS: cement, water (28% by weight of cement), microsilica (MS, 10% by weight of cement) and glass fiber (3% by weight of cement).
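All dosages above are expressed as a percentage of cement mass, so the water-to-cement ratios of the three mixes are 0.25, 0.26 and 0.28. The short sketch below converts the listed percentages into batch masses for a chosen amount of cement. The names `MIXES` and `batch_masses` and the 1000 g batch used in the example are illustrative assumptions, not part of the authors' procedure.

```python
# Dosages from the mix list above, in % by weight of cement (hypothetical encoding).
MIXES = {
    "Control": {"water": 25.0},
    "Control + GF": {"water": 26.0, "glass fiber": 3.0},
    "Control + GF + MS": {"water": 28.0, "microsilica": 10.0, "glass fiber": 3.0},
}


def batch_masses(mix: str, cement_g: float) -> dict:
    """Return the mass in grams of each component for a batch containing `cement_g` g of cement."""
    masses = {"cement": cement_g}
    for component, percent_of_cement in MIXES[mix].items():
        masses[component] = cement_g * percent_of_cement / 100.0
    return masses


if __name__ == "__main__":
    for name in MIXES:
        print(name, batch_masses(name, 1000.0))
```

For a 1000 g batch of the Control + GF + MS mix this gives 280 g of water, 100 g of microsilica and 30 g of glass fiber.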

2.2. Development of an Intelligent Algorithm Based on a Convolutional Neural Network

(1) Model 1 is a U-Net CNN trained with probabilistic augmentation: for each training batch sample, the following transformations are applied: random cropping; rotation by 90°, vertical flip, horizontal flip or rotation by a random angle, with a probability of 0.75; and additive Gaussian noise sampled from a normal distribution, with a probability of 0.7. No augmentation is applied to the validation and test sets; preprocessing there is limited to padding where necessary. This approach reduces the risk of overfitting and the computational resources required for training.

(2) Model 2 is a U-Net CNN trained on a set of 1000 images created with the authors' augmentation program [45] and split in the ratio 70/20/10 into training, validation and test sets.
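The probabilistic augmentation used for Model 1 can be sketched with NumPy as follows. This is a simplified illustration, not the authors' code: it assumes single-channel images scaled to [0, 1], images at least `crop_size` pixels on each side, a noise standard deviation of 0.05 and one geometric transform chosen uniformly when the 0.75 probability fires. Rotation by an arbitrary angle is omitted because it needs an interpolation library; only the 90° rotation and the two flips are shown. The key point is that every geometric transform is applied identically to the image and its crack mask, while noise touches the image only.

```python
import numpy as np


def augment(image: np.ndarray, mask: np.ndarray, rng: np.random.Generator,
            crop_size: int = 256, p_geom: float = 0.75,
            p_noise: float = 0.7, noise_std: float = 0.05) -> tuple:
    """Randomly augment one (image, mask) pair; parameter values are assumptions."""
    h, w = image.shape[:2]
    # Random crop: the same window is cut from the image and from the mask.
    top = rng.integers(0, h - crop_size + 1)
    left = rng.integers(0, w - crop_size + 1)
    image = image[top:top + crop_size, left:left + crop_size]
    mask = mask[top:top + crop_size, left:left + crop_size]

    # With probability p_geom, apply one geometric transform to both arrays.
    if rng.random() < p_geom:
        choice = rng.integers(3)
        if choice == 0:
            image, mask = np.rot90(image), np.rot90(mask)    # rotate by 90 degrees
        elif choice == 1:
            image, mask = np.flipud(image), np.flipud(mask)  # vertical flip
        else:
            image, mask = np.fliplr(image), np.fliplr(mask)  # horizontal flip

    # With probability p_noise, add Gaussian noise to the image only.
    if rng.random() < p_noise:
        image = image + rng.normal(0.0, noise_std, size=image.shape)
        image = np.clip(image, 0.0, 1.0)
    return image, mask
```

In a training loop this function would be called for every sample of every batch, so each epoch sees a different random variant of the same 200 training images; validation and test images bypass it.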

3. Results and Discussion

3.1. Quality Metrics for Crack Segmentation in Hardened Cement Paste
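The metrics reported for both models (precision, recall, F1, IoU and Dice, averaged over the test set) can be computed pixel-wise from a predicted and a ground-truth binary mask. The sketch below uses the standard definitions in terms of true positives (TP), false positives (FP) and false negatives (FN); the function names and the small `eps` guard against division by zero are illustrative choices. With these pixel-wise definitions F1 and Dice coincide for a single image; the paper does not detail how its averaged values were computed, so the small gaps between its reported F1 and Dice values are not reproduced by this sketch.

```python
import numpy as np


def segmentation_metrics(pred: np.ndarray, target: np.ndarray, eps: float = 1e-7) -> dict:
    """Pixel-wise metrics for one pair of binary masks (nonzero = crack)."""
    pred = pred.astype(bool)
    target = target.astype(bool)
    tp = np.logical_and(pred, target).sum()    # crack pixels correctly found
    fp = np.logical_and(pred, ~target).sum()   # background marked as crack
    fn = np.logical_and(~pred, target).sum()   # crack pixels missed
    precision = tp / (tp + fp + eps)
    recall = tp / (tp + fn + eps)
    return {
        "precision": precision,
        "recall": recall,
        "f1": 2 * precision * recall / (precision + recall + eps),
        "iou": tp / (tp + fp + fn + eps),
        "dice": 2 * tp / (2 * tp + fp + fn + eps),
    }


def mean_metrics(pairs) -> dict:
    """Average the per-image metrics over an iterable of (pred, target) mask pairs."""
    per_image = [segmentation_metrics(p, t) for p, t in pairs]
    return {k: float(np.mean([m[k] for m in per_image])) for k in per_image[0]}
```

Note that with the `eps` guard an image with no cracks and no predicted cracks scores 0 on every metric; how such images were handled in the original evaluation is not stated.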

3.2. Calculating the Area of a Segmented Region
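Once a crack mask has been segmented, its area follows from counting crack pixels and scaling by the physical size of one pixel, which depends on the microscope calibration. The sketch below assumes a hypothetical calibration parameter `um_per_pixel` (pixel side length in micrometres) and a 0.5 threshold for turning the network's probability map into a binary mask; neither value comes from the paper. It returns both the absolute area and the crack area as a percentage of the image, the quantity reported in the crack-area table.

```python
import numpy as np


def binarize(prob_map: np.ndarray, threshold: float = 0.5) -> np.ndarray:
    """Convert a per-pixel crack probability map into a 0/1 mask (threshold is an assumption)."""
    return (prob_map >= threshold).astype(np.uint8)


def crack_area(mask: np.ndarray, um_per_pixel: float) -> tuple:
    """Return (crack area in mm^2, crack area as % of the image) for a binary mask.

    `um_per_pixel` is a hypothetical calibration value: the side of one pixel in micrometres.
    """
    crack_pixels = int(np.count_nonzero(mask))
    pixel_area_mm2 = (um_per_pixel / 1000.0) ** 2   # convert um to mm, then square
    area_mm2 = crack_pixels * pixel_area_mm2
    area_percent = 100.0 * crack_pixels / mask.size
    return area_mm2, area_percent
```

For example, a 100 × 100 pixel mask with 830 crack pixels at an assumed 2 µm per pixel gives 0.00332 mm² and a crack area of 8.3%.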

(1) Use of additional devices: images obtained from sensors capable of detecting cracks or changes in hardened cement paste, cement-sand mortar and concrete can be used. For example, ultrasonic or laser sensors can detect imperfections in a material that are not visible on the surface.

(2) Multimodal approach: thermal and infrared images can be used as a dataset for further processing by a neural network.

(3) Evaluation by other characteristics: other data, such as thermal conductivity, mechanical characteristics or acoustic signals emitted from the surface of the material, can be plotted as graphs and then processed by a CNN.

4. Conclusions

- (1)

- The proposed intelligent algorithms, which are based on the U-Net CNN, allow segmentation of areas containing a defect, a crack, with an accuracy level required for the researcher of 60%.

- (2)

- Evaluation of the quality of the results of the work of model 1 and model 2 suggests the following: both models can be used to solve this problem; however, model 2 showed slightly better results. The difference in performance between models is 0.05 in favor of model 2 in terms of recall, Dice coefficient and IoU are also 0.02 higher in model 2 and F1 is better by 0.01. Although the difference in metrics is not significant, it is worth noting that model 1 is able to detect both significant damage and small cracks, which is an important aspect for this study.

- (3)

- According to the results of the study, it is possible not only to segment the areas of cracks but also to calculate the area occupied by damage.

- (4)

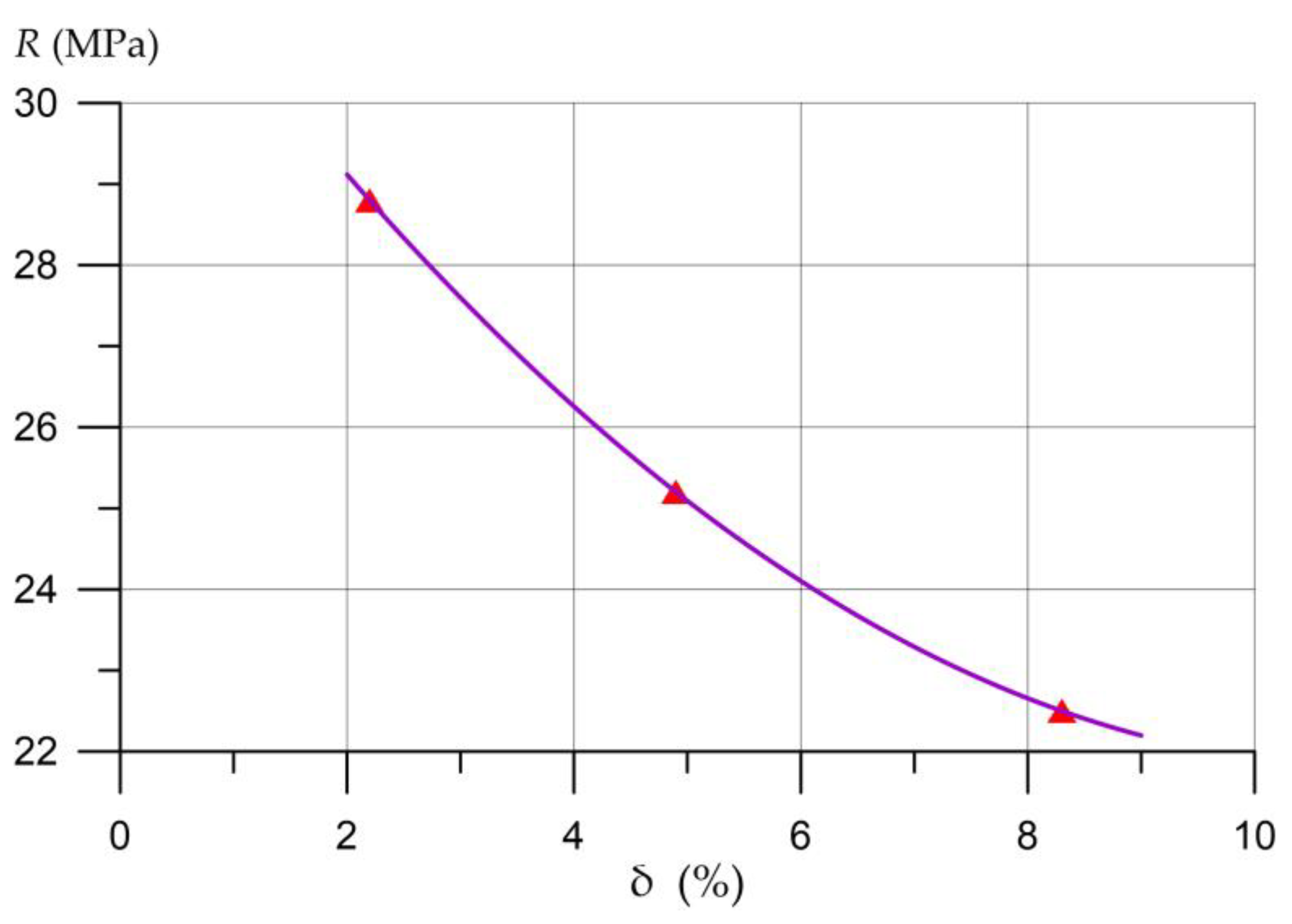

- The relationship between the formulation, the proportion of defects in the form of cracks in the microstructure of hardened cement paste samples and their compressive strength has been established. A decrease in the proportion occupied by cracks in photographs of the microstructure of the samples is characterized by an increase in compressive strength and is directly related to the type of additive in the composite. The use of crack segmentation in the microstructure of a hardened cement paste using a convolutional neural network makes it possible to automate the process of crack detection and calculation of their proportion in the studied samples of cement composites and can be used to assess the state of concrete.

5. Patents

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. Khan, M.A.-M.; Kee, S.-H.; Pathan, A.-S.K.; Nahid, A.-A. Image Processing Techniques for Concrete Crack Detection: A Scientometrics Literature Review. Remote Sens. 2023, 15, 2400.
2. Dorafshan, S.; Thomas, R.J.; Maguire, M. Comparison of deep convolutional neural networks and edge detectors for image-based crack detection in concrete. Constr. Build. Mater. 2018, 186, 1031–1045.
3. Lagaros, N.D.; Plevris, V. Artificial Intelligence (AI) Applied in Civil Engineering. Appl. Sci. 2022, 12, 7595.
4. Lee, J.; Lee, S. Construction Site Safety Management: A Computer Vision and Deep Learning Approach. Sensors 2023, 23, 944.
5. Halder, S.; Afsari, K.; Serdakowski, J.; DeVito, S.; Ensafi, M.; Thabet, W. Real-Time and Remote Construction Progress Monitoring with a Quadruped Robot Using Augmented Reality. Buildings 2022, 12, 2027.
6. Fang, W.; Love, P.E.; Ding, L.; Xu, S.; Kong, T.; Li, H. Computer Vision and Deep Learning to Manage Safety in Construction: Matching Images of Unsafe Behavior and Semantic Rules. IEEE Trans. Eng. Manag. 2021, 9527760, 1–13.
7. Ercan, M.F.; Wang, R.B. Computer Vision-Based Inspection System for Worker Training in Build and Construction Industry. Computers 2022, 11, 100.
8. Harichandran, A.; Raphael, B.; Mukherjee, A. Equipment activity recognition and early fault detection in automated construction through a hybrid machine learning framework. Comput. Aided Civ. Infrastruct. Eng. 2023, 38, 253–268.
9. Lu, X.; Fei, J. Velocity tracking control of wheeled mobile robots by iterative learning control. Int. J. Adv. Robot. Syst. 2016, 13, 1.
10. Zhou, X.; Lei, X. Fault Diagnosis Method of the Construction Machinery Hydraulic System Based on Artificial Intelligence Dynamic Monitoring. Mob. Inf. Syst. 2021, 2021, 1093960.
11. Stel’makh, S.A.; Shcherban’, E.M.; Beskopylny, A.N.; Mailyan, L.R.; Meskhi, B.; Razveeva, I.; Kozhakin, A.; Beskopylny, N. Prediction of Mechanical Properties of Highly Functional Lightweight Fiber-Reinforced Concrete Based on Deep Neural Network and Ensemble Regression Trees Methods. Materials 2022, 15, 6740.
12. Beskopylny, A.N.; Stel’makh, S.A.; Shcherban’, E.M.; Mailyan, L.R.; Meskhi, B.; Razveeva, I.; Chernil’nik, A.; Beskopylny, N. Concrete Strength Prediction Using Machine Learning Methods CatBoost, k-Nearest Neighbors, Support Vector Regression. Appl. Sci. 2022, 12, 10864.
13. Chepurnenko, A.S.; Kondratieva, T.N.; Deberdeev, T.R.; Akopyan, V.F.; Avakov, A.A.; Chepurnenko, V.S. Prediction of Rheological Parameters of Polymers, Using Gradient Boosting Algorithm Catboost. Vse Mater. Entsiklopedicheskii Sprav. 2023, 6, 21–29.
14. Shi, M.; Shen, W. Automatic Modeling for Concrete Compressive Strength Prediction Using Auto-Sklearn. Buildings 2022, 12, 1406.
15. Matić, B.; Marinković, M.; Jovanović, S.; Sremac, S.; Stević, Ž. Intelligent Novel IMF D-SWARA—Rough MARCOS Algorithm for Selection Construction Machinery for Sustainable Construction of Road Infrastructure. Buildings 2022, 12, 1059.
16. Beskopylny, A.N.; Mailyan, L.R.; Stel’makh, S.A.; Shcherban’, E.M.; Razveeva, I.F.; Beskopylny, N.A.; Dotsenko, N.A.; El’shaeva, D.M. The Program for Determining the Mechanical Properties of Highly Functional Lightweight Fiber-Reinforced Concrete Based on Artificial Intelligence Methods. Russian Federation Computer Program 2022668999. 14 October 2022. Available online: https://www.fips.ru/registers-doc-view/fips_servlet?DB=EVM&DocNumber=2022668999&TypeFile=html (accessed on 14 June 2023).
17. Rahman, A.U.; Saeed, M.; Mohammed, M.A.; Majumdar, A.; Thinnukool, O. Supplier Selection through Multicriteria Decision-Making Algorithmic Approach Based on Rough Approximation of Fuzzy Hypersoft Sets for Construction Project. Buildings 2022, 12, 940.
18. Anthony, B., Jr. A case-based reasoning recommender system for sustainable smart city development. AI Soc. 2021, 36, 159–183.
19. Zhao, S.; Kang, F.; Li, J. Non-Contact Crack Visual Measurement System Combining Improved U-Net Algorithm and Canny Edge Detection Method with Laser Rangefinder and Camera. Appl. Sci. 2022, 12, 10651.
20. Maslan, J.; Cicmanec, L. A System for the Automatic Detection and Evaluation of the Runway Surface Cracks Obtained by Unmanned Aerial Vehicle Imagery Using Deep Convolutional Neural Networks. Appl. Sci. 2023, 13, 6000.
21. Nogueira Diniz, J.d.C.; Paiva, A.C.d.; Junior, G.B.; de Almeida, J.D.S.; Silva, A.C.; Cunha, A.M.T.d.S.; Cunha, S.C.A.P.d.S. A Method for Detecting Pathologies in Concrete Structures Using Deep Neural Networks. Appl. Sci. 2023, 13, 5763.
22. Kim, J.-Y.; Park, M.-W.; Huynh, N.T.; Shim, C.; Park, J.-W. Detection and Length Measurement of Cracks Captured in Low Definitions Using Convolutional Neural Networks. Sensors 2023, 23, 3990.
23. Shokri, P.; Shahbazi, M.; Nielsen, J. Semantic Segmentation and 3D Reconstruction of Concrete Cracks. Remote Sens. 2022, 14, 5793.
24. Lee, T.; Kim, J.-H.; Lee, S.-J.; Ryu, S.-K.; Joo, B.-C. Improvement of Concrete Crack Segmentation Performance Using Stacking Ensemble Learning. Appl. Sci. 2023, 13, 2367.
25. An, Q.; Chen, X.; Wang, H.; Yang, H.; Yang, Y.; Huang, W.; Wang, L. Segmentation of Concrete Cracks by Using Fractal Dimension and UHK-Net. Fractal Fract. 2022, 6, 95.
26. Yu, C.; Du, J.; Li, M.; Li, Y.; Li, W. An improved U-Net model for concrete crack detection. Mach. Learn. Appl. 2022, 10, 100436.
27. Liu, Z.; Cao, Y.; Wang, Y.; Wang, W. Computer vision-based concrete crack detection using U-net fully convolutional networks. Autom. Constr. 2019, 104, 129–139.
28. Wang, C.; Han, Z.; Wang, Y.; Wang, C.; Wang, J.; Chen, S.; Hu, S. Fine Characterization Method of Concrete Internal Cracks Based on Borehole Optical Imaging. Appl. Sci. 2022, 12, 9080.
29. Ding, W.; Zhu, L.; Li, H.; Lei, M.; Yang, F.; Qin, J.; Li, A. Relationship between Concrete Hole Shape and Meso-Crack Evolution Based on Stereology Theory and CT Scan under Compression. Materials 2022, 15, 5640.
30. Zhou, S.; Pan, Y.; Huang, X.; Yang, D.; Ding, Y.; Duan, R. Crack Texture Feature Identification of Fiber Reinforced Concrete Based on Deep Learning. Materials 2022, 15, 3940.
31. Arbaoui, A.; Ouahabi, A.; Jacques, S.; Hamiane, M. Concrete Cracks Detection and Monitoring Using Deep Learning-Based Multiresolution Analysis. Electronics 2021, 10, 1772.
32. Beskopylny, A.; Lyapin, A.; Anysz, H.; Meskhi, B.; Veremeenko, A.; Mozgovoy, A. Artificial Neural Networks in Classification of Steel Grades Based on Non-Destructive Tests. Materials 2020, 13, 2445.
33. Climent, M.-Á.; Miró, M.; Eiras, J.-N.; Poveda, P.; de Vera, G.; Segovia, E.-G.; Ramis, J. Early Detection of Corrosion-Induced Concrete Micro-cracking by Using Nonlinear Ultrasonic Techniques: Possible Influence of Mass Transport Processes. Corros. Mater. Degrad. 2022, 3, 235–257.
34. Chen, B.; Zhang, H.; Wang, G.; Huo, J.; Li, Y.; Li, L. Automatic concrete infrastructure crack semantic segmentation using deep learning. Autom. Constr. 2023, 152, 104950.
35. Bae, H.; An, Y.-K. Computer vision-based statistical crack quantification for concrete structures. Measurement 2023, 211, 112632.
36. Qu, S.; Hilloulin, B.; Saliba, J.; Sbartaï, M.; Abraham, O.; Tournat, V. Imaging concrete cracks using Nonlinear Coda Wave Interferometry (INCWI). Constr. Build. Mater. 2023, 391, 131772.
37. Mir, B.A.; Sasaki, T.; Nakao, K.; Nagae, K.; Nakada, K.; Mitani, M.; Tsukada, T.; Osada, N.; Terabayashi, K.; Jindai, M. Machine learning-based evaluation of the damage caused by cracks on concrete structures. Precis. Eng. 2022, 76, 314–327.
38. Gehri, N.; Mata-Falcón, J.; Kaufmann, W. Refined extraction of crack characteristics in large-scale concrete experiments based on digital image correlation. Eng. Struct. 2022, 251, 113486.
39. Golding, V.P.; Gharineiat, Z.; Munawar, H.S.; Ullah, F. Crack Detection in Concrete Structures Using Deep Learning. Sustainability 2022, 14, 8117.
40. Islam, M.M.M.; Kim, J.-M. Vision-Based Autonomous Crack Detection of Concrete Structures Using a Fully Convolutional Encoder–Decoder Network. Sensors 2019, 19, 4251.
41. Noori Hoshyar, A.; Rashidi, M.; Liyanapathirana, R.; Samali, B. Algorithm Development for the Non-Destructive Testing of Structural Damage. Appl. Sci. 2019, 9, 2810.
42. Han, X.; Zhao, Z.; Chen, L.; Hu, X.; Tian, Y.; Zhai, C.; Wang, L.; Huang, X. Structural damage-causing concrete cracking detection based on a deep-learning method. Constr. Build. Mater. 2022, 337, 127562.
43. Xiang, C.; Wang, W.; Deng, L.; Shi, P.; Kong, X. Crack detection algorithm for concrete structures based on super-resolution reconstruction and segmentation network. Autom. Constr. 2022, 140, 104346.
44. Park, S.E.; Eem, S.-H.; Jeon, H. Concrete crack detection and quantification using deep learning and structured light. Constr. Build. Mater. 2020, 252, 119096.
45. Beskopylny, A.N.; Stel’makh, S.A.; Shcherban’, E.M.; Razveeva, I.F.; Kozhakin, A.N.; Beskopylny, N.A.; Onore, G.S. Image Augmentation Program. Russian Federation Computer Program 2022685192. 21 December 2022. Available online: https://www.fips.ru/registers-doc-view/fips_servlet?DB=EVM&DocNumber=2022685192&TypeFile=html (accessed on 14 June 2023).
46. de León, G.; Fiorentini, N.; Leandri, P.; Losa, M. A New Region-Based Minimal Path Selection Algorithm for Crack Detection and Ground Truth Labeling Exploiting Gabor Filters. Remote Sens. 2023, 15, 2722.
47. GOST R 58894-2020; Silica Fume for Concretes and Mortars. Specifications. Standartinform: Moscow, Russia, 2021. Available online: https://docs.cntd.ru/document/1200173805 (accessed on 16 June 2023).
48. BS EN 13263-1:2005+A1:2009; Silica Fume for Concrete—Part 1: Definitions, Requirements and Conformity Criteria. European Standards: Plzen, Czech Republic, 2010. Available online: https://www.en-standard.eu/bs-en-13263-1-2005-a1-2009-silica-fume-for-concrete-definitions-requirements-and-conformity-criteria/ (accessed on 16 June 2023).
49. BS EN 934-2:2009+A1:2012; Admixtures for Concrete, Mortar and Grout—Part 2: Concrete Admixtures—Definitions, Requirements, Conformity, Marking and Labeling. European Standards: Plzen, Czech Republic, 2012. Available online: https://www.en-standard.eu/bs-en-934-2-2009-a1-2012-admixtures-for-concrete-mortar-and-grout-concrete-admixtures-definitions-requirements-conformity-marking-and-labelling/ (accessed on 16 June 2023).
50. ASTM C 1240-20; Standard Specification for Silica Fume Used in Cementitious Mixtures. ASTM International: West Conshohocken, PA, USA, 2020. Available online: https://www.astm.org/c1240-20.html (accessed on 16 June 2023).
51. MSZ EN 196-3:2017; Methods of Testing Cement—Part 3: Determination of Setting Times and Soundness. Slovenian Institute for Standardization: Ljubljana, Slovenia, 2017. Available online: https://docs.cntd.ru/document/554094953 (accessed on 28 July 2023).
52. Shcherban’, E.M.; Stel’makh, S.A.; Beskopylny, A.; Mailyan, L.R.; Meskhi, B.; Varavka, V. Nanomodification of Lightweight Fiber Reinforced Concrete with Micro Silica and Its Influence on the Constructive Quality Coefficient. Materials 2021, 14, 7347.
53. He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. arXiv 2015, arXiv:1512.03385.
54. Beskopylny, A.N.; Shcherban’, E.M.; Stel’makh, S.A.; Mailyan, L.R.; Meskhi, B.; Razveeva, I.; Kozhakin, A.; El’shaeva, D.; Beskopylny, N.; Onore, G. Detecting Cracks in Aerated Concrete Samples Using a Convolutional Neural Network. Appl. Sci. 2023, 13, 1904.
55. Beskopylny, A.N.; Shcherban’, E.M.; Stel’makh, S.A.; Mailyan, L.R.; Meskhi, B.; Razveeva, I.; Kozhakin, A.; El’shaeva, D.; Beskopylny, N.; Onore, G. Discovery and Classification of Defects on Facing Brick Specimens Using a Convolutional Neural Network. Appl. Sci. 2023, 13, 5413.
56. Kingma, D.P.; Ba, J. Adam: A Method for Stochastic Optimization. arXiv 2017, arXiv:1412.6980.
57. Li, J.; Lu, X.; Zhang, P.; Li, Q. Intelligent Detection Method for Concrete Dam Surface Cracks Based on Two-Stage Transfer Learning. Water 2023, 15, 2082.

| Property | Value |
|---|---|
| Physical and mechanical properties | |
| Compressive strength at the age of 28 days (MPa) | 55.5 |
| Setting time: start (min) | 140 |
| Setting time: end (min) | 260 |
| Specific surface area (m²/kg) | 338 |
| Soundness (mm) | 0.5 |
| Fineness, passing through sieve No. 008 (%) | 98.1 |
| Mineralogical composition (%) | |
| C₃S (alite) | 66 |
| C₂S (belite) | 15 |
| C₃A (tricalcium aluminate) | 7 |
| C₄AF (tetracalcium aluminoferrite) | 12 |

| Material (oxide content, %) | SiO₂ | Al₂O₃ | Fe₂O₃ | CaO | MgO | Na₂O | K₂O | SO₃ | Loss on Ignition |
|---|---|---|---|---|---|---|---|---|---|
| MS-85 | 83.5 | 1.6 | 1.0 | 1.3 | 0.8 | 0.6 | 1.2 | 3.1 | 6.9 |

| Tensile Strength (MPa) | Fiber Diameter (µm) | Fiber Length (mm) | Modulus of Elasticity (GPa) | Density (kg/m³) | Elongation at Break (%) |
|---|---|---|---|---|---|
| 3100 | 13 | 12 | 72 | 2600 | 4.6 |

| No. | Parameter | Model 1 | Model 2 |
|---|---|---|---|
| 1 | Number of images in the training set | 200 | 700 (70%) |
| 2 | Number of images in the validation set | 54 | 200 (20%) |
| 3 | Number of images in the test set | 100 | 100 (10%) |
| 4 | Batch size | 10 | 10 |
| 5 | Number of epochs | 300 | 300 |
| 6 | Number of iterations | 6000 | 21,000 |
| 7 | Learning rate | 10⁻⁴ | 10⁻⁴ |
| 8 | Overfitting detector | Early stopping | Early stopping |
| 9 | Solver | Adam | Adam |
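The iteration counts in this table follow from the training-set size, batch size and number of epochs: each epoch takes ceil(training images / batch size) optimizer steps, giving 20 × 300 = 6000 for Model 1 and 70 × 300 = 21,000 for Model 2. Because early stopping is enabled, these figures are presumably the maximum number of steps rather than the number actually run. The helper names below are illustrative.

```python
import math


def split_sizes(n_total: int, train_frac: float = 0.7, val_frac: float = 0.2) -> tuple:
    """Split n_total images into (train, validation, test); the test set takes the remainder."""
    n_train = round(n_total * train_frac)
    n_val = round(n_total * val_frac)
    return n_train, n_val, n_total - n_train - n_val


def max_iterations(n_train: int, batch_size: int, epochs: int) -> int:
    """Optimizer steps if all epochs run; early stopping can end training sooner."""
    steps_per_epoch = math.ceil(n_train / batch_size)
    return steps_per_epoch * epochs


if __name__ == "__main__":
    print(split_sizes(1000))              # (700, 200, 100): the Model 2 split
    print(max_iterations(200, 10, 300))   # 6000: Model 1
    print(max_iterations(700, 10, 300))   # 21000: Model 2
```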

| Model | Precision | Recall | F1 | IoU | Dice |
|---|---|---|---|---|---|
| Model 1 (mean over the test set) | 0.65 | 0.75 | 0.66 | 0.51 | 0.66 |
| Model 2 (mean over the test set) | 0.65 | 0.80 | 0.67 | 0.53 | 0.68 |

| Sample Modification | Crack Area (%) | Compressive Strength (MPa) |
|---|---|---|
| Control | 8.3 | 22.5 |
| Control + GF | 4.9 | 25.2 |
| Control + MS + GF | 2.2 | 28.8 |
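The trend stated in the conclusions can be quantified directly from this table by expressing each modified mix relative to the control: the GF mix has about 41% less crack area and 12% higher compressive strength, and the MS + GF mix about 73% less crack area and 28% higher strength. The sketch below reproduces these relative changes; the dictionary layout and function name are illustrative. With only three mixes, it describes a consistent trend rather than a statistically established dependence.

```python
# (crack area %, compressive strength MPa) per mix, copied from the table above.
DATA = {
    "Control": (8.3, 22.5),
    "Control + GF": (4.9, 25.2),
    "Control + MS + GF": (2.2, 28.8),
}


def relative_changes(data: dict, baseline: str = "Control") -> dict:
    """Percent change of crack area and strength of each mix relative to the baseline mix."""
    base_area, base_strength = data[baseline]
    return {
        name: (100.0 * (area - base_area) / base_area,
               100.0 * (strength - base_strength) / base_strength)
        for name, (area, strength) in data.items()
    }


if __name__ == "__main__":
    for name, (d_area, d_strength) in relative_changes(DATA).items():
        print(f"{name}: crack area {d_area:+.1f}%, strength {d_strength:+.1f}%")
```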

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Beskopylny, A.N.; Shcherban’, E.M.; Stel’makh, S.A.; Mailyan, L.R.; Meskhi, B.; Razveeva, I.; Kozhakin, A.; Beskopylny, N.; El’shaeva, D.; Artamonov, S. Method for Concrete Structure Analysis by Microscopy of Hardened Cement Paste and Crack Segmentation Using a Convolutional Neural Network. J. Compos. Sci. 2023, 7, 327. https://doi.org/10.3390/jcs7080327
