Recent Advances in Agricultural Robots for Automated Weeding

Abstract

1. Introduction

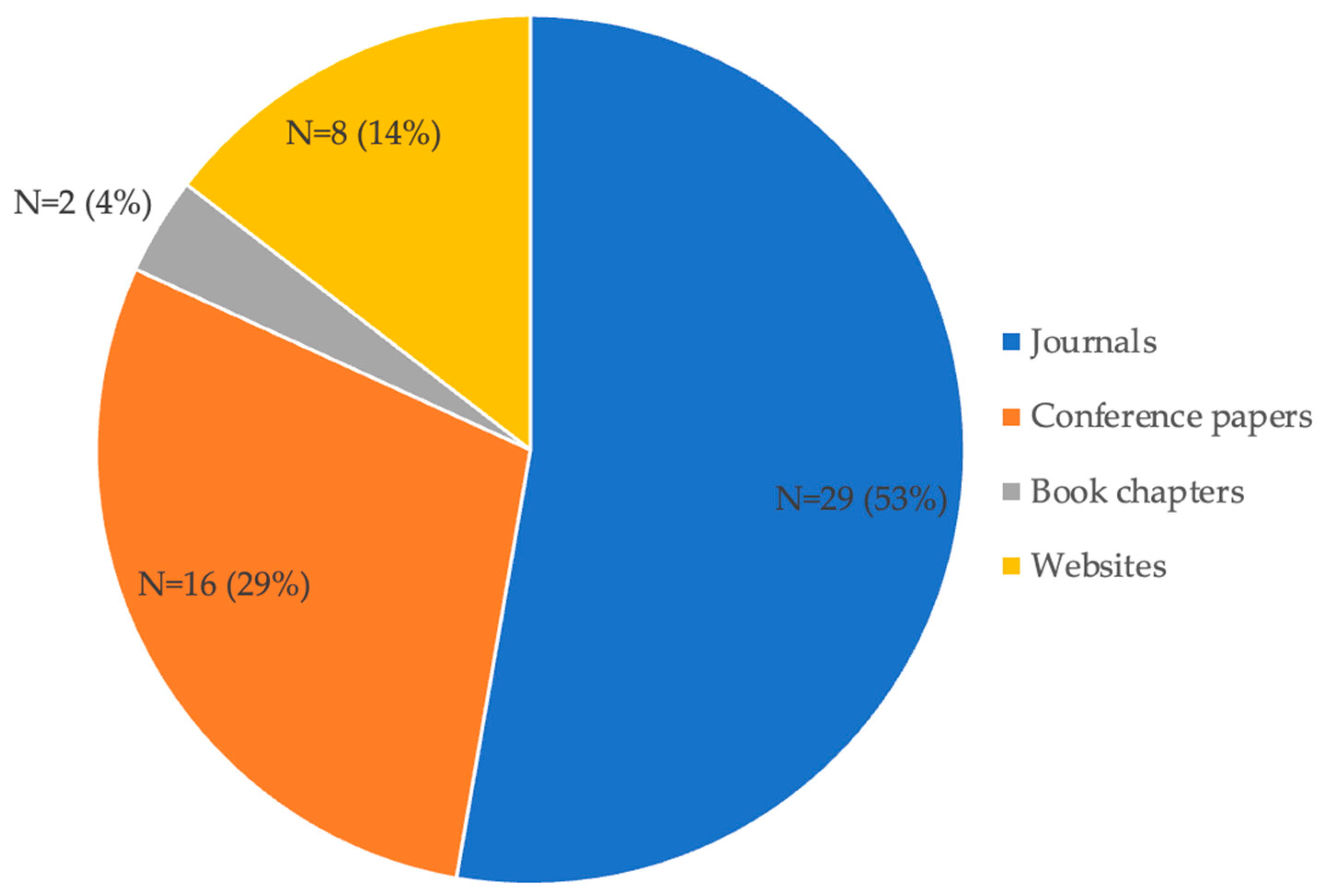

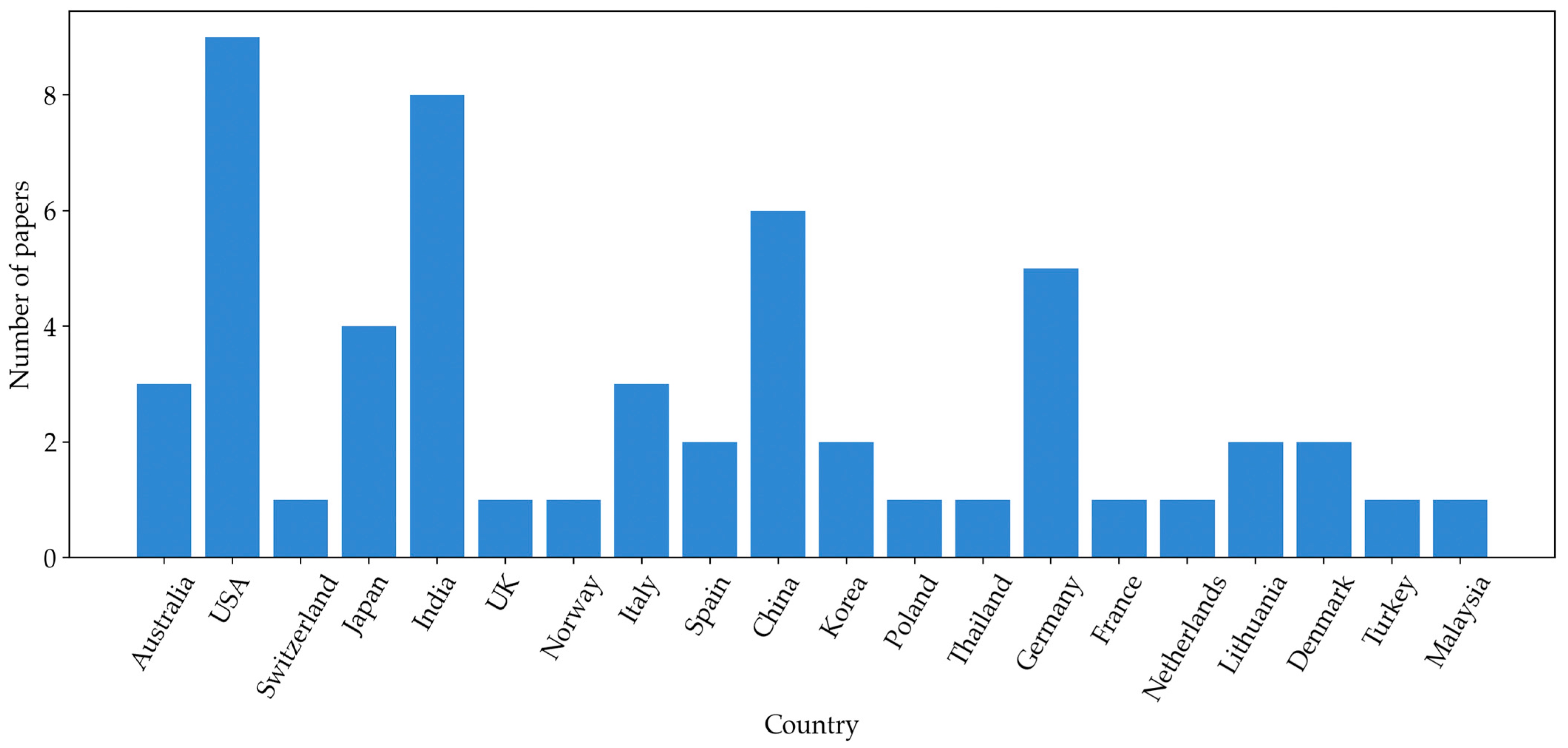

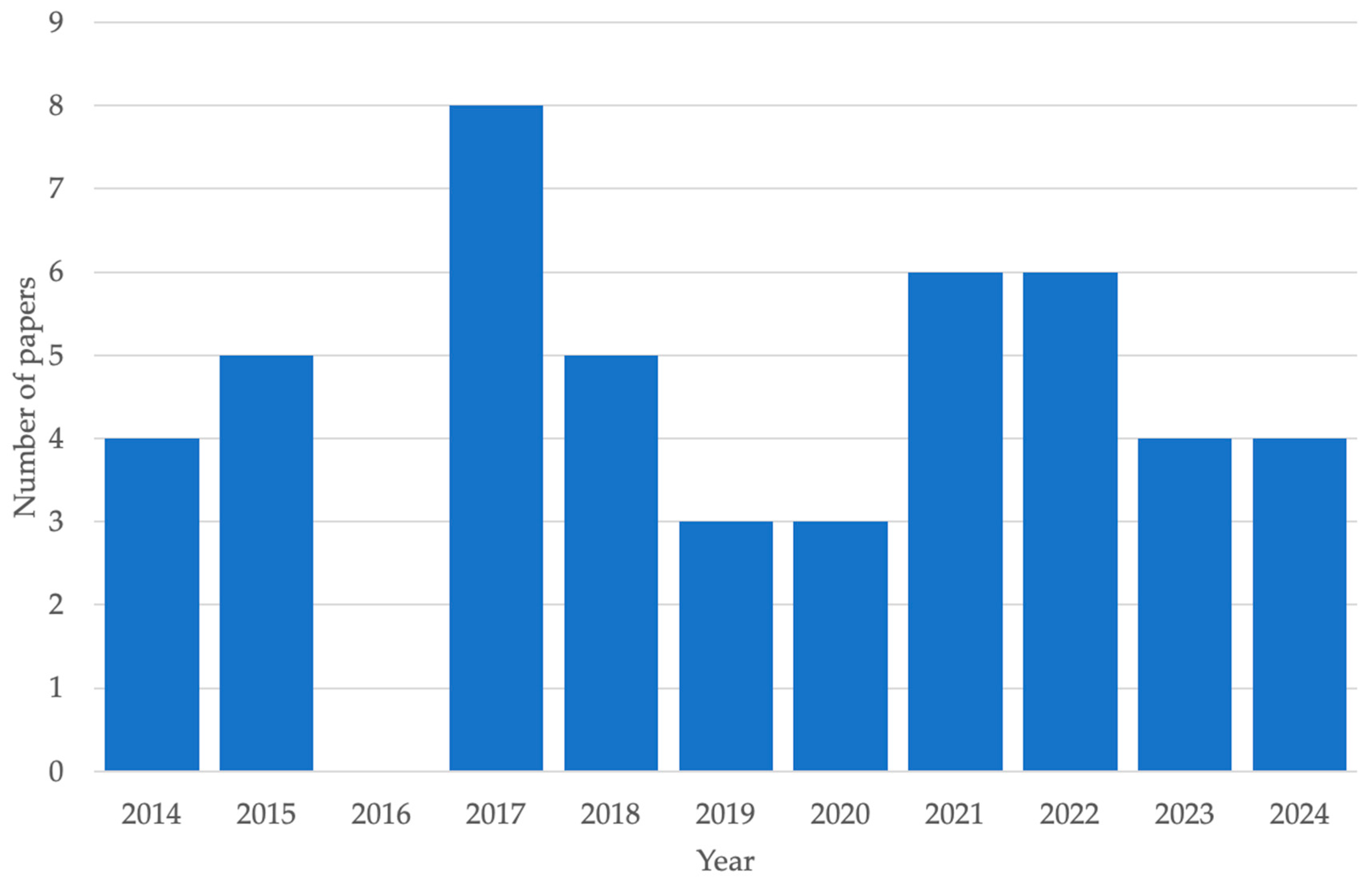

2. Methodology

3. Weeding Robots

3.1. Weeding Robots in Research

3.1.1. Spraying Robots

3.1.2. Non-Chemical Weeding Robots

3.1.3. Cooperative Approaches

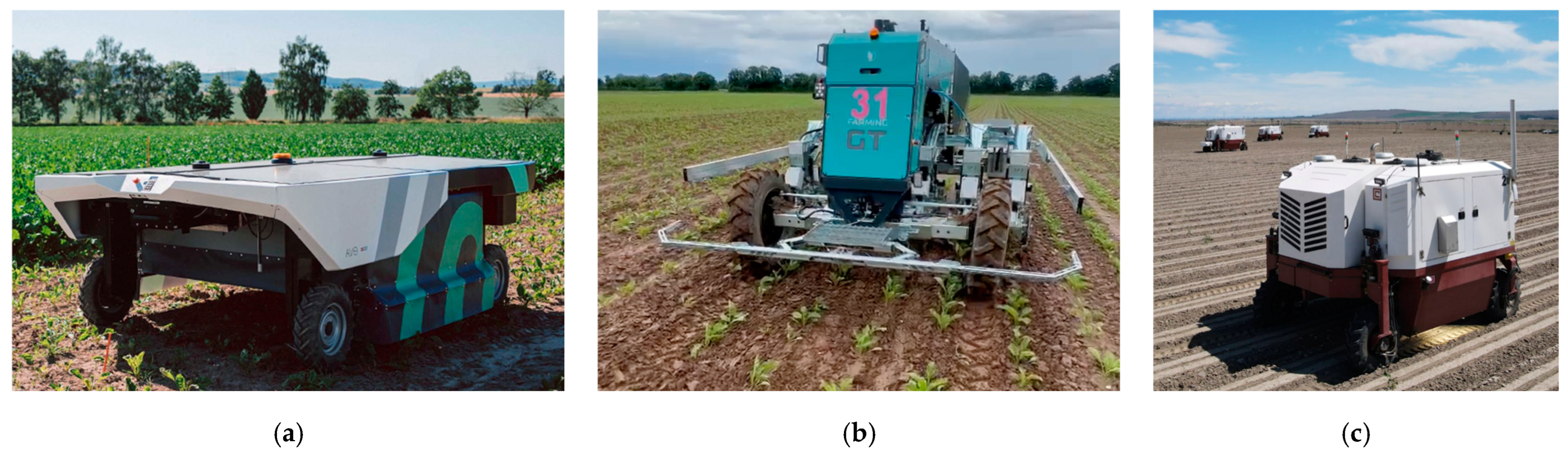

3.2. Examples of Commercial Weeding Platforms

3.3. Trends

4. Discussion

5. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- United Nations World Population Projected to Reach 9.8 Billion in 2050. Available online: https://www.un.org/development/desa/en/news/population/world-population-prospects-2017.html (accessed on 12 December 2022).

- van Dijk, M.; Morley, T.; Rau, M.L.; Saghai, Y. A Meta-Analysis of Projected Global Food Demand and Population at Risk of Hunger for the Period 2010–2050. Nat. Food 2021, 2, 494–501.

- Droukas, L.; Doulgeri, Z.; Tsakiridis, N.L.; Triantafyllou, D.; Kleitsiotis, I.; Mariolis, I.; Giakoumis, D.; Tzovaras, D.; Kateris, D.; Bochtis, D. A Survey of Robotic Harvesting Systems and Enabling Technologies. J. Intell. Robot. Syst. 2023, 107, 21.

- Oliveira, L.F.P.; Moreira, A.P.; Silva, M.F. Advances in Agriculture Robotics: A State-of-the-Art Review and Challenges Ahead. Robotics 2021, 10, 52.

- Bechar, A. (Ed.) Innovation in Agricultural Robotics for Precision Agriculture; Progress in Precision Agriculture; Springer International Publishing: Cham, Switzerland, 2021; ISBN 978-3-030-77035-8.

- dos Santos, F.N.; Sobreira, H.; Campos, D.; Morais, R.; Paulo Moreira, A.; Contente, O. Towards a Reliable Robot for Steep Slope Vineyards Monitoring. J. Intell. Robot. Syst. 2016, 83, 429–444.

- Reiser, D.; Vázquez-Arellano, M.; Paraforos, D.S.; Garrido-Izard, M.; Griepentrog, H.W. Iterative Individual Plant Clustering in Maize with Assembled 2D LiDAR Data. Comput. Ind. 2018, 99, 42–52.

- Xiong, Y.; Ge, Y.; Grimstad, L.; From, P.J. An Autonomous Strawberry-harvesting Robot: Design, Development, Integration, and Field Evaluation. J. Field Robot. 2020, 37, 202–224.

- Starostin, I.A.; Eshchin, A.V.; Davydova, S.A. Global Trends in the Development of Agricultural Robotics. IOP Conf. Ser. Earth Environ. Sci. 2023, 1138, 012042.

- Radoglou-Grammatikis, P.; Sarigiannidis, P.; Lagkas, T.; Moscholios, I. A Compilation of UAV Applications for Precision Agriculture. Comput. Netw. 2020, 172, 107148.

- Lytridis, C.; Kaburlasos, V.G.; Pachidis, T.; Manios, M.; Vrochidou, E.; Kalampokas, T.; Chatzistamatis, S. An Overview of Cooperative Robotics in Agriculture. Agronomy 2021, 11, 1818.

- Mao, W.; Liu, Z.; Liu, H.; Yang, F.; Wang, M. Research Progress on Synergistic Technologies of Agricultural Multi-Robots. Appl. Sci. 2021, 11, 1448.

- Fennimore, S.A.; Cutulle, M. Robotic Weeders Can Improve Weed Control Options for Specialty Crops. Pest Manag. Sci. 2019, 75, 1767–1774.

- Gerhards, R.; Andújar Sanchez, D.; Hamouz, P.; Peteinatos, G.G.; Christensen, S.; Fernandez-Quintanilla, C. Advances in Site-specific Weed Management in Agriculture—A Review. Weed Res. 2022, 62, 123–133.

- Li, N.; Zhang, X.; Zhang, C.; Ge, L.; He, Y.; Wu, X. Review of Machine-Vision-Based Plant Detection Technologies for Robotic Weeding. In Proceedings of the 2019 IEEE International Conference on Robotics and Biomimetics (ROBIO), Dali, China, 6–8 December 2019; pp. 2370–2377.

- Zhang, W.; Miao, Z.; Li, N.; He, C.; Sun, T. Review of Current Robotic Approaches for Precision Weed Management. Curr. Robot. Rep. 2022, 3, 139–151.

- Li, Y.; Guo, Z.; Shuang, F.; Zhang, M.; Li, X. Key Technologies of Machine Vision for Weeding Robots: A Review and Benchmark. Comput. Electron. Agric. 2022, 196, 106880.

- Du, Y.; Mallajosyula, B.; Sun, D.; Chen, J.; Zhao, Z.; Rahman, M.; Quadir, M.; Jawed, M.K. A Low-Cost Robot with Autonomous Recharge and Navigation for Weed Control in Fields with Narrow Row Spacing. In Proceedings of the 2021 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Prague, Czech Republic, 27 September–1 October 2021; IEEE: Piscataway, NJ, USA; pp. 3263–3270.

- Sabanci, K.; Aydin, C. Smart Robotic Weed Control System for Sugar Beet. J. Agric. Sci. Technol. 2017, 19, 73–83.

- Scholz, C.; Kohlbrecher, M.; Ruckelshausen, A.; Kinski, D.; Mentrup, D. Camera-Based Selective Weed Control Application Module (“Precision Spraying App”) for the Autonomous Field Robot Platform BoniRob. In Proceedings of the International Conference of Agricultural Engineering, Zurich, Switzerland, 6–10 July 2014; pp. 1–8.

- Aravind, R.; Daman, M.; Kariyappa, B.S. Design and Development of Automatic Weed Detection and Smart Herbicide Sprayer Robot. In Proceedings of the 2015 IEEE Recent Advances in Intelligent Computational Systems (RAICS), Trivandrum, Kerala, India, 10–12 December 2015; IEEE: Piscataway, NJ, USA; pp. 257–261.

- Arakeri, M.P.; Vijaya Kumar, B.P.; Barsaiya, S.; Sairam, H.V. Computer Vision Based Robotic Weed Control System for Precision Agriculture. In Proceedings of the 2017 International Conference on Advances in Computing, Communications and Informatics (ICACCI), Manipal, Karnataka, India, 13–16 September 2017; IEEE: Piscataway, NJ, USA; pp. 1201–1205.

- Fan, X.; Chai, X.; Zhou, J.; Sun, T. Deep Learning Based Weed Detection and Target Spraying Robot System at Seedling Stage of Cotton Field. Comput. Electron. Agric. 2023, 214, 108317.

- Utstumo, T.; Urdal, F.; Brevik, A.; Dørum, J.; Netland, J.; Overskeid, Ø.; Berge, T.W.; Gravdahl, J.T. Robotic In-Row Weed Control in Vegetables. Comput. Electron. Agric. 2018, 154, 36–45.

- Jiang, W.; Quan, L.; Wei, G.; Chang, C.; Geng, T. A Conceptual Evaluation of a Weed Control Method with Post-Damage Application of Herbicides: A Composite Intelligent Intra-Row Weeding Robot. Soil Tillage Res. 2023, 234, 105837.

- Wu, X.; Aravecchia, S.; Lottes, P.; Stachniss, C.; Pradalier, C. Robotic Weed Control Using Automated Weed and Crop Classification. J. Field Robot. 2020, 37, 322–340.

- Ahmadi, A.; Halstead, M.; McCool, C. BonnBot-I: A Precise Weed Management and Crop Monitoring Platform. In Proceedings of the 2022 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Kyoto, Japan, 23–27 October 2022; IEEE: Piscataway, NJ, USA; pp. 9202–9209.

- Choi, K.H.; Han, S.K.; Han, S.H.; Park, K.-H.; Kim, K.-S.; Kim, S. Morphology-Based Guidance Line Extraction for an Autonomous Weeding Robot in Paddy Fields. Comput. Electron. Agric. 2015, 113, 266–274.

- Choi, K.H.; Han, S.K.; Park, K.-H.; Kim, K.-S.; Kim, S. Vision Based Guidance Line Extraction for Autonomous Weed Control Robot in Paddy Field. In Proceedings of the 2015 IEEE International Conference on Robotics and Biomimetics (ROBIO), Zhuhai, China, 6–9 December 2015; IEEE: Piscataway, NJ, USA; pp. 831–836.

- Zhang, Q.; Shaojie Chen, M.E.; Li, B. A Visual Navigation Algorithm for Paddy Field Weeding Robot Based on Image Understanding. Comput. Electron. Agric. 2017, 143, 66–78.

- Ju, J.; Chen, G.; Lv, Z.; Zhao, M.; Sun, L.; Wang, Z.; Wang, J. Design and Experiment of an Adaptive Cruise Weeding Robot for Paddy Fields Based on Improved YOLOv5. Comput. Electron. Agric. 2024, 219, 108824.

- Maini, P.; Gonultas, B.M.; Isler, V. Online Coverage Planning for an Autonomous Weed Mowing Robot With Curvature Constraints. IEEE Robot. Autom. Lett. 2022, 7, 5445–5452.

- Kanagasingham, S.; Ekpanyapong, M.; Chaihan, R. Integrating Machine Vision-Based Row Guidance with GPS and Compass-Based Routing to Achieve Autonomous Navigation for a Rice Field Weeding Robot. Precis. Agric. 2020, 21, 831–855.

- Visentin, F.; Cremasco, S.; Sozzi, M.; Signorini, L.; Signorini, M.; Marinello, F.; Muradore, R. A Mixed-Autonomous Robotic Platform for Intra-Row and Inter-Row Weed Removal for Precision Agriculture. Comput. Electron. Agric. 2023, 214, 108270.

- Heravi, A.; Ahmad, D.; Hameed, I.A.; Shamshiri, R.R.; Balasundram, S.; Yamin, M. Development of a Field Robot Platform for Mechanical Weed Control in Greenhouse Cultivation of Cucumber. In Agricultural Robots: Fundamentals and Applications; IntechOpen: London, UK, 2019.

- Murugaraj, G.; Selva Kumar, S.; Pillai, A.S.; Bharatiraja, C. Implementation of In-Row Weeding Robot with Novel Wheel, Assembly and Wheel Angle Adjustment for Slurry Paddy Field. Mater. Today Proc. 2022, 65, 215–220.

- Bawden, O.; Kulk, J.; Russell, R.; McCool, C.; English, A.; Dayoub, F.; Lehnert, C.; Perez, T. Robot for Weed Species Plant-specific Management. J. Field Robot. 2017, 34, 1179–1199.

- Hall, D.; Dayoub, F.; Kulk, J.; McCool, C. Towards Unsupervised Weed Scouting for Agricultural Robotics. In Proceedings of the 2017 IEEE International Conference on Robotics and Automation (ICRA), Singapore, 29 May–3 June 2017; pp. 5223–5230.

- Xiong, Y.; Ge, Y.; Liang, Y.; Blackmore, S. Development of a Prototype Robot and Fast Path-Planning Algorithm for Static Laser Weeding. Comput. Electron. Agric. 2017, 142, 494–503.

- Michaels, A.; Haug, S.; Albert, A. Vision-Based High-Speed Manipulation for Robotic Ultra-Precise Weed Control. In Proceedings of the 2015 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Hamburg, Germany, 28 September–2 October 2015; IEEE: Piscataway, NJ, USA; pp. 5498–5505.

- Zhu, H.; Zhang, Y.; Mu, D.; Bai, L.; Zhuang, H.; Li, H. YOLOX-Based Blue Laser Weeding Robot in Corn Field. Front. Plant Sci. 2022, 13, 1017803.

- Patel, D.; Gandhi, M.; Shankaranarayanan, H.; Darji, A.D. Design of an Autonomous Agriculture Robot for Real-Time Weed Detection Using CNN. In Advances in VLSI and Embedded Systems; Lecture Notes in Electrical Engineering; Springer: Berlin/Heidelberg, Germany, 2023; Volume 962, pp. 141–161. ISBN 9789811967795.

- Sethia, G.; Guragol, H.K.S.; Sandhya, S.; Shruthi, J.; Rashmi, N. Automated Computer Vision Based Weed Removal Bot. In Proceedings of the 2020 IEEE International Conference on Electronics, Computing and Communication Technologies (CONECCT), Bangalore, India, 2–4 July 2020; IEEE: Piscataway, NJ, USA; pp. 1–6.

- Florance Mary, M.; Yogaraman, D. Neural Network Based Weeding Robot For Crop And Weed Discrimination. J. Phys. Conf. Ser. 2021, 1979, 012027.

- Sujaritha, M.; Annadurai, S.; Satheeshkumar, J.; Kowshik Sharan, S.; Mahesh, L. Weed Detecting Robot in Sugarcane Fields Using Fuzzy Real Time Classifier. Comput. Electron. Agric. 2017, 134, 160–171.

- Nakai, S.; Yamada, Y. Development of a Weed Suppression Robot for Rice Cultivation: Weed Suppression and Posture Control. Int. J. Electr. Comput. Electron. Commun. Eng. 2014, 8, 1766–1770.

- Sellmann, F.; Bangert, W.; Grzonka, S.; Hänsel, M.; Haug, S.; Kielhorn, A.; Michaels, A.; Möller, K.; Rahe, F.; Strothmann, W.; et al. RemoteFarming.1: Human-Machine Interaction for a Field-Robot-Based Weed Control Application in Organic Farming. In Proceedings of the 4th International Conference on Machine Control & Guidance, Braunschweig, Germany, 19–20 March 2014.

- McCool, C.S.; Beattie, J.; Firn, J.; Lehnert, C.; Kulk, J.; Bawden, O.; Russell, R.; Perez, T. Efficacy of Mechanical Weeding Tools: A Study into Alternative Weed Management Strategies Enabled by Robotics. IEEE Robot. Autom. Lett. 2018, 3, 1184–1190.

- Uchida, H.; Funaki, T.; Yamano, T. Development of a Remoto Control Type Weeding Machine with Stirring Chains for a Paddy Field. In Proceedings of the 22nd International Conference on Climbing and Walking Robots and the Support Technologies for Mobile Machines (CLAWAR 2019), Kuala Lumpur, Malaysia, 26–28 August 2019; CLAWAR Association Ltd.: Buckinghamshire, UK; pp. 26–28.

- Sori, H.; Inoue, H.; Hatta, H.; Ando, Y. Effect for a Paddy Weeding Robot in Wet Rice Culture. J. Robot. Mechatron. 2018, 30, 198–205.

- Quan, L.; Jiang, W.; Li, H.; Li, H.; Wang, Q.; Chen, L. Intelligent Intra-Row Robotic Weeding System Combining Deep Learning Technology with a Targeted Weeding Mode. Biosyst. Eng. 2022, 216, 13–31.

- Gokul, S.; Dhiksith, R.; Sundaresh, S.A.; Gopinath, M. Gesture Controlled Wireless Agricultural Weeding Robot. In Proceedings of the 2019 5th International Conference on Advanced Computing & Communication Systems (ICACCS), Coimbatore, India, 15–16 March 2019; IEEE: Piscataway, NJ, USA; pp. 926–929.

- Hossain, M.Z.; Komatsuzaki, M. Weed Management and Economic Analysis of a Robotic Lawnmower: A Case Study in a Japanese Pear Orchard. Agriculture 2021, 11, 113.

- Gerhards, R.; Risser, P.; Spaeth, M.; Saile, M.; Peteinatos, G. A Comparison of Seven Innovative Robotic Weeding Systems and Reference Herbicide Strategies in Sugar Beet (Beta vulgaris Subsp. vulgaris L.) and Rapeseed (Brassica napus, L.). Weed Res. 2024, 64, 42–53.

- Gonzalez-de-Soto, M.; Emmi, L.; Garcia, I.; Gonzalez-de-Santos, P. Reducing Fuel Consumption in Weed and Pest Control Using Robotic Tractors. Comput. Electron. Agric. 2015, 114, 96–113.

- Bručienė, I.; Aleliūnas, D.; Šarauskis, E.; Romaneckas, K. Influence of Mechanical and Intelligent Robotic Weed Control Methods on Energy Efficiency and Environment in Organic Sugar Beet Production. Agriculture 2021, 11, 449.

- Bručienė, I.; Buragienė, S.; Šarauskis, E. Weeding Effectiveness and Changes in Soil Physical Properties Using Inter-Row Hoeing and a Robot. Agronomy 2022, 12, 1514.

- Krupanek, J.; de Santos, P.G.; Emmi, L.; Wollweber, M.; Sandmann, H.; Scholle, K.; Di Minh Tran, D.; Schouteten, J.J.; Andreasen, C. Environmental Performance of an Autonomous Laser Weeding Robot—A Case Study. Int. J. Life Cycle Assess. 2024, 29, 1021–1052.

- Pretto, A.; Aravecchia, S.; Burgard, W.; Chebrolu, N.; Dornhege, C.; Falck, T.; Fleckenstein, F.; Fontenla, A.; Imperoli, M.; Khanna, R.; et al. Building an Aerial–Ground Robotics System for Precision Farming: An Adaptable Solution. IEEE Robot. Autom. Mag. 2021, 28, 29–49.

- Ruckelshausen, A.; Biber, P.; Dorna, M.; Gremmes, H.; Klose, R.; Linz, A.; Rahe, R.; Resch, R.; Thiel, M.; Trautz, D.; et al. BoniRob: An Autonomous Field Robot Platform for Individual Plant Phenotyping. In Precision agriculture ’09; Brill|Wageningen Academic: Leiden, The Netherlands, 2009; pp. 841–847. ISBN 9789086861132.

- Gonzalez-de-Santos, P.; Ribeiro, A.; Fernandez-Quintanilla, C.; Lopez-Granados, F.; Brandstoetter, M.; Tomic, S.; Pedrazzi, S.; Peruzzi, A.; Pajares, G.; Kaplanis, G.; et al. Fleets of Robots for Environmentally-Safe Pest Control in Agriculture. Precis. Agric. 2017, 18, 574–614.

- McAllister, W.; Osipychev, D.; Davis, A.; Chowdhary, G. Agbots: Weeding a Field with a Team of Autonomous Robots. Comput. Electron. Agric. 2019, 163, 104827.

- McAllister, W.; Osipychev, D.; Chowdhary, G.; Davis, A. Multi-Agent Planning for Coordinated Robotic Weed Killing. In Proceedings of the 2018 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Madrid, Spain, 1–5 October 2018; IEEE: Piscataway, NJ, USA; pp. 7955–7960.

- Pérez-Ruíz, M.; Slaughter, D.C.; Fathallah, F.A.; Gliever, C.J.; Miller, B.J. Co-Robotic Intra-Row Weed Control System. Biosyst. Eng. 2014, 126, 45–55.

- Ecorobotix AVO. Available online: https://ecorobotix.com/en/avo/ (accessed on 9 June 2024).

- EarthSense TerraMax. Available online: https://www.earthsense.co (accessed on 9 June 2024).

- Naïo Technologies Ted. Available online: https://www.naio-technologies.com (accessed on 9 June 2024).

- Farming Revolution Farming GT. Available online: https://farming-revolution.com (accessed on 9 June 2024).

- odd.bot Maverick. Available online: https://www.odd.bot (accessed on 9 June 2024).

- Agrointelli Robotti. Available online: https://agrointelli.com/robotti/ (accessed on 9 June 2024).

- Sanchez, J.; Gallandt, E.R. Functionality and Efficacy of Franklin Robotics’ Tertill™ Robotic Weeder. Weed Technol. 2021, 35, 166–170.

- Carbon Robotics. Autonomous LaserWeeder. Available online: https://carbonrobotics.com/autonomous-weeder (accessed on 9 June 2024).

- FarmDroid FD20. Available online: https://farmdroid.com (accessed on 9 June 2024).

- Zhang, H.; Cao, D.; Zhou, W.; Currie, K. Laser and Optical Radiation Weed Control: A Critical Review. Precis. Agric. 2024, 25, 2033–2057.

- Tran, D.; Schouteten, J.J.; Degieter, M.; Krupanek, J.; Jarosz, W.; Areta, A.; Emmi, L.; De Steur, H.; Gellynck, X. European Stakeholders’ Perspectives on Implementation Potential of Precision Weed Control: The Case of Autonomous Vehicles with Laser Treatment. Precis. Agric. 2023, 24, 2200–2222.

- Qu, H.-R.; Su, W.-H. Deep Learning-Based Weed–Crop Recognition for Smart Agricultural Equipment: A Review. Agronomy 2024, 14, 363.

- Zingsheim, M.L.; Döring, T.F. What Weeding Robots Need to Know about Ecology. Agric. Ecosyst. Environ. 2024, 364, 108861.

- Grimstad, L.; From, P. The Thorvald II Agricultural Robotic System. Robotics 2017, 6, 24.

- Merfield, C.N. Robotic Weeding’s False Dawn? Ten Requirements for Fully Autonomous Mechanical Weed Management. Weed Res. 2016, 56, 340–344.

| Type of Study | Target Crop | Weed Recognition | Weeding Tool | Navigation | Ref. |
|---|---|---|---|---|---|
| Field testing | Flax | N/A | Spraying system | Vision for crop row detection | [18] |
| Lab testing | Sugar beets | Image processing | Spraying unit | Moving on rail | [19] |
| Lab and field tests | N/A | Image processing | Eight spraying nozzles | Manual control | [20] |
| Lab testing | Ragi | Image processing | Spraying system | N/A | [21] |
| Lab testing | Onions | Image processing | Single spraying tool | Remote control | [22] |
| Field testing | Cotton | Deep learning | Spraying nozzles | Row navigation | [23] |
| Field testing | Carrots | Image processing | Controlled spraying | Vision for crop row detection | [24] |
| Lab and field tests | Maize | Deep learning | A weeding tool with a sprayer and brushes to direct herbicide delivery to working zones | Continuously operating reference station (CORS)-based navigation system | [25] |
| Lab testing | Sugar beets | Deep learning | Selective sprayer and mechanical stamping tool | N/A | [26] |
| Lab and field tests | N/A | Deep learning | Spot-spray nozzles | In-row movements with plants at regular intervals | [27] |

| Type of Study | Target Crop | Weed Recognition | Weeding Tool | Navigation | Ref. |
|---|---|---|---|---|---|
| Field testing | Paddy field | Image processing | Screw-type wheels for weed removal | Vision for seedling line detection | [28,29] |
| Lab testing | Paddy field | Image processing | N/A | Vision for seedling line detection | [30] |
| Field testing | Paddy field | Deep learning | Cultivator-weeding wheels | Vision for seedling line detection | [31] |
| Field testing | Cow pasture | N/A | Flail-deck weeding implement | Online coverage using RTK, IMU, and vision | [32] |
| Field testing | Paddy field | Image processing | Three-row paddy weeder with cutter blades | (a) GNSS path planning, (b) compass bearing correction and (c) vision-based row guidance | [33] |
| Lab and field testing | Variety of plants | Deep learning | Gripper for weed picking | Teleoperation | [34] |
| Field testing | Cucumber | N/A | Rotating cutting blade | Monorail | [35] |
| System overview | Paddy field | N/A | N/A | N/A | [36] |

| Type of Study | Target Crop | Weed Recognition | Weeding Tool | Navigation | Ref. |
|---|---|---|---|---|---|
| Field testing | Cotton | Image processing | 1-DOF and 2-DOF weeding mechanisms | Coverage planner | [37] |
| Lab testing | Cotton | Deep learning | N/A | N/A | [38] |
| Field testing | Clover | Image processing | 2-DOF arm with dual-gimbal laser pointers | The robot weeds while stationary, within a predefined frame captured by a camera | [39] |
| Field testing | N/A | Image processing | Flywheel stamping tool | N/A | [40] |
| Field testing | Corn | Deep learning | Laser emitter | Vision and odometry fusion | [41] |
| Lab and field testing | N/A | Deep learning | N/A | Teleoperation and planning using sensor fusion | [42] |
| Field testing | N/A | Deep learning | Rotating blade on a delta arm | Row guidance | [43] |
| System overview | N/A | Deep learning | 3-DOF manipulator with blade as end effector | N/A | [44] |
| Lab testing | Sugarcane | Image processing | Rotating blade | Vision-based guidance | [45] |

| Type of Study | Target Crop | Weed Recognition | Weeding Tool | Navigation | Ref. |
|---|---|---|---|---|---|
| Lab testing | Paddy field | N/A | Robot arm with a brush that applies force | Potential method (attraction or repulsion of an obstacle) | [46] |
| Field testing | Carrots | N/A | The manipulator positions the weeding tool (tube stamp) via visual servoing | Row following | [47] |
| Field testing | Cotton | Image processing | Arrow hoe, tine, cutting tool | Row following | [48] |
| Field testing | Paddy field | N/A | Stirring chains that agitate the soil | Teleoperation | [49] |
| Field testing | Paddy field | Capacitive sensor | Wheels stir soil while moving | Coverage navigation algorithm based on detected rice seedlings | [50] |
| Lab and field testing | Maize | Deep learning | Two vertically rotating discs with weeding knives | Conveyor belt | [51] |
| Lab testing | N/A | N/A | 3-DOF robotic arm with glove-controlled cutter | N/A | [52] |

| Type of Study | Target Crop | Weed Recognition | Weeding Tool | Navigation | Ref. |
|---|---|---|---|---|---|
| Field testing | Pears | N/A | Three razor-sharp pivoting blades | Map-based guidance | [53] |
| Field testing | Sugar beets | Image processing/Deep learning | Band-spraying and inter-row hoeing | GPS-based positioning | [54] |
| Field testing | Wheat and maize | N/A | Sprayer and flaming | Row guidance with vision and RTK | [55] |
| Field testing | Sugar beets | Image processing | Mechanical loosening/cutting and mulching/weed smothering with catch crops/thermal steaming | GPS-based positioning | [56] |
| Field testing | Ecological sugar beet | Image processing | Cutting blade | GPS-based positioning | [57] |
| System overview | N/A | Image processing | A laser-based weeding tool | N/A | [58] |

| Description | Type of Study | Target Crops | Weed Recognition | Weeding Tool | Ref. |
|---|---|---|---|---|---|
| BoniRob with aerial drone | System overview | Sugar beets | Deep learning | Sprayer/weed stamping | [59] |
| Three UGVs and two UAVs | Field testing | Maize, wheat, olives | Image processing | Patch spraying/air-blast sprayer/shallow soil tillage/thermal (burner) | [61] |
| Multi-UGV system (AgBots) | Simulation work | N/A | N/A | N/A | [62,63] |
| Human-robot cooperation | Field testing | Tomato | N/A | Intra-row hoes | [64] |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Lytridis, C.; Pachidis, T. Recent Advances in Agricultural Robots for Automated Weeding. AgriEngineering 2024, 6, 3279-3296. https://doi.org/10.3390/agriengineering6030187
