Effect of Droplet Contamination on Camera Lens Surfaces: Degradation of Image Quality and Object Detection Performance

Abstract

1. Introduction

2. Image Quality Evaluation Results

2.1. Image Quality Evaluation Metric

2.2. MTF Measurement Setup and Method

2.3. Image Quality Degradation Effects of Single Droplet Contamination

2.4. Image Quality Degradation Effects of Multiple Droplet Contamination

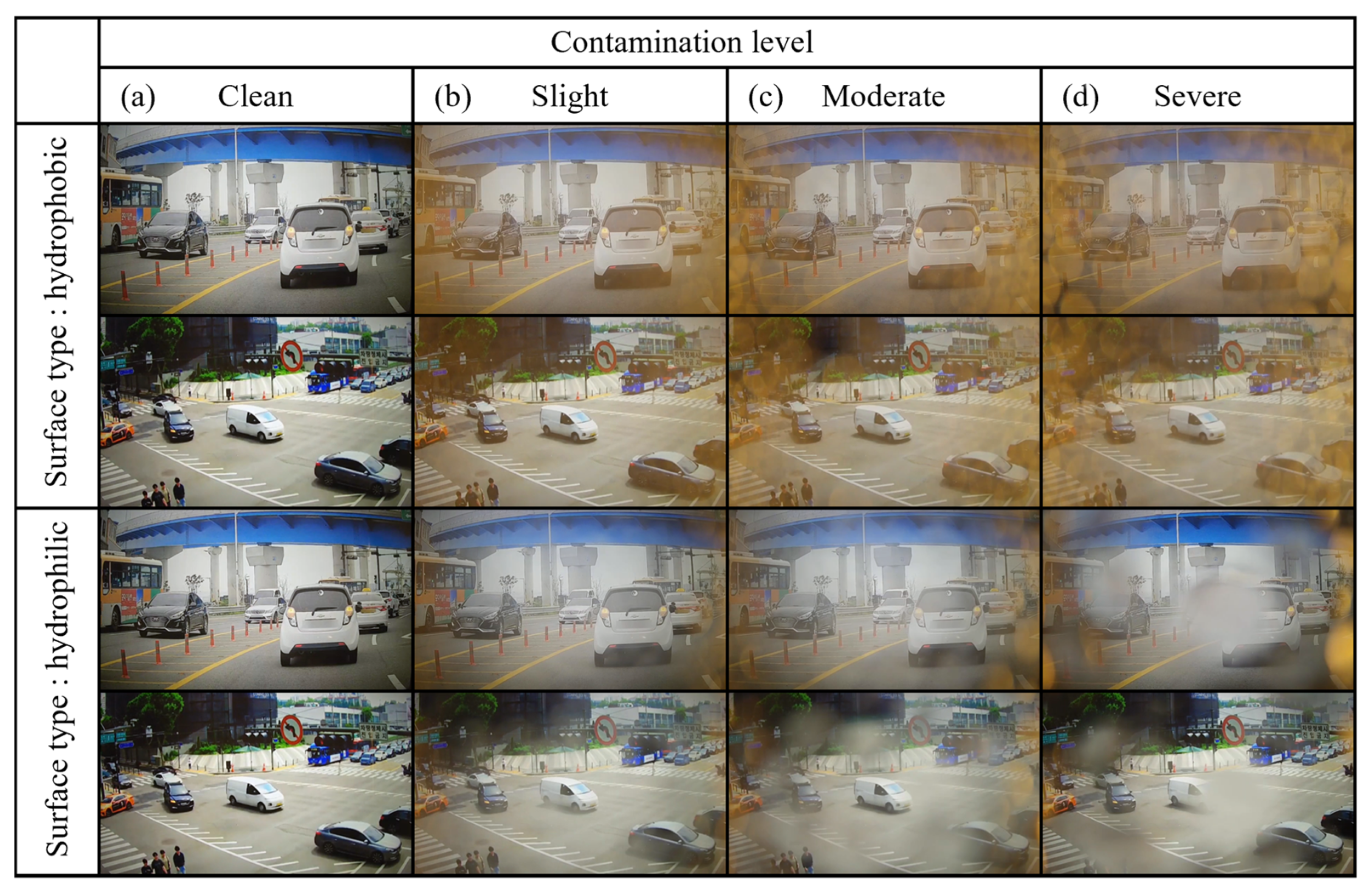

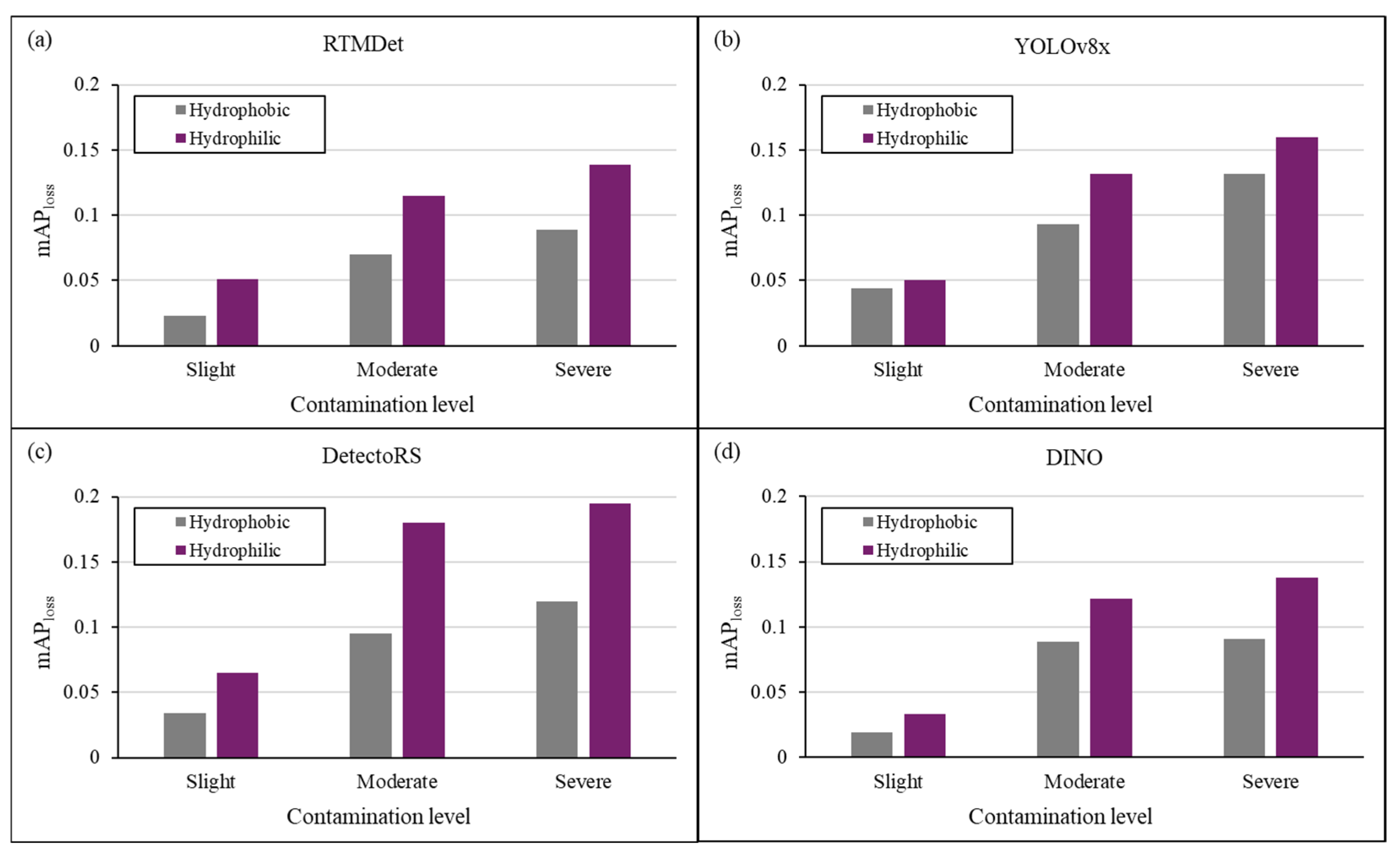

3. Object Detection Performance Evaluation Results

3.1. Object Detection Performance Evaluation Metrics

3.2. Object Detection Performance Measurement Setup and Method

3.3. Object Detection Performance Degradation Effects of Single Droplet and Object Size

3.4. Object Detection Performance Degradation Effects of Multiple Droplet Contamination

4. Discussion and Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| ADAS | Advanced driver assistance system |
| UAV | Unmanned aerial vehicle |
| MTF | Modulation transfer function |
| mAP | Mean average precision |
| AI | Artificial intelligence |
| ROI | Region of interest |
| CMOS | Complementary metal oxide semiconductor |
| TP | True positive |
| FP | False positive |
| FN | False negative |
| TN | True negative |
| IoU | Intersection over union |
| UHD | Ultra high definition |
| PC | Personal computer |
| YOLO | You Only Look Once |
| CNN | Convolutional neural network |
| COCO | Common Objects in Context |
| CCTV | Closed-circuit television |


| Surface Type | Slight Contamination Area (%) | Moderate Contamination Area (%) | Severe Contamination Area (%) |
|---|---|---|---|
| Hydrophobic | 12.92 | 36.75 | 44.12 |
| Hydrophilic | 22.27 | 45.88 | 54.90 |

| Predicted \ Ground Truth | Positive | Negative |
|---|---|---|
| Positive | True Positive | False Positive |
| Negative | False Negative | True Negative |
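The confusion-matrix terms above are the usual inputs to precision and recall, and the abbreviation list names intersection over union (IoU) as the overlap measure between a predicted and a ground-truth box. The following is a minimal sketch that assumes the standard textbook definitions, not the authors' exact evaluation code. The `Box` type and the function names are hypothetical helpers introduced only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned bounding box in pixel coordinates (hypothetical helper)."""
    x1: float
    y1: float
    x2: float
    y2: float

    def area(self) -> float:
        # Degenerate or inverted boxes are treated as having zero area.
        return max(0.0, self.x2 - self.x1) * max(0.0, self.y2 - self.y1)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two boxes; 0.0 when they do not overlap."""
    inter = Box(
        max(a.x1, b.x1), max(a.y1, b.y1),
        min(a.x2, b.x2), min(a.y2, b.y2),
    ).area()
    union = a.area() + b.area() - inter
    return inter / union if union > 0 else 0.0


def precision(tp: int, fp: int) -> float:
    """Fraction of predicted positives that are correct: TP / (TP + FP)."""
    return tp / (tp + fp) if (tp + fp) else 0.0


def recall(tp: int, fn: int) -> float:
    """Fraction of ground-truth positives that are found: TP / (TP + FN)."""
    return tp / (tp + fn) if (tp + fn) else 0.0
```

In a typical detection evaluation, a prediction counts as a true positive when its IoU with a ground-truth box meets a chosen threshold (0.5 is a common choice, assumed here rather than taken from the source). Precision and recall are then computed from the resulting counts.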

| Object Size | XL | L | M | S | XS |
|---|---|---|---|---|---|
| Average object size [pixel²] | 40,009 | 11,508 | 2247.3 | 773.86 | 564.55 |
| Number of images in the dataset | 200 | 400 | 800 | 400 | 200 |


© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Kim, H.; Yang, Y.; Kim, Y.; Jang, D.-W.; Choi, D.; Park, K.; Chung, S.; Kim, D. Effect of Droplet Contamination on Camera Lens Surfaces: Degradation of Image Quality and Object Detection Performance. Appl. Sci. 2025, 15, 2690. https://doi.org/10.3390/app15052690
