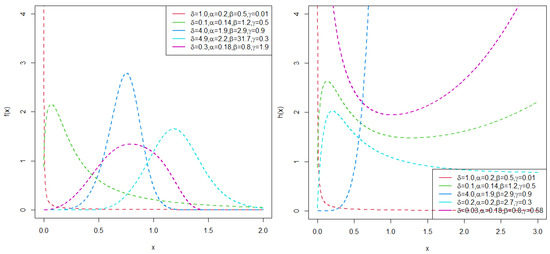

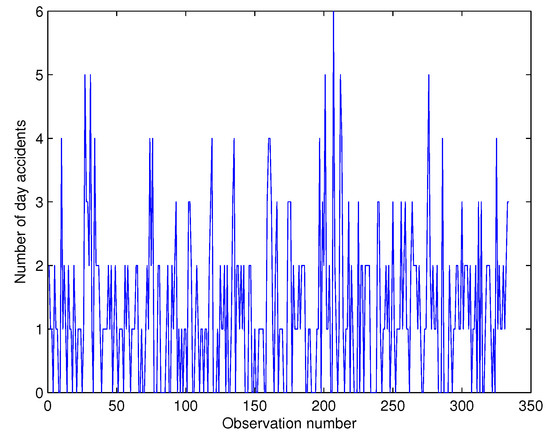

Bidirectional f-Divergence-Based Deep Generative Method for Imputing Missing Values in Time-Series Data

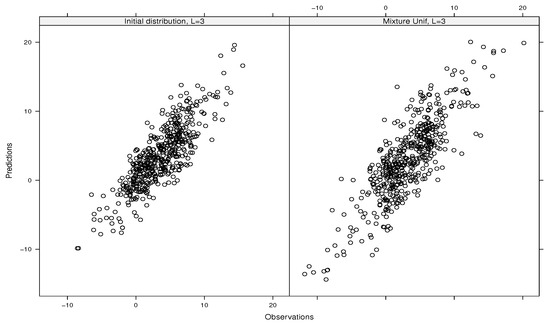

Maximum Penalized-Likelihood Structured Covariance Estimation for Imaging Extended Objects, with Application to Radio Astronomy

EEG Signal Analysis for Numerical Digit Classification: Methodologies and Challenges

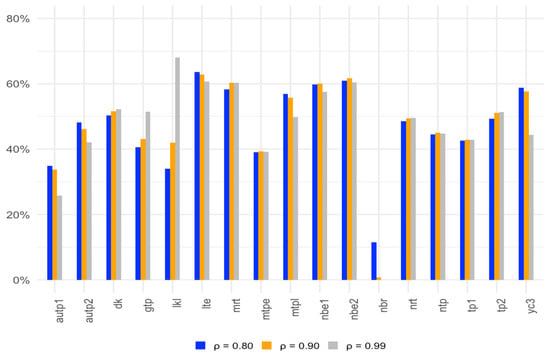

Comparing Robust Haberman Linking and Invariance Alignment

Journal Description

Stats

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within ESCI (Web of Science), Scopus, RePEc, and other databases.

- Rapid Publication: manuscripts are peer-reviewed, and a first decision is provided to authors approximately 19.7 days after submission; accepted papers are published within 3.9 days of acceptance (median values for papers published in this journal in the second half of 2024).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

Topics

Deadline: 31 October 2026

Special Issues

Deadline: 30 June 2025

Deadline: 31 October 2025

Deadline: 31 December 2025