Abstract

The common geometrical (symplectic) structures of classical mechanics, quantum mechanics, and classical thermodynamics are unveiled with three pictures. These cardinal theories, mainly in the non-relativistic approximation, are the cornerstones for studying chemical dynamics and chemical kinetics. Working in extended phase spaces, we show that the physical states of integrable dynamical systems are depicted by Lagrangian submanifolds embedded in phase space. Observable quantities are calculated by properly transforming the extended phase space onto a reduced space, and trajectories are integrated by solving Hamilton’s equations of motion. After defining a Riemannian metric, we can also estimate the length between two states. Local constants of motion are investigated by integrating Jacobi fields and solving the variational linear equations. After diagonalizing the symplectic fundamental matrix, the eigenvalues equal to one reveal the number of constants of motion. For conservative systems, geometrical quantum mechanics has proved that solving the Schrödinger equation in extended Hilbert space, which incorporates the quantum phase, is equivalent to solving Hamilton’s equations in the projective Hilbert space. In classical thermodynamics, we take entropy and energy as canonical variables to construct the extended phase space and to represent the Lagrangian submanifold. Hamilton’s and variational equations are written and solved in the same fashion as in classical mechanics. Solvers based on high-order finite differences for numerically solving Hamilton’s, variational, and Schrödinger equations are described. Employing the Hénon–Heiles two-dimensional nonlinear model, representative results for time-dependent, quantum, and dissipative macroscopic systems are shown to illustrate concepts and methods.
High-order finite-difference algorithms, despite their accuracy in low-dimensional systems, require substantial computer resources when they are applied to systems with many degrees of freedom, such as polyatomic molecules. We discuss recent research progress in employing Hamiltonian neural networks for solving Hamilton’s equations. It turns out that Hamiltonian geometry, shared by all physical theories, yields the necessary and sufficient conditions for the mutual assistance of humans and machines in deep-learning processes.

1. Introduction

Chemistry is now deeply rooted in the two fundamental physical theories, quantum and classical mechanics. Quantum chemistry and molecular dynamics computer programs are indispensable devices in almost all kinds of chemical research. Thermodynamics, also a vital theory for chemistry that lacked a genuine mathematical foundation for years, has acquired the necessary mathematical framework in the last two decades, which brings it to the same level as classical and quantum mechanics. On the other hand, the advancements in mathematics that occurred in the 20th century in the fields of differential geometry and topology have revealed common geometrical structures in all physical theories. These discoveries provide a deeper understanding of the physical theories per se and pave the way for their application in other scientific fields, such as chemistry.

The purpose of this article is to render an introductory and graphical presentation of common geometrical structures of the principal physical theories and the consequences they may have in chemistry, especially via their numerical applications. Specifically, after almost two centuries of evolution of Hamiltonian theory, a modern geometrical description emerged, and significant theorems and techniques for locating invariant structures in phase space, and thus constants of motion, have been found [1,2,3]. Chemical dynamics and spectroscopy have tremendously benefited from applying these methods to comprehend the behaviors of highly excited molecules [4,5].

It is essential to underline that the stage of action for molecular dynamics, within a classical mechanical approach, is the phase space and its tangent bundle, which means that both generalized coordinates and conjugate momenta should be taken into account. We also emphasize that in the 1980s, it was found that quantum and classical mechanics share some common geometrical properties, which explain similarities as well as differences. It was shown that instead of the usual algebraic linear quantum theory formulated either in the Schrödinger or Heisenberg picture, we can take the quantum analog of phase space to be the projective Hilbert space of the extended Hilbert space [6,7,8].

Earlier, in the 1970s, it was recognized that the mathematical framework for thermodynamics is the contact geometry [9,10] of the physical states embedded in an odd-dimensional state space [11,12,13,14,15,16,17]. However, at the beginning of the twenty-first century, Balian and Valentin [18] made a significant contribution by publishing a Hamiltonian theory of thermodynamics in an extended phase space. Based on Callen’s [19] formulation of thermodynamics, they produced a Hamiltonian theory for reversible and irreversible processes equivalent to classical mechanics. They studied homogeneous Hamiltonians with generalized coordinates, the complete set of extensive properties (i.e., entropy, internal energy, volume, particle numbers, etc.) and conjugate momenta proportional to intensive properties (i.e., temperature, pressure, chemical potential, etc.), either in the entropy or energy representation. Gibbs’s fundamental equation was employed to describe the physical state manifold. This work triggered numerous studies, the results of which have revealed several geometrical properties common to classical and quantum mechanics [20,21,22,23,24].

It turns out that the mathematical abstraction and generalization of geometrical Hamiltonian theory in extended phase space lead to a common computational platform for working in both the microscopic and macroscopic worlds. The aim of the present article is to highlight that the Hamiltonian theories of classical mechanics, quantum mechanics, and thermodynamics share common geometrical properties in extended phase space, and that these properties form the foundations for formulating and comprehending chemical dynamics and chemical kinetics.

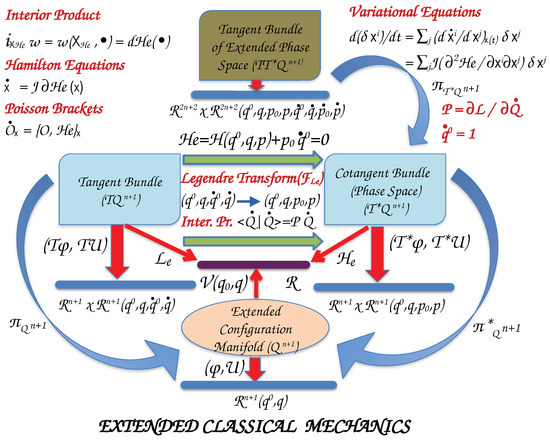

In Section 2, we present, with the help of two pictures, the Hamiltonian theories of classical and quantum mechanics. Similarly, in Section 3, we discuss Hamiltonian thermodynamics in contemporary mathematical language that unveils the geometrical properties of this theory. In Section 4, we describe numerical algorithms for solving Hamilton’s equations of motion and variational equations, common to the three principal theories. We mainly focus on high-order finite-difference (FD) methods and their relation to pseudospectral methods (PS) [25]. Furthermore, to illustrate the mathematical concepts introduced in the previous sections, we have performed numerical calculations with a rather simple two-dimensional nonlinear model, that of Hénon–Heiles [26]. We deduce that high-order finite-difference methods based on the Lagrangian interpolation polynomials are appropriate for solving initial value problems, as well as partial differential equations, such as the Schrödinger equation, necessary in chemical theories. Finally, the conclusions are summarized in Section 5, where recent research on Hamiltonian neural networks (HNN) is discussed. Proofs for some equations, tables that summarize results in Hamiltonian thermodynamics, and an example of formulating a chemical kinetic model with thermodynamic Hamiltonian theory are presented in Appendix A.

2. Geometrical Structures in Chemical Dynamics

Molecules are sets of nuclei and electrons interacting with Coulomb forces. Usually, their quantum mechanical treatment is obtained by solving the Schrödinger equation in the Born–Oppenheimer approximation [27] that separates the electronic from the nuclear motion. The electronic energies are calculated by freezing the nuclei at specific configurations, which produces the adiabatic potential energy surface for each electronic state.

These molecular potentials, also called potential energy surfaces, are employed to solve the nuclear equations of motion either in quantum mechanics or in classical mechanics. It is, thus, important to investigate common geometrical structures in the foundations of the two basic theories of physics, which in turn may assist in the numerical solution of the corresponding equations of motion. In the following two subsections, we examine the topological and geometrical properties of classical and quantum mechanics, whereas in Section 3, the relatively new Hamiltonian formulation of thermodynamics is reviewed, all of them in extended phase spaces and in the non-relativistic approximation.

2.1. Canonical Classical Mechanics

2.1.1. Manifolds and Maps

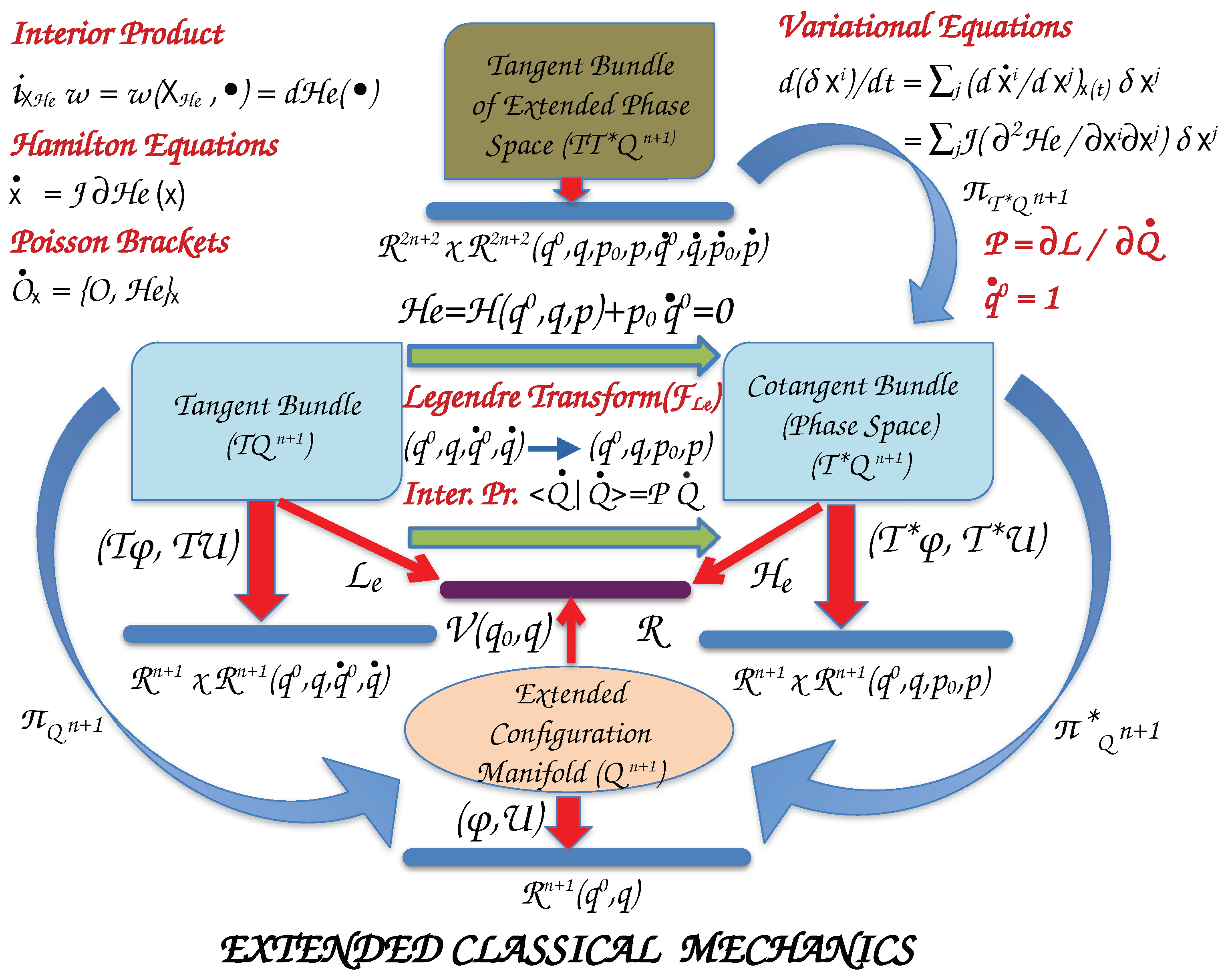

We introduce the basic geometrical concepts of classical mechanics for time-dependent systems by elucidating the graphics of Figure 1. Starting from the bottom and moving upwards, we denote the set of configurations of the system with $n$ degrees of freedom (DOF) with the column vector $q = (q^1, \dots, q^n)^T$ (the symbol $T$ denotes the transpose of a matrix; thus, a row vector becomes a column vector, and vice versa), and the parameter (time) with $q^0 = t$. Capital letters designate the set of $n+1$ coordinates as a column vector, $Q = (q^0, q^1, \dots, q^n)^T$. These coordinates parametrize the Extended Configuration Manifold. Generally, this is a smooth (differentiable) nonlinear manifold.

Figure 1.

Manifolds and functions which determine the geometrical structures of a classical system with coordinates. denotes a parameter and, specifically, the time for time-dependent systems. are the n coordinates that define the configurations of the system and their canonical conjugate momenta. Details are given in the text.

Manifolds generalize the geometrical objects of curves and surfaces in three-dimensional Euclidean space to spaces of arbitrary dimension. It took more than two centuries to develop the current mathematical definition of a manifold, since it required the parallel development of various other branches of mathematics, such as topology, geometry, and algebra [28].

The extended configuration space can be described locally by a coordinate system (chart) Q, i.e., a homeomorphism

of an open set U of onto an open set of a Euclidean space of dimension .

In Euclidean space, we understand the definition of a coordinate system in as

for every point , and are the canonical projections taken to be differentiable functions. Transition maps provide the transformation from one coordinate system to another for points that belong to the intersection of two different open subsets.

The tangent space of at a point is a vector space, and the union of all tangent spaces for all points s of form the tangent bundle with the extended configuration space to be the base space

The tangent bundle contains both the configuration manifold and its tangent spaces called the fibers, and it is a smooth manifold of dimension . Since is also a smooth manifold, a chart is defined by the diffeomorphism

Each coordinate system from the atlas of induces a coordinate system for . This chart is said to be the bundle chart associated with . The velocities live in this space.

The potential function, , is a function on the configuration manifold to real numbers. The Lagrangian, , is a function on the tangent bundle to real numbers.

The dual space of (the set of all linear maps on tangent bundle to real numbers) is the cotangent bundle (), also named phase space. The phase space is a differentiable manifold of dimension for which the tangent bundle can also be defined with charts described by the generalized coordinates (), the conjugate momenta (Notice that we use superscripts for coordinates and subscripts for momenta.) , and their velocities, where

The Extended Hamiltonian, , is a function on the phase space to real numbers, , obtained by a Legendre transformation () of the Lagrangian. We may consider that the Legendre transformation generates a differentiable map between the tangent and cotangent bundles of , . and are canonical projections to extended configuration manifold of tangent and cotangent bundles, respectively. The tangent bundle of phase space is denoted by and is the canonical projection to phase space.

For a system of particles with $n$ DOF, we define the Lagrangian as the difference between kinetic energy and potential energy (summation over repeated indices is implied)

$$L(q, \dot q) = \frac{1}{2}\, g_{ij}(q)\, \dot q^i \dot q^j - V(q),$$

with $g_{ij}$ the kinetic metric tensor, written as a function of the coordinates $q$ and the particle masses $m$. The metric is the non-degenerate, symmetric, covariant rank-2 tensor that defines the kinetic energy. The momentum $p_i$ is the covector of the velocity $\dot q^i$,

$$p_i = \frac{\partial L}{\partial \dot q^i} = g_{ij}\, \dot q^j,$$

which is a map from the tangent bundle to the cotangent bundle. Obviously, for a diagonal unit metric, $g_{ij} = \delta_{ij}$, momenta are equal to velocities.

The velocities can also be extracted from the momenta by the inverse tensor

$$\dot q^i = g^{ij}\, p_j,$$

where $g^{ik} g_{kj} = \delta^i_j$ (the components of the Kronecker delta tensor, $\delta^i_j$, are equal to 1 for $i = j$ and 0 for $i \neq j$).
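The relation between velocities and momenta can be illustrated numerically. The following is a minimal sketch, assuming a hypothetical two-DOF system with a constant, non-diagonal kinetic metric; the numerical values and the function names are illustrative and are not taken from the article.

```python
# Sketch: momenta as covectors of the velocities, p_i = g_ij v^j,
# and recovery of the velocities with the inverse metric g^ij.
# The 2x2 metric below is a hypothetical example, not a molecular one.

def contract(matrix, vector):
    """Return w_i = sum_j matrix[i][j] * vector[j]."""
    n = len(vector)
    return [sum(matrix[i][j] * vector[j] for j in range(n)) for i in range(n)]

def inverse_2x2(g):
    """Return the inverse of a non-degenerate 2x2 matrix."""
    det = g[0][0] * g[1][1] - g[0][1] * g[1][0]
    if det == 0.0:
        raise ValueError("the metric must be non-degenerate")
    return [[g[1][1] / det, -g[0][1] / det],
            [-g[1][0] / det, g[0][0] / det]]

def kinetic_energy(metric, components):
    """Return 1/2 * metric_ij * c^i * c^j (works for g with v, or g^-1 with p)."""
    lowered = contract(metric, components)
    return 0.5 * sum(a * b for a, b in zip(lowered, components))

g = [[2.0, 0.5], [0.5, 1.0]]      # covariant metric g_ij (symmetric)
v = [0.3, -1.2]                   # velocities
p = contract(g, v)                # momenta p_i = g_ij v^j
g_inv = inverse_2x2(g)            # contravariant metric g^ij
v_back = contract(g_inv, p)       # velocities recovered from the momenta

t_from_v = kinetic_energy(g, v)       # T written with velocities
t_from_p = kinetic_energy(g_inv, p)   # T written with momenta
```

Both expressions of the kinetic energy agree, which is the numerical counterpart of the Legendre map between the tangent and cotangent bundles.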

Momenta may also be considered to be forms which act on the tangent bundle to real numbers, , by taking the interior product (contraction) of forms with vector fields. Employing Dirac’s notation, we write

If is the Hamiltonian of a system of particles with DOF, then the extended Hamiltonian, , is defined with the Legendre transformation (Notice that in chemical thermodynamics the Legendre transformation is defined as the difference between the function and the sum of products of conjugate variables).

The physical states are obtained by imposing the two constraints

which result in

Hence, the time-extended system is a conservative system with Hamiltonian .

2.1.2. Equations of Motion

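We collect the generalized coordinates and their conjugate momenta into a single column vector of dimension $2n$, $x = (q, p)^T$. Hamilton’s principle of stationary action leads to Hamilton’s equations of motion. Then, Hamilton’s equations with a Hamiltonian $H$ are written in the form

$$\dot q^i = \frac{\partial H}{\partial p_i}, \qquad \dot p_i = -\frac{\partial H}{\partial q^i},$$

or

$$\dot x = J\, \nabla H(x),$$

where $\nabla H$ is the gradient of the Hamiltonian function, and $J$ the symplectic matrix. $J$ is the map on the tangent bundle of phase space $M$

$$J = \begin{pmatrix} 0_n & I_n \\ -I_n & 0_n \end{pmatrix}.$$

$0_n$ and $I_n$ are the zero and unit matrices, respectively. It is proved that $J$ satisfies the relations

$$J^{-1} = J^T = -J, \qquad J^2 = -I_{2n}.$$

Thus, the Hamiltonian vector field is

$$X_H = J\, \nabla H,$$

or, using the coordinate base in the tangent bundle of phase space, we write

$$X_H = \frac{\partial H}{\partial p_i} \frac{\partial}{\partial q^i} - \frac{\partial H}{\partial q^i} \frac{\partial}{\partial p_i},$$

which lives in the tangent space of phase space.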
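To make the matrix form of Hamilton’s equations concrete, the following minimal sketch integrates the Hénon–Heiles model with the vector field written as the symplectic matrix acting on the gradient of the Hamiltonian. It assumes unit masses and frequencies and the ordering $x = (q^1, q^2, p_1, p_2)$, and it uses a classical fourth-order Runge–Kutta step as a stand-in; it is not the high-order finite-difference solver described in the article.

```python
# Sketch: dx/dt = J grad H(x) for the Henon-Heiles Hamiltonian
# H = (p1^2 + p2^2)/2 + (q1^2 + q2^2)/2 + q1^2 q2 - q2^3/3.
# Assumptions: unit masses/frequencies, x = (q1, q2, p1, p2), RK4 integrator.

# Symplectic matrix J = [[0, I], [-I, 0]] for two degrees of freedom.
J = [[0.0, 0.0, 1.0, 0.0],
     [0.0, 0.0, 0.0, 1.0],
     [-1.0, 0.0, 0.0, 0.0],
     [0.0, -1.0, 0.0, 0.0]]

def matvec(a, v):
    return [sum(a[i][j] * v[j] for j in range(len(v))) for i in range(len(a))]

def matmul(a, b):
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def hamiltonian(x):
    q1, q2, p1, p2 = x
    return (0.5 * (p1 * p1 + p2 * p2) + 0.5 * (q1 * q1 + q2 * q2)
            + q1 * q1 * q2 - q2 ** 3 / 3.0)

def grad_hamiltonian(x):
    """Gradient (dH/dq1, dH/dq2, dH/dp1, dH/dp2)."""
    q1, q2, p1, p2 = x
    return [q1 + 2.0 * q1 * q2, q2 + q1 * q1 - q2 * q2, p1, p2]

def vector_field(x):
    """Hamiltonian vector field J grad H."""
    return matvec(J, grad_hamiltonian(x))

def rk4_step(f, x, h):
    k1 = f(x)
    k2 = f([xi + 0.5 * h * ki for xi, ki in zip(x, k1)])
    k3 = f([xi + 0.5 * h * ki for xi, ki in zip(x, k2)])
    k4 = f([xi + h * ki for xi, ki in zip(x, k3)])
    return [xi + h * (a + 2.0 * b + 2.0 * c + d) / 6.0
            for xi, a, b, c, d in zip(x, k1, k2, k3, k4)]

def integrate(x0, h, steps):
    x = list(x0)
    for _ in range(steps):
        x = rk4_step(vector_field, x, h)
    return x

x0 = [0.0, 0.1, 0.3, 0.0]               # illustrative initial condition
x1 = integrate(x0, 0.01, 1000)          # propagate to t = 10
energy_drift = abs(hamiltonian(x1) - hamiltonian(x0))
J_squared = matmul(J, J)                # should equal minus the unit matrix
```

For this autonomous model the energy is a constant of motion, so `energy_drift` measures only the integration error; `J_squared` checks the relation between the symplectic matrix and the unit matrix.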

In a more general approach, we can extract the Hamiltonian vector field as follows. In the cotangent space of extended coordinate manifold, let us denote with the form acting on the phase space manifold

and with the forms on the configuration manifold

Since is a linear map from to M and an form on M we can pull-back to to produce the form , which lives on the base manifold . Then, the canonical Poincaré form satisfies the relation (tautological form)

Hence, we can expand as

is invariant under coordinate transformations

which we assume to be invertible

This is proved by arguing as follows. The velocities are given by

The new momenta are

Hence,

The canonical Symplectic form is extracted by taking the exterior derivative (Notice the negative sign in our formulation) of

This is a non-degenerate, skew-symmetric, closed form (). In local coordinates , is expressed by the wedge products

If we introduce , the symplectic form (Equation (29)) is written

A pair , i.e., the phase space with the symplectic form, is said to be a symplectic manifold. Those local coordinates which satisfy, , are said to be canonical and symplectic. In the following, we shall see that Hamiltonian mechanics and its geometrical properties can be formulated by .

Let be a symplectic manifold of dimension with a canonical symplectic form. The Hamiltonian function is a smooth function on the phase space. The Hamiltonian vector field, , (Equations (17) and (18)) is then defined via the relationship

symbolizes interior product (contraction) and the triple is a Hamiltonian system. In particular, for the variable we extract the equation

We can see that with this formulation of time-dependent systems and taking into account the constraints Equations (11) and (12), the trajectories are described at each time t in the physical phase space of the system of dimension. With Hamilton’s equations of motion with the time-dependent Hamiltonian are written

For a smooth function on phase space, the Poisson bracket is defined as

Since

or employing the coordinate base in the tangent space of phase space

we write the Poisson brackets in a coordinate system as

We have used

where is the Kronecker’s delta tensor.

The Lie derivative of a dynamical quantity with respect to the Hamiltonian vector field is defined as the directional derivative of along the vector

So,

From the above equation, we infer that for a conserved quantity $f$, i.e., $df/dt = 0$, the Poisson bracket with the Hamiltonian vanishes, $\{f, H\} = 0$.

Applying the above equation to coordinates and conjugate momenta, , we extract Hamilton’s equations

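Poisson brackets defined on the set of smooth functions on $M$ satisfy the following properties. For $f, g, h \in C^\infty(M)$, the bracket

- $\{f, g\}$ is bilinear,

- antisymmetric, $\{f, g\} = -\{g, f\}$,

- satisfies the Leibniz rule, $\{fg, h\} = f\{g, h\} + \{f, h\}\, g$, and

- $\{f, \{g, h\}\} + \{g, \{h, f\}\} + \{h, \{f, g\}\} = 0$ (Jacobi identity).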
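The coordinate expression of the Poisson bracket is easy to evaluate numerically. The sketch below is an illustration under stated assumptions: it adopts the common sign convention $\{f, g\} = \sum_i (\partial f/\partial q^i\, \partial g/\partial p_i - \partial f/\partial p_i\, \partial g/\partial q^i)$, which may differ from the article’s convention by an overall sign, it approximates derivatives with central differences, and it uses a hypothetical isotropic two-dimensional oscillator as the test system.

```python
# Sketch: numerical Poisson bracket with x = (q1, ..., qn, p1, ..., pn).
# Assumed convention: {f, g} = sum_i df/dq_i dg/dp_i - df/dp_i dg/dq_i.

def partial(f, x, k, h=1e-5):
    """Central-difference approximation of df/dx_k at the point x."""
    xp, xm = list(x), list(x)
    xp[k] += h
    xm[k] -= h
    return (f(xp) - f(xm)) / (2.0 * h)

def poisson_bracket(f, g, x):
    n = len(x) // 2
    total = 0.0
    for i in range(n):
        total += (partial(f, x, i) * partial(g, x, n + i)
                  - partial(f, x, n + i) * partial(g, x, i))
    return total

def oscillator(x):
    """Isotropic 2D harmonic oscillator (illustrative Hamiltonian)."""
    q1, q2, p1, p2 = x
    return 0.5 * (p1 * p1 + p2 * p2 + q1 * q1 + q2 * q2)

def angular_momentum(x):
    q1, q2, p1, p2 = x
    return q1 * p2 - q2 * p1

point = [0.4, -0.3, 0.7, 0.2]
canonical = poisson_bracket(lambda x: x[0], lambda x: x[2], point)   # {q1, p1}
conserved = poisson_bracket(angular_momentum, oscillator, point)     # {L, H}
forward = poisson_bracket(angular_momentum, lambda x: x[0], point)   # {L, q1}
backward = poisson_bracket(lambda x: x[0], angular_momentum, point)  # {q1, L}
```

Here `canonical` reproduces the fundamental bracket of a conjugate pair, `conserved` vanishes because the angular momentum is a constant of motion of the isotropic oscillator, and `forward` and `backward` differ only in sign, as required by antisymmetry.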

2.1.3. Integrable Hamiltonian Systems

In classical mechanics, a finite-dimensional Hamiltonian system with $n$ DOF is completely integrable if it admits $n$ independent constants of motion that are in involution, i.e., whose Poisson brackets pairwise vanish.

Let the integrals of motion be $F_1, \dots, F_n$, assigned the values $c_1, \dots, c_n$. Then, the corresponding level set, $\{x \in M : F_i(x) = c_i,\ i = 1, \dots, n\}$, is a Lagrangian submanifold.

In more formal wording, for an even-dimensional phase space $M$ of dimension $2n$, whose symplectic form $\omega$ satisfies the non-degeneracy (volume form) condition $\omega^n \neq 0$, the condition that $\omega$ vanishes when restricted to an $n$-dimensional submanifold determines the Lagrangian submanifold.

For compact phase spaces, the Lagrangian submanifolds have the structure of a torus, . Moreover, in a neighborhood of every such invariant torus, one can find angle-action coordinates ,

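In such a coordinate system, the Hamiltonian function depends only on the actions $I$, i.e., $H = H(I)$, and Hamilton’s equations give

$$\dot\phi_i = \frac{\partial H}{\partial I_i} = \nu_i(I), \qquad \dot I_i = -\frac{\partial H}{\partial \phi_i} = 0.$$

$\nu_i$ are the normal frequencies, and the action variables $I_i$ are constants. The Poisson brackets of the action coordinates pairwise commute, $\{I_i, I_j\} = 0$, and also satisfy $\{I_i, H\} = 0$.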
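As a worked illustration of action-angle coordinates, the sketch below treats a one-dimensional harmonic oscillator, $H = (p^2 + \nu^2 q^2)/2$, for which the action is $I = H/\nu$ and the angle advances linearly in time. The specific transformation and the function names are assumptions made for this example; they are the textbook choice for this model rather than formulas quoted from the article.

```python
# Sketch: action-angle variables for H = (p^2 + nu^2 q^2) / 2.
# Assumed transformation: q = sqrt(2 I / nu) sin(phi), p = sqrt(2 I nu) cos(phi).
import math

def to_action_angle(q, p, nu):
    action = (p * p + nu * nu * q * q) / (2.0 * nu)
    angle = math.atan2(nu * q, p)
    return action, angle

def from_action_angle(action, angle, nu):
    q = math.sqrt(2.0 * action / nu) * math.sin(angle)
    p = math.sqrt(2.0 * action * nu) * math.cos(angle)
    return q, p

def evolve(q0, p0, nu, t):
    """Propagate by keeping the action fixed and advancing the angle by nu * t."""
    action, angle = to_action_angle(q0, p0, nu)
    return from_action_angle(action, angle + nu * t, nu)

def exact_solution(q0, p0, nu, t):
    """Closed-form solution of the oscillator in the original coordinates."""
    c, s = math.cos(nu * t), math.sin(nu * t)
    return q0 * c + (p0 / nu) * s, p0 * c - nu * q0 * s

nu, q0, p0, t = 1.5, 0.4, -0.7, 2.3     # illustrative values
qa, pa = evolve(q0, p0, nu, t)
qe, pe = exact_solution(q0, p0, nu, t)
action_before, _ = to_action_angle(q0, p0, nu)
action_after, _ = to_action_angle(qa, pa, nu)
```

The trajectory obtained by rotating the angle coincides with the closed-form solution, and the action is unchanged, which is the content of Hamilton’s equations in these coordinates.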

2.1.4. Complexification of Classical Hamilton’s Equations

Hamilton’s equations in classical mechanics can also be cast in a complex manifold by complexification of phase space, i.e., by introducing the complex transformation

Similarly, in Section 2.2.3 by realification of the quantum Hilbert space, we bring the Schrödinger equation into the form of Hamilton’s equations.

To make the complex transformation canonical, we define the complex variables

The inverse transformation gives the real functions

Because Hamiltonians are symmetric under the unitary group, i.e., the Hamiltonian should be invariant under a unitary transformation, we write the canonical Poincaré form as

and thus, the canonical symplectic form becomes

For example, writing a harmonic oscillator in scaled normal coordinates and introducing these canonical complex coordinates results in

Since the transformation to complex coordinates and conjugate momenta is symplectic, Hamilton’s equations are also written as

where is the Hamiltonian in complex coordinates.
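The complexified equations can be checked on the harmonic oscillator. The following minimal sketch assumes the scaled Hamiltonian $H = \omega (q^2 + p^2)/2$ and the complex variable $z = (q + i p)/\sqrt{2}$, so that $H = \omega z \bar z$ and the equation of motion reads $i \dot z = \partial H/\partial \bar z = \omega z$. This normalization is an assumption for illustration and may differ from the article’s definition by constant factors.

```python
# Sketch: harmonic oscillator in complex canonical coordinates.
# Assumptions: H = omega (q^2 + p^2) / 2 and z = (q + i p) / sqrt(2).
import cmath
import math

def to_complex(q, p):
    return complex(q, p) / math.sqrt(2.0)

def to_real(z):
    return math.sqrt(2.0) * z.real, math.sqrt(2.0) * z.imag

def hamiltonian_complex(z, omega):
    return omega * (z * z.conjugate()).real

def evolve_complex(z0, omega, t):
    """Solution of i dz/dt = omega z."""
    return z0 * cmath.exp(-1j * omega * t)

def evolve_real(q0, p0, omega, t):
    """Solution of dq/dt = omega p, dp/dt = -omega q."""
    c, s = math.cos(omega * t), math.sin(omega * t)
    return q0 * c + p0 * s, p0 * c - q0 * s

omega, q0, p0, t = 0.8, 1.1, -0.5, 3.0   # illustrative values
z_t = evolve_complex(to_complex(q0, p0), omega, t)
q_c, p_c = to_real(z_t)
q_r, p_r = evolve_real(q0, p0, omega, t)
h_real = 0.5 * omega * (q0 * q0 + p0 * p0)
h_complex = hamiltonian_complex(z_t, omega)
```

The complex trajectory, mapped back to real coordinates, agrees with the real solution, and the Hamiltonian keeps its initial value along the flow.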

2.1.5. Jacobi Fields and Variational Equations

Geodesic curves are obtained by searching for the critical points of the length functional, i.e., by requiring

is the infinitesimal length of the curve given by the norm of the velocity vector.

The same critical points are obtained by varying the integrand instead

It is worth noting that the above integral depends on the parameter t. This integral is related to the action or the energy of a physical system. Indeed, Hamilton’s principle of stationary action results in Hamilton’s equations, the solutions of which are geodesics on the phase space manifold.

Let C be a trajectory in phase space with kinetic metric g

From the above equations, we deduce that the coefficients result from the action of the metric on the base coordinates of the tangent space

The length of a trajectory starting from the point and finishing at the point is calculated as the line integral

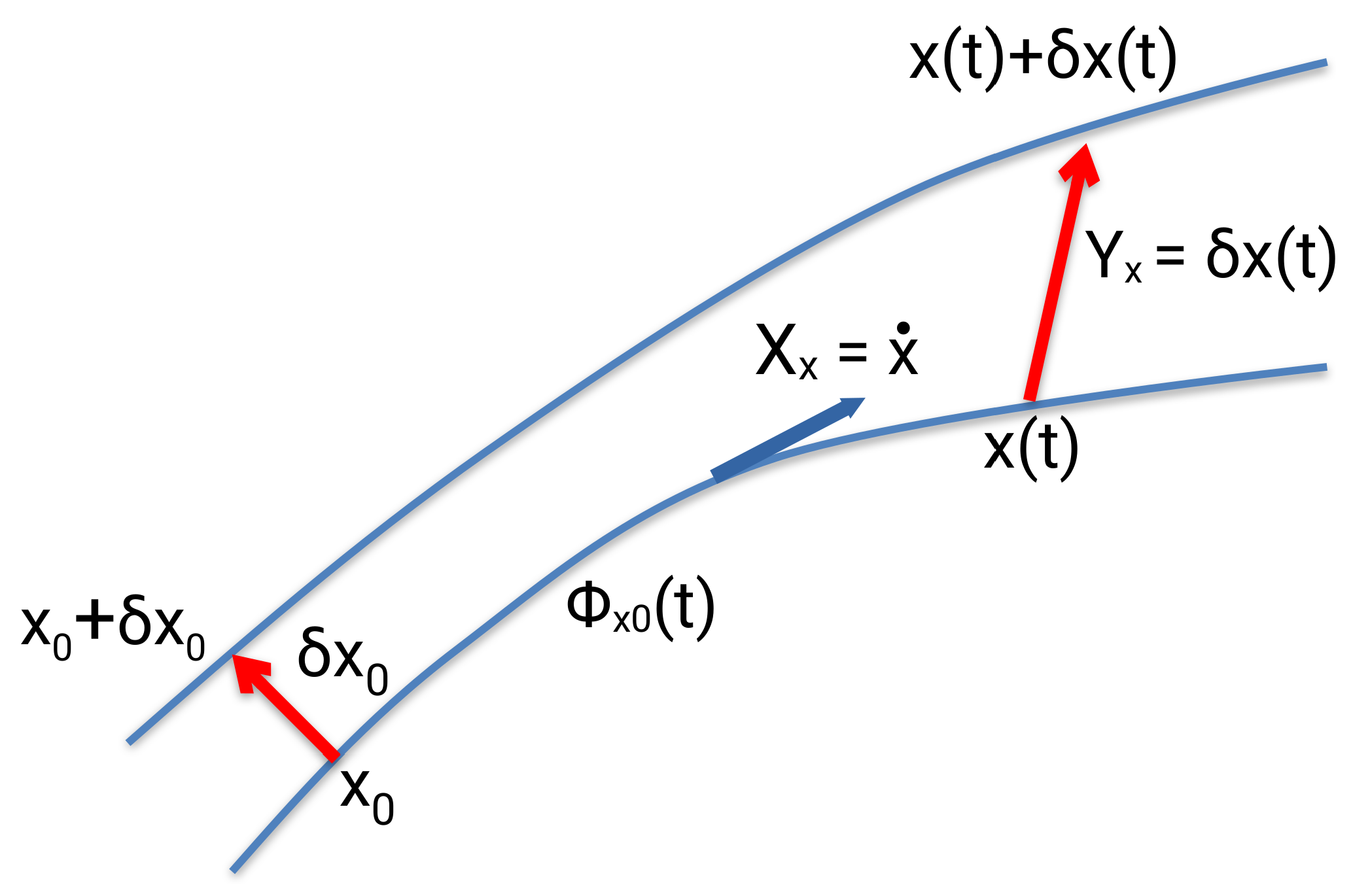

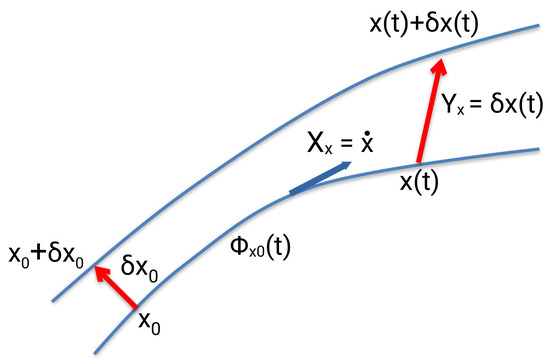

In case we want to investigate the behavior of neighboring trajectories to a reference one in phase space, we examine the time evolution of the variation vector , (Figure 2). The time derivative of the vector field with respect to the vector field (Lie derivative) is equal to

J is the symplectic matrix (Equation (15)). The higher order term (h.o.t.) is a function of the displacement at time t, which contains all the terms in the Taylor expansion larger than the first order. The derivatives are computed at the reference trajectory with initial conditions . is a Jacobi field and the equations

the variational equations.

Figure 2.

The description of the variation vector field, , with respect to a reference trajectory with vector field and initial condition . denotes the Hamiltonian flow.

The fundamental matrix satisfies the variational equations with initial condition . It is a symplectic matrix, and therefore, the following equations are valid [3]

It is proved that if $\lambda$ is an eigenvalue of a real symplectic matrix, so are $1/\lambda$, $\lambda^*$, and $1/\lambda^*$, where $\lambda$ and $\lambda^*$ are complex conjugate numbers. Also, for every constant of motion, two eigenvalues of the fundamental matrix are equal to one. For proofs and applications see references [4,5,29,30].
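The symplectic character of the fundamental matrix can be verified numerically. The sketch below is a minimal example under stated assumptions: it uses a pendulum, $H = p^2/2 - \cos q$, as a hypothetical one-DOF system, integrates the trajectory together with the variational equations using a fourth-order Runge–Kutta step, and then checks the symplectic condition and the reciprocal pairing of the eigenvalues.

```python
# Sketch: variational equations dM/dt = J Hess(H) M for H = p^2/2 - cos(q).
# State vector: (q, p, m11, m12, m21, m22), with M(0) the unit matrix.
import cmath
import math

def rhs(state):
    q, p, m11, m12, m21, m22 = state
    k = -math.cos(q)                     # J Hess(H) = [[0, 1], [k, 0]]
    return [p, -math.sin(q), m21, m22, k * m11, k * m12]

def rk4_step(f, x, h):
    k1 = f(x)
    k2 = f([xi + 0.5 * h * ki for xi, ki in zip(x, k1)])
    k3 = f([xi + 0.5 * h * ki for xi, ki in zip(x, k2)])
    k4 = f([xi + h * ki for xi, ki in zip(x, k3)])
    return [xi + h * (a + 2.0 * b + 2.0 * c + d) / 6.0
            for xi, a, b, c, d in zip(x, k1, k2, k3, k4)]

def propagate(q0, p0, h, steps):
    state = [q0, p0, 1.0, 0.0, 0.0, 1.0]
    for _ in range(steps):
        state = rk4_step(rhs, state, h)
    return state

def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def symplectic_defect(m):
    """Largest entry of M^T J M - J, with J = [[0, 1], [-1, 0]]."""
    j = [[0.0, 1.0], [-1.0, 0.0]]
    mt = [[m[0][0], m[1][0]], [m[0][1], m[1][1]]]
    mtjm = matmul(mt, matmul(j, m))
    return max(abs(mtjm[r][c] - j[r][c]) for r in range(2) for c in range(2))

final = propagate(1.0, 0.0, 0.005, 2000)            # illustrative orbit, t = 10
M = [[final[2], final[3]], [final[4], final[5]]]
det_M = M[0][0] * M[1][1] - M[0][1] * M[1][0]
trace_M = M[0][0] + M[1][1]
root = cmath.sqrt(trace_M * trace_M - 4.0 * det_M)
lam1, lam2 = (trace_M + root) / 2.0, (trace_M - root) / 2.0
defect = symplectic_defect(M)
```

Up to integration error, `defect` vanishes and `lam1 * lam2` equals one, so the two eigenvalues form a reciprocal pair, as expected for a symplectic matrix.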

2.2. Geometrical Quantum Mechanics

2.2.1. Manifolds and Maps

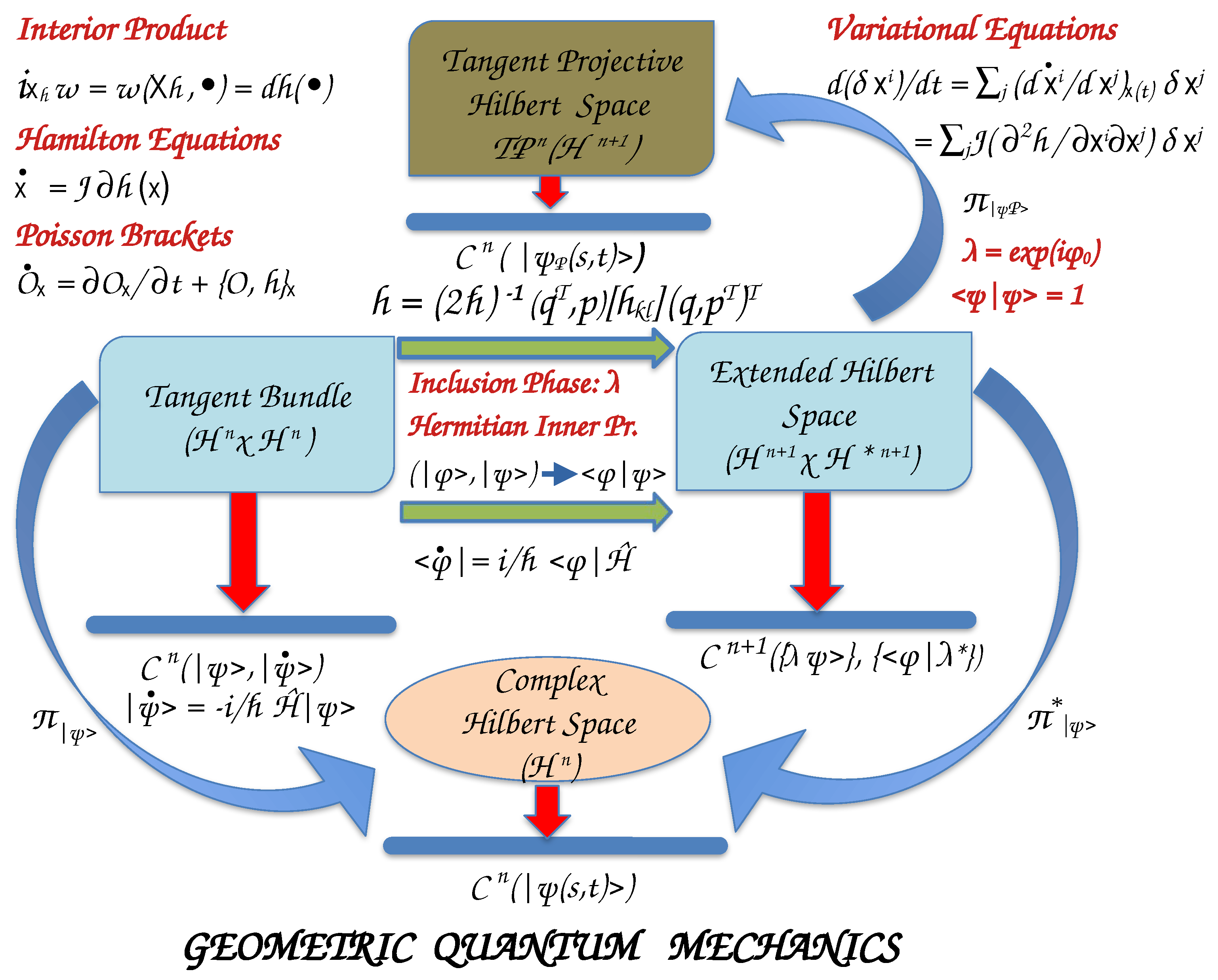

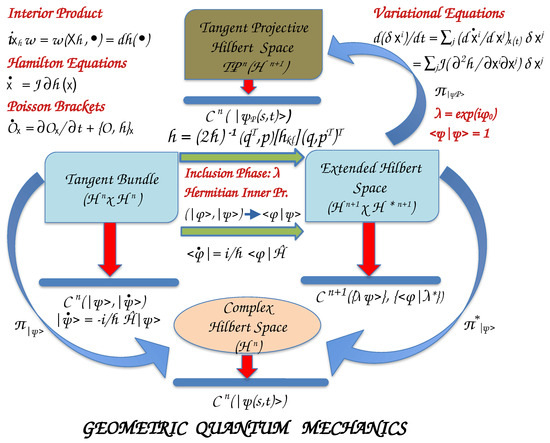

Moving to the quantum world, the states of a system are described by complex vectors, , with s to be the configuration coordinates and t the time instead of the real coordinates and momenta (or velocities) in classical mechanics. The square of the norm of state vectors is interpreted as a probability density, with to be the probability of finding the system in the coordinate intervals at time t. The observable quantities, which are real-valued functions on phase space in classical mechanics, are replaced by operators that designate linear transformations on the complex state vector space, named Hilbert space of dimension n () (Figure 3). Infinite-dimensional Hilbert spaces for quantum systems also exist.

Figure 3.

Manifolds and functions which determine the geometrical structures of a quantum system. The states of the quantum system are the elements of a dimensional complex vector space (Hilbert space that includes the vectors , their complex conjugate , and linear transformations . Hermitian inner products, , are employed for Hilbert spaces. The tangent bundle is mapped to the Extended Hilbert Space by the inclusion of a complex phase , the elements of the unitary group , that produces the rays . The canonical projection, , projects the rays in onto the Projective Hilbert Space , the space where the physical states of the system live. Details are given in the text.

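The time evolution of the quantum states is given by Schrödinger’s equation

$$i\hbar\, \frac{\partial \psi}{\partial t} = \hat H \psi, \qquad \text{i.e.,} \qquad \frac{\partial \psi}{\partial t} = -\frac{i}{\hbar}\, \hat H \psi \equiv X_{\hat H}(\psi),$$

where $i = \sqrt{-1}$, ℏ is the reduced Planck constant, $\hat H$ is the Hamiltonian operator of the system that corresponds to its energy, and $X_{\hat H}$ is the Hamiltonian–Schrödinger vector field. To any observable $A$, we assign the operator $\hat A$ and the Schrödinger vector field $X_{\hat A}(\psi) = -(i/\hbar)\, \hat A \psi$. Hence, we may consider the vectors $\psi$ as vector fields, and thus, the Hilbert space to be also the tangent space at the state $\psi$.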

The cotangent space of the Hilbert space, , at state is , with covectors to be the complex conjugate functionals , and .

(We use Dirac’s (bra, ket) notation.) denotes the Hermitian inner product, ().

Separating the real and imaginary parts of the Hermitian inner product, we write

are the real and the imaginary components, respectively, of the kth component of the complex vectors. G is a Riemannian metric, i.e., it is real, positive definite, and strongly non-degenerate

is a symplectic (antisymmetric) closed form, and strongly non-degenerate

Both act on the tangent bundle .

Since the physical interpretation of a quantum state is probabilistic, i.e., for a pure state (the states of an isolated system), the following normalization condition should be satisfied

The observables, or measurable quantities of the system, , are represented by self-adjoint linear operators, , on , and are thus vector fields. The expectation value of an observable with operator at the state is the real-valued function

In particular, for the Hamiltonian operator of the system, we write

2.2.2. Projective Hilbert Space

For any nonzero factor $c$, such as the phase $e^{i\phi}$, the vector $c\,\psi$ yields the same expectation value for an observable as does $\psi$. The phases $e^{i\phi}$ are the elements of the unitary Lie group, $U(1)$. Thus, the inclusion of these phases in the initial Hilbert space results in the extended Hilbert space, which is isomorphic to a complex vector space with a Hermitian inner product.
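The invariance of expectation values along a ray can be demonstrated directly. The sketch below assumes a hypothetical two-level system with an arbitrary Hermitian matrix as the observable and defines the expectation value as $\langle\psi|\hat A|\psi\rangle / \langle\psi|\psi\rangle$, so that it is well defined for unnormalized vectors as well.

```python
# Sketch: the expectation value is constant on a ray {c * psi, c != 0}.
# The 2x2 Hermitian matrix and the state are illustrative choices.
import cmath

def inner(u, v):
    """Hermitian inner product <u|v>, antilinear in the first argument."""
    return sum(a.conjugate() * b for a, b in zip(u, v))

def apply(matrix, v):
    return [sum(matrix[i][j] * v[j] for j in range(len(v)))
            for i in range(len(matrix))]

def expectation(matrix, psi):
    return (inner(psi, apply(matrix, psi)) / inner(psi, psi)).real

A = [[1.0 + 0.0j, 1.0 - 2.0j],
     [1.0 + 2.0j, -0.5 + 0.0j]]          # Hermitian: A[i][j] == conj(A[j][i])
psi = [0.6 + 0.0j, 0.0 + 0.8j]           # normalized state

phase = cmath.exp(0.7j)                  # element of U(1)
scale = 2.5 - 1.5j                       # arbitrary nonzero complex factor

value = expectation(A, psi)
value_phase = expectation(A, [phase * c for c in psi])
value_scaled = expectation(A, [scale * c for c in psi])
```

All three values coincide, which is why the physical states are identified with rays rather than with individual vectors.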

The set of vectors obtained by multiplying the state with consists of a dimensional subspace of , called ray, which we symbolize as . A ray is an equivalence class of vectors in : two vectors are equivalent if and only if one is a nonzero complex scalar multiple of the other. Also, adopting normalized vectors (Equation (70)), the physical quantum states are elements of the complex Projective Hilbert space, of dimension obtained by the canonical projection map of the extended Hilbert space .

Hence, a quantum system may be described with what mathematicians call principal bundle

with state vectors the rays in , and physical states in obtained by the projection map . The inverse projection is

To recapitulate, the inclusion of the unitary group extends the dimensional Hilbert space to the extended Hilbert space. For normalized state vectors, the extended Hilbert space is mapped to the unit sphere of dimension embedded in the real Euclidean space. Finally, by the projection we produce the Projective Hilbert space isomorphic to the complex space or to real of dimensional space, ,

The tangent space of the projective space at point , , is isomorphic to the kernel of the ray, , i.e.,

and the push-forward of the projection map, , is a complex conjugate linear isomorphism onto

An illustrative figure is shown in the article of Dorje C. Brody and Lane P. Hughston [7].

For a normalized ray and its kernel, one can argue that [6,7,8]

which gives a well-defined Hermitian inner product on . Moreover, we deduce that the equation

provides a strong symplectic form on , and the equation

defines a strong Riemannian metric on called the Fubini–Study metric. ℜ and ℑ are the real and imaginary parts of the Hermitian inner product, respectively, in the extended complex Hilbert space . Both and are invariant under all transformations , for all unitary operators on .

2.2.3. Realification of Hilbert Space and Kähler Manifolds

Expanding the vectors of Hilbert space in a dimensional basis, as well as its dual basis, we write the coefficients with the real and imaginary components. Similarly, the covectors are written as for the real and for the imaginary components. Thus, working with the real vectors we can describe quantum states in a real vector space .

An almost complex structure is introduced in by replacing the imaginary number with the symplectic matrix , Equation (15). We also derive

The triple attributes a Kähler structure, and thus, is a Kähler manifold [2].

By realification of the Hilbert space, we may express the Hamiltonian–Schrödinger vector field as

Also, for an observable, , the corresponding Schrödinger vector field is

For a time-independent Hamiltonian, , we solve the Schrödinger equation by employing the unitary propagator , and write the Hamiltonian flow as

generates a one-parameter group of transformations on , which preserve the metric G and the symplectic form .

The triple is a Hamiltonian system with Hamiltonian function the Expectation value of at the state

We can prove (see Appendix A.1) that for an observable and normalized states , the differential form of the expectation value of is the interior product of the symplectic form with the Schrödinger vector field (Equation (86))

where . The above equation is in accordance with Equation (31) of classical mechanics. Hence, Hermitian operators give rise to quadratic real-valued functions . The vector field generated by the expectation value function of the operator is a Schrödinger (Hamiltonian) field.

If are the expectation value functions of two observables, the Poisson bracket (Equation (39)) is defined by the equation (see Appendix A.2)

is the commutator of the two operators .

The uncertainty (dispersion) of a quantum observable in a normalized state is defined as [8,31]

If is an eigenvalue of the observable , then, .

For two quantum observables the product of the uncertainties of the expectation value functions is written as

and the covariance or correlation function is expressed as

where the anticommutator is denoted by . It is proved that

The Schrödinger vector fields are expressed as usual

If we use Equation (90), then we can express the commutator

The Cauchy–Schwarz inequality implies

Since

and

the Robertson-Schrödinger uncertainty relation is written as (Notice that to be consistent with the literature in discussing the covariance of two observables, we introduce the factor in the commutator and anticommutator).
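The uncertainty relation can be checked numerically for a concrete state. The sketch below assumes the standard textbook form $(\Delta A)^2 (\Delta B)^2 \geq \left(\tfrac{1}{2}\langle\{\hat A, \hat B\}\rangle - \langle\hat A\rangle\langle\hat B\rangle\right)^2 + \left(\tfrac{1}{2i}\langle[\hat A, \hat B]\rangle\right)^2$, which may differ in notation from the article’s expression, and uses spin-1 matrices with $\hbar = 1$ and an illustrative normalized state.

```python
# Sketch: Robertson-Schrodinger inequality for a three-level system.
# Assumptions: hbar = 1, spin-1 matrices Sx and Sy, illustrative state psi.
import math

def matvec(a, v):
    return [sum(a[i][j] * v[j] for j in range(len(v))) for i in range(len(a))]

def matmul(a, b):
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def inner(u, v):
    return sum(x.conjugate() * y for x, y in zip(u, v))

def expectation(a, psi):
    return inner(psi, matvec(a, psi))

def variance(a, psi):
    return expectation(matmul(a, a), psi).real - expectation(a, psi).real ** 2

def covariance(a, b, psi):
    anti = expectation(matmul(a, b), psi) + expectation(matmul(b, a), psi)
    return 0.5 * anti.real - expectation(a, psi).real * expectation(b, psi).real

def commutator_term(a, b, psi):
    comm = expectation(matmul(a, b), psi) - expectation(matmul(b, a), psi)
    return (comm / 2j).real              # <[A, B]> is purely imaginary

s = 1.0 / math.sqrt(2.0)
Sx = [[0.0, s, 0.0], [s, 0.0, s], [0.0, s, 0.0]]
Sy = [[0.0, -1j * s, 0.0], [1j * s, 0.0, -1j * s], [0.0, 1j * s, 0.0]]
psi = [0.5 + 0.0j, 0.5 + 0.5j, 0.5 + 0.0j]   # squared norm: 0.25 + 0.5 + 0.25

lhs = variance(Sx, psi) * variance(Sy, psi)
rhs = covariance(Sx, Sy, psi) ** 2 + commutator_term(Sx, Sy, psi) ** 2
```

The covariance term is the contribution that distinguishes the Robertson–Schrödinger relation from the weaker Heisenberg–Robertson bound, which keeps only the commutator term.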

2.2.4. Equations of Motion

We have seen that in the Extended Hilbert space, the vector states are the rays , which for simplicity we symbolize as . The projection of the Extended Hilbert space on the dimensional tangent Projective Hilbert phase makes the solutions of the time-dependent Schrödinger equation to be equivalent to the solutions of Hamilton’s equations in the Projective Hilbert space, with the expectation value of the Hamiltonian operator playing the role of Hamiltonian function. Furthermore, the phase space is a Kähler manifold, both in the extended Hilbert space as well as in the Projective Hilbert space.

If we use as basis set the coordinate basis the representation of the state is

The coefficient is called wavefunction and it is a complex function, , which we assume normalized to one

With an orthonormal coordinate basis, the completeness relation is written as

In the following, we adopt the coordinate representation of the state vectors using wavefunctions in the Schrödinger picture, . We expand of a dynamical system in an arbitrary orthonormal basis set,

In this expansion, the basis functions are time-independent, whereas the coefficients depend on time. are solutions of the Schrödinger equation evolving in the extended Hilbert space

and their complex conjugate solutions of the equation

We assume to be normalized, , at any time. Then, by substituting Equation (104) in the expectation value of the Hamiltonian, , we obtain

The Schrödinger equation in the extended Hilbert space is mapped into Hamilton’s equations in the Projective Hilbert space with Hamiltonian function the expectation value of the Hamiltonian operator . For simplicity, we again denote the quantum states in the Projective Hilbert space with . Then, we differentiate Equation (107) with respect to

Similarly, we take

We define the complex variables () by introducing the real functions and to correspond to real and imaginary parts of the complex variables, respectively,

Equations (108) and (109) are the quantum equivalent of Hamilton’s equations of motion

Hence,

are the transition frequencies. It is also worth noting that the quantum Hamiltonian is similar to that of a harmonic oscillator after complexification (Equation (56)).

We can take the inverse of Equations (110) and write the real functions and as

Adopting the realification of the complex Projective Hilbert space, we may transform Hamilton’s equations to

or

is the representation of Hamiltonian operator in the basis set , and thus, a Hermitian matrix (The Hamiltonian operator is Hermitian, and its representation in a basis results in a Hermitian matrix, ).
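The equivalence between the Schrödinger equation and Hamilton’s equations in the realified space can be illustrated with a small example. The sketch below rests on several assumptions that are not taken from the article: $\hbar = 1$, a real symmetric $2 \times 2$ Hamiltonian matrix with illustrative entries, the scaling $c_k = (q_k + i p_k)/\sqrt{2}$, and a fourth-order Runge–Kutta integrator. With these choices the expectation value of the Hamiltonian is $\tfrac{1}{2}(q^T H q + p^T H p)$ and Hamilton’s equations read $\dot q = H p$, $\dot p = -H q$.

```python
# Sketch: i dc/dt = H c versus dq/dt = H p, dp/dt = -H q,
# with c_k = (q_k + i p_k) / sqrt(2), hbar = 1, H real symmetric (assumed).
import math

H = [[0.0, 0.3],
     [0.3, 1.0]]                          # illustrative Hamiltonian matrix

def matvec(a, v):
    return [sum(a[i][j] * v[j] for j in range(len(v))) for i in range(len(a))]

def schrodinger_rhs(c):
    return [-1j * x for x in matvec(H, c)]

def hamilton_rhs(x):
    n = len(H)
    q, p = x[:n], x[n:]
    return matvec(H, p) + [-v for v in matvec(H, q)]

def rk4_step(f, x, h):
    k1 = f(x)
    k2 = f([xi + 0.5 * h * ki for xi, ki in zip(x, k1)])
    k3 = f([xi + 0.5 * h * ki for xi, ki in zip(x, k2)])
    k4 = f([xi + h * ki for xi, ki in zip(x, k3)])
    return [xi + h * (a + 2.0 * b + 2.0 * c + d) / 6.0
            for xi, a, b, c, d in zip(x, k1, k2, k3, k4)]

def propagate(f, x0, h, steps):
    x = list(x0)
    for _ in range(steps):
        x = rk4_step(f, x, h)
    return x

def energy(x):
    """Expectation value of H written in the real coordinates (q, p)."""
    n = len(H)
    q, p = x[:n], x[n:]
    hq, hp = matvec(H, q), matvec(H, p)
    return 0.5 * (sum(a * b for a, b in zip(q, hq))
                  + sum(a * b for a, b in zip(p, hp)))

r2 = math.sqrt(2.0)
c0 = [1.0 + 0.0j, 0.0 + 0.0j]
x0 = [r2 * c.real for c in c0] + [r2 * c.imag for c in c0]

c_t = propagate(schrodinger_rhs, c0, 0.01, 500)
x_t = propagate(hamilton_rhs, x0, 0.01, 500)
c_from_x = [complex(x_t[k], x_t[k + 2]) / r2 for k in range(2)]
mismatch = max(abs(a - b) for a, b in zip(c_t, c_from_x))
norm_t = sum(abs(c) ** 2 for c in c_t)
```

The two propagations describe the same trajectory, and both the norm and the energy function are conserved up to the integration error.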

The Hamiltonian vector field is extracted from an equation similar to that of classical mechanics (Equation (31)). Hence, in the realified phase space with coordinates the canonical Poincaré form

provides the symplectic form

Then, the interior product (contraction) of gives

and the Hamiltonian vector field is extracted as

We define the length of a curve in the Projective Hilbert space by introducing the Riemannian metric as

For a real wavefunction, the length reduces to the Euclidean distance

2.2.5. Quantum Systems as Totally Integrable Hamiltonian Systems

It has been shown that geometrical quantum mechanics naturally describes a completely integrable system, as follows. Let be a complex separable Hilbert space of dimension , and view the triple as a Hamiltonian dynamical system on the phase space , equipped with the symplectic form . Let be the self-adjoint Hamiltonian operator for the system, and assume that each eigenspace of is one-dimensional (non-degenerate). Choose an orthonormal basis for consisting of eigenvectors of . Define the projection operators by

where we have used Equation (104).

Without loss of generality, we set the lowest eigenvalue () to be 0 so that we can expand the Hamiltonian operator as

Observe that the projectors form a mutually commuting set of n operators on , and we can define the corresponding expectation value functions in the Projective Hilbert space as

Hence,

are the real and imaginary parts of the wavefunction. Thus, the Hamiltonian in the projective Hilbert space resembles that of a sum of n harmonic oscillators in scaled canonical coordinates (Equation (55)).

Therefore, we may conclude that there are n constants of motion, , and the trajectories lie on n-dimensional tori (Lagrangian tori).
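The integrability argument can be illustrated on a small matrix model. The sketch below assumes ħ = 1, a hypothetical random 4 × 4 Hermitian matrix in place of the Hamiltonian operator, and the scaling q + i p = √2 c for the coefficients in the eigenbasis; the names `populations` and `evolve` are illustrative. It checks that the expectation values of the eigenprojectors are conserved along the flow and that the expectation value of the Hamiltonian equals a sum of harmonic-oscillator terms.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 4

# Hypothetical Hermitian Hamiltonian matrix (illustration only)
M = rng.normal(size=(n, n)) + 1j * rng.normal(size=(n, n))
H = (M + M.conj().T) / 2
E, U = np.linalg.eigh(H)            # columns of U are the eigenvectors e_k

psi0 = rng.normal(size=n) + 1j * rng.normal(size=n)
psi0 = psi0 / np.linalg.norm(psi0)

def populations(psi):
    """Expectation values of the projectors P_k = |e_k><e_k|."""
    return np.abs(U.conj().T @ psi) ** 2

def evolve(psi, t):
    """Exact solution of i d(psi)/dt = H psi."""
    return U @ (np.exp(-1j * E * t) * (U.conj().T @ psi))

times = np.linspace(0.0, 10.0, 6)
I = np.array([populations(evolve(psi0, t)) for t in times])

# Oscillator form of <H>: sum_k E_k (q_k^2 + p_k^2)/2, with q + i p = sqrt(2) c
c = U.conj().T @ psi0
q, p = np.sqrt(2) * c.real, np.sqrt(2) * c.imag
energy_oscillators = np.sum(E * (q**2 + p**2) / 2)
energy_expectation = np.vdot(psi0, H @ psi0).real
```

Each row of `I` holds the n conserved quantities at one time; all rows coincide, which is the numerical counterpart of the statement that the trajectory stays on a torus labeled by these values.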

3. Hamiltonian Chemical Thermodynamics

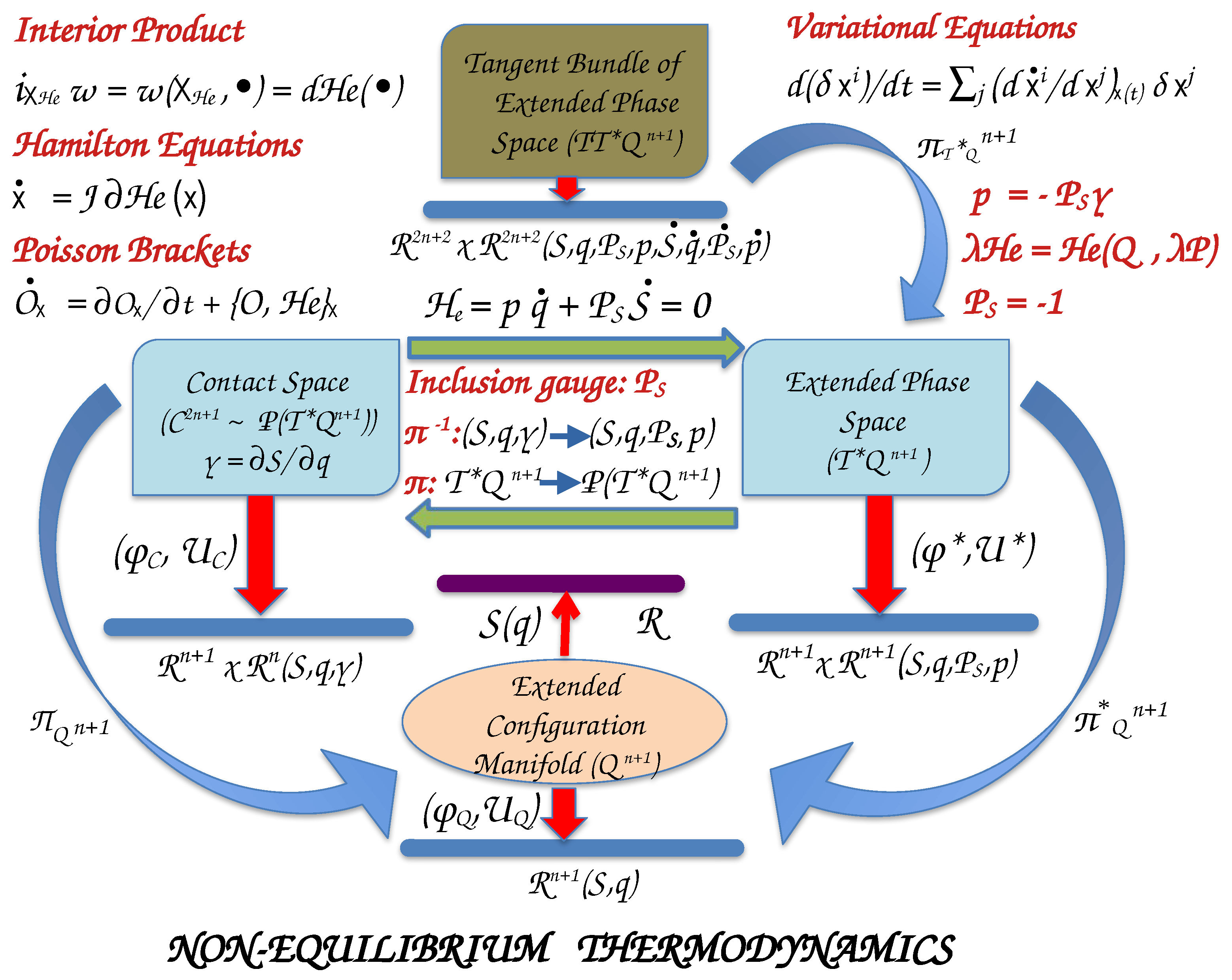

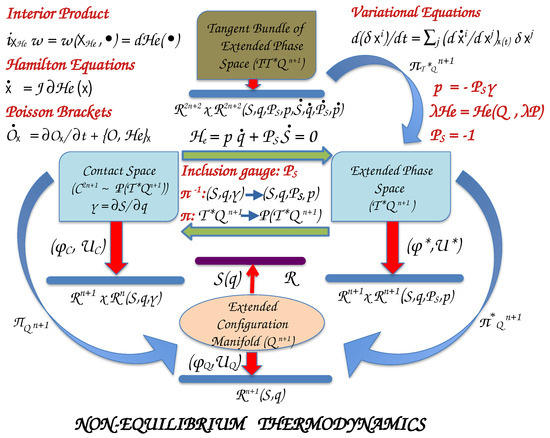

3.1. Manifolds and Maps

To establish a theoretical framework for classical thermodynamics analogous to that of Hamiltonian classical mechanics, we take the macroscopic properties of the system to constitute the configuration manifold (Figure 4). For a typical chemical system, we usually take the extensive physical properties of entropy (S), internal energy (U), volume (V), and the number of molecules (or moles) () of M chemical species as the coordinates of the extended configuration manifold , where . If we take entropy to be a homogeneous function of first degree in , then according to Euler’s theorem for homogeneous functions we can write

where we have introduced the conjugate intensive variables as the partial derivatives of entropy

T is the absolute temperature, P the pressure, and the chemical potentials of M compounds.
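Euler’s theorem for a first-degree homogeneous entropy can be verified numerically. The sketch below assumes, purely for illustration, an ideal-gas-like entropy S = N[ln(V/N) + (3/2) ln(U/N) + c] with the Boltzmann constant set to one and an arbitrary constant c; this function and the helper `partial` are not taken from the text. The partial derivatives are approximated by central differences.

```python
import math

def entropy(U, V, N, c=2.5):
    """Assumed first-degree homogeneous entropy (k_B = 1, c arbitrary)."""
    return N * (math.log(V / N) + 1.5 * math.log(U / N) + c)

def partial(f, x, i, h=1e-6):
    """Central-difference partial derivative of f with respect to x[i]."""
    step = h * x[i]                      # relative step size
    xp, xm = list(x), list(x)
    xp[i] += step
    xm[i] -= step
    return (f(*xp) - f(*xm)) / (2 * step)

x = (3.0, 2.0, 1.5)                      # (U, V, N), arbitrary state
grads = [partial(entropy, x, i) for i in range(3)]

# Euler's theorem: S = U dS/dU + V dS/dV + N dS/dN
euler_sum = sum(g * xi for g, xi in zip(grads, x))
```

For this assumed entropy the first two derivatives reduce to 3N/(2U) and N/V, which are the familiar ideal-gas expressions for the conjugate intensive variables.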

Figure 4.

Manifolds and functions which determine the geometrical structures of a thermodynamical system with coordinates. S denotes the entropy and are the coordinates of extensive properties. are the partial derivatives of entropy that correspond to the intensive properties of the system. With the inclusion of gauge , the conjugate momenta p are defined with respect to which homogeneous Hamiltonians, , of first-degree are determined. Details are given in the text.

and entropy comprise a coordinate system for the contact manifold , where the physical states of the system live. The extended configuration manifold and the contact manifold are generally nonlinear, and the maps and determine local coordinate systems in Euclidean space.

The total differential of S is Gibbs’s fundamental equation that describes the physical thermodynamic submanifold (PTS) of a thermodynamic system

If we assign the entropy S to coordinate , we can also write

From Equations (129) and (130) we deduce that the form satisfies

This equation expresses the physical state submanifold of a thermodynamic system, called a Legendrian submanifold, which is what the equations of state represent [32].

More formally, in the contact space the locally defined form that satisfies the condition, i.e., there is the volume form

ascertains the Legendrian submanifold of by requiring . In other words, the kernel of provides the maximal dimension hyperplanes tangent to .

To construct a Hamiltonian system, we need to define the canonical conjugate momenta. Following Balian and Valentin [18], we denote the conjugate momentum of entropy with , and we take it as a non-vanishing free parameter or a gauge variable. Then, the canonical conjugate momenta to the other coordinates of are defined as

Hence, act as new variables, which replace the physical intensive variables . We write and Equation (131) determines the Poincaré form

which produces the symplectic form

This equation defines the Thermodynamic Extended Physical State Submanifold (TEPSS) in the extended phase space, , which is a Lagrangian submanifold.

More formally, for an even-dimensional phase space, , the symplectic form (see also Equation (29)), which satisfies the condition (volume form)

determines the Lagrangian submanifold in by requiring .

The thermodynamic contact space is the projective space of thermodynamic extended phase space (see Appendix A.4.1)

where is the projection map.

It is proved that the submanifold is a Legendrian submanifold, if and only if

is a Lagrangian submanifold homogeneous in the momenta (see Table A1). This means that as well as

We say that all , which satisfy Equation (136), belong to the ray . Then, it is valid for every vector field tangent to . Also, every homogeneous Lagrangian submanifold originates from a Legendrian submanifold of the form . We can also state that a submanifold is homogeneous if its generating function is homogeneous.

In the entropy representation, the n extensive independent variables together with the parameterize the Lagrangian submanifold. If the entropy is the generating function of the Legendrian submanifold, then for the Lagrangian submanifold the generating function is . This is a property of homogeneous functions of first-degree. Similarly, in energy representation we take as generating function for the Lagrangian submanifold [29]. With the generating function in the entropy representation, we extract the remaining variables as

The extended Hamiltonian is a function on phase space to real numbers, whereas the tangent bundle of phase space, , is described by coordinates and momenta, , as well as their time derivatives. The extended Hamiltonian is a homogeneous function of first-degree in momenta, i.e.,

is the projection map of the tangent bundle of phase space to phase space.

In Appendix A.4, we summarize in Table A2 and Table A3 several formulae of Hamiltonian thermodynamics in entropy- and energy-representations, respectively.

3.2. Equations of Motion

The Hamiltonian function acting in the extended phase space is a homogeneous function of first-degree in momenta [18] and according to Euler’s theorem for homogeneous functions of first-degree, we can write

For a simple thermodynamic system, the extended Hamiltonian is . To extract the physical states, we impose the constraint , which implies on the thermodynamic extended physical state submanifold, also written as [29]

We point out that a Hamiltonian system is the triple , where is the Hamiltonian vector field defined on the tangent bundle of phase space, and a skew-symmetric, non-degenerate, closed differential form. Then, we can use the machinery of geometrical classical mechanics as was developed in Section 2.1 to write down equations for equilibrium and non-equilibrium processes. Thus, the Hamiltonian vector field can be extracted from the equation

given the Hamiltonian function on the phase space . This equation provides the Hamiltonian vector field

From the definition of the extended Poincaré form (Equation (133)) we prove that

for Hamiltonian functions that are homogeneous of first degree in the momenta.

Hence, Hamilton’s equations take their usual form (Equation (33))

Moreover, is a canonical coordinate system in the corresponding phase space, and thus satisfies the Poisson bracket relations of Equation (34)

3.2.1. Contact Equations of Motion

Since the Poisson bracket (Equation (34)) is invariant under gauge (canonical) transformations, we obtain

where F is a generating function and

we extract the contact vector field (see Appendix A.3)

For a generating function F, which is independent of variable, as well as a homogeneous function of first-degree in momenta, the contact equations of motion are reduced to those of Hamilton’s equations in phase space, i.e.,

3.2.2. Riemannian Metric on Lagrangian Submanifold

We can define a Riemannian metric [28] on a Lagrangian submanifold, , with generating function . In canonical coordinates it takes the form (see Table A2)

is the metric introduced by Ruppeiner [33,34], usually called the Ruppeiner metric.

Weinhold [35,36] has extracted a metric, , in the energy representation of thermodynamics with generating function [29] (see Table A3).
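A metric of this kind can be evaluated numerically from any entropy function. The sketch below assumes the common sign convention in which the Ruppeiner metric is the negative Hessian of the entropy, and uses an ideal-gas-like entropy S(U, V) = N[ln V + (3/2) ln U] at fixed N with the Boltzmann constant set to one; the entropy model, the function names, and the chosen path are illustrative assumptions rather than the formulae of Table A2. It also approximates the thermodynamic length of an isochoric path by a midpoint rule.

```python
import numpy as np

N = 1.0   # fixed amount of substance (k_B = 1)

def entropy(x):
    """Assumed entropy S(U, V) at fixed N, up to an additive constant."""
    U, V = x
    return N * (np.log(V) + 1.5 * np.log(U))

def ruppeiner_metric(x, h=1e-4):
    """Negative Hessian of the entropy by central differences."""
    x = np.asarray(x, dtype=float)
    n = x.size
    g = np.zeros((n, n))
    for i in range(n):
        for j in range(n):
            ei = np.zeros(n); ei[i] = h
            ej = np.zeros(n); ej[j] = h
            d2 = (entropy(x + ei + ej) - entropy(x + ei - ej)
                  - entropy(x - ei + ej) + entropy(x - ei - ej)) / (4 * h * h)
            g[i, j] = -d2
    return g

def path_length(path):
    """Length of a discretized path: sum of sqrt(dx . g . dx) at midpoints."""
    length = 0.0
    for a, b in zip(path[:-1], path[1:]):
        dx = b - a
        length += np.sqrt(dx @ ruppeiner_metric(0.5 * (a + b)) @ dx)
    return length

# Isochoric heating from U = 1 to U = 2 at V = 1
U_values = np.linspace(1.0, 2.0, 201)
path = np.column_stack([U_values, np.ones_like(U_values)])
length = path_length(path)
```

For this assumed entropy the metric is diagonal, diag(3N/(2U²), N/V²), so the length of the isochoric path has the closed form √(3N/2) ln(U₂/U₁), which the numerical value reproduces.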

3.3. Embedding Systems in Homogeneous Media

We examine the case of a simple system with internal energy U and constant volume and number of particles. The embedded mechanical system is described by the Hamiltonian . Here, we consider the augmented system, system plus environment, isolated with total energy E constant,

The entropy of the thermodynamic system is given by the equation

i.e., a function of independent variables. In the extended thermodynamic phase space, we include entropy as an independent variable as well

The canonical conjugate momenta are and . Hence, the dimension of thermodynamic extended phase space is .

In the entropy representation, we take as the generating function of the Legendrian submanifold in the thermodynamic contact space the entropy . Hence, the generating function of the corresponding Lagrangian submanifold in the extended phase space is . For the canonical momenta become

Therefore, we have attained the important conclusion that the thermodynamic extended state manifold in thermodynamic extended phase space is the Lagrangian submanifold, ,

From Equation (140) the Hamiltonian function in the extended phase space is written as

Hamilton’s equations are then determined

where the velocities are determined from the definition of the embedded system.

3.4. Chemical Kinetics

The kinetics of chemical reaction networks can also be formulated using a geometric Hamiltonian theory as an embedded system. However, classical chemical kinetics is better studied by introducing temperature, pressure, and the elementary chemical reaction coordinates as the independent variables.

3.4.1. Thermodynamics of Chemical Reactions

A general chemical reaction with reactant compounds and product compounds can be expressed by the formula

where . The stoichiometric integers are negative for the reactant molecules, , and positive for the product molecules, .

For K elementary chemical reactions, we must employ K independent reaction coordinates . Then, the time variation of the number of molecules is related to the velocities of reactions by the equation

or simply as

where and , being the stoichiometric matrix for the K elementary reactions.
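The relation between the composition and the reaction velocities can be made concrete with two consecutive first-order reactions, A → B → C. The sketch below is an illustration under stated assumptions: the stoichiometric matrix `nu` is arranged with one row per species and one column per reaction, the rate constants are arbitrary, and a fixed-step fourth-order Runge–Kutta scheme is used only for simplicity.

```python
import numpy as np

# Rows: species A, B, C; columns: reactions 1 (A -> B) and 2 (B -> C)
nu = np.array([[-1.0,  0.0],
               [ 1.0, -1.0],
               [ 0.0,  1.0]])
k = np.array([1.0, 0.5])                 # assumed first-order rate constants

def reaction_velocities(N):
    """d(xi_k)/dt for the two elementary first-order steps."""
    return np.array([k[0] * N[0], k[1] * N[1]])

def dN_dt(N):
    """Composition change dN/dt = nu @ d(xi)/dt."""
    return nu @ reaction_velocities(N)

def integrate(N0, t_final, n_steps):
    """Fixed-step fourth-order Runge-Kutta integration of dN/dt."""
    N = np.array(N0, dtype=float)
    dt = t_final / n_steps
    for _ in range(n_steps):
        k1 = dN_dt(N)
        k2 = dN_dt(N + 0.5 * dt * k1)
        k3 = dN_dt(N + 0.5 * dt * k2)
        k4 = dN_dt(N + dt * k3)
        N = N + dt * (k1 + 2 * k2 + 2 * k3 + k4) / 6
    return N

t_final = 2.0
N_end = integrate([1.0, 0.0, 0.0], t_final, 400)

# Closed-form solution for comparison (initially pure A)
A_exact = np.exp(-k[0] * t_final)
B_exact = k[0] / (k[1] - k[0]) * (np.exp(-k[0] * t_final) - np.exp(-k[1] * t_final))
```

Because every column of `nu` sums to zero in this example, the total number of molecules is conserved along the integrated trajectory.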

The reaction Gibbs free energy is defined by introducing the chemical potentials of the compounds

where is Gibbs free energy, T the temperature and P the pressure. From Gibbs’s fundamental equation

and for a general chemical reaction at constant temperature and pressure, Equation (169), we have

Therefore, the above equation becomes

Usually, the derivative of Gibbs free energy with the reaction coordinate is denoted as and is called molar reaction Gibbs free energy.

For K elementary reactions, we write

employing the rates of forward () and backward () reactions, as expressed by the law of Mass Action [37,38,39,40].

Also, for an elementary chemical reaction De Donder [41,42] introduced the quantity of Affinity

which can be written as [30]

3.4.2. Thermodynamic Hamiltonian in Massieu-Gibbs Representation

Chemical reactions are better studied at constant temperature and pressure. Hence, we first transform the entropy from a function of the internal energy and volume to a function of temperature and pressure by a Legendre transformation

is the Massieu function, which is related to the Gibbs free energy [19].

The above Legendre transformation is a diffeomorphism on the Legendrian and Lagrangian submanifolds. This means that we can define Affinity with the chemical potentials of M chemical compounds (reactants and products) given by Gibbs free energy

The rate of entropy production for the kth irreversible reaction is written with the molar reaction Gibbs free energy

where is the Affinity of the kth reaction and is the reaction coordinate that describes the progress (extent) of the reaction. The total entropy rate for K reactions becomes

or employing Equations (176) and (178), as

where R is the ideal gas constant. For reactions at equilibrium in a closed thermodynamic system, we have , which means that , and thus, the total entropy is conserved. The inequality in Equation (183), which is consistent with the second law of thermodynamics, holds because the logarithm is a monotonically increasing function on its domain, so the difference between the forward and backward rates and the logarithm of their ratio always have the same sign.
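The sign argument can be checked numerically. The sketch below assumes that the total entropy production rate has the familiar form R Σₖ (r_f,k − r_b,k) ln(r_f,k/r_b,k) in terms of the forward and backward rates; the function name `entropy_production` and the randomly sampled rates are illustrative only.

```python
import math
import random

R = 8.314462618          # ideal gas constant, J/(mol K)

def entropy_production(forward, backward):
    """Assumed form: R * sum_k (r_f - r_b) * ln(r_f / r_b), positive rates."""
    return R * sum((rf - rb) * math.log(rf / rb)
                   for rf, rb in zip(forward, backward))

# Sample random positive rates for K = 3 reactions
random.seed(1)
samples = []
for _ in range(1000):
    rf = [random.uniform(1e-3, 10.0) for _ in range(3)]
    rb = [random.uniform(1e-3, 10.0) for _ in range(3)]
    samples.append(entropy_production(rf, rb))
min_sigma = min(samples)

# Detailed balance: forward and backward rates coincide for every reaction
sigma_eq = entropy_production([2.0, 0.7], [2.0, 0.7])
```

Every sampled value is non-negative because each term is a product of two factors with the same sign, and the rate vanishes exactly when the forward and backward rates of every reaction are equal.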

The thermodynamic extended state manifold in thermodynamic extended phase space is a Lagrangian submanifold, embedded in phase space with . The generating function is

is the conjugate momentum of .

The Hamiltonian function in the extended cotangent bundle (phase space) is written as

At constant temperature and pressure and using concentrations instead of the number of molecules for reactants and products, with conjugate momenta also denoted by , we take

For K elementary reactions in the reaction coordinate space, , and under the transformation of stoichiometric matrix we have

and the Hamiltonian (Equation (189)) becomes

Then, Hamilton’s equations are written

We can also define a metric on the Lagrangian submanifold in Massieu-Gibbs representation, and this is given as

Therefore, to measure the distance between initial and equilibrium states, we compute the length of a path on the thermodynamic extended state manifold

with being the maximum integration time and substituting . The distance between two states has been used to define a tighter lower bound than zero on the entropy production or dissipated work (availability) for finite-time irreversible processes [43,44].

In Appendix A.5, an example of two elementary consecutive chemical reactions is presented and described in detail.

4. Numerical Implementations

4.1. High-Order Finite-Difference and Pseudospectral Methods

High-order finite-difference methods are widely used for solving the differential equations encountered in classical and quantum mechanics. They are based on polynomial approximations to the solution functions, and thus, finite-difference (FD) methods are suited for problems that can be solved by expanding the unknown functions in Taylor series [25,45]. In the past, we developed and tested high-order multivariable finite-difference methods in configuration space and time (herein they are denoted with x), formulated by Lagrange interpolating polynomials [46,47]. Variable-order finite-difference algorithms are simple to program and are among the most suitable for modern high-performance computing, the computer technology on which computational chemistry mainly relies. For these reasons, finite-difference methods emerge as one of the best choices for studying chemical dynamics.

There is a huge literature concerning finite-difference approximations to initial value problems, such as Hamilton’s and variational equations [45], as well as to the action of the Hamiltonian operator on wavefunctions in quantum mechanics [25]. Notice that Hamilton’s equations, Jacobi fields, and Poisson brackets can all be written as initial value problems, and thus, the corresponding ordinary differential equations can be integrated simultaneously.

For ordinary differential equations (ODEs) and partial differential equations, we approximate the solution functions by expanding them in an appropriate basis set

Different global basis functions produce different pseudospectral methods. From such a so-called finite basis representation, we can transform to a cardinal set of basis functions, , also called a discrete variable representation, by choosing N grid points, , at which the function is calculated. The cardinal functions obey the Kronecker property

so that the wavefunction is expressed by the set of grid points

The transformation from to the cardinal basis set is unitary, and the new basis is given in terms of the old one by the equation

where the grid points and the corresponding weights depend on the chosen quadrature rule.

The mth-derivative of the approximate solution is written as

where

The differentiation matrix contains the coefficients necessary for calculating the mth derivative at the collocation points, and T is the column vector of dimension N containing the basis functions.
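A differentiation matrix of this kind can be assembled from local Lagrange interpolation. The sketch below is a minimal illustration and not the algorithm used in this work: instead of Fornberg’s recursive scheme, the weights are obtained by solving the small linear system that makes the formula exact for monomials, which is adequate for short stencils. The function names, the grid, and the seven-point stencil are assumptions chosen for the example.

```python
import math
import numpy as np

def fd_weights(nodes, x0, m):
    """Weights w_j such that sum_j w_j f(x_j) approximates f^(m)(x0).

    The weights are exact for polynomials of degree < len(nodes).
    Offsets are scaled to order one to keep the linear system well conditioned.
    """
    d = np.asarray(nodes, dtype=float) - x0
    n = d.size
    scale = np.max(np.abs(d))
    # Row k enforces exactness for the monomial (x - x0)^k
    V = np.vander(d / scale, n, increasing=True).T
    rhs = np.zeros(n)
    rhs[m] = math.factorial(m)
    return np.linalg.solve(V, rhs) / scale**m

def differentiation_matrix(x, m, stencil):
    """Banded matrix D with (D @ f)[i] approximating f^(m)(x[i])."""
    x = np.asarray(x, dtype=float)
    N = x.size
    D = np.zeros((N, N))
    half = stencil // 2
    for i in range(N):
        # Centered stencil in the interior, shifted (one-sided) near the edges
        start = min(max(i - half, 0), N - stencil)
        idx = np.arange(start, start + stencil)
        D[i, idx] = fd_weights(x[idx], x[i], m)
    return D

x = np.linspace(0.0, 1.0, 41)
D2 = differentiation_matrix(x, 2, 7)
# The second derivative of sin(x) is -sin(x)
err = np.max(np.abs(D2 @ np.sin(x) + np.sin(x)))
```

With three equispaced nodes, `fd_weights` returns the familiar second-derivative stencil (1, −2, 1)/h², and widening the stencil raises the order of accuracy.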

Usually, the functions are interpolated by Lagrange cardinal basis set of order

where

It can be shown that, as the order of the Lagrange polynomials increases, the series converges to the corresponding pseudospectral limit. For uniform grids, , and , the pseudospectral limit is the sinc function

Also, for periodic functions, Fourier series are associated with the cardinal functions

A relation of Fourier cardinal functions and sinc functions can be seen by the formula

Since sinc functions are the infinite order limit of an equispaced FD (Equation (203)), the correspondence now is that periodically repeated FD stencils will tend to the PS limit of Fourier functions as the number of grid points in the stencil approaches the total number of grid points in one period. Also, because equispaced FD can be considered to be a robust sum acceleration scheme of a sinc function series, we expect the same convergence properties of the FD approximation to the Fourier series as the one we find for radial variables [46].
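The approach of equispaced finite differences to the sinc limit can be seen directly in the stencil weights. The sketch below uses the known closed form of the centered first-derivative weights on a unit-spaced grid with half-width n, w_k = (−1)^(k+1) (n!)² / [k (n−k)! (n+k)!], and compares them with the derivative of the sinc cardinal function at the neighboring grid points, (−1)^(k+1)/k. The function names and the chosen half-widths are illustrative.

```python
from math import factorial

def centered_fd_weight(n, k):
    """Weight of f(x + k) in the centered first-derivative stencil of
    half-width n on a unit-spaced grid (k = 1..n; the weight of -k is minus this)."""
    return ((-1) ** (k + 1) * factorial(n) ** 2
            / (k * factorial(n - k) * factorial(n + k)))

def sinc_weight(k):
    """Derivative at x = 0 of the sinc cardinal function centered at grid point k."""
    return (-1) ** (k + 1) / k

half_widths = (4, 16, 64, 256)
# Largest deviation over the three nearest neighbors for each half-width
gaps = {n: max(abs(centered_fd_weight(n, k) - sinc_weight(k)) for k in range(1, 4))
        for n in half_widths}
```

The deviations in `gaps` shrink steadily as the half-width grows, although slowly, which is consistent with viewing high-order equispaced finite differences as an accelerated summation of the sinc series.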

To solve Hamilton’s and variational differential equations [5], we use methods for solving ordinary differential equations, such as those described in the book of Shampine and Gordon [45]. The algorithms are based on predictor-corrector methods with variable-order finite-difference approximations, as well as variable time step and backward difference formulae. The accuracy of the solutions is controlled by prespecified error tolerances.

The action of the Hamiltonian operator on a wavefunction can also be approximated by high-order finite-difference schemes or pseudospectral methods. We have demonstrated that the algorithm developed by Fornberg [25] to construct Lagrange interpolating polynomials for solving the Schrödinger equation is robust and fast. Applications can be found in references [46,47].

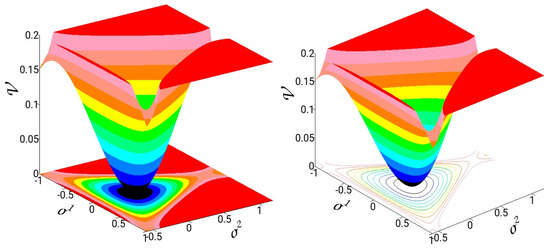

4.2. The Hénon–Heiles Model

In this section, we show results that demonstrate the effectiveness of solving the Hamiltonian ODEs encountered in the three principal theories: classical, quantum, and thermodynamic. As a test model, we use that of Hénon–Heiles [26], and the calculations are carried out mainly with the software POMULT [48]. The Hénon–Heiles potential was initially proposed as a model for studying the dynamics of a galaxy. The potential function introduces cubic nonlinearities, and it soon became the model of choice for numerically investigating the nonlinear behavior of systems with two degrees of freedom.

Numerous articles have been published that explore the phase space structures of this nonlinear dynamical system in detail [49]. The Hamiltonian function for a Hénon–Heiles system is written as

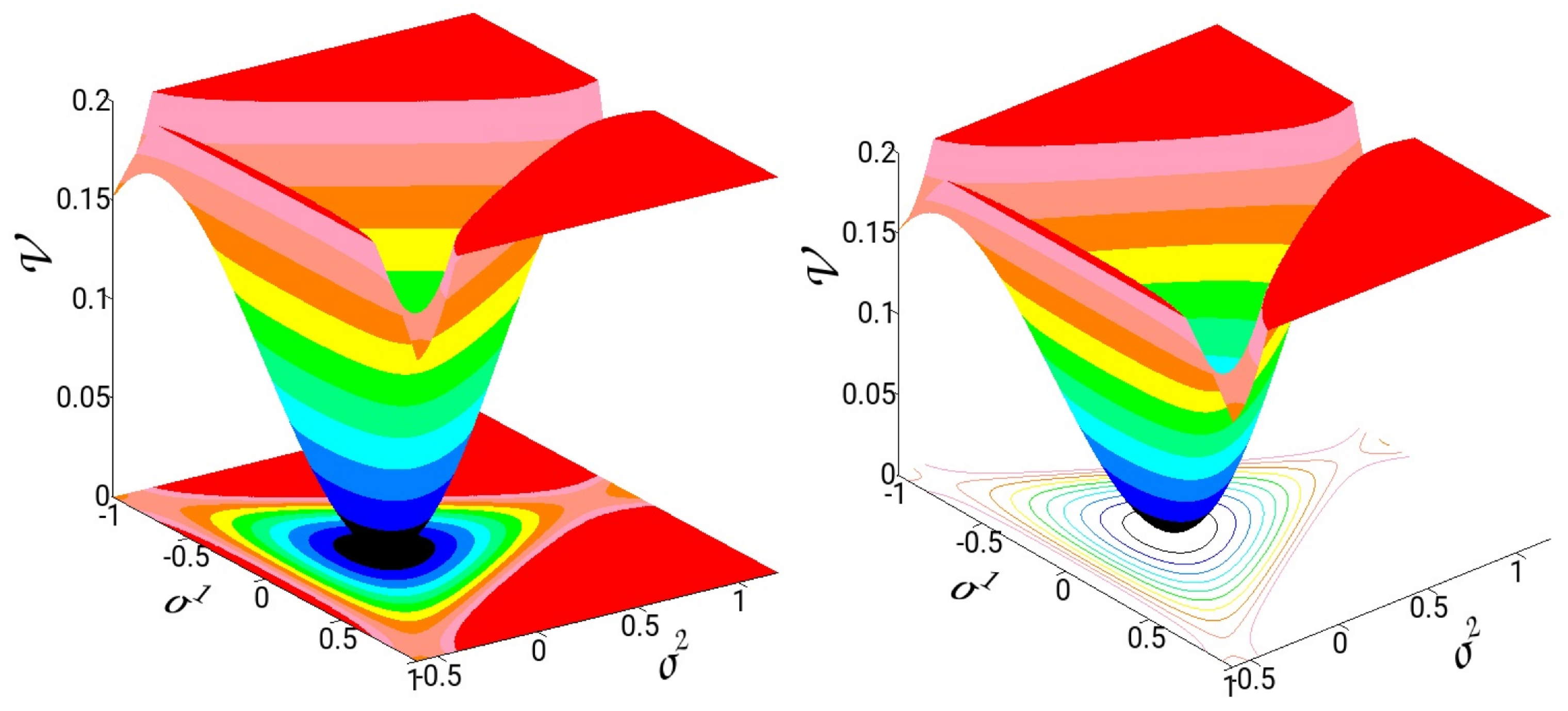

denote the coordinates and the canonical conjugate momenta. In Figure 5, we present 3D graphs and isocontours from the projections of the potential in the coordinate plane. The potential has one minimum at and three saddles at the energy of . Trajectories with energy above D may escape to infinity through the three exit channels. The harmonic normal mode frequencies are , and thus, the system shows a 1:1 resonance. Notice that the spatial symmetry of the Hénon–Heiles potential is $C_{3v}$, the same symmetry as that of triatomic molecules composed of three identical atoms.
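The stationary points of the potential can be verified with a few lines of code. The sketch below assumes the standard dimensionless form of the Hénon–Heiles potential, V(x, y) = (x² + y²)/2 + x²y − y³/3, with unit masses and unit coupling; the parameterization and axis orientation used in this work may differ. In this form the saddle energy is 1/6 and both harmonic frequencies at the minimum equal one.

```python
import numpy as np

def potential(x, y):
    """Assumed standard Henon-Heiles potential (unit coupling)."""
    return 0.5 * (x * x + y * y) + x * x * y - y**3 / 3.0

def gradient(x, y):
    return np.array([x + 2.0 * x * y, y + x * x - y * y])

def hessian(x, y):
    return np.array([[1.0 + 2.0 * y, 2.0 * x],
                     [2.0 * x, 1.0 - 2.0 * y]])

stationary_points = {
    "minimum": (0.0, 0.0),
    "saddle 1": (0.0, 1.0),
    "saddle 2": (np.sqrt(3.0) / 2.0, -0.5),
    "saddle 3": (-np.sqrt(3.0) / 2.0, -0.5),
}

report = {}
for name, (x, y) in stationary_points.items():
    curvatures = np.linalg.eigvalsh(hessian(x, y))   # ascending order
    report[name] = {
        "energy": potential(x, y),
        "gradient_norm": np.linalg.norm(gradient(x, y)),
        "curvatures": curvatures,
    }
```

Each saddle has one negative and one positive curvature, and the three saddles are related by rotations of 120°, which reflects the threefold symmetry of the potential.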

Figure 5.

Potential energy surface of the Hénon–Heiles model and isocontours in the configuration plane.

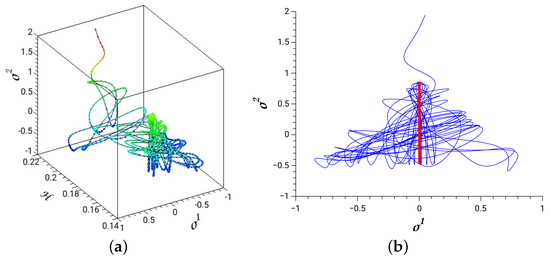

4.2.1. A Classical Time-Dependent Hénon–Heiles System

A time-dependent Hénon–Heiles system is produced by adding the term coupled to coordinate (see Figure 1)

with , and canonical conjugate momentum, . We solve Hamilton’s equations as given by Equations (14), and with the extended Hamiltonian, .

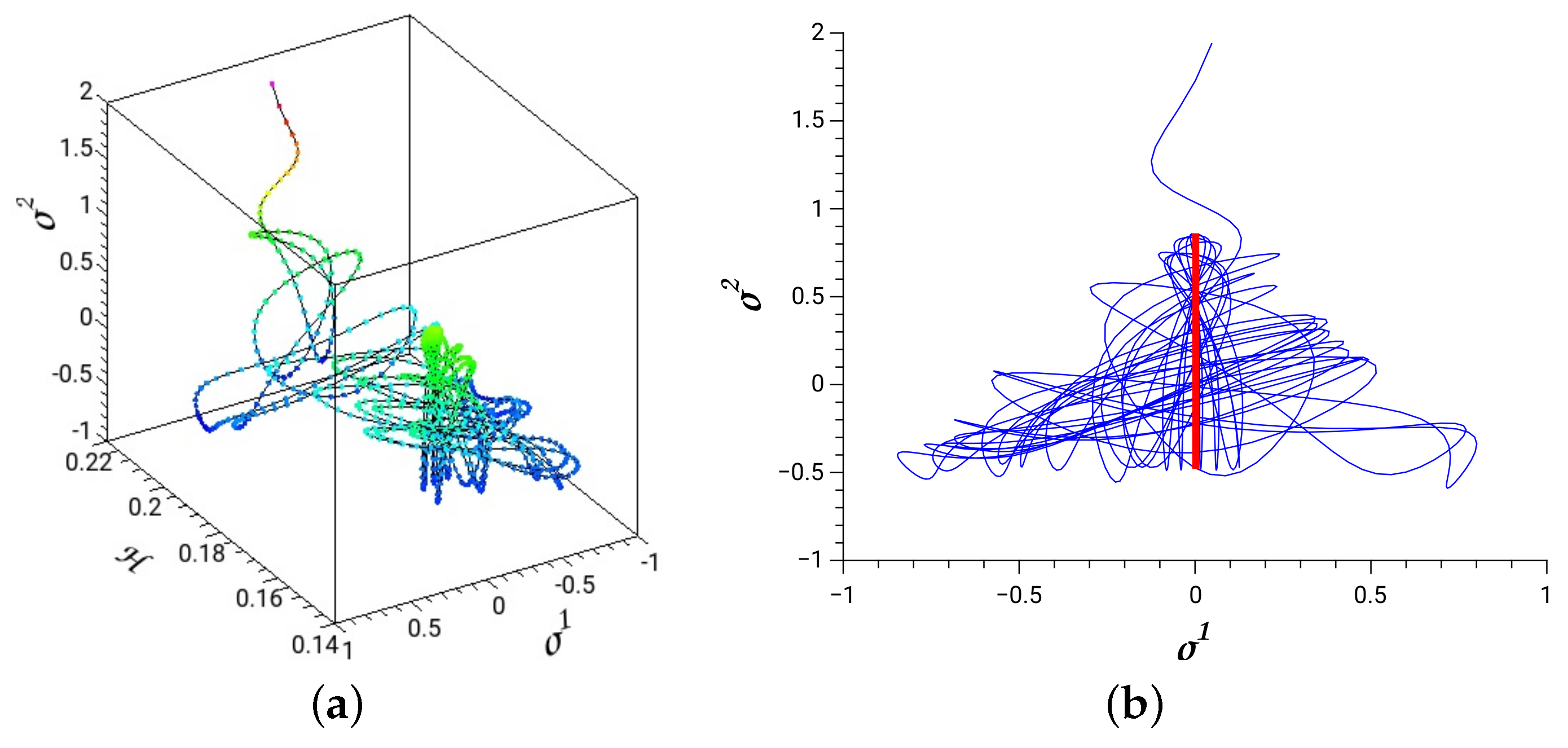

In Figure 6, we depict a dissociating trajectory with parameters of the time-dependent term, and . The initial conditions are selected from the stable periodic orbit of the principal family along the coordinate and at energy 0.1575. The red thick line in Figure 6b is the projection of this periodic orbit on the coordinate plane. The 3D plot in Figure 6a shows the evolution of the system with the absorbed energy that leads to dissociation.

Figure 6.

(a) A dissociating trajectory of a time-dependent Hénon–Heiles potential. (b) The trajectory is initialized from a periodic orbit (red thick line) and with energy .

4.2.2. The Quantum Hénon–Heiles System

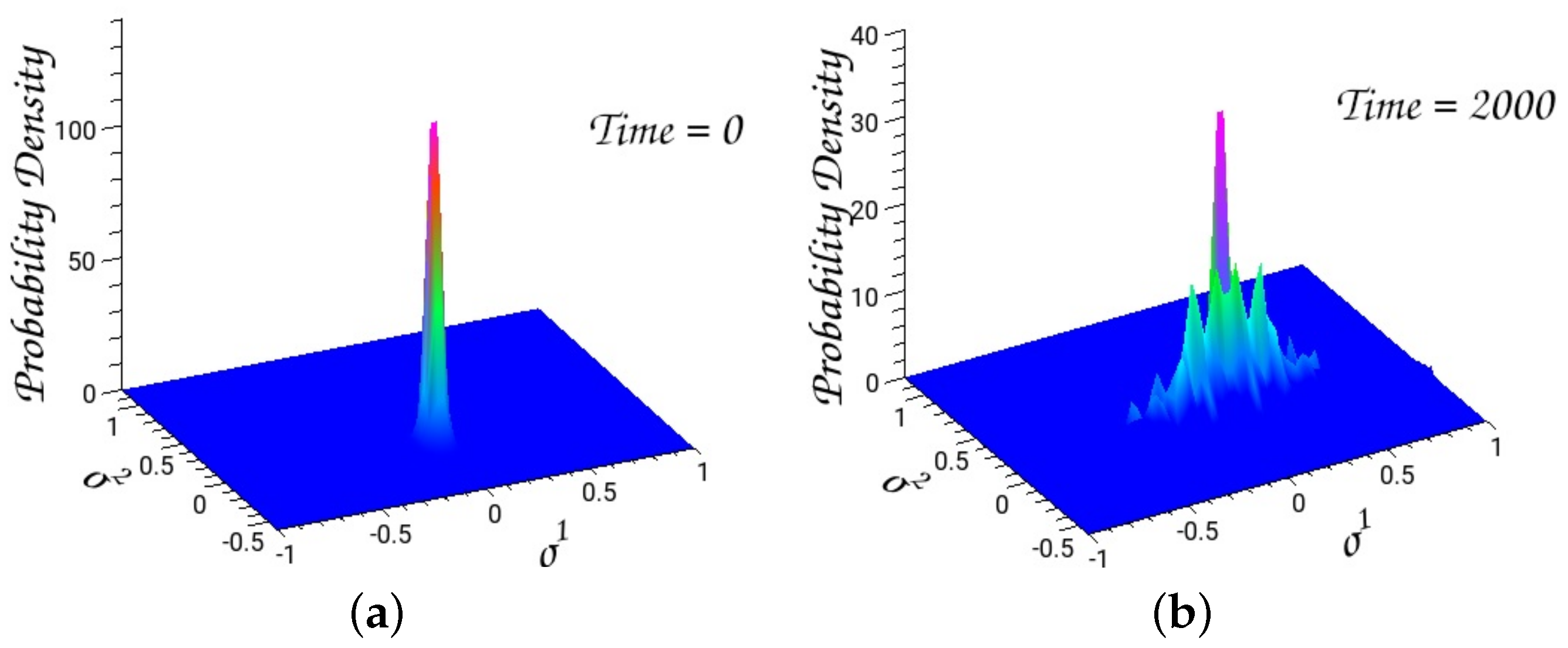

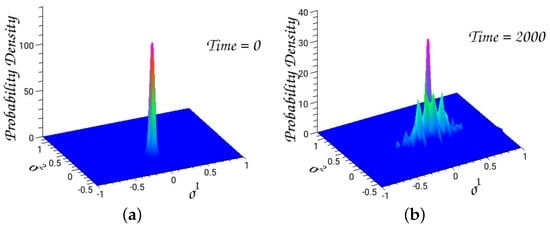

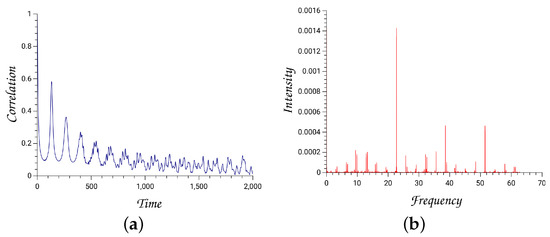

We solve the time-dependent Schrödinger equation with an initial Gaussian wavepacket centered at the minimum of the Hénon–Heiles potential, and widths and . The average energy is 0.124.

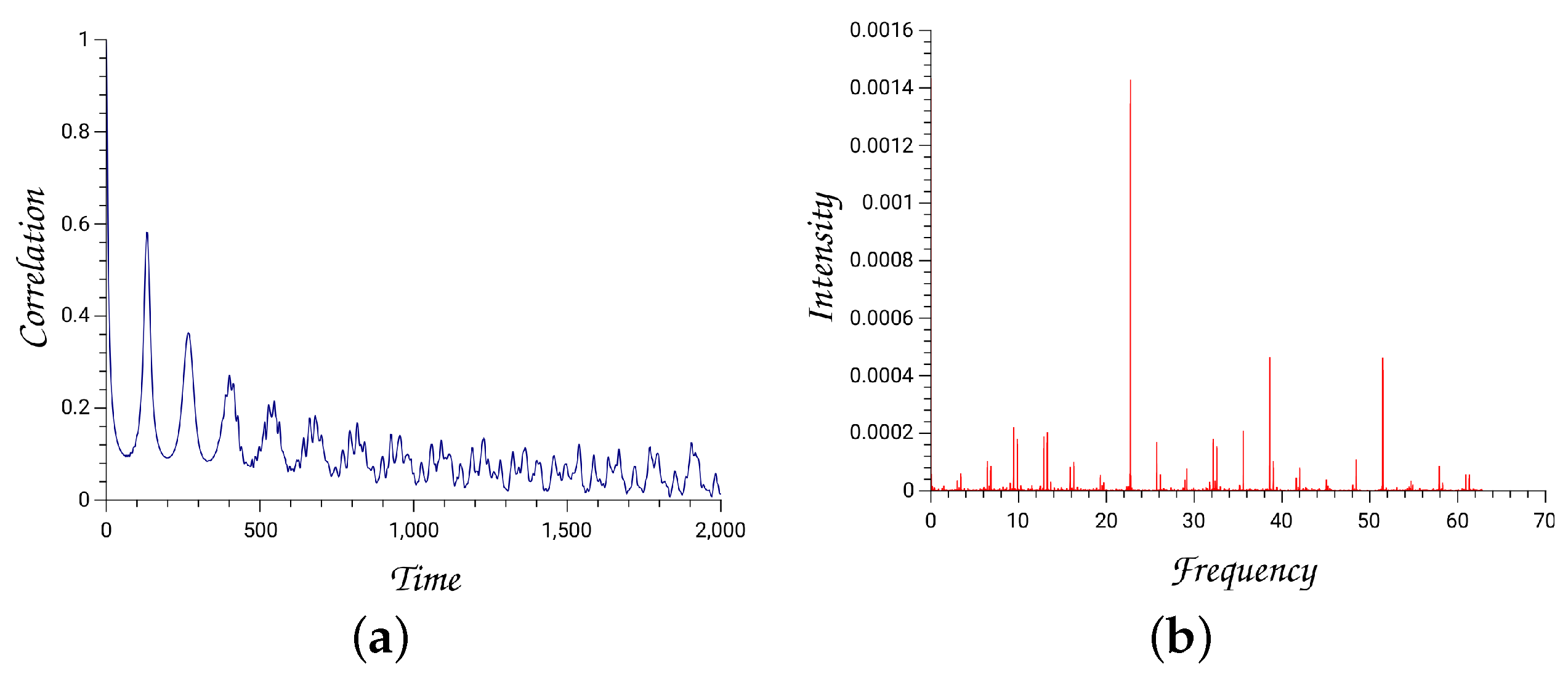

The autocorrelation function is the overlap integral of the initial Gaussian with the evolving wavepacket

The Fourier transform of the autocorrelation function gives the power spectrum, from which one can extract eigenenergies and eigenfunctions. Numerical techniques for accurately calculating the energy levels, as well as the corresponding eigenfunctions, have been investigated extensively [50].
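How the spectrum emerges from the autocorrelation function can be illustrated with a toy model. The sketch below assumes ħ = 1 and a hypothetical state composed of three eigenstates with chosen energies and weights, for which the autocorrelation function is C(t) = Σₖ |cₖ|² exp(−i Eₖ t); it is not a calculation on the Hénon–Heiles wavepacket. The sampling window is chosen so that the energies fall exactly on the frequency grid, which avoids spectral leakage.

```python
import numpy as np

energies = np.array([0.5, 1.0, 1.5])      # hypothetical eigenenergies
weights = np.array([0.2, 0.5, 0.3])       # |<phi_k|psi(0)>|^2, summing to one

n_samples = 1024
total_time = 2.0 * np.pi * 64.0           # frequency resolution 2*pi/T = 1/64
dt = total_time / n_samples
t = dt * np.arange(n_samples)

# Autocorrelation C(t) = <psi(0)|psi(t)> = sum_k w_k exp(-i E_k t)
autocorr = (weights[None, :] * np.exp(-1j * np.outer(t, energies))).sum(axis=1)

# The inverse FFT applies exp(+i omega t), so the peaks appear at omega = E_k
spectrum = np.abs(np.fft.ifft(autocorr))
omega = 2.0 * np.pi * np.fft.fftfreq(n_samples, d=dt)

# Positions of the three largest peaks and their heights
peak_indices = np.sort(np.argsort(spectrum)[-3:])
peak_energies = omega[peak_indices]
peak_heights = spectrum[peak_indices]
```

The peak positions recover the energies and the peak heights recover the weights of the eigenstates in the initial state; for a real wavepacket, a finite propagation time broadens the peaks and a window function is usually applied.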

Figure 7.

(a) The initial wavepacket centered at the minimum of the potential well. (b) The evolved wavepacket after 2000 time units.

Figure 8.

(a) The autocorrelation function for the initial Gaussian wavepacket. (b) The power spectrum was obtained by taking the Fourier transformation of the autocorrelation function.

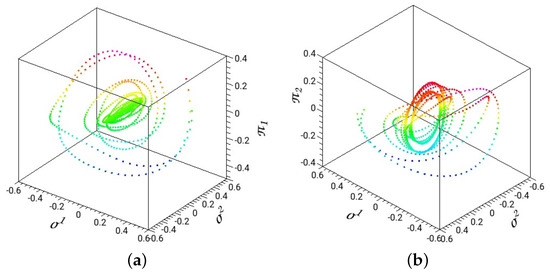

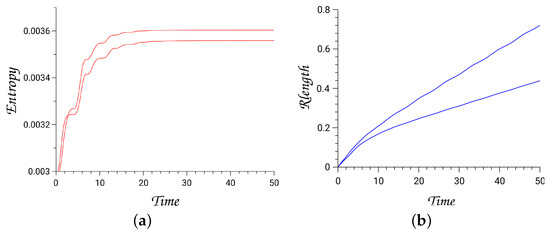

4.2.3. Energy Dissipation of Hénon–Heiles System in Homogeneous Media

For a simple thermodynamic system, such as that of an inert atomic gas with constant volume and particle number (1 mole), the entropy function is given by the equation

with reference the energy at and to be the specific heat. denotes the Boltzmann constant and the Avogadro constant.

We assume a diagonal friction parameter matrix that couples the Hénon–Heiles nonlinear oscillator with the homogeneous environment. We assign the nonzero elements to the values, . This is an example of loosely coupling one DOF of the dynamical system to the environment, and we investigate the effect it may have on the entropy production and length of the path [29].

For zero friction, the dynamical system remains uncoupled from the environment and thus is a conservative Hamiltonian system. When the friction parameters are turned on, energy dissipates from the dynamical system to the environment. In the extended thermodynamic space, the number of DOF is 6, with coordinates the entropy, S, the total energy, E, and the four variables of the dynamical system, . Hence, the dimension of the extended phase space is 12.

The extended Hamiltonian (Equation (162)) is equal to zero on the Lagrangian thermodynamic extended state manifold. We integrate trajectories up to 50 time units with a precision in the value of extended Hamiltonian to about .

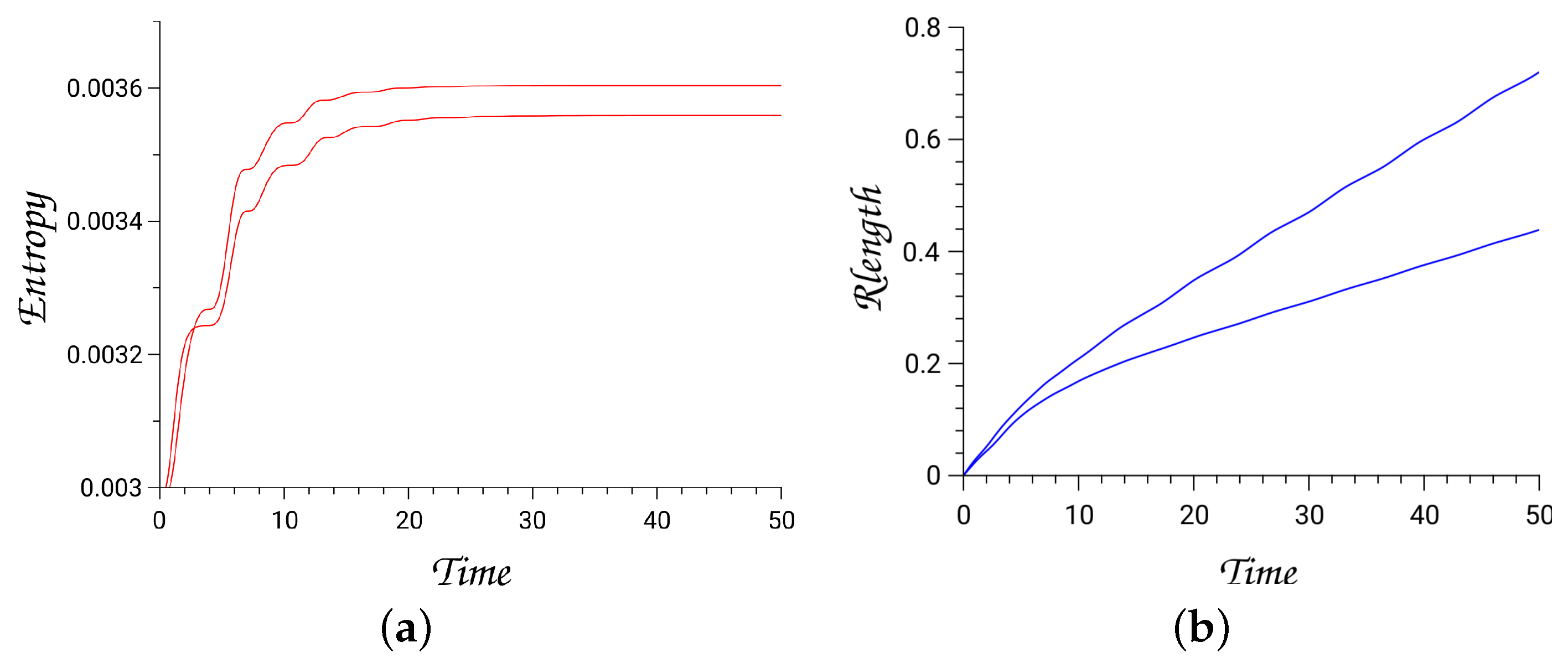

In Figure 9 and Figure 10, the results for two trajectories are depicted by treating the Hénon–Heiles model as a dissipative system. We plot the produced entropy (Equation (163)) and the lengths of trajectories, computed via the Ruppeiner metric (Rlength), Equation (154), and with initial energy of the dynamical system .

Figure 9.

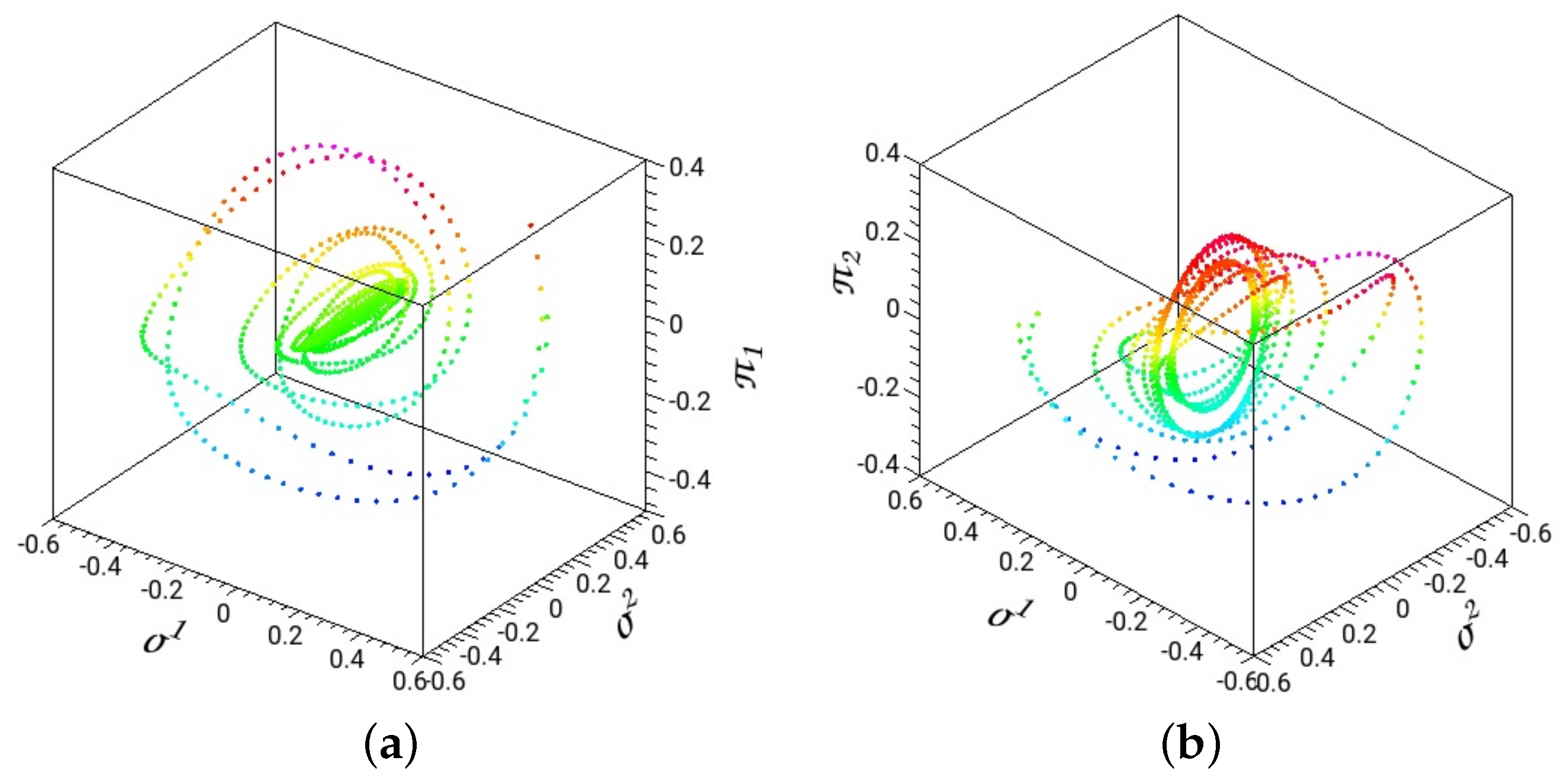

Projections of two representative trajectories in the Hénon–Heiles phase space are shown with friction parameters ; (a) in the space and (b) in the space.

Figure 10.

Panel (a) is the time evolution of the entropy production and (b) the trajectory length calculated with the Ruppeiner metric for the two trajectories shown in Figure 9.

Since one DOF of the dynamical system is weakly coupled to the environment, we find that for the integration times, trajectories are trapped around reduced tori related to the approximately decoupled mode. The thermodynamic length provides an estimate of how close the initial state is to the physical equilibrium state. Hence, we expect trapped trajectories in the approximately decoupled modes to have large lengths and to produce low entropies [29].

5. Conclusions

All cardinal physical theories have acquired a Lagrangian or Hamiltonian formalism. The advantages of employing a Hamiltonian framework stem from the fact that global and local constants of motion that dictate the dynamics of the system are respected by solving Hamilton’s equations. In particular, the geometrical structures of Hamiltonian theory were mainly investigated in the second half of the twentieth century with the development of contemporary differential geometry. The purpose of this article is to introduce and unveil common geometrical structures of the three most frequently applied theories in computational chemistry, those of classical mechanics, quantum mechanics, and classical thermodynamics, all of them at the non-relativistic approximation. We have shown that, working in extended phase space, the physical states of the system in the three theories are described by Lagrangian submanifolds. Observables are calculated by canonically projecting the extended phase space onto a space of reduced dimension. In classical mechanics, integrability guarantees the existence of n constants of motion, and thus of n-dimensional Lagrangian submanifolds embedded in the 2n-dimensional phase space. Quantum systems can also be considered to be integrable systems in the projective Hilbert space. Finally, classical thermodynamics has also been given a Hamiltonian formalism in an extended phase space, similar to classical mechanics, and capable of formulating irreversible processes. We can obtain representations of Lagrangian manifolds when we use Hamiltonians in action-angle variables in classical mechanics and Gibbs’s fundamental equation in classical thermodynamics.

Noether’s theorem [51] proves that constants of motion are associated with symmetries of the system, which leave the Hamiltonian function invariant. Global continuous symmetries, such as translations in time and space and rotations, are described by Lie groups and their associated Lie algebras. Advanced methods to extract the constants of motion related to symmetries and to reduce the dimensionality of the problem have been developed in recent decades [2].

Here, we have not explored the important role of symmetry in dynamical systems. However, we have emphasized the importance of approximating local constants of motion by solving the variational equations of a Hamiltonian system, such as around equilibria and stable periodic orbits. This requires the diagonalization of the fundamental matrix (see Section 2.1.5). It is proved that each constant of motion corresponds to a pair of eigenvalues equal to one.
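The role of the unit eigenvalues can be illustrated in the simplest setting. The sketch below assumes the harmonic limit of a two-degrees-of-freedom system in 1:1 resonance, H = (p₁² + p₂² + q₁² + q₂²)/2, for which every orbit is periodic with period 2π and the energy of each mode is conserved. The variational equations dZ/dt = J ∂²H Z are integrated with a fixed-step Runge–Kutta scheme, which is used here for simplicity only; the phase-space ordering (q₁, q₂, p₁, p₂) is an assumption.

```python
import numpy as np

n = 2                                        # degrees of freedom
I_n = np.eye(n)
# Symplectic matrix for the phase-space ordering (q1, q2, p1, p2)
J = np.block([[np.zeros((n, n)), I_n],
              [-I_n, np.zeros((n, n))]])

# Assumed Hessian of H = (p1^2 + p2^2 + q1^2 + q2^2)/2 (unit frequencies)
hessian = np.eye(2 * n)

def fundamental_matrix(hessian, period, n_steps):
    """Integrate dZ/dt = J @ hessian @ Z with Z(0) = identity (RK4)."""
    A = J @ hessian
    Z = np.eye(2 * n)
    dt = period / n_steps
    for _ in range(n_steps):
        k1 = A @ Z
        k2 = A @ (Z + 0.5 * dt * k1)
        k3 = A @ (Z + 0.5 * dt * k2)
        k4 = A @ (Z + dt * k3)
        Z = Z + dt * (k1 + 2 * k2 + 2 * k3 + k4) / 6
    return Z

Z = fundamental_matrix(hessian, 2.0 * np.pi, 4000)
eigenvalues = np.linalg.eigvals(Z)

# The fundamental matrix must be symplectic: Z^T J Z = J
symplectic_defect = np.max(np.abs(Z.T @ J @ Z - J))
# Each pair of unit eigenvalues signals one constant of motion
unit_pairs = int(np.sum(np.abs(eigenvalues - 1.0) < 1e-6)) // 2
```

In this example `unit_pairs` equals two, matching the two conserved mode energies. For a nonlinear system the Hessian varies along the periodic orbit and must be evaluated on the trajectory at every step.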

Numerically solving the equations of motion (classical, quantum, thermodynamic) is the main task of computational chemistry. Finite-difference methods have been broadly adopted for finding solutions of ordinary differential equations, as well as partial differential equations, such as the Schrödinger equation. Nevertheless, in spite of their many successes, they are restricted to low-dimensional systems, since they require substantial computational resources for systems with many degrees of freedom. Furthermore, non-symplectic integrators for Hamiltonian ODEs generally accumulate errors in the conserved quantities, which leads to numerical instability in long-time integrations.

Molecules are generally complex systems with many degrees of freedom. Their phase space is entangled with regular and chaotic regions. Therefore, it is not a surprise that computational chemistry has always tried to exploit new computer advances, such as parallel computing (including grid and cloud), as well as GPU technology. As far as the integration of the trajectories of large molecules for a long time is concerned, the good performance of several symplectic integrators has been recognized. Among the most popular ones are the simple low-order symplectic algorithms, such as the leapfrog or velocity Verlet algorithms [52].
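As an illustration of such a low-order symplectic scheme, the sketch below applies the velocity Verlet algorithm to a bound Hénon–Heiles trajectory and monitors the energy. It assumes the standard dimensionless potential V(x, y) = (x² + y²)/2 + x²y − y³/3 with unit masses; the initial conditions and the time step are arbitrary choices and are not those used for the figures of this work.

```python
import numpy as np

def grad_V(q):
    """Gradient of the assumed standard Henon-Heiles potential."""
    x, y = q
    return np.array([x + 2.0 * x * y, y + x * x - y * y])

def energy(q, p):
    x, y = q
    return 0.5 * (p @ p) + 0.5 * (x * x + y * y) + x * x * y - y**3 / 3.0

def velocity_verlet(q0, p0, dt, n_steps):
    """Second-order symplectic integration for unit masses.

    Returns the final state and the energy recorded after every step.
    """
    q = np.array(q0, dtype=float)
    p = np.array(p0, dtype=float)
    energies = np.empty(n_steps + 1)
    energies[0] = energy(q, p)
    force = -grad_V(q)
    for i in range(n_steps):
        p_half = p + 0.5 * dt * force      # half kick
        q = q + dt * p_half                # drift
        force = -grad_V(q)
        p = p_half + 0.5 * dt * force      # half kick
        energies[i + 1] = energy(q, p)
    return q, p, energies

# Bound trajectory: initial energy of about 0.066, below the saddle energy 1/6
q_end, p_end, energies = velocity_verlet([0.0, 0.1], [0.35, 0.0], 0.01, 20000)
max_energy_error = np.max(np.abs(energies - energies[0]))
```

The energy error oscillates within a narrow band of order dt² instead of drifting, which is the practical reason why symplectic schemes are preferred for long-time integrations despite their low order.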

Presently, AI (artificial intelligence) methods, particularly machine-learning techniques, are under exploration. In the last few years, intense interest has been directed at finding algorithms that incorporate physics knowledge into neural networks. The term Hamiltonian neural networks (HNN) denotes techniques that try to solve Hamilton’s equations with deep neural networks [53,54,55]. Solving the Schrödinger equation has also attracted the interest of researchers in this field [56]. However, we must note that, at present, physics neural network (PNN) algorithms have been tested on low-dimensional systems, and their extension to systems with many degrees of freedom remains to be demonstrated.

Irrespective of the final result of this endeavor, what is worth pursuing is developing computational methods that take into account multiscale physical theories that cover extended temporal/spatial scales. What we have shown in this review is that Hamiltonian (symplectic) geometry is the foundation of principal physical theories. This should be taken into account in building PNN algorithms [57], and it signals the need for new projects. Hamiltonian geometry yields the necessary and sufficient conditions for the mutual assistance of humans and machines in deep-learning processes.

Funding

This research received no external funding.

Data Availability Statement

The raw data supporting the conclusions of this article will be made available by the authors on request.

Conflicts of Interest

The author declares no conflicts of interest.

Abbreviations

The following abbreviations are used in this manuscript:

| Abbreviation | Definition |
| --- | --- |
| FD | Finite Difference |
| PS | Pseudospectral |
| DOF | Degrees of Freedom |
| TEPS | Thermodynamic Extended Phase Space |
| TEPSS | Thermodynamic Extended Physical State Submanifold |
| TCS | Thermodynamic Contact Space |
| PTS | Physical Thermodynamic Submanifold |
| ODEs | Ordinary Differential Equations |
| HNN | Hamiltonian Neural Networks |
| PNN | Physics Neural Networks |
| nD | n-dimensional |

Appendix A

Appendix A.1. Proof of Equation (89)

For an observable and normalized states, , its expectation value is the function

and

We can prove that the differential form of the expectation value in the real projective Hilbert space is given by

where and normalized states .

Indeed,

According to Equation (15) we have replaced with 𝚤.

Appendix A.2. Proof of Equation (90)

If are the expectation value functions of two observables, the Poisson bracket is defined by the equation

is the commutator of the two operators . Indeed, the Poisson bracket of the expectation functions at state is developed as follows

Appendix A.3. Proof of Equation (152)

For a thermodynamic system the Hamiltonian in the extended phase space is written as, . Then, the time derivative of an observable function is given by the Poisson brackets as

The partial derivatives are

that result in

Hence, the thermodynamic contact vector field is

Appendix A.4. Tables

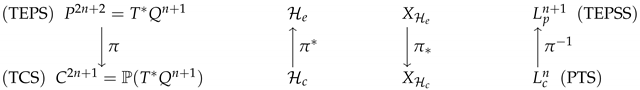

Appendix A.4.1. Projection Maps between Thermodynamic Extended Phase Space and Thermodynamic Contact Space

Table A1.

Projection maps between thermodynamic extended phase space (TEPS) and thermodynamic contact space (TCS). denotes the inverse map, the push-forward of vector fields operation and the pull-back of functions (forms). is the extended phase space, the contact state space, the Lagrangian submanifold in TEPS, which describes the thermodynamic extended physical state submanifold (TEPSS), the Legendrian submanifold in TCS, which describes the physical thermodynamic submanifold (PTS), the extended Hamiltonian, the extended Hamiltonian vector field, the contact Hamiltonian and the contact Hamiltonian vector field.


Appendix A.4.2. Thermodynamic Manifolds in Entropy Representation

Table A2.

Thermodynamic Manifolds in Entropy Representation.


| Manifold | | | |
|---|---|---|---|
| Coordinates | | | |
| Momenta | | | |
| form | | | |
| form | | | |
| PTS | (TEPSS) | | |
| Metric | | | |

Appendix A.4.3. Thermodynamic Manifolds in Energy Representation

Table A3.

Thermodynamic Manifolds in Energy Representation.


| Manifold | | | |
|---|---|---|---|
| Coordinates | | | |
| Momenta | | | |
| form | | | |
| form | | | |
| PTS | (TEPSS) | | |
| Metric | | | |

Appendix A.5. Hamiltonian Chemical Kinetics: A Simple Chemical Kinetic Example: Consecutive First-Order Elementary Reactions

Here, we show how a classical kinetic network can be cast into the Hamiltonian formalism. As an example, we take two consecutive elementary chemical reactions and dress them with a Hamiltonian mantle [30]. Instead of the distances and angles that define the positions of the atoms in a molecule, as a microscopic description requires, the generalized coordinates are now macroscopic extensive properties, such as internal energy, volume, and the number of moles (or molecules). The momenta conjugate to these thermodynamic coordinates are related to the intensive properties of temperature, pressure, and chemical potentials, respectively. By treating the macroscopic system in phase space and integrating Hamilton’s equations, we can define measures for classifying the trajectories and investigate alternative kinetic models.

The chemical equations are

where are the rate constants for the forward reactions and the rate constants for the backward reactions. , and are the constituent compounds with concentrations

The reaction coordinates (extension or progress of the reactions) are denoted by . Then, the time variation of concentrations is written as

C is the stoichiometric matrix.
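The role of the stoichiometric matrix can be illustrated with a minimal sketch. It assumes a consecutive scheme with three species labelled A, B, and C and two reactions, A ⇌ B and B ⇌ C; these labels and the names `STOICH` and `concentrations` are hypothetical and are not the notation of this article. Each column of the matrix holds the net change of every species per unit advance of one reaction, so the concentrations are the initial values plus the matrix applied to the reaction extents.

```python
# Hypothetical labels: species A, B, C; reaction 1 is A <-> B, reaction 2 is B <-> C.
STOICH = [
    [-1, 0],   # A is consumed by reaction 1
    [1, -1],   # B is produced by reaction 1 and consumed by reaction 2
    [0, 1],    # C is produced by reaction 2
]


def concentrations(c0, xi):
    """Return c = c0 + STOICH @ xi for the reaction extents xi = (xi1, xi2)."""
    return [c0_i + sum(s * x for s, x in zip(row, xi))
            for c0_i, row in zip(c0, STOICH)]


c0 = [1.0, 0.0, 0.0]                     # illustrative initial concentrations
c = concentrations(c0, [0.6, 0.25])      # illustrative reaction extents
total_before, total_after = sum(c0), sum(c)
```

Because every column of `STOICH` sums to zero in this sketch, the total concentration is unchanged by the reactions, which is consistent with a closed system that exchanges no mass with its environment.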

We consider the reactive system as a closed thermodynamic system, i.e., it exchanges energy with the environment, but not mass. If the initial concentrations of the three substances , and are and , respectively, and assuming at , then the solution of Equations (A10) is

According to the law of mass action [37,38,39,40] the reaction rates are written as

where are the rates of the forward reactions and the rates of the backward reactions, respectively. Then, the rates of reaction coordinates are

The analytical solutions of the above differential equations for the case , as well as are

with limits at equilibrium

As discussed in Section 3, the extended phase space is obtained by adding the entropy to the set of generalized coordinates and as a conjugate momentum. Then, the momenta of thermodynamic coordinates are defined as .

We study chemical reactions under isothermal and isobaric conditions. In this case, we take to denote the conjugate momentum of the entropy expressed by the Massieu-Gibbs function, , where G is the Gibbs thermodynamic potential. The affinities of the reactions, , are then written as

Following the theory described in Section 3.4.2, the above kinetic scheme is put in a Hamiltonian framework by first writing the Hamiltonian in reaction coordinates

where denote the conjugate momenta of . The reaction affinities are written as

Hence, Hamilton’s equations take the form (Equation (192))

Appendix A.5.1. Discussion

We make the following remarks. Integrating Equation (A17), we calculate the entropy production for the selected reaction pathway. It also provides the time variation of Gibbs free energy (). Equations (A18) and (A19) are the fluxes of chemical reactions given as the difference of the rates of forward and backward reactions. Usually, reaction rates are obtained by phenomenological kinetic models, such as those provided by the Mass Action kinetics (MAK) [37,38].
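The remark on fluxes can be made concrete with a minimal sketch of mass action kinetics for two consecutive reversible first-order reactions. The species labels A, B, C, the rate-constant values, and the function names are assumptions chosen for illustration; the integrator is a plain fourth-order Runge-Kutta scheme, not the finite-difference solver used in this article. Each net flux is the forward rate minus the backward rate, and both fluxes vanish at equilibrium.

```python
def fluxes(c, kf, kb):
    """Net mass-action fluxes of A <-> B and B <-> C (forward minus backward)."""
    a, b, cc = c
    return [kf[0] * a - kb[0] * b, kf[1] * b - kb[1] * cc]


def rhs(c, kf, kb):
    """Time derivatives of the concentrations of A, B, and C."""
    r1, r2 = fluxes(c, kf, kb)
    return [-r1, r1 - r2, r2]


def rk4_step(c, dt, kf, kb):
    """Advance the concentrations by one classical Runge-Kutta step."""
    k1 = rhs(c, kf, kb)
    k2 = rhs([x + 0.5 * dt * k for x, k in zip(c, k1)], kf, kb)
    k3 = rhs([x + 0.5 * dt * k for x, k in zip(c, k2)], kf, kb)
    k4 = rhs([x + dt * k for x, k in zip(c, k3)], kf, kb)
    return [x + dt * (p + 2 * q + 2 * r + s) / 6
            for x, p, q, r, s in zip(c, k1, k2, k3, k4)]


def integrate(c0, kf, kb, t_max, dt=0.01):
    """Integrate from t = 0 to t_max and return the final concentrations."""
    c = list(c0)
    for _ in range(int(round(t_max / dt))):
        c = rk4_step(c, dt, kf, kb)
    return c


# Illustrative (assumed) forward and backward rate constants.
KF, KB = (2.0, 1.0), (1.0, 0.5)
c_end = integrate([1.0, 0.0, 0.0], KF, KB, t_max=30.0)
flux_end = fluxes(c_end, KF, KB)
```

With these assumed constants, detailed balance gives the equilibrium ratios [B]/[A] = 2 and [C]/[B] = 2, so the long-time concentrations approach 1/7, 2/7, and 4/7 of the total.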

Equation (A20) designates the conservation of the gauge momentum with an initial value equal to .

The terms in the second summation of the RHS of Equations (A21) and (A22) are zero on the thermodynamic extended state manifold (Lagrangian submanifold), and they can be ignored during the integration of Hamilton’s equations. However, in solving the variational equations, we must keep all the terms.

By studying the kinetics of chemical reactions in phase space, we can adopt measures for classifying reaction paths in phase space. For example, we define a metric on the Lagrangian submanifold in Massieu-Gibbs representation as

and calculate the distance between the initial and equilibrium states

with being the maximum integration time and substituting . The distance between two states has been used to define a lower bound, tighter than zero, on the entropy production or dissipated work (availability) of finite-time irreversible processes [43,44].
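As a minimal sketch of how such a distance can be evaluated along a computed trajectory, the function below sums the segment lengths sqrt(dx^T g dx) over sampled points, with the metric evaluated at the segment midpoints. The name `path_length` and the callable `metric` are hypothetical, and the Euclidean metric in the usage example is only a stand-in; the thermodynamic metric of this appendix is not reproduced here.

```python
import math


def path_length(points, metric):
    """Approximate the length of a sampled path under a Riemannian metric.

    points : list of coordinate lists along the trajectory
    metric : callable returning the matrix g (list of rows) at a given point
    """
    length = 0.0
    for p, q in zip(points, points[1:]):
        mid = [0.5 * (a + b) for a, b in zip(p, q)]
        dx = [b - a for a, b in zip(p, q)]
        g = metric(mid)
        n = len(dx)
        ds2 = sum(g[i][j] * dx[i] * dx[j] for i in range(n) for j in range(n))
        length += math.sqrt(max(ds2, 0.0))  # guard against rounding below zero
    return length


def euclidean(_point):
    """Stand-in metric used only to check the routine."""
    return [[1.0, 0.0], [0.0, 1.0]]


# Quarter of the unit circle, sampled at 2001 points.
N = 2000
arc = [[math.cos(0.5 * math.pi * k / N), math.sin(0.5 * math.pi * k / N)]
       for k in range(N + 1)]
arc_length = path_length(arc, euclidean)
```

For a trajectory obtained from Hamilton's equations, `points` would hold the sampled thermodynamic coordinates and `metric` the chosen metric evaluated on the Lagrangian submanifold.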

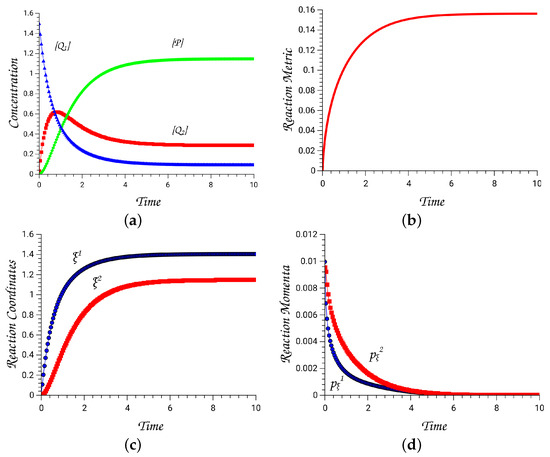

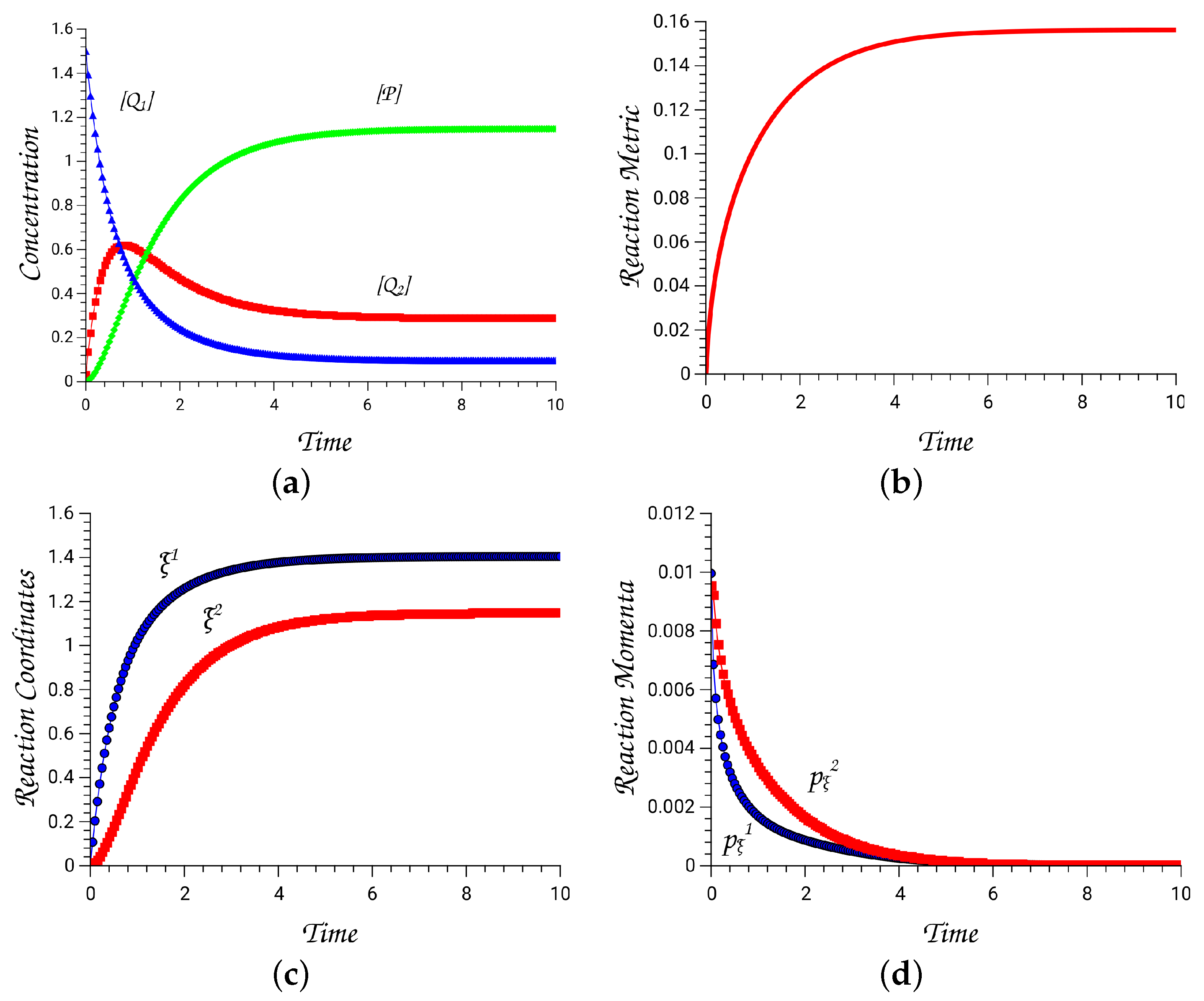

For the chosen example, we have numerically solved Hamilton’s equations for several combinations of rate constants and initial concentrations [30]. A representative trajectory is shown in Figure A1, with parameter values given in the figure caption. For all trajectories run with double-precision arithmetic, the extended Hamiltonian is conserved approximately to zero with an accuracy . The symplectic fundamental matrix is obtained by solving the variational equations [29,48] for a time interval of 5 t.u. (time units). Diagonalizing this matrix, we find three pairs of eigenvalues. One pair is always equal to one as a result of the conservation of the Hamiltonian function, whereas the other two come in combinations of two real positive numbers . For the present trajectory, these are and . All panels in Figure A1 demonstrate that for this highly unstable trajectory, equilibrium is reached in 4 t.u., with the momenta approaching zero.
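The pairing of eigenvalues can be illustrated on a toy problem. The sketch below is not the six-dimensional kinetic system of this appendix: it assumes a one-degree-of-freedom Hamiltonian H = (p² − ω²q²)/2 with an unstable equilibrium, integrates its variational equations with a Runge-Kutta scheme, and diagonalizes the resulting 2 × 2 fundamental matrix. The function names and parameter values are hypothetical. Since the eigenvalues of a symplectic matrix come in reciprocal pairs, their product should equal one.

```python
import math


def matmul(a, b):
    """Product of two 2x2 matrices stored as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def shifted(z, k, h):
    """Return z + h * k for 2x2 matrices."""
    return [[z[i][j] + h * k[i][j] for j in range(2)] for i in range(2)]


def fundamental_matrix(a, t_end, n_steps):
    """Integrate dZ/dt = a Z with Z(0) = identity by fourth-order Runge-Kutta."""
    z = [[1.0, 0.0], [0.0, 1.0]]
    h = t_end / n_steps
    for _ in range(n_steps):
        k1 = matmul(a, z)
        k2 = matmul(a, shifted(z, k1, 0.5 * h))
        k3 = matmul(a, shifted(z, k2, 0.5 * h))
        k4 = matmul(a, shifted(z, k3, h))
        z = [[z[i][j] + h * (k1[i][j] + 2 * k2[i][j] + 2 * k3[i][j] + k4[i][j]) / 6
              for j in range(2)] for i in range(2)]
    return z


def eigenvalues_2x2(m):
    """Real eigenvalues and determinant of a 2x2 matrix (hyperbolic case)."""
    tr = m[0][0] + m[1][1]
    det = m[0][0] * m[1][1] - m[0][1] * m[1][0]
    disc = math.sqrt(tr * tr - 4.0 * det)
    return 0.5 * (tr + disc), 0.5 * (tr - disc), det


OMEGA, T_END = 1.0, 2.0                  # assumed illustrative values
A = [[0.0, 1.0], [OMEGA ** 2, 0.0]]      # J times the Hessian of H, ordering (q, p)
Z = fundamental_matrix(A, T_END, 2000)
lam_big, lam_small, det_z = eigenvalues_2x2(Z)
```

For this toy system the exact eigenvalues are exp(ωT) and exp(−ωT), so the larger one measures the instability of the trajectory while the unit determinant reflects the symplectic structure.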

Figure A1.

(a) Concentrations of the constituent chemical species as functions of time, (b) reaction metric, (c) reaction coordinates , (d) conjugate momenta to reaction coordinates. The quantities have been calculated from a trajectory run with initial concentrations, . The rate constants for the forward reactions are taken equal to , and the backward reactions .


References

- Scheck, F. Mechanics; Springer: Berlin/Heidelberg, Germany, 1990.

- Marsden, J.E.; Ratiu, T.S. Introduction to Mechanics and Symmetry: A Basic Exposition of Classical Mechanical Systems, 2nd ed.; Springer: Berlin/Heidelberg, Germany, 1999; Volume 17.

- Meyer, K.R.; Hall, G.R.; Offin, D. Introduction to Hamiltonian Dynamical Systems and the N-Body Problem, 2nd ed.; Applied Mathematical Sciences; Springer: New York, NY, USA, 2009; Volume 90.

- Farantos, S.C.; Schinke, R.; Guo, H.; Joyeux, M. Energy Localization in Molecules, Bifurcation Phenomena, and their Spectroscopic Signatures: The Global View. Chem. Rev. 2009, 109, 4248–4271.

- Farantos, S.C. Nonlinear Hamiltonian Mechanics Applied to Molecular Dynamics: Theory and Computational Methods for Understanding Molecular Spectroscopy and Chemical Reactions; Springer: Berlin/Heidelberg, Germany, 2014.

- Ashtekar, A.; Schilling, T. Geometrical Formulation of Quantum Mechanics. In On Einstein’s Path: Essays in Honor of Engelbert Schucking; Harvey, A., Ed.; Springer: New York, NY, USA, 1999; pp. 23–65.

- Brody, D.C.; Hughston, L.P. Geometric quantum mechanics. J. Geom. Phys. 2001, 38, 19–53.

- Heydari, H. Geometric formulation of quantum mechanics. arXiv 2016.

- Arnold, V.I. Mathematical Methods of Classical Mechanics, 2nd ed.; Springer: New York, NY, USA, 1989.

- Libermann, P.; Marle, C.M. Symplectic Geometry and Analytical Mechanics; Reidel Publishing Company: Dordrecht, Holland, 1987.

- Hermann, R. Geometry, Physics, and Systems. Pure and Applied Mathematics; Marcel Dekker, Inc.: New York, NY, USA, 1973.

- Mrugala, R. Geometric formulation of equilibrium phenomenological thermodynamics. Rep. Math. Phys. 1978, 14, 419.

- Mrugala, R. On equivalence of two metrics in classical thermodynamics. Phys. A 1984, 125, 631–639.

- Mrugala, R. Submanifolds in the thermodynamic phase space. Rep. Math. Phys. 1985, 21, 197.

- Mrugala, R.; Nulton, J.; Schoen, J.; Salamon, P. Contact structures in thermodynamic theory. Rep. Math. Phys. 1991, 29, 109–121.

- Peterson, M.A. Analogy between Thermodynamics and Mechanics. Am. J. Phys. 1979, 47, 488–490.

- Salamon, P.; Andresen, B.; Nulton, J.; Konopka, A.K. The Mathematical Structure of Thermodynamics; CRC Press: Boca Raton, FL, USA, 2007; pp. 207–221.

- Balian, R.; Valentin, P. Hamiltonian structure of thermodynamics with gauge. Eur. Phys. J. B 2001, 21, 269–282.

- Callen, H.B. Thermodynamics and an Introduction to Thermostatistics, 2nd ed.; John Wiley and Sons: Hoboken, NJ, USA, 1985.

- Bravetti, A.; Cruz, H.; Tapias, D. Contact Hamiltonian mechanics. Ann. Phys. 2017, 376, 17–39.

- Gay-Balmaz, F.; Yoshimura, H. A Lagrangian variational formulation for nonequilibrium thermodynamics. Part I: Discrete systems. J. Geom. Phys. 2017, 111, 169–193.

- Gay-Balmaz, F.; Yoshimura, H. Dirac structures in nonequilibrium thermodynamics. IFAC-PapersOnLine 2018, 51, 31–37.

- Liu, Q.; Torres, P.J.; Wang, C. Contact Hamiltonian dynamics: Variational principles, invariants, completeness and periodic behavior. Ann. Phys. 2018, 395, 26–44.

- van der Schaft, A.; Maschke, B. Geometry of Thermodynamic Processes. Entropy 2018, 20, 925.

- Fornberg, B. A Practical Guide to Pseudospectral Methods; Cambridge Monographs on Applied and Computational Mathematics; Cambridge University Press: Cambridge, UK, 1998; Volume 1.

- Hénon, M.; Heiles, C. The Applicability of the Third Integral of Motion: Some Numerical Experiments. Astron. J. 1964, 69, 73–79.

- Born, M.; Oppenheimer, R. On the Quantum Theory of Molecules. Ann. Phys. 1927, 84, 457–484.

- Frankel, T. The Geometry of Physics: An Introduction; Cambridge University Press: Cambridge, UK, 2004.

- Farantos, S.C. Hamiltonian Thermodynamics in the Extended Phase Space: A unifying theory for non-linear molecular dynamics and classical thermodynamics. J. Math. Chem. 2020, 58, 1247–1280.

- Farantos, S.C. Hamiltonian classical thermodynamics and chemical kinetics. Phys. D 2021, 417, 132813.

- Sen, D. The uncertainty relations in quantum mechanics. Curr. Sci. 2014, 104, 203–218.

- Essex, C.; Andresen, B. The principal equations of state for classical particles, photons, and neutrinos. J. Non-Equilib. Thermodyn. 2013, 38, 293–312.

- Ruppeiner, G. Thermodynamics: A Riemannian geometric model. Phys. Rev. A 1979, 20, 1608–1613.

- Ruppeiner, G. Riemannian geometry in thermodynamic fluctuation theory. Rev. Mod. Phys. 1995, 67, 605–659.

- Weinhold, F. Metric Geometry of Equilibrium Thermodynamics. J. Chem. Phys. 1975, 63, 2479–2483.

- Weinhold, F. Classical and Geometrical Theory of Chemical and Phase Thermodynamics; John Wiley & Sons: Hoboken, NJ, USA, 2009.

- Engelmann, W. Translation: Investigations of Chemical Affinities. Essays by C.M. Guldberg and P. Waage from the Years 1864, 1867, 1879; Wilhelm Engelmann: Leipzig, Germany, 1864.

- Voit, E.O.; Martens, H.A.; Omholt, S.W. 150 Years of the Mass Action Law. PLoS Comput. Biol. 2015, 11, e1004012.

- van der Schaft, A.; Rao, S.; Jayawardhana, B. On the Mathematical Structure of Balanced Chemical Reaction Networks Governed by Mass Action Kinetics. SIAM J. Appl. Math. 2013, 73, 953–973.

- van der Schaft, A.; Rao, S.; Jayawardhana, B. Complex and detailed balancing of chemical reaction networks revisited. J. Math. Chem. 2015, 53, 1445–1458.