A Transferable Learning Classification Model and Carbon Sequestration Estimation of Crops in Farmland Ecosystem

Abstract

1. Introduction

2. Study Sites and Data

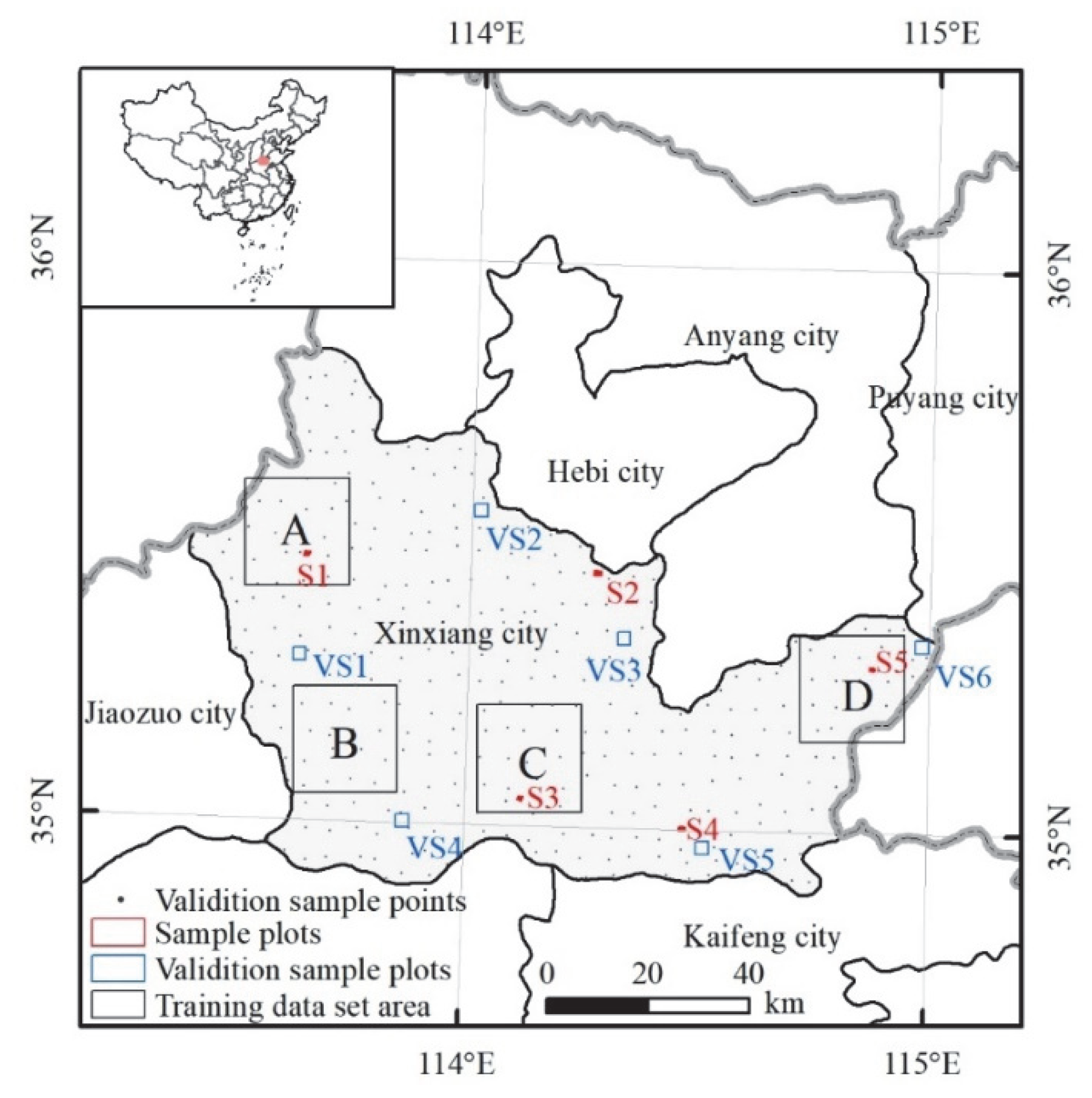

2.1. Study Sites

2.2. Sentinel-2A Data

2.3. Sample Data

3. Methodology

3.1. UNet++ Model and Assessment Indicators

3.2. Baseline Classification Models and Evaluation Indicators

3.3. Estimation of Crop Carbon Sequestration

4. Results

4.1. Performance Evaluation of the UNet++ Model

4.2. Evaluation of Baseline Models and Prediction Classification Accuracy

4.3. Reclassification Rules and Crop Mapping

- (1) We manually delineated the approximate boundary of the disaster area shown in Figure 6c and then converted the classification results for all three years into vector format;

- (2) The disaster area was spatially intersected with the 2021 classification results (including other crops, urban, and water), and the result was named 2021_disaster_intersect_area;

- (3) The 2021_disaster_intersect_area data were spatially intersected with the 2019 and 2020 classification results (including corn, peanuts, soybean, rice, NCL, other crops, and greenhouses), and the results were named 2019_disaster_intersect_area and 2020_disaster_intersect_area, respectively;

- (4) We spatially intersected 2021_disaster_intersect_area with 2019_disaster_intersect_area and with 2020_disaster_intersect_area, and the results were named 2019_2021_disaster_intersect_area and 2020_2021_disaster_intersect_area, respectively.

Finally, after applying the merge tool, attribute field assignment, the dissolve tool, and manual editing, we obtained the disaster area result shown in Figure 5d. The land use and crop maps for each year, further corrected using the road data of Xinxiang City, are shown in Figure 6.
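The overlay rules in steps (1) to (4) can be illustrated with a minimal sketch. It is not the GIS workflow used in the study: it assumes the maps are reduced to a toy grid of cells rather than vector polygons, it reads "including" as "restricted to these classes", and every name and data value in it (`intersect`, `FLOOD_CLASSES`, `PRIOR_CLASSES`, the 2 x 3 grid) is hypothetical.

```python
from typing import Dict, Set, Tuple

Cell = Tuple[int, int]          # (row, col) of a grid cell; stands in for a polygon
ClassMap = Dict[Cell, str]      # cell -> land-cover label for one year


def intersect(area: Set[Cell], class_map: ClassMap, keep: Set[str]) -> Set[Cell]:
    """Return the cells of `area` whose label in `class_map` is in `keep`."""
    return {cell for cell in area if class_map.get(cell) in keep}


# Step (1): hand-drawn disaster boundary, here a toy 2 x 3 block of cells.
disaster = {(r, c) for r in range(2) for c in range(3)}

# Toy classification results for the three years (illustrative values only).
map_2019 = {(0, 0): "corn", (0, 1): "peanuts", (0, 2): "urban",
            (1, 0): "rice", (1, 1): "corn", (1, 2): "water"}
map_2020 = {(0, 0): "corn", (0, 1): "urban", (0, 2): "urban",
            (1, 0): "soybean", (1, 1): "NCL", (1, 2): "water"}
map_2021 = {(0, 0): "water", (0, 1): "other crops", (0, 2): "urban",
            (1, 0): "water", (1, 1): "corn", (1, 2): "water"}

# Assumed class filters, taken from the parenthetical lists in steps (2) and (3).
FLOOD_CLASSES = {"other crops", "urban", "water"}
PRIOR_CLASSES = {"corn", "peanuts", "soybean", "rice", "NCL",
                 "other crops", "greenhouses"}

# Step (2): disaster area intersected with the 2021 result.
d2021 = intersect(disaster, map_2021, FLOOD_CLASSES)

# Step (3): that result intersected with the 2019 and 2020 results.
d2019 = intersect(d2021, map_2019, PRIOR_CLASSES)
d2020 = intersect(d2021, map_2020, PRIOR_CLASSES)

# Step (4): pairwise intersections with the 2021 layer.
d2019_2021 = d2021 & d2019
d2020_2021 = d2021 & d2020

# Stand-in for the final merge/dissolve: the union of both pairwise layers.
candidate_cells = d2019_2021 | d2020_2021
print(sorted(candidate_cells))
```

In this toy grid, the selected cells are those labelled water, urban, or other crops in 2021 that carried a crop-related label in 2019 or 2020. The attribute field assignment and manual editing described in the text are not modelled.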

4.4. Estimation of Crop Area and Carbon Sequestration

5. Discussion

5.1. Evaluation of Model Parameters and Classification Accuracy

5.2. Comparison of Local Results and Error Analysis

5.3. Analysis of Crop Carbon Sequestration

5.4. Limitations and Future Work

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Scheme | Features | Feature Variables |
|---|---|---|
| 1 | Spectral bands | Blue (B), green (G), red (R), red edge 1 (RE1), red edge 2 (RE2), red edge 3 (RE3), near-infrared (NIR), narrow NIR (NNIR), shortwave infrared 1 (SWIR1), shortwave infrared 2 (SWIR2) |
| 2 | Spectral bands, vegetation indices, and texture | B, G, R, RE1, RE2, RE3, NIR, NNIR, SWIR1, SWIR2, modified normalized difference water index (MNDWI), normalized difference built-up index (NDBI), normalized difference vegetation index (NDVI), red-edge NDVI (RENDVI), sum average (SAVG), correlation (CORR), dissimilarity (DISS) |

| Type | | | | |
|---|---|---|---|---|
| Corn | 0.470 | 0.13 | 0.170 | 0.438 |
| Peanuts | 0.450 | 0.10 | 0.200 | 0.556 |
| Soybean | 0.450 | 0.13 | 0.130 | 0.425 |
| Rice | 0.410 | 0.12 | 0.125 | 0.489 |
| Other crops | 0.450 | 0.90 | 0.250 | 0.830 |

| Model | Total Epoch | Loss | mIoU | Total Time | Average Time |
|---|---|---|---|---|---|
| UNet | 72 | 0.499 | 0.796 | 331.43 | 4.60 |
| DeepLab V3+ | 72 | 0.521 | 0.777 | 527.65 | 7.33 |
| PSPNet | 70 | 0.588 | 0.708 | 162.51 | 2.32 |

| Year | Indicator | Scheme 1, WUS (UNet++) | Scheme 1, US (UNet++) | Scheme 2, WUS (UNet++) | Scheme 2, US (UNet++) | Scheme 2, US (UNet) | Scheme 2, US (DeepLabV3+) | Scheme 2, US (PSPNet) |
|---|---|---|---|---|---|---|---|---|
| 2019 | OA | 79.46 | 83.34 | 80.59 | 88.18 | 86.02 | 80.26 | 71.66 |
| 2019 | Macro F1 | 50.51 | 52.68 | 50.66 | 58.54 | 55.11 | 54.73 | 47.85 |
| 2020 | OA | 76.15 | 78.31 | 80.76 | 87.56 | 80.07 | 81.12 | 77.99 |
| 2020 | Macro F1 | 47.03 | 51.14 | 51.29 | 58.71 | 54.52 | 54.73 | 49.86 |
| 2021 | OA | 76.91 | 79.87 | 78.01 | 83.52 | 74.76 | 75.85 | 67.42 |
| 2021 | Macro F1 | 58.43 | 59.09 | 59.58 | 59.67 | 53.21 | 52.16 | 47.39 |

| Year | Indicator | Corn | Peanuts | Soybean | Rice | NCL | OTH | GH | FL | Urban | Water |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 2019 | PA | 0.94 | 0.95 | 0.71 | 0.97 | 0.21 | 0.28 | 0.00 | 0.54 | 0.76 | 0.57 |
| 2019 | UA | 0.89 | 0.95 | 0.90 | 0.95 | 0.43 | 0.10 | 0.00 | 0.80 | 0.76 | 0.31 |
| 2019 | F1 score | 0.91 | 0.95 | 0.80 | 0.96 | 0.29 | 0.15 | 0.00 | 0.64 | 0.76 | 0.40 |
| 2020 | UA | 0.86 | 0.95 | 0.82 | 0.92 | 0.08 | 0.27 | NaN | 0.76 | 0.88 | 0.54 |
| 2020 | PA | 0.90 | 0.79 | 0.84 | 0.93 | 0.59 | 0.19 | NaN | 0.91 | 0.85 | 0.21 |
| 2020 | F1 score | 0.88 | 0.86 | 0.83 | 0.93 | 0.14 | 0.22 | 0.00 | 0.83 | 0.87 | 0.31 |
| 2021 | PA | 0.98 | 0.93 | 0.83 | 0.83 | 0.40 | 0.15 | 0.00 | 0.70 | 0.76 | 0.39 |
| 2021 | UA | 0.91 | 0.89 | 0.75 | 0.89 | 0.44 | 0.17 | NaN | 0.60 | 0.71 | 0.69 |
| 2021 | F1 score | 0.95 | 0.91 | 0.79 | 0.86 | 0.42 | 0.16 | 0.00 | 0.65 | 0.73 | 0.50 |
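The F1 rows in the table above are consistent with the usual harmonic mean of the two accuracies, which the short sketch below reproduces. It assumes that PA and UA denote producer's and user's accuracy (recall and precision). The function name `f1_score` and the rule of returning 0.0 for undefined inputs are illustrative choices made to match the 0.00 entries in the GH column, not something the text states.

```python
import math


def f1_score(pa: float, ua: float) -> float:
    """Harmonic mean of producer's accuracy (pa) and user's accuracy (ua)."""
    # Assumption: undefined cases (NaN input or pa + ua == 0) are reported
    # as 0.0, mirroring the 0.00 F1 entries for greenhouses (GH).
    if math.isnan(pa) or math.isnan(ua) or pa + ua == 0:
        return 0.0
    return 2 * pa * ua / (pa + ua)


# 2019 values taken from the table above.
corn_2019 = round(f1_score(0.94, 0.89), 2)
rice_2019 = round(f1_score(0.97, 0.95), 2)
gh_2021 = f1_score(0.00, float("nan"))
print(corn_2019, rice_2019, gh_2021)
```

Because the published PA and UA are already rounded to two decimals, a recomputed F1 can differ from the tabulated value by 0.01 for some classes.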

| Year | Indicator | Corn | Peanuts | Soybean | Rice | NCL | OTH | GH | FL | Urban | Water |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 2019 | AP | 35.45 | 7.82 | 2.19 | 1.49 | 1.23 | 3.17 | 0.09 | 14.16 | 33.14 | 1.27 |
| 2019 | CS | 1997.85 | 248.13 | 62.98 | 64.19 | | 87.41 | | | | |
| 2020 | AP | 36.84 | 6.79 | 2.15 | 0.88 | 0.56 | 4.31 | 0.11 | 13.53 | 33.18 | 1.65 |
| 2020 | CS | 2114.99 | 218.43 | 55.54 | 40.44 | | 119.76 | | | | |
| 2021 | AP | 35.15 | 7.23 | 1.35 | 0.69 | 0.16 | 3.33 | 0.16 | 12.93 | 32.88 | 2.63 |
| 2021 | CS | 1442.01 | 217.09 | 35.69 | 32.90 | | 86.38 | | | | |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Wang, L.; Bai, Y.; Wang, J.; Qin, F.; Liu, C.; Zhou, Z.; Jiao, X. A Transferable Learning Classification Model and Carbon Sequestration Estimation of Crops in Farmland Ecosystem. Remote Sens. 2022, 14, 5216. https://doi.org/10.3390/rs14205216