1. Introduction

Synthetic aperture radar (SAR) is used in a variety of applications, such as disaster monitoring [1], agricultural monitoring [2], and geological surveys [3], owing to its all-weather, all-day Earth observation capability. These applications rely on supporting techniques such as change detection [4], image fusion [5], and 3D reconstruction [6], in all of which image registration plays a vital role. Image registration is the process of transforming multiple images acquired at different times or from different viewpoints into the same coordinate system. However, robust and highly accurate geometric registration of SAR images is challenging because SAR sensors produce varying degrees of geometric distortion when imaging from different viewpoints. In addition, SAR images suffer from severe speckle noise.
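As a minimal illustration of what "transforming images into the same coordinate system" can mean in practice, the sketch below fits a 2D affine transform to matched keypoint pairs by least squares. The affine model, the function names `estimate_affine` and `apply_affine`, and the sample coordinates are assumptions made for illustration; they are not taken from the method proposed in this paper.

```python
import numpy as np

def estimate_affine(src_pts, dst_pts):
    """Least-squares 2D affine transform that maps src_pts onto dst_pts."""
    src = np.asarray(src_pts, dtype=float)
    dst = np.asarray(dst_pts, dtype=float)
    # Homogeneous design matrix: each row is [x, y, 1].
    design = np.hstack([src, np.ones((src.shape[0], 1))])
    # Solve design @ params ~= dst; params has shape (3, 2).
    params, *_ = np.linalg.lstsq(design, dst, rcond=None)
    return params.T  # 2x3 matrix [A | t]

def apply_affine(matrix, pts):
    """Apply a 2x3 affine matrix to an (N, 2) array of points."""
    pts = np.asarray(pts, dtype=float)
    return pts @ matrix[:, :2].T + matrix[:, 2]

# Hypothetical matched keypoints: sensed-image points and their
# reference-image counterparts generated from a known transform.
true_matrix = np.array([[0.98, -0.17, 12.0],
                        [0.17, 0.98, -5.0]])
sensed_pts = np.array([[10.0, 20.0], [200.0, 40.0], [60.0, 180.0], [150.0, 150.0]])
reference_pts = apply_affine(true_matrix, sensed_pts)

estimated = estimate_affine(sensed_pts, reference_pts)
```

With noise-free matches the estimate recovers the generating transform; with real matches, incorrectly matched point pairs would bias the fit, which is why match quality matters for registration accuracy.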

Existing SAR image registration methods can be roughly divided into two categories: area-based methods and feature-based methods. Area-based methods, also known as intensity-based methods, register image pairs by means of a template-matching scheme. Classical similarity measures for template matching include mutual information (MI) [7] and the normalized cross-correlation coefficient (NCC) [8]. However, area-based methods generally incur a high computational cost. Although this cost can be reduced to a large extent by coarse pre-registration [9,10] or local search strategies [11,12], area-based methods still perform poorly in scenes with large geometric distortions.
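To make the template-matching idea and its cost concrete, the following sketch performs an exhaustive NCC search in plain NumPy. The function names `ncc` and `match_template` and the random test image are hypothetical; this is a generic illustration of NCC matching, not the implementation used in the cited works.

```python
import numpy as np

def ncc(patch, template):
    """Normalized cross-correlation coefficient of two equal-size arrays."""
    p = patch - patch.mean()
    t = template - template.mean()
    denom = np.sqrt((p ** 2).sum() * (t ** 2).sum())
    return 0.0 if denom == 0 else float((p * t).sum() / denom)

def match_template(image, template):
    """Exhaustive search: return the (row, col) with the best NCC and its score."""
    th, tw = template.shape
    best_score, best_pos = -np.inf, (0, 0)
    # Every candidate offset is scored, which is what makes the
    # unrestricted search expensive on large images.
    for r in range(image.shape[0] - th + 1):
        for c in range(image.shape[1] - tw + 1):
            score = ncc(image[r:r + th, c:c + tw], template)
            if score > best_score:
                best_score, best_pos = score, (r, c)
    return best_pos, best_score

# Hypothetical data: cut a template out of a random image and find it again.
rng = np.random.default_rng(0)
image = rng.random((40, 40))
template = image[12:20, 25:33].copy()
position, score = match_template(image, template)
```

The nested loop scores every offset, so restricting the search window (for example after a coarse pre-registration) directly reduces the number of NCC evaluations.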

In contrast to area-based methods, feature-based methods achieve registration by extracting feature descriptors of keypoints, and they are usually faster than area-based methods. Feature-based methods can be further divided into handcrafted approaches and learning-based approaches. Traditional handcrafted approaches for SAR image registration include SAR-SIFT [13,14,15], speeded-up robust features (SURF) [16,17], KAZE-SAR [18], etc. Although these methods reduce the interference of speckle noise, they still produce a large number of incorrectly matched point pairs when registering SAR images with complex scenes. One reason is that, owing to the complexity of texture and structural information in such images, the accuracy and repeatability of the detected keypoints cannot be guaranteed. Eltanany et al. [19] used phase congruency (PC) and a Harris corner keypoint detector to detect meaningful keypoints in SAR images with complex intensity variations. Xiang et al. [20] proposed a novel keypoint detector based on feature interaction in which a local coefficient of variation keypoint detector was used to mask keypoints in mountainous and built-up areas. However, it is difficult to detect uniformly distributed keypoints in SAR images with complex scenes using these methods. Another reason is that handcrafted feature descriptors are unable to extract significant features such as line or contour structures in complex scenes. With the development of deep learning, methods such as convolutional neural networks (CNNs) have achieved considerable success in the field of SAR image interpretation. Quan et al. [21] designed a deep neural network (DNN) for SAR image registration and confirmed that deep learning can extract more robust features and achieve more accurate matching. Zhang et al. [22] proposed a Siamese fully convolutional network for SAR image registration and trained the network model by maximizing the feature distance between positive and hard negative samples. Xiang et al. [20] proposed a Siamese cross-stage partial network (Sim-CSPNet) to extract feature descriptors containing both deep and shallow features, which increases the expressiveness of the feature descriptors. Fan et al. [23] proposed a transformer-based SAR image registration method, which exploits the powerful learning capability of the transformer to extract accurate feature descriptors of keypoints under weak texture conditions.

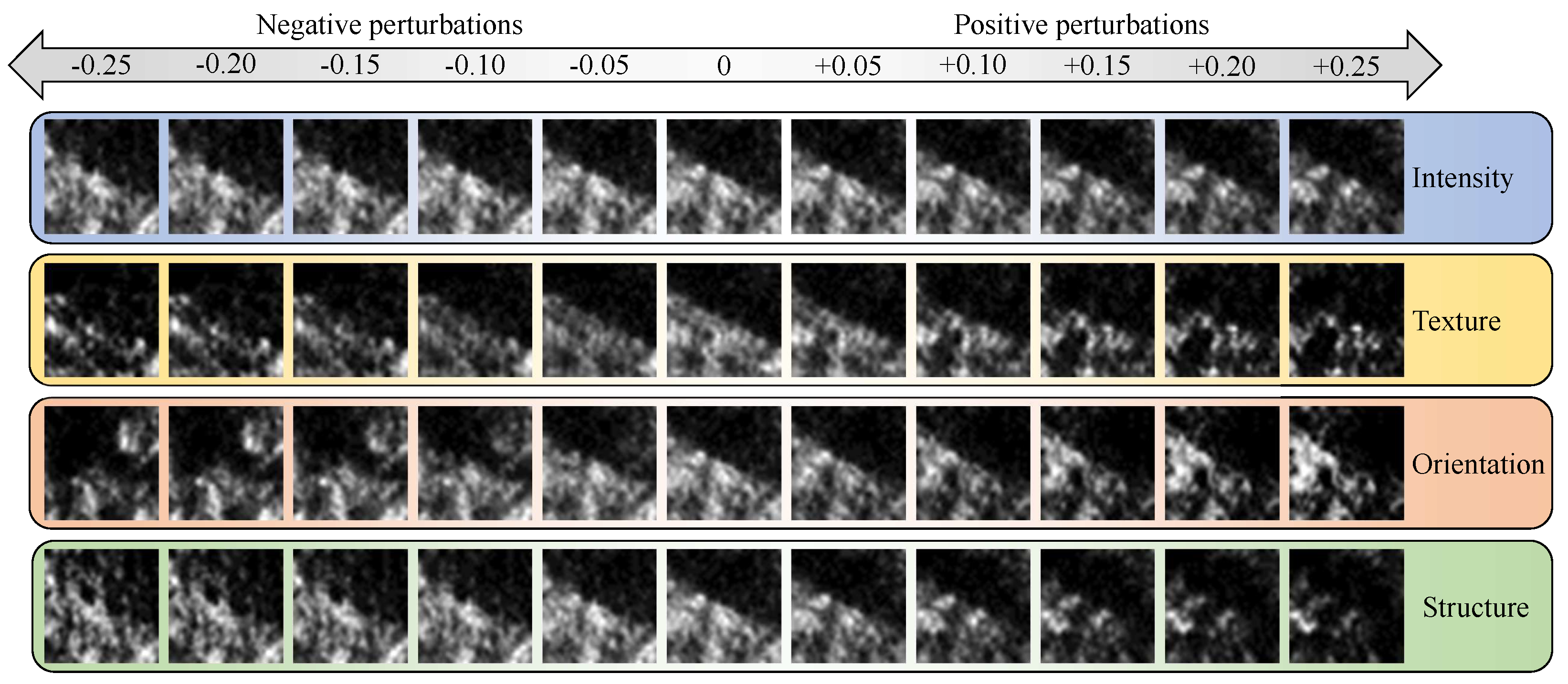

Although CNN-based methods extract feature descriptors that contain salient information useful for registration, such as structure, intensity, orientation, and texture information, CNNs improve feature robustness by virtue of “translation invariance” in convolution and pooling operations. “Translation invariance” means that the CNN is able to steadily extract salient features from an image patch even when the patch undergoes small slides (including small translations and rotations) [

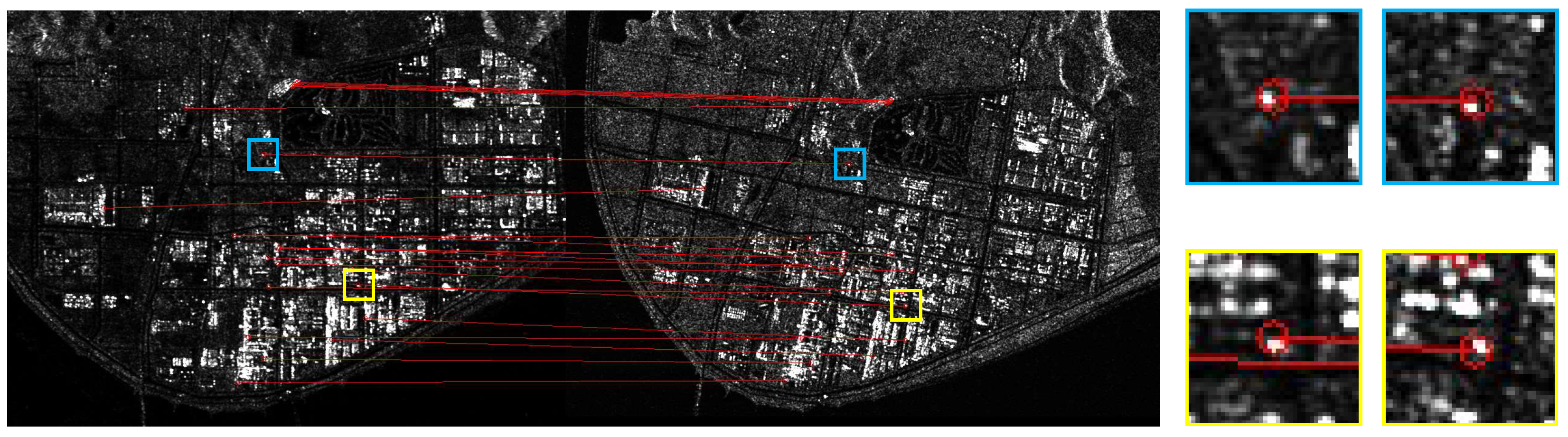

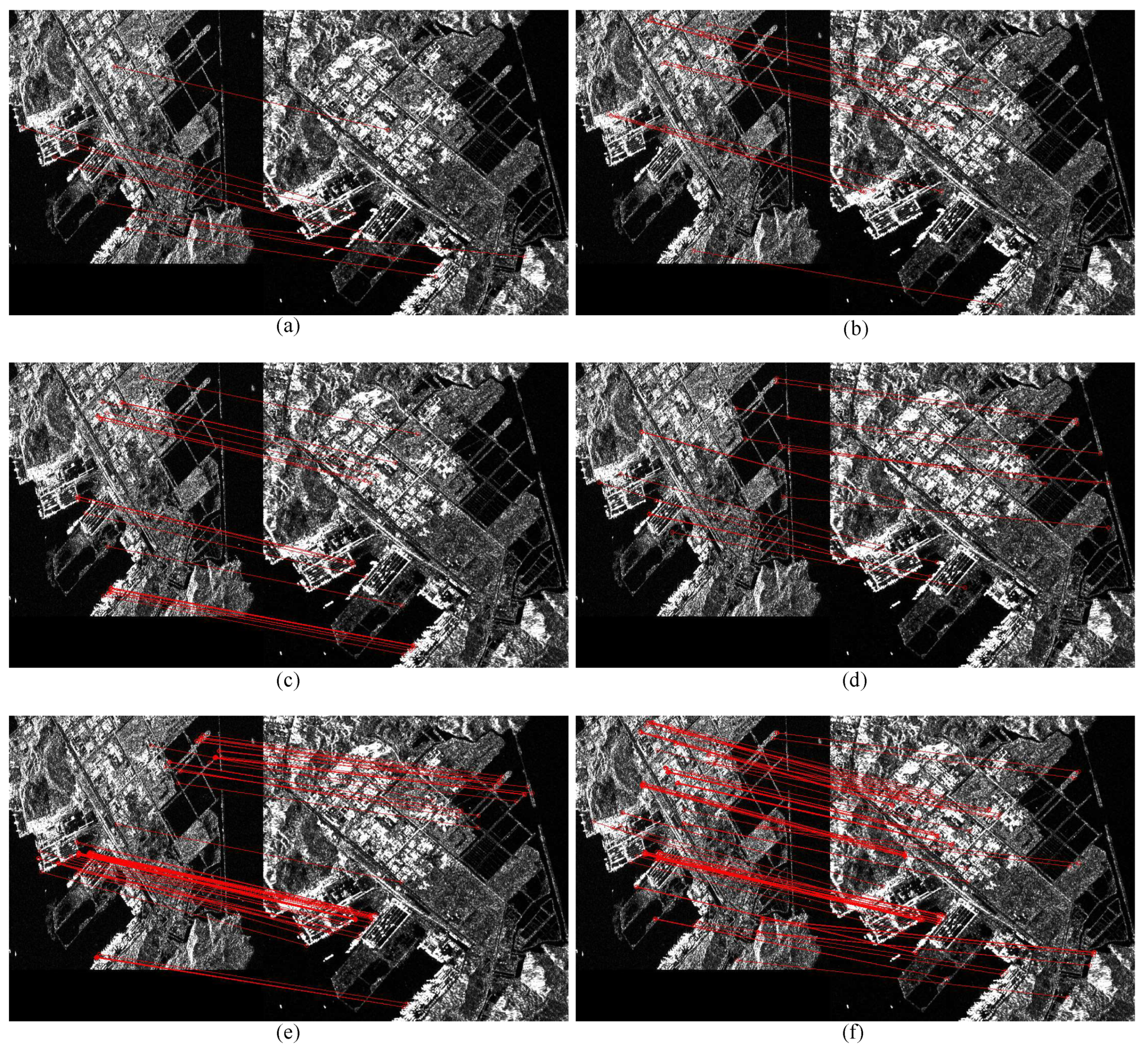

24]. However, this “translation invariance” is fatal in image registration, as it leads to small displacement errors in the matched point pairs, as shown in

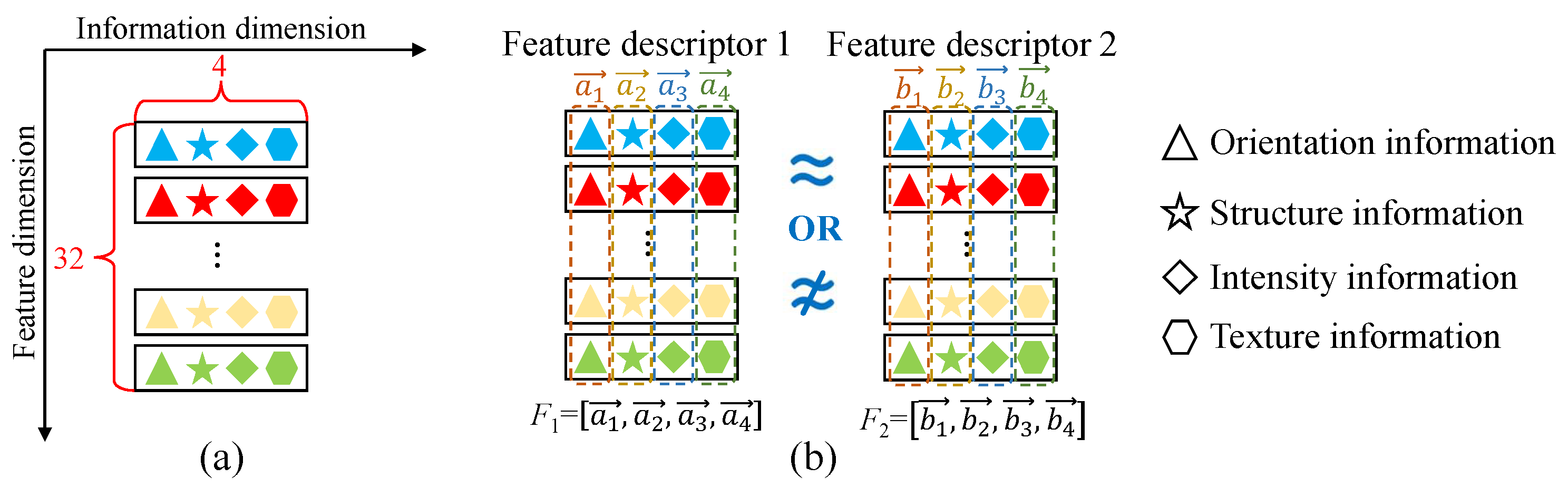

Figure 1. In addition, the feature descriptors obtained by the CNN-based methods are mostly described in a vector form. However, in traditional deep learning networks, the activation of one neuron can only represent one kind of information, and the singularity of its dimensionality dictates that the neuron itself cannot represent multiple kinds of information at the same time [

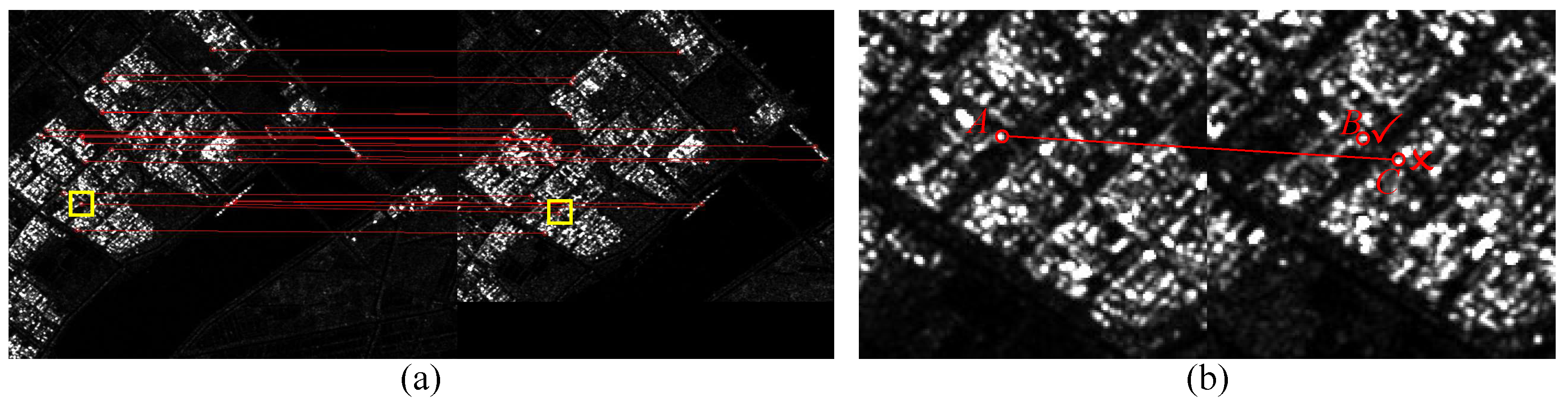

25]. Therefore, the multiple pieces of information of keypoints and the relationship between the information hidden in a large number of network parameters pose many problems for SAR image registration. For instance, (1) the network requires a large number of training samples to violently decipher the relationships between multiple kinds of information. However, the scarcity of SAR image registration datasets often leads to incomplete training of models or causes data to fall into local optima. (2) The presence of complex non-linear relationships in feature descriptors detaches them from their actual physical interpretation, resulting in poor generalization performance. (3) The feature descriptors in vector form are not capable of representing fine information about keypoints in complex scenes. When two keypoints at different locations have similar structure and intensity information, their feature descriptors may be very similar in vector space, so that the registration algorithm is unable to recognize the differences between the feature descriptors, which may result in mismatched point pairs. As shown in

Figure 2, although the advanced registration algorithm Sim-CSPNet is used, keypoint

A is still incorrectly matched to keypoint

C.
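The effect of pooling on small shifts can be illustrated with a toy example. The sketch below applies non-overlapping 2×2 max pooling to a patch and to a copy shifted by one pixel; the helper `max_pool2d` and the single-scatterer patch are assumptions chosen for illustration and do not reproduce any particular network.

```python
import numpy as np

def max_pool2d(x, size=2):
    """Non-overlapping max pooling on a 2D array whose sides are divisible by size."""
    h, w = x.shape
    return x.reshape(h // size, size, w // size, size).max(axis=(1, 3))

# Hypothetical 8x8 patch with one bright scatterer at (row 2, col 2).
patch = np.zeros((8, 8))
patch[2, 2] = 1.0

# The same scatterer displaced by one pixel, now at (row 2, col 3).
shifted = np.roll(patch, shift=1, axis=1)

pooled_a = max_pool2d(patch)
pooled_b = max_pool2d(shifted)

# Both positions fall inside the same pooling cell, so the pooled
# features are identical although the inputs differ.
identical = np.array_equal(pooled_a, pooled_b)
```

Because the pooled features of the two patches coincide, a descriptor built on top of them cannot tell the two keypoint positions apart, which is one way a one-pixel displacement error can enter a matched point pair.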

The advent of the capsule network (CapsNet) [26] has broken the limitation that an active neuron in traditional neural networks can only represent a single kind of information, achieving more competitive results than CNNs in various image application fields [25,27,28,29]. CapsNets encapsulate multiple neurons to form neuron capsules (NCs), enabling the evolution from “scalar neurons” to “vector neurons”. In this case, a single NC can represent multiple kinds of information at the same time, and the network does not need to additionally encode the complex relationships between them. Therefore, CapsNets require far less training data than CNNs, while their deep features are no less effective than those of CNNs. Despite the many advantages of CapsNets over CNNs, there are still some limitations to the application of CapsNets to image registration tasks, which is why no relevant research has been published so far.

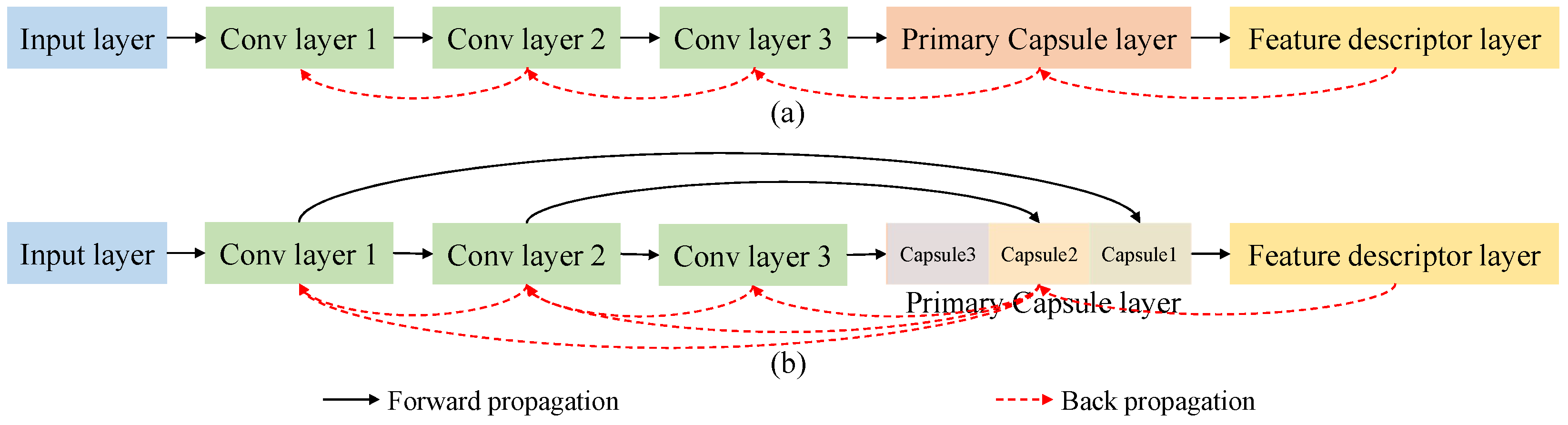

One limitation is that the traditional CapsNet adopts a single convolutional layer to extract deep features, which is not sufficient to obtain significant features. The most direct solution is to add more convolutional layers [29,30]. However, a small amount of training data cannot support the training of a deep model, and the increased backpropagation distance degrades the convergence of the network. In addition, as mentioned earlier, most feature descriptors are in the form of vectors, whereas the feature descriptors extracted by CapsNets are in a special matrix form (the rows of the matrix represent the NCs, and the columns of the matrix represent the different kinds of useful information). Therefore, how to measure the distance between the feature descriptors is one of the key difficulties that constrain the application of CapsNets to image registration.

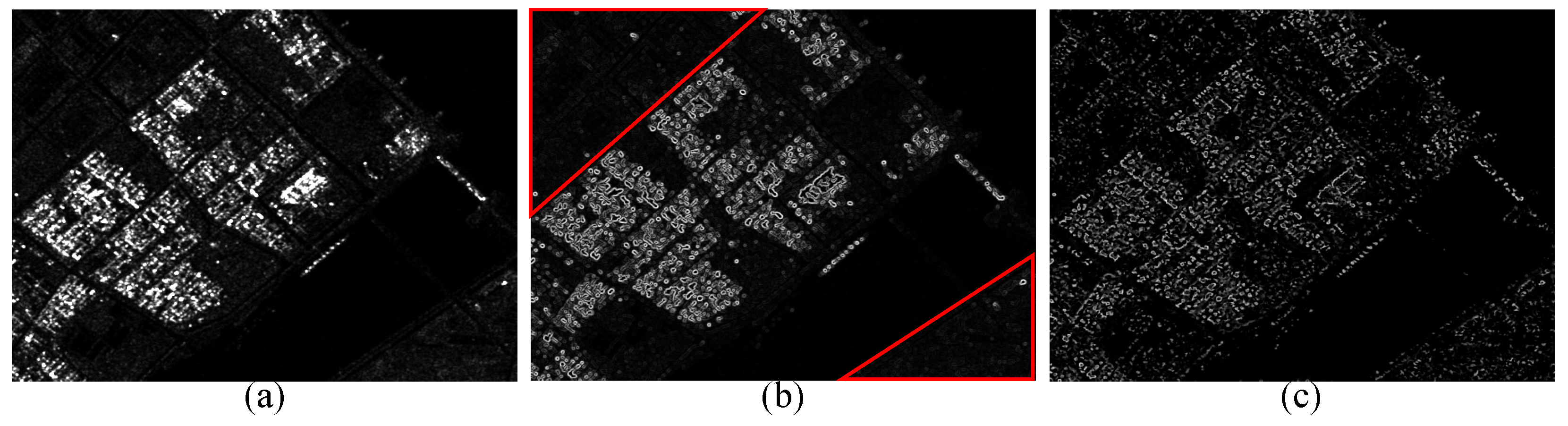

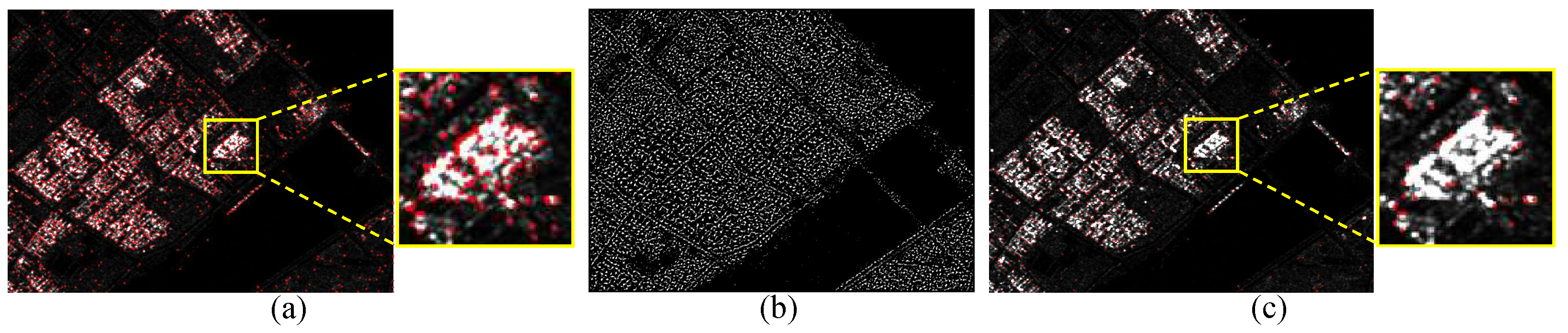

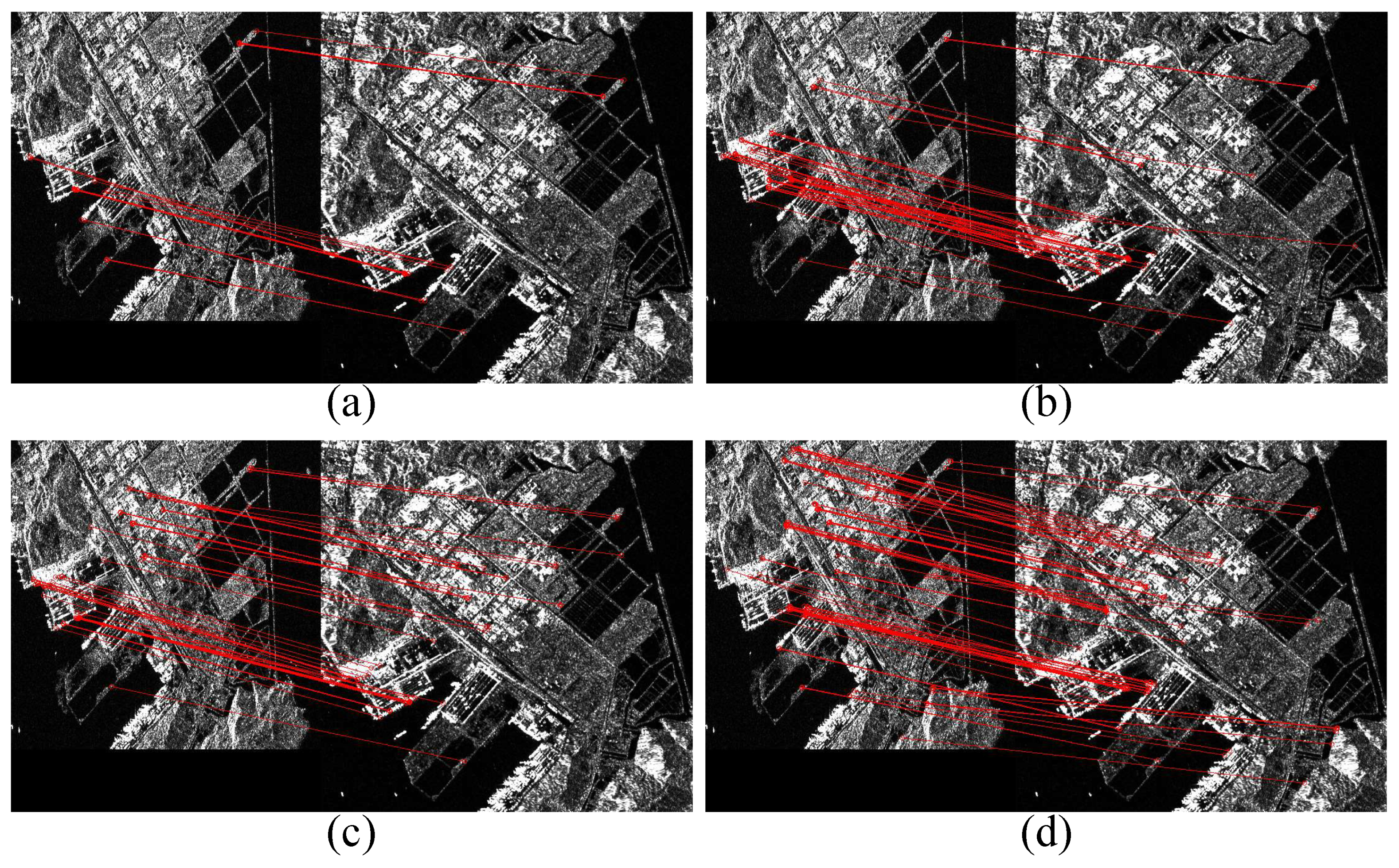

In this paper, we propose a novel registration method for SAR images with complex scenes based on a Siamese dense capsule network (SD-CapsNet), as shown in

Figure 3. The proposed registration method consists of two main components, namely a texture constraint-based phase congruency (TCPC) keypoint detector and a Siamese dense capsule network-based (SD-CapsNet) feature descriptor extractor. In SAR data processing, phase information has been shown to be superior to amplitude information, reflecting the contour and structural information of the image [

31,

32]. However, the phase information contains contour and structural information generated by geometric distortions such as overlays or shadows within complex scenes, which may affect the correct matching of keypoints. Therefore, we add a texture feature to the PC keypoint detector to constrain the keypoint positions. Specifically, when a keypoint has a large local texture feature, we consider that the keypoint may be located inside a complex scene, making it difficult to extract discriminative feature descriptors. Furthermore, considering the possible rotation between the reference and sensed images, the use of rotation-invariant texture features facilitates the acquisition of keypoints with high repeatability. Therefore, we adopt the rotation-invariant local binary pattern (RI-LBP) to describe the local texture features of the keypoints detected by the PC keypoint detector, and we discard a detected keypoint when its RI-LBP value is higher than the average global RI-LBP value. To break the limitation of traditional capsule networks in terms of deep feature extraction capability, we cascade three convolutional layers in front of the primary capsule layer and design a dense form of connection from the convolutional layer to the primary capsule layer, which makes the primary capsules contain both deep semantic information and shallow detail information. The dense connection form shortens the distance of loss backpropagation, which improves the convergence performance of the network and reduces the dependence of the model on training samples. In addition, in the parameter settings of most CapsNets, the dimension of the high-level capsules is greater than that of the primary capsules, allowing the high-level capsules to represent more complex entity information [

29,

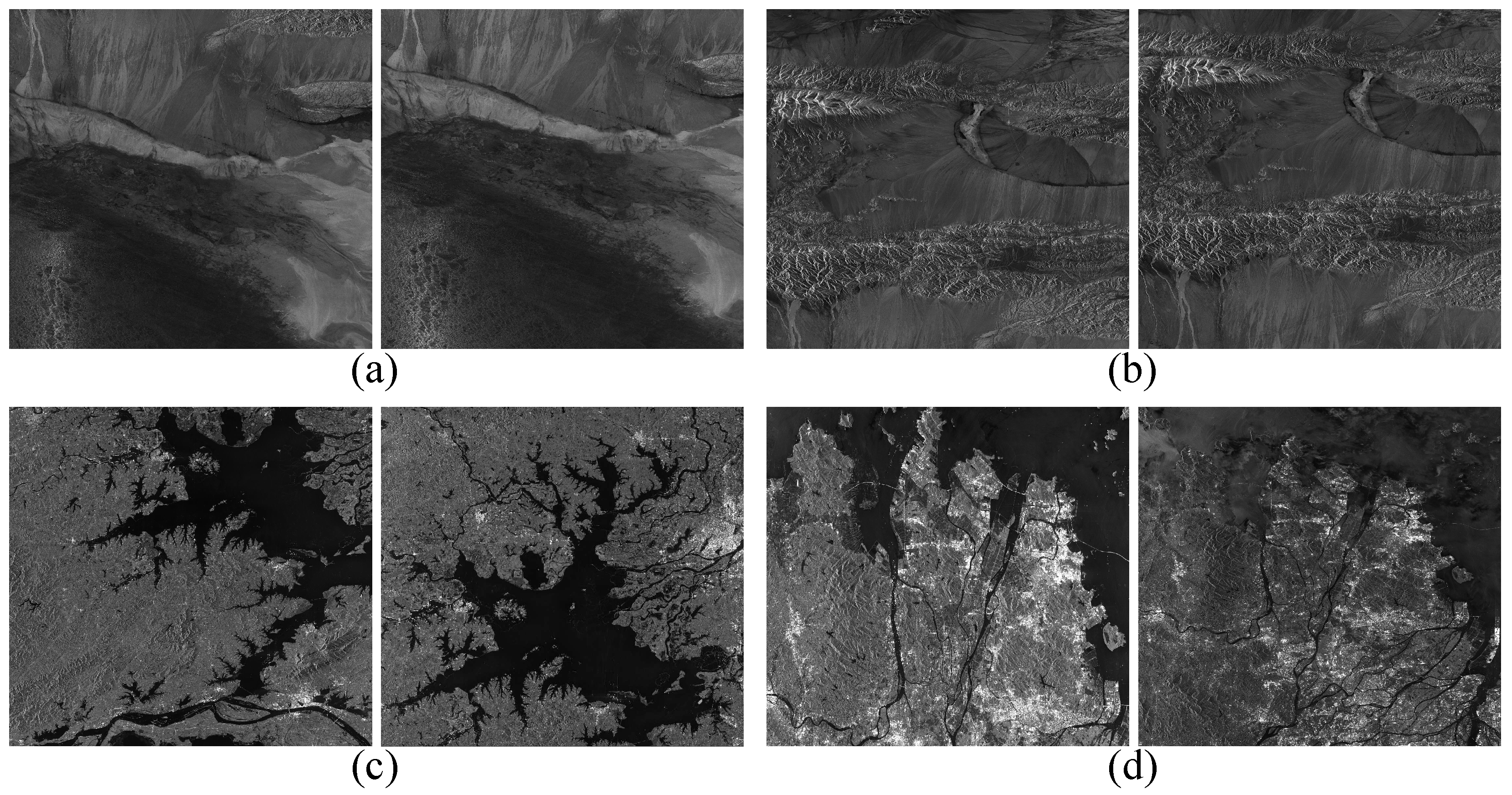

30]. However, in our proposed method, high-level capsules are used as feature descriptors to describe important information of keypoints, such as structure information, intensity information, texture information, and orientation information; therefore, the feature descriptors extracted by SD-CapsNet require only four dimensions, greatly reducing the computational burden of the dynamic routing process. In addition, we define the L2-distance between capsules for feature descriptors in the capsule form. The effectiveness of the proposed method is verified using data for Jiangsu Province and Greater Bay Area in China obtained by Sentinel-1.
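The texture constraint on keypoints described above (discarding keypoints whose RI-LBP value exceeds the global average) might be sketched as follows. The 3×3 neighbourhood, the strict "greater than" comparison with the centre pixel, the treatment of border pixels, and the names `ri_lbp` and `filter_keypoints` are all assumptions made for this illustration; the exact RI-LBP configuration of the proposed detector is not specified in this section.

```python
import numpy as np

# The 8 neighbours of a pixel in circular (clockwise) order, starting top-left.
OFFSETS = [(-1, -1), (-1, 0), (-1, 1), (0, 1), (1, 1), (1, 0), (1, -1), (0, -1)]

def ri_lbp(image):
    """Rotation-invariant 8-neighbour LBP code per pixel (border pixels set to 0)."""
    img = np.asarray(image, dtype=float)
    h, w = img.shape
    center = img[1:-1, 1:-1]
    codes = np.zeros(center.shape, dtype=np.uint16)
    for bit, (dr, dc) in enumerate(OFFSETS):
        neighbour = img[1 + dr:h - 1 + dr, 1 + dc:w - 1 + dc]
        # Assumed convention: a bit is set when the neighbour is strictly brighter.
        codes |= (neighbour > center).astype(np.uint16) << bit
    # Rotation invariance: keep the minimum over all 8 circular bit rotations.
    best = codes.copy()
    rotated = codes.copy()
    for _ in range(7):
        rotated = ((rotated >> 1) | ((rotated & 1) << 7)) & 0xFF
        best = np.minimum(best, rotated)
    out = np.zeros(img.shape, dtype=np.uint16)
    out[1:-1, 1:-1] = best
    return out

def filter_keypoints(image, keypoints):
    """Keep interior keypoints whose RI-LBP code does not exceed the global mean."""
    codes = ri_lbp(image)
    threshold = codes[1:-1, 1:-1].mean()
    return [(r, c) for r, c in keypoints if codes[r, c] <= threshold]

# Hypothetical image: flat left half, checkerboard texture on the right half.
image = np.zeros((10, 10))
for r in range(10):
    for c in range(5, 10):
        image[r, c] = (r + c) % 2

codes = ri_lbp(image)
kept = filter_keypoints(image, [(2, 2), (4, 6)])
```

In this toy image the keypoint in the flat region is retained, while the keypoint inside the textured region has a code above the global mean and is discarded.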

The main contributions of this paper are briefly summarized as follows.

- (1) We propose a texture constraint-based phase congruency (TCPC) keypoint detector that can detect uniformly distributed keypoints in SAR images with complex scenes and remove keypoints that may be located in layover or shadow regions, thereby improving the repeatability of keypoints;

- (2) We propose a Siamese dense capsule network (SD-CapsNet) to implement feature descriptor extraction and matching. SD-CapsNet uses dense connections to construct the primary capsules, which shortens the backpropagation distance and makes the primary capsules contain both deep semantic information and shallow detail information;

- (3) We construct feature descriptors in capsule form and verify that the dimensions of the capsule correspond to intensity, texture, orientation, and structure information. Furthermore, we define the L2 distance between capsules for feature descriptors in capsule form and combine this distance with the hard L2 loss function to train SD-CapsNet.
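One plausible reading of an L2 distance between capsule-form descriptors, combined with a hardest-negative margin loss, is sketched below. The summation of per-capsule Euclidean distances, the margin value, the batch layout `(batch, capsules, dims)`, and the names `capsule_distance`, `pairwise_capsule_distance`, and `hard_margin_loss` are assumptions made for illustration; the definitions actually used by SD-CapsNet are given later in the paper and may differ.

```python
import numpy as np

def capsule_distance(desc_a, desc_b):
    """Assumed distance: sum over capsules (rows) of the per-capsule Euclidean distance."""
    diff = np.asarray(desc_a, dtype=float) - np.asarray(desc_b, dtype=float)
    return float(np.linalg.norm(diff, axis=-1).sum())

def pairwise_capsule_distance(anchors, positives):
    """distances[i, j] between anchor i and positive j; inputs are (batch, capsules, dims)."""
    diff = anchors[:, None, :, :] - positives[None, :, :, :]
    return np.linalg.norm(diff, axis=-1).sum(axis=-1)

def hard_margin_loss(anchors, positives, margin=1.0):
    """Margin loss against the hardest (closest) non-matching descriptor in the batch."""
    dist = pairwise_capsule_distance(anchors, positives)
    pos = np.diag(dist)                                  # matching pairs
    masked = dist + np.diag(np.full(len(dist), np.inf))  # exclude matching pairs
    hardest_neg = masked.min(axis=1)
    return float(np.maximum(0.0, margin + pos - hardest_neg).mean())

# Hypothetical batch: 4 keypoints, 16 capsules each, 4 dimensions per capsule.
rng = np.random.default_rng(0)
anchors = rng.normal(size=(4, 16, 4))
positives = anchors + 0.01 * rng.normal(size=anchors.shape)

loss_matched = hard_margin_loss(anchors, positives)
# Deliberately misaligned pairs should be penalized.
loss_mismatched = hard_margin_loss(anchors, np.roll(positives, 1, axis=0))
```

Keeping the descriptor as a matrix and comparing it capsule by capsule preserves the row structure of the descriptor instead of flattening it into a single vector before the distance is computed.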

The rest of this paper is organized as follows. In Section 2, the proposed method is introduced. Section 3 presents the experimental results of our proposed method, as well as comparisons with other state-of-the-art methods. Section 4 discusses the effectiveness of the keypoint detector and feature descriptor extractor. Finally, conclusions are presented in Section 5.