Deep-Learning Correction Methods for Weather Research and Forecasting (WRF) Model Precipitation Forecasting: A Case Study over Zhengzhou, China

Abstract

1. Introduction

2. Data and Methodology

2.1. Scheme of Precipitation Correction

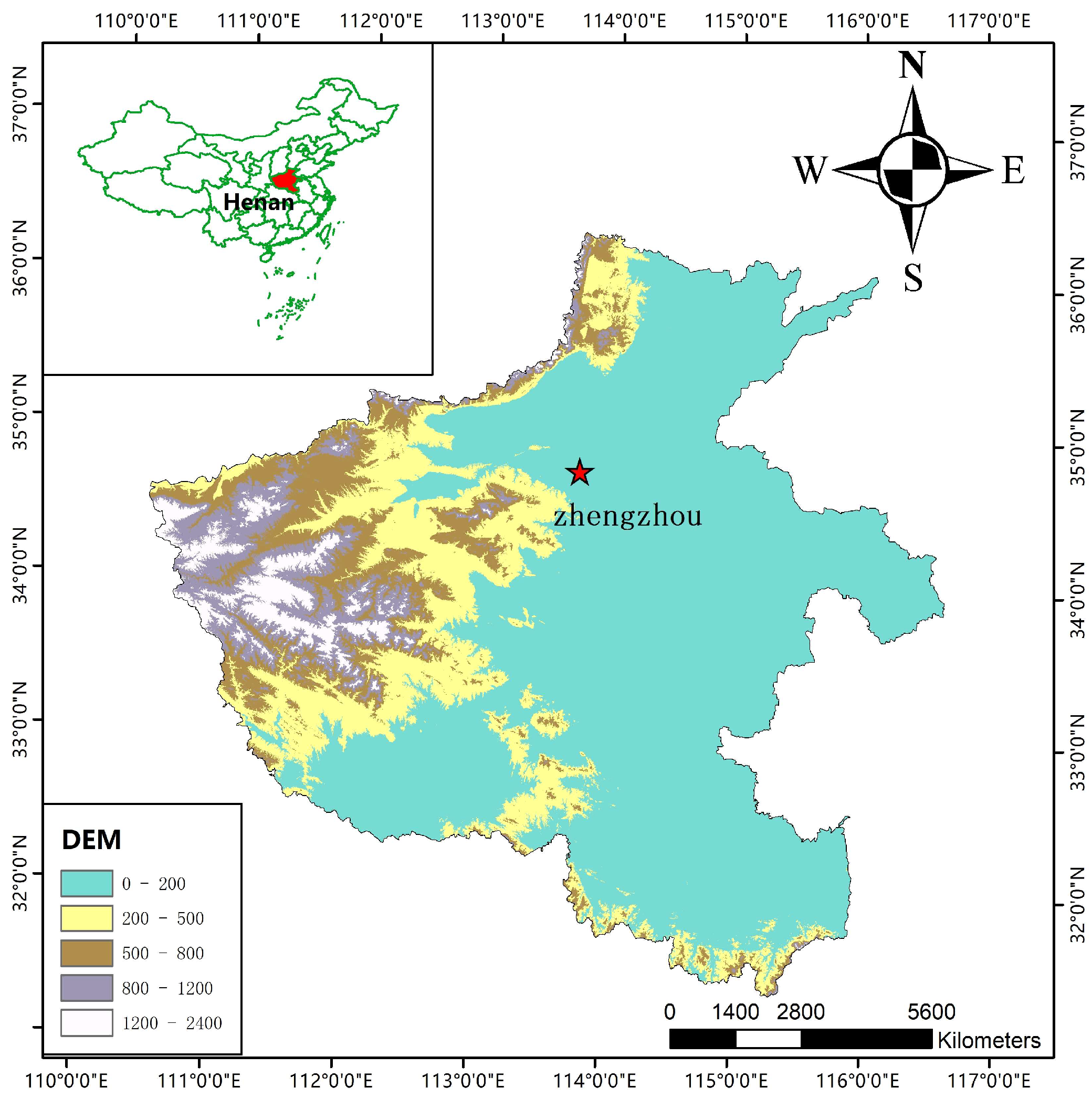

2.2. Study Area

2.3. Construction of the Sample Database

2.4. Data Standardization

- h (hits): the number of events that were forecast and actually occurred.

- m (misses): the number of events that occurred but were not forecast.

- f (false alarms): the number of events that were forecast but did not occur.

- c (correct negatives): the number of cases in which an event was neither forecast nor observed.
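This excerpt does not preserve the paper's score equations. The sketch below assumes the standard contingency-table definitions, accuracy = (h + c)/(h + m + f + c), POD = h/(h + m) and TS = h/(h + m + f), and shows how h, m, f and c could be counted from paired forecasts and observations. The function names and the 0.1 mm event threshold (the lower bound of "weak precipitation" in the precipitation-level table) are illustrative assumptions.

```python
def contingency_counts(forecast, observed, threshold=0.1):
    """Count hits (h), misses (m), false alarms (f) and correct negatives (c).

    A case counts as an "event" when the precipitation amount (mm) is at or
    above `threshold`; 0.1 mm is an assumed default.
    """
    h = m = f = c = 0
    for fc, ob in zip(forecast, observed):
        fc_event, ob_event = fc >= threshold, ob >= threshold
        if fc_event and ob_event:
            h += 1  # forecast and observed
        elif ob_event:
            m += 1  # observed but not forecast
        elif fc_event:
            f += 1  # forecast but not observed
        else:
            c += 1  # neither forecast nor observed
    return h, m, f, c


def verification_scores(h, m, f, c):
    """Accuracy, POD and TS from the 2x2 contingency counts (NaN if undefined)."""
    def ratio(num, den):
        return num / den if den else float("nan")

    return {
        "accuracy": ratio(h + c, h + m + f + c),
        "POD": ratio(h, h + m),
        "TS": ratio(h, h + m + f),
    }


# Toy hourly amounts (mm), for illustration only.
forecast = [0.0, 0.5, 2.0, 0.0, 1.2, 0.0]
observed = [0.0, 0.3, 0.0, 0.4, 1.0, 0.0]
counts = contingency_counts(forecast, observed)
scores = verification_scores(*counts)
print(counts)  # (2, 1, 1, 2)
print(scores)
```

Keeping the counting separate from the scoring makes it easy to recompute the scores for a different event threshold without changing the score formulas.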

2.5. Training and Test Dataset

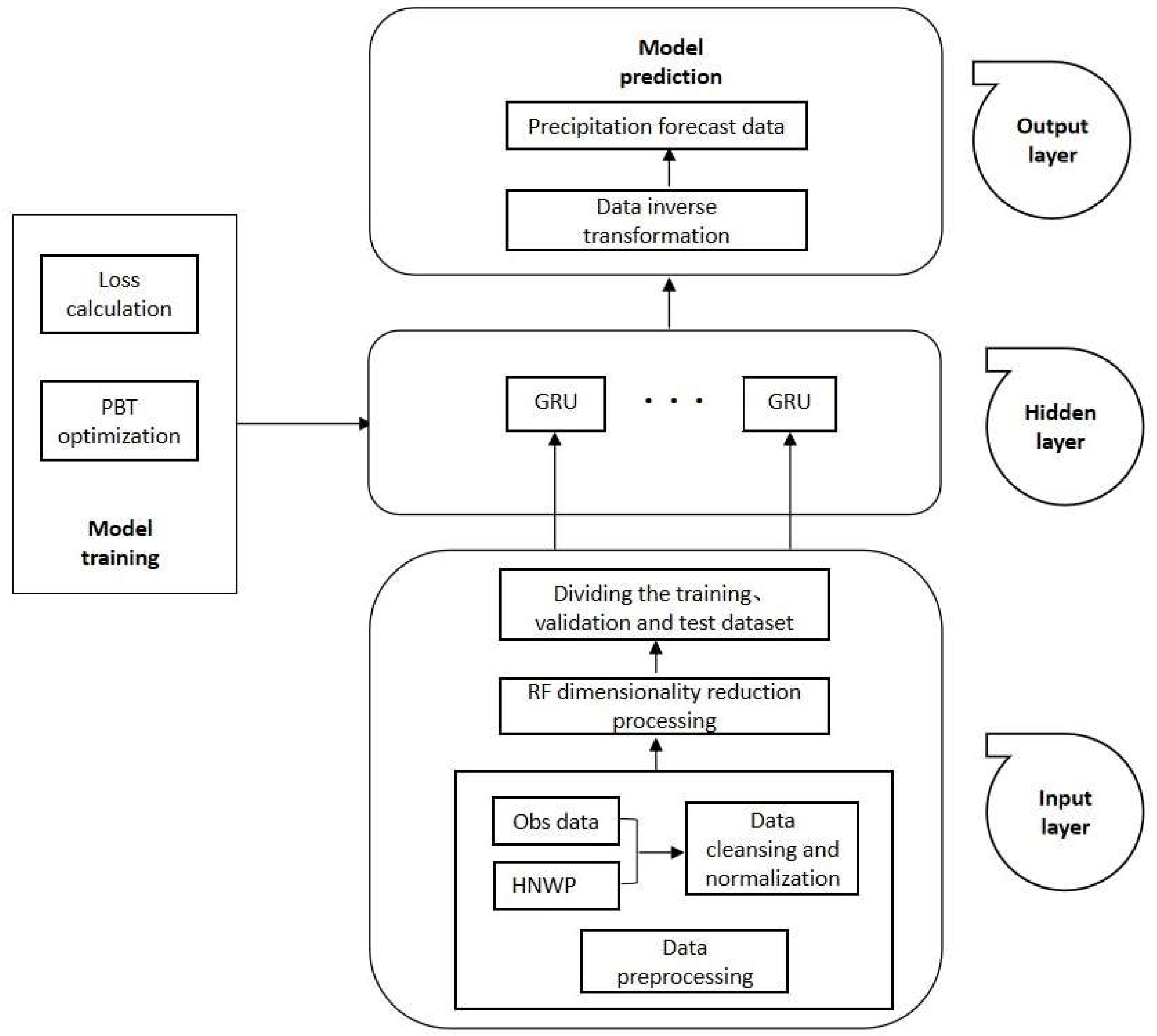

3. Correction Model Construction Based on PBT and GRU

3.1. Dataset Dimensionality Reduction by RF

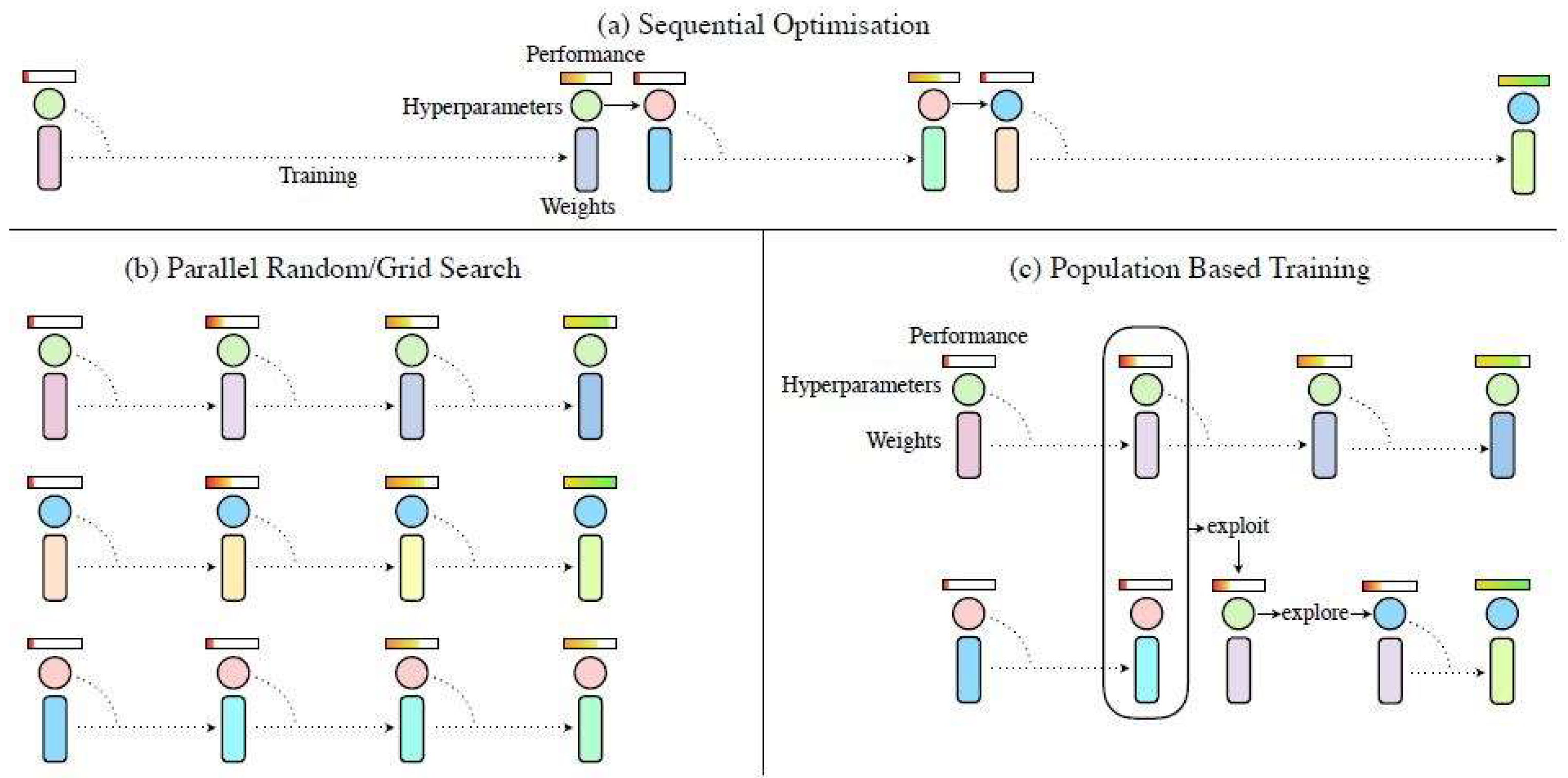

3.2. The PBT Optimization Algorithm

3.3. Construction of the Model

3.4. Experimental Setup

4. Results and Discussion

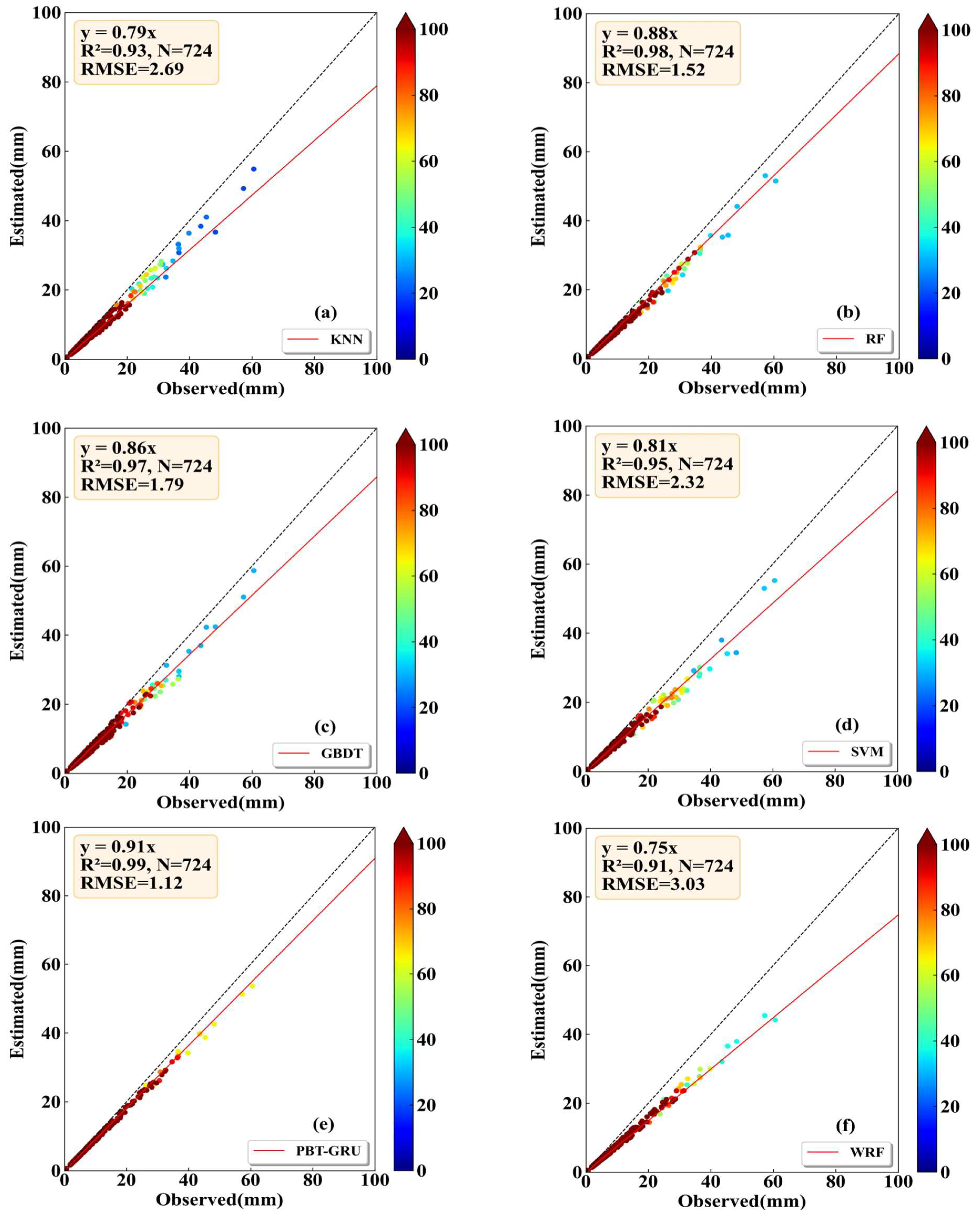

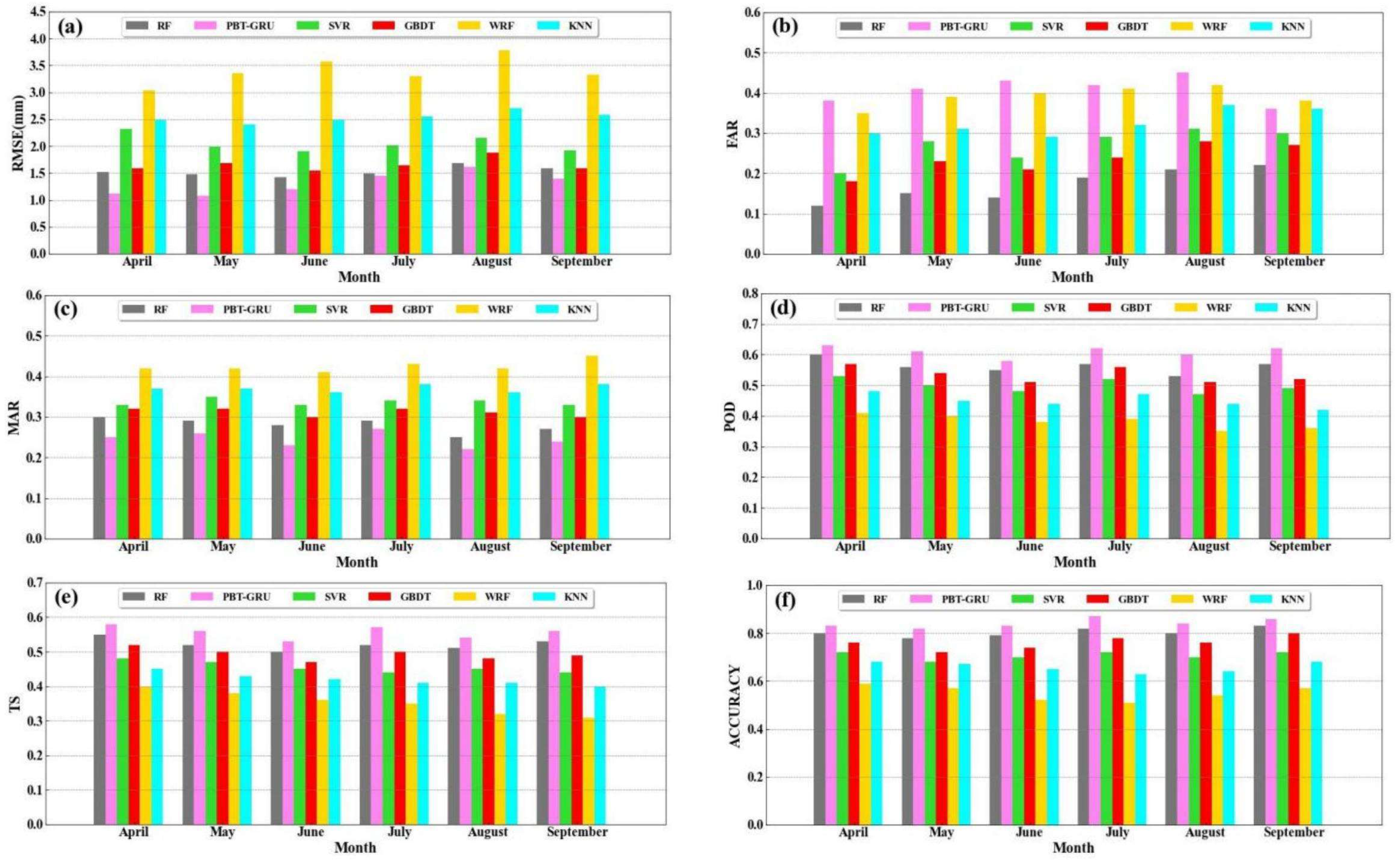

4.1. Comparison with Other ML Methods

4.2. Individual Case Forecast Evaluation

4.2.1. Spatial Distribution

4.2.2. Temporal Variations

4.3. Stability Analysis of the Proposed Models

5. Summary

- (1) The sample-balancing experiments showed that with a 1:1 ratio of positive to negative samples, accuracy (0.8718) and TS (0.7766) reached their highest values, while POD (0.8921) was slightly lower than with more positive samples. Increasing the proportion of positive samples (2:1 and 3:1) raised POD to 0.9468 and 0.9657 but slightly reduced accuracy and TS. Conversely, increasing the proportion of negative samples (1:2 and 1:3) caused POD, accuracy, and TS to decline significantly.

- (2) To optimize model performance, we used RF to evaluate the importance of the forecast features. Nine key features were selected: the radar reflectivity factor, 3 h precipitation, automatically observed minimum visibility, 6 h precipitation, manually observed visibility, 12 h precipitation, automatically observed 10 min average visibility, automatically observed 1 min average visibility, and maximum wind speed, which together account for a cumulative importance of 0.853. Restricting the model input to these features reduced it from 54 variables to nine, which improved computational efficiency.

- (3) Combining the advantages of PBT and GRU, we constructed a DL model named PBT-GRU, which takes the forecast features in the first 72 h as input so that the evolution and characteristics of the weather system are taken into account. The experimental results showed that the RMSE of PBT-GRU was only 1.12 mm, a reduction of 51.72%, 58.36%, 37.43%, and 26.32% relative to SVM, KNN, GBDT, and RF, respectively. The and r of the PBT-GRU, RF, SVM, GBDT and KNN were 1.02 and 0.99, 1.12 and 0.98, 1.24 and 0.95, 1.15 and 0.97, 1.26 and 0.93, respectively. Considering accuracy, TS, RMSE, and r together, the PBT-GRU model performed best, and its correction effect was significantly better than that of the other ML methods. The model could be applied to forecasting in private industry, providing a platform and technical support for future weather forecasting and early-warning services.
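The comparison above relies on RMSE, the correlation coefficient r, and the percentage reduction in RMSE. A minimal sketch of these three quantities follows, assuming the usual definitions (the paper's own formulas are not reproduced in this excerpt) and reading "reduced by X% compared with SVM" as (RMSE of SVM − RMSE of PBT-GRU)/RMSE of SVM × 100. The function names and toy values are illustrative, not the paper's data.

```python
import math


def rmse(pred, obs):
    """Root-mean-square error between paired forecasts and observations."""
    return math.sqrt(sum((p - o) ** 2 for p, o in zip(pred, obs)) / len(obs))


def pearson_r(pred, obs):
    """Pearson correlation coefficient between forecasts and observations."""
    n = len(obs)
    mean_p, mean_o = sum(pred) / n, sum(obs) / n
    cov = sum((p - mean_p) * (o - mean_o) for p, o in zip(pred, obs))
    var_p = sum((p - mean_p) ** 2 for p in pred)
    var_o = sum((o - mean_o) ** 2 for o in obs)
    return cov / math.sqrt(var_p * var_o)


def rmse_reduction_pct(model_rmse, baseline_rmse):
    """Percentage by which the model's RMSE is lower than the baseline's."""
    return 100.0 * (baseline_rmse - model_rmse) / baseline_rmse


# Toy values (mm), for illustration only.
obs = [0.0, 1.0, 2.0, 3.0]
pred = [0.0, 1.0, 2.0, 5.0]
print(rmse(pred, obs))  # 1.0
print(pearson_r(pred, obs))
print(rmse_reduction_pct(1.0, 2.0))  # 50.0
```

Under this reading, a baseline RMSE twice that of the corrected model corresponds to a 50% reduction.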
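Population Based Training (PBT) trains a population of models in parallel and periodically lets poorly performing members copy the weights and hyperparameters of better ones (exploit) before randomly perturbing those hyperparameters (explore). The sketch below shows that loop on a toy one-parameter problem. It is not the paper's PBT-GRU: the quadratic loss stands in for a GRU's validation loss, the learning rate is the only tuned hyperparameter, and the population size, truncation rule (bottom quarter replaced by top quarter) and perturbation factors (0.8 or 1.2) are all assumptions.

```python
import random


def loss(theta):
    """Toy stand-in for validation loss, minimised at theta = 3."""
    return (theta - 3.0) ** 2


def train(member, steps):
    """Gradient descent on the toy loss with the member's own learning rate."""
    for _ in range(steps):
        member["theta"] -= member["lr"] * 2.0 * (member["theta"] - 3.0)


def pbt(pop_size=8, rounds=10, steps_per_round=5, seed=0):
    rng = random.Random(seed)
    # Each member carries model weights (theta) and a hyperparameter (lr).
    population = [
        {"theta": rng.uniform(-10.0, 10.0), "lr": 10 ** rng.uniform(-4.0, -1.0)}
        for _ in range(pop_size)
    ]
    cutoff = max(1, pop_size // 4)
    history = []  # best loss observed after each training round
    for _ in range(rounds):
        for member in population:
            train(member, steps_per_round)
        # Rank members by current loss (lower is better).
        population.sort(key=lambda mbr: loss(mbr["theta"]))
        history.append(loss(population[0]["theta"]))
        for member in population[-cutoff:]:
            # Exploit: copy weights and hyperparameter from a top performer.
            donor = rng.choice(population[:cutoff])
            member["theta"], member["lr"] = donor["theta"], donor["lr"]
            # Explore: randomly perturb the copied hyperparameter.
            member["lr"] *= rng.choice([0.8, 1.2])
    return population[0], history


best, history = pbt()
print(f"best lr={best['lr']:.4g}, loss={loss(best['theta']):.3e}")
```

Because the top members are never overwritten, the best loss in the population cannot get worse from one round to the next; in a real setting each "train" call would run GRU epochs and "loss" would be a validation score.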

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Model configuration | Option |
|---|---|
| Nesting ratio | 1:3:3; d01 27 km, d02 9 km, d03 3 km |
| Vertical levels | 50 levels |
| Microphysics | WRF Single-Moment 6-class (WSM6) scheme |
| Planetary boundary layer | Mellor–Yamada–Janjić scheme |
| Longwave radiation | RRTM scheme |
| Shortwave radiation | RRTM scheme |
| Land surface | Noah land-surface model |
| Spin-up time | per 6 h |

| Category | Meteorological Variables |
|---|---|
| P | ‘Sea-level pressure (Pa)’, ‘3 h pressure change (Pa)’, ‘24 h pressure change (Pa)’, ‘Maximum pressure (Pa)’, ‘Time of maximum pressure’, ‘Minimum pressure (Pa)’, ‘Time of minimum pressure’, ‘Ground pressure (Pa)’ |
| VIS | ‘Visibility (m)’, ‘1 min average visibility (m)’, ‘10 min average visibility (m)’, ‘Minimum visibility (m)’, ‘Minimum visibility occurrence time’ |
| WD | ‘2 min average wind direction (°)’, ‘10 min average wind direction (°)’, ‘Direction of maximum wind speed (°)’, ‘Instantaneous wind direction (°)’ |
| WS | ‘2 min average wind speed (m/s)’, ‘10 min average wind speed (m/s)’, ‘Maximum wind speed (m/s)’, ‘Instantaneous wind speed (m/s)’ |
| T | ‘Air temperature (°C)’, ‘Tmax (°C)’, ‘Occurrence time of Tmax’, ‘Tmin (°C)’, ‘Occurrence time of Tmin’, ‘24 h temperature change (°C)’, ‘Maximum temperature in the last 24 h (°C)’, ‘Minimum temperature in the last 24 h (°C)’, ‘5 cm ground temperature (°C)’, ‘10 cm ground temperature (°C)’, ‘15 cm ground temperature (°C)’, ‘20 cm ground temperature (°C)’, ‘40 cm ground temperature (°C)’, ‘80 cm ground temperature (°C)’, ‘160 cm ground temperature (°C)’, ‘320 cm ground temperature (°C)’, ‘Ground temperature (°C)’, ‘Maximum ground temperature (°C)’, ‘Maximum ground temperature occurrence time’, ‘Minimum ground temperature (°C)’, ‘Minimum ground temperature occurrence time’, ‘Minimum ground temperature in the last 12 h (°C)’ |
| RH | ‘Relative humidity (%)’, ‘Minimum relative humidity (%)’, ‘Minimum relative humidity occurrence time’, ‘Water vapor pressure (Pa)’, ‘Dew point temperature (°C)’ |
| Pre | ‘Hourly precipitation (mm)’, ‘Precipitation in the last 3 h (mm)’, ‘Precipitation in the last 6 h (mm)’, ‘Precipitation in the last 12 h (mm)’, ‘Precipitation in the last 24 h (mm)’ |
| NWP | ‘Radar reflectivity factor’ |

| Precipitation Level | Precipitation Intensity (mm·h⁻¹) | Number of Samples | Sample Ratio (%) |
|---|---|---|---|
| No precipitation | [0, 0.1) | 72,645 | 95.25 |
| Weak precipitation | [0.1, 15) | 3563 | 4.67 |
| Moderate precipitation | [15, 30) | 40 | 0.05 |
| Heavy precipitation | [30, ∞) | 17 | 0.02 |
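The level boundaries and sample counts in the table above can be checked with a few lines of code. The sketch maps an hourly rate to its level and recomputes the Sample Ratio column from the counts. Treating every level at or above 0.1 mm·h⁻¹ as a positive (precipitation) sample is an assumption, although it is consistent with the 3620 positives in the resampling table. The function names are hypothetical.

```python
from collections import Counter

# Hourly intensity bounds (mm/h) from the table above; upper bounds are exclusive.
LEVELS = [
    ("No precipitation", 0.0, 0.1),
    ("Weak precipitation", 0.1, 15.0),
    ("Moderate precipitation", 15.0, 30.0),
    ("Heavy precipitation", 30.0, float("inf")),
]


def precipitation_level(rate):
    """Return the level name for an hourly precipitation rate in mm/h."""
    for name, lower, upper in LEVELS:
        if lower <= rate < upper:
            return name
    raise ValueError(f"invalid precipitation rate: {rate}")


def level_shares(counts):
    """Percentage of samples per level, rounded to two decimals."""
    total = sum(counts.values())
    return {name: round(100.0 * n / total, 2) for name, n in counts.items()}


# Levels for a few toy hourly rates (mm/h).
print(Counter(precipitation_level(r) for r in [0.0, 0.05, 2.3, 16.0, 31.5]))

# Sample counts reported in the table.
counts = {
    "No precipitation": 72645,
    "Weak precipitation": 3563,
    "Moderate precipitation": 40,
    "Heavy precipitation": 17,
}
shares = level_shares(counts)
# Assumption: all levels with rate >= 0.1 mm/h count as positive samples.
positives = sum(n for name, n in counts.items() if name != "No precipitation")
print(shares)
print(positives)  # 3620
```

The recomputed shares make the class imbalance explicit: fewer than 5% of the samples contain precipitation, which is what motivates the resampling experiments.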

| Var1 (t − 1) | ⋯ | Var54 (t − 1) | Var1 (t) | ⋯ | Var54 (t) |
|---|---|---|---|---|---|
| 0.774230 | ⋯ | 0.525610 | 0.204615 | ⋯ | 0.204606 |
| 0.778103 | ⋯ | 0.525630 | 0.204626 | ⋯ | 0.204604 |
| 0.775722 | ⋯ | 0.525688 | 0.204639 | ⋯ | 0.204587 |
| 0.775758 | ⋯ | 0.525694 | 0.204653 | ⋯ | 0.204589 |
| 0.772680 | ⋯ | 0.525671 | 0.204642 | ⋯ | 0.204600 |
| 0.774621 | ⋯ | 0.525621 | 0.204606 | ⋯ | 0.204617 |
| ⋮ | ⋮ | ⋮ | ⋮ | ⋮ | ⋮ |
| 0.777357 | ⋯ | 0.525613 | 0.204637 | ⋯ | 0.204681 |
| 0.776291 | ⋯ | 0.525610 | 0.204600 | ⋯ | 0.204626 |
| 0.776006 | ⋯ | 0.525660 | 0.204626 | ⋯ | 0.204571 |
| 0.777001 | ⋯ | 0.525660 | 0.204589 | ⋯ | 0.204606 |
| 0.774514 | ⋯ | 0.525619 | 0.204622 | ⋯ | 0.204639 |
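The table above places Var1 to Var54 at time t − 1 next to the same variables at time t, with all values between 0 and 1. That layout is consistent with min-max scaling followed by a one-step lag framing. Neither the scaling method nor the framing code is given in this excerpt, so the sketch below is an assumption that reproduces the column layout; the function names are hypothetical.

```python
def min_max_scale(rows):
    """Scale every column of a list of equal-length rows to [0, 1].

    Constant columns are mapped to 0.0 to avoid division by zero.
    """
    columns = list(zip(*rows))
    lows = [min(col) for col in columns]
    highs = [max(col) for col in columns]
    return [
        [(v - lo) / (hi - lo) if hi > lo else 0.0 for v, lo, hi in zip(row, lows, highs)]
        for row in rows
    ]


def to_supervised(rows, n_lags=1):
    """Join each time step with its n_lags predecessors into one flat row.

    Output columns run Var1..VarN at t - n_lags, ..., Var1..VarN at t,
    the same layout as the Var (t - 1) | Var (t) table when n_lags = 1.
    """
    return [
        [v for step in rows[t - n_lags : t + 1] for v in step]
        for t in range(n_lags, len(rows))
    ]


# Toy example: 4 hourly steps of 3 variables (the table has 54).
raw = [[0, 10, 5], [5, 20, 5], [10, 30, 5], [5, 10, 5]]
framed = to_supervised(min_max_scale(raw), n_lags=1)
print(framed[0])  # [0.0, 0.0, 0.0, 0.5, 0.5, 0.0]
```

In practice the minimum and maximum would be fitted on the training period only and then applied to the test period, so that no test information leaks into the scaling. With hourly data, a 72 h input window would mean stacking on the order of 72 lagged steps rather than one.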

| Experimental Category | Positive:Negative Ratio in the Training Set | Accuracy | POD | TS |
|---|---|---|---|---|
| Practical sampling test | 3620:72645 | 0.6114 | 0.6237 | 0.5982 |
| Resampling test 1 | 1:1 | 0.8718 | 0.8921 | 0.7766 |
| Resampling test 2 | 1:2 | 0.7023 | 0.6715 | 0.6434 |
| Resampling test 3 | 1:3 | 0.6938 | 0.6523 | 0.6235 |
| Resampling test 4 | 2:1 | 0.8546 | 0.9468 | 0.7512 |
| Resampling test 5 | 3:1 | 0.8549 | 0.9657 | 0.7567 |
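The resampling tests above change the positive:negative ratio of the training set, but this excerpt does not say which class was resampled or how. The sketch below assumes one plausible scheme: keep all positives and draw negatives at random (without replacement when enough are available, with replacement otherwise) until the requested ratio is met. The function names are hypothetical.

```python
import random


def target_negatives(n_pos, pos_parts, neg_parts):
    """Number of negatives needed for a pos_parts:neg_parts ratio, keeping all positives."""
    return round(n_pos * neg_parts / pos_parts)


def resample_to_ratio(positives, negatives, pos_parts, neg_parts, seed=0):
    """Return a shuffled training set with the requested positive:negative ratio.

    All positives are kept. Negatives are undersampled without replacement, or
    oversampled with replacement if more are needed than are available.
    """
    rng = random.Random(seed)
    n_neg = target_negatives(len(positives), pos_parts, neg_parts)
    if n_neg <= len(negatives):
        chosen = rng.sample(negatives, n_neg)
    else:
        chosen = rng.choices(negatives, k=n_neg)
    combined = list(positives) + chosen
    rng.shuffle(combined)
    return combined


# Negatives implied by each tested ratio for the 3620 positive samples.
for ratio in [(1, 1), (1, 2), (1, 3), (2, 1), (3, 1)]:
    print(ratio, target_negatives(3620, *ratio))

# Toy data: label 1 = precipitation, label 0 = no precipitation.
pos = [(i, 1) for i in range(10)]
neg = [(i, 0) for i in range(200)]
train_1to1 = resample_to_ratio(pos, neg, 1, 1)
```

Only the training set would be resampled in this scheme; the test set keeps its natural class balance so that accuracy, POD and TS reflect operational conditions.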

| Serial Number | Feature | Importance | Cumulative Importance |
|---|---|---|---|
| 1 | Radar reflectivity factor | 0.327 | 0.327 |
| 2 | 3 h precipitation | 0.213 | 0.540 |
| 3 | Automatically observed minimum visibility | 0.109 | 0.649 |
| 4 | 6 h precipitation | 0.063 | 0.712 |
| 5 | Manually observed visibility | 0.043 | 0.755 |
| 6 | 12 h precipitation | 0.041 | 0.796 |
| 7 | Automatically observed 10 min average horizontal visibility | 0.026 | 0.822 |
| 8 | Automatically observed 1 min average horizontal visibility | 0.017 | 0.839 |
| 9 | Extreme wind speed | 0.014 | 0.853 |
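The table reports that the nine retained features reach a cumulative importance of 0.853, but this excerpt does not state the stopping rule. The sketch below ranks features by importance and keeps them until a cumulative threshold is reached. The 0.85 threshold is an assumption chosen because it reproduces the nine features listed. In scikit-learn, a fitted `RandomForestRegressor` or `RandomForestClassifier` exposes impurity-based scores as `feature_importances_`, so `dict(zip(feature_names, model.feature_importances_))` could supply the input (`feature_names` and `model` are hypothetical names).

```python
def select_by_cumulative_importance(importances, threshold):
    """Keep the most important features until their summed importance reaches threshold.

    `importances` maps feature name -> importance score (for example, a random
    forest's impurity-based importances, which sum to 1 over all features).
    Returns the selected names (most important first) and their cumulative importance.
    """
    ranked = sorted(importances.items(), key=lambda item: item[1], reverse=True)
    selected, cumulative = [], 0.0
    for name, score in ranked:
        if cumulative >= threshold:
            break
        selected.append(name)
        cumulative += score
    return selected, cumulative


# The nine importances listed in the table (the other 45 variables are not shown).
table = {
    "Radar reflectivity factor": 0.327,
    "3 h precipitation": 0.213,
    "Automatically observed minimum visibility": 0.109,
    "6 h precipitation": 0.063,
    "Manually observed visibility": 0.043,
    "12 h precipitation": 0.041,
    "Automatically observed 10 min average horizontal visibility": 0.026,
    "Automatically observed 1 min average horizontal visibility": 0.017,
    "Extreme wind speed": 0.014,
}
features, total = select_by_cumulative_importance(table, threshold=0.85)
print(len(features), round(total, 3))  # 9 0.853
```

A cumulative cutoff adapts to how concentrated the importances are, whereas a fixed top-k rule would keep the same number of features regardless of how much importance they carry.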

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Zhang, J.; Gao, Z.; Li, Y. Deep-Learning Correction Methods for Weather Research and Forecasting (WRF) Model Precipitation Forecasting: A Case Study over Zhengzhou, China. Atmosphere 2024, 15, 631. https://doi.org/10.3390/atmos15060631
