Topological Data Analysis for Eye Fundus Image Quality Assessment

Abstract

1. Introduction

1.1. Public Health Dimension

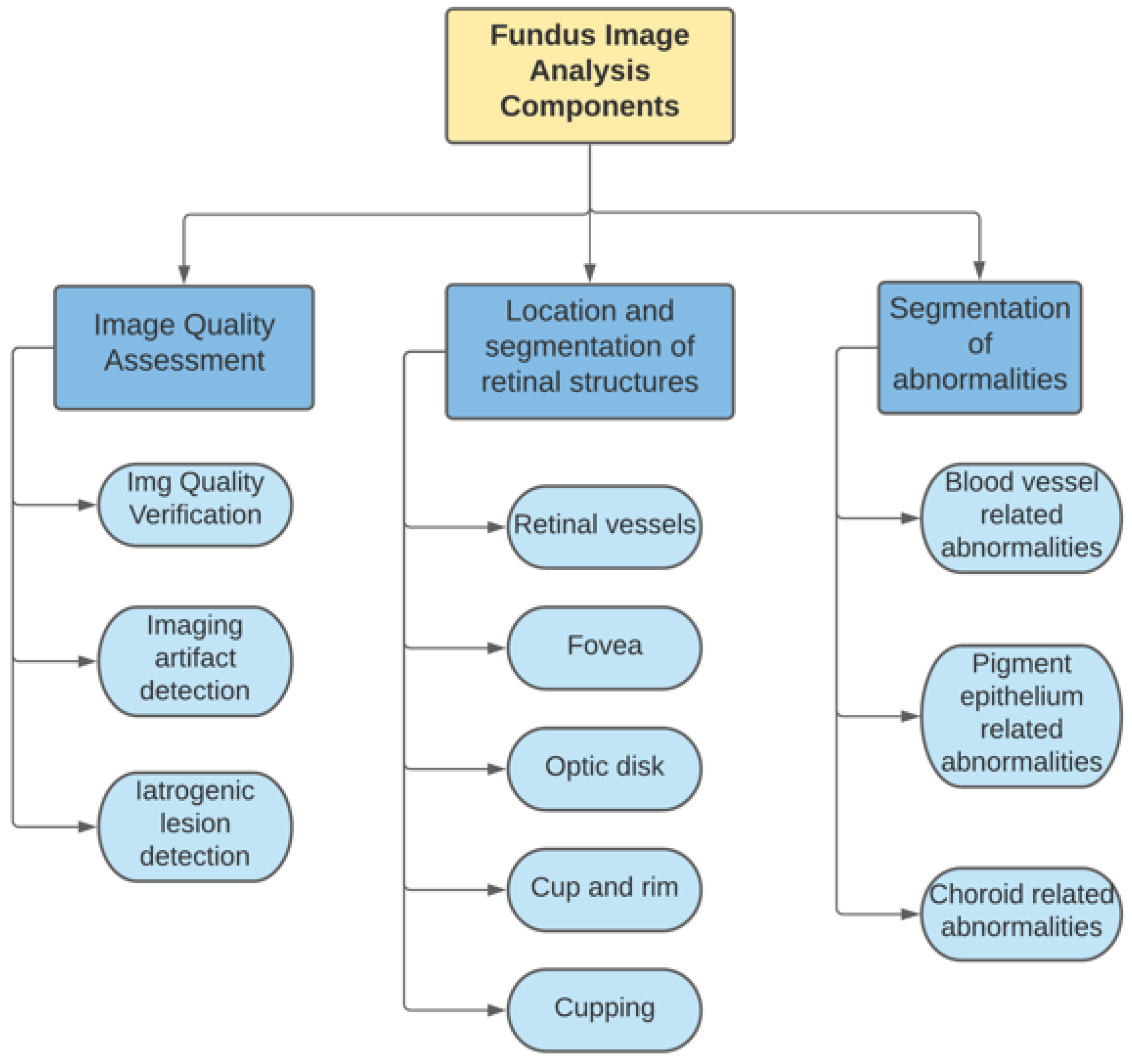

1.2. Fundus Image Analysis

- Image quality parameters.

- Based on segmentation.

- Deep learning.

1.3. Topological Data Analysis

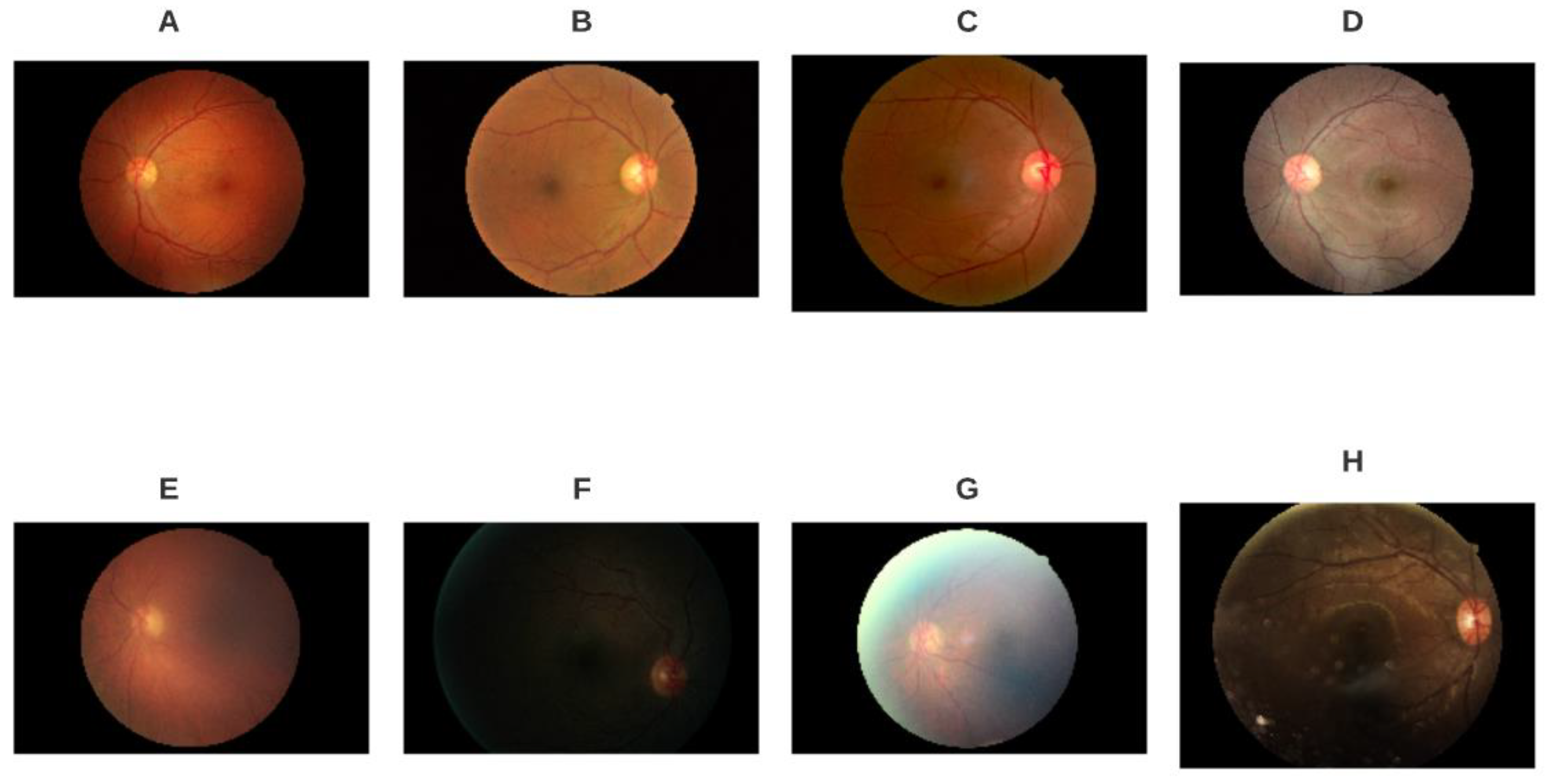

2. Materials and Methods

3. Methods

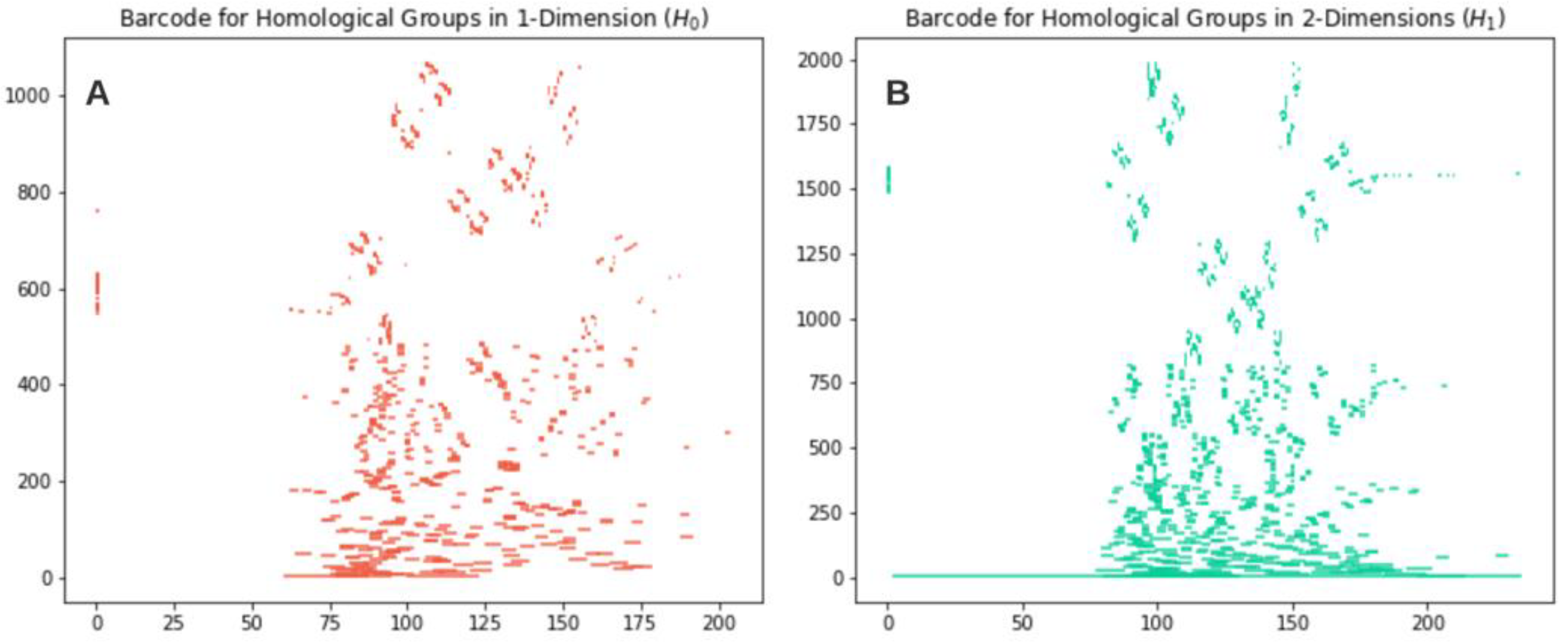

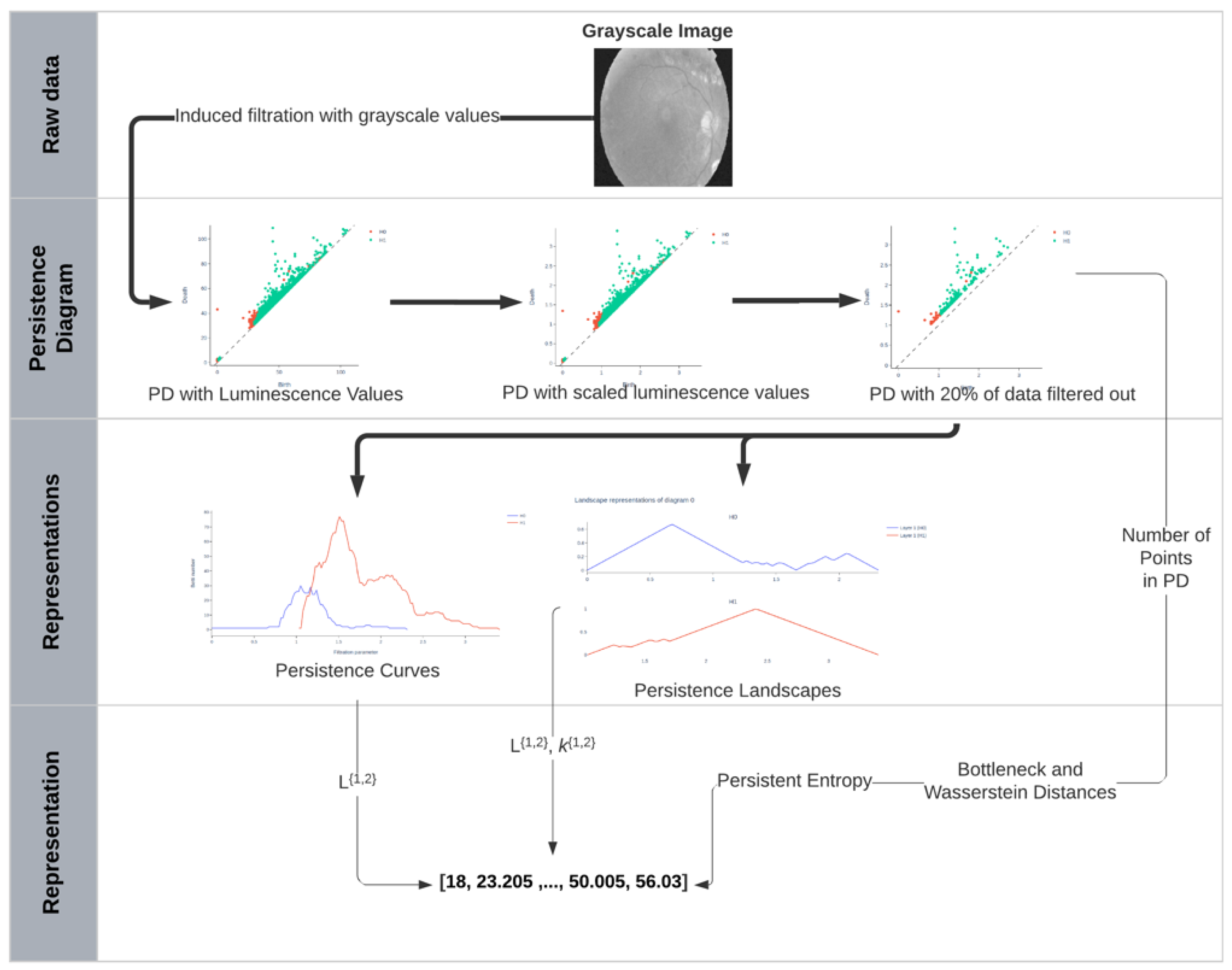

3.1. Topological Interpretation of Digital Images

3.1.1. Cubical Complexes for the Representation of Digital Medical Images

3.1.2. Cubical Filtrations

3.2. Topological Indicators Derived from Digital Images

3.2.1. Persistence Diagrams

3.2.2. Persistent Entropy of Persistence Diagrams
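
As a minimal sketch of this indicator, persistent entropy can be computed as the Shannon entropy of the normalized lifetimes (death minus birth) of the points in a persistence diagram. The function name `persistent_entropy`, the toy diagram, the use of the natural logarithm, and the choice to skip points with infinite death are assumptions made for illustration, not details taken from the study.

```python
import math


def persistent_entropy(diagram):
    """Shannon entropy of normalized lifetimes in a persistence diagram.

    `diagram` is an iterable of (birth, death) pairs. Points with a
    non-finite death or a non-positive lifetime are skipped (assumption).
    """
    lifetimes = [
        death - birth
        for birth, death in diagram
        if math.isfinite(death) and death > birth
    ]
    total = sum(lifetimes)
    if total == 0:
        return 0.0
    # p_i = l_i / L, entropy = -sum(p_i * log(p_i)), natural log assumed
    return -sum((l / total) * math.log(l / total) for l in lifetimes)


# Hypothetical toy diagram: four intervals with equal lifetimes
toy_diagram = [(0.0, 1.0), (1.0, 2.0), (2.0, 3.0), (0.0, 1.0)]
entropy = persistent_entropy(toy_diagram)
```

With equal lifetimes the entropy reaches its maximum for that number of points, the logarithm of the point count, while a diagram dominated by one long interval yields a value close to zero.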

3.2.3. Bottleneck Distance

3.2.4. p-Wasserstein Distance

3.2.5. Persistence Landscape

3.2.6. Betti Curves
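
A Betti curve counts, for each filtration value, how many topological features are alive at that value. The sketch below illustrates this for one homology dimension; the function name `betti_curve`, the half-open convention `birth <= t < death`, and the toy diagram are assumptions for illustration only.

```python
def betti_curve(diagram, thresholds):
    """Count the intervals alive at each threshold.

    `diagram` is a list of (birth, death) pairs for one homology
    dimension. An interval is counted at t when birth <= t < death
    (half-open convention, assumed here).
    """
    return [
        sum(1 for birth, death in diagram if birth <= t < death)
        for t in thresholds
    ]


# Hypothetical toy diagram and sampling grid
toy_diagram = [(0.0, 3.0), (1.0, 2.0), (1.5, 4.0)]
thresholds = [0.0, 1.0, 1.5, 2.0, 3.0, 4.0]
curve = betti_curve(toy_diagram, thresholds)
```

Sampling the curve on a fixed grid of thresholds gives a fixed-length vector, which is convenient as classifier input.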

3.2.7. Gaussian Kernel

3.2.8. Number of Points in Persistence Diagram

3.3. Machine Learning Classifiers

- Support vector machine;

- Classification tree;

- k-nearest neighbors;

- Random forest;

- Logistic regression;

- Multilayered perceptron.

3.4. Metrics for Evaluation of Performance of Classification Algorithms

- True positives (TP): entities classified by the algorithm as true to the label evaluated when the reference is also true.

- True negatives (TN): entities classified by the algorithm as false to the label evaluated when the reference is also false.

- False positives (FP): entities classified by the algorithm as true to the label evaluated when the reference is false, also known as Type I Error.

- False negatives (FN): entities classified by the algorithm as false to the label evaluated when the reference is true, also known as Type II Error.

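- Accuracy: the fraction of all entities classified correctly, $\frac{TP + TN}{TP + TN + FP + FN}$.

- Precision: the fraction of entities classified as true that are true in the reference, $\frac{TP}{TP + FP}$.

- Recall: the fraction of entities that are true in the reference and classified as true, $\frac{TP}{TP + FN}$.

- F1-score: the harmonic mean of precision and recall, $2 \cdot \frac{\mathrm{Precision} \cdot \mathrm{Recall}}{\mathrm{Precision} + \mathrm{Recall}}$.

- Receiver-operating characteristic (ROC) curve: a plot of the true positive rate against the false positive rate as the decision threshold varies.

- Area under the curve (AUC): the area under the ROC curve; 1 corresponds to perfect separation of the classes and 0.5 to chance-level performance.

- Matthews correlation coefficient (MCC): $\frac{TP \cdot TN - FP \cdot FN}{\sqrt{(TP + FP)(TP + FN)(TN + FP)(TN + FN)}}$.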
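
The count-based metrics in this list can be sketched in a few lines of Python. This is an illustrative example using the standard definitions, not the authors' implementation; the function name `classification_metrics` and the counts passed to it are hypothetical.

```python
import math


def classification_metrics(tp, tn, fp, fn):
    """Binary-classification metrics from confusion-matrix counts."""
    total = tp + tn + fp + fn
    accuracy = (tp + tn) / total
    # Guard against empty denominators by falling back to 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    pr_sum = precision + recall
    f1 = 2 * precision * recall / pr_sum if pr_sum else 0.0
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {
        "accuracy": accuracy,
        "precision": precision,
        "recall": recall,
        "f1": f1,
        "mcc": mcc,
    }


# Hypothetical counts, chosen only to exercise the formulas
metrics = classification_metrics(tp=90, tn=80, fp=20, fn=10)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

Unlike accuracy and F1-score, MCC uses all four counts symmetrically, so it stays informative when the two classes are imbalanced.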

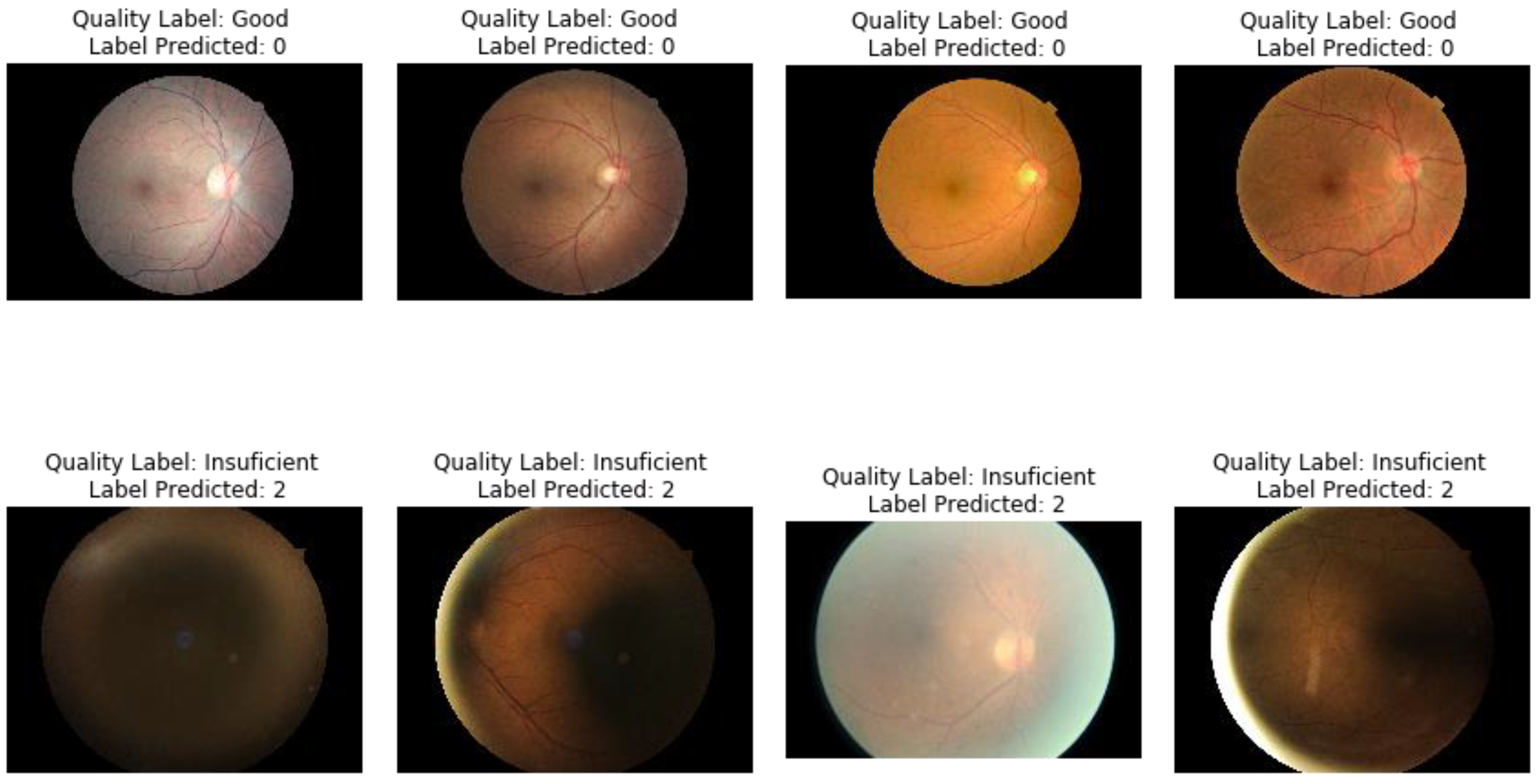

4. Results

5. Discussion

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Variables 1–6 | Variables 7–12 | Variables 13–18 | Variables 19–24 | Variables 25–30 |
|---|---|---|---|---|
| Persistence entropy | 2-Wasserstein distance | Persistence landscape | Betti curve | Gaussian kernel |
| Persistence entropy | 2-Wasserstein distance | Persistence landscape | Betti curve | Gaussian kernel |
| Bottleneck distance | Persistence landscape | Persistence landscape | Gaussian kernel | Gaussian kernel |
| Bottleneck distance | Persistence landscape | Persistence landscape | Gaussian kernel | Gaussian kernel |
| 1-Wasserstein distance | Persistence landscape | Betti curve | Gaussian kernel | Number of points in diagram |
| 1-Wasserstein distance | Persistence landscape | Betti curve | Gaussian kernel | Number of points in diagram |

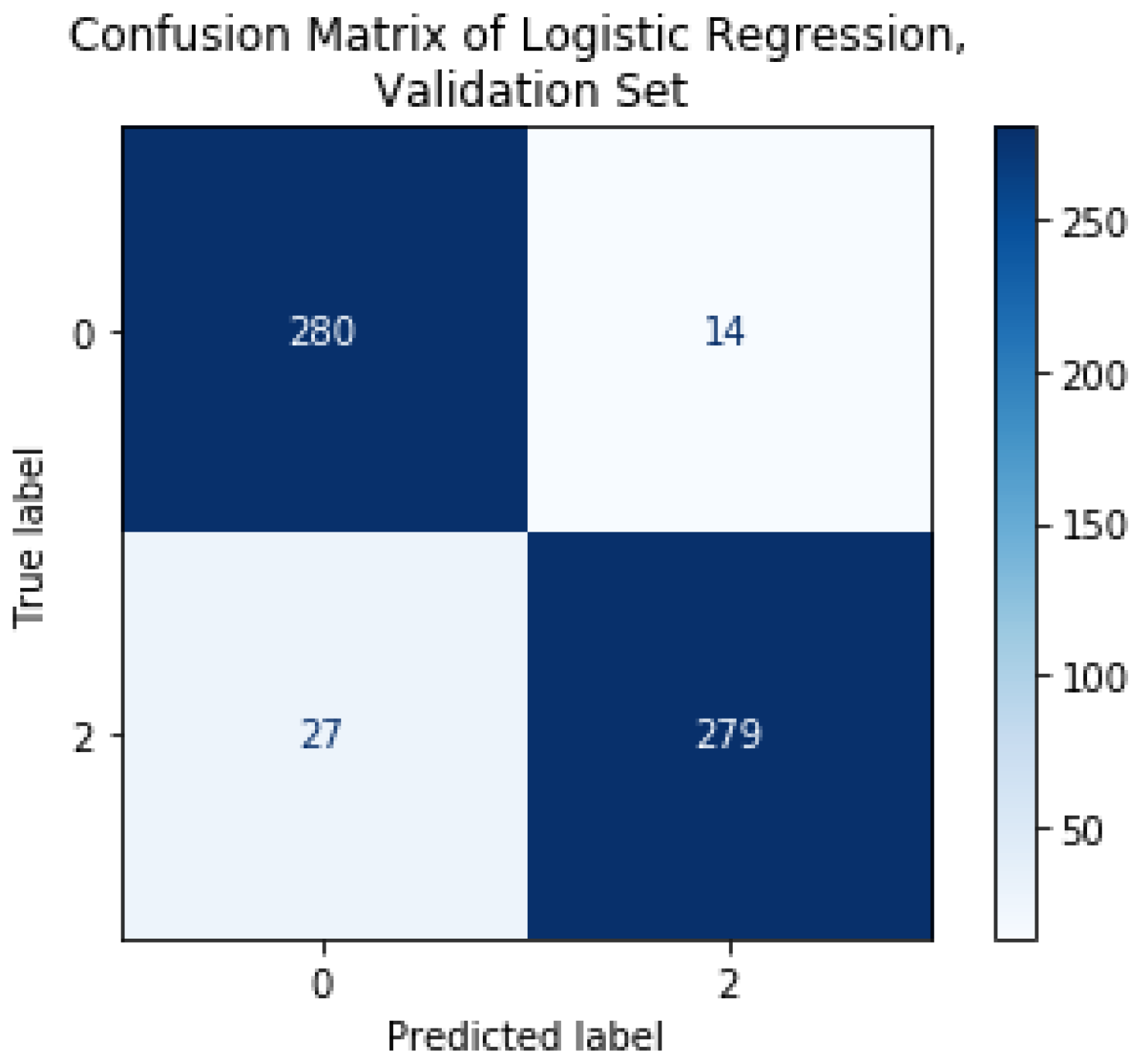

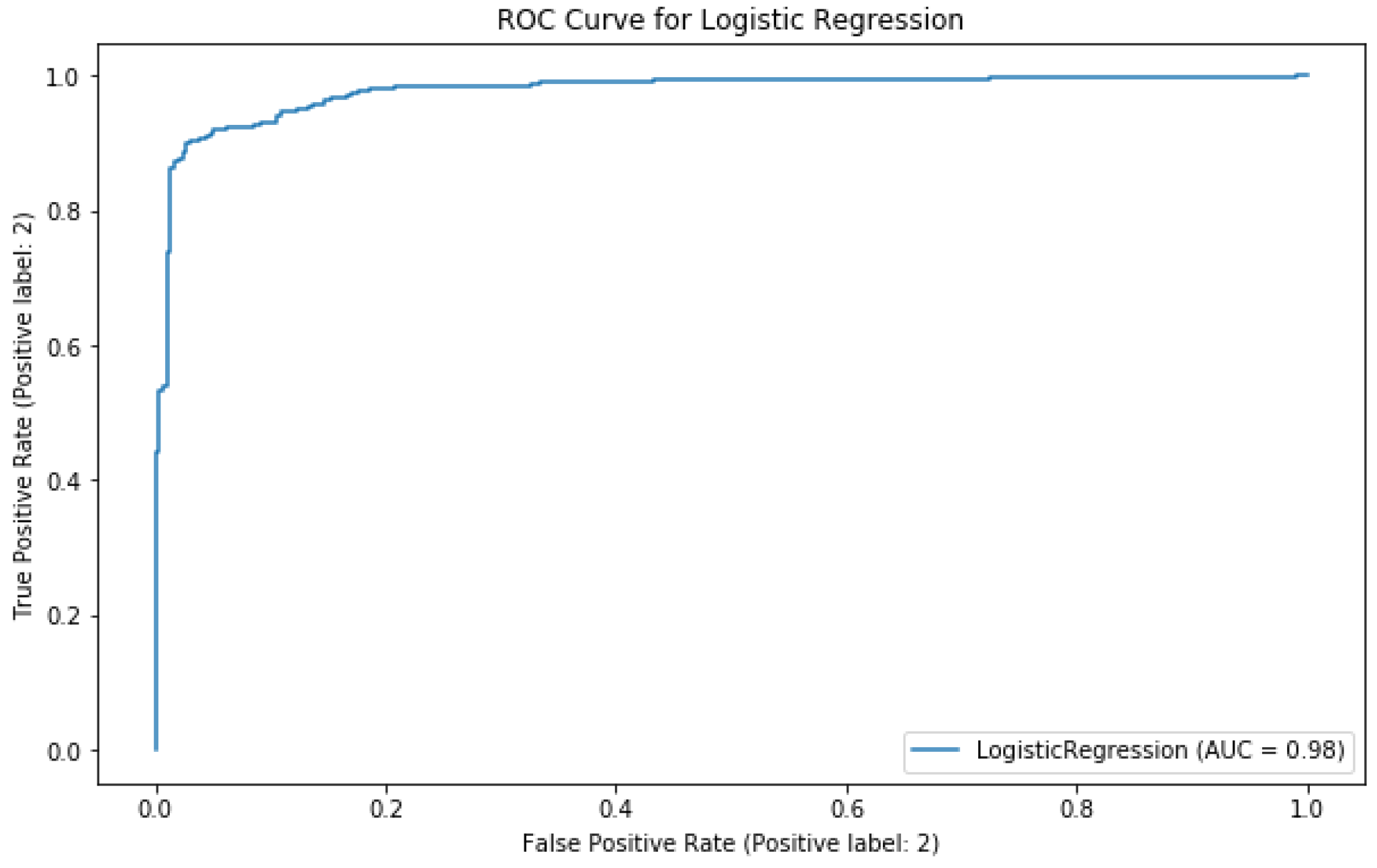

| Model | AUC | Classification Accuracy (CA) | Precision | Recall | F1-Score |
|---|---|---|---|---|---|
| Support Vector Machine (SVM) | 0.845 | 0.749 | 0.761 | 0.749 | 0.746 |
| Decision Tree | 0.870 | 0.894 | 0.895 | 0.894 | 0.894 |
| k-Nearest Neighbors (k-NN) | 0.941 | 0.898 | 0.900 | 0.898 | 0.898 |
| Random Forest (RFC) | 0.960 | 0.911 | 0.912 | 0.911 | 0.911 |
| Logistic Regression (LoGit) | 0.974 | 0.925 | 0.925 | 0.925 | 0.925 |
| Multilayer Perceptron (MLP) | 0.981 | 0.935 | 0.935 | 0.935 | 0.935 |

| Algorithm | Precision Training Set | Precision Testing Set | Recall Training Set | Recall Testing Set | F1-Score Training Set | F1-Score Testing Set |
|---|---|---|---|---|---|---|
| SVM | 0.961 | 0.957 | 0.961 | 0.957 | 0.961 | 0.957 |
| MLP | 0.910 | 0.930 | 0.910 | 0.930 | 0.910 | 0.930 |
| LoGit | 0.989 | 0.987 | 0.989 | 0.987 | 0.989 | 0.987 |

| Parameter | Value |
|---|---|
| Tolerance | {$1 \times 10^{-4}$, $1 \times 10^{-6}$, $1 \times 10^{-8}$} |
| C | {50,000, 100,000, 150,000} |
| Solver | {lbfgs, saga, liblinear} |
| Maximum iterations | {10,000, 50,000, 100,000} |
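
The parameter table above describes an exhaustive grid. As a rough sketch, the grid can be enumerated with the standard library to see how many candidate configurations it contains. The key names `tol`, `C`, `solver`, and `max_iter` are an assumption: they follow scikit-learn's `LogisticRegression` naming, which the solver values suggest but the table does not state.

```python
from itertools import product

# Values copied from the parameter table; key names are assumed
param_grid = {
    "tol": [1e-4, 1e-6, 1e-8],
    "C": [50_000, 100_000, 150_000],
    "solver": ["lbfgs", "saga", "liblinear"],
    "max_iter": [10_000, 50_000, 100_000],
}

# Cartesian product of all parameter values, one dict per configuration
combinations = [
    dict(zip(param_grid, values))
    for values in product(*param_grid.values())
]

print(len(combinations))  # 3 * 3 * 3 * 3 configurations
print(combinations[0])
```

Under the same naming assumption, a dictionary of this shape could be passed as the `param_grid` argument of scikit-learn's `GridSearchCV`, which fits and scores every configuration with cross-validation.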

| Label | Precision | Recall | F1-Score | Classification Accuracy | Count |
|---|---|---|---|---|---|
| good | 0.912 | 0.952 | 0.932 | 0.932 | 294 |
| bad | 0.952 | 0.912 | 0.932 | 0.932 | 306 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Avilés-Rodríguez, G.J.; Nieto-Hipólito, J.I.; Cosío-León, M.d.l.Á.; Romo-Cárdenas, G.S.; Sánchez-López, J.d.D.; Radilla-Chávez, P.; Vázquez-Briseño, M. Topological Data Analysis for Eye Fundus Image Quality Assessment. Diagnostics 2021, 11, 1322. https://doi.org/10.3390/diagnostics11081322
