Abstract

Background: With the availability of extensive health data, artificial intelligence (AI) has an enormous capacity to accelerate medical research and transform healthcare. AI is set to reform the practice of medicine in the near future. Despite its substantial advantages in the medical field, the ethical and legal framework for the application of AI in healthcare remains inconsistent. Although researchers in various medical disciplines have investigated the ethical implications of AI in the healthcare setting, the literature lacks a holistic approach. Objective: The purpose of this review is to ascertain the ethical concerns of AI applications in healthcare, to identify knowledge gaps and to provide recommendations for an ethical and legal framework. Methodology: The electronic databases PubMed and Google Scholar were searched extensively using a search strategy aligned with the purpose of this review. The retrieved articles were then screened against predefined inclusion and exclusion criteria. Results: The search yielded a total of 1238 articles, of which 16 were found eligible for this review. Selection was based strictly on the inclusion and exclusion criteria described in the manuscript. Conclusion: AI is an exceptionally powerful technology with the prospect of advancing medical practice in the years to come. Nevertheless, its application in healthcare raises a large number of ethical and legal problems involving many stakeholders. Thus, a multifaceted approach involving policymakers, developers, healthcare providers and patients is crucial to arrive at feasible solutions for mitigating the legal and ethical problems pertaining to AI in healthcare.

1. Introduction

1.1. Background and Rationale

Artificial Intelligence (AI) can be defined as “the part of digital technology that indicates the utility of coded computer technology routines with specific instructions to carry out tasks for which generally a human brain is considered necessary” [1]. The emergence of AI is one of the most profound advancements in the history of medicine, with applications in all medical specialties [2]. AI holds great promise for timely, precise and effective preventive and curative interventions [3]. Its principal classes of application include diagnosis and treatment strategies, patient adherence to drug regimens and hospital administration [4]. AI applications in hospitals help to relieve the pressure on healthcare professionals, are cost-effective and, in the long run, improve the quality of healthcare services [5]. AI can be deployed to structure existing medical data by transforming colloquial language into clinical narratives, scrutinizing patient data, recognizing diagnostic similarities between clinical cases and substantiating medical hypotheses [6]. Nonetheless, the application of AI in healthcare delivery gives rise to transnational ethical, legal, social and commercial concerns. The use of digital technologies and software in healthcare facilities has created challenging circumstances for software developers, policymakers and healthcare providers, and the ethical concerns raised by AI impede the progress of its use in healthcare [7].

The use of AI in healthcare takes advantage of the exceptional volume of health data and computing power available to inform evidence-based decision-making. This gives rise to new ethical dilemmas concerning the confidentiality of the data collected, data privacy, the transparency of data use, accountability in data governance and probable inequities in AI deployment [8]. Most AI algorithms function inside a “black box”, in which the course of analysis is not transparent. AI is subject to basic ethical complications related to autonomy, beneficence, justice, non-maleficence and respect for knowledge. In the healthcare system, an individual’s privacy should be respected, as required by patient autonomy or self-governance, personal identity and well-being. Hence, it is ethically vital to respect patients’ privacy and maintain confidentiality [9]. AI and machine learning systems can exhibit algorithmic bias, predicting the probable diagnosis of a disease on the basis of sex or race when these might not be the key causative factors [4]. One feature that differentiates medicine from other service sectors is the unconditional trust patients place in healthcare professionals, which is reinforced by the placebo effect. In AI-assisted healthcare, patients have to build a relationship with an artificial system rather than a human, which significantly affects treatment outcomes [7].

Technological and scientific advancements and the ubiquity of digital technology have facilitated the establishment of unified trust in the digital space. The fundamental purpose of modern digital legal regulation of AI technology in medical care is to prevent public health risks from emerging and to protect the confidentiality of patients’ private data. In February 2017, the European Parliament adopted a resolution setting out conditions for the use of AI technology, including in healthcare: a robot should not cause harm to its users, should obey human commands and should ensure its own safety [10]. The act proposed by the European Commission mandates documentation of AI technology, including data sheets with information on the training modality and the implementation process, along with their scope and characteristics [11]. In India, there are no concrete laws pertaining to AI in healthcare. The Digital Information Security in Healthcare Act (DISHA), if enacted, would cover regulation in this domain [12].

In 2015, all member states of the United Nations adopted the Sustainable Development Goals, which aim, among other things, to achieve good health and well-being and to reduce inequalities [13]. While the goals are well grounded in the ethical principles of equality, equity, universal solidarity and a commitment to leave no one behind, the evolution of AI has the potential to further aggravate health inequities. With the rapid spread of AI technologies in medicine, it is urgent to recognize and address their ethical implications comprehensively, so as to realize the benefits of AI while mitigating its potential harms [14,15]. A broad view of every plausible ethical problem associated with AI in healthcare facilities is required. To that end, we performed a scoping review of scholarly studies published in peer-reviewed journals to determine the ethical concerns raised by the application of AI in healthcare.

1.2. Objectives

This study aims to scrutinize the ethical complications associated with the application of AI in healthcare. The scoping review also puts forward recommendations and guidelines for an ethical and legal framework.

2. Methodology

2.1. Search Strategy

This scoping review draws on scholarly articles from the PubMed and Google Scholar databases that describe the ethical issues related to AI in healthcare. We used the five-stage methodological framework of Arksey and O’Malley, comprising the following steps: 1. Defining the research topic. 2. Identifying relevant research papers. 3. Selecting the studies. 4. Charting the data. 5. Collating, reporting and summarizing the findings. The structure of the scoping review follows the PRISMA extension for scoping reviews (PRISMA-ScR) [16].

2.2. Identification of Relevant Studies

An extensive search of articles was conducted over a period of 4 days, from 24 September 2022 to 27 September 2022. Key search terms such as ‘artificial intelligence’, ‘machine learning’, ‘deep learning’, ‘ethics’, ‘medical ethics’, ‘ethical complications’, ‘autonomy’, ‘artificial intelligence in healthcare’, ‘legal and ethical guidelines’ and ‘application in healthcare’ were used as search filters. The inclusion and exclusion criteria are as follows:

2.3. Inclusion Criteria

- Articles reporting on all the key search topics (AI, health, ethics);

- Studies dealing with ethics in the application of artificial intelligence exclusively in healthcare/medicine;

- Qualitative, quantitative and mixed-methods studies and literature reviews published in indexed, peer-reviewed journals, as well as grey literature;

- Articles published in the English language.

2.4. Exclusion Criteria

- Studies elucidating ethical challenges in the application of AI in disciplines other than medicine;

- Manuscripts written in languages other than English.

2.5. Selection of Sources of Evidence

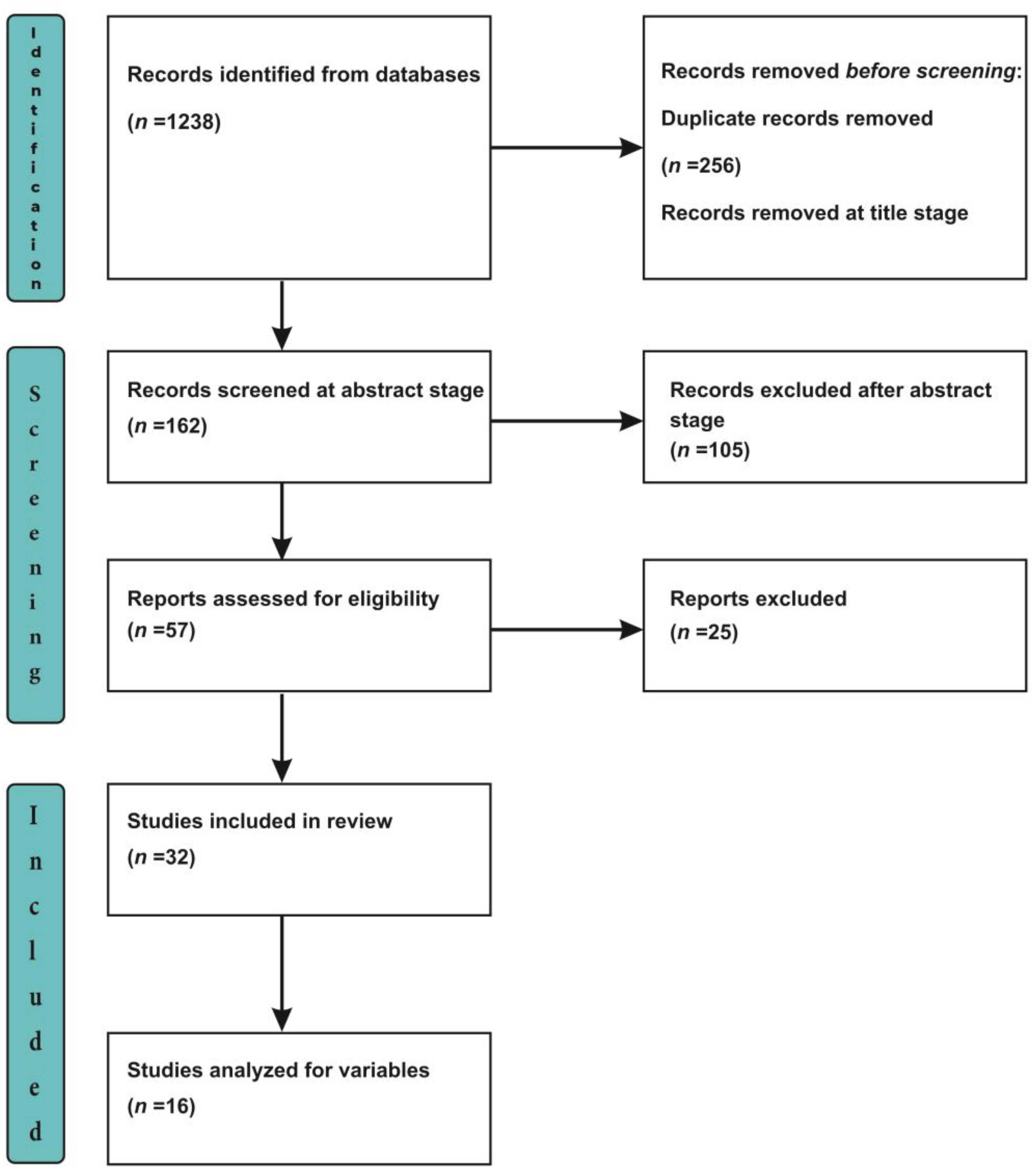

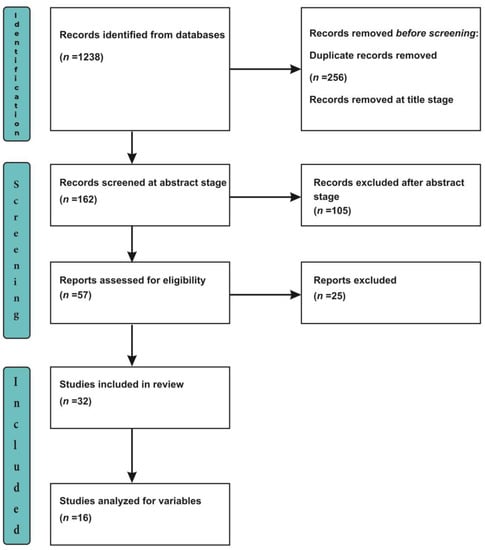

Following the Preferred Reporting Items for Systematic Reviews and Meta-Analyses extension for Scoping Reviews (PRISMA-ScR) checklist [16], our systematic database search retrieved 1238 articles. Of these, 256 were removed as duplicate records, and 820 were excluded at the title-screening stage because they were not relevant to the objectives of the present study. The remaining 162 articles underwent abstract screening, at which 105 were excluded for failing to meet the inclusion criteria, leaving 57 articles for further screening. Full texts could not be located for 25 of these manuscripts, so 32 articles proceeded to full-text screening. Finally, 16 reviews were included in this scoping review, and 16 manuscripts (books/book chapters, comments, editorials and letters) were excluded because they did not meet the inclusion criteria (Figure 1).
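As a check on the arithmetic of the screening flow described above, the following minimal Python sketch re-derives the number of records remaining after each stage from the reported exclusion counts. The function name `prisma_flow` and the stage labels are illustrative choices, not part of the review's method; only the counts are taken from the text.

```python
"""Re-derive the PRISMA-ScR flow counts reported for this review.

Only the stage counts come from the text; the function and stage names
are illustrative.
"""


def prisma_flow(identified, removed_per_stage):
    """Return (stage, records remaining) after each screening stage.

    `removed_per_stage` is an ordered list of (stage_name, n_removed) pairs.
    """
    remaining = identified
    flow = [("identified", remaining)]
    for stage, n_removed in removed_per_stage:
        # Guard against reporting errors that would leave a negative count.
        if n_removed > remaining:
            raise ValueError(f"{stage}: cannot remove {n_removed} of {remaining}")
        remaining -= n_removed
        flow.append((stage, remaining))
    return flow


# Exclusions at each stage, as reported in Section 2.5.
REMOVED = [
    ("duplicates removed", 256),
    ("title screening", 820),
    ("abstract screening", 105),
    ("full text not located", 25),
    ("full-text eligibility", 16),
]

if __name__ == "__main__":
    for stage, n in prisma_flow(1238, REMOVED):
        print(f"{stage:<24}{n:>5}")
```

Run as a script, it prints the sequence 1238, 982, 162, 57, 32 and 16, so each stage total is consistent with the exclusions reported.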

Figure 1.

PRISMA Flowchart.

2.6. Data Charting

The data variables charted from the articles analyzed for this study are the year of publication, type of review, aim, time frame of the search strategy, keywords used to retrieve articles, key ethical issues discussed, major findings, and conclusions and recommendations.
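The charting variables listed above can be represented as one structured record per study. The Python sketch below is a hypothetical illustration, not the authors' actual extraction form: the class `ChartedStudy`, its field names and the helper `issue_frequencies` are assumptions, and the demo records contain placeholder values rather than data from the reviewed studies. Counting each issue once per study is one plausible way to obtain frequencies such as those visualized in Figure 4.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ChartedStudy:
    """One row of a data-charting form (hypothetical field names)."""
    study_id: str
    year_of_publication: int
    review_type: str
    aim: str
    search_time_frame: Optional[str] = None  # None when a study does not report it
    keywords: List[str] = field(default_factory=list)
    ethical_issues: List[str] = field(default_factory=list)
    major_findings: str = ""
    conclusions_and_recommendations: str = ""


def issue_frequencies(studies: List[ChartedStudy]) -> Counter:
    """Count how many studies raise each ethical issue (each issue counted once per study)."""
    return Counter(issue for study in studies for issue in set(study.ethical_issues))


if __name__ == "__main__":
    # Placeholder records for illustration only; not taken from the reviewed studies.
    demo = [
        ChartedStudy("DEMO-1", 2020, "scoping review", "placeholder aim",
                     ethical_issues=["privacy", "bias", "privacy"]),
        ChartedStudy("DEMO-2", 2021, "systematic review", "placeholder aim",
                     ethical_issues=["privacy"]),
    ]
    print(issue_frequencies(demo))  # Counter({'privacy': 2, 'bias': 1})
```

Using a `set` per study means an issue mentioned repeatedly in one article still contributes only once to its frequency.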

3. Results

3.1. Search Results

A search of the PubMed and Google Scholar electronic databases using appropriate keywords yielded 1238 articles; after the removal of duplicates and screening against the inclusion and exclusion criteria, 16 articles were selected for the present review (Figure 1).

3.2. List of Selected Studies

The records of studies analyzed for this scoping review are listed in Table 1, along with the study ID that will be used for further references in this manuscript.

Table 1.

Depicts the titles and first authors along with the study ID of the articles reviewed.

3.3. Study Characteristics

The selected manuscripts were analyzed thoroughly, and the relevant variables were extracted for this scoping review (Table 2 and Table 3). The data were tabulated under the following columns, which cover all the characteristic variables of the included studies: year of publication, study characteristics, aim, time period of study, keywords used for data retrieval, ethical issues discussed, major findings, and conclusions and recommendations.

Table 2.

Characteristics of data extracted for analysis.

Table 3.

Characteristics of data extracted for analysis.

3.4. Analysis of Study Characteristics

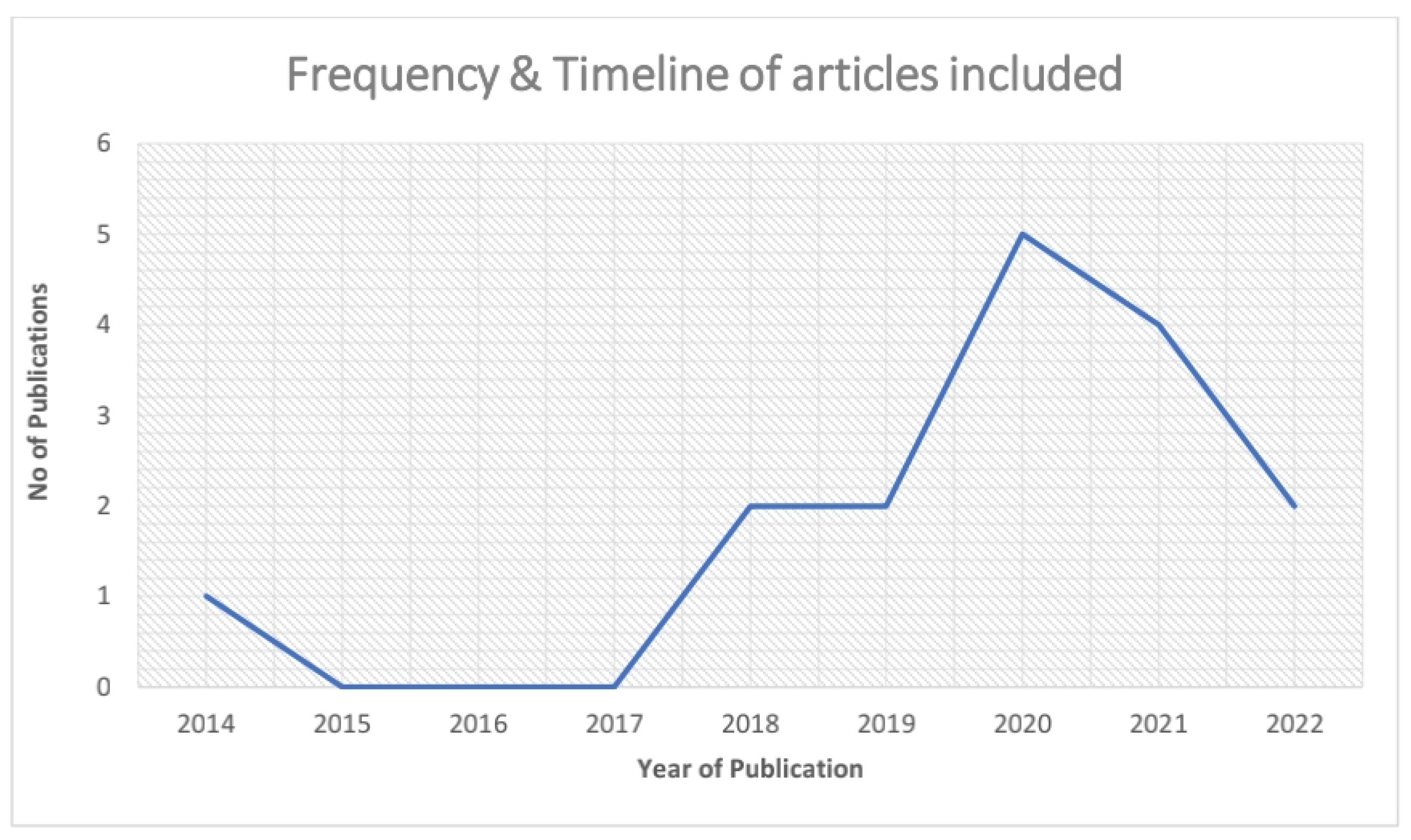

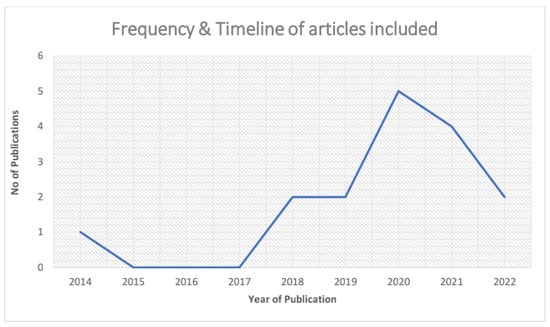

The earliest included article on the ethics of AI in healthcare was published in September 2014 [Study ID 1]. Five articles were published in 2020, four in 2021, and two each in 2018, 2019 and 2022 (Table 4 and Figure 2).
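The per-year counts above can be tallied to confirm that they account for all 16 included articles. In the minimal Python sketch below, the mapping `ARTICLES_PER_YEAR` and the helper `summarize` are illustrative names; the single 2014 article is an assumption inferred from the counts (16 minus the 15 articles reported for 2018 to 2022), since the text states only that the earliest article dates from 2014.

```python
from collections import Counter

# Included articles per publication year, as reported above.
# The 2014 count of 1 is inferred (16 total minus 15 reported for 2018-2022).
ARTICLES_PER_YEAR = Counter({2014: 1, 2018: 2, 2019: 2, 2020: 5, 2021: 4, 2022: 2})


def summarize(per_year: Counter):
    """Return (total articles, earliest year, peak year, articles in peak year)."""
    total = sum(per_year.values())
    earliest = min(per_year)
    peak_year, peak_count = per_year.most_common(1)[0]
    return total, earliest, peak_year, peak_count


if __name__ == "__main__":
    total, earliest, peak_year, peak_count = summarize(ARTICLES_PER_YEAR)
    print(f"{total} articles, earliest {earliest}, peak {peak_year} ({peak_count})")
    # 16 articles, earliest 2014, peak 2020 (5)
```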

Table 4.

Timeline of studies included in this review.

Figure 2.

Depicts the frequency and timeline of the articles included in this review.

3.5. Keywords

The major keywords used by the reviewers to retrieve the scientific literature for their studies are illustrated in Figure 3. More frequently used keywords are shown in darker shades of blue, and less common ones in lighter shades.

Figure 3.

Displays the major key words used by the reviewers to retrieve the scientific literature for their studies.

3.6. Major Ethical Concerns

The ethical issues most frequently raised regarding the application of AI in healthcare settings are displayed in Figure 4. The predominant issues are highlighted in darker shades of red, and less frequently addressed issues in lighter shades.

Figure 4.

Illustrates the most frequently raised ethical issues regarding the application of artificial intelligence in healthcare.

3.7. Synthesis of Results

The major ethical dilemmas and concerns over the implementation of AI in healthcare, and their potential solutions, are outlined below:

- (a) The studies predominantly voiced the need to devise ethical principles and guidelines to facilitate the use of AI in healthcare, as existing laws and ethical frameworks have not kept pace with the current or future applications of AI in healthcare. Given the vulnerability of AI to errors, patients prefer to be treated by empathetic humans rather than by artificial systems. However, under the supervision of capable healthcare professionals, an AI system has immense potential to bring about beneficial reforms in the healthcare system. In view of the wide scope for AI in healthcare, governments and other regulatory bodies must keep the negative implications of AI in medical facilities in check.

- (b) The studies also found that no standard guidelines exist for testing whether AI applications uphold the ethical principles of fairness, justice, prevention of harm and autonomy. Despite diverse research on AI and its ethical implications, the scientific literature lacks a globally accepted ethical framework. The prevailing guidelines have proven insufficient to assuage ethical concerns about artificial systems in medicine, and wide-scale revisions of current law and ethical codes are needed to monitor AI in medical systems. Contrary to many reviews, a 2019 study by Daniel Schönberger argued that AI enhances the equity and equality of healthcare services, and that a lack of knowledge about how to implement AI sustainably is a major challenge to its application in healthcare [5].

- (c) Ethical concerns ranging from data security to data privacy and the misuse of personal data have strained doctor–patient relationships. Achieving robust control over the risks associated with the use of AI is therefore pivotal. How the collected data are used, along with data protection and privacy, are important issues that must be addressed for successful AI-driven healthcare administration. The full potential of AI in medical care cannot be achieved without resolving these ethical and legal conundrums, and meticulous planning of regulation and implementation is essential to harvest the maximum benefit from this unprecedented technology.

- (d) The evolution of AI and machine learning technologies has led to the development of a novel strategy called ‘co-design’. Co-design can fix loopholes in the existing code of ethics by actively involving stakeholders, including software developers, policymakers, patients and healthcare professionals, in ethical decision-making about AI in healthcare. Healthcare professionals should study these technologies thoroughly so that they can implement them ethically in clinical practice and make mindful decisions.

3.8. Recommendations Stated in the Articles Reviewed

AI systems can deliver effective results in healthcare if electronic health record regulation is integrated and standardized. A collaborative association of stakeholders, administrators and AI system developers can provide sound solutions to the ethical problems in applying AI in healthcare. Moreover, a multifaceted, interdisciplinary approach that actively involves the beneficiaries of AI in healthcare, namely patients, in decisions about implementing AI in medicine is necessary. Data protection systems should be reinforced to prevent leakage of patients’ personal data.

4. Discussion

Artificial intelligence is a dynamic and fast-growing field. Although AI offers versatile benefits in healthcare, its ethical concerns range from the erosion of the doctor–patient relationship to the aggravation of existing health inequities [27]. The present review of scholarly articles on the ethics of AI in healthcare aimed to explore the ethical complications associated with AI in healthcare and to highlight ethical knowledge gaps in the literature by analyzing diverse studies conducted across the globe. The reviewed literature demonstrated overwhelming ethical concern about data privacy, trust, accountability, bias, explicability, patient autonomy, fairness, transparency, data governance, confidentiality and data security [5,14,17,28,29]. These ethical drawbacks of AI applications in healthcare should be rigorously investigated and interpreted so that AI technologies can be used to their maximum potential.

Autonomy is one of the four pillars of ethical principles in medicine. A qualitative study in the USA (15 focus group discussions with 87 participants) reported that participants generally had a welcoming and enthusiastic attitude towards AI in healthcare, as it has the potential to improve the care they receive, but felt that their safety, privacy and autonomy were at stake with the inclusion of AI in healthcare. Patients should be free to decide whether to incorporate AI technology into their care and to withdraw from AI-assisted care at any point during treatment. Participants also questioned whether AI-assisted clinical decision-making can account for individuality, as they felt that every patient and clinical scenario is unique [1,8,30].

Clinician–patient trust is built upon effective communication and relationships, which are crucial for the effectiveness of treatments and for improving treatment outcomes. In an AI-based healthcare delivery system, patients interact with artificial systems, and for those who have had little exposure to digital technologies, establishing trust with an AI system can be arduous [7,31].

Privacy concerns over AI in healthcare are growing, as confidential healthcare data circulate across numerous unauthorized companies. This is inevitable, as machine learning and deep learning algorithms require enormous amounts of data for training, testing and validation [22,24,32,33].

AI-based decision-making lacks transparency. This feature of AI, called the “black box” element, renders its decision-making process opaque [25,32]. AI should help to ensure the well-being and safety of patients; nevertheless, it has been shown to reinforce existing biases in the healthcare industry [9,34]. Unreliable and under-representative data sets used for AI development lead to inequity, data bias, discrimination and misleading predictions. Health data are among the most sensitive and intimate personal information. Respecting patient privacy is a critical ethical principle, as privacy is grounded in autonomy and bound to individual identity and well-being [9,35].

These ethical considerations highlight the importance of patient involvement in ensuring that new technologies are incorporated into medical care in a way that strengthens patient trust and alleviates widespread ethical concerns about AI in healthcare. Furthermore, as patients are the target beneficiaries of all AI uses and innovations, characterizing their requirements, moral values and preferences is indispensable for ensuring that these technologies are developed, applied and implemented in an ethically appropriate way [8,36,37,38]. A multifaceted approach that involves all stakeholders, including policymakers, patients and healthcare providers, in the application and implementation of AI in healthcare is needed [39].

AI has the capability to help achieve universal health coverage, alleviate health and social inequities and enhance health outcomes on a larger scale. Given the unclear boundaries of AI in health, we must respond diligently to the ethical complications of its application in healthcare [40]. It is imperative to approach AI in healthcare with cautious enthusiasm, informed by a comprehensive body of ethical principles, to ensure its ethical application [14,41].

AI techniques can also be used for molecular analysis. Visible infrared spectroscopy data can serve as a significant quantitative indicator in biomolecular investigations. This technique provides both spatial and spectral information, including the spatial distribution of the biomolecules being analyzed. Moreover, data transmission between spectroscopy instruments and mobile devices is important for handheld field monitoring. On-the-go communication technology has recently been found to be an effective mode of data transmission between spectroscopy equipment and mobile devices [42,43].

5. Knowledge Gaps

AI-driven healthcare practice has immense potential to expand the capacity and enrich the knowledge base of practicing clinicians. However, the existing literature clearly sets forth the ethical complications of adopting AI in healthcare on a large scale. The literature is centered on developed countries and largely lacks findings from developing nations. The most important component not addressed in the existing literature is a standard, universally acceptable framework or set of guidelines to ensure the ethical application of AI in healthcare. Although the governance of AI is of paramount importance, this review revealed a paucity of data on the governance of AI in healthcare in different countries. Very little research has characterized the perspectives of patients and other stakeholders on the application of AI in healthcare. Furthermore, the factors to be considered when preparing a checklist for AI ethics have not been explored. There is an acute paucity of scientific research elaborating on the ethical challenges and potential solutions to address them. Because research on ethical considerations has not kept pace with technological advances, it is difficult to implement and manage AI interventions in the long run.

6. Directions for Future Research

There is a pressing need for practical tools to test whether AI applications in healthcare follow ethical principles. Future research is also needed to identify the various stakeholders, users and beneficiaries of AI-related technology in medicine and to include them actively in dialogues about AI ethics and feasible solutions for establishing ethical AI applications. For healthcare professionals to attain proficiency in AI and use it in daily clinical practice without compromising ethical principles, prior training is essential. To achieve this, it is imperative to incorporate AI into undergraduate and postgraduate medical curricula. Given the uniqueness of the various disciplines of medicine, extensive research investigating the ethical and legal problems of AI applications in diverse specialties and sub-specialties of healthcare is required. Comprehensive qualitative and quantitative studies that aim to decipher the perspectives of healthcare professionals and consumers of AI (patients) are also needed.

7. Limitations

This study has certain inherent limitations. Because AI technologies are continually expanding and evolving, only studies conducted since 2014 were included in this review, as the findings of earlier studies may not be relevant to recent developments in this digital era.

8. Conclusions

AI is certain to have extensive ramifications that transform healthcare practices, altering the patient experience and clinicians’ approach to patients. However, a naïve implementation of AI in healthcare may result in a wide array of ethical complications, and there is increasing demand for feasible solutions to the ethical implications of AI in healthcare. This scoping review provided insights into patient privacy and autonomy, informed consent, transparency, fairness, data bias, inequity and the “black box” element, among other issues. The existing framework needs to be expanded, codified and organized into categories connected to ethical principles such as autonomy, privacy, transparency, fairness and justice. Healthcare facilities and governmental and regulatory organizations must build guidelines to tackle ethical issues, act in an accountable and responsible way and establish governance mechanisms to monitor complications. AI is projected to have high potential to transform healthcare, and universal standard guidelines on its ethical and legal implications can lead to its optimal use. We strongly believe that establishing a comprehensive and systematic ethical framework for AI application in healthcare can lead to improved health outcomes.

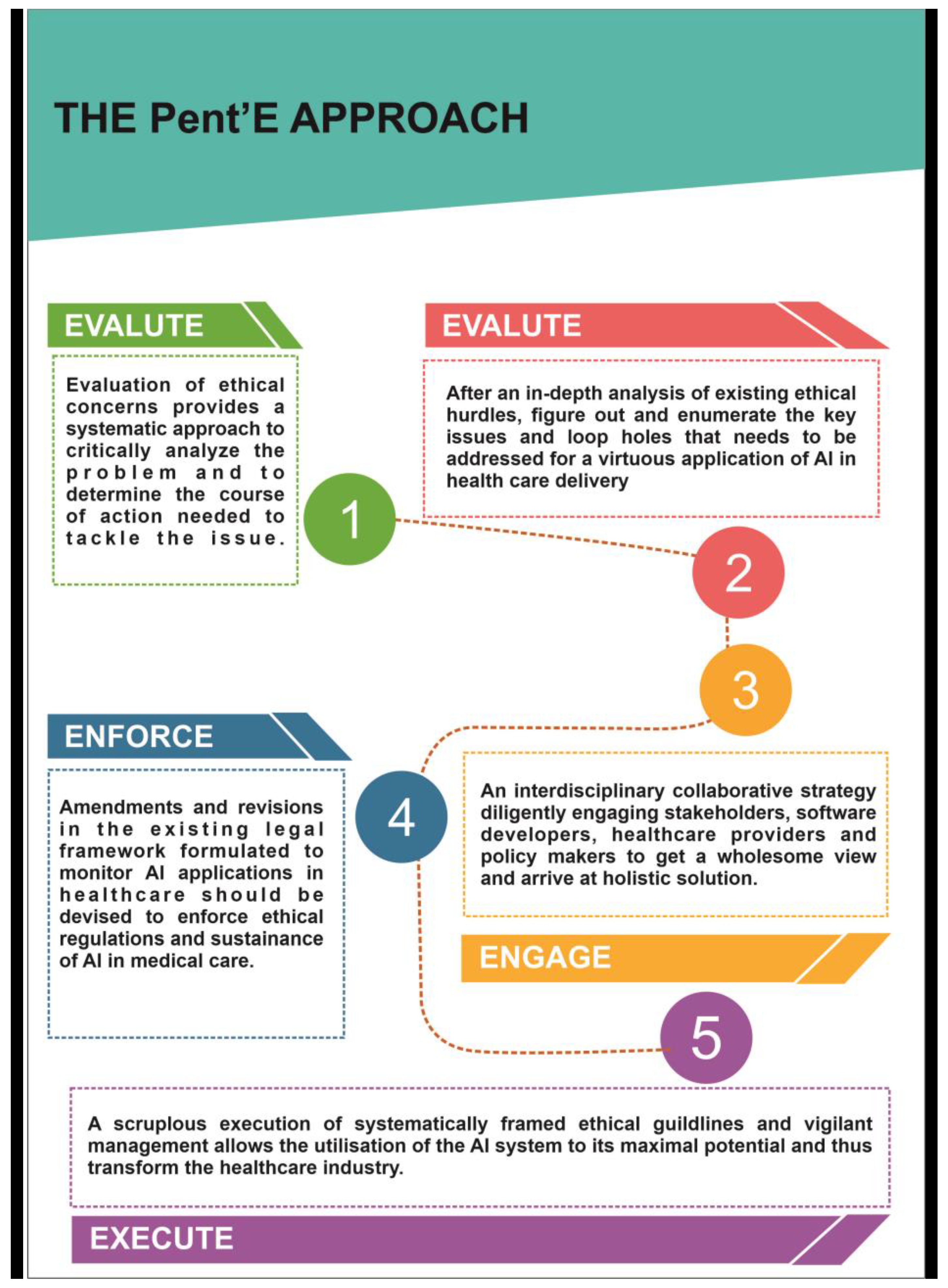

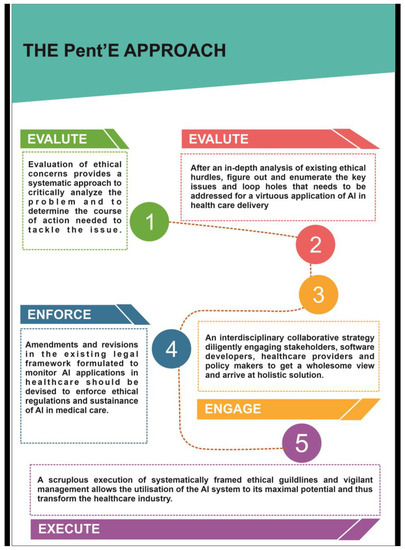

9. Conceptual Framework

From our extensive review of the existing scientific literature, it is evident that there is an urgent need for an ethical framework for developing, implementing, governing and monitoring AI in healthcare.

In this regard, we conceptualized the “Pent’E” approach that encompasses a five-step process of identifying, analyzing and implementing interventions to address the ethical and legal disputes in the application of artificial intelligence in healthcare for the benefit of patients (Figure 5).

Figure 5.

The Pent’E approach to resolving ethical challenges in the application of AI in healthcare.

The Pent’E approach:

- EVALUATE

- ENUMERATE

- ENGAGE

- ENFORCE

- EXECUTE

The proposed framework consists of five main steps, ranging from problem identification to implementation. Critically analyzing the ethical challenges in the application of AI in healthcare, and appraising the existing literature for loopholes in addressing ethical and legal concerns, will help in conceptualizing a plan to resolve these issues. This process will help to identify the current pressing issues in the ethics of AI and to engage stakeholders, including policymakers, governing bodies, software developers and healthcare providers, in designing, developing and delivering efficient and ethically bound technical innovations. Current legal and ethical principles should be enforced in favor of patients and healthcare providers, with strict adherence to the principles of beneficence, non-maleficence, justice and autonomy. The final step is the execution of the planned course of action in a time-bound and effective manner, systematically bringing to light all ethical regulations and pathways so as to widen the acceptance, utility and benefits of AI in healthcare without any kind of malpractice.

Author Contributions

Conceptualization: K.M.S.; methodology: S.P., J.N.B., A.J. and K.M.S.; software: K.M.S.; validation: S.P., J.N.B., A.J. and K.M.S.; formal analysis: S.P., J.N.B., A.J. and K.M.S.; investigation: S.P., J.N.B. and K.M.S.; resources: K.M.S.; data curation: A.J. and K.M.S.; writing—original draft preparation: S.P., J.N.B. and K.M.S.; writing—review and editing: S.P., J.N.B., A.J. and K.M.S.; visualization: S.P., J.N.B. and K.M.S.; supervision: K.M.S.; project administration: K.M.S.; and funding acquisition: K.M.S. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

The data that support this study are available from the corresponding author upon request.

Acknowledgments

The authors acknowledge Panimalar Medical College Hospital & Research Institute, Chennai and the Department of Education, Bioethics, Melbourne, Australia for introducing the “International Foundation Course on Moral Values, Bioethics, Professionalism and Personal well-being for first year medical students” course in the first Professional MBBS curriculum, which provided the knowledge and skills required for conceptualising this study. The authors also acknowledge Panimalar Medical College Hospital & Research Institute, Chennai and the Foundation of Healthcare Technologies Society, New Delhi for introducing the “Foundations in Research Methodologies” course in the first Professional MBBS curriculum, which provided the knowledge and skills required for conducting and publishing this study.

Conflicts of Interest

All authors declare that there exists no conflict of interest.

References

- Bærøe, K.; Miyata-Sturm, A.; Henden, E. How to achieve trustworthy artificial intelligence for health. Bull. World Health Organ. 2020, 98, 257–262. [Google Scholar] [CrossRef]

- Malik, P.; Pathania, M.; Rathaur, V.K. Overview of artificial intelligence in medicine. J. Fam. Med. Prim. Care 2019, 8, 2328–2331. [Google Scholar] [CrossRef]

- Goodman, K.; Zandi, D.; Reis, A.; Vayena, E. Balancing risks and benefits of artificial intelligence in the health sector. Bull. World Health Organ. 2020, 98, 230–230A. [Google Scholar] [CrossRef]

- Davenport, T.; Kalakota, R. The potential for artificial intelligence in healthcare. Future Healthc. J. 2019, 6, 94–98. [Google Scholar] [CrossRef]

- Daniel Schönberger, Artificial intelligence in healthcare: A critical analysis of the legal and ethical implications, International. J. Law Inf. Technol. 2019, 27, 171–203. [CrossRef]

- Sounderajah, V.; McCradden, M.D.; Liu, X.; Rose, S.; Ashrafian, H.; Collins, G.S.; Anderson, J.; Bossuyt, P.M.; Moher, D.; Darzi, A. Ethics methods are required as part ofreporting guidelines for artificial intelligence in healthcare. Nat. Mach. Intell. 2022, 4, 316–317. [Google Scholar] [CrossRef]

- Lee, D.; Yoon, S.N. Application of Artificial Intelligence-Based Technologies in the Healthcare Industry: Opportunities and Challenges. International. J. Environ. Res. Public Health 2021, 18, 271. [Google Scholar] [CrossRef]

- Richardson, J.P.; Smith, C.; Curtis, S.; Watson, S.; Zhu, X.; Barry, B.; Sharp, R.R. Patient apprehensions about the use of artificial intelligence in healthcare. Npj Digit. Med. 2021, 4, 140. [Google Scholar] [CrossRef]

- Sunarti, S.; Rahman, F.F.; Naufal, M.; Risky, M.; Febriyanto, K.; Masnina, R. Artificial intelligence in healthcare: Opportunities and risk for future. Gac. Sanit. 2021, 35 (Suppl. 1), S67–S70. [Google Scholar] [CrossRef]

- Laptev, V.A.; Ershova, I.V.; Feyzrakhmanova, D.R. Medical Applications of Artificial Intelligence (Legal Aspects and Future Prospects). Laws 2022, 11, 3. [Google Scholar] [CrossRef]

- Ganapathy, K. Artificial Intelligence and Healthcare Regulatory and Legal Concerns. Telehealth Med. Today 2021. [Google Scholar] [CrossRef]

- Dettling, H.-U. How the Challenge of Regulating AI in Healthcare Is Escalating. 2021. Available online: https://www.ey.com/en_kz/law/how-the-challenge-of-regulating-ai-in-healthcare-is-escalating (accessed on 7 October 2022).

- United Nations. The 2030 Agenda and the Sustainable Development Goals: An Opportunity for Latin America and the Caribbean; LC/G.2681-P/Rev.3; United Nations Publication: Santiago, Chile, 2018. [Google Scholar]

- Murphy, K.; Di Ruggiero, E.; Upshur, R.; Willison, D.J.; Malhotra, N.; Cai, J.C.; Malhotra, N.; Lui, V.; Gibson, J. Artificial intelligence for good health: A scoping review of the ethics literature. BMC Med. Ethics 2021, 22, 14. [Google Scholar] [CrossRef] [PubMed]

- Leimanis, A.; Palkova, K. Ethical Guidelines for Artificial Intelligence in Healthcare from the Sustainable Development Perspective. Eur. J. Sustain. 2021, 10, 90. [Google Scholar] [CrossRef]

- Tricco, A.C.; Lillie, E.; Zarin, W.; O’Brien, K.K.; Colquhoun, H.; Levac, D.; Moher, D.; Peters, M.D.J.; Horsley, T.; Weeks, L.; et al. PRISMA extension for scoping reviews (PRISMA-ScR): Checklist and explanation. Ann. Intern. Med. 2018, 169, 467–473. [Google Scholar] [CrossRef]

- Luxton, D.D. Recommendations for the ethical use and design of artificial intelligent care providers. Artif. Intell. Med. 2014, 62, 1–10. [Google Scholar] [CrossRef]

- Karimian, G.; Petelos, E.; Evers, S.M.A.A. The ethical issues of the application of artificial intelligence in healthcare: A systematic scoping review. AI Ethics 2022, 2, 539–551. [Google Scholar] [CrossRef]

- Vellido, A. Societal Issues Concerning the Application of Artificial Intelligence in Medicine. Kidney Dis. 2019, 5, 11–17. [CrossRef]

- Currie, G.; Hawk, K.E.; Rohren, E.M. Ethical principles for the application of artificial intelligence (AI) in nuclear medicine. Eur. J. Nucl. Med. Mol. Imaging 2020, 47, 748–752. [Google Scholar] [CrossRef]

- Gerke, S.; Minssen, T.; Cohen, G. Chapter 12–Ethical and Legal Challenges of Artificial Intelligence-Driven Healthcare. In Artificial Intelligence in Healthcare; Bohr, A., Memarzadeh, K., Eds.; Academic Press: Cambridge, MA, USA, 2020; pp. 295–336. ISBN 9780128184387. [Google Scholar] [CrossRef]

- Pesapane, F.; Volonté, C.; Codari, M.; Sardanelli, F. Artificial intelligence as a medical device in radiology: Ethical and regulatory issues in Europe and the United States. Insights Imaging 2018, 9, 745–753. [Google Scholar] [CrossRef]

- Abdullah, Y.I.; Schuman, J.S.; Shabsigh, R.; Caplan, A.; Al-Aswad, L.A. Ethics of Artificial Intelligence in Medicine and Ophthalmology. Asia-Pac. J. Ophthalmol. 2021, 10, 289–298. [Google Scholar] [CrossRef]

- Brady, A.P.; Neri, E. Artificial Intelligence in Radiology—Ethical Considerations. Diagnostics 2020, 10, 231. [Google Scholar] [CrossRef] [PubMed]

- Char, D.S.; Abràmoff, M.D.; Feudtner, C. Identifying Ethical Considerations for Machine Learning Healthcare Applications. Am. J. Bioeth. 2020, 20, 7–17. [Google Scholar] [CrossRef]

- Rubeis, G. iHealth: The ethics of artificial intelligence and big data in mental healthcare. Internet Interv. 2022, 28, 100518. [Google Scholar] [CrossRef]

- Donia, J.; Shaw, J.A. Co-design and ethical artificial intelligence for health: An agenda for critical research and practice. Big Data Soc. 2021, 8, 20539517211065248. [Google Scholar] [CrossRef]

- Chiruvella, V.; Guddati, A.K. Ethical Issues in Patient Data Ownership. Interact. J. Med. Res. 2021, 10, e22269. [Google Scholar] [CrossRef]

- Kooli, C.; Al Muftah, H. Artificial intelligence in healthcare: A comprehensive review of its ethical concerns. Technol. Sustain. 2022. [Google Scholar] [CrossRef]

- Safdar, N.M.; Banja, J.D.; Meltzer, C.C. Ethical considerations in artificial intelligence. Eur. J. Radiol. 2020, 122, 108768. [Google Scholar] [CrossRef] [PubMed]

- Char, D.S.; Shah, N.H.; Magnus, D. Implementing Machine Learning in Health Care–Addressing Ethical Challenges. N. Engl. J. Med. 2018, 378, 981–983. [Google Scholar] [CrossRef]

- Samuel, G.; Chubb, J.; Derrick, G. Boundaries Between Research Ethics and Ethical Research Use in Artificial Intelligence Health Research. J. Empir. Res. Hum. Res. Ethics 2021, 16, 325–337. [Google Scholar] [CrossRef]

- Li, X.; An, T.Z. Exploration on Artificial Intelligence Application: From Security, Privacy and Ethic Perspective. In Proceedings of the 2017 IEEE 2nd International Conference on Cloud Computing and Big Data Analysis (ICCCBDA), Chengdu, China, 28–30 April 2017; pp. 416–420. [Google Scholar] [CrossRef]

- Hallows, R.; Glazier, L.; Katz, M.S.; Aznar, M.; Williams, M. Safe and Ethical Artificial Intelligence in Radiotherapy – Lessons Learned From the Aviation Industry. Clin. Oncol. 2022, 34, 99–101. [Google Scholar] [CrossRef]

- Cath, C. Governing artificial intelligence: Ethical, legal and technical opportunities and challenges. Phil. Trans. R. Soc. A 2018, 376, 20180080. [Google Scholar] [CrossRef] [PubMed]

- Geis, J.R.; Brady, A.P.; Wu, C.C.; Spencer, J.; Ranschaert, E.; Jaremko, J.L.; Langer, S.G.; Kitts, A.B.; Birch, J.; Shields, W.F.; et al. Ethics of Artificial Intelligence in Radiology: Summary of the Joint European and North American Multisociety Statement. Can. Assoc. Radiol. J. 2019, 70, 329–334. [Google Scholar] [CrossRef] [PubMed]

- Astromskė, K.; Peičius, E.; Astromskis, P. Ethical and legal challenges of informed consent applying artificial intelligence in medical diagnostic consultations. AI Soc. 2021, 36, 509–520. [Google Scholar] [CrossRef]

- Nebeker, C.; Torous, J.; Bartlett Ellis, R.J. Building the case for actionable ethics in digital health research supported by artificial intelligence. BMC Med. 2019, 17, 137. [Google Scholar] [CrossRef] [PubMed]

- Molnár-Gábor, F. Artificial Intelligence in Healthcare: Doctors, Patients and Liabilities. In Regulating Artificial Intelligence; Wischmeyer, T., Rademacher, T., Eds.; Springer: Cham, Switzerland, 2020. [Google Scholar] [CrossRef]

- Martinho, A.; Kroesen, M.; Chorus, C. A healthy debate: Exploring the views of medical doctors on the ethics of artificial intelligence. Artif. Intell. Med. 2021, 121, 102190. [Google Scholar] [CrossRef] [PubMed]

- Gupta, S.; Kamboj, S.; Bag, S. Role of Risks in the Development of Responsible Artificial Intelligence in the Digital Healthcare Domain. Inf. Syst. Front. 2021. [CrossRef]

- Wang, T.; Shen, F.; Deng, H.; Cai, F.; Chen, S. Smartphone imaging spectrometer for egg/meat freshness monitoring. Anal. Methods 2022, 14, 508–517. [Google Scholar] [CrossRef]

- Shen, F.; Deng, H.; Yu, L.; Cai, F. Open-source mobile multispectral imaging system and its applications in biological sample sensing. Spectrochim. Acta Part A Mol. Biomol. Spectrosc. 2022, 280, 121504. [Google Scholar] [CrossRef]

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).