1. Introduction

High dynamic range and wide color gamut (HDR/WCG) video has recently received much attention because it significantly improves video quality by using a much higher contrast range, wider color primaries, and higher bit depth than conventional standard dynamic range (SDR) video. To facilitate the use of HDR/WCG video, standardization efforts have been made, including the production format of HDR-TV [1], the HDR electro-optical transfer function (EOTF) [2], the common media format for consumer electronics [3], and so on.

In dealing with such HDR/WCG video, chroma subsampling, a key component of a video preprocessing system, introduces a severe quality problem in subjective luminance perception. Several Moving Picture Experts Group (MPEG) contributions identified this problem [4,5], which is likely caused by the combination of the Y’CbCr 4:2:0 nonconstant luminance (NCL) format with the highly nonlinear transfer function of [2]. Appearing as false contouring or noise-like speckles in smooth areas, these artifacts can be very annoying to viewers even without compression.

To ameliorate such artifacts from chroma subsampling, various luma control schemes have been suggested in the literature [6,7,8,9,10]. Luma control means an intentional change of the luma signal, which is not subsampled, to reduce the perception error introduced by chroma subsampling. In one category of luma control schemes, the perception error is defined in a nonlinear light domain using the signals obtained after the application of an optoelectrical transfer function (OETF) and quantization [6,7]. The schemes in this category can be easily applied to conventional imaging systems, but the incorporated perception error may not match the human visual system (HVS) well because most HVS measures (e.g., CIEDE2000 [11]) are defined in the linear light domain.

For this reason, the other category of luma control methods optimizes an error function defined in the linear light domain. One solution, proposed in [8], simulates the NCL Y’CbCr 4:2:0 signal conversion followed by chroma upsampling and iterates over different luma values to choose the one that yields the linear luminance closest to that of the original 4:4:4 signal. By searching for the best possible luma value, the solution achieved a significant linear luminance gain (more than 17 dB of tPSNR-Y in [8]) over the plain NCL Y’CbCr 4:2:0 signal. However, the iterative search, even when accelerated with the tight bounds also proposed in [8], requires an uneven amount of complex computation per processing unit. To avoid such iterations, Norkin proposed a closed form solution in [9] based on a truncated Taylor series approximation of the nonlinear HDR EOTF. This solution requires a fixed number of operations per pixel and is thus well suited to a real-time and/or hardware implementation of the NCL Y’CbCr 4:2:0 HDR system, but its performance is limited for some videos that have highly saturated colors. To fill the performance gap between these two schemes, the authors proposed an enhanced fast luma control algorithm in [10]. Because the linear approximation of a convex function by a truncated Taylor series never exceeds the function value, the enhanced luma control scheme modifies the linear approximation, resulting in a meaningful gain over the previous fast scheme. However, a nonnegligible performance gap still remains, and the algorithm requires a parameter that is not determined automatically.

Considering the pros and cons of these previous luma control schemes, this paper focuses on the following question: can we design a linear approximation of the nonlinear HDR EOTF that performs similarly to the iterative luma control method while being free of the factors that limit real-time or hardware implementation, such as the adaptive selection of control parameters or the uneven amount of required computation? To answer this question, we first analyzed the errors involved in the closed form solution of [9], which yields an upper and a lower bound on the optimal luma value from the convexity of the EOTF. Then, we tried various linear approximations employing the derived bounds to design an efficient linear approximation of the nonlinear EOTF. Based on these trials, we argue that straight lines passing through two points on the EOTF curve are quite useful, where one point is the position of the original 4:4:4 signal and the other lies somewhere between the derived lower and upper bounds. By modifying the closed form solution with these straight lines, we show that the proposed scheme provides nearly the same quality as the iterative solution without any limiting factors in its real-time or hardware implementation.
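The difference between a tangent (truncated Taylor) line and a straight line through two points on the EOTF curve can be illustrated numerically. The following is a minimal sketch, not the proposed algorithm itself: the constants are those of the ST 2084 EOTF, while the function names and the sample values `x0`, `x1`, and `x` are hypothetical and chosen only for illustration.

```python
# ST 2084 (PQ) EOTF constants.
M1 = 2610.0 / 16384.0
M2 = 2523.0 / 4096.0 * 128.0
C1 = 3424.0 / 4096.0
C2 = 2413.0 / 4096.0 * 32.0
C3 = 2392.0 / 4096.0 * 32.0


def pq_eotf(v):
    """Map a nonlinear PQ value in [0, 1] to linear light in cd/m^2."""
    p = min(max(v, 0.0), 1.0) ** (1.0 / M2)
    return 10000.0 * (max(p - C1, 0.0) / (C2 - C3 * p)) ** (1.0 / M1)


def tangent_approx(x0, x, h=1e-6):
    """First-order Taylor line around x0 (slope from a central difference)."""
    slope = (pq_eotf(x0 + h) - pq_eotf(x0 - h)) / (2.0 * h)
    return pq_eotf(x0) + slope * (x - x0)


def secant_approx(x0, x1, x):
    """Straight line through (x0, f(x0)) and (x1, f(x1)), evaluated at x."""
    slope = (pq_eotf(x1) - pq_eotf(x0)) / (x1 - x0)
    return pq_eotf(x0) + slope * (x - x0)


# Hypothetical values: x0 plays the role of the original signal, x1 a point
# assumed to lie near the optimum, and x the value actually being evaluated.
x0, x1, x = 0.50, 0.60, 0.58
true_value = pq_eotf(x)
tangent_error = abs(tangent_approx(x0, x) - true_value)
secant_error = abs(secant_approx(x0, x1, x) - true_value)
print(tangent_error, secant_error)
```

In this example the tangent line underestimates the EOTF, as expected for a convex function, whereas the line through two points stays closer to the curve when the second point is near the value of interest.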

The rest of this paper is organized as follows. Section 2 describes the problem of luma control and the approaches taken by the previous representative algorithms. Then, in Section 3, we investigate the luminance perception error minimized by the fast solution in [9], resulting in two new bounds on the position of the best luma value. This section also explains the proposed linear approximation using the two derived bounds. Simulations for an extensive comparison study and their results are presented in Section 4, and we conclude this paper in Section 5.

2. Luma Control Problem

To define the luma control problem, let

denote the original pixel values in the linear light domain, which are to be transformed to NCL Y’CbCr 4:2:0 pixel values. For HDR-10 video [3], this transformation employs the inverse ST.2084 [2] Perceptual Quantizer (PQ), the Y’CbCr color-space conversion, the narrow-band 10-bit quantization, and chroma subsampling in this order, as described in [12] and depicted in Figure 1.

After processing steps such as video encoding, transmission, reception, and decoding, the reconstructed NCL Y’CbCr 4:2:0 video is supposed to be transformed back to the RGB display signal in the linear light domain. The postprocessing for this transformation comprises stages that are exactly the inverses of the corresponding preprocessing blocks. Hence, this processing involves chroma upsampling, inverse 10-bit quantization, RGB color-space conversion, and then the ST.2084 EOTF. For now, in order to consider only the artifacts caused by chroma subsampling, let us set aside the processing blocks from video encoding to decoding. If we denote by

the reconstructed output pixel values of the postprocessing, we can define the luminance error by

where

represents the contribution of each linear light component to luminance and is given by (0.2126, 0.7152, 0.0722) for the BT.709 [13] and (0.2627, 0.6780, 0.0593) for the BT.2020 [14] color gamut. Rewriting this error in terms of the nonlinear reconstructed signals gives

where the “prime” notation, as a well-known convention, indicates that the signal is in the “nonlinear” domain, and

denotes the ST 2084 EOTF, which is defined by

where

,

,

,

, and

.
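For reference, the ST 2084 EOTF can be written compactly in code. The sketch below uses the constants published in the standard; the names `M1`, `M2`, `C1`, `C2`, `C3`, `pq_eotf`, and `pq_inverse_eotf` are illustrative and are not necessarily the symbols used in this paper.

```python
# ST 2084 (PQ) constants as published in the standard.
M1 = 2610.0 / 16384.0          # 0.1593017578125
M2 = 2523.0 / 4096.0 * 128.0   # 78.84375
C1 = 3424.0 / 4096.0           # 0.8359375
C2 = 2413.0 / 4096.0 * 32.0    # 18.8515625
C3 = 2392.0 / 4096.0 * 32.0    # 18.6875
PEAK = 10000.0                 # peak luminance in cd/m^2


def pq_eotf(v):
    """Map a nonlinear PQ value v in [0, 1] to linear light in cd/m^2."""
    v = min(max(v, 0.0), 1.0)
    p = v ** (1.0 / M2)
    return PEAK * (max(p - C1, 0.0) / (C2 - C3 * p)) ** (1.0 / M1)


def pq_inverse_eotf(y):
    """Map linear light y in [0, 10000] cd/m^2 to a PQ value in [0, 1]."""
    q = (min(max(y, 0.0), PEAK) / PEAK) ** M1
    return ((C1 + C2 * q) / (1.0 + C3 * q)) ** M2


# Example: a mid-scale code value maps to a comparatively low luminance,
# which shows how strongly nonlinear the curve is.
print(pq_eotf(0.5), pq_eotf(0.75), pq_eotf(1.0))
```

The steep growth of the curve toward high code values is what makes small changes in the nonlinear signal translate into large luminance changes in bright, saturated regions.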

For further investigation of the reconstructed nonlinear signal,

, let us denote the chroma subsampling errors by

and

, such that

where

and

represent the reconstructed and the original chroma signal pairs in nonlinear 4:4:4 format, respectively. In order to compensate for these subsampling errors, if we assume that the original luma value,

, is adjusted to a new one,

, then the reconstructed signal,

, or equivalently, the reconstruction difference,

, can be represented by

where

means the contribution of a chroma component for each color and is given by (1.5748, −0.1873, −0.4681, 1.8556) for BT.709 and (1.4746, −0.1646, −0.5714, 1.8814) for BT.2020. This shows the adjusted luma value,

, controls the nonlinear domain reconstruction,

, and thus determines the luminance error given in (2). Hence, the luma control problem is to find the best luma value that produces the minimum luminance error of (2).
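The relation between the adjusted luma value and the luminance error can be sketched as follows. This is a simplified illustration under stated assumptions: signals are normalized (luma in [0, 1], chroma around zero), quantization is ignored, and the four chroma coefficients are taken to be, in order, the Cr term of R’, the Cb and Cr terms of G’, and the Cb term of B’. All function and variable names are hypothetical.

```python
# ST 2084 (PQ) EOTF constants.
M1 = 2610.0 / 16384.0
M2 = 2523.0 / 4096.0 * 128.0
C1 = 3424.0 / 4096.0
C2 = 2413.0 / 4096.0 * 32.0
C3 = 2392.0 / 4096.0 * 32.0

# Luminance weights "w" and chroma contributions "a" quoted in the text.
BT709 = {"w": (0.2126, 0.7152, 0.0722), "a": (1.5748, -0.1873, -0.4681, 1.8556)}
BT2020 = {"w": (0.2627, 0.6780, 0.0593), "a": (1.4746, -0.1646, -0.5714, 1.8814)}


def pq_eotf(v):
    """Map a nonlinear PQ value in [0, 1] (clipped) to linear light in cd/m^2."""
    p = min(max(v, 0.0), 1.0) ** (1.0 / M2)
    return 10000.0 * (max(p - C1, 0.0) / (C2 - C3 * p)) ** (1.0 / M1)


def rgb_to_ycbcr(rgb_nl, gamut):
    """Nonlinear R'G'B' -> normalized NCL Y'CbCr (no quantization)."""
    w_r, w_g, w_b = gamut["w"]
    a_rcr, _, _, a_bcb = gamut["a"]
    r, g, b = rgb_nl
    y = w_r * r + w_g * g + w_b * b
    return y, (b - y) / a_bcb, (r - y) / a_rcr


def ycbcr_to_rgb(y, cb, cr, gamut):
    """Normalized Y'CbCr -> nonlinear R'G'B' (clipping is left to pq_eotf)."""
    a_rcr, a_gcb, a_gcr, a_bcb = gamut["a"]
    return (y + a_rcr * cr, y + a_gcb * cb + a_gcr * cr, y + a_bcb * cb)


def luminance(rgb_nl, gamut):
    """Linear luminance of a nonlinear R'G'B' triple."""
    return sum(w * pq_eotf(x) for w, x in zip(gamut["w"], rgb_nl))


def luminance_error(y_new, cb_rec, cr_rec, rgb_orig_nl, gamut):
    """Reconstructed minus original luminance for a candidate luma value."""
    recon = ycbcr_to_rgb(y_new, cb_rec, cr_rec, gamut)
    return luminance(recon, gamut) - luminance(rgb_orig_nl, gamut)


# Usage: with unchanged chroma the error is (almost) zero; a chroma error
# shifts the luminance, which luma control then compensates through y_new.
rgb = (0.6, 0.5, 0.4)
y, cb, cr = rgb_to_ycbcr(rgb, BT709)
print(luminance_error(y, cb, cr, rgb, BT709))
print(luminance_error(y, cb + 0.05, cr, rgb, BT709))
```

Because the EOTF is monotonically increasing, the error grows monotonically with the candidate luma value, which is what allows both a search-based and a closed form solution.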

The iterative luma control scheme in [8] searches for the best compensation using the bisection method with its proposed bounds on the optimal luma value. The iterative nature of the scheme comes from the nonlinearity of

in (2), which requires repeated and complex computations for each candidate luma value. To remove the iterations from the luma control scheme, [9] proposed an approximation of

based on a truncated Taylor series, such that

where

are the original pixel values in nonlinear RGB color space and

denotes the derivative of the EOTF,

. This approximation, when combined with (5) and (2), provides an optimal luma value,

, as a closed form solution of

where

This fast scheme is very simple and no longer iterative but shows limited performance for some videos with highly saturated colors. As a reason for this performance limitation, [10] pointed out that the approximation of (6) can be severely limited when

has a high curvature at the point

and when

is not small. To fill the performance gap between the above two luma control schemes, a modified linear approximation was proposed in [10], such that

where

is the resulting value from (5) with (7), specifically,

The parameters

and

in (9) are defined by

where

is a nonautomatic parameter, called the “reduction factor”, in the range of (0,1).
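The contrast between the iterative search and the Taylor-based closed form can be sketched in a few lines. The code below is an illustration under stated assumptions, not a reproduction of [8] or [9]: it uses normalized BT.709 signals without quantization, a plain bisection over the trivial bounds [0, 1] instead of the tighter bounds of [8], and a closed form obtained by setting the linearized luminance error to zero. All names and the sample chroma errors are hypothetical.

```python
# ST 2084 (PQ) EOTF constants.
M1 = 2610.0 / 16384.0
M2 = 2523.0 / 4096.0 * 128.0
C1 = 3424.0 / 4096.0
C2 = 2413.0 / 4096.0 * 32.0
C3 = 2392.0 / 4096.0 * 32.0
W = (0.2126, 0.7152, 0.0722)               # BT.709 luminance weights
A = (1.5748, -0.1873, -0.4681, 1.8556)     # BT.709 chroma contributions


def pq_eotf(v):
    """Map a nonlinear PQ value in [0, 1] (clipped) to linear light in cd/m^2."""
    p = min(max(v, 0.0), 1.0) ** (1.0 / M2)
    return 10000.0 * (max(p - C1, 0.0) / (C2 - C3 * p)) ** (1.0 / M1)


def pq_eotf_slope(v):
    """Analytic derivative of pq_eotf (zero where the curve is clipped to 0)."""
    v = min(max(v, 0.0), 1.0)
    if v == 0.0:
        return 0.0
    p = v ** (1.0 / M2)
    if p <= C1:
        return 0.0
    den = C2 - C3 * p
    u = (p - C1) / den
    return (10000.0 / M1) * u ** (1.0 / M1 - 1.0) * (C2 - C1 * C3) / (den * den) * p / (M2 * v)


def chroma_terms(cb, cr):
    """Chroma contribution added to the luma for R', G', and B'."""
    return (A[0] * cr, A[1] * cb + A[2] * cr, A[3] * cb)


def luminance_error(y, cb, cr, rgb_orig):
    """Reconstructed minus original linear luminance for candidate luma y."""
    recon = [y + c for c in chroma_terms(cb, cr)]
    return sum(w * (pq_eotf(x) - pq_eotf(o)) for w, x, o in zip(W, recon, rgb_orig))


def luma_bisection(cb, cr, rgb_orig, iterations=50):
    """Iterative search: the error is nondecreasing in y, so bisection applies."""
    lo, hi = 0.0, 1.0
    for _ in range(iterations):
        mid = 0.5 * (lo + hi)
        if luminance_error(mid, cb, cr, rgb_orig) < 0.0:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def luma_taylor(cb, cr, rgb_orig):
    """Closed form from f(x) ~ f(x_o) + f'(x_o)(x - x_o): zero of the linearized error."""
    gains = [w * pq_eotf_slope(o) for w, o in zip(W, rgb_orig)]
    targets = [o - c for o, c in zip(rgb_orig, chroma_terms(cb, cr))]
    total = sum(gains)
    if total == 0.0:  # all slopes vanish (black pixel): fall back to a plain weighted sum
        return sum(w * t for w, t in zip(W, targets))
    return sum(g * t for g, t in zip(gains, targets)) / total


# Usage with hypothetical chroma subsampling errors of +0.02 (Cb) and -0.03 (Cr).
rgb = (0.7, 0.4, 0.3)
y0 = sum(w * x for w, x in zip(W, rgb))
cb, cr = (rgb[2] - y0) / A[3] + 0.02, (rgb[0] - y0) / A[0] - 0.03
y_iter, y_fast = luma_bisection(cb, cr, rgb), luma_taylor(cb, cr, rgb)
for name, y in (("none", y0), ("taylor", y_fast), ("bisection", y_iter)):
    print(name, y, luminance_error(y, cb, cr, rgb))
```

The bisection needs many EOTF evaluations per pixel, whereas the closed form uses a fixed number of operations; the residual error of the closed form reflects the inaccuracy of the tangent line away from the original signal.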

4. Simulations and Results

To evaluate the performance of the proposed algorithm, an extensive comparison study was conducted using the previous luma control schemes explained in Section 2. The comparison is based on the pre-encoding and post-decoding processes defined in [12], with a downsampling filter having the coefficients (1/8, 6/8, 1/8). The tested video sequences are shown in Figure 3, where the first three (denoted by “Fireeater”, “Market”, and “Tibul”) are the BT.709 HDR video sequences previously used in MPEG [15] and the last five (denoted by “Beerfest”, “Carousel”, “Cars”, “Fireplace”, and “Showgirl”) are BT.2020 HDR sequences chosen from [16]. In contrast to the MPEG sequences, some of the chosen BT.2020 sequences contain multiple shots of a scene with too many frames (more than 2000) for simulation. Thus, we selected a representative portion of 200–400 frames from each sequence for the simulations. Detailed information on these selections and the characteristics of each test sequence are summarized in Table 1.
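As an illustration of the (1/8, 6/8, 1/8) downsampling filter, the following minimal sketch filters one row of chroma samples and keeps every second sample. The edge handling (sample replication) and the sampling phase are assumptions made here for simplicity; the actual process is the one defined in [12], and 4:2:0 subsampling also requires a vertical pass.

```python
def downsample_chroma_row(row, taps=(1 / 8, 6 / 8, 1 / 8)):
    """Apply a 3-tap low-pass filter to a row and keep every second sample.

    Border samples are replicated (an assumption, not taken from the paper).
    """
    n = len(row)
    out = []
    for i in range(0, n, 2):
        left = row[max(i - 1, 0)]
        right = row[min(i + 1, n - 1)]
        out.append(taps[0] * left + taps[1] * row[i] + taps[2] * right)
    return out


# Usage: a flat region is preserved, while an isolated chroma spike is
# attenuated and shared among its neighbors, which is the source of the
# chroma errors that luma control compensates for.
print(downsample_chroma_row([0.25] * 8))
print(downsample_chroma_row([0.0, 0.0, 1.0, 0.0]))
```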

All the test videos had the same 1920 × 1080 resolution, a maximum luminance of 4000 cd/m², and a large amount of highly saturated colors. Color saturation was the most prominent property of the test sequences “Market”, “Beerfest”, and “Carousel”, which had highly saturated colors around all three color gamut boundaries, while the others had them around one or two. The sequences “Fireeater” and “Fireplace” were low-key scenes with flames covering a wide range of color temperatures. The “Cars” sequence showed directional sunlight on a black car, resulting in glare on the car bonnet and windows, with dark shades under the car. Finally, the “Tibul” and “Showgirl” sequences contained object(s) exposed to the maximum luminance, resulting in extremely high-contrast images. The characteristics of the test sequences described here are also summarized in Table 1.

As an objective measure for the performance comparison of the luma control schemes, we used the tPSNR, defined in Annex F of [15], on the luminance signal (i.e., tPSNR-Y) and on the overall XYZ color signal (i.e., tPSNR-XYZ). The tPSNR measure is a metric for HDR material that involves color conversion to the CIE XYZ space and averages two transfer functions, ST.2084 and Philips, in the calculation of PSNR.

Figure 4 summarizes the simulation results, where each number represents the tPSNR value averaged over all the frames of each test sequence. First, comparing the subfigures, we can easily see that the performance difference is larger in tPSNR-Y (i.e., in Figure 4a) than in tPSNR-XYZ (i.e., in Figure 4b). This result is attributed to the objective function of luma control (i.e., Equation (1)), which concerns only the luminance perception error. Luma control optimizes this luminance error by modifying luma values and thereby directly enhances the luminance perception (i.e., tPSNR-Y) while indirectly enhancing the reconstructed color components (i.e., the

, in Equation (1)). Because of the weights,

in the objective function, the improvement of tPSNR-X (closely related to the red color) is usually larger than that of tPSNR-Z (closely related to the blue color), and these indirect improvements are much smaller than that of tPSNR-Y. This limited improvement in tPSNR-X and tPSNR-Z restricts the difference in tPSNR-XYZ performance among the tested luma control algorithms. One interesting point in the tPSNR-XYZ result given in Figure 4b is that the averaged result of the proposed scheme is better than that of the “Iterative” scheme (which is regarded as the optimal solution for luminance perception), although the gain is only 0.01 dB. This observation tells us that better luminance perception may not always provide better overall signal perception, which motivates a new direction of luma control research based on a better perception metric or incorporating chroma modifications.

Now, let us examine the tPSNR-Y performance of the proposed algorithm. The “No Control” case in the figure is the conventional signal conversion using the NCL Y’CbCr 4:2:0 format without luma control. Comparing the “Average” result of each luma control scheme with that of the “No Control” case, we can see that the proposed scheme improved tPSNR-Y by 14.79 dB on average, while the “Fast” and the “E-Fast” schemes achieved 10.73 and 13.49 dB, respectively. On a sequence basis, the proposed luma control scheme improved on the “Fast” and the “E-Fast” algorithms by up to 7.44 and 3.53 dB on the “Fireplace” and “Market” sequences, respectively. One important observation about these improvements is that there is no case of negative improvement: in terms of the per-sequence average, the proposed scheme is superior to the previous fast luma control algorithms in all test sequences. Compared with the “Iterative” case (i.e., the optimal case), the tPSNR-Y of the proposed scheme is lower by only 0.04 dB on average, indicating that the proposed scheme achieves nearly the same performance. Moreover, this performance comes without iterations, i.e., the amount of computation per pixel is fixed, which can be of great help to a hardware implementation of the proposed algorithm. Finally, let us look at the numbers inside the brackets in the “E-Fast” row of Figure 4a. They are the reduction factors,

, in (12), which were chosen as the best for each test sequence. As shown in the subfigure, the values differ considerably among the test sequences (i.e., a fixed value is hard to use), and the factor is known to have a great impact on the reconstruction quality (i.e., around 2 dB on average) [10]. On the other hand, in all the simulations summarized in Figure 4, we used the same values of

and

for the proposed algorithm in (22).

In order to identify the influence of the parameters

and

on the reconstruction quality of the proposed algorithm, we tested a set of parameters and summarized the results in

Table 2. The tested parameters are the equally spaced nine samples of the point

between

(i.e.,

and

) and

(i.e.,

and

), except for the end points. Based on the assumption that the true optimal to be approximated is uniformly distributed over the range bounded by the two end points, we can expect the best quality comes from the point near the center (i.e.,

) but slightly biased to the upper bound

(i.e.,

) considering the convexity of the EOTF.

However, as can be seen from the boldface figures (the best results) in Table 2, the best reconstruction qualities, including the best “average” quality, come mostly from points near the center but slightly biased toward the lower bound (i.e.,

), indicating that the lower bound is usually tighter than the upper bound. Moreover, the worst-case results (i.e., the underlined numbers in each row) mostly occur at points near the lower bounds, which seems reasonable given the convexity of the EOTF. Above all, Table 2 shows that the parameters

and

do not cause a significant change in the performance of the proposed algorithm. The performance difference between the best and the worst cases is only 0.12 dB on average, and the biggest difference is 0.33 dB for the “Market” sequence. Here, the average was calculated from the best-to-worst difference of each test sequence (i.e., the average of the per-sequence differences), not directly from the “Average” case of Table 2 (i.e., the difference of averages). This limited change in performance comes from the tightness of the derived bounds and enables us to use a fixed parameter near the center point of the two bounds.

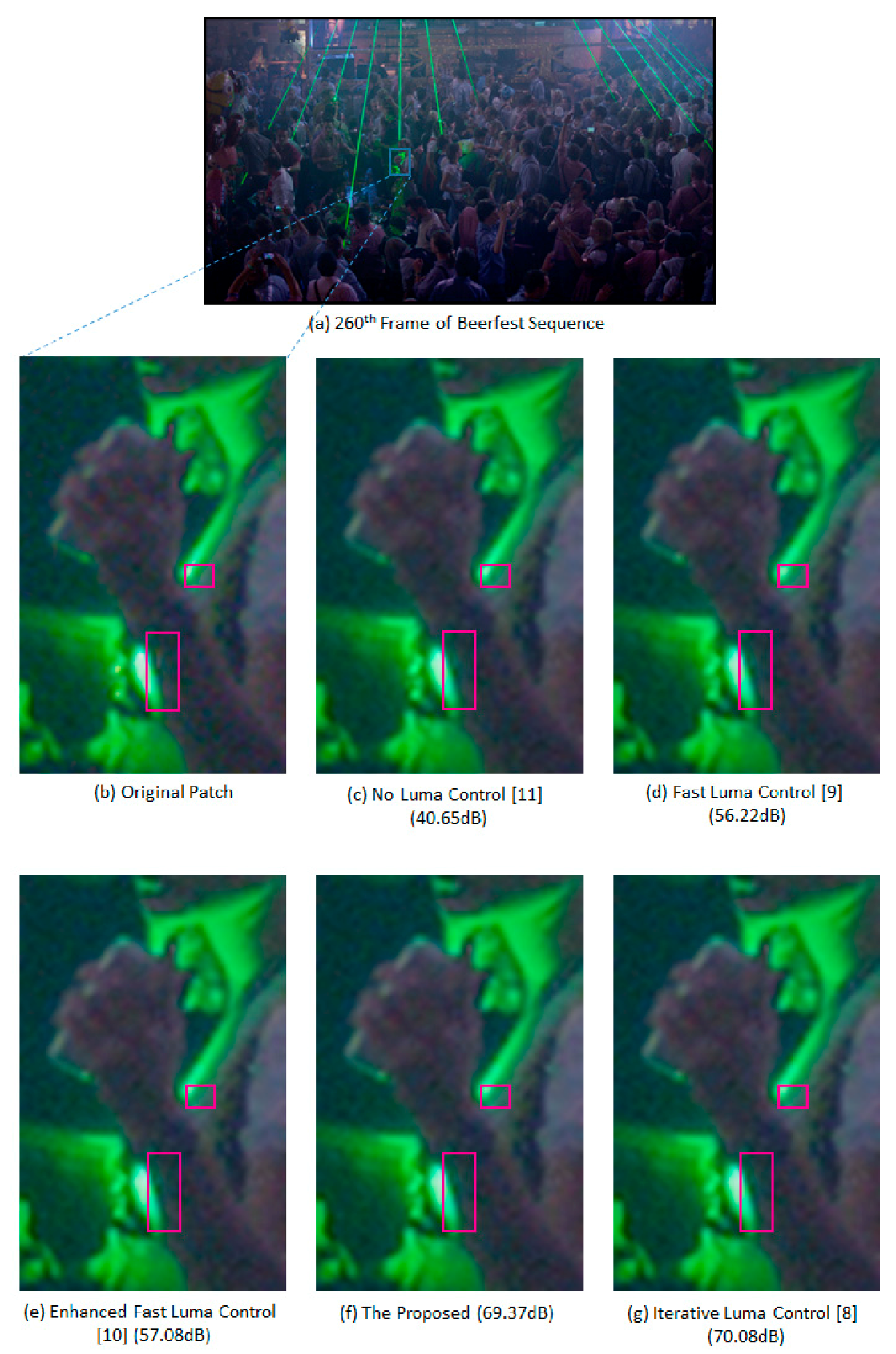

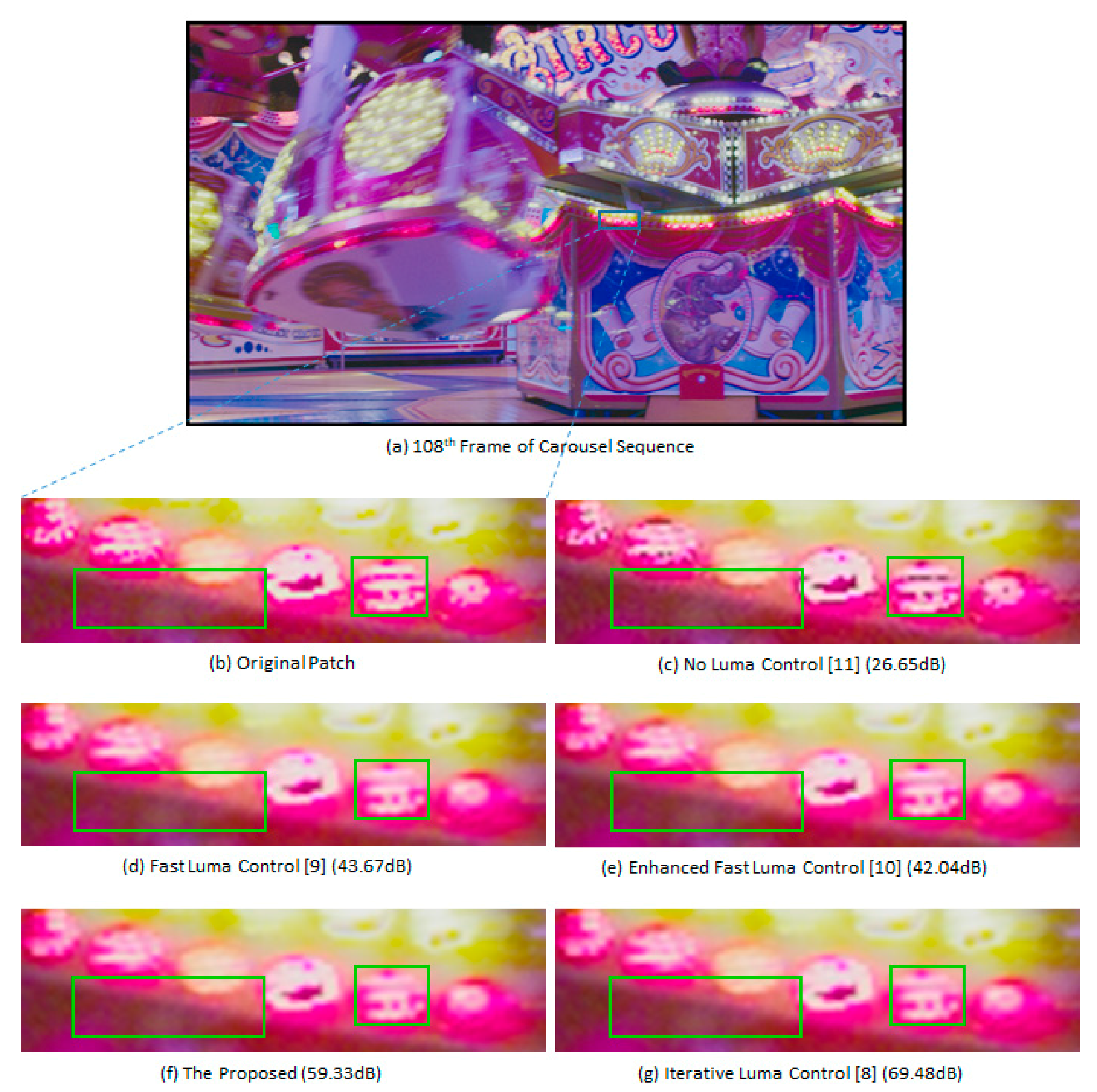

Finally, we show an example of the subjective quality comparison among the tested luma control algorithms. As noted in [5,8,9,10], the artifacts introduced by the NCL Y’CbCr 4:2:0 format appear as false contours around object boundaries and/or speckle noise in smooth areas. These artifacts become significant in bright regions of highly saturated colors and/or edge regions with large brightness changes. Hence, they are more easily seen in bright yellow, cyan, or magenta regions than in neutral color regions with low-to-medium brightness.

Figure 5 shows such artifacts and the quality enhancement by the luma control algorithms for the 108th frame of the test sequence “Carousel”, where we highlighted the differences among the luma control algorithms in two parts (see green boxes) of the cropped image patch shown in Figure 5a. Subfigures b and c of Figure 5 clearly show the subjective quality problem in the 4:2:0 media format of HDR/WCG video. We can observe that the texture inside the left green box became rougher and the bright pink dots in the right green box became dark after 4:2:0 conversion without luma control. Because of such large changes in brightness, the quality dropped to only 26.65 dB in tPSNR-Y, as shown in Figure 5c. On the other hand, subfigures d–g of Figure 5 show that the luma control schemes significantly ameliorate these quality problems and enhance the subjective quality. The rough texture and the dark pink dots disappeared in all luma control outputs, resulting in a better perception of the scene brightness. However, the problematic pink dots are not fully recovered and the rough textures look smoother than the original, illustrating that a video format with higher chroma resolution is desirable for better perception of HDR/WCG video.

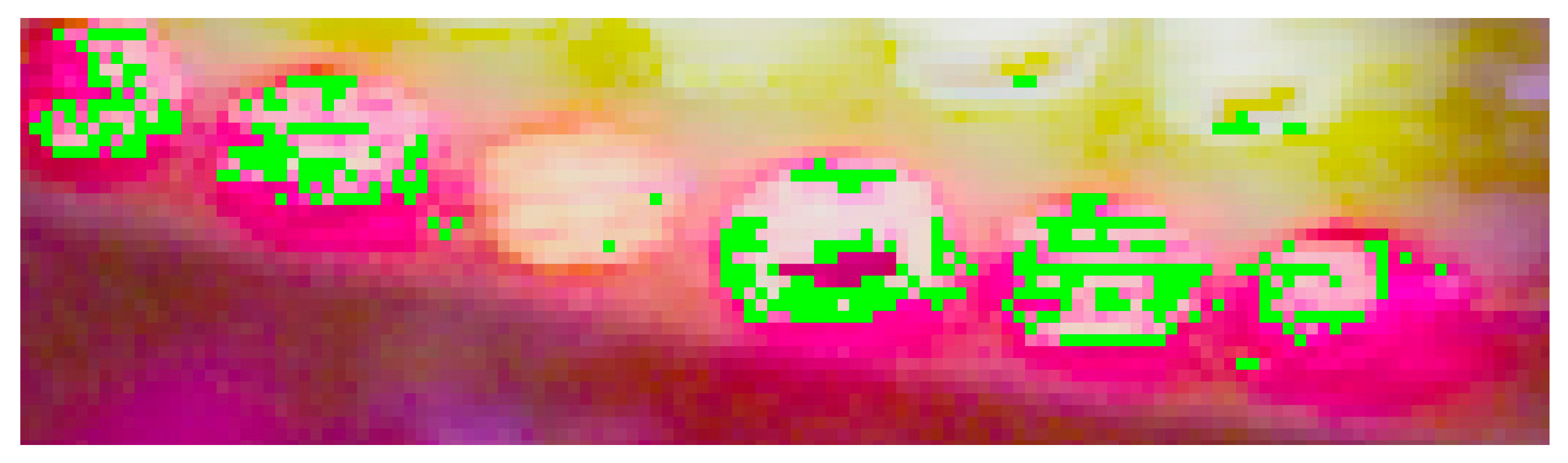

Although the tPSNR-Y values of subfigures d–g are quite different (from the 42.04 dB of the “Enhanced Fast Luma Control” scheme in (e) to the 69.48 dB of the “Iterative Luma Control” scheme in (g)), it is hard to observe any subjective difference among the luma control schemes. To identify which part of the image is responsible for such a large difference in tPSNR-Y, we compared the luminance error defined in (1) for the outputs of the 108th frame of the “Carousel” sequence produced by the fast and the proposed luma control schemes. After subtracting the per-pixel error of the proposed output from that of the fast luma control output, we sorted the differences to find the pixel locations with the largest error difference. Then, we marked the top 0.1% of locations with green pixels and cropped the same area as that compared in Figure 5.
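The marking procedure described above can be sketched as follows. This is a minimal illustration on flattened per-pixel error lists; the function name, the use of plain Python lists, and the assumption that the inputs are nonnegative error magnitudes are choices made here, not details taken from the paper.

```python
def top_fraction_mask(err_fast, err_proposed, fraction=0.001):
    """Mark the pixels where the fast scheme's error exceeds the proposed
    scheme's error by the largest amounts (top `fraction` of all pixels).

    Both inputs are flattened per-pixel error magnitudes of equal length.
    """
    diff = [f - p for f, p in zip(err_fast, err_proposed)]
    count = max(1, int(len(diff) * fraction))
    # Indices sorted by decreasing error difference.
    order = sorted(range(len(diff)), key=lambda i: diff[i], reverse=True)
    mask = [False] * len(diff)
    for i in order[:count]:
        mask[i] = True
    return mask


# Usage with synthetic data: a single pixel with a large error difference
# out of 1000 pixels is the one that gets marked.
fast = [0.0] * 1000
fast[123] = 5.0
proposed = [0.0] * 1000
mask = top_fraction_mask(fast, proposed)
print(sum(mask), mask.index(True))
```

The marked positions would then be painted green in the cropped image to produce a visualization of the kind described in the text.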

Figure 6 shows the area of the biggest quality difference between the two luma control schemes. We can observe that the green pixels are mostly concentrated in boundary areas with large brightness changes. Although these differences in a single frame are not clearly perceived as subjectively different in Figure 5, perturbations of this type of error across consecutive video frames may yield small flicker artifacts in such boundary areas, which can be very annoying to viewers. More examples of the subjective quality comparison can be found in Appendix A of this paper.

5. Conclusions

As a promising type of emerging immersive media, HDR/WCG video is becoming part of mainstream content production, providing far better quality ultra-high definition (UHD) media. The media format known as HDR10 or HDR10+ has been adopted in various fields of the media industry but can degrade luminance perception. Luma control is a method for coping with such luminance perception problems and is regarded as an essential preprocessing technology in HDR/WCG content production. In this paper, we proposed a fast and reliable luma control scheme that can significantly ameliorate the luminance perception error of HDR10/10+ format video and is highly suitable for hardware implementation.

The proposed algorithm employs a linear approximation of the EOTF using a straight line passing through two points on the EOTF curve, where one point is given by the original signal and the other is taken between a lower and an upper bound of the optimal luma value. This new linear approximation is the first contribution of this paper. Further, to make the approximation by the proposed straight line more accurate and robust, we derived two new bounds on the true optimal value based on the solution using a truncated Taylor series. This is the second contribution of this paper. Then, to demonstrate the feasibility of the proposed luma control scheme, we conducted an extensive comparison study with the previous representative luma control algorithms. The proposed linear approximation was found to provide nearly the same quality as the optimal solution, i.e., only 0.04 dB less than the iterative luma control scheme in tPSNR-Y on average. Moreover, this quality was obtained without iteration, which makes the scheme friendlier to hardware implementation. The proposed algorithm showed an impressive quality improvement over the previous fast luma control schemes, i.e., up to 7.44 dB in tPSNR-Y over the fast luma control scheme on the “Fireplace” sequence and up to 3.53 dB over the enhanced fast luma control algorithm on the “Market” sequence. Again, this quality improvement was obtained without any adaptive parameters, which were the cost of the quality enhancement of the enhanced fast luma control scheme over the fast luma control algorithm.

With these desirable features, the proposed scheme is expected to be highly useful for a practical production system of high-quality HDR/WCG video and to be more valuable due to tighter and more computation-efficient bounds on the optimal luma value.