Abstract

In order to meet the demand of the intelligent and efficient picking of fresh citrus fruit in a natural environment, a flexible and independent picking method of fresh citrus fruit based on picking pattern recognition was proposed. The convolutional attention (CA) mechanism was added in the YOLOv7 network model. This makes the model pay more attention to the citrus fruit region, reduces the interference of some redundant information in the background and feature maps, effectively improves the recognition accuracy of the YOLOv7 network model, and reduces the detection error of the hand region. According to the physical parameters of the citrus fruit and stem, an end-effector suitable for picking citrus fruit was designed, which effectively reduced the damage during the picking of citrus fruit. According to the actual distribution of citrus fruits in the natural environment, a citrus fruit-picking task planning model was established, so that the adaptability of the flexible handle can make up for the inaccuracy of the deep learning method to a certain extent when the end-effector picks fruits independently. Finally, on the basis of integrating the key components of the picking robot, a production test was carried out in a standard citrus orchard. The experimental results show that the success rate of the citrus-picking robot arm is 87.15%, and the success rate of picking in the natural field environment is 82.4%, which is better than the success rate of 80% of the market picking robot. In the picking experiment, the main reason for the unsuccessful positioning of citrus fruits is that the position of citrus fruits is beyond the picking range of the end-effector, and the motion parameters of the robot arm joint will produce errors, affecting the motion accuracy of the robot arm, leading to the failure of picking. This study can provide technical support for the exploration and application of the intelligent fruit-picking mode.

1. Introduction

Fruit is an indispensable part of the human diet and a significant source of income through cash crops [1,2]. Data from the National Bureau of Statistics indicate that over the past decade, China’s fruit cultivation area and output have been consistently increasing. By 2021, these figures were projected to reach 12,962 square kilometers and 296.11 million tons, respectively, placing China at the forefront globally [3,4]. Since 2004, advancements in agricultural mechanization have enabled the complete mechanization of traditional grain crops, such as rice and wheat, from sowing to harvesting. However, fruit harvesting remains largely dependent on manual labor, which is labor-intensive [5].

At present, researchers in China and abroad have carried out extensive research on the recognition of fruit on trees. The main approach is visual recognition: image acquisition equipment collects images, which are then classified and recognized. Traditional image recognition methods extract features from the image manually and then recognize the fruit. With the development of computer technology, image recognition based on machine learning and deep convolutional neural networks has been widely used in fruit detection.

(1) Traditional visual fruit recognition: RGB images captured by image acquisition devices contain major features such as color, shape, and texture. Early studies generally focused on extracting a single feature to identify fruit. Qian Jianping et al. introduced a mixed color space recognition method that adds a V value to RGB color, which effectively improved the recognition success rate of apples under different lighting conditions [6].

(2) Fruit recognition based on machine learning methods: the manual feature extraction methods above are time-consuming and laborious, and a large number of feature combination experiments are needed to obtain the best results. Machine learning has developed rapidly since the 1980s, and many scholars have combined machine learning theory with fruit recognition technology, mainly using SVM, Canny, HOG, and other methods for feature extraction. Wei et al. proposed a fruit recognition method using color features based on an improved Otsu threshold algorithm and the Ohta color feature model; a large number of tests showed that its recognition accuracy was above 95% [7]. Arefi et al. combined the RGB, HSI, and YIQ color spaces to extract the color features of ripe tomatoes, and the success rate of identifying tomato fruits in a greenhouse was about 96.36% [8].

(3) Fruit recognition based on convolutional neural networks: Ref. [9] used RGB and YCbCr to perform color segmentation on the acquired images and variable texture segmentation on adjacent pixels to detect and recognize mango; this method effectively reduces the influence of light on recognition. Ref. [10] optimized the structure of the convolution and pooling layers in a Faster R-CNN model, making recognition more accurate and faster. The ability to recognize fruits at different stages of maturity and against complex backgrounds, and to generalize to actual orchard environments, still needs to be improved through further algorithm optimization and model parameter adjustment. Compared with traditional recognition methods, these algorithms have higher detection accuracy and shorter processing times, and the average accuracy of detecting multiple fruits on a homemade fruit dataset reaches 91% [11].

In the early 1980s, Japanese scholars initiated research on fruit-picking robots, with significant contributions from experts such as Kondo and Kadota Charishi, whose findings have substantially influenced the development of this technology. Recently, Yukio Honda of Panasonic has spearheaded the development of a greenhouse tomato-picking robot capable of gently harvesting fruit and transporting it to a cart, as well as automatically replacing the harvest box. This innovation aims to reduce daytime labor by enabling night-time automated picking. Researchers at Kochi University of Technology, including Bachche et al. [12], have developed an articulated arm robot designed for harvesting bell peppers, particularly those grown in V-frame structures within greenhouses. Yaguchi et al. [13] from the University of Tokyo have utilized advanced technology, including an electric wheeled omni-directional chassis, a UR5 6-axis robotic arm, and a Sony PS4 binocular stereo camera, coupled with a gripper-twist end-effector, to create a tomato-picking robot. This robot is capable of operating in natural light within narrow greenhouse aisles. However, challenges remain, including issues with grip failure, damage to the fruit’s calyx, and difficulties in gripping multiple fruits simultaneously. Chen et al. [14] from the University of Tokyo have also made strides with their development of a humanoid two-armed tomato-picking robot. This robot features an omni-directional chassis and is equipped with Xtion and Carmine somatosensory cameras on its head and wrist, respectively. The picking arm boasts seven degrees of freedom and a scissor-type end-effector. While the robot has been tested for indoor hanging tomato-picking, it currently requires human command input to perform its tasks. Further enhancements are needed in the areas of identification, localization, and the picking process. In the United States, the Energid company [15] has received financial backing from the U.S. Department of Agriculture to develop a citrus-picking robot. This robot is mounted on a diesel truck and uses visual sensors and high-speed computers for information acquisition, processing, analysis, and control. According to the company’s website, the robot can pick each citrus fruit in 2–3 s, with an approximate picking rate of 50%.

Domestic research into fruit-picking robots commenced in the mid-1990s. At Jiangsu University, Refs. [16,17] developed a prototype mobile tomato-picking robot equipped with a wheeled chassis, a clamping and shearing end-effector, and a binocular vision system. This prototype, along with greenhouse fruit-picking and transporting robots, was designed to work in coordinated operations to achieve full automation in tomato-picking, on-site grading, collection, transportation, and unloading. Refs. [18,19] from the same university developed an apple-picking robot capable of automatically detecting and locating apples on trees using a support vector machine with radial basis functions. This robot is equipped with a five-degree-of-freedom PRRRP-type robotic arm to autonomously perform the harvesting task. Researchers at Nanjing Agricultural University [20] have developed an intelligent mobile fruit-picking robot designed for low and dense planting type orchards. Outdoor picking experiments have been conducted using apple fruit trees, demonstrating the robot’s capabilities in autonomous navigation, robotic arm motion control, fruit identification and localization, end-effector fruit grasping, and fruit boxing. At the National Agricultural Intelligent Equipment Engineering Technology Research Center, Feng Qingchun and colleagues [21] have developed a tomato-picking robot for hanging line cultivation. This robot features a rail-type mobile lifting platform, a four-degree-of-freedom articulated robotic arm, and a line laser vision system. It uses a CCD camera and laser vertical scanning for fruit identification and localization. Experiments showed that the robot could pick a single tomato fruit in about 24 s, with a picking success rate of 83.9% under strong light and 79.4% under low light. The team in Ref. [22] has developed a kiwifruit-picking robot that uses a right-angled coordinate robotic arm to pick the fruit from underneath. The average time to pick a single fruit is 11.61 s, with a success rate of 91.7%. Refs. [23,24] proposed a two-armed tomato-picking robot, equipped with two three-degree-of-freedom robotic arms and two different types of end-effectors. These arms can cooperate to harvest tomatoes, thereby improving the harvesting efficiency [25,26,27].

In order to solve the above problems, and in order to meet the demand of the intelligent and efficient picking of fresh citrus fruit in natural environment, a flexible and independent picking method of fresh citrus fruit based on picking pattern recognition was proposed. The CA mechanism was added in the YOLOv7 network model. This makes the model pay more attention to the citrus fruit region, reduces the interference of some redundant information in the background and feature maps, effectively improves the recognition accuracy of the YOLOv7 network model, and reduces the detection error of the hand region. According to the physical parameters of the citrus fruit and stem, an end-effector suitable for picking citrus fruit was designed, which effectively reduced the damage during the picking of citrus fruit. According to the actual distribution of citrus fruits in the natural environment, a citrus fruit-picking task planning model was established, so that the adaptability of the flexible handle could make up for the inaccuracy of the deep learning method to some extent when the end-effector picked fruits independently. Finally, on the basis of integrating the key components of the picking robot, the key performance of the citrus-picking robot is verified and analyzed, which provides a reference for the research and development and application of orchard intelligent picking equipment.

2. Related Work

2.1. Overview of Autonomous Gripping Systems

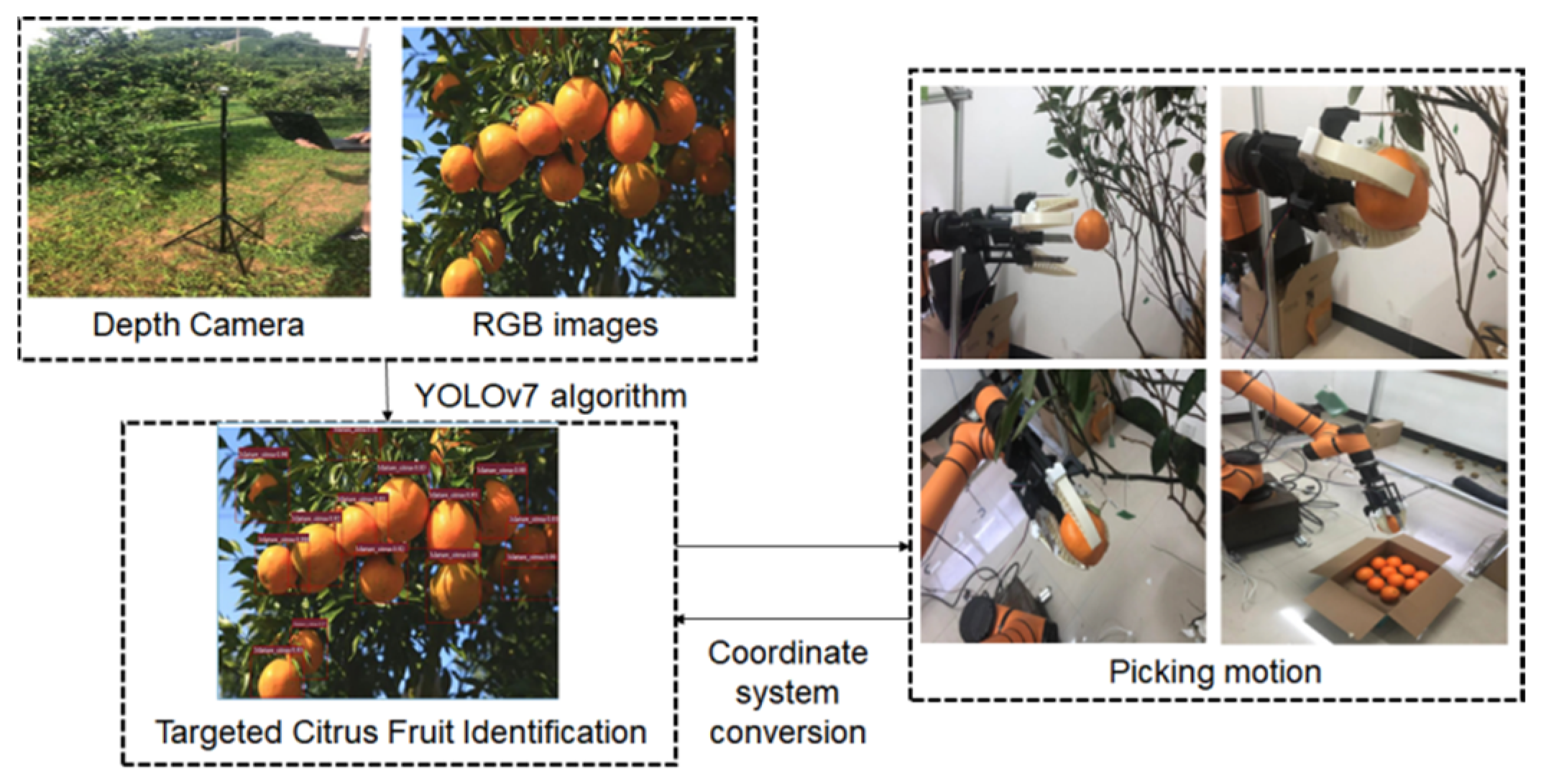

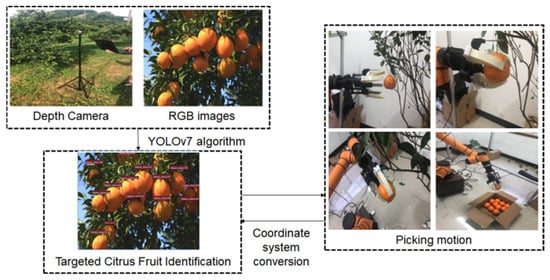

This study explores a flexible picking method for citrus fruits in a field environment; the grasping objects are citrus fruits in a natural environment, and the framework of the autonomous grasping system is shown in Figure 1. First, the depth camera acquires an RGB image of the object, and the image is fed to the YOLOv7 target detection algorithm, a deep neural network model, which predicts the grasping mode and grasping area of the object. Second, the kinematics of the picking robotic arm are analyzed with the ADAMS dynamics simulation software (2020a), and the MATLAB robotics toolbox (2020a) is used to simulate the arm and obtain its joint motion parameters, from which the kinematic information of the arm is derived. Third, the flexible gripper is controlled according to the predicted grasping mode, and the grasping area and grasping angle are converted from the image coordinate system to the camera coordinate system and then to the robotic arm coordinate system. Finally, the robotic arm is controlled to cooperate with the flexible gripper to complete the adaptive grasp. The depth camera is an Intel D435i, and the industrial robot is an Aubo 6-axis robot arm; their specifications are listed in Table 1.

Figure 1. Framework for an autonomous picking system for citrus-picking robots.

Table 1. Specifications for depth cameras and industrial robots.

2.2. Visual Recognition and Localization of Citrus Fruits

2.2.1. Recognition of Citrus Fruits Based on YOLOv7

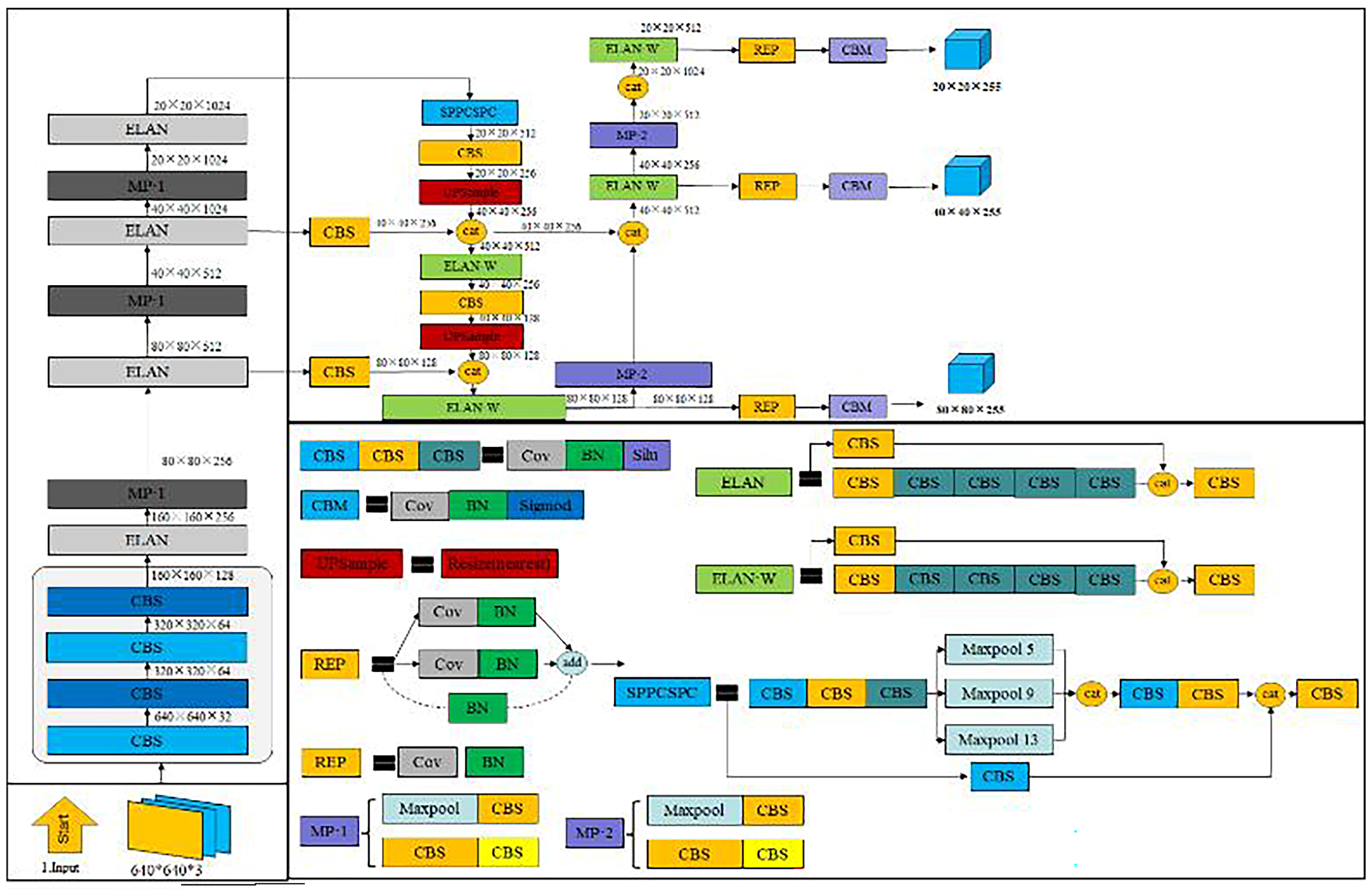

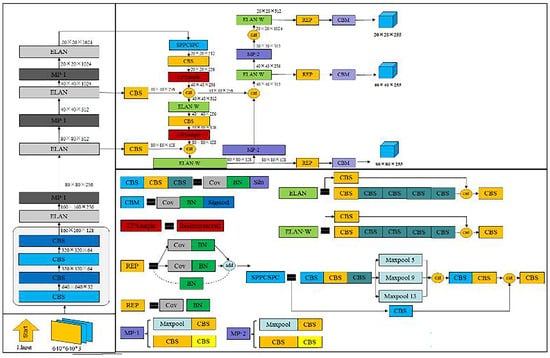

The YOLOv7 algorithm model is a typical one-stage target detection algorithm, which identifies and locates targets in a single pass and runs fast enough for real-time detection. Within the YOLO series, YOLOv7 is reported to be faster and more accurate than earlier models. The model contains three main parts: the backbone, neck, and head networks. The overall architecture of YOLOv7 is shown in Figure 2. In the YOLOv7 pipeline, the image is first pre-processed with data augmentation and fed into the backbone feature extraction network, which extracts the basic features of the image. These features are then fused in the neck layer to obtain features at three scales: large, medium, and small. Finally, the fused features are passed to the head detection module, which outputs the detection results.

Figure 2. Improvement of YOLOv7 algorithm structure.

When people observe a scene, they do not attend to every detail in the visual field. Instead, they focus on a region of interest, observing it more carefully and ignoring interfering information elsewhere; this is generally referred to as attention focus. It lets people obtain the main information about the object of attention and improves the efficiency of visual information processing. An attention mechanism works in the same way: it tells the neural network where to focus, so that important features are extracted more effectively, useless information is suppressed, and false and missed detections are reduced. The principle is to assign different weights within a network layer, giving higher weights to the feature information that deserves attention. These weights are not set manually; they are updated by gradient descent during training, which lets the network learn the most suitable weight assignment. Therefore, to make the YOLOv7 network model attend better to the features of the citrus fruit region, the CA mechanism is added so that the model focuses on citrus fruit features, suppresses useless features, and extracts features more effectively.

(1) Attention mechanism module

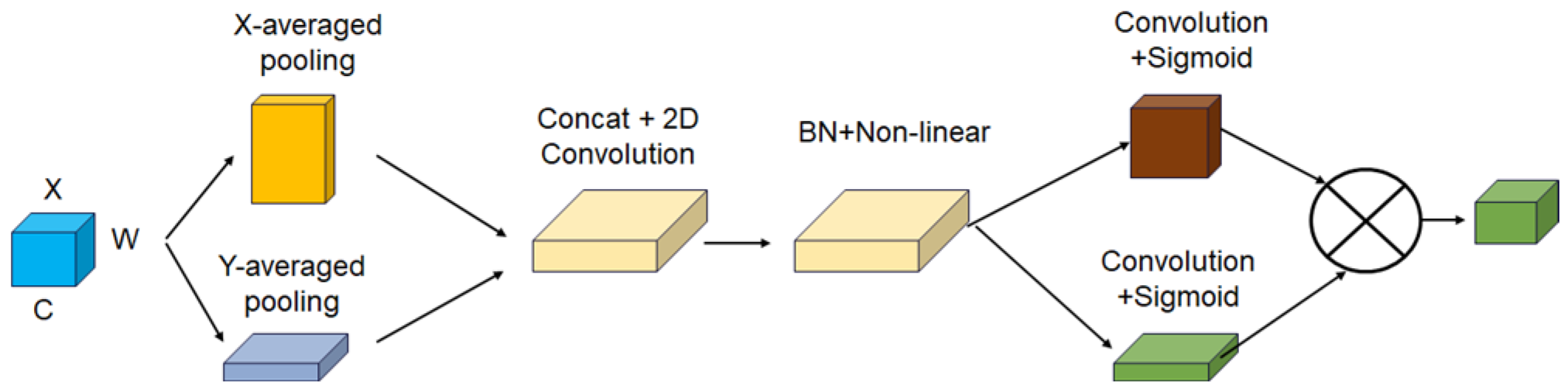

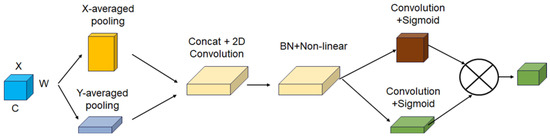

Citrus fruit is yellow, and the main background of its growing environment is green. Although the contrast is high, the natural growing environment of citrus fruit is complex, and the fruit is often surrounded by dense leaves. To improve the recognition accuracy of the network while keeping it simple, the convolutional attention (CA) mechanism is introduced into the YOLOv7 network. The CA mechanism is a lightweight attention method that embeds coordinate (position) information into channel attention, so that it encodes both the relationship between channels and the location of the features. The effectiveness of the CA mechanism in reducing background interference during fruit detection is evaluated mainly by detection precision (the proportion of detections that are true fruits), recall (the proportion of actual fruits that are detected), F1 score (the harmonic mean of precision and recall), average precision (precision averaged over recall levels as the confidence threshold varies), and background interference rate (the rate of false detections on the background). Together, these indicators measure the ability of the attention mechanism to filter out background noise and improve performance in the actual detection task. The introduction of the CA mechanism enhances the ability of the detection network to learn target features. The structure of the CA mechanism is shown in Figure 3.

Figure 3. Structure of CA mechanism.
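The precision, recall, and F1 indicators listed above can be illustrated with a minimal Python sketch. It assumes the standard definitions in terms of true positives (TP), false positives (FP), and false negatives (FN); the function name `detection_metrics` and the example counts are hypothetical and are not data from this study.

```python
def detection_metrics(tp: int, fp: int, fn: int) -> dict:
    """Precision, recall and F1 from detection counts (standard definitions)."""
    # Precision: share of detections that are real fruits.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    # Recall: share of real fruits that were detected.
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    # F1: harmonic mean of precision and recall.
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


# Hypothetical counts, for illustration only.
m = detection_metrics(tp=90, fp=10, fn=30)
print(m)
```

Average precision additionally requires the full precision-recall curve over confidence thresholds and is therefore not covered by this sketch.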

First, the CA mechanism encodes the input feature map along the horizontal and vertical coordinates. Average pooling along each direction yields feature maps that embed the height and width coordinate information:

$$z_c^h(h) = \frac{1}{W}\sum_{0 \le i < W} x_c(h, i)$$

$$z_c^w(w) = \frac{1}{H}\sum_{0 \le j < H} x_c(j, w)$$

where $x_c$ is the input feature of channel $c$; $z_c^h(h)$ is the output at height $h$; $z_c^w(w)$ is the output at width $w$; $H$ is the height of the input feature map; and $W$ is its width.

After this, the two average-pooled feature maps are concatenated, transformed and batch normalized (denoted $F_1$), and passed through the nonlinear activation function $\delta$ to obtain the feature map $f$:

$$f = \delta\left(F_1\left(\left[z^h, z^w\right]\right)\right)$$

The feature map $f$ is then split along the vertical and horizontal directions into $f^h$ and $f^w$, which are transformed by $F_h$ and $F_w$ to the same number of channels as the input. The attention weights $g^h$ and $g^w$ in the height and width directions are obtained with the sigmoid activation function $\sigma$:

$$g^h = \sigma\left(F_h\left(f^h\right)\right), \qquad g^w = \sigma\left(F_w\left(f^w\right)\right)$$

Finally, the input feature is multiplied by the attention weights $g^h$ and $g^w$ in the height and width directions to output the attention-weighted feature map $y_c(i,j)$:

$$y_c(i,j) = x_c(i,j) \times g_c^h(i) \times g_c^w(j)$$
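The pooling and re-weighting steps described above can be sketched in pure Python for a single channel. This is a simplified illustration, not the network layer used in this study: the learned transforms between pooling and the sigmoid are replaced by the identity (an assumption made only to keep the example short), and the function name `directional_attention` is hypothetical.

```python
import math


def sigmoid(v: float) -> float:
    return 1.0 / (1.0 + math.exp(-v))


def directional_attention(x):
    """x: one channel given as a list of H rows, each holding W floats."""
    H, W = len(x), len(x[0])
    # Average pooling along the width gives one value per row (height index).
    z_h = [sum(row) / W for row in x]
    # Average pooling along the height gives one value per column (width index).
    z_w = [sum(x[i][j] for i in range(H)) / H for j in range(W)]
    # ASSUMPTION: the learned transforms are replaced by the identity here.
    g_h = [sigmoid(v) for v in z_h]
    g_w = [sigmoid(v) for v in z_w]
    # Re-weight every position by its row weight and its column weight.
    y = [[x[i][j] * g_h[i] * g_w[j] for j in range(W)] for i in range(H)]
    return z_h, z_w, y


x = [[1.0, 3.0], [0.0, 0.0]]
z_h, z_w, y = directional_attention(x)
print(z_h, z_w, y)
```

Because each weight depends on a whole row or column, a response at one position is scaled according to where it lies in the feature map, which is the property the CA mechanism relies on to emphasize the fruit region.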

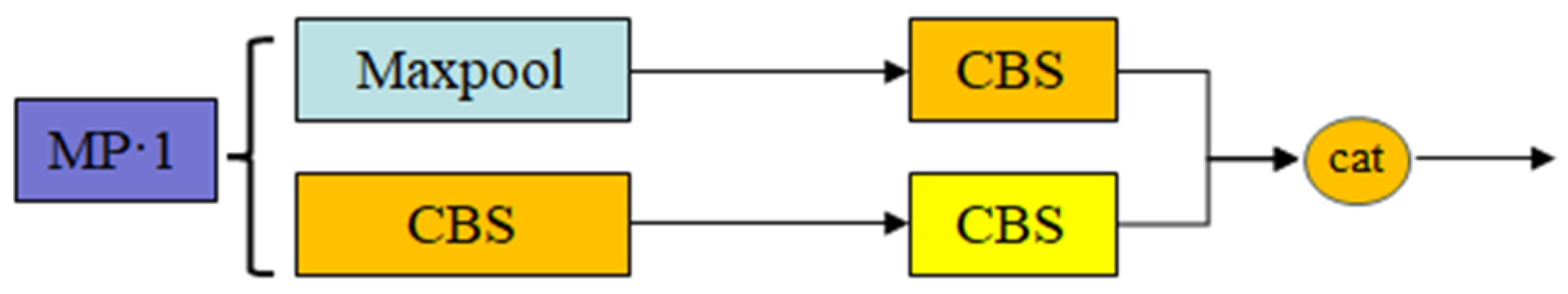

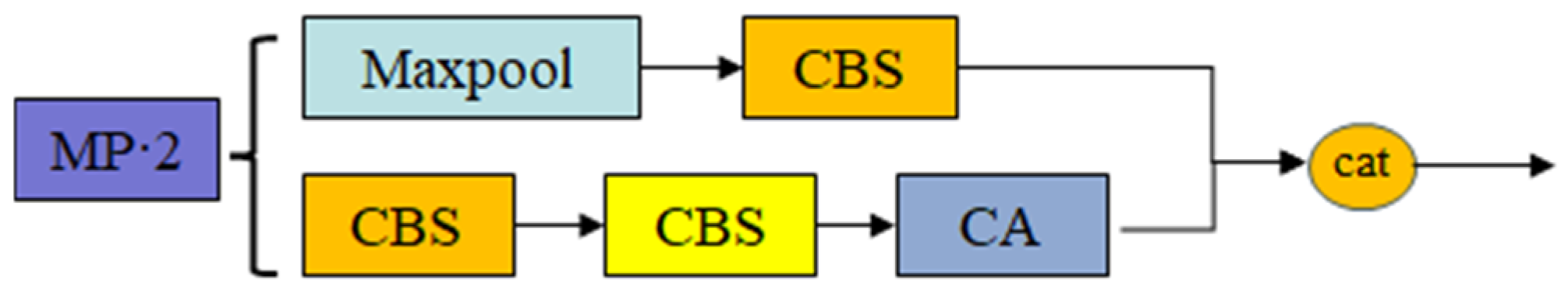

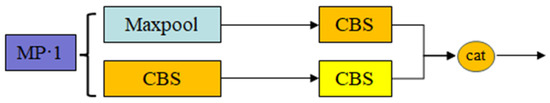

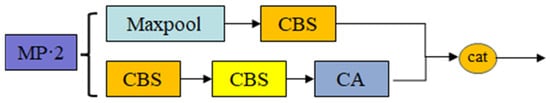

The structure of the MP-2 module before improvement is shown in Figure 4, and the structure of the improved MP-2-CA module is shown in Figure 5. The MP-2 module has the same structure as the MP-1 module; only the number of channels differs. It is divided into two branches: one contains a max pooling layer and a CBS convolutional layer, and the other contains two CBS convolutional layers. The features extracted by the two branches are fused by the Cat (concatenation) operation to improve the feature extraction ability of the network. The max pooling layer enlarges the receptive field of the current feature layer, and fusing its output with the convolved features improves the robustness of the network. Because max pooling discards a large amount of feature information, the CA mechanism is added to the branch with two CBS convolutional layers.

Figure 4. MP-1 module structure.

Figure 5. MP-2 module structure.

(2) Backbone network improvement

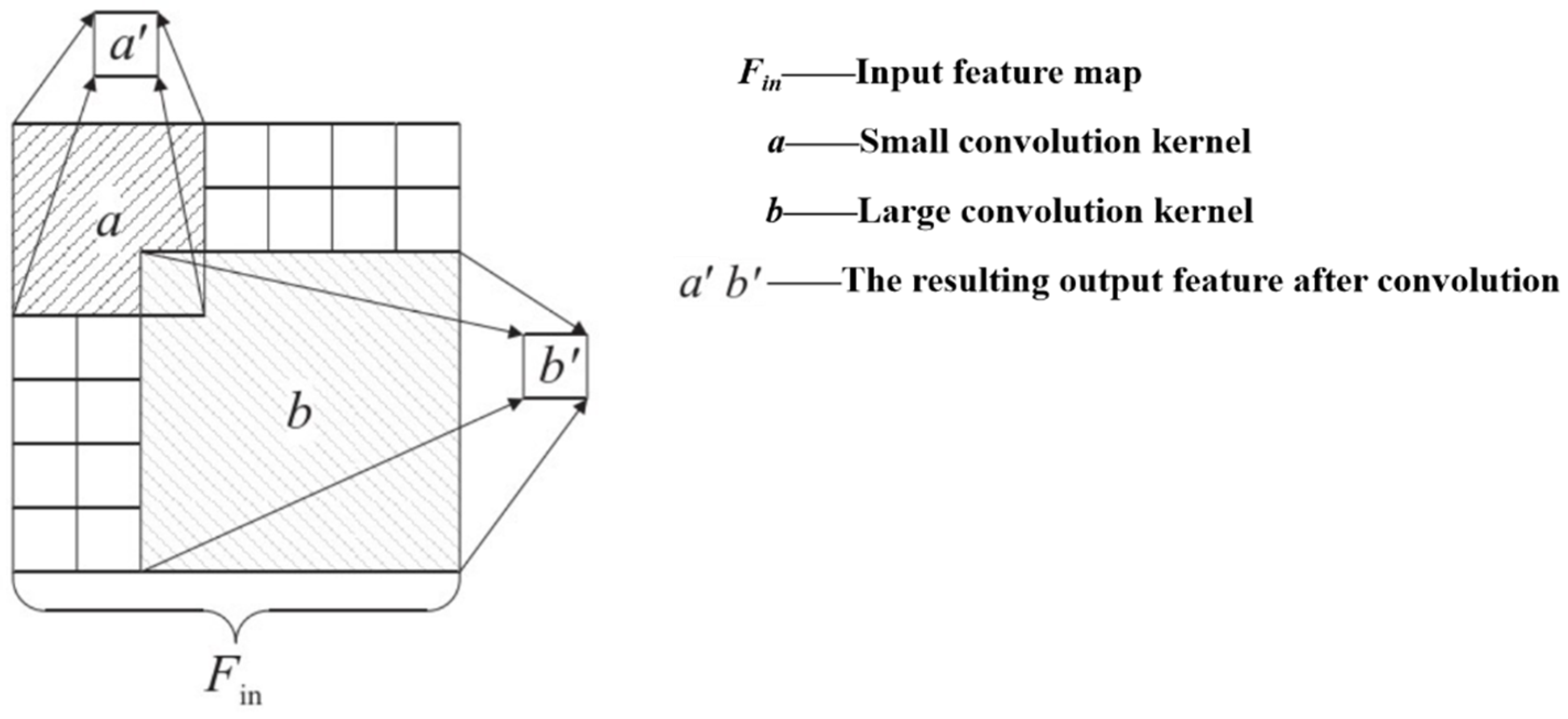

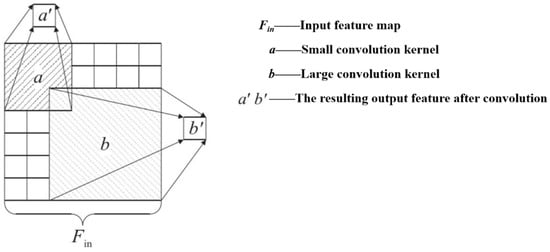

In citrus fruit detection, small-target fruits have low resolution and carry little visual information, so distinguishing features are difficult to extract and detection is easily disturbed by environmental factors. In addition, when small-target fruits lie close to a large target, they tend to cluster, and small fruits adjacent to the clustered area cannot be distinguished. Because targets with little feature information differ only slightly from one another, these small differences must be mined to improve the classification ability of the model. The Focal Next Block improves fine-grained local interaction, enabling the network to produce stronger fine-grained features and amplify the small differences among targets. Small-target detection is also difficult because small targets cover a small area of the image. This is especially true in the YOLOv7 model, which stacks small convolutional layers to obtain a larger receptive field; as a result, the features contain less context information and express semantic information weakly. Compared with small convolution kernels, a large convolution kernel brings more parameters for the same number of layers, but it obtains a larger effective receptive field and increases the context information in the output, as shown in Figure 6.

Figure 6. Output of convolutional kernels of different sizes.
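The trade-off between kernel size, parameter count, and receptive field can be made concrete with a short Python sketch. It computes the theoretical receptive field of a stack of convolutions (an upper bound on the effective receptive field discussed above) and the number of convolution weights. The layer configurations and the channel count of 64 are illustrative assumptions, not the settings of the improved backbone.

```python
def receptive_field(kernel_sizes, strides=None):
    """Theoretical receptive field of stacked convolutions, in input pixels."""
    strides = strides or [1] * len(kernel_sizes)
    rf, jump = 1, 1
    for k, s in zip(kernel_sizes, strides):
        rf += (k - 1) * jump  # each layer widens the field by (k - 1) input steps
        jump *= s             # a stride multiplies the step of all later layers
    return rf


def conv_weights(kernel_sizes, channels):
    """Weight count, assuming equal input/output channels and no bias."""
    return sum(k * k * channels * channels for k in kernel_sizes)


small = [3, 3, 3]  # three stacked 3x3 layers
large = [7, 7, 7]  # three stacked 7x7 layers
for name, ks in (("3x3", small), ("7x7", large)):
    print(name, receptive_field(ks), conv_weights(ks, channels=64))
```

With the same number of layers, the 7×7 stack covers a 19-pixel field instead of 7 pixels, at roughly 5.4 times the weight count, which matches the qualitative statement in the text.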

2.2.2. Citrus Fruit Positioning Analysis

In this study, after the improved model is trained on the recognition dataset, it is applied to the color images in the localization dataset acquired with the RealSense D435i to obtain the 2D pixel coordinates of the center of mass of each fruit. The depth value is then read at the corresponding pixel in the depth map, which is aligned with the color image, to complete the 3D spatial localization of the fruit.

The trained improved YOLOv7 orchard citrus recognition model extracts the image features of the color image and computes the bounding box $(x_i, y_i, w_i, h_i)$ of each fruit, where $x_i$ and $y_i$ are the X and Y coordinates of the upper left corner of the bounding box, and $w_i$ and $h_i$ are its width and height, respectively. The pixel coordinates $(cx_i, cy_i)$ of the center point of each bounding box are obtained as follows:

$$cx_i = x_i + \frac{w_i}{2}, \qquad cy_i = y_i + \frac{h_i}{2}$$
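As a minimal sketch, the center of a bounding box given by its upper left corner, width, and height can be computed as below. The function name `bbox_center` and the example box are hypothetical.

```python
def bbox_center(x: float, y: float, w: float, h: float) -> tuple:
    """Center pixel of a box given by its upper left corner (x, y) and size (w, h)."""
    return x + w / 2.0, y + h / 2.0


# Hypothetical box: upper left corner (100, 50), 40 px wide, 60 px high.
cx, cy = bbox_center(100, 50, 40, 60)
print(cx, cy)
```

In practice the center would be rounded to integer pixel indices before it is used to look up the aligned depth map.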

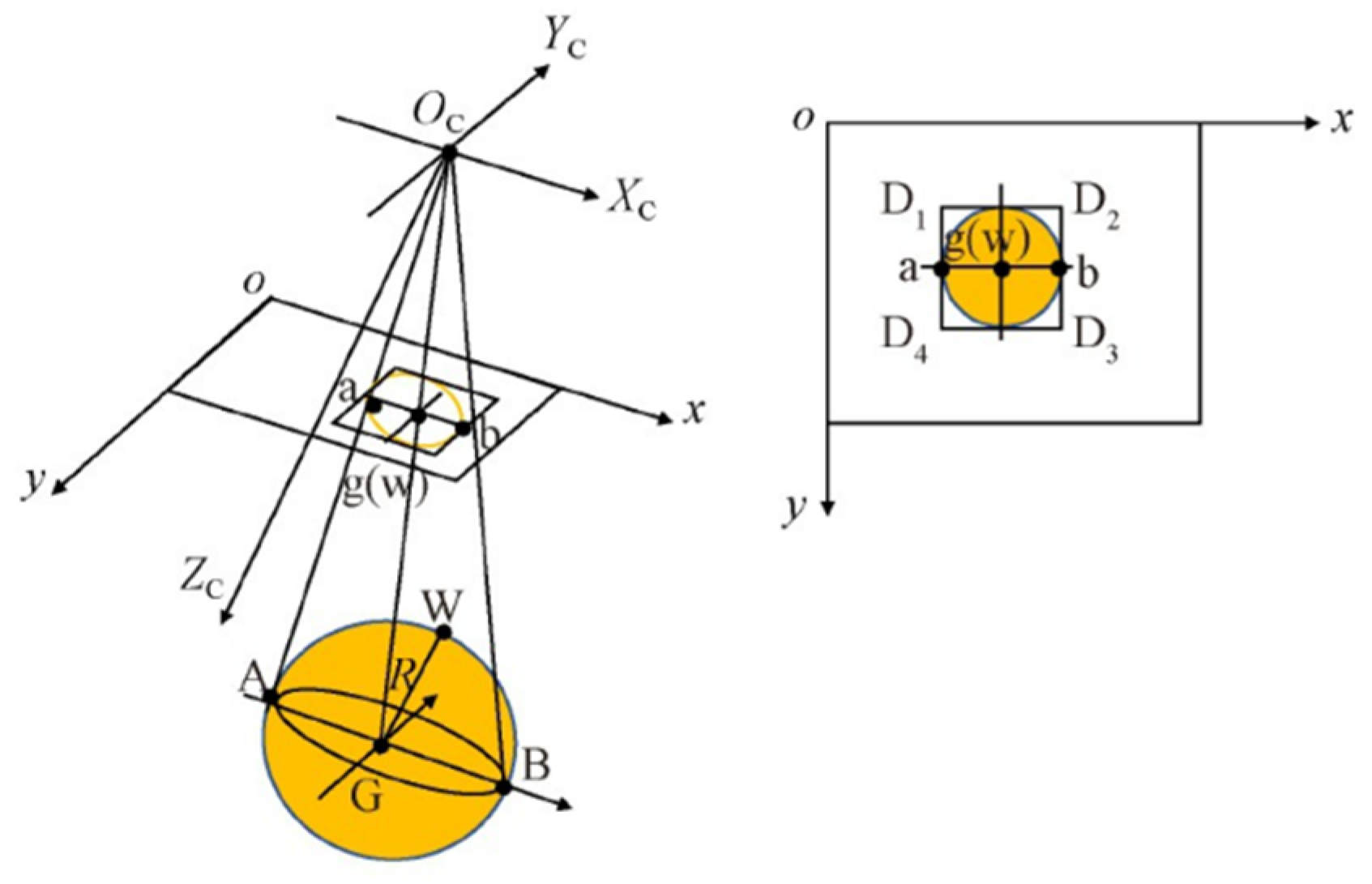

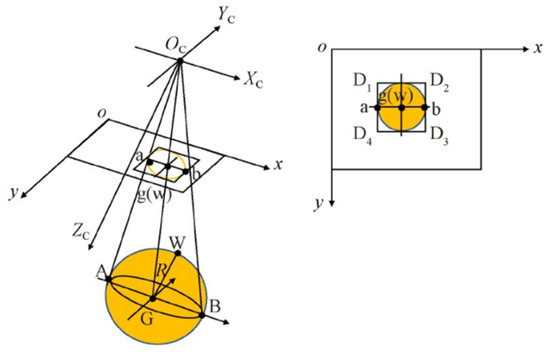

The citrus fruit can be approximated as a sphere, as shown in Figure 7. G is the center of mass of the fruit, AB is a diameter of the fruit, and W is the point on the outer surface of the fruit closest to the projection center of the camera. The camera coordinate system is OCXCYCZC, and OXY is the pixel coordinate system. The circle with center G and diameter AB is parallel to the imaging plane, and GW is parallel to the ZC axis. According to the projection principle, the projections of A, B, G, and W in Figure 7 are a, b, g, and w, respectively. The projection of the fruit diameter AB in the pixel coordinate system is ab, and the projections g and w coincide at the center of the circle with diameter ab, denoted g(w). The RealSense camera provides the depth value of the surface point W, expressed as ZW; the actual depth reference value of the geometric center can then be expressed as ZW + R, where R is the radius of the fruit.

Figure 7. Schematic diagram of fruit imaging.

Based on the center pixel of the bounding box, the depth value $Z_W$ of the corresponding spatial point in the camera coordinate system is calculated from the acquired depth data as follows:

$$Z_W = \mathrm{Depth}[cx_i][cy_i] \times scale$$

where $scale$ is the depth scale factor and $\mathrm{Depth}[cx_i][cy_i]$ is the depth value at the pixel coordinates $(cx_i, cy_i)$ of the center point of the bounding box. Then, the radius $R$ of the citrus fruit is determined as follows:

$$R = \frac{Z_W \left(x_b - x_a\right)}{2 f_x}$$

where $f_x$ is the camera intrinsic parameter obtained by calibration, and $x_a$ and $x_b$ are the horizontal pixel coordinates of points A and B, respectively. The localization results were evaluated with the mean absolute error (MAE) and mean absolute percentage error (MAPE) between the algorithm outputs and the reference values, namely the two-dimensional position of the center of mass of the fruit and the actual depth of its geometric center:

$$\mathrm{MAE} = \frac{1}{n}\sum_{i=1}^{n}\left|y_i^{pred} - y_i^{true}\right|$$

$$\mathrm{MAPE} = \frac{100\%}{n}\sum_{i=1}^{n}\left|\frac{y_i^{pred} - y_i^{true}}{y_i^{true}}\right|$$

where $n$ is the total number of samples, $y_i^{pred}$ is the algorithm prediction, and $y_i^{true}$ is the actual measurement.
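The localization and evaluation steps above can be sketched in Python as follows. Two points are assumptions rather than statements of the method used in this study: the raw depth reading is multiplied by the scale factor (the convention of the RealSense SDK depth scale), and the radius follows the pinhole model with the diameter AB taken at depth ZW. All function names and numerical values are hypothetical.

```python
def surface_depth(raw_depth: float, scale: float) -> float:
    """Depth Z_W of surface point W. ASSUMPTION: metric depth = raw value * scale."""
    return raw_depth * scale


def fruit_radius(z_w: float, x_a: float, x_b: float, f_x: float) -> float:
    """Radius from the pixel width of diameter AB. ASSUMPTION: pinhole model at depth Z_W."""
    return z_w * abs(x_b - x_a) / (2.0 * f_x)


def mae(pred, true) -> float:
    """Mean absolute error."""
    return sum(abs(p - t) for p, t in zip(pred, true)) / len(true)


def mape(pred, true) -> float:
    """Mean absolute percentage error, in percent."""
    return 100.0 * sum(abs((p - t) / t) for p, t in zip(pred, true)) / len(true)


# Hypothetical reading: raw depth 600 with scale 0.001 m/unit, 60 px wide fruit, f_x = 600 px.
z_w = surface_depth(600, 0.001)
r = fruit_radius(z_w, x_a=300, x_b=360, f_x=600.0)
center_depth = z_w + r  # depth reference of the geometric center, Z_W + R

# Hypothetical predicted vs. measured center depths in meters.
pred, true = [0.62, 0.58], [0.60, 0.60]
print(round(r, 4), round(center_depth, 4), round(mae(pred, true), 4), round(mape(pred, true), 2))
```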

2.3. Citrus-Picking Robot Control and Planning

To operate in the natural environment of citrus-picking, the citrus-picking robot must use action planning to control the end-effector to choose the best picking attitude to complete the picking of citrus fruit. For this, the integration of the robot’s system is needed in order to adapt to the picking conditions of the orchard.

2.3.1. End-Effector Control

The end-effector clamps and fixes the citrus fruit with its clamping fingers. To adapt to citrus fruits of different sizes and shapes, the stepping motor is first driven in reverse so that the clamping fingers open to their maximum before grasping. When the end-effector reaches the picking point, the stepping motor drives the lead screw forward, which moves the finger assembly downward and closes the clamping fingers until they adaptively contact the surface of the citrus fruit and envelop it. In this way, the fruit is grasped reliably with a small gripping force and a large friction force, without damage. After the fruit has been gripped, the end-effector is rotated so that the cutting blade fixed between the gripping fingers cuts the fruit stalk.

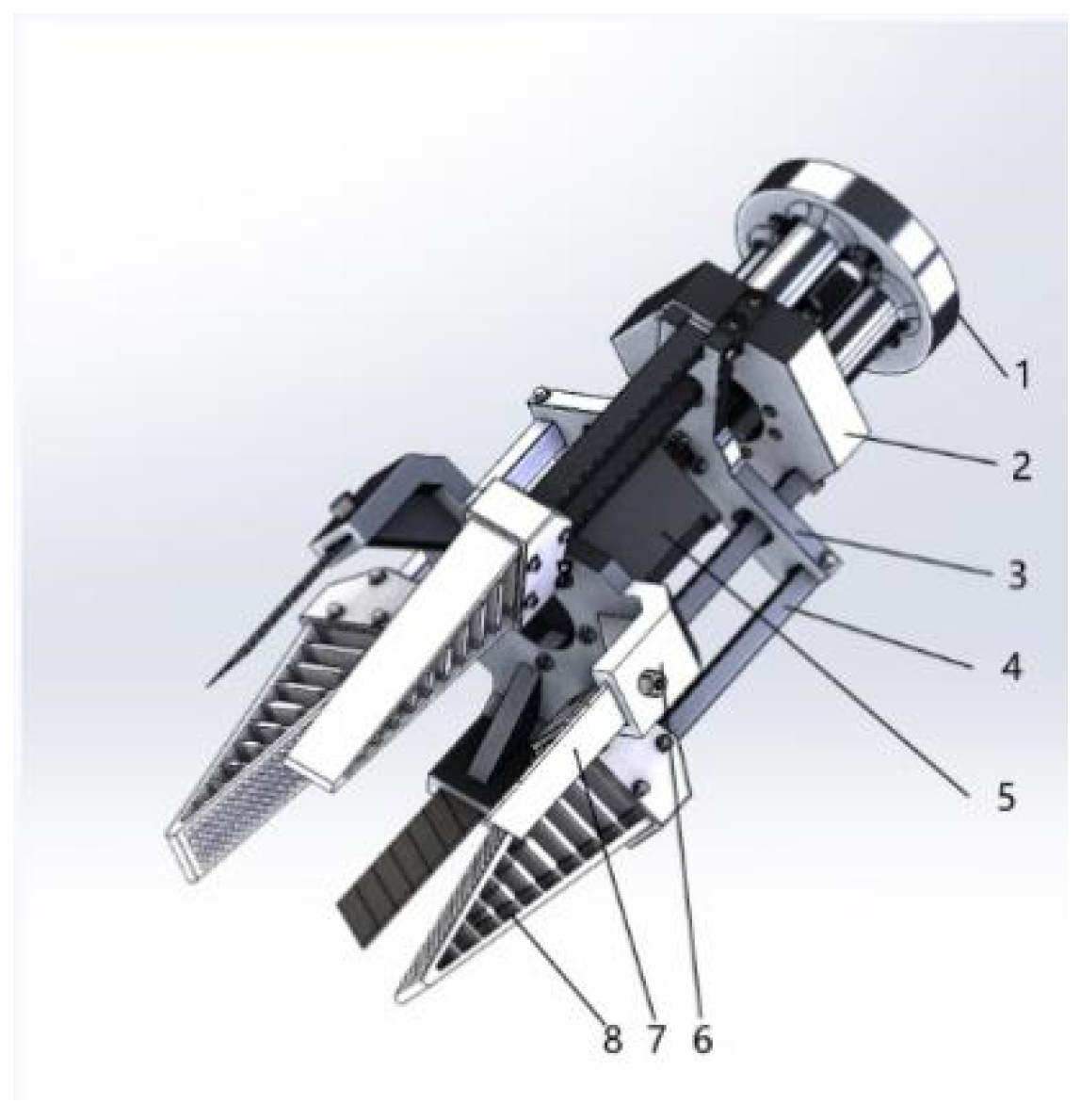

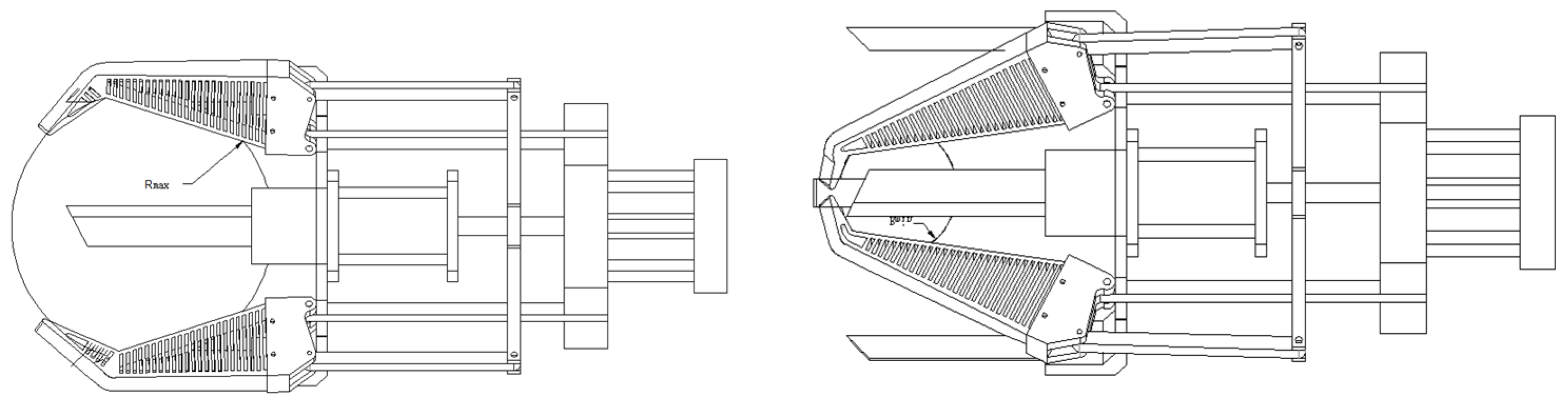

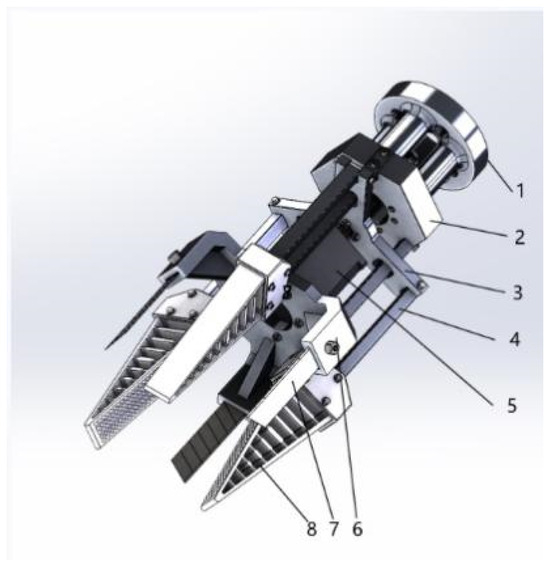

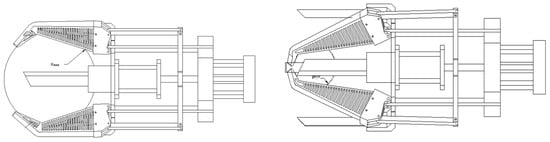

The end-effector developed in this paper, shown in Figure 8, is suited to picking spherical fruit. The specific picking process is as follows:

Figure 8. Flexible picking end-effector: 1. connecting chuck; 2. fixed bracket; 3. clamping fingertip; 4. moving connecting rod; 5. stepper motor; 6. blade holder; 7. cutting blade; 8. gripping finger.

- In order to reduce the damage of the end-effector to the citrus stems and leaves in the process of movement, the end-effector maintains a horizontal position with the target fruits for picking, and the gripping fingers are opened to the maximum angle under the drive of the stepping motor.

- After the picking robot arm moves the end-effector horizontally to the picking point, the gripping fingers are driven by the stepping motor to close until the target fruit is held firmly.

- The shaft movement of the picking robot arm drives the whole end-effector to rotate, so that the cutting blade of the end-effector completes the cutting of the fruit stalk and completes the separation of the fruit and the fruit stalk.

- After completing the separation between the fruit and the fruit stalk, the end-effector is moved to the fruit recovery basket by the picking robot arm to complete the picking process.

2.3.2. Structural Design of Citrus-Picking End-Effector

(1) Clamping finger form

The citrus-picking robot is often affected by branches and nearby fruits in the picking process, and the end-effector flexes its fingers when picking target fruits, resulting in damage to the target fruits. Fin-like flexible fingers can better adapt to the size of citrus fruits and can wrap the citrus surface without damaging the citrus skin during the picking process. Moreover, ABS materials can be used for 3D printing at a low cost. Therefore, in this study, a fin-imitation flexible finger was chosen as the clamping finger of the end-effector.

(2) Number of fingers

Because a citrus fruit is approximately spherical, it is generally held by two or more gripping fingers. Two fingers grip a spherical fruit stably only if they close near its equatorial plane, which is difficult to guarantee because the picking robot is subject to visual positioning errors and robot arm control errors. A larger number of fingers, in contrast, complicates the end-effector control system, reduces picking efficiency, and increases cost. Balancing picking accuracy against cost, this study adopts a three-finger end-effector, which tolerates the positioning and control errors of the picking robot while keeping the control system simple.

(3) Mathematical model of finger length in citrus-picking

Due to the great differences in the growth characteristics of citrus fruits, in order to determine the effective grasp of target fruits by grasping fingers, the transverse diameter range of mature citrus fruits was 48.25~80.85 mm, and the average transverse diameter of citrus fruits was 62.64 mm, according to the previous physical characteristic measurement of actual citrus fruits. The longitudinal diameter ranged from 41.83 to 72.62 mm, and the average longitudinal diameter of citrus fruit was 55.85 mm. The diameter of the citrus fruit stem ranged from 1.25 to 3.03 mm, and the average diameter of citrus fruit stem was 2.03 mm. Citrus fruit can be defined as a sphere. The holding finger of the end-effector holds and wraps the citrus fruit, as shown in Figure 9. In order to determine the length of the gripper finger, a gripper mechanism model based on the equatorial plane of citrus fruit was established with the transverse diameter range of citrus fruit as the index. In order to ensure the effective clamping of the gripping finger on the citrus fruit, when picking a large-diameter fruit, the clamping area of the gripping finger must be greater than 55%. According to the analysis, the length of the gripping finger is 70 mm.

Figure 9. Diagram of citrus-picking at different sizes and diameters.

2.3.3. Picking Robot Arm Motion Planning

After grasp detection yields the 2D coordinates $(u, v)$ of the center point of the gripping frame in the image coordinate system, these coordinates, the depth value $z_c$ detected by the depth camera, and the internal reference (intrinsic) matrix $K$ of the camera are converted by the camera driver software 3.0 into the 3D coordinates $(x_c, y_c, z_c)$ of the gripping point in the camera coordinate system. The conversion formula is as follows:

$$\begin{bmatrix} x_c \\ y_c \\ z_c \end{bmatrix} = z_c\, K^{-1} \begin{bmatrix} u \\ v \\ 1 \end{bmatrix}$$

Through the hand–eye calibration of the robotic arm and the depth camera, the rotation matrix $R$ and translation vector $T$ from the camera coordinate system to the robotic arm base coordinate system are obtained. The transform frame (TF) coordinate conversion software 2.0 in the robot operating system (ROS) is then used to obtain the three-dimensional coordinates $(x, y, z)$ of the gripping point in the robotic arm base coordinate system. The conversion formula is as follows:

$$\begin{bmatrix} x \\ y \\ z \end{bmatrix} = R \begin{bmatrix} x_c \\ y_c \\ z_c \end{bmatrix} + T$$
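The two coordinate conversions above can be sketched in Python. The sketch assumes the standard pinhole layout of the intrinsic matrix, with focal lengths `fx`, `fy` and principal point `(u0, v0)`; these names, the function names, and all numerical values (including the example `R` and `T`) are hypothetical and do not come from the calibration in this study.

```python
def pixel_to_camera(u, v, z_c, fx, fy, u0, v0):
    """Back-project pixel (u, v) with depth z_c into the camera frame (pinhole model)."""
    x_c = (u - u0) * z_c / fx
    y_c = (v - v0) * z_c / fy
    return (x_c, y_c, z_c)


def camera_to_base(p_cam, R, T):
    """Apply the hand-eye result: p_base = R * p_cam + T."""
    return tuple(sum(R[i][k] * p_cam[k] for k in range(3)) + T[i] for i in range(3))


# Hypothetical intrinsics and grasp point.
p_cam = pixel_to_camera(u=380, v=240, z_c=0.5, fx=600.0, fy=600.0, u0=320.0, v0=240.0)

# Hypothetical hand-eye calibration: 90 degree rotation about z plus an offset.
R = [[0.0, -1.0, 0.0],
     [1.0, 0.0, 0.0],
     [0.0, 0.0, 1.0]]
T = [0.1, 0.0, 0.2]
p_base = camera_to_base(p_cam, R, T)
print(p_cam, p_base)
```

In a ROS system these steps are normally delegated to the camera driver and the TF library, as described in the text; the sketch only shows the arithmetic they perform.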

The attitude r, p, and q values of the robotic arm represent the radian values of the rotation angle of the end of the robotic arm around the x, y, and z axes, respectively. According to the characteristics of the dexterous hand structure in this study, the end of the robotic arm is slightly tilted towards the front and a fixed tilt angle is maintained. That is, during the movement of the arm, the rotation of the end around the x, y direction is fixed, and its r and p value remains unchanged from the initial value. When the object is in a different position, or a different rotation is required, the detection of the grasping angle directly corresponds to the end of the arm around the vertical direction of the z-axis of the rotation angle; the grasping angle of the radian value is the attitude of the q value of the arm. The motion planning of the robotic arm is realized by the ROS software system 3.0, the path is planned in Moveit 2.0, and the simulated motion is realized in Gazebo 5.0. The motion control of the arm is realized by programming the end position x, y, z coordinates and the attitude r, p, q values.

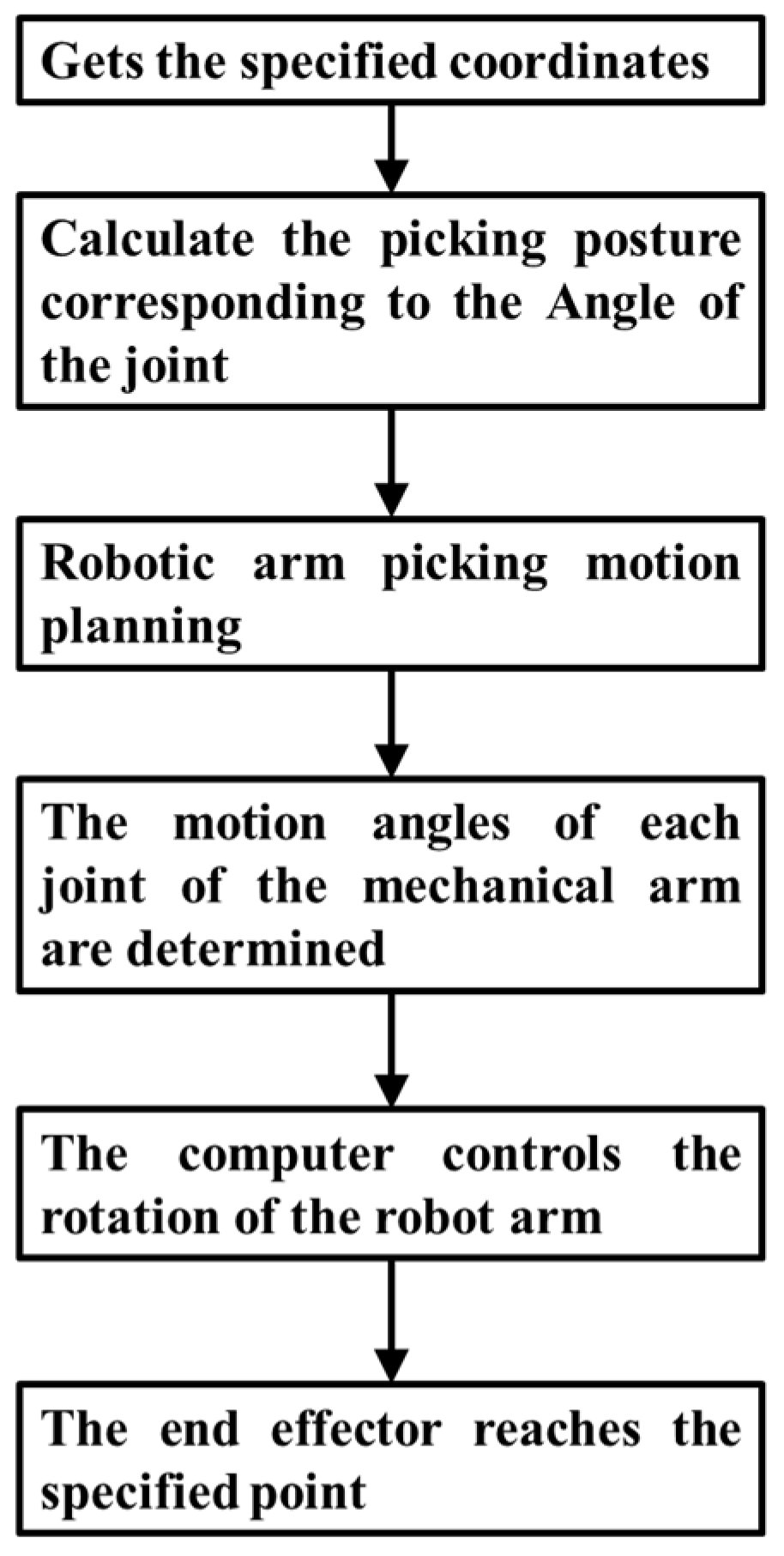

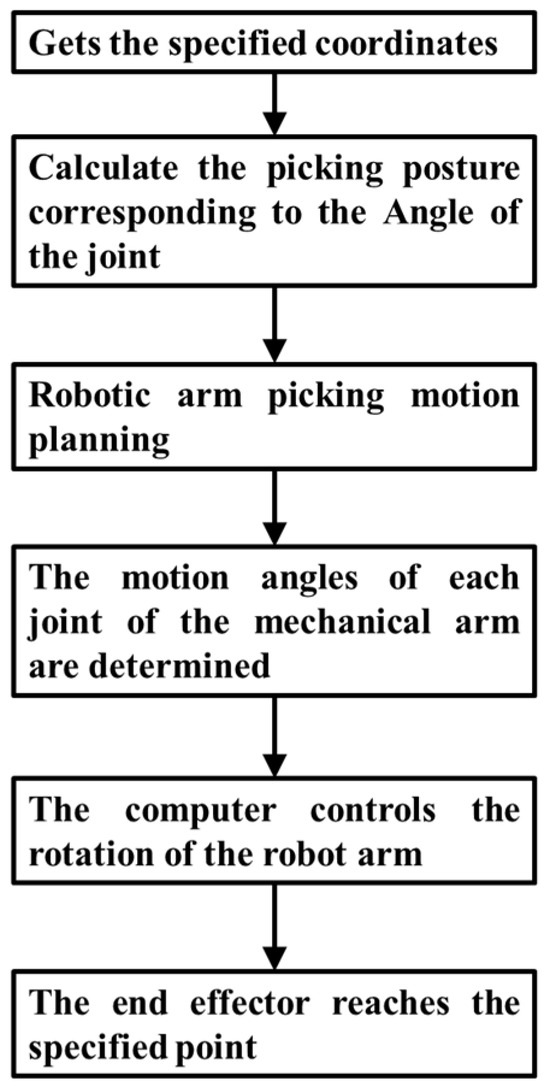

2.3.4. Design of Picking Robot Control System

During citrus picking, the picking robot is controlled autonomously by its vision and motion planning systems. The workflow is as follows. The robot vision system recognizes and locates citrus fruits and transmits the fruit position information to the computer. The computer plans a path from the received information and issues commands that drive the picking robot arm to the picking point. The end-effector stepper motor then opens and closes the end-effector to grip the fruit, and the end-effector is rotated so that the blade cuts the citrus stem. Finally, the picking robot arm moves to the fruit collection point, which completes the picking of a single fruit. The operation flowchart of the citrus-picking robot is shown in Figure 10.

Figure 10.

The control process of the manipulator.
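
The single-fruit workflow described above can be sketched as an ordered sequence of steps that stops at the first failure. This is an illustrative outline only: the step names and the `execute` callback are hypothetical stand-ins for the vision, planning, and motor control components, whose real interfaces are not given in the text.

```python
from enum import Enum, auto


class Step(Enum):
    RECOGNIZE_AND_LOCATE = auto()      # vision system finds the fruit
    PLAN_PATH = auto()                 # computer plans the arm path
    MOVE_TO_PICKING_POINT = auto()     # arm is driven to the picking point
    GRIP_FRUIT = auto()                # stepper motor closes the end-effector
    ROTATE_TO_CUT_STEM = auto()        # end-effector rotates, blade cuts stem
    MOVE_TO_COLLECTION_POINT = auto()  # arm carries fruit to collection point


PICK_SEQUENCE = list(Step)


def run_pick_cycle(execute):
    """Run one picking cycle.

    execute: callable taking a Step and returning True on success.
    Returns (completed_steps, failed_step); failed_step is None
    when every step succeeded.
    """
    completed = []
    for step in PICK_SEQUENCE:
        if not execute(step):
            return completed, step
        completed.append(step)
    return completed, None


if __name__ == "__main__":
    # Hypothetical run in which the fruit lies outside the arm's reach.
    done, failed = run_pick_cycle(
        lambda step: step is not Step.MOVE_TO_PICKING_POINT)
    print([s.name for s in done], failed)
```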

3. Experimental Results and Analysis

3.1. Improved YOLOv7 Model for Fruit Recognition Results

To demonstrate the effectiveness of the model improvement, detection networks built on different frameworks were compared; the detection results on the complete test set are shown in Table 2. The improved YOLOv7 model proposed in this paper achieves a detection precision P, recall R, harmonic mean F1, and average precision AP of 95.27%, 93.28%, 92.88%, and 96.55%, respectively. Compared with the classical Faster R-CNN and SSD detection models, the F1 value of the improved method is higher by 12.22 and 10.65 percentage points, and the average precision is higher by 2.69 and 3.11 percentage points, respectively. Compared with the original YOLOv7 model, the improved model also has some advantage in detection accuracy, and the average accuracy AP values are improved by 0.92 and 0.72 percentage points, respectively.
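
For reference, precision, recall, and F1 follow from detection counts as P = TP / (TP + FP), R = TP / (TP + FN), and F1 = 2PR / (P + R). The sketch below computes them from counts; the function name and the example counts are hypothetical and are not taken from Table 2.

```python
def detection_metrics(tp, fp, fn):
    """Return (precision, recall, f1) from detection counts.

    tp: true positives, fp: false positives, fn: false negatives.
    """
    if tp + fp == 0 or tp + fn == 0:
        raise ValueError("precision or recall is undefined for these counts")
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


if __name__ == "__main__":
    # Hypothetical counts, for illustration only.
    p, r, f1 = detection_metrics(tp=90, fp=10, fn=10)
    print(f"P={p:.2%} R={r:.2%} F1={f1:.2%}")
```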

Table 2.

Detection results of different detection network models.

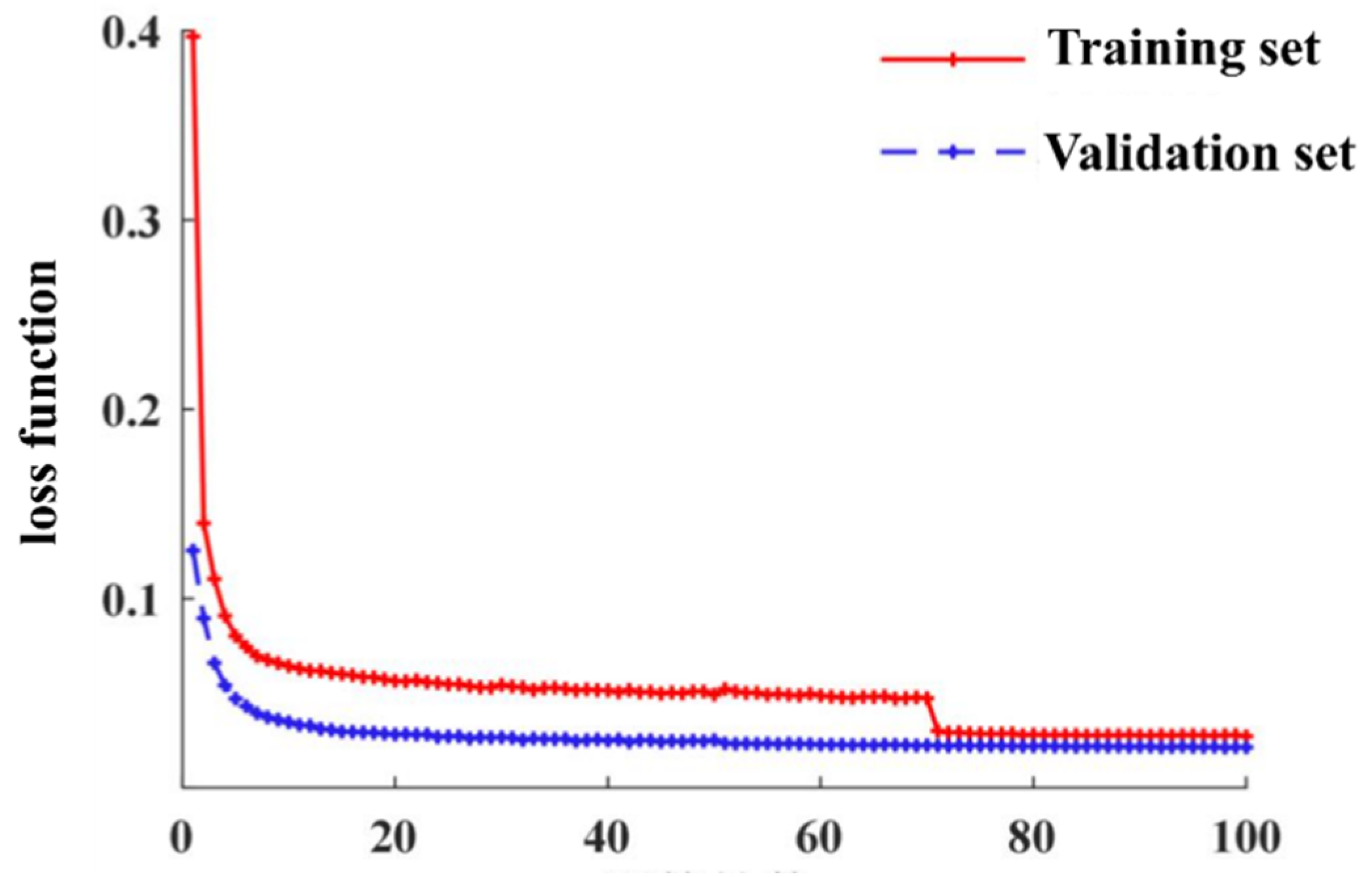

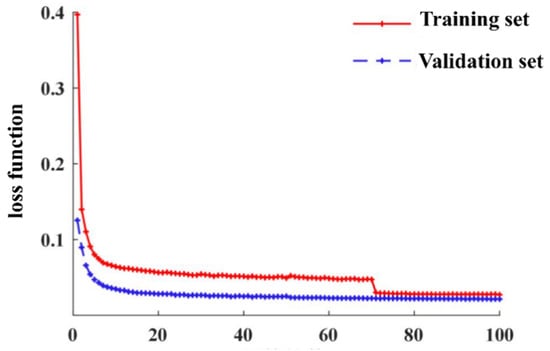

Considering detection accuracy, real-time performance, and model size together, the improved YOLOv7 model proposed in this paper shows the best overall detection performance. It can accurately obtain the position of fruit on the tree in real time and meets the requirements of the picking operation. The loss value l during model training is shown in Figure 11; the model converges at the 71st epoch.

Figure 11.

Loss value change curve during training.

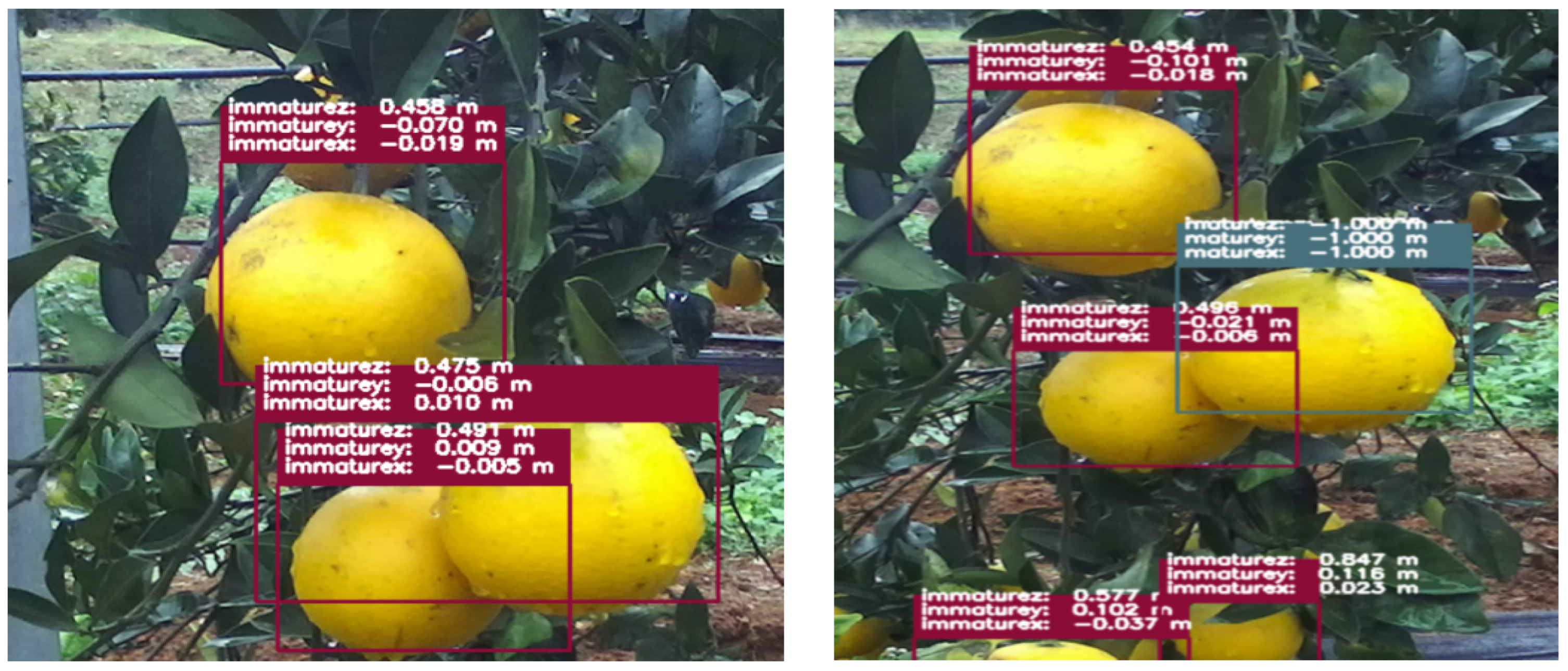

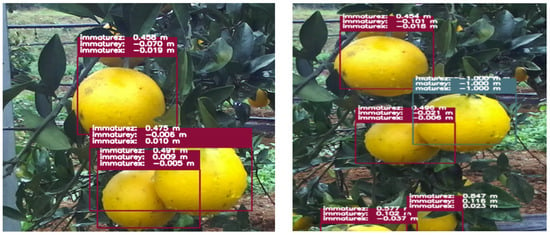

3.2. Citrus Fruit Positioning Results

Real-time detection of citrus fruits was carried out in the citrus orchard of the Hunan Academy of Agricultural Sciences. The depth camera was fixed on a tripod to test the recognition and localization of citrus fruits, which were fixed in a frame with a green background during the test. The improved YOLOv7 algorithm and the 3D spatial localization algorithm supplied with the depth camera were run on a computer with Ubuntu 16.04 in the ROS (robot operating system) environment. The depth camera identifies and localizes citrus fruits in real time, with the center point of the binocular camera set as the origin of the world coordinate system, the horizontal direction from the origin to the left camera as the X-axis, the horizontal direction from the origin to the right camera as the Z-axis, and the vertically upward direction from the origin as the Y-axis. The distance to each identified citrus fruit was measured with a tape measure to obtain the position of the target fruit relative to the ZED binocular depth camera, (Xa, Ya, Za), which was compared with the position returned by the camera; a total of 80 measurements were made. Relative to the manual measurement, the deviation of the position measured by the binocular depth camera was 1.02% in Xa, 1.57% in Ya, and 2.02% in Za. In addition, the recognition speed of citrus fruits was measured on the testbed shown in Figure 12 and was about 4.25 f/s.
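
One way to compute a per-axis deviation of this kind is the mean absolute relative difference between camera and tape-measure readings, expressed as a percentage. The text does not state the exact definition used, so the formula below is an assumption, and the function name and sample readings are hypothetical.

```python
def mean_relative_deviation(camera_values, manual_values):
    """Mean of |camera - manual| / |manual| over all pairs, in percent.

    Both sequences hold readings along one axis, in the same unit.
    """
    if not manual_values or len(camera_values) != len(manual_values):
        raise ValueError("need two non-empty sequences of equal length")
    total = sum(abs(c - m) / abs(m)
                for c, m in zip(camera_values, manual_values))
    return 100.0 * total / len(manual_values)


if __name__ == "__main__":
    # Hypothetical readings in metres along one axis, for illustration only.
    camera = [0.512, 0.798, 1.015]
    manual = [0.500, 0.800, 1.000]
    print(round(mean_relative_deviation(camera, manual), 2))
```

The function is applied once per axis to the paired readings for that axis.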

Figure 12.

Visual recognition testbed for citrus-picking robots.

3.3. Citrus Fruit-Picking Performance Test

From 30 November to 13 December 2023, a field verification experiment of a citrus-picking robot was carried out in the orchard of the Hunan Academy of Agricultural Sciences. The test time was from 1 PM to 6 PM, and different groups of tests were carried out on cloudy and sunny days, respectively. The test fruit tree variety was “Dafen 4”. As shown in Figure 13, 2000 naturally growing citrus fruits were selected for 10 groups of picking experiments. Groups 1–5 were tested on cloudy days and groups 6–10 were tested on sunny days.

Figure 13.

Field test environment of citrus-picking robot.

As can be seen from Table 3, the positioning success rate of the picking robotic arm for citrus fruits in the natural field environment is 87.15%, and the picking success rate is 82.4%. During the picking test, positioning and picking failed mainly for two reasons: some citrus fruits lay beyond the picking range of the end-effector, and errors in the motion parameters of the arm joints reduced the motion accuracy of the robotic arm.
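
A success rate of this kind is the number of successful attempts divided by the number of attempts, expressed as a percentage. The sketch below shows the arithmetic. The counts 1743 and 1648 are not taken from Table 3; they are back-calculated from the reported percentages under the assumption that both rates are relative to all 2000 test fruits.

```python
def success_rate(successes, attempts):
    """Return the success rate in percent."""
    if attempts <= 0 or not 0 <= successes <= attempts:
        raise ValueError("need 0 <= successes <= attempts and attempts > 0")
    return 100.0 * successes / attempts


if __name__ == "__main__":
    total_fruits = 2000  # number of fruits in the field test
    # Assumed counts, back-calculated from the reported rates.
    positioned = 1743
    picked = 1648
    print(round(success_rate(positioned, total_fruits), 2))
    print(round(success_rate(picked, total_fruits), 2))
```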

Table 3.

Field performance test results of picking robots.

4. Conclusions

A six-axis autonomous citrus-picking robot system was designed to meet the demand for efficient picking in typical standard orchards in China, and it enabled the independent picking of citrus fruits in a natural environment. An improved YOLOv7 dense citrus detection model was proposed, which makes full and effective use of the interaction characteristics of the global dimension while balancing computing speed against model complexity. According to the physical parameters of the citrus fruit and stem, an end-effector suitable for picking citrus fruit was designed, which effectively reduced the damage caused during picking. Finally, according to the actual distribution of citrus fruits in the natural environment, a citrus fruit-picking task planning model was established to ensure efficient and orderly picking by the end-effector. The detailed conclusions are as follows:

- (1) In this study, deep learning methods were applied to citrus picking. To address missed detections, false detections, and low confidence in citrus fruit region detection, the MobileNet network was used instead of the CSPDarknet network as the backbone of the YOLOv7 network model, and the CA mechanism was incorporated, which markedly improves the recognition of citrus fruits by the picking robot. The improved YOLOv7 model proposed in this paper has a detection precision P, recall R, harmonic mean F1, and average precision AP of 95.27%, 93.28%, 92.88%, and 96.55%, respectively.

- (2) A localization test of citrus fruits was carried out. Compared with the manually measured spatial position of the target citrus fruits, the deviation of the binocular depth camera was 1.02% in the X-axis, 1.57% in the Y-axis, and 2.02% in the Z-axis. In addition, the recognition speed of the citrus fruits was measured and was about 4.25 f/s.

- (3) The robot was tested for picking performance in the field. The picking robot first identifies and locates citrus fruits through binocular cameras, then controls the robotic arm to move to the picking point, and finally controls the end-effector to complete the picking task. The experimental results show that the positioning success rate of the citrus-picking robot arm is 87.15%, and the picking success rate in the natural field environment is 82.4%, which is better than the 80% success rate of picking robots on the market. To improve the performance of picking robots in the future, it will be necessary to integrate cutting-edge sensing technology, deep learning algorithms, and precision mechanical control, and to use intelligent optimization and adaptive learning with the help of the Internet of Things to achieve efficient and accurate automated picking.

Author Contributions

Software, X.X.; Formal analysis, Y.J.; Investigation, Y.W.; Writing—original draft, B.Z. All authors have read and agreed to the published version of the manuscript.

Funding

This research is funded by Hunan Intelligent Agricultural Machinery Equipment Innovation Research and Development Project (Z2023260002414).

Institutional Review Board Statement

Not applicable.

Data Availability Statement

Data are contained within the article.

Conflicts of Interest

The authors declare no conflict of interest.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).