Abstract

Dysarthric speech has several pathological characteristics, such as discontinuous pronunciation, uncontrolled volume, slow speech, explosive pronunciation, improper pauses, excessive nasal sounds, and air-flow noise during pronunciation, which differ from healthy speech. Automatic speech recognition (ASR) can be very helpful for speakers with dysarthria. Our research aims to provide a scoping review of ASR for dysarthric speech, covering papers in this field from 1990 to 2022. Our survey found that the development of research studies about the acoustic features and acoustic models of dysarthric speech is nearly synchronous. During the 2010s, deep learning technologies were widely applied to improve the performance of ASR systems. In the era of deep learning, many advanced methods (such as convolutional neural networks, deep neural networks, and recurrent neural networks) are being applied to design acoustic models and lexical and language models for dysarthric-speech-recognition tasks. Deep learning methods are also used to extract acoustic features from dysarthric speech. Additionally, this scoping review found that speaker-dependent problems seriously limit the generalizability of the acoustic model. The scarce speech data available fall short of the amount required to train such models.

1. Introduction

Speech is generated via the coordinated movements of articulators, which are regulated via neural activities in the speech-related functional areas of the brain [1]. Speech plays an important role in people’s daily communication and is a crucial medium for social interaction [2,3]. Dysarthria, a speech disorder, refers to neuromuscular impairments affecting the strength, speed, tone, steadiness, or accuracy of the speech-production muscles. Cortical lesions can cause a series of neuropathological characteristics in dysarthric speakers, and the severity of dysarthria varies depending on the location and severity of the neuropathies [1]. Unfortunately, dysarthric speakers often have difficulty pronouncing words correctly, making communication with others extremely challenging. This can not only cause significant physical and psychological distress to patients but may also impede their ability to participate fully in society [4]. Therefore, it is essential to investigate effective rehabilitation strategies for dysarthric speakers to improve their communication skills and help them resume productively participating in society.

Dysarthria, also known as motor dysarthria, is a speech disorder caused by neuropathy, muscle paralysis, decreased contractility, or uncoordinated movement related to speech production [5]. Generally, dysarthria is categorized as “mild, moderate and severe (or additionally, extremely severe)”. Speakers with severe dysarthria suffer a serious impairment of speech function and have difficulty recovering communication even with speech rehabilitation [6]. Dysarthria can also be categorized as “spastic, atonic, ataxic, hyperkinetic, hypokinetic, and mixed” [1]. Spastic dysarthria is the form most commonly seen in clinical practice. The speech pronounced by speakers with spastic dysarthria is discontinuous, and the volume of the speech is uncontrolled. The speakers’ bilateral upper motor neurons suffer damage. As the tension of the articulation muscles increases and the muscle strength decreases, the elevation of the soft palate decreases, and the lips and tongue show poor movement. Atonic dysarthria and ataxic dysarthria are less common. The speech of speakers with ataxic dysarthria has some defects, such as slow speed, abnormal volume, explosive pronunciation, improper pauses and excessive nasal phonemes. The speaker’s cerebellum suffers damage. The speaker’s articulatory muscles lack control over the direction and scale of movements. Therefore, the speaker’s tongue is easily raised, and the direction of alternating movement is poorly regulated. Speakers with atonic dysarthria suffer damage in their lower motor neurons, which leads to the paralysis of the pharyngeal muscles and soft palate. Due to impaired lip muscles, the speech of speakers with atonic dysarthria has some defects, such as poor pronunciation continuity, air-flow noise, and low volume [1].

Intensive therapy is an effective means of speech rehabilitation for dysarthric speakers [7,8,9,10]. Speech rehabilitation can be easily realized with the help of a computer-aided training system [11]. Moreover, the direct application of speech signal processing to improve the intelligibility of dysarthric speech is also an effective and powerful research route. In particular, automatic speech recognition (ASR) is now one of the most popular tools [12,13,14,15,16,17,18,19,20,21,22]. Dysarthric speakers are easily exhausted, are less able to express emotions, and are prone to drooling and dysphagia. As a result, collecting dysarthric speech is extremely difficult [23]. This difficulty leads to the scarcity of dysarthric speech data, which adds to the difficulty of implementing ASR for dysarthric speech. In addition, as the pathogeneses of their dysarthria differ, dysarthric speakers vary significantly in their pronunciation, which results in larger and more complex variations in the acoustic space of dysarthric speech compared with normal speech [24,25].

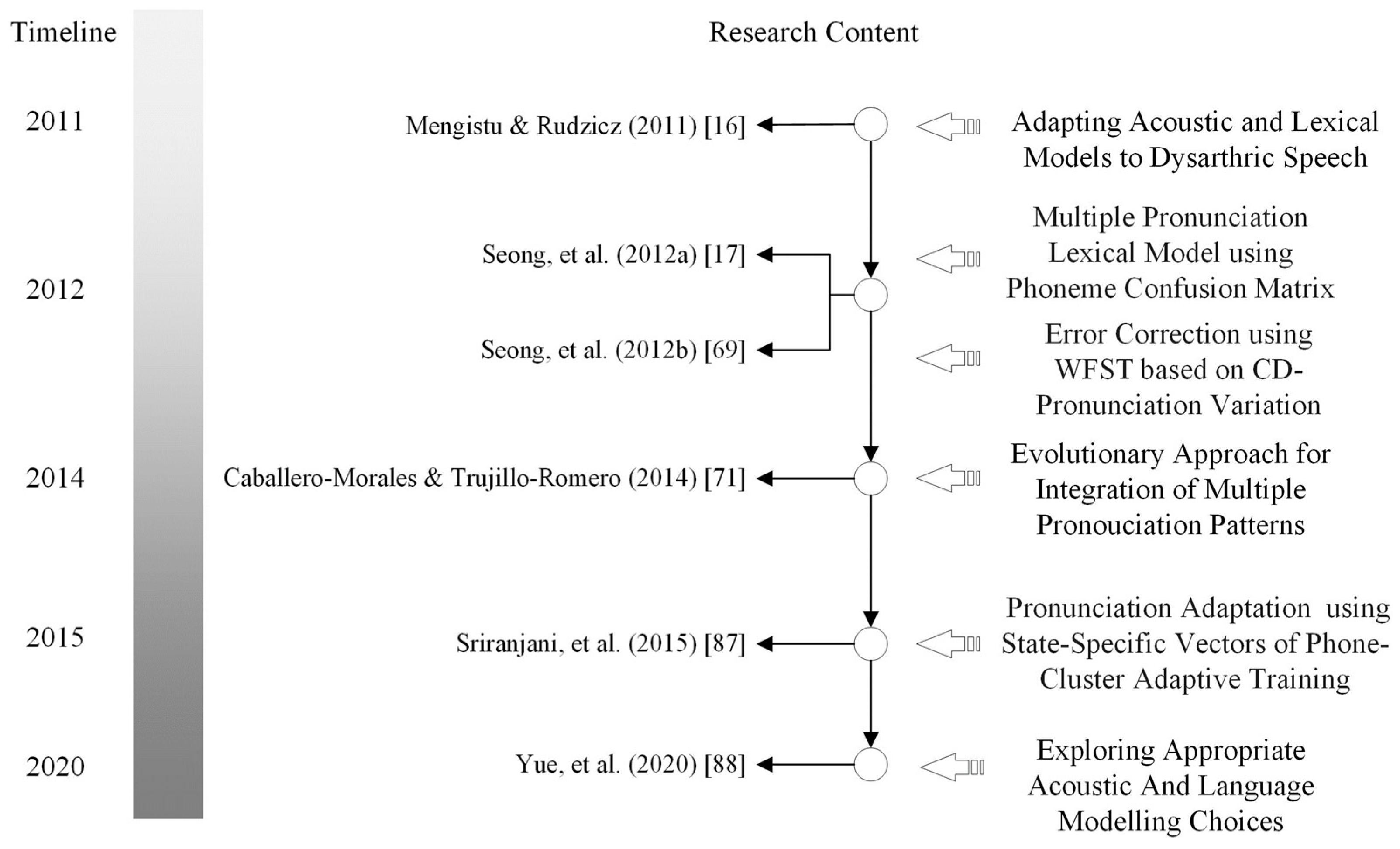

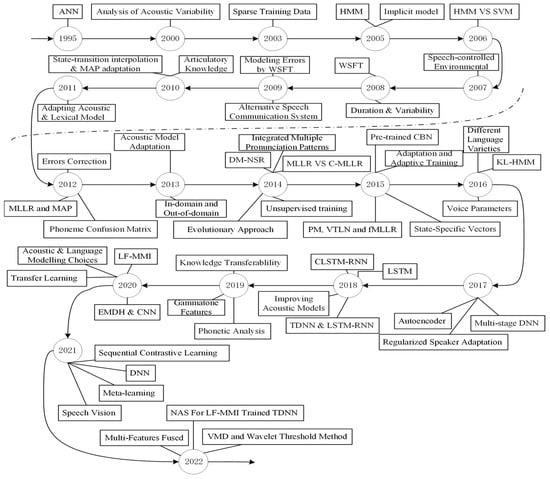

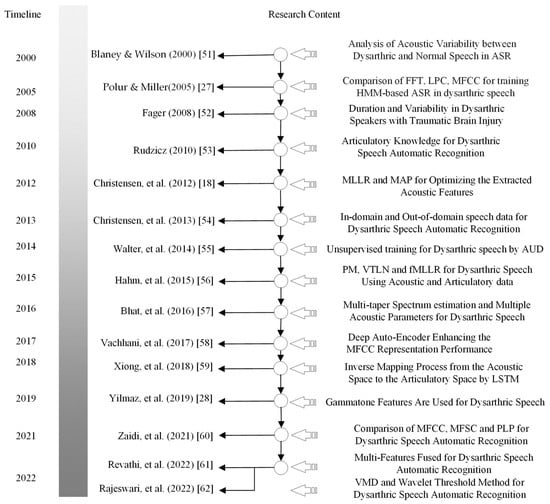

Many researchers have made efforts to improve the performance of ASR for dysarthric speech. The trend in the development of ASR for dysarthric speech can be found in Figure 1. In Figure 1, the part above the dashed line shows the research studies conducted before deep learning, and the part below the dashed line shows the research studies conducted in the era of deep learning.

Figure 1. The trend of ASR for dysarthric speech.

1.1. ASR Technologies for Dysarthric Speech before Deep Learning

Before deep learning, technologies relating to ASR for dysarthric speech were limited by the computational abilities of devices. Machine learning methods were widely used to design acoustic models for ASR for dysarthric speech. For example, Jayaram and Abdelhamied [26] used an artificial neural network (ANN) and analysed the experimental results of ASR for dysarthric speech. Polur and Miller [27] used the Hidden Markov Model (HMM) to design ASR for dysarthric speech and compared the results of different acoustic features such as fast Fourier transform, linear predictive coding and cepstral coefficients. The most remarkable characteristic of this period is that the research developed slowly.

1.2. ASR Technologies for Dysarthric Speech during Deep Learning

With the development of technologies for ASR using deep learning methods and the great improvement of computational abilities, a large amount of research has been carried out to improve the performance of ASR for dysarthric speech. In this era, representative research has come to the fore of this field. For example, Takashima et al. [20] used the convolutional Restricted Boltzmann Machine (CRBM) to address the local overfitting problem when a pre-trained convolutional bottleneck neural network was applied to obtain acoustic features. Yilmaz et al. [28] proposed the use of “bottleneck features and pronunciation features” to reduce the acoustic space variation caused by the poor pronunciation ability of dysarthric speakers, aiming to improve the accuracy of ASR for dysarthric speech. In addition, researchers have improved the extraction of acoustic features to improve acoustic expression, using speaker-adaptive models to reduce the differences among acoustic spaces of dysarthric speech and improving the mapping of acoustic features to the phonemes. Kim et al. used Kullback–Leibler divergence HMM (KL-HMM) [29] and convolutional long short-term memory recurrent neural network (CLSTM-RNN) [30] to automatically recognize dysarthric speech. Overall, these advancements in ASR technology have significant potential to improve communication outcomes for dysarthric individuals, helping them to express themselves more effectively and enhance their quality of life.

Previous reviews of ASR for dysarthric speech mainly discussed the difficulties of ASR applications for elderly people with dysarthria [31] and explored what general and specific factors influence the accuracy of ASR for dysarthric speech [32]. Since then, ASR technologies for dysarthric speech have greatly developed, especially in the era of deep learning. The main objective of our survey is to discuss the trends of ASR technologies for dysarthric speech, including research about dysarthric speech databases, acoustic features, acoustic models, language–lexical models and end-to-end ASR models. Our survey provides a more comprehensive and systematic review of the development of ASR for dysarthric speech, highlighting the latest advancements and future directions in this field.

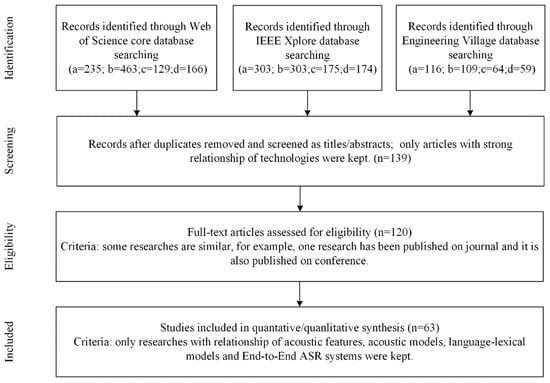

Regarding the main objective, this review follows, where possible, well-established practices for conducting and reporting scoping reviews, as suggested by the PRISMA statement [33]. Of the 27 items on the PRISMA checklist, we were able to follow 13 items in the title, introduction, methods, results, discussion and funding sections.

1.3. Retrieval Strategy of Papers

In the course of the scoping paper retrieval, the following databases were searched: “Web of Science”, “Engineering Village” and “IEEE Xplore”. The time limitation of the above databases was set from 1900 to 2022. Keywords, including “Dysarthric Speech Recognition”, “Dysarthria Speech Recognition”, “Automatic Speech Recognition of Dysarthric Speech” and “Automatic Speech Recognition of Dysarthria Speech”, were used as the retrieval conditions.

1.4. Selection Strategy of Papers

All authors jointly decided on the following selection criteria to reduce possible deviations during selection. Firstly, we excluded papers that had not been cited by other researchers, as their contribution may be insufficient. Secondly, we excluded less related papers. At the screening stage, all authors jointly decided whether each paper was relevant to our research. We also excluded duplicated papers due to eligibility concerns. Finally, we selected 63 representative papers fitting the theme of our research. The papers fall into five categories: “dysarthric speech databases”, “acoustic feature extraction”, “acoustic model”, “language-lexical model” and “End-to-End ASR”. The whole selection process can be found in Figure 2.

Figure 2. PRISMA flow diagram of search methods.

In this paper, we summarize the development of ASR for dysarthric speech and compare traditional approaches based on acoustic feature extraction, acoustic models and language–lexical models, aiming to provide a reference for future research. The main contributions of this paper include the following four aspects:

- (1) the influence of different acoustic feature parameters on the performance of ASR for dysarthric speech was analysed;
- (2) the construction and improvement of acoustic models for dysarthric speech recognition based on different machine learning (or deep learning) methods were introduced;
- (3) the effects of different approaches on improving the performance of different language–lexical models for dysarthric speech recognition were introduced;
- (4) several advanced approaches of end-to-end ASR were introduced in the field of dysarthric speech recognition.

The rest of this paper is organized as follows: Section 2 compares and analyses the effects of different “approaches of acoustic feature extraction”, “acoustic models”, “language-lexical models” and “End-to-End ASR”, respectively. Section 3 discusses the challenges and the future prospects of dysarthric speech recognition. Section 4 gives a conclusion.

2. Summary of the Evolutional Trends in ASR

From a technological perspective, factors that affect the performance of ASR systems can be external and internal. External factors mainly concern the characteristics of data, such as the quality and quantity of the training data used for acoustic modelling and language modelling. Internal factors mainly concern the performance of models in ASR systems, such as the acoustic feature extraction model, acoustic model and language model. In this paper, we review and analyse ASR for dysarthric speech from both internal and external aspects. We explore the impact of these factors on the performance of ASR systems for dysarthric speech and provide insights into the challenges and opportunities in developing more effective ASR systems for this population.

2.1. Dysarthric Speech Database for Training ASR

Databases of dysarthric speech play an important role in training the acoustic and language models of ASR systems. The choice of databases has a significant impact on system performance. Some classic databases of dysarthric speech discussed in this paper are listed in Table 1.

Table 1. Classical databases of dysarthric speech.

2.1.1. Whitaker Database

Whitaker is a database of dysarthric speech produced by Deller et al. [34], which includes recordings of 19,275 isolated words from six speakers with dysarthria caused by cerebral palsy. Whitaker also contains the voices of healthy speakers as references. The words in the Whitaker database can be divided into two categories: the “TI-46” word list and the “grandfather” word list. The “TI-46” word list contains 46 words, including 26 letters, 10 numbers and 10 control words: “start, stop, yes, no, go, help, erase, ruby, repeat, and enter”. TI-46 is a standard vocabulary recommended by Texas Instruments Corporation [35] and has been widely used to test ASR algorithms. The “grandfather” word list contains 35 words. It takes its name from a paragraph that begins with “Let me tell you my grandfather…” and is often used by speech pathologists [36]. Each word in the TI-46 and grandfather word lists was repeated at least 30 times by the six speakers with dysarthria. In most cases, 15 additional repetitions are also included, giving a total of 45 repetitions. Normal speakers repeated the words 15 times for the database to serve as references. The Whitaker database was published in 1993 [34], providing an appropriate starting point for research in the field of dysarthric speech recognition.

2.1.2. UASpeech Database

The UASpeech database [37] contains dysarthric speech from 19 speakers with cerebral palsy. This database consists of 765 isolated words, including 455 different words, among which 155 are repeated three times. In addition, the corpus contains 300 rare words, as well as numbers, computer commands, radio alphabets and common words, to maximize the diversity of phoneme sequences. To the best of our knowledge, UASpeech is the first audio–visual speech database collected from speakers with dysarthria, containing facial video files and dysarthric speech audio files. The UASpeech database is widely used in the task of audio–visual fusion for dysarthric speech recognition [38].
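The word counts above can be cross-checked with a few lines of arithmetic. The sketch below assumes one reading that is consistent with the reported figures: the 155 repeated words and the 300 rare words together make up the 455 different words, and each repeated word contributes three items to the total of 765. The variable names are illustrative only.

```python
# Figures quoted in the text for UASpeech.
repeated_words = 155     # words repeated three times
repetitions = 3
rare_words = 300         # words recorded once

# Assumed reading: the distinct vocabulary is the union of both groups.
distinct_words = repeated_words + rare_words
total_items = repeated_words * repetitions + rare_words

print(distinct_words, total_items)  # 455 765
```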

2.1.3. TORGO Database

TORGO [39] was completed jointly by the Departments of Computer Science and Speech-Language Pathology of the University of Toronto and the Holland Bloorview Kids Rehabilitation Hospital in Toronto. Speech pathologists of the Bloorview Institute in Toronto recruited seven dysarthric subjects. The subjects were between 16 and 50 years old and suffered from dysarthria caused by cerebral palsy (such as spasticity, athetosis and ataxia). In addition, a subject diagnosed with amyotrophic lateral sclerosis (ALS) was included in the study. The speech motor function of each dysarthric speaker was given a standardized evaluation by speech language pathologists. The acoustic data in the database were directly collected via a headset microphone and a directional microphone. The articulatory data were collected via electromagnetic articulography (EMA), which was used to track the speaker’s tongue and other articulators during speech. Further data were obtained through 3-dimensional (3-D) reconstructions of binocular video sequences. The stimuli were drawn from a variety of sources, including the TIMIT database, lists of identified phonetic contrasts, and assessments of speech intelligibility. The category of speech material is shown in Table 2.

Table 2. Category of speech material in TORGO database.

In the TORGO database, “Non words” were used to control the baseline capabilities of dysarthric speakers, especially to gauge their articulatory control in the presence of plosives and prosody. Speakers were asked to repeat /iy-p-ah/, /ah-p-iy/, and /p-ah-t-ah-k-ah/ 5–10 times. These pronunciation sequences could be helpful in analysing the characteristics of pronunciation concerning plosive consonants [46]. The speakers were also asked to sustain high-pitched and low-pitched vowels for over 5 s (e.g., pronouncing “e-e-e” for 5 s). This task helped researchers explore how to apply prosody to speech-assistance technology because many dysarthric speakers could control their pitch only to a certain extent [47]. “Short words” were critical for acoustic research [48] as voice activity detection was not necessary here. The stimuli included formant transitions between consonants and vowels, formant frequencies of vowels, and acoustic energy of plosive consonants. Restricted sentences were used to record complete and syntactically correct sentences for ASR. Unrestricted sentences were used to supplement restricted sentences as they included sentences that were not fluent and had syntactic variations. For these, all the participants spontaneously produced sentences describing the Webber Photo Cards: Story Starters [49].

2.1.4. Nemours Database

The Nemours database [43] contains 814 short nonsense sentences, 74 spoken by each of 11 male speakers with varying degrees of dysarthria. In addition, the database contains two continuous paragraphs recorded by each of the 11 speakers. All speakers were recorded in a special room with Sony PCM-2500 microphones. Speakers repeated the content following the instructor for an average time of 2.5 to 3 h, including breaks. The database was labelled at the word and phoneme levels. Words were labelled manually, while phonemes were labelled using the DNN-HMM-based ASR (Deep Neural Networks and Hidden Markov Model) and manually corrected later. As the Nemours database has a sparse phoneme distribution, researchers find it difficult to use the database to train ASR models and explore the potential influence of dysarthria [14,50].

2.1.5. MOCHA-TIMIT Database

The MOCHA-TIMIT database of dysarthria was created by Wrench [44] in 1999. He selected 460 short sentences from the TIMIT database [45], aiming to include the major connected speech processes in English. The researcher used EMA (500 Hz sampling rate), laryngography (16 kHz sampling rate) and electropalatography (EPG, 200 Hz sampling rate) to collect the movement of the speakers’ upper lip, lower lip, upper incisor, lower incisor, tongue tip, tongue blade (1 cm from the tip of the tongue) and tongue dorsum (1 cm from the blade of the tongue). The researcher planned to record the speech of two healthy speakers (one male and one female) and 38 speakers with dysarthria in May 2001. At present, detailed recording results are unclear and dysarthric data are unavailable in the online database.

In summary, the current databases of dysarthric speech are precious and scarce. Collecting data from speakers with dysarthria is very difficult, which severely limits the performance of models trained on these corpora. In the future, considering the cost of data collection, efforts could focus on two directions: collecting more multi-modal data, including facial video and speech audio from speakers with dysarthria; and designing more effective methods to improve the performance of models trained on low-resource (or zero-resource) corpora.

2.2. ASR System for Dysarthria Speech

The research on ASR for dysarthric speech mainly focuses on four fields: (1) the parameters of acoustic feature extraction, (2) acoustic model, (3) language–lexical models and (4) end-to-end (or Sequence-to-Sequence) ASR for dysarthric speech.

2.2.1. Acoustic Features of Dysarthric Speech for Training ASR

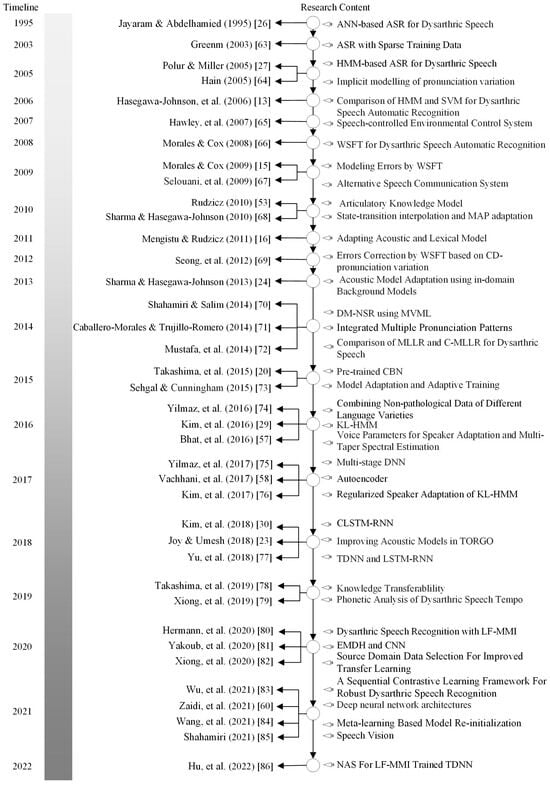

The development over time of techniques for extracting acoustic features of dysarthric speech for training ASR is shown in Figure 3.

Figure 3. The diagram of evolution of acoustic features for dysarthric speech recognition.

At an early stage, Blaney and Wilson [51] explained the cause of articulatory differences between dysarthric and healthy speakers and explored the relationship between articulatory differences and the accuracy of ASR. For example, the accuracy of ASR is affected by the voice onset time (VOT) of voiced plosives, fricatives, and vowels. Meanwhile, speakers with moderate dysarthria show wider variation in acoustic tests than speakers with mild dysarthria and healthy speakers.

In an experiment comparing different acoustic features in ASR based on HMM model configurations, Polur and Miller [27] analysed the performance of several feature extraction techniques, including fast Fourier transform (FFT), linear predictive coding (LPC), and Mel-frequency cepstral coefficients (MFCC). The researchers trained several whole-word HMM configurations using the provided training input and evaluated their performance using standardized measures. Their experimental results show that a 10-state ergodic model using 15 ms frames was better than other configurations, and that MFCC outperformed both FFT and LPC in terms of its ability to effectively capture the acoustic characteristics of dysarthric speech for training ASR systems. These findings highlight the importance of selecting appropriate feature extraction techniques for improving the accuracy of ASR systems for dysarthric speech.
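To make the reported configuration more concrete, the following minimal Python sketch shows how a signal might be cut into 15 ms frames and how an ergodic transition structure differs from a left-to-right one. The 16 kHz sampling rate, the non-overlapping frames, the uniform and 0.5/0.5 initial probabilities, and all function names are illustrative assumptions rather than details reported in [27].

```python
def frame_signal(samples, sample_rate_hz=16000, frame_ms=15):
    """Split a sample sequence into consecutive, non-overlapping frames."""
    frame_len = int(sample_rate_hz * frame_ms / 1000)  # 240 samples at 16 kHz
    n_frames = len(samples) // frame_len  # a trailing partial frame is dropped
    return [samples[i * frame_len:(i + 1) * frame_len] for i in range(n_frames)]


def ergodic_transitions(n_states=10):
    """Ergodic topology: every state can reach every state (uniform start)."""
    p = 1.0 / n_states
    return [[p] * n_states for _ in range(n_states)]


def left_to_right_transitions(n_states=10):
    """Left-to-right topology: a state may only repeat or advance by one."""
    rows = []
    for i in range(n_states):
        row = [0.0] * n_states
        if i == n_states - 1:
            row[i] = 1.0          # final state can only repeat
        else:
            row[i] = 0.5          # stay in the same state
            row[i + 1] = 0.5      # move to the next state
        rows.append(row)
    return rows


frames = frame_signal([0.0] * 16000)  # one second of silence as dummy input
print(len(frames), len(frames[0]))
```

In an ergodic model every entry of the transition matrix may be non-zero, so training is free to learn repeated or skipped segments, whereas the left-to-right matrix forces a strict temporal order.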

In a study investigating the duration variability of isolated words and voice types in dysarthric speech, Fager [52] performed a detailed analysis of the performance of 10 speakers with dysarthria caused by traumatic brain injury (TBI) and 10 healthy speakers. The researcher explored not only the relationship between intelligibility and duration but also the relationship between intelligibility and variability in dysarthric speech. The findings of this study suggest that dysarthric speech is characterized by greater variability in both the duration of isolated words and voice types compared to healthy speech, which can impact its overall intelligibility. Further research is needed to understand the underlying causes of these differences and develop methods for improving the comprehensibility of dysarthric speech.

In addition, Rudzicz [53] used articulatory knowledge of the vocal tract to label segmented and non-segmented sequences of atypical speech. The study [53] combined these articulatory models with discriminative learning models, such as ANN, support vector machines (SVMs) and conditional random fields (CRFs). By integrating information concerning the acoustic structure of dysarthric speech with machine learning techniques, Rudzicz’s study aimed to develop more effective methods for improving the intelligibility of dysarthric speech in ASR systems. These findings have important implications for the development of ASR systems that can better accommodate the unique challenges faced by individuals with dysarthria in communication.

In a study exploring the use of maximum likelihood linear regression (MLLR) and maximum a posteriori (MAP) adaptation strategies to improve the performance of ASR for dysarthric speech, Christensen et al. [18] found that these approaches significantly improved recognition performance for all speakers, with average increases in accuracy of 3.2% and 3.5% against speaker-dependent and speaker-independent baseline systems, respectively. These findings suggest that incorporating information about the acoustic structure of dysarthric speech into ASR systems can lead to significant improvements in their ability to accurately transcribe this type of speech. The authors suggest that further research is needed to optimize the effectiveness of these adaptation strategies and to develop ASR systems that can effectively accommodate the unique challenges faced by individuals with dysarthria in communication.
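As an illustration of how MAP adaptation moves a model towards a speaker, the sketch below applies the standard MAP update of a single Gaussian mean, which interpolates between the prior (speaker-independent) mean and the adaptation data. It is a generic textbook form and not the exact configuration used in [18]; the function name, the relevance factor `tau` and its default value are assumptions made for illustration.

```python
def map_adapt_mean(prior_mean, frames, posteriors, tau=10.0):
    """MAP update of one Gaussian mean.

    prior_mean: mean vector of the speaker-independent Gaussian
    frames:     adaptation feature vectors from the target speaker
    posteriors: occupation probability of this Gaussian for each frame
    tau:        relevance factor (assumed value); larger keeps the prior longer
    """
    occupancy = sum(posteriors)
    dim = len(prior_mean)
    # Posterior-weighted sum of the adaptation frames, per dimension.
    weighted_sum = [
        sum(g * x[d] for g, x in zip(posteriors, frames)) for d in range(dim)
    ]
    return [
        (tau * prior_mean[d] + weighted_sum[d]) / (tau + occupancy)
        for d in range(dim)
    ]


# Ten frames at [1, 1] pull a prior mean of [0, 0] halfway when tau = 10.
print(map_adapt_mean([0.0, 0.0], [[1.0, 1.0]] * 10, [1.0] * 10))  # [0.5, 0.5]
```

With little adaptation data the estimate stays close to the prior mean, which is one reason MAP-style adaptation is attractive when only a small amount of dysarthric speech is available per speaker.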

In a study investigating an alternative domain-adaptive approach to improve the performance of ASR for dysarthric speech, Christensen et al. [54] proposed a method that combined in-domain and out-of-domain data to train a deep belief network (DBN) for acoustic feature extraction. During this process, out-of-domain data were used to generate the DBN, which was then optimized using the augmented multi-party interaction (AMI) meeting corpus and TED talk corpus. Finally, the optimized model was verified on the UASpeech database and found to result in an average recognition accuracy of 62.5%, which was 15% higher than the baseline method before optimization. These findings suggest that incorporating information from multiple domains into ASR systems can lead to significant improvements in their ability to accurately transcribe dysarthric speech and that the use of deep learning techniques such as DBNs can further enhance these improvements.

In a study exploring the use of unsupervised learning models for automatic dysarthric speech recognition, Walter et al. [55] employed vector quantization (VQ) to obtain Gaussian posteriorgrams at the frame level. These posteriorgrams were then used to train acoustic unit descriptors (AUD), which are hierarchical multi-state models of phone-like units, in an unsupervised manner. The researchers found that the AUD model was able to accurately capture the acoustic structure of dysarthric speech and that this information could be used to improve the performance of subsequent speech recognition systems. These findings suggest that unsupervised learning techniques can be effective in automatically extracting relevant features from dysarthric speech and that these features can be used to improve the accuracy of ASR systems for this type of speech. Further research is needed to explore the generalizability of these findings and to develop more robust unsupervised learning approaches for dysarthric speech recognition.
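A frame-level Gaussian posteriorgram can be sketched as follows: for each frame, the posterior probability of every Gaussian component is computed and the resulting vectors are stacked over time. The sketch assumes that diagonal-covariance components (weights, means, variances) are already available, for example from a clustering step; the function names and toy values are hypothetical and do not reproduce the setup of [55].

```python
import math


def log_gaussian(x, mean, var):
    """Log density of a diagonal-covariance Gaussian at vector x."""
    return sum(
        -0.5 * (math.log(2.0 * math.pi * v) + (xi - m) ** 2 / v)
        for xi, m, v in zip(x, mean, var)
    )


def posteriorgram(frames, weights, means, variances):
    """Return one posterior vector (over components) per frame."""
    result = []
    for x in frames:
        log_scores = [
            math.log(w) + log_gaussian(x, m, v)
            for w, m, v in zip(weights, means, variances)
        ]
        peak = max(log_scores)  # subtract the maximum for numerical stability
        unnorm = [math.exp(s - peak) for s in log_scores]
        total = sum(unnorm)
        result.append([u / total for u in unnorm])
    return result


# Toy example: two 1-D components centred at 0 and 5.
post = posteriorgram([[0.0], [5.0]], [0.5, 0.5], [[0.0], [5.0]], [[1.0], [1.0]])
print(post)
```

Each row sums to one, so the posteriorgram can be treated as a soft assignment of frames to acoustic units before any phone labels exist.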

In a study exploring three cross-speaker normalization methods in the acoustic and articulatory spaces of speakers with dysarthria, Hahm et al. [56] applied Procrustes Matching (a physiological method in the articulatory space), Vocal Tract Length Normalization (VTLN, a data-driven method in the acoustic space), and MLLR (a model-based method for both spaces) to tackle the large variation in phonemes among different speakers with dysarthria. The study was based on the ALS database and showed that training the triphone DNN-HMM (Triph-DNN-HMM) on acoustic and articulatory data with all three normalization methods achieved the best performance. The phoneme error rate of the best combination in reference [56] was 30.7%, an absolute reduction of 15.3% compared with the baseline method, a triphone GMM-HMM (Triph-GMM-HMM) trained using acoustic data. These findings suggest that combining information from both the acoustic and articulatory domains can significantly improve the accuracy of ASR for dysarthric speech.
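Because the reported figures are an absolute reduction, the baseline error rate and the corresponding relative reduction follow by simple arithmetic. The short sketch below derives both from the two numbers quoted for [56]; the function name is illustrative.

```python
def per_reduction(best_per, absolute_drop):
    """Return (baseline PER, relative reduction), both in percent."""
    baseline = best_per + absolute_drop
    relative = 100.0 * absolute_drop / baseline
    return baseline, relative


baseline, relative = per_reduction(30.7, 15.3)
print(f"baseline PER = {baseline:.1f}%, relative reduction = {relative:.1f}%")
# prints: baseline PER = 46.0%, relative reduction = 33.3%
```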

Bhat et al. [57] proposed combining multi-taper spectrum estimation and multiple acoustic parameters (such as jitter or shimmer) with feature-space MLLR (fMLLR) and speaker-adaptive methods to improve the performance of ASR for dysarthric speech. The findings of this study suggest that combining multiple acoustic features can be useful for improving the accuracy of ASR for dysarthric speech.

Vachhani et al. [58] proposed the use of a deep auto-encoder to enhance the MFCC representations used in ASR for dysarthric speech. In this research [58], normal speech was used to train the auto-encoder, and the trained auto-encoder was then used in transfer learning to improve the representation of acoustic features. Test results on UASpeech showed that the accuracy of ASR for dysarthric speech improved by 16%. These findings suggest that using an auto-encoder in transfer learning can be an effective way to improve the performance of ASR for dysarthric speech.

Xiong et al. [59] proposed the use of long short-term memory (LSTM) to simulate inverse mapping from the acoustic to the articulatory space. The proposed approach supplemented information for deep neural networks (DNNs), taking advantage of acoustic and articulatory information to improve the accuracy of ASR for dysarthric speech.

Yilmaz et al. [28] explored gammatone features in ASR for dysarthric speech and compared them with acoustic features calculated using traditional Mel-filters. The gammatone features could capture resolution variation in the spectrum and were more similar to human auditory filters than traditional Mel-filters. The features in this study [28] were continuous and more representative of vocal tract kinematics. Using the gammatone features to represent the acoustic space was conducive to explaining the variability observed in the acoustic space, thus making the ASR more robust.
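The difference between the two filter families can be illustrated through the frequency scales they are commonly placed on. The sketch below compares a widely used Mel formula with the equivalent rectangular bandwidth (ERB) formulas of Glasberg and Moore, on which gammatone filterbanks are often spaced. These are common conventions and are assumed here for illustration; they are not necessarily the exact settings used in [28].

```python
import math


def hz_to_mel(f_hz):
    """Common Mel-scale mapping (2595 * log10(1 + f / 700))."""
    return 2595.0 * math.log10(1.0 + f_hz / 700.0)


def erb_bandwidth(f_hz):
    """Equivalent rectangular bandwidth in Hz of the auditory filter at f_hz."""
    return 24.7 * (4.37 * f_hz / 1000.0 + 1.0)


def hz_to_erb_rate(f_hz):
    """ERB-rate scale: number of ERBs below f_hz."""
    return 21.4 * math.log10(4.37 * f_hz / 1000.0 + 1.0)


for f in (100, 500, 1000, 4000):
    print(f, round(hz_to_mel(f), 1), round(erb_bandwidth(f), 1),
          round(hz_to_erb_rate(f), 2))
```

Both scales compress high frequencies, but the ERB formulas are derived from measurements of human auditory filter bandwidths, which is the sense in which gammatone filters are described as closer to human hearing.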

Zaidi et al. [60] explored how to combine DNN, CNN (convolutional neural networks) and LSTM to improve the accuracy of ASR for dysarthric speech. MFCC, MFSC (Mel-Frequency Spectrum Coefficient) and PLP (Perceptual Linear Prediction) were compared with the proposed method in reference [60]. The experiment results on the Nemours database showed that the accuracy of CNN-based ASR reached 82% when PLP parameters were used, 11% and 32% higher, respectively, than that of LSTM-based and GMM-HMM-based ASR. These findings suggest that combining DNN, CNN, and LSTM can be an effective way to improve the accuracy of ASR for dysarthric speech and that the PLP parameterization used in this study may provide a useful framework for further improving the accuracy of ASR for dysarthric speech.

Revathi et al. [61] proposed combining GFE (gammatone energy with filters calibrated in different nonlinear frequency scales), Stockwell features, MGDFC (modified group delay Cepstrum), speech enhancement and VQ-based classifiers. After all the acoustic feature parameters had been fused at the decision level of speech enhancement, the error rate of ASR for dysarthric speech with an intelligibility of 6% was 4%. In addition, the error rate of ASR for dysarthric speech with an intelligibility of 95% was 0%. However, this research [61] was based on a corpus in which only digit pronunciations were available. Therefore, the applicability of the study [61] is difficult to evaluate.

Rajeswari et al. [62] processed distorted or noisy dysarthric speech. They first used VMD (variational mode decomposition) and wavelet thresholding to enhance the speech. Then, the enhanced speech served as input and was recognized by a CNN as characters. Tests on UASpeech showed that the average accuracy of ASR for dysarthric speech was 91.8% without enhancement and 95.95% with VMD enhancement [62]. However, statistically significant conclusions cannot be drawn from the work of Rajeswari et al. [62], because no standard deviation or confidence interval is reported with their results.
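To show why an accuracy figure without a confidence interval is hard to interpret, the sketch below computes an approximate 95% Wilson score interval for the two accuracies quoted for [62]. The test-set sizes are hypothetical, since the number of test items is not given in the text; the function name is also illustrative.

```python
import math


def wilson_interval(accuracy, n_items, z=1.96):
    """Approximate 95% Wilson score interval for a proportion."""
    z2 = z * z
    denom = 1.0 + z2 / n_items
    centre = (accuracy + z2 / (2.0 * n_items)) / denom
    half = z * math.sqrt(
        accuracy * (1.0 - accuracy) / n_items + z2 / (4.0 * n_items ** 2)
    ) / denom
    return centre - half, centre + half


for n in (100, 1000):  # hypothetical test-set sizes, not taken from [62]
    print(n, wilson_interval(0.918, n), wilson_interval(0.9595, n))
```

With a small test set the two intervals overlap, so the gain from enhancement could not be distinguished from sampling noise; with a larger test set the intervals narrow. Reporting the test-set size or the interval itself would resolve this ambiguity.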

2.2.2. Acoustic Model of ASR for Dysarthric Speech

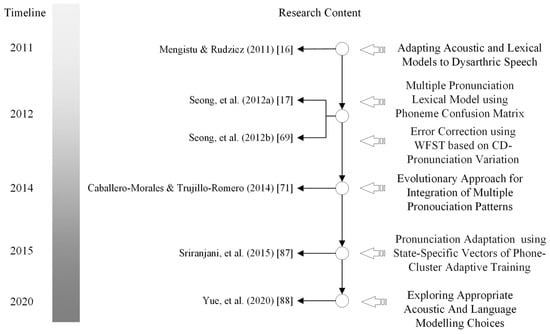

The development over time of techniques for designing acoustic models of ASR for dysarthric speech is shown in Figure 4.

Figure 4. The diagram of evolution of acoustic models for dysarthric speech recognition.

During the early stage, Jayaram and Abdelhamied [26] studied the ANN-based acoustic model and applied it to dysarthric speakers. The data used in this study [26] included ten words recorded by a male dysarthric speaker. His speech had an intelligibility of only 20%. The highest accuracy of ASR was 78.25% [26].

Green et al. [63] designed an ASR system based on CD-HMM (context-dependent HMM) to recognize the audio commands pronounced by speakers with severe dysarthria. The results showed that speakers with severe dysarthria had poor control over the target machine.

Polur and Miller [27] designed an HMM-based ASR system for dysarthric speech (isolated words) and explored the conditions that improve the performance of such systems. The research studied the pronunciation of three speakers with cerebral palsy and compared the influence of model structure, number of states and frame rate on ASR performance. The experimental results showed that, at a frame rate of 15 ms, the model with 10 states had the best performance. Hain [64] proposed gradually reducing the number of pronunciation variants of each word. This approach was similar to classification, and the pronunciation variants were implicitly modelled. The experimental results showed that this approach could achieve good ASR performance with just a single-pronunciation lexical model. This research was verified on both the Wall Street Journal and Switchboard datasets.

Hasegawa-Johnson et al. [13] compared HMM-based and SVM-based ASR to test the performance of different approaches when dealing with dysarthric speech. This study collected data from three dysarthric speakers (two males and one female). The experimental results showed that HMM-based ASR effectively recognized the speech of all dysarthric speakers, but the recognition result was poor when the consonants were damaged. The accuracy of SVM-based ASR was low for the stuttering speaker and was high for the other two dysarthric speakers. Therefore, this research illustrated that HMM-based ASR for dysarthric speech was robust for speech with large variations in terms of word length, while SVM-based ASR was suited to processing dysarthric speech with missing consonants (with an average recognition accuracy of 69.1%). However, the intelligibility of dysarthric speech used in this research was unknown.

Hawley et al. [65] developed a limited-vocabulary, speaker-dependent ASR system that was robust to speech variability. Accuracy on the training data set improved from 88.5% to 95.4% (p < 0.001). Even for speakers with severe dysarthria, the average accuracy of word-level recognition still reached 86.9%.

Morales and Cox [15,66] improved the acoustic model using WFST (weighted finite-state transducers) at the levels of the confusion matrix and word and language, respectively. The experimental results showed that this approach [66] had better performance than the MLLR and meta-model. Selouani et al. [67] designed an auxiliary system with ASR and TTS (Text-to-Speech) for dysarthric speakers to improve the speakers’ speech quality. The re-synthesized speech had a high intelligibility.

Rudzicz [53] compared ANN, SVM, CRF and other discriminative models in recognizing dysarthric speech with articulatory knowledge. The research showed that the accuracy of ASR can be significantly improved through the use of articulatory knowledge. In addition, this research also found that articulatory knowledge could not be transferred between healthy and dysarthric speakers. However, before the re-training, models could gain pre-training knowledge with the articulatory data of healthy speakers. Transfer learning could thus effectively improve the accuracy of ASR for dysarthric speech. Sharma and Hasegawa-Johnson [68] trained a speaker-dependent ASR system for normal speakers based on the TIMIT database and verified the trained system with UASpeech data of seven dysarthric speakers. The experimental results showed that the average accuracy of the speaker-adaptive ASR system was 36.8%, while the average accuracy of the speaker-dependent ASR system was 30.84%.

Mengistu and Rudzicz [16] proposed using acoustic and lexical adaptation to improve ASR for dysarthric speech. The experimental results showed that acoustic adaptation reduced the word error rate by 36.99%, thus greatly improving the accuracy. In addition, the pronunciation lexicon adaptation (PLA) model further reduced the word error rate by an average of 8.29% when six speakers with moderate-to-severe dysarthria were asked to pronounce a large vocabulary pool of over 1500 words. The experiment for a speaker-dependent system with five-fold cross-validation showed that PLA-based ASR also reduced the average word error rate by 7.11%.
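Many of the results in this section are reported as word error rate (WER) reductions. As a reading aid, the following minimal sketch shows the standard edit-distance definition of WER and how a relative reduction is computed from two WER values. The function names and the example sentences are illustrative assumptions and are not taken from any of the reviewed papers.

```python
def word_error_rate(reference, hypothesis):
    """Word error rate: word-level edit distance divided by the reference length."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] is the edit distance between the processed reference prefix and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(prev[j] + 1,           # deletion of a reference word
                            curr[j - 1] + 1,       # insertion of a hypothesis word
                            prev[j - 1] + cost))   # substitution or match
        prev = curr
    return prev[-1] / len(ref)


def relative_reduction(baseline_wer, new_wer):
    """Relative WER reduction, expressed as a fraction of the baseline WER."""
    return (baseline_wer - new_wer) / baseline_wer


# Hypothetical example: one deleted word and one substituted word out of four.
wer = word_error_rate("turn the light on", "turn light off")
```

An absolute reduction is simply the difference between the two WER values, whereas the relative reduction divides that difference by the baseline, which is why the two figures reported for the same system can differ widely.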

Seong et al. [69] used WFST-based ASR for dysarthric speech. Firstly, the word sequence at the phoneme level was aligned to the target sequence and the context-dependent confusion matrix model was built. However, the context-dependent confusion matrix might be incorrectly estimated because the database of dysarthric speech hardly covered all the phoneme groups. Secondly, in order to tackle erroneous estimation, interpolation was used to replace the context-dependent confusion matrix with a context-independent one. Finally, WFST based on the interpolated matrix, which was integrated with language–lexical model, was applied to correct the recognition errors. The experimental results showed that compared with the baseline approach, ASR based on context-dependent confusion matrix reduced the word error rate by 13.68%, and ASR based on a context-independent confusion matrix reduced the word error rate by 5.93%.
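The interpolation step can be illustrated with a simple linear mixture of the two confusion models. This is only a sketch under stated assumptions: the dictionary layout, the function name `interpolate_confusions` and the fixed weight are hypothetical, and the exact interpolation scheme used in [69] may differ.

```python
def interpolate_confusions(cd_probs, ci_probs, weight=0.5):
    """Linearly mix context-dependent and context-independent confusion probabilities.

    cd_probs: {(left, phone, right): {recognized_phone: probability}}
    ci_probs: {phone: {recognized_phone: probability}}
    weight:   share given to the context-dependent estimate (assumed value).
    """
    mixed = {}
    for (left, phone, right), cd_row in cd_probs.items():
        ci_row = ci_probs[phone]
        outputs = set(cd_row) | set(ci_row)
        mixed[(left, phone, right)] = {
            out: weight * cd_row.get(out, 0.0) + (1.0 - weight) * ci_row.get(out, 0.0)
            for out in outputs
        }
    return mixed


# Hypothetical example: a sparsely observed context is smoothed by the
# context-independent statistics for the same phone.
cd = {("s", "t", "a"): {"t": 1.0}}
ci = {"t": {"t": 0.6, "d": 0.4}}
smoothed = interpolate_confusions(cd, ci, weight=0.5)
```

Because both inputs are probability distributions over recognized phones, each mixed row still sums to one, so it can be used directly as arc weights in a confusion transducer.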

Sharma and Hasegawa-Johnson [24] proposed an interpolation-based approach that obtained prior articulatory knowledge from healthy speakers and applied the knowledge to dysarthric speech through adaptation. This study was validated on the UASpeech database, which included 16 patients with different degrees of dysarthria. The experimental results showed that compared with the baseline approach of MAP adaptation, the interpolation-based approach achieved an absolute improvement of 8% and a relative improvement of 40% in recognition accuracy.

Shahamiri and Salim [70] proposed a dysarthric multi-networks speech recognizer (DM-NSR) using a multi-views, multi-learners approach, also called multi-nets artificial neural networks. The approach tolerated the variability of dysarthric speech. The experimental results on UASpeech showed that, compared with a dysarthric single-network speech recognizer, DM-NSR improved accuracy by 24.67%. Caballero-Morales and Trujillo-Romero [71] integrated multiple pronunciation patterns to achieve better performance in terms of ASR for dysarthric speech. This integration was achieved by weighting the responses of the ASR system under different language model restrictions. The response weights were estimated using a genetic algorithm, which also optimized the structure of the HMM-based implementation process (Meta-models). This research was tested on the Nemours speech database. The experimental results showed that the integrated approach had higher accuracy than the standard Meta-model and speaker-adaptation approach. Mustafa et al. [72] used well-known adaptation technologies such as MLLR and constrained MLLR to improve the performance of ASR for dysarthric speech. A model trained using both dysarthric and normal speech was applied as the source model. The experimental results showed that training on normal and dysarthric speech together could effectively improve the accuracy of the ASR system for dysarthric speech. Constrained MLLR performed better than MLLR in dealing with mildly and moderately dysarthric speech. In addition, phoneme confusion was the main factor causing errors in ASR for severely dysarthric speech.

Takashima et al. [20] used a pre-trained convolutional bottleneck network (CBN) to extract the acoustic features of dysarthric speech and used these features to train ASR. This study was based on the acoustic data of patients with dysarthria caused by athetoid cerebral palsy. Generally, it is extremely difficult to collect the acoustic data of dysarthric speakers because their tense speech muscles make pronunciation very hard. As the CBN is trained on limited data, overfitting becomes an issue. The use of a convolutional restricted Boltzmann machine during pre-training can effectively tackle overfitting. Word recognition experiments showed that this approach had better performance than the convolutional network without pre-training. Sehgal and Cunningham [73] discussed the applicability of various speaker-independent systems, as well as the effectiveness of speaker-adaptive training in implicitly eliminating the differences in pronunciation among dysarthric speakers. This research relied on hybrid MLLR-MAP for the speaker-independent and speaker-adaptive training systems and was tested on the UASpeech database. The experimental results showed that, compared with the baseline approach, the research achieved an increase of 11.05% in absolute accuracy and 20.42% in relative accuracy. In addition, the speaker-adaptive training system was better suited to dealing with severely dysarthric speech and had better performance than speaker-independent systems.

Yilmaz et al. [74] trained a speaker-independent acoustic model based on DNN-HMM using speech from different varieties of Dutch and used Flemish speech for testing. The experimental results showed that training on speech from different varieties of Dutch improved the performance of ASR on Flemish data. Kim et al. [29] designed ASR for dysarthric speech based on KL-HMM. Here, the emission probability of each state was modelled based on the posterior probability distribution of phonemes estimated by a DNN. This approach thus effectively captured the variation of dysarthric speech. The research was based on a corpus recorded by 30 speakers (12 speakers with mild dysarthria, eight speakers with moderate dysarthria and 10 healthy speakers), and the speakers were asked to pronounce several hundred words. The experimental results showed that the DNN-HMM approach based on KL divergence had a substantial advantage over traditional GMM-HMM and DNN approaches. Bhat et al. [57] compared the performance of different acoustic feature parameters in ASR based on DNN-HMM and GMM-HMM, respectively. The experimental results showed that using incremental data on dysarthric speech effectively improved the accuracy of ASR for dysarthric speech.

Yilmaz et al. [75] proposed a multi-stage DNN training scheme, aiming to achieve high ASR performance for dysarthric speech with a small amount of in-domain training data. The experimental results showed that, compared with a single-stage baseline system trained with a large amount of normal speech or a small amount of in-domain data, the multi-stage DNN significantly improved the accuracy of ASR for Dutch dysarthric speech. Vachhani et al. [58] proposed using a deep auto-encoder to enhance the representation performance of MFCC, thus improving the recognition accuracy of dysarthric speech. The auto-encoder was trained using normal speech and was applied to improve the performance of the acoustic model. In addition, this research also analysed the performance of the adaptive model for the rhythm of dysarthric speech and evaluated the performance of enhancement with an auto-encoder. The evaluation test was carried out on the UASpeech database. The experimental results showed that ASR based on DNN-HMM improved absolute accuracy by 16%. Kim et al. [76] proposed using KL-HMM to capture the variations of dysarthric speech. In the framework [76], the state emission probability was predicted from the posterior probability of each phoneme. In addition, this research proposed a speaker adaptation method based on L2-norm regularization (also called ridge regression) to further reflect the speech variation patterns of specific speakers. This speaker adaptation approach was used to reduce confusion: it improved the distinguishability of state classification distributions in KL-HMM while retaining speaker-specific information. This research [76] was performed on a self-collected database that included 12 speakers with mild dysarthria, eight speakers with moderate dysarthria, and 10 healthy speakers. The experimental results showed that DNN combined with KL-HMM had a better performance than the traditional speaker-adaptive DNN-based approach in dysarthric speech recognition tasks.
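The core idea of KL-HMM, as described above, is that each HMM state holds a categorical distribution over phoneme classes and is scored against the DNN phoneme posterior of a frame using a Kullback-Leibler divergence. The sketch below illustrates that local score only. The direction of the divergence, the state names and the toy distributions are assumptions made for illustration; the systems in [29,76] may use a different variant (for example, a reverse or symmetric divergence).

```python
import math


def kl_divergence(state_dist, posterior, eps=1e-10):
    """KL(state_dist || posterior) for two categorical distributions over phoneme classes."""
    if len(state_dist) != len(posterior):
        raise ValueError("distributions must have the same number of classes")
    return sum(
        y * math.log((y + eps) / (z + eps))
        for y, z in zip(state_dist, posterior)
        if y > 0.0
    )


def frame_costs(state_dists, posterior):
    """Local cost of assigning one frame to each state (lower is better)."""
    return {name: kl_divergence(dist, posterior) for name, dist in state_dists.items()}


# Hypothetical two-class example: the frame posterior is closer to state "s1".
states = {"s1": [0.9, 0.1], "s2": [0.1, 0.9]}
costs = frame_costs(states, [0.8, 0.2])
best = min(costs, key=costs.get)
```

In a full recognizer these local costs would replace the usual negative log-likelihoods inside Viterbi decoding; that part is omitted here.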

Kim et al. [30] designed a CLSTM-RNN-based ASR for dysarthric speech, which was validated on a self-collected database containing nine dysarthric speakers. The experimental results showed that CLSTM-RNN achieved better performance than CNN and LSTM-RNN (long short-term memory recurrent neural networks). Joy and Umesh [23] explored a variety of methods to improve the performance of ASR for dysarthric speech. First, they adjusted the parameters of various acoustic models. Second, they used speaker-normalized cepstral features. Third, they trained the speaker-specific acoustic model by building a complex DNN-HMM model with dropout and sequence-discrimination strategies. Finally, they used specific information from dysarthric speech to improve the accuracy for severely and moderately-to-severely dysarthric speech. The DNN models were trained with audio files of both dysarthric and normal speech through a generalized distillation framework. This research was tested on TORGO, and an ideal recognition accuracy was obtained. Yu et al. [77] proposed a series of deep-neural-network acoustic models based on TDNN (time-delay neural networks), LSTM-RNN and their more advanced variants to design ASR for dysarthric speech. This study also used learning hidden unit contributions (LHUC) to adapt to the acoustic variation of dysarthric speech and improved the bottleneck feature extraction by constructing a semi-supervised complementary auto-encoder. Test results on UASpeech showed that this integrated approach achieved an overall word recognition accuracy of 69.4% on the test set of 16 speakers.
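LHUC adapts a trained network to a speaker by rescaling each hidden unit with a speaker-specific amplitude, commonly parameterized as twice the sigmoid of a learned value so that the scale stays between 0 and 2. The following minimal sketch shows only this rescaling step; the function names and the toy activations are illustrative assumptions, and the training of the speaker parameters used in [77] is not shown.

```python
import math


def lhuc_scale(r):
    """LHUC amplitude 2 * sigmoid(r); r = 0 leaves the hidden unit unchanged."""
    return 2.0 / (1.0 + math.exp(-r))


def apply_lhuc(hidden, speaker_params):
    """Rescale each hidden activation by its speaker-specific LHUC amplitude."""
    if len(hidden) != len(speaker_params):
        raise ValueError("one LHUC parameter is needed per hidden unit")
    return [lhuc_scale(r) * h for h, r in zip(hidden, speaker_params)]


# Hypothetical example: an unadapted speaker (all parameters 0) keeps the
# activations as they are; a positive parameter amplifies a unit.
unchanged = apply_lhuc([1.0, -2.0], [0.0, 0.0])
adapted = apply_lhuc([1.0, -2.0], [1.5, 0.0])
```

Only the per-speaker parameters are updated during adaptation, which keeps the number of speaker-dependent values small, a useful property when little dysarthric speech is available.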

Takashima et al. [78] proposed the use of transfer learning to obtain knowledge from normal and dysarthric speech and then used the target dysarthric speech to fine-tune the pre-trained model. This approach was tested on Japanese dysarthric speech. The experimental results showed that this transfer learning approach significantly improved the performance of ASR for dysarthric speech. Xiong et al. [79] proposed a nonlinear approach to modify speech rhythm. This approach aimed to reduce the mismatch between typical and atypical speech in the following two ways: (i) modifying dysarthric speech into typical speech and training ASR with typical speech; (ii) modifying typical speech into dysarthric speech and training ASR with dysarthric speech for data augmentation. The experimental results showed that the latter way was more effective. The approach was tested on the UASpeech database. Compared with the speaker-dependent trained systems, this approach improved absolute accuracy by nearly 7%. The experimental results also showed that the approach’s improvement was most obvious for ASR for speakers with moderate and severe dysarthria.

Hermann and Doss [80] proposed the use of lattice-free maximum mutual information (LF-MMI) in the advanced sequence discriminative model. This research [80] aimed to further improve the accuracy of ASR for dysarthric speech. The experimental results on the TORGO database showed that the performance of ASR for dysarthric speech using LF-MMI was effectively improved. Yakoub et al. [81] proposed a deep learning architecture including CNN and EMDH (empirical mode decomposition and Hurst-based mode selection) to improve the accuracy of ASR for dysarthric speech. The results of k-fold cross-validation test on the Nemours database showed that, compared with the GMM-HMM and CNN baseline approach without EMDH enhancement, this architecture [81] improved overall accuracy by 20.72% and 9.95%, respectively. Xiong et al. [82] proposed an improved transfer-learning framework, which was suitable for increasing the robustness of dysarthric speech recognition. The proposed approach was utterance-based and selected source domain data based on the entropy of posterior probability. The ensuing statistical analysis obeyed a Gaussian distribution. Compared with CNN-TDNN (convolutional neural networks time-delay deep neural networks) trained using source domain data (as the transfer learning baseline), the proposed approach performed better on the UASpeech database. The proposed approach could accurately select potentially useful source domain data and improve absolute accuracy by nearly 20% against the transfer learning baseline for recognition of moderately and severely dysarthric speech.
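Selecting source-domain data by the entropy of posterior probabilities can be sketched as follows. This is a simplified illustration, not the procedure of [82]: the utterance-level score (mean frame entropy), the threshold and the `keep_high` switch that sets the selection direction are all assumptions.

```python
import math


def entropy(posterior):
    """Shannon entropy (in nats) of one categorical posterior distribution."""
    return -sum(p * math.log(p) for p in posterior if p > 0.0)


def mean_entropy(frame_posteriors):
    """Utterance-level score: average entropy over all frames."""
    return sum(entropy(p) for p in frame_posteriors) / len(frame_posteriors)


def select_utterances(utterances, threshold, keep_high=True):
    """Return the ids of utterances on the chosen side of the entropy threshold.

    utterances: {utterance_id: [posterior_for_frame_0, posterior_for_frame_1, ...]}
    keep_high:  assumed switch; True keeps utterances with score >= threshold.
    """
    selected = []
    for utt_id, frames in utterances.items():
        score = mean_entropy(frames)
        if (score >= threshold) == keep_high:
            selected.append(utt_id)
    return selected


# Hypothetical posteriors: "a" is uncertain, "b" is confidently classified.
utts = {"a": [[0.5, 0.5]], "b": [[1.0, 0.0]]}
high = select_utterances(utts, threshold=0.3)
low = select_utterances(utts, threshold=0.3, keep_high=False)
```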

Wu et al. [83] proposed a contrastive learning framework to capture the acoustic variations of dysarthric speech, aiming to obtain robust recognition results of dysarthric speech. This study also explored data augmentation strategies to alleviate the scarcity of speech data. Zaidi et al. [60] explored DNN’s capacity to improve accuracy through incorporating both CNN and LSTM. This research compared the performance of ASR for dysarthric speech based on CNN, LSTM and GMM-HMM, respectively. The experimental results showed that compared with LSTM and GMM-HMM, the DNN approach incorporating CNN and LSTM improved accuracy by 11% and 32%, respectively. Wang et al. [84] proposed using meta-learning to re-initialize the basic model to tackle the mismatch between the statistical distributions of normal and dysarthric speech. This research extended the MAML (model-agnostic meta-learning) and Reptile algorithms to update the basic model, repeatedly simulating adaptation to different speakers with dysarthria. The experimental results on UASpeech showed that, compared with the DNN-HMM-based ASR without fine-tuning and ASR with fine-tuning, the meta-learning approach in reference [84] reduced the relative word error rate by 54.2% and 7.6%, respectively. Shahamiri [85] proposed a new dysarthric-specific ASR system (called Speech Vision), which learned to recognize the shape of the words pronounced by dysarthric speakers. This visual–acoustic modelling of Speech Vision helped eliminate phoneme-related challenges. In addition, in order to address data scarcity, visual data augmentation was also used in this study. The experimental results on the UASpeech database showed that the accuracy was improved by 67%, with the biggest improvement being achieved for severely dysarthric speech.
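The Reptile algorithm mentioned for [84] can be illustrated on a toy problem. In the sketch below, each "speaker" is reduced to a scalar target, the model is a single parameter, and the loss is a squared error; these simplifications, the function names and all hyper-parameter values are assumptions for illustration and do not reproduce the acoustic models or the extensions proposed in [84]. The update shown is the basic Reptile rule: adapt to one task for a few gradient steps, then move the shared initialization part of the way towards the adapted parameters.

```python
def inner_adapt(theta, target, lr=0.1, steps=5):
    """A few gradient steps on the toy loss 0.5 * (phi - target) ** 2."""
    phi = theta
    for _ in range(steps):
        phi -= lr * (phi - target)  # gradient of the toy loss is (phi - target)
    return phi


def reptile(theta, speaker_targets, meta_lr=0.5, epochs=50):
    """Basic Reptile: nudge the initialization towards each adapted solution."""
    for _ in range(epochs):
        for target in speaker_targets:
            phi = inner_adapt(theta, target)
            theta += meta_lr * (phi - theta)
    return theta


# Hypothetical example with two "speakers" whose optimal parameters are 1.0 and 3.0.
init = reptile(0.0, [1.0, 3.0])
```

The learned initialization ends up between the two speaker optima, so a few adaptation steps reach either speaker faster than starting from an unrelated point, which is the motivation for using meta-learning before speaker-specific fine-tuning.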

Hu et al. [86] applied neural architecture search (NAS) to automatically learn two hyper-parameters of factored time-delay neural networks (TDNN-Fs), namely the left and right splicing context offsets and the dimensionality of the bottleneck linear projection at each hidden layer. The NAS techniques used included differentiable neural architecture search (DARTS) to integrate architecture learning with lattice-free MMI training; Gumbel-Softmax and Pipelined DARTS to reduce the confusion over candidate architectures and improve the generalization of architecture selection; and penalized DARTS to incorporate resource constraints and balance the trade-off between system performance and complexity. The test results on the UASpeech database showed that the proposed NAS approach for TDNN-Fs improved the performance of ASR for dysarthric speech.
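The Gumbel-Softmax relaxation used in such architecture search draws an approximately one-hot sample over candidate choices while remaining differentiable with respect to the architecture logits. The sketch below shows the sampling step only, in plain Python; the function name, the example logits and the temperature are illustrative assumptions, and the integration with DARTS and lattice-free MMI training in [86] is not shown.

```python
import math
import random


def gumbel_softmax(logits, temperature=1.0, rng=random):
    """Sample relaxed one-hot weights over candidate architecture choices."""
    # Gumbel(0, 1) noise; the uniform draw is clamped away from 0 to keep log() finite.
    noise = [-math.log(-math.log(max(rng.random(), 1e-12))) for _ in logits]
    scaled = [(logit + g) / temperature for logit, g in zip(logits, noise)]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical example: three candidate bottleneck dimensions with learned logits.
weights = gumbel_softmax([1.0, 2.0, 3.0], temperature=0.5, rng=random.Random(0))
```

Lower temperatures push the sampled weights closer to a hard one-hot choice, which is one way to reduce the ambiguity between candidate architectures during search.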

2.2.3. Language–Lexical Model of ASR for Dysarthric Speech

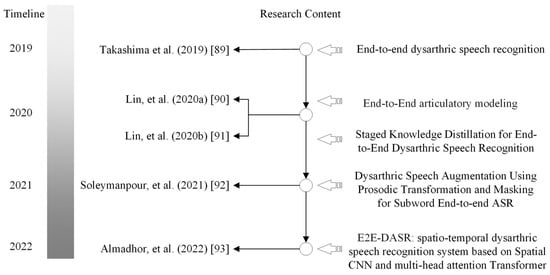

The development trend of technologies along with the timeline for designing language–lexical models of ASR for dysarthric speech are shown in Figure 5.

Figure 5.

The diagram of evolution of language–lexical models for dysarthric speech recognition.

Mengistu and Rudzicz [16] improved the recognition performance of dysarthric speech using the adaptive acoustic and lexical model. The relative word error rate of the pronunciation lexical adaptation (PLA) on a large vocabulary task of over 1500 words was reduced by an average of 8.29%. The experiment was performed on the database including six speakers with moderate-to-severe dysarthria. In addition, the speaker-dependent experimental test results of five-fold cross-validation showed that the average word error rate of PLA-based ASR was relatively reduced by 7.11%.

Seong et al. [17] proposed multiple pronunciation lexical modelling based on a phoneme confusion matrix to improve the performance of ASR for dysarthric speech. First, the system established a confusion matrix according to the recognition results at the phoneme level. Second, pronunciation variation rules were extracted according to the analysis of the confusion matrix. Finally, these rules were applied to build the multiple pronunciation lexical model. The experimental results showed that compared with the model using a group-dependent multiple pronunciation lexicon, this speaker-dependent multiple pronunciation lexicon reduced the relative word error rate by 5.06%. The researchers also used interpolation to integrate the lexicon and the WFST of a context-dependent confusion matrix, aiming at correcting the wrongly recognized phonemes [69]. The experimental results showed that compared with the baseline approach and the error correction approach with a context-independent confusion matrix [69], this approach reduced the word error rate by 13.68% and 5.93%, respectively. In research [17], the baseline ASR system was constructed in the following two steps: (1) the ASR system was trained using WSJCAM0, which was composed of 10,000 sentences spoken by 92 non-dysarthric speakers; (2) the ASR system was adapted and evaluated on the Nemours database.
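The three steps of confusion-matrix-based lexical modelling (build a phoneme confusion matrix, extract variation rules, expand the lexicon) can be sketched as follows. This is a simplified illustration rather than the method of [17]: the probability threshold, the restriction to one substitution per variant, and the toy counts and lexicon are all assumptions.

```python
def variation_rules(confusion_counts, min_prob=0.2):
    """Extract substitution rules {phone: [substitutes]} from confusion counts.

    confusion_counts: {intended_phone: {recognized_phone: count}}
    min_prob:         assumed minimum confusion probability for a rule.
    """
    rules = {}
    for phone, row in confusion_counts.items():
        total = sum(row.values())
        if total == 0:
            continue
        subs = sorted(out for out, count in row.items()
                      if out != phone and count / total >= min_prob)
        if subs:
            rules[phone] = subs
    return rules


def expand_lexicon(lexicon, rules):
    """Add one pronunciation variant per single-phone substitution allowed by the rules."""
    expanded = {}
    for word, phones in lexicon.items():
        variants = [tuple(phones)]
        for i, phone in enumerate(phones):
            for sub in rules.get(phone, []):
                variant = tuple(phones[:i]) + (sub,) + tuple(phones[i + 1:])
                if variant not in variants:
                    variants.append(variant)
        expanded[word] = variants
    return expanded


# Hypothetical speaker who often realizes /t/ as /d/.
counts = {"t": {"t": 6, "d": 4}, "iy": {"iy": 10}}
rules = variation_rules(counts)
lexicon = expand_lexicon({"tea": ["t", "iy"]}, rules)
```

Building the counts from one speaker's recognition results yields a speaker-dependent lexicon, while pooling counts over a group of speakers yields a group-dependent one.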

Caballero-Morales and Trujillo-Romero [71] integrated multiple pronunciation patterns to improve the performance of ASR for dysarthric speech. This approach focused on designing models based on pronunciation patterns. Under this integrated approach, different ASR systems responded to the assigned weight of the constraints of each language model. Test results showed that the accuracy of this integrated method was higher than that of the standard Meta model and of the adaptation model trained using normal speech.

Sriranjani et al. [87] proposed a new approach to identify the pronunciation errors of each speaker with dysarthria, using the state-specific vector (SSV) of an acoustic model trained using phone cluster adaptive training (Phone-CAT). The SSV was a low-dimensional vector estimated for each tied state, with each element in the vector representing the weight of a specific monophone. The SSVs were used to adapt the pronunciation lexicon to the pronunciation of a specific dysarthric speaker. The experimental results on the Nemours database showed that, compared with the ASR system constructed using the standard lexical model, this approach improved the relative accuracy for all speakers by 9%.

Yue et al. [88] used the classic TORGO database to study the influence of the language model (LM) in ASR systems. Using the LM trained using different vocabulary, they analysed the confusion results of the speakers with various degrees of dysarthria. The results showed that the complexity of the optimal LM is highly speaker-dependent.

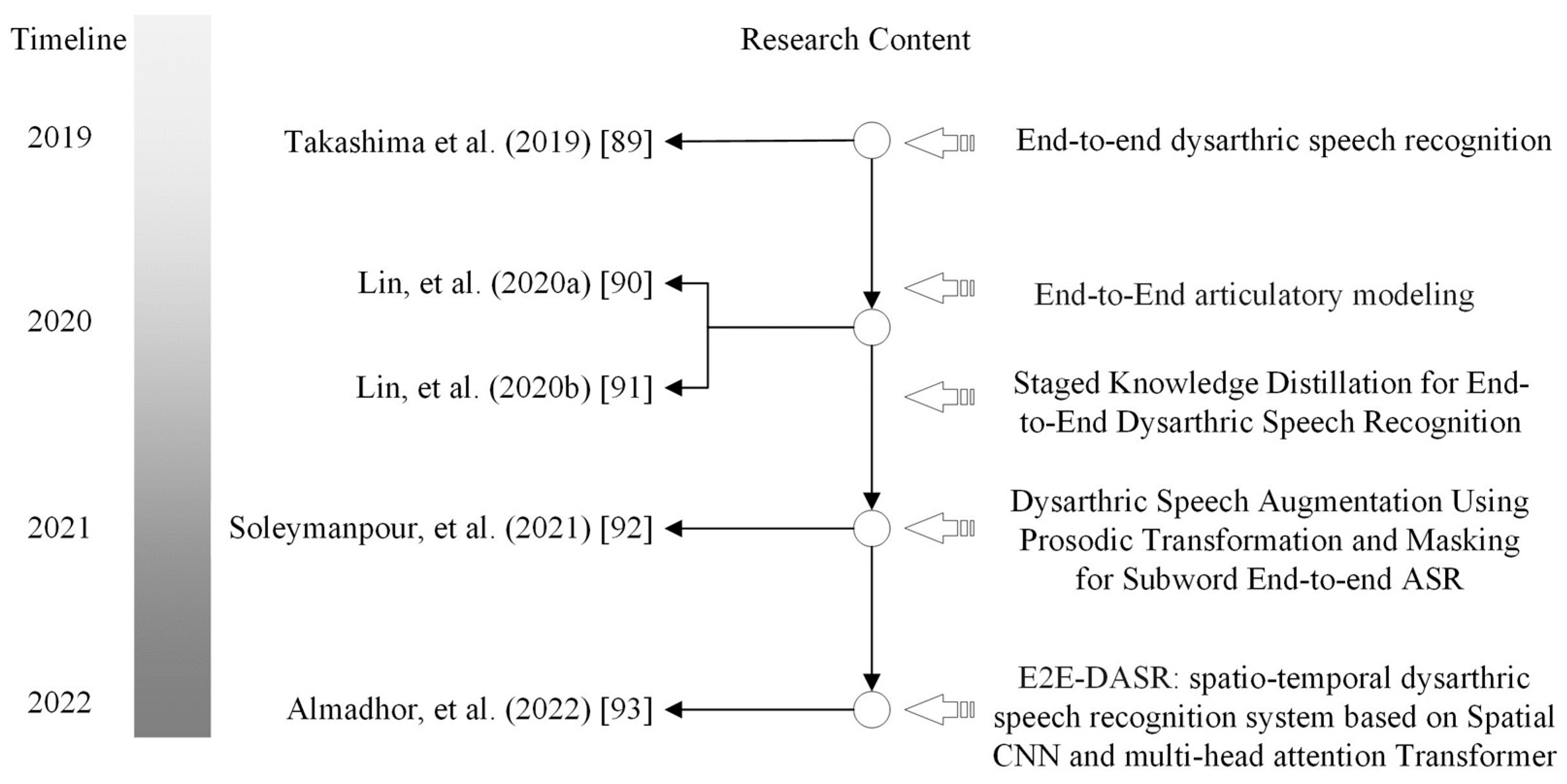

2.2.4. End-to-End ASR for Dysarthric Speech

The development trend of technologies along with the timeline for designing the end-to-end ASR for dysarthric speech are shown in Figure 6.

Figure 6.

The diagram of evolution of end-to-end ASR for dysarthric speech.

End-to-end ASR is a powerful system for processing speech; however, training this system requires a large amount of data. Unfortunately, obtaining dysarthric speech is difficult because the speaker cannot pronounce words fluently. Researchers have devoted themselves to exploring how to improve the accuracy of dysarthric speech recognition based on end-to-end ASR with low-resource speech data. For example, Takashima et al. [89] proposed an end-to-end ASR framework, which jointly encapsulates an acoustic and language model. The acoustic model portion of their framework is shared among speakers with dysarthria, and the language model portion of this framework is assigned to each language, regardless of dysarthria. The experimental results from their self-made Japanese data corpus show that end-to-end ASR can effectively recognize dysarthric speech, although the amount of speech data is very small.

Lin et al. [90] proposed refactoring the parameters of the acoustic model into two layers, only one of which is retrained, so that the limited data can be used effectively to train end-to-end ASR for dysarthric speech. In addition, Lin et al. [91] proposed a staged knowledge distillation method to design an end-to-end ASR and automatic speech attribute transcription system for speakers with dysarthria caused by either cerebral palsy or amyotrophic lateral sclerosis. The experimental results on the TORGO database show that, compared with the baseline method, their proposed method achieves a 38.28% relative reduction in phone error rate.

Unlike the above methods of dealing with dysarthric speech, Soleymanpour et al. [92] proposed a specialized data augmentation approach to enhance the performance of end-to-end ASR based on sub-word models. Their proposed method contains two parts: “prosodic transformation” and “time-feature masking”. The experimental results on the TORGO database show that their proposed method can reduce the character error rate by 11.3% and the word error rate by 11.4%.
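Time-feature masking can be illustrated with a generic masking routine that zeroes one random block of frames and one random band of feature channels in a spectrogram-like matrix. This is only a sketch in the spirit of such augmentation: the function name, the maximum mask widths and the use of zero as the fill value are assumptions, and the prosodic transformation part of [92] is not shown.

```python
import random


def mask_spectrogram(spec, max_time=2, max_feat=2, rng=random):
    """Return a copy of spec with one time block and one feature band set to zero.

    spec: list of frames, each a list of feature values (all frames the same length).
    """
    n_frames, n_feats = len(spec), len(spec[0])
    masked = [row[:] for row in spec]  # copy so the input is left untouched

    t_width = rng.randint(0, min(max_time, n_frames))
    t_start = rng.randint(0, n_frames - t_width)
    f_width = rng.randint(0, min(max_feat, n_feats))
    f_start = rng.randint(0, n_feats - f_width)

    for t in range(t_start, t_start + t_width):      # time mask
        masked[t] = [0.0] * n_feats
    for row in masked:                               # feature mask
        for f in range(f_start, f_start + f_width):
            row[f] = 0.0
    return masked


# Hypothetical 4-frame, 3-channel input.
original = [[1.0, 2.0, 3.0] for _ in range(4)]
augmented = mask_spectrogram(original, rng=random.Random(1))
```

Each call produces a differently masked copy, so one recorded utterance can yield several training examples, which is helpful when dysarthric speech data are scarce.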

Almadhor et al. [93] proposed a spatio–temporal dysarthric speech recognition system based on a spatial CNN and a multi-head attention transformer to visually extract the acoustic features from dysarthric speech. The experimental results on UASpeech show that the best word recognition accuracy (WRA, the metric used to evaluate the proposed method) reached 90.75%, 61.52%, 69.98% and 36.91% for low-level, mild-level, high-level and very-high-level dysarthric speech, respectively. However, overfitting problems limit the generalization of the system.

3. Discussion

This scoping review aimed to summarize the development and trends in ASR technologies for dysarthric speech. Different from the previous surveys [31,32], our survey systematically reviewed ASR technologies for dysarthric speech, including “dysarthric speech databases”, “acoustic features”, “acoustic models”, “language-lexical models” and “end-to-end models”. Our survey discussed the trend of ASR technologies for dysarthric speech along with the development of machine learning and deep learning methods.

Before deep learning, ASR technologies for dysarthric speech developed slowly. In addition, the commercial application of ASR for speakers with dysarthria is not yet mature, and several challenges need to be addressed. For example, the accuracy is not high enough in practical applications, and the performance of trained ASR models is not stable enough. In the era of deep learning, high-performance computation and powerful deep learning methods can effectively improve the accuracy of ASR for dysarthric speech. Nevertheless, the scarcity of data significantly limits further improvement of ASR for dysarthric speech, and an unavoidable problem is that collecting data from speakers with dysarthria is very difficult. In the future, considering cost and limited resources, researchers could make efforts in two directions: “how to build more corpora of dysarthric speech” and “how to further improve the performance of ASR trained with few or zero resources”. In particular, fusing signals from additional modalities could help compensate for the lack of speech data.

4. Conclusions

This scoping survey analysed 63 papers selected from 139 papers in the field of ASR for dysarthric speech. We summarized the development of ASR for dysarthric speech from four aspects: “acoustic feature”, “acoustic model”, “language-lexical model” and “End-to-End ASR for dysarthric speech”. A major challenge is the poor generalization of acoustic models caused by the large variations among dysarthric speakers, which makes it difficult to reduce speaker dependence. In addition, the limited availability of speech data makes it difficult to train ASR models with sufficient data to achieve high accuracy. These challenges pose significant obstacles to the commercialization and widespread adoption of ASR systems for dysarthric speech. This scoping survey provides technical references for researchers in the field of ASR for dysarthric speech, highlighting the need for continued research in this area to address these challenges. Future research should focus on developing more robust and adaptive acoustic models that can account for the diverse vocal characteristics of dysarthric speakers, as well as exploring alternative approaches to data acquisition and representation that can overcome data scarcity issues. By addressing these challenges, ASR systems for dysarthric speech can become more accurate, reliable, and widely accessible.

Author Contributions

Conceptualization, Z.Q.; methodology, Z.Q. and K.X.; writing, original draft preparation, Z.Q.; writing, review and editing, Z.Q. and K.X.; supervision, Z.Q.; project administration, Z.Q.; funding acquisition, Z.Q. All authors have read and agreed to the published version of the manuscript.

Funding

This research was funded by the Humanity and Social Science Youth Foundation of the Ministry of Education of China, grant number 21YJCZH117.

Data Availability Statement

Not applicable.

Conflicts of Interest

The authors declare no conflict of interest.

References

- Rampello, L.; Rampello, L.; Patti, F.; Zappia, M. When the word doesn’t come out: A synthetic overview of dysarthria. J. Neurol. Sci. 2016, 369, 354–360. [Google Scholar] [CrossRef]

- Rauschecker, J.P.; Scott, S.K. Maps and streams in the auditory cortex: Nonhuman primates illuminate human speech processing. Nat. Neurosci. 2009, 12, 718–724. [Google Scholar] [CrossRef] [PubMed]

- Hauser, M.D.; Chomsky, N.; Fitch, W.T. The faculty of language: What is it, who has it, and how did it evolve? Science 2002, 298, 1569–1579. [Google Scholar] [CrossRef] [PubMed]

- Sapir, S.; Aronson, A.E. The relationship between psychopathology and speech and language disorders in neurologic patients. J. Speech Hear. Disord. 1990, 55, 503–509. [Google Scholar] [CrossRef] [PubMed]

- Kent, R.D. Research on speech motor control and its disorders: A review and prospective. J. Commun. Disord. 2000, 33, 391–428. [Google Scholar] [CrossRef] [PubMed]

- Li, M.; Lyden, P.; Brady, M. Aphasia and dysarthria in acute stroke: Recovery and functional outcome. Int. J. Stroke Off. J. Int. Stroke Soc. 2005, 10, 400–406. [Google Scholar] [CrossRef]

- Ramig, L.O.; Sapir, S.; Fox, C.; Countryman, S. Changes in vocal loudness following intensive voice treatment (LSVT®) in individuals with Parkinson’s disease: A comparison with untreated patients and normal age-matched controls. Mov. Disord. 2001, 16, 79–83. [Google Scholar] [CrossRef] [PubMed]

- Bhogal, S.K.; Teasell, R.; Speechley, M. Intensity of Aphasia Therapy, Impact on Recovery. Stroke 2003, 34, 987–993. [Google Scholar] [CrossRef]

- Kwakkel, G. Impact of intensity of practice after stroke: Issues for consideration. Disabil. Rehabil. 2006, 28, 823–830. [Google Scholar] [CrossRef] [PubMed]

- Rijntjes, M.; Haevernick, K.; Barzel, A.; Van Den Bussche, H.; Ketels, G.; Weiller, C. Repeat therapy for chronic motor stroke: A pilot study for feasibility and efficacy. Neuro Rehabil. Neural Repair 2009, 23, 275–280. [Google Scholar] [CrossRef]

- Beijer, L.J.; Rietveld, T. Potentials of Telehealth Devices for Speech Therapy in Parkinson’s Disease. In Diagnostics and Rehabilitation of Parkinson’s Disease; 2011; pp. 379–402. Available online: https://api.semanticscholar.org/CorpusID:220770421 (accessed on 9 September 2023).

- Sanders, E.; Ruiter, M.B.; Beijer, L.; Strik, H. Automatic Recognition of Dutch Dysarthric Speech: A Pilot Study. In Proceedings of the 7th International Conference on Spoken Language Processing, ICSLP2002–INTERSPEECH, Denver, CO, USA, 16–20 September 2002; pp. 661–664. [Google Scholar] [CrossRef]

- Hasegawa-Johnson, M.; Gunderson, J.; Penman, A.; Huang, T. HMM-Based and SVM-Based Recognition of the Speech of Talkers with Spastic Dysarthria. In Proceedings of the IEEE International Conference on Acoustics Speech and Signal Processing Proceedings, Toulouse, France, 14–19 May 2006; pp. III.1060–III.1063. [Google Scholar] [CrossRef]

- Rudzicz, F. Comparing speaker-dependent and speaker-adaptive acoustic models for recognizing dysarthric speech. In Proceedings of the Assets 07: 9th International ACM SIGACCESS Conference on Computers and Accessibility, New York, NY, USA, 15–17 October 2007; pp. 255–256. [Google Scholar] [CrossRef]

- Morales, S.O.C.; Cox, S.J. Modelling Errors in Automatic Speech Recognition for Dysarthric Speakers. EURASIP J. Adv. Signal Process. 2009, 2009, 308340. [Google Scholar] [CrossRef]

- Mengistu, K.; Rudzicz, F. Adapting acoustic and lexical models to dysarthric speech. In Proceedings of the IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Prague, Czech Republic, 22–27 May 2011; pp. 4924–4927. [Google Scholar] [CrossRef]

- Seong, W.K.; Park, J.H.; Kim, H.K. Multiple pronunciation lexical modeling based on phoneme confusion matrix for dysarthric speech recognition. Adv. Sci. Technol. Lett. 2012, 14, 57–60. [Google Scholar]

- Christensen, H.; Cunningham, S.; Fox, C.; Green, P.; Hain, T. A comparative study of adaptive, automatic recognition of disordered speech. In Proceedings of the Interspeech’12: 13th Annual Conference of the International Speech Communication Association, Portland, OR, USA, 9–13 September 2012; pp. 1776–1779. [Google Scholar]

- Shahamiri, S.R.; Salim, S.S.B. Artificial neural networks as speech recognisers for dysarthric speech: Identifying the best-performing set of MFCC parameters and studying a speaker-independent approach. Adv. Eng. Inform. 2014, 28, 102–110. [Google Scholar] [CrossRef]

- Takashima, Y.; Nakashika, T.; Takiguchi, T.; Ariki, Y. Feature extraction using pre-trained convolutive bottleneck nets for dysarthric speech recognition. In Proceedings of the 23rd European Signal Processing Conference (EUSIPCO), Nice, France, 31 August–4 September 2015; pp. 1411–1415. [Google Scholar] [CrossRef]

- Lee, T.; Liu, Y.Y.; Huang, P.W.; Chien, J.T.; Lam, W.K.; Yeung, Y.T.; Law, T.K.T.; Lee, K.Y.S.; Kong, A.P.H.; Law, S.P. Automatic speech recognition for acoustical analysis and assessment of Cantonese pathological voice and speech. In Proceedings of the IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Shanghai, China, 20–25 March 2016; pp. 6475–6479. [Google Scholar] [CrossRef]

- Joy, N.M.; Umesh, S.; Abraham, B. On improving acoustic models for Torgo dysarthric speech database. In Proceedings of the 18th Annual Conference of the International Speech Communication Association (INTERSPEECH 2017), Stockholm, Sweden, 20–24 August 2017; pp. 2695–2699. [Google Scholar] [CrossRef]

- Joy, N.M.; Umesh, S. Improving Acoustic Models in TORGO Dysarthric Speech Database. IEEE Trans. Neural Syst. Rehabil. Eng. 2018, 26, 637–645. [Google Scholar] [CrossRef] [PubMed]

- Sharma, H.V.; Hasegawa-Johnson, M. Acoustic model adaptation using in-domain background models for dysarthric speech recognition. Comput. Speech Lang. 2013, 27, 1147–1162. [Google Scholar] [CrossRef]

- Tu, M.; Wisler, A.; Berisha, V.; Liss, J.M. The relationship between perceptual disturbances in dysarthric speech and automatic speech recognition performance. J. Acoust. Soc. Am. 2016, 140, EL416–EL422. [Google Scholar] [CrossRef]

- Jayaram, G.; Abdelhamied, K. Experiments in dysarthric speech recognition using artificial neural networks. J. Rehabil. Res. Dev. 1995, 32, 162. [Google Scholar]

- Polur, P.D.; Miller, G.E. Experiments with fast Fourier transform, linear predictive and cepstral coefficients in dysarthric speech recognition algorithms using hidden Markov model. IEEE Trans. Neural Syst. Rehabil. Eng. 2005, 13, 558–561. [Google Scholar] [CrossRef]

- Yilmaz, E.; Mitra, V.; Sivaraman, G.; Franco, H. Articulatory and bottleneck features for speaker-independent ASR of dysarthric speech. Comput. Speech Lang. 2019, 58, 319–334. [Google Scholar] [CrossRef]

- Kim, M.; Wang, J.; Kim, H. Dysarthric Speech Recognition Using Kullback-Leibler Divergence-based Hidden Markov Model. In Proceedings of the 17th Annual Conference of the International Speech Communication Association (INTERSPEECH 2016), San Francisco, CA, USA, 8–12 September 2016; pp. 2671–2675. [Google Scholar] [CrossRef]

- Kim, M.; Cao, B.; An, K.; Wang, J. Dysarthric Speech Recognition Using Convolutional LSTM Neural Network. In Proceedings of the 19th Annual Conference of the International Speech Communication Association (INTERSPEECH 2018), Hyderabad, India, 2–6 September 2018. [Google Scholar] [CrossRef]

- Young, V.; Mihailidis, A. Difficulties in automatic speech recognition of dysarthric speakers and implications for speech-based applications used by the elderly: A literature review. Assist. Technol. 2010, 22, 99–112. [Google Scholar] [CrossRef]

- Mustafa, M.B.; Rosdi, F.; Salim, S.S.; Mughal, M.U. Exploring the influence of general and specific factors on the recognition accuracy of an ASR system for dysarthric speaker. Expert Syst. Appl. 2015, 42, 3924–3932. [Google Scholar] [CrossRef]

- Moher, D.; Liberati, A.; Tetzlaff, J.; Altman, D.G.; The PRISMA Group. Preferred reporting items for systematic reviews and meta-analyses: The PRISMA statement. PLoS Med. 2009, 6, e1000097. [Google Scholar] [CrossRef] [PubMed]

- Deller, J.R., Jr.; Liu, M.S.; Ferrier, L.J.; Robichaud, P. The Whitaker database of dysarthric (cerebral palsy) speech. J. Acoust. Soc. Am. 1993, 93, 3516–3518. [Google Scholar] [CrossRef] [PubMed]

- Doddington, G.R.; Schalk, T.B. Speech Recognition: Turning Theory to Practice. IEEE Spectr. 1981, 18, 26–32. [Google Scholar] [CrossRef]

- Johnson, W.; Darley, F.; Spriestersbach, D. Diagnostic Methods in Speech Pathology; Harper & Row: New York, NY, USA, 1963. [Google Scholar]

- Kim, H.; Hasegawa-Johnson, M.; Perlman, A.; Gunderson, J.; Huang, T.; Watkin, K.; Frame, S. Dysarthric speech database for universal access research. In Proceedings of the Ninth Annual Conference of the International Speech Communication Association (Interspeech 2008), Brisbane, Australia, 22–26 September 2008; pp. 1741–1744. [Google Scholar]

- Chongchong, Y.; Xiaosu, S.; Zhaopeng, Q. Multi-Stage Audio-Visual Fusion for Dysarthric Speech Recognition with Pre-Trained Models. IEEE Trans. Neural Syst. Rehabil. Eng. 2023, 31, 1912–1921. [Google Scholar] [CrossRef]

- Rudzicz, F.; Namasivayam, A.K.; Wolff, T. The TORGO database of acoustic and articulatory speech from speakers with dysarthria. Lang. Resour. Eval. 2012, 46, 523–541. [Google Scholar] [CrossRef]

- Enderby, P. Frenchay dysarthria assessment. Br. J. Disord. Commun. 1980, 15, 165–173. [Google Scholar] [CrossRef]

- Yorkston, K.M.; Beukelman, D.R.; Traynor, C. Assessment of Intelligibility of Dysarthric Speech; Pro-Ed: Austin, TX, USA, 1984. [Google Scholar]

- Clear, J.H. The British National Corpus. In The Digital Word: Text-Based Computing in the Humanities; MIT: Cambridge, MA, USA, 1993; pp. 163–187. [Google Scholar]

- Menendez-Pidal, X.; Polikoff, J.B.; Peters, S.M.; Leonzio, J.E.; Bunnell, H.T. The Nemours database of dysarthric speech. In Proceedings of the Fourth International Conference on Spoken Language Processing, ICSLP’96, Philadelphia, PA, USA, 3–6 October 1996; pp. 1962–1965. [Google Scholar] [CrossRef]

- Wrench, A. The MOCHA-TIMIT Articulatory Database. 1999. Available online: https://data.cstr.ed.ac.uk/mocha/ (accessed on 9 September 2023).

- Zue, V.; Seneff, S.; Glass, J. Speech database development at MIT: TIMIT and beyond. Speech Commun. 1990, 9, 351–356. [Google Scholar] [CrossRef]

- Bennett, J.W.; van Lieshout, P.H.H.M.; Steele, C.M. Tongue control for speech and swallowing in healthy younger and older subjects. Int. J. Facial Myol. Off. Publ. Int. Assoc. Orofac. Myol. 2007, 33, 5–18. [Google Scholar] [CrossRef]

- Patel, R. Prosodic Control in Severe Dysarthria: Preserved Ability to Mark the Question-Statement Contrast. J. Speech Lang. Hear. Res. 2002, 45, 858–870. [Google Scholar] [CrossRef] [PubMed]

- Roy, N.; Leeper, H.A.; Blomgren, M.; Cameron, R.M. A Description of Phonetic, Acoustic, and Physiological Changes Associated with Improved Intelligibility in a Speaker with Spastic Dysarthria. Am. J. Speech-Lang. Pathol. 2001, 10, 274–290. [Google Scholar] [CrossRef]

- Webber, S.G. Webber Photo Cards: Story Starters. 2005. Available online: https://www.superduperinc.com/webber-photo-cards-story-starters.html (accessed on 9 September 2023).

- Rudzicz, F. Applying discretized articulatory knowledge to dysarthric speech. In Proceedings of the IEEE International Conference on Acoustics, Speech and Signal Processing, Taipei, Taiwan, China, 19–24 April 2009; pp. 4501–4504. [Google Scholar] [CrossRef]

- Blaney, B.; Wilson, J. Acoustic variability in dysarthria and computer speech recognition. Clin. Linguist. Phon. 2000, 14, 27. [Google Scholar] [CrossRef]

- Fager, S.K. Duration and Variability in Dysarthric Speakers with Traumatic Brain Injury; The University of Nebraska-Lincoln: Lincoln, NE, USA, 2008. [Google Scholar]

- Rudzicz, F. Articulatory knowledge in the recognition of dysarthric speech. IEEE Trans. Audio Speech Lang. Process. 2010, 19, 947–960. [Google Scholar] [CrossRef]

- Christensen, H.; Aniol, M.B.; Bell, P.; Green, P.; Hain, T.; King, S.; Swietojanski, P. Combining in-domain and out-of-domain speech data for automatic recognition of disordered speech. In Proceedings of the 14th Annual Conference of the International Speech Communication Association (INTERSPEECH 2013), Lyon, France, 25–29 August 2013; pp. 3642–3645. [Google Scholar]

- Walter, O.; Despotovic, V.; Haeb-Umbach, R.; Gemmeke, J.F.; Ons, B.; Van Hamme, H. An evaluation of unsupervised acoustic model training for a dysarthric speech interface. In Proceedings of the 15th Annual Conference of the International Speech Communication Association (INTERSPEECH 2014), Singapore, 14–18 September 2014; pp. 1013–1017. [Google Scholar]

- Hahm, S.; Heitzman, D.; Wang, J. Recognizing dysarthric speech due to amyotrophic lateral sclerosis with across-speaker articulatory normalization. In Proceedings of the 6th Workshop on Speech and Language Processing for Assistive Technologies, Dresden, Germany, 11 September 2015; pp. 47–54. [Google Scholar]

- Bhat, C.; Vachhani, B.; Kopparapu, S. Recognition of Dysarthric Speech Using Voice Parameters for Speaker Adaptation and Multi-Taper Spectral Estimation. In Proceedings of the 17th Annual Conference of the International Speech Communication Association (INTERSPEECH 2016), San Francisco, CA, USA, 8–12 September 2016; pp. 228–232. [Google Scholar] [CrossRef]

- Vachhani, B.; Bhat, C.; Das, B.; Kopparapu, S.K. Deep Autoencoder Based Speech Features for Improved Dysarthric Speech Recognition. In Proceedings of the 18th Annual Conference of the International Speech Communication Association (INTERSPEECH 2017), Stockholm, Sweden, 20–24 August 2017; pp. 1854–1858. [Google Scholar] [CrossRef]

- Xiong, F.; Barker, J.; Christensen, H. Deep learning of articulatory-based representations and applications for improving dysarthric speech recognition. In Proceedings of Speech Communication; 13th ITG-Symposium, VDE, Oldenburg, Germany, 16 December 2018; pp. 1–5. [Google Scholar]

- Zaidi, B.F.; Selouani, S.A.; Boudraa, M.; Yakoub, M.S. Deep neural network architectures for dysarthric speech analysis and recognition. Neural Comput. Appl. 2021, 33, 9089–9108. [Google Scholar] [CrossRef]

- Revathi, A.; Nagakrishnan, R.; Sasikaladevi, N. Comparative analysis of Dysarthric speech recognition: Multiple features and robust templates. Multimed. Tools Appl. 2022, 81, 31245–31259. [Google Scholar] [CrossRef]

- Rajeswari, R.; Devi, T.; Shalini, S. Dysarthric Speech Recognition Using Variational Mode Decomposition and Convolutional Neural Networks. Wirel. Pers. Commun. 2022, 122, 293–307. [Google Scholar] [CrossRef]

- Green, P.D.; Carmichael, J.; Hatzis, A.; Enderby, P.; Hawley, M.S.; Parker, M. Automatic speech recognition with sparse training data for dysarthric speakers. In Proceedings of the European Conference on Speech Communication and Technology (EUROSPEECH 2003–INTERSPEECH 2003), ISCA, Geneva, Switzerland, 1–4 September 2003. [Google Scholar]

- Hain, T. Implicit modelling of pronunciation variation in automatic speech recognition. Speech Commun. 2005, 46, 171–188. [Google Scholar] [CrossRef]

- Hawley, M.S.; Enderby, P.; Green, P.; Cunningham, S.; Brownsell, S.; Carmichael, J.; Parker, M.; Hatzis, A.; O’Neill, P.; Palmer, R. A speech-controlled environmental control system for people with severe dysarthria. Med. Eng. Phys. 2007, 29, 586–593. [Google Scholar] [CrossRef]

- Morales, S.O.C.; Cox, S.J. Application of weighted finite-state transducers to improve recognition accuracy for dysarthric speech. In Proceedings of the 9th Annual Conference of the International Speech Communication Association (INTERSPEECH 2008), Brisbane, Australia, 22–26 September 2008. [Google Scholar]

- Selouani, S.A.; Yakoub, M.S.; O’Shaughnessy, D. Alternative speech communication system for persons with severe speech disorders. EURASIP J. Adv. Signal Process. 2009, 2009, 540409. [Google Scholar] [CrossRef]

- Sharma, H.V.; Hasegawa-Johnson, M. State-transition interpolation and MAP adaptation for HMM-based dysarthric speech recognition. In Proceedings of the NAACL HLT 2010 Workshop Speech and Language Processing for Assistive Technologies, Los Angeles, CA, USA, 5 June 2010; pp. 72–79. [Google Scholar]

- Seong, W.K.; Park, J.H.; Kim, H.K. Dysarthric speech recognition error correction using weighted finite state transducers based on context-dependent pronunciation variation. In Proceedings of the ICCHP’12: 13th International Conference Computers Helping People with Special Needs, Linz, Austria, 11–13 July 2012; Part II. pp. 475–482. [Google Scholar] [CrossRef]

- Shahamiri, S.R.; Salim, S.S.B. A Multi-Views Multi-Learners Approach Towards Dysarthric Speech Recognition Using Multi-Nets Artificial Neural Networks. IEEE Trans. Neural Syst. Rehabil. Eng. 2014, 22, 1053–1063. [Google Scholar] [CrossRef]

- Caballero-Morales, S.O.; Trujillo-Romero, F. Evolutionary approach for integration of multiple pronunciation patterns for enhancement of dysarthric speech recognition. Expert Syst. Appl. 2014, 41, 841–852. [Google Scholar] [CrossRef]