4.4.1. Quantitative Analysis

In this paper, four representative algorithms, Block-Matching and 3D Filtering (BM3D) [21], DnCNN [23], Wasserstein Generative Adversarial Network with VGG Loss (WGAN-VGG) [28], and Residual-Generative Adversarial Network (Re-GAN) [42], are used for comparison. Gaussian white noise with noise intensity σ of 15, 25 and 50 is added to the BSD68 dataset. The PSNR and SSIM of the different image denoising methods are listed in Table 3. For a more intuitive representation, Figure 7 shows the PSNR values of the different algorithms as a bar chart for each noise intensity. The reported PSNR and SSIM values are averages over all images in the dataset. The data in Table 3 show that the proposed algorithm generally outperforms the comparison algorithms across the tested noise intensities, with both PSNR and SSIM improved to varying degrees.
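
The evaluation protocol described above can be illustrated with a minimal sketch. It assumes 8-bit grayscale images (peak value 255), treats the noise intensity as the standard deviation of zero-mean Gaussian noise, and clips the noisy image back to [0, 255]; the clipping step and the function names `add_gaussian_noise`, `psnr`, and `average_psnr` are assumptions made for illustration, not details taken from the paper.

```python
import numpy as np

def add_gaussian_noise(image, sigma, rng):
    """Add zero-mean Gaussian white noise with standard deviation `sigma` (0-255 scale)."""
    noisy = image.astype(np.float64) + rng.normal(0.0, sigma, size=image.shape)
    return np.clip(noisy, 0.0, 255.0)  # assumption: keep values in the 8-bit range

def psnr(reference, estimate, peak=255.0):
    """Peak signal-to-noise ratio in dB between two images of the same shape."""
    diff = reference.astype(np.float64) - estimate.astype(np.float64)
    mse = np.mean(diff ** 2)
    if mse == 0.0:
        return float("inf")
    return float(10.0 * np.log10(peak ** 2 / mse))

def average_psnr(references, estimates):
    """Mean PSNR over paired lists of images, as used for dataset-level scores."""
    return float(np.mean([psnr(r, e) for r, e in zip(references, estimates)]))

# Stand-in data: a flat gray image instead of a real BSD68 image.
rng = np.random.default_rng(0)
clean = np.full((64, 64), 128.0)
for sigma in (15, 25, 50):
    noisy = add_gaussian_noise(clean, sigma, rng)
    print(f"sigma={sigma}: PSNR of noisy input = {psnr(clean, noisy):.2f} dB")
```

In an actual evaluation, `estimate` would be the output of a denoiser rather than the noisy input, and `average_psnr` would be applied over every image in the test set.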

When the noise intensity is set to σ = 15, the proposed algorithm yields an average increase of 9.05 dB in PSNR after denoising. Compared with BM3D, the PSNR and SSIM values increase by 3.7% and 2.3%, respectively; compared with the baseline DnCNN, by 2.3% and 1.0%; compared with WGAN-VGG, by 1.5% and 0.8%; and compared with Re-GAN, by 0.7% and 0.1%. These results show that even at relatively low noise intensity, where the differences between deep learning methods are small, RCA-GAN consistently exhibits strong denoising capability.

When the noise intensity is set to σ = 25, RCA-GAN clearly outperforms the first three denoising algorithms. Compared with BM3D, DnCNN, and WGAN-VGG, the PSNR increases by 10.6%, 4.0%, and 1.7%, respectively. The average PSNR of RCA-GAN is marginally lower than that of Re-GAN, which suggests that the pixel-level differences between the two results are small. In terms of structural similarity, however, RCA-GAN surpasses Re-GAN by 0.4%, indicating better visual quality in the images denoised by the proposed algorithm.
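
Because the comparison above turns on structural similarity rather than pixel error, a simplified sketch of SSIM may help. The version below computes the SSIM formula once from global image statistics; standard implementations instead average the same expression over local windows, so this is a deliberate simplification. The function name `global_ssim` and the constants `k1 = 0.01`, `k2 = 0.03` (the commonly used defaults) are assumptions, and the paper's own SSIM settings are not given in this section.

```python
import numpy as np

def global_ssim(x, y, peak=255.0, k1=0.01, k2=0.03):
    """Single-window SSIM computed from global means, variances, and covariance."""
    x = x.astype(np.float64)
    y = y.astype(np.float64)
    c1 = (k1 * peak) ** 2  # stabilizes the luminance term
    c2 = (k2 * peak) ** 2  # stabilizes the contrast/structure term
    mu_x, mu_y = x.mean(), y.mean()
    var_x = np.mean((x - mu_x) ** 2)
    var_y = np.mean((y - mu_y) ** 2)
    cov_xy = np.mean((x - mu_x) * (y - mu_y))
    numerator = (2.0 * mu_x * mu_y + c1) * (2.0 * cov_xy + c2)
    denominator = (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2)
    return float(numerator / denominator)

# Stand-in data: a random texture and a noisy copy of it.
rng = np.random.default_rng(0)
clean = rng.integers(0, 256, size=(64, 64)).astype(np.float64)
noisy = np.clip(clean + rng.normal(0.0, 25.0, size=clean.shape), 0.0, 255.0)
print(f"SSIM(clean, clean) = {global_ssim(clean, clean):.4f}")
print(f"SSIM(clean, noisy) = {global_ssim(clean, noisy):.4f}")
```

Two results with nearly identical PSNR can still differ in SSIM, since SSIM depends on how the error correlates with image structure rather than on its mean squared magnitude alone.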

At a noise intensity of σ = 50, RCA-GAN shows notably better content integrity and structural similarity. Compared with BM3D, the PSNR and SSIM values increase by 8.2% and 16.2%, respectively; compared with the baseline DnCNN, by 5.9% and 6.3%; compared with WGAN-VGG, by 2.6% and 2.5%; and compared with Re-GAN, by 2.4% and 1.7%. Unlike the low-noise case, the advantage of the proposed approach over the other deep learning methods is considerably larger at high noise intensity.
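
The percentage improvements quoted in this subsection can be read as relative gains over a baseline score. The sketch below shows that arithmetic under the assumption that each percentage is computed as (ours − baseline) / baseline × 100; the paper does not state the formula in this section, and the numbers in the example are placeholders rather than values from Table 3.

```python
def relative_gain_percent(ours, baseline):
    """Relative improvement of `ours` over `baseline`, in percent."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return 100.0 * (ours - baseline) / baseline

# Placeholder scores for illustration only (not taken from Table 3).
example_psnr_baseline = 30.0
example_psnr_ours = 33.0
print(relative_gain_percent(example_psnr_ours, example_psnr_baseline))
```

Note that a relative gain in PSNR is a percentage of a logarithmic (dB) quantity, so it should not be read as a percentage reduction in mean squared error.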

Apart from common metrics such as PSNR and SSIM, time complexity is also an important criterion for evaluating image denoising algorithms. To compare the complexity of the different algorithms, multiple experiments were conducted on images with a noise standard deviation of 15 and a resolution of 256 × 256 pixels, and the execution times were averaged. Table 4 presents the average runtime of the different denoising algorithms. In a CPU runtime environment, RCA-GAN reduces the average denoising time by 8.06 s compared to BM3D, by 2.02 s compared to DnCNN, by 1.15 s compared to WGAN-VGG, and by 0.58 s compared to Re-GAN. In a GPU runtime environment, RCA-GAN improves average denoising efficiency by 35.3% compared to DnCNN, by 26.7% compared to WGAN-VGG, and by 21.4% compared to Re-GAN. Compared with the other deep learning denoising algorithms, our algorithm improves effective feature utilization by incorporating an attention mechanism while reducing the number of feature extraction layers and residual blocks. This reduces computational complexity relative to traditional convolutional layers and increases processing speed, so our algorithm achieves the best runtime among the compared methods.
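
A runtime comparison of this kind can be sketched as follows. The helper names `average_runtime` and `time_reduction_percent`, the warm-up run, and the stand-in denoiser are all assumptions for illustration; in particular, reading "efficiency improvement" as the relative reduction in average runtime is one plausible interpretation, not a definition given in the paper.

```python
import statistics
import time

import numpy as np

def average_runtime(denoise_fn, image, repeats=10, warmup=1):
    """Mean wall-clock time in seconds of `denoise_fn(image)` over `repeats` runs."""
    for _ in range(warmup):
        denoise_fn(image)  # untimed run so one-off setup cost is excluded
    timings = []
    for _ in range(repeats):
        start = time.perf_counter()
        denoise_fn(image)
        timings.append(time.perf_counter() - start)
    return statistics.mean(timings)

def time_reduction_percent(ours_seconds, baseline_seconds):
    """Relative reduction in runtime of `ours` with respect to `baseline`, in percent."""
    return 100.0 * (baseline_seconds - ours_seconds) / baseline_seconds

# Stand-in denoiser and a 256 x 256 test image, matching the resolution in the text.
rng = np.random.default_rng(0)
image = rng.normal(128.0, 15.0, size=(256, 256))
stand_in_denoiser = lambda img: np.clip(img, 0.0, 255.0)
print(f"average runtime: {average_runtime(stand_in_denoiser, image):.6f} s")
```

When timing on a GPU, the device typically has to be synchronized before the timer is stopped, because kernel launches are asynchronous; otherwise the measured time can understate the true cost.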

Although our algorithm excels in average denoising time per image compared with the other algorithms, its training time is relatively long. This is primarily due to the deep GAN architecture, which demands a substantial number of training iterations and computational resources. Training time may grow significantly for high-resolution images or large datasets. While this is a limitation of our algorithm, it is a challenge shared by current deep learning methods in general. Future work may focus on optimizing the training process, improving computational efficiency, and exploring faster model architectures to make the algorithm more practical, and we encourage further research in this direction to overcome these time constraints.

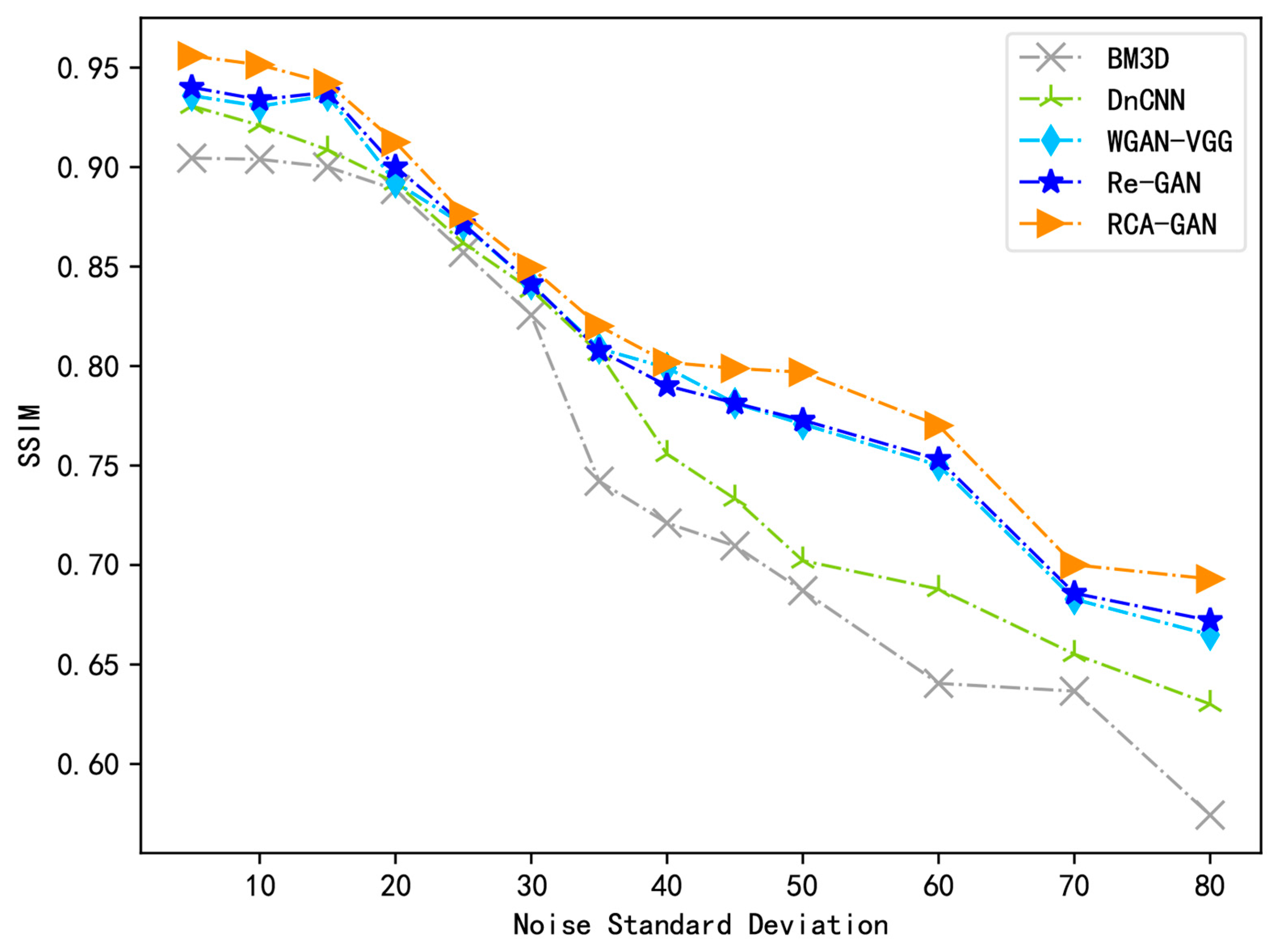

To ensure the rigor of the experiment, this study also assesses the denoising effectiveness of RCA-GAN over a range of noise intensities. First, noise is added incrementally to the same test set image, starting from a noise intensity of σ = 5 and increasing to a maximum of σ = 80 (beyond which image restoration becomes nearly impossible), which yields a total of 13 noisy images. Next, each of the five denoising models considered in this paper is applied to each of these noisy images. Finally, the PSNR and SSIM values of the denoised images are computed to objectively evaluate the denoising capability of the RCA-GAN model. Figure 8 and Figure 9 show the PSNR and SSIM curves of each model over this range of noise intensities.
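
The sweep over noise intensities can be sketched as below. The text gives only the endpoints (5 and 80) and the number of noisy images (13), so the evenly spaced grid produced by `np.linspace` is an assumption, as are the function names. The two denoisers are simple stand-ins (an identity mapping and a 3 × 3 mean filter), not the models compared in the paper.

```python
import numpy as np

def psnr(reference, estimate, peak=255.0):
    """Peak signal-to-noise ratio in dB."""
    mse = np.mean((reference - estimate) ** 2)
    return float("inf") if mse == 0.0 else float(10.0 * np.log10(peak ** 2 / mse))

def box_filter_3x3(image):
    """Stand-in denoiser: 3 x 3 mean filter with edge replication."""
    padded = np.pad(image, 1, mode="edge")
    height, width = image.shape
    total = np.zeros((height, width), dtype=np.float64)
    for dy in range(3):
        for dx in range(3):
            total += padded[dy:dy + height, dx:dx + width]
    return total / 9.0

def sweep_noise_levels(clean, denoisers, sigmas, seed=0):
    """Return {name: [PSNR at each sigma]} for every denoiser on one test image."""
    rng = np.random.default_rng(seed)
    results = {name: [] for name in denoisers}
    for sigma in sigmas:
        noisy = np.clip(clean + rng.normal(0.0, sigma, size=clean.shape), 0.0, 255.0)
        for name, denoise in denoisers.items():
            results[name].append(psnr(clean, denoise(noisy)))
    return results

sigmas = np.linspace(5.0, 80.0, 13)  # assumption: 13 evenly spaced levels
clean = np.full((64, 64), 128.0)     # stand-in for a real test image
curves = sweep_noise_levels(
    clean, {"identity": lambda img: img, "box3x3": box_filter_3x3}, sigmas
)
for name, values in curves.items():
    print(name, [round(v, 1) for v in values])
```

Plotting each list in `curves` against `sigmas` gives one curve per model, which is the form of comparison described for the PSNR and SSIM figures.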

The experimental results show that RCA-GAN achieves higher PSNR and SSIM values than the other benchmark algorithms. This indicates that, with the incorporation of the mixed attention mechanism, RCA-GAN preserves image details better while effectively removing noise.

4.4.2. Qualitative Analysis

When evaluating denoising effects, subjective impressions are as important as objective experimental data. To comprehensively assess the differences between our proposed approach and the comparison algorithms, we selected two images, Barbara and Boats, from the CSet8 test set. Gaussian white noise at two levels (σ = 25, 50) was added to these images, and the denoising results are shown in Figure 10 and Figure 11.

Figure 10 shows the denoising results for the Boats image at a noise intensity of σ = 25, alongside the results of the other denoising algorithms. The traditional denoising algorithm BM3D removes noise from the entire image, but the processed image is noticeably blurred and loses important detail. DnCNN and WGAN-VGG introduce a blurred, over-smoothed effect along the edges of the image. Re-GAN preserves more detail, and its visual results closely resemble those of the algorithm presented in this paper. Nevertheless, in the enlarged detail areas, the proposed algorithm better retains image texture, edge definition, and other critical details, leading to better visual quality.

Figure 11 shows the denoising results for the House image at a noise intensity of σ = 50 in comparison with the other benchmark algorithms. From Figure 11, it is evident that as noise intensity increases, the traditional denoising algorithm BM3D struggles to remove the noise effectively. DnCNN mistakenly preserves some of the noise as useful information. WGAN-VGG and Re-GAN, though proficient at removing noise, over-smooth the image structure, which causes a loss of fine texture detail in the denoised images. In contrast, our proposed algorithm, RCA-GAN, retains more fine detail and gives a clearer overall visual impression that closely resembles the original image. This demonstrates that our algorithm removes noise effectively while preserving more image texture, showing robust denoising performance.

In addition, three monochrome images from the BSD68 dataset, Man, Traffic, and Alley, are selected to test and visualize the RCA-GAN model. The denoising results for these images are presented in Figure 12, Figure 13 and Figure 14.

As shown in the sleeve region of the Man image in Figure 12g, RCA-GAN achieves significantly higher image clarity and texture quality after denoising at low noise intensity than DnCNN and WGAN-VGG. Likewise, in the leafy region at the top-left corner of the Traffic image in Figure 13g, as well as in the outline of the white car and the shadows of the car door handle, RCA-GAN retains pixel detail well under medium noise intensity. The enlarged wall section of the Alley image in Figure 14g further shows that the RCA-GAN model excels at restoring texture details and preserving edge structures. Overall, the experimental results show that the RCA-GAN model reconstructs intricate image features and texture characteristics while effectively reducing noise, thereby improving the quality and content accuracy of the generated image.

4.4.4. Analysis of Different Weight Coefficients in the Loss Function

In this experiment, the optimal combination of weighting factors for the Multimodal Loss Function was determined through repeated experiments. The weighted terms are the perceptual feature loss, pixel space content loss, texture loss, and adversarial loss. The set of weights that gave the best performance across the evaluation metrics was 1.0, 0.01, 0.001, and 1.0 for these four terms, respectively. A quantitative comparison of denoising results with different weight coefficients for the loss function is presented in Table 6.
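
The way the four weights enter the objective can be sketched as a weighted sum. This assumes the Multimodal Loss Function combines its terms linearly, which is the usual construction but is not restated in this section; the class `LossWeights`, the function `total_loss`, and the scalar inputs are illustrative names, and the individual loss values would in practice come from the network and its discriminator.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class LossWeights:
    """Weights reported as the best-performing combination in the text."""
    perceptual: float = 1.0
    content: float = 0.01
    texture: float = 0.001
    adversarial: float = 1.0

def total_loss(perceptual, content, texture, adversarial, weights=LossWeights()):
    """Weighted sum of the four loss terms (assumed linear combination)."""
    return (
        weights.perceptual * perceptual
        + weights.content * content
        + weights.texture * texture
        + weights.adversarial * adversarial
    )

# Placeholder loss values for illustration only.
print(total_loss(perceptual=0.8, content=120.0, texture=350.0, adversarial=0.6))

# Varying one weight at a time, as in the weight-coefficient comparison.
heavier_texture = LossWeights(texture=0.01)
print(total_loss(0.8, 120.0, 350.0, 0.6, weights=heavier_texture))
```

Because the raw loss terms can differ by orders of magnitude, the small weights on the content and texture terms largely act to bring all four contributions onto a comparable scale.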

The adjustment of the perceptual feature loss weight impacts the perceptual quality and structural characteristics of the image. A lower weight leads to insufficient optimization of the network for the perceptual features of the input image, thereby affecting the quality and structural characteristics of the reconstructed image. Conversely, increasing the weight of the perceptual feature loss encourages the model to focus more on pixel-level details. Nevertheless, this might introduce some high-frequency noise, resulting in a decreased PSNR value.

When the weight of pixel space content loss is increased, the PSNR values demonstrate a relatively stable trend, signifying the preservation of pixel-level similarity. Nonetheless, we observe alterations in SSIM values, indicating a minor compromise in the model’s capacity to maintain the image’s structural and semantic information. This outcome highlights the delicate balance in weight settings, in which an elevation in pixel space content loss weight aids in conserving pixel-level similarity while concurrently diminishing structural similarity in the image.

Texture loss is intended to capture the details and textures within an image, and an increase in its weight encourages the model to focus more on these specific features. However, this adjustment can introduce high-frequency noise, leading to a reduction in pixel-level similarity and causing fluctuations in pixel-level comparisons. Simultaneously, we also observed slight variations in SSIM values, suggesting that the model’s treatment of the image’s structural and semantic information was only minimally affected. Nevertheless, these variations did not demonstrate a significant trend.

Adjusting the weight of the adversarial loss produced no significant change in image reconstruction quality. However, the decrease in SSIM was relatively more pronounced, indicating some reduction in the visual quality of the images. This is because the adversarial loss plays a crucial role in the image generation process and is essential for maintaining the visual and perceptual quality of the images. Fine-tuning the weight of the adversarial loss is therefore critical to improving the image generation quality of the model.