Abstract

With the growing robustness of modern power systems, load curtailment events are becoming increasingly rare. Hence, simulating these events is a challenge in adequacy indices assessment. Because load curtailment events are so rare, the standard Monte Carlo simulation (MCS) estimator of adequacy indices is impractical. Therefore, this paper introduces a framework based on the enhanced cross-entropy-based importance sampling (ECE-IS) method for computing the adequacy indices. The framework comprises two stages. The first stage uses the proposed ECE-IS method to identify the samples, or states, of the nodal generation and load that contribute most to the adequacy indices estimators. In the second stage, the density of the input variables conditional on the load curtailment domain, obtained in the first stage, is used to compute the nodal and system adequacy indices. The performance of the ECE-IS method is verified through a comparison with the standard MCS method and with recent rare-event simulation techniques from the literature. The results confirm that the proposed method provides accurate estimates of the nodal and system adequacy indices (loss of load probability (LOLP) and expected power not supplied (EPNS)) with an appropriate convergence value and low computation time.

1. Introduction

Power system reliability evaluation plays a crucial role in the decision-making process of power system development planning. The reliability evaluation process invariably involves consideration of adequacy and security concepts [1,2]. Security is defined as “the measure of how an electric power system can withstand sudden disturbances such as electric short circuits or unanticipated loss of system components”, while adequacy is defined as “a measure of the ability of a bulk power system to supply the aggregate electric power and energy requirements of the customers within component ratings and voltage limits, taking into account scheduled and unscheduled outages of system components and the operating constraints imposed by operations”. Technically, the two concepts are strongly related, but they differ considerably in their economic aspects [3]. This paper concentrates on the adequacy evaluation problem. As a rule, three hierarchical levels (HL) of a power system are distinguished in the part of reliability related to adequacy [1]. HL1 is associated with the total system generation. HL2 includes transmission facilities in addition to generation facilities. HL3 considers the full power system structure down to individual consumer points. These hierarchical levels require power system models of different levels of detail. Here, the adequacy evaluation problem is considered for HL2.

In power system adequacy analysis, the goal is to assess the effects of uncertain input variables (generations and loads) on the adequacy of a power system. The main adequacy indices are the probability and the mathematical expectation of a power shortage (load curtailment) [4]. In practice, Monte Carlo simulation (MCS) is widely used for calculating adequacy indices because of its advantages [5]. It can handle high-dimensional problems, and it converges regardless of the complexity of the power system model. It is also highly distributable, which allows the calls to the model to be run in parallel. However, the MCS method samples over the entire input space, no matter where the load curtailment domain is. Power flow analysis is then needed for each sampled state. Depending on this analysis, corrective actions such as load curtailment and generating unit rescheduling are applied in the case of a power shortage. The corrective actions are determined by an optimization algorithm whose objective is to minimize the total amount of load curtailment subject to the operating limits of generating units and transmission circuits and to the power flow equations. Because the evaluation of a state involves several procedures, the computational burden can be very heavy. Moreover, since load curtailment events are rare in modern power systems, the MCS method requires a great number of samples before enough load curtailment events are observed, so a long computation time is needed to reach a proper convergence criterion on the estimate of the load curtailment probability. Such a computational burden is often unaffordable in practice because of the long runtime of a state evaluation for a power system model. Hence, two research tracks aim to overcome the MCS computational burden.
The first research track develops high-performance programming paradigms [6,7,8,9,10,11,12,13,14,15,16,17,18,19,20] to reduce the computation time of state evaluation. The second track derives more efficient sampling techniques [21,22,23,24,25,26,27,28,29,30,31,32,33,34,35,36] that reduce the number of states to be evaluated by concentrating the sampling effort in the regions of interest. This significantly reduces the number of calls to the power system model.

Parallel computing (PAC) [6,7,8], object-oriented programming (OOP) [9], and agent-based technology (ABT) [10,11] are examples of programming paradigms that can be used to reduce the MCS computational burden. However, the relationship between the number of computing resources and the computational time is close to linear [7]. Thus, the hardware could still be expensive. To overcome this shortcoming, advanced MCS-based techniques have been developed, such as pattern classification techniques [12,13,20,21,22] and metamodels or surrogate models [23,24,25,26], which build an equivalent model of the computer code to speed up the computations. Pattern classification techniques are used to categorize the states of a power system (load curtailment or not). Examples include artificial immune recognition systems [13], artificial neural networks [12], least squares support vector machines [20], and deep-learning and multi-label classification methods [21,22]. However, the computational accuracy and flexibility of the model rely on the size of the training sample. In structural safety analysis, various types of surrogate models [23,24,25,26] have been proposed to replace the limit-state function, which expresses the performance of a system, with an equivalent metamodel-based approximation. These techniques have shown a significant reduction in the computational burden of the MCS method. However, the reliability results depend on the accuracy of the surrogate model, which therefore needs to be ensured to avoid adding uncertainty to the already existing input uncertainty. The main disadvantage of this technique is the difficulty of measuring the impact of the modeling errors on the reliability results.
Compared with the abovementioned methods, sampling-based methods have the advantages of being insensitive to the complexity of limit-state functions, avoiding errors from approximations of the limit-state function, and being straightforward to apply. Thus, more efficient sampling techniques for overcoming the MCS computation burden are adopted in this paper.

In the context of reducing the number of states that need to be evaluated, variance reduction techniques make it possible to extract the set of states that contribute significantly to the evaluation of adequacy indices. Since the squared coefficient of variation of the MCS estimate is approximately inversely proportional to the failure probability [5], variance reduction methods [21,22,23,24,25,26,27,28,29,30,31,32,33,34,35,36] have been developed to reduce the variance of the estimate by generating samples that explore the rare load curtailment events, thereby shortening the computation time needed to obtain an accurate estimate of the adequacy indices. The term variance reduction covers various techniques, such as subset simulation [16], importance sampling [17,18,19], control variates [27], antithetic variates [28], stratified sampling [29], line sampling [30,31], and directional sampling [32]. For the sake of conciseness, only subset simulation (SS) and importance sampling (IS) are reviewed in this paper, as they are the most substantial variance reduction approaches applied in adequacy studies. SS is based on splitting the failure domain into a series of partial failure domains. This allows the probability of the failure event to be expressed as a product of conditional probabilities of the partial failure events. The main advantage of SS is its capability to handle complex limit-state functions (e.g., nonlinear, with possibly multiple failure regions). On the other hand, SS has some drawbacks. Firstly, the variance of the estimator cannot be calculated directly by an analytical formula, as in the MCS and IS techniques, but must be evaluated by repetition. Secondly, even if SS provides a variance reduction compared to MCS, the number of samples needed to achieve convergence is larger than that needed with IS techniques [16]. IS techniques sample from an alternative density, called the importance sampling density (ISD); the theoretically optimal ISD is the density of the input variables conditional on the failure domain.
The optimal selection of the ISD results in zero variance of the estimate of the failure probability. However, in practice, sampling from the theoretically optimal ISD is not feasible, because it requires knowing the failure probability and the failure domain in advance. To overcome this problem, the cross-entropy (CE) [33,34] and sequential importance sampling (SIS) [35,36] techniques have been applied to approximate the optimal ISD in a sequential manner.

The CE method determines the ISD iteratively by defining a sequence of more frequent events (intermediate failure events) in several probability spaces that gradually approach the target failure event of small probability. The densities of the intermediate failure events are chosen from a parametric family of densities. Typically, this family is the same as that of the density of the input random variables, and the initial sampling density is the original density of the random variables. For each intermediate failure event, the CE method identifies the parameters of the chosen density model by minimizing the Kullback–Leibler (KL) divergence between the optimal ISD of that intermediate failure event and the chosen parametric density. The optimal ISD of each intermediate failure event is defined by an intermediate failure threshold, which is estimated such that a prescribed fraction of the limit-state function values of the samples drawn from the parametric density fitted at the previous sampling step lie beyond this threshold. Starting from the initial sampling density, the density parameters are updated until the threshold passes zero (i.e., at least the prescribed fraction of the limit-state function values of the samples fall in the actual failure domain). At that point, the target optimal ISD is approximated well enough by the current parametric density. One advantage of the CE-IS approach is that analytical updating formulas can be derived for the density parameters when the probability densities belong to the natural exponential family. Another advantage is that, as in the standard MCS, the estimation error is directly controlled through the estimator of the variance [34]. However, the major difficulty is to construct efficient intermediate densities for the adaptive sampling process so that it approaches the target optimal ISD.

This paper presents an improved version of the CE-IS that incorporates two enhancements. The first is a new updating scheme for the parameters of the intermediate density. In the proposed method, the indicator function of the intermediate failure events is defined by a smooth approximation function instead of the step function used in the traditional CE-IS method. This allows all the samples from the intermediate sampling levels to be exploited in the density parameter update, whereas the traditional CE-IS method uses only a small portion of the samples. In addition, the optimal ISD of the intermediate failure events shifts smoothly towards the target optimal ISD of the small failure event. This effective use of the intermediate samples leads to a better estimate of the density parameters and hence to a smaller sampling error in the corresponding probability estimate. The second enhancement concerns the stopping criterion, which is the coefficient of variation of the weights of the target optimal ISD with respect to the smooth approximation of the optimal ISD of the intermediate failure events; this improves the robustness of the method's convergence. These modifications contribute to obtaining an accurate optimal ISD of the nodal generations and loads, so that the nodal and system adequacy indices are computed accurately. We compare the performance of the proposed method with the traditional CE-IS and with recently proposed techniques from the literature, namely sequential importance sampling and subset simulation. The paper is structured as follows. Section 2 illustrates the principles of adequacy assessment in power systems and defines the mathematical objective function and constraints of the optimal power flow (OPF) algorithm. Section 3 describes the framework of adequacy indices evaluation based on the proposed ECE-IS method and presents the mathematical description of the ECE-IS.
Section 4 presents the case study and numerical results. Section 5 discusses the results. Section 6 outlines the main findings.

2. Principles of Adequacy Evaluation in Power Systems

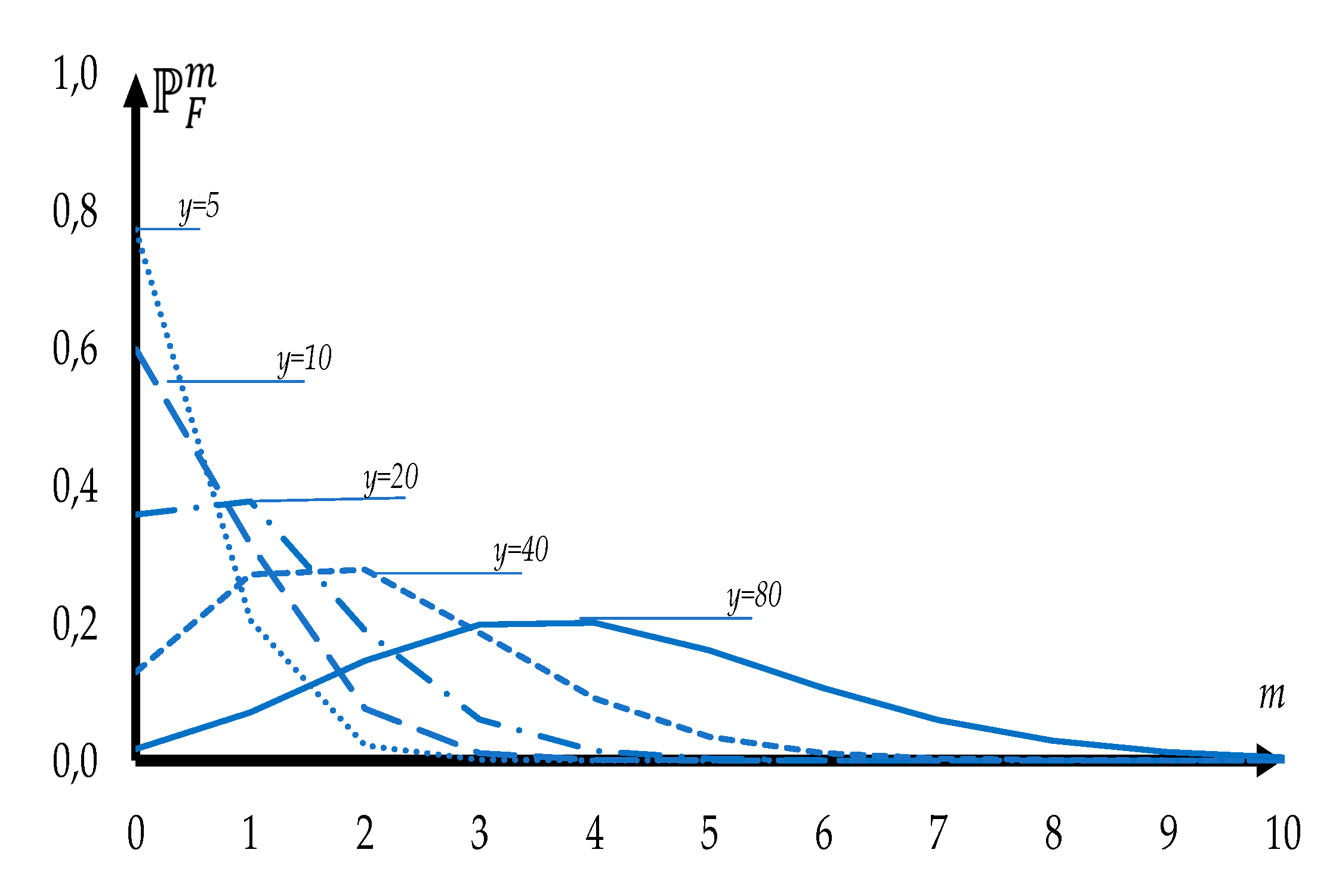

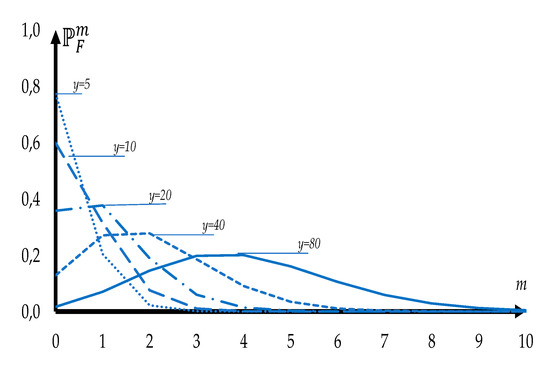

For adequacy evaluation purposes, a bulk power system model is normally represented by a group of areas or market nodes connected by transmission lines. The uncertainties of the total available generation capacity and the total required load are then described for each area or node. The available capacity of the generators in each node can be described by a binomial distribution. Figure 1 shows the binomial PDF for the number of failed generators in a power plant whose generators all have the same probability of failure. The probability of failure is calculated using Equation (1).

Figure 1.

Binomial PDF for different numbers of generators.

For a large number of generators, according to the de Moivre–Laplace theorem, the binomial distribution can be approximated by a Gaussian one. This approach is adopted in this paper. The Gaussian distribution is also used to describe the aggregated load connected to each node. To calculate realistic adequacy indices for each node, both the load curtailment strategy and the physical constraints must be taken into account [37,38]. For each sample within the simulation method, a power flow model must be solved to determine the state of the transmission system. If any transmission lines are outside their loading limits, an OPF algorithm is executed to apply corrective actions (e.g., generation rescheduling and/or load curtailment) by solving an optimization problem. The objective of the optimization problem is to minimize the total amount of load curtailment subject to the operating limits of generating units and transmission circuits and to the power flow equations. Because the evaluation of a state involves several procedures, a linear representation of the power flow equations is often adopted in HL2 adequacy studies to significantly decrease the computational effort [39]. This representation cannot capture the impact of bus voltages and reactive power on the system adequacy. The simplification is acceptable, since adequacy studies mainly deal with long-term power system analysis [16]. Hence, a DC-power-flow-based optimization method is used because, being a linear model, it is simpler and faster to compute than AC power flow.
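The Gaussian approximation of the binomial capacity model mentioned above can be checked numerically. The following is a minimal sketch, not the authors' code: the function names, the plant sizes (10 and 1000 units), and the failure probability of 0.1 are illustrative assumptions. It compares the binomial probabilities with a Gaussian density of matching mean and standard deviation.

```python
import math

def binomial_pmf(k, m, p):
    """Probability that exactly k of m independent generators are failed."""
    return math.comb(m, k) * p**k * (1.0 - p) ** (m - k)

def normal_pdf(x, mean, std):
    """Gaussian density with the given mean and standard deviation."""
    return math.exp(-0.5 * ((x - mean) / std) ** 2) / (std * math.sqrt(2.0 * math.pi))

def max_abs_gap(m, p):
    """Largest pointwise gap between the binomial PMF and its Gaussian fit."""
    mean = m * p
    std = math.sqrt(m * p * (1.0 - p))
    return max(abs(binomial_pmf(k, m, p) - normal_pdf(k, mean, std))
               for k in range(m + 1))

# Hypothetical plants: the gap shrinks as the number of units grows.
gap_small = max_abs_gap(10, 0.1)
gap_large = max_abs_gap(1000, 0.1)
print(f"max gap, 10 units: {gap_small:.4f}; 1000 units: {gap_large:.6f}")
```

For a handful of units the Gaussian fit is visibly poor, which is why the approximation is stated only for a large number of generators.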

2.1. Objective Function

A load curtailment sharing philosophy is considered in this work. Unserved demand is shared across all the nodes of a power system. Using weight factors in the objective function, a priority order policy is employed for nodes [18]. The objective function is presented in a quadratic form:

In addition to the objective function, the physical constraints of the power system are of paramount importance and strongly affect the resulting adequacy indices.

2.2. Constraints

The main aim of adequacy evaluation is to calculate the adequacy indices of a power system without paying special attention to voltage drops, congestion, and frequency problems of the grid [2]. The nodal power balance constraint can be written in the following form:

In addition to (3), a system power balance equation is used:

The vector of power flows is calculated using a simplified form of the DC power flow equations:

Power losses for each Monte Carlo simulation sample are calculated based on the assumption that all nodal voltages are equal to the nominal ones.

Power flows through transmission lines are limited by maximum transmission line capacities:

The generation and curtailed load in each node are restricted by supply and demand bid limits:

3. A Framework of Adequacy Indices Evaluation

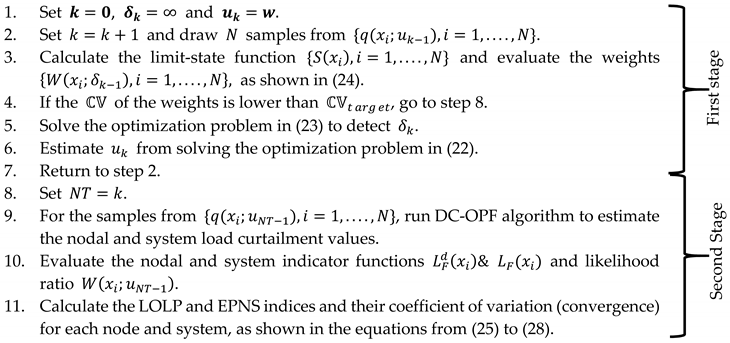

The framework includes two stages. In the first stage, the samples or states of the nodal available generations and required loads that lead to load curtailment events are extracted. These samples are used in the second stage to compute the adequacy indices. The adequacy indices adopted here are loss of load probability (LOLP) and expected power not supplied (EPNS) for system and nodes.

The load curtailment event is defined as the inability to satisfy the loads at all nodes without violating the system operating constraints, such as the limited capacity of transmission lines. Thus, a load curtailment event may result from low available generation, high required load, limited transmission line capacity, or any combination of these. To accomplish the aim of the first stage, the occurrence of load curtailment is checked at each sampled state of the uncertain inputs, i.e., the available generation and required load at each of the n nodes. The failure domain is the set of states in the input parameter space that lead to a load curtailment in the system, and it is expressed by means of a limit-state function.

The limit-state function defines the degree of deficiency of a system state. It equals the amount of load curtailment when the state fails, i.e., when the state belongs to the failure domain. Otherwise, it is defined as the sum of the differences between the nodal available generation capacities and the required loads, as shown in (10). Since the target load curtailment event has a small probability in the original sample space, the limit-state function is used to define a sequence of simulations of more frequent events (intermediate failure events) in several probability spaces. This is illustrated in detail in the following subsections. In (10), the amount of load curtailment, or power not supplied (PNS), is computed through the DC-OPF algorithm. The OPF algorithm includes two actions, generation redispatch and load curtailment, which aim to minimize the system load curtailment while satisfying the security constraints of the transmission network, as illustrated in the previous section.
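The limit-state function described above can be illustrated on a deliberately small case. The sketch below is not the DC-OPF of Section 2: it assumes a hypothetical lossless two-node system joined by a single line, for which the minimum curtailment has a closed form, and it assumes the sign convention that negative values mark load curtailment states. All names and numbers are illustrative.

```python
def min_curtailment_two_nodes(gen, load, line_cap):
    """Minimum total load curtailment (MW) for a lossless two-node system.

    gen and load are pairs (node 1, node 2) in MW; line_cap is the capacity
    of the single connecting line. Each node can import at most the smaller
    of the line capacity and the spare generation at the other node.
    """
    pns = 0.0
    for here, there in ((0, 1), (1, 0)):
        local_deficit = max(0.0, load[here] - gen[here])
        spare_elsewhere = max(0.0, gen[there] - load[there])
        importable = min(line_cap, spare_elsewhere)
        pns += max(0.0, local_deficit - importable)
    return pns

def limit_state(gen, load, line_cap):
    """Assumed convention: g < 0 means curtailment, g >= 0 means no curtailment."""
    pns = min_curtailment_two_nodes(gen, load, line_cap)
    if pns > 0.0:
        return -pns  # failed state: magnitude equals the power not supplied
    return sum(g - l for g, l in zip(gen, load))  # healthy state: total margin

# Same generation and load, two line capacities (hypothetical values).
g_wide = limit_state((120.0, 40.0), (80.0, 60.0), 30.0)    # import covers the deficit
g_narrow = limit_state((120.0, 40.0), (80.0, 60.0), 10.0)  # line limit forces curtailment
```

The second call shows how a state with enough total generation can still fail because of the transmission limit, which is the situation the HL2 analysis is meant to capture.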

To estimate the target failure probability (load curtailment probability), the uncertain inputs are modeled by a 2n-variate normal distribution (n nodal available generations and n nodal required loads), which is described by its mean and covariance parameters. To simplify the notation, these parameters are collected in a single parameter vector. Hence, the probability of failure can be computed by the following expression:

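in which the subscript of the expectation operator indicates the density with respect to which the expectation is taken, and the indicator function equals one for states in the failure domain and zero otherwise. The MCS estimator of the failure probability is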

where

Since the estimator is unbiased,

and its variance is defined by

The coefficient of variation (CoV) is used as the error measure for the estimator. The squared CoV is given as follows:

Hence, the coefficient of variation of MCS is approximately the inverse of the square root of the product of the number of samples and the failure probability, so for a small failure probability, a large number of samples is needed to obtain an accurate estimate. To improve the efficiency of MCS, the proposed ECE-IS method has been developed as a variance reduction technique that reduces the variance of the estimator. The traditional CE-IS is reviewed first, as the ECE-IS builds on it.
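The dependence of the MCS error on the failure probability can be made concrete with a short sketch. It assumes a one-dimensional toy problem (a standard normal input with a hypothetical limit-state function `2 - x`) rather than the power system model, and the function names are illustrative. The squared coefficient of variation is taken in its standard form, (1 - P) / (N P).

```python
import math
import random

def mcs_failure_probability(limit_state, sample, n_samples, rng):
    """Crude MCS estimate of P[g(X) <= 0] and its coefficient of variation."""
    failures = sum(1 for _ in range(n_samples) if limit_state(sample(rng)) <= 0.0)
    p_hat = failures / n_samples
    if failures == 0:
        return p_hat, math.inf  # no failure observed: the error is unbounded
    cov = math.sqrt((1.0 - p_hat) / (n_samples * p_hat))
    return p_hat, cov

def required_samples(p_fail, target_cov):
    """Number of MCS samples needed to reach a target coefficient of variation."""
    return math.ceil((1.0 - p_fail) / (p_fail * target_cov**2))

rng = random.Random(0)
# Toy problem (assumption): X ~ N(0, 1), failure when x >= 2.
p_hat, cov = mcs_failure_probability(
    lambda x: 2.0 - x, lambda r: r.gauss(0.0, 1.0), 50_000, rng
)
n_needed = required_samples(0.0102, 0.05)
print(f"p_hat = {p_hat:.4f}, CoV = {cov:.3f}, samples for 5% CoV = {n_needed}")
```

With the system LOLP of 0.0102 and the 5% convergence target reported in Section 5, `required_samples` returns 38,816, which is of the same order as the 38,945 samples that MCS needed there.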

3.1. Implementation of CE-IS Method

IS introduces an alternative sampling density, termed the ISD. A proper selection of the ISD is required to represent the failure domain of the inputs accurately. The probability of failure shown in (10) is computed with respect to the ISD and rewritten in the following manner:

where the likelihood ratio, or importance weight function, is the ratio of the original density to the proposed density. The IS estimate of the failure probability is given by:

in which the samples are independent and identically distributed according to the ISD. To obtain the optimal ISD, the variance of the estimator has to be minimized as follows:

The theoretically optimal ISD, which leads to zero variance, is the original density restricted to the failure domain and normalized by the failure probability [5].
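A minimal numerical sketch of the IS estimator follows, again on a one-dimensional toy problem rather than the power system model. The nominal density N(0, 1), the limit-state function `4 - x`, and the hand-picked ISD N(4, 1) are all assumptions made for illustration; in the method itself the ISD is fitted, not chosen by hand.

```python
import math
import random

def normal_pdf(x, mean, std):
    """Gaussian density with the given mean and standard deviation."""
    return math.exp(-0.5 * ((x - mean) / std) ** 2) / (std * math.sqrt(2.0 * math.pi))

def importance_sampling(limit_state, isd_mean, isd_std, n_samples, rng):
    """IS estimate of P[g(X) <= 0] for X ~ N(0, 1), sampling from the ISD."""
    terms = []
    for _ in range(n_samples):
        x = rng.gauss(isd_mean, isd_std)
        # Importance weight: original density over sampling density.
        weight = normal_pdf(x, 0.0, 1.0) / normal_pdf(x, isd_mean, isd_std)
        terms.append(weight if limit_state(x) <= 0.0 else 0.0)
    p_hat = sum(terms) / n_samples
    # Variance of the sample mean, then the coefficient of variation.
    var = sum((t - p_hat) ** 2 for t in terms) / (n_samples - 1) / n_samples
    return p_hat, math.sqrt(var) / p_hat

rng = random.Random(1)
p_is, cov_is = importance_sampling(lambda x: 4.0 - x, 4.0, 1.0, 5_000, rng)
p_exact = 0.5 * math.erfc(4.0 / math.sqrt(2.0))  # exact tail probability P[X >= 4]
print(f"IS estimate {p_is:.3e} (CoV {cov_is:.3f}), exact {p_exact:.3e}")
```

With a failure probability of about 3 × 10^-5, crude MCS with the same 5000 samples would most likely observe no failure at all, whereas the shifted density places roughly half of its samples in the failure domain.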

Since the optimal ISD depends on unknown quantities, namely the failure probability and the failure domain, as shown in (14), it cannot be computed directly. The CE method is used to find a near-optimal ISD by fitting a parametric density model. It exploits the samples from intermediate sampling levels to fit the selected parametric density. The parameter vector is determined by minimizing the cross entropy, or KL divergence, between the unknown optimal ISD given in (15) and the selected parametric density. In this work, the selected density is a multivariate normal distribution described by its parameter vector. The cross entropy between the optimal ISD and the parametric density can be described as follows (see [17]):

Then, the optimization problem can be expressed in the following manner:

By substituting the optimal ISD of Equation (14) into (16), the optimization problem becomes

in which

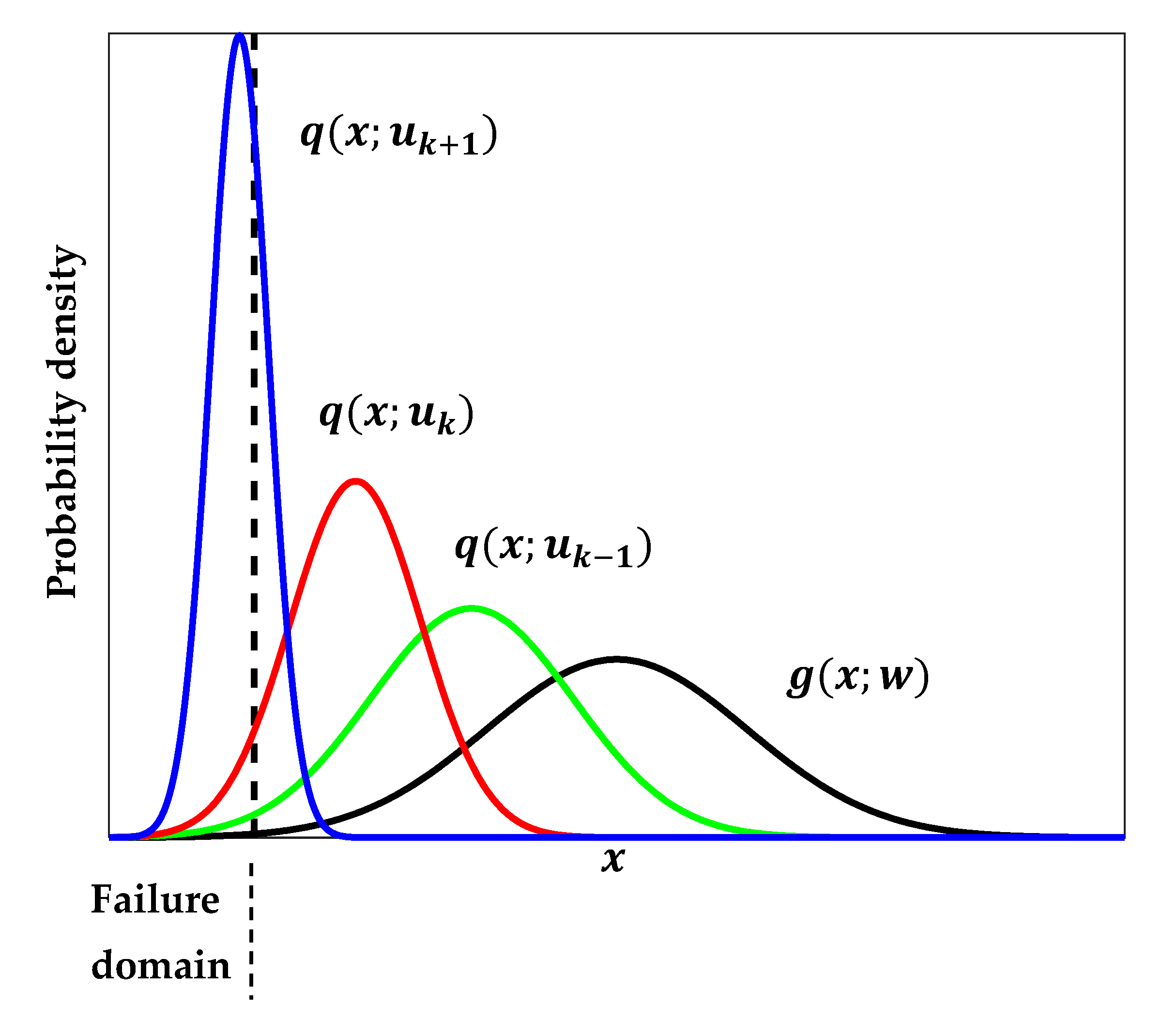

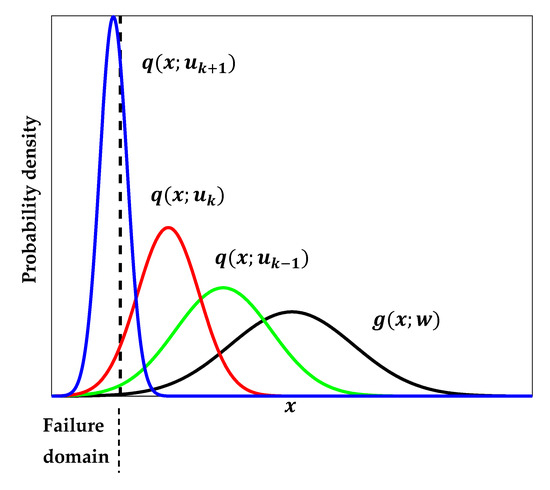

The CE method solves this optimization problem iteratively by defining a series of intermediate densities that gradually approach the target density representing the failure region well, as shown in Figure 2. Each intermediate failure domain is defined by a threshold, as stated in (19). The threshold is calculated as a prescribed quantile of the limit-state function values, sorted from smallest to largest, of the samples drawn from the parametric density fitted at the previous step. For each intermediate failure event, the optimization problem is solved to obtain its optimal parameter vector using the samples distributed according to the density of the previous step. The aim is to obtain the final parameter vector, which approximates the solution of (17).

Figure 2.

A gradual approach of the sequence of intermediate distributions to the target failure region.

Starting from the initial parameter vector, each subsequent parameter vector is obtained by solving the CE optimization problem written in (20), with the target optimal ISD set to the optimal ISD of the corresponding intermediate failure event. The initial parameter vector is taken as the nominal parameter vector of the input variables.

The procedure is repeated until the threshold becomes negative, i.e., until at least the prescribed fraction of the samples fall in the actual failure domain [17]. The iteration then stops at the current intermediate event, the optimal ISD is approximated sufficiently well by the current parametric density, and the probability of failure is estimated with the IS estimator using the samples from this density.
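The iteration described in this subsection can be sketched on the same kind of one-dimensional toy problem. The sketch is a simplification under stated assumptions, not the authors' implementation: the nominal density is N(0, 1), the parametric family is N(mean, 1) with only the mean updated (the paper's family is multivariate normal), failure is taken as a limit-state value at or below zero, and the names `ce_is`, `rho`, and `n_per_level` are illustrative.

```python
import math
import random

def likelihood_ratio(x, mean):
    """Density ratio N(0, 1) / N(mean, 1) evaluated at x."""
    return math.exp(0.5 * mean * mean - mean * x)

def ce_is(limit_state, n_per_level, rho, rng, max_levels=50):
    """Traditional CE-IS for P[g(X) <= 0], X ~ N(0, 1), family N(mean, 1)."""
    mean = 0.0  # initial sampling density = nominal density
    for _ in range(max_levels):
        xs = [rng.gauss(mean, 1.0) for _ in range(n_per_level)]
        gs = [limit_state(x) for x in xs]
        ws = [likelihood_ratio(x, mean) for x in xs]
        # Intermediate threshold: rho-quantile of g, clipped at zero.
        threshold = max(sorted(gs)[int(rho * n_per_level)], 0.0)
        if threshold == 0.0:
            # Enough samples already fail: final IS estimate with this density.
            p_hat = sum(w for w, g in zip(ws, gs) if g <= 0.0) / n_per_level
            return p_hat, mean
        # Step indicator: only the elite samples enter the weighted update.
        elite = [(x, w) for x, w, g in zip(xs, ws, gs) if g <= threshold]
        mean = sum(w * x for x, w in elite) / sum(w for _, w in elite)
    raise RuntimeError("CE-IS sketch did not reach the failure domain")

rng = random.Random(2)
p_ce, final_mean = ce_is(lambda x: 4.0 - x, 2000, 0.1, rng)
p_exact = 0.5 * math.erfc(4.0 / math.sqrt(2.0))
print(f"CE-IS estimate {p_ce:.3e}, exact {p_exact:.3e}, final mean {final_mean:.2f}")
```

Note that each update uses only about a fraction `rho` of the samples of a level; the remaining samples are discarded, which is the limitation the ECE-IS addresses.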

3.2. Implementation of ECE-IS Method

In the traditional CE-IS, the intermediate failure domain is identified by the intermediate failure threshold. Because of the step indicator function, the intermediate failure domain contains only a fraction of the samples drawn from the preceding ISD, and the other samples are neglected. In the ECE-IS, the indicator function of the intermediate failure events is defined by a smooth approximation function. By exploiting all the samples from the intermediate density in the parameter update, this allows a smooth transition of the approximately optimal ISD towards the optimal ISD of the target failure event.

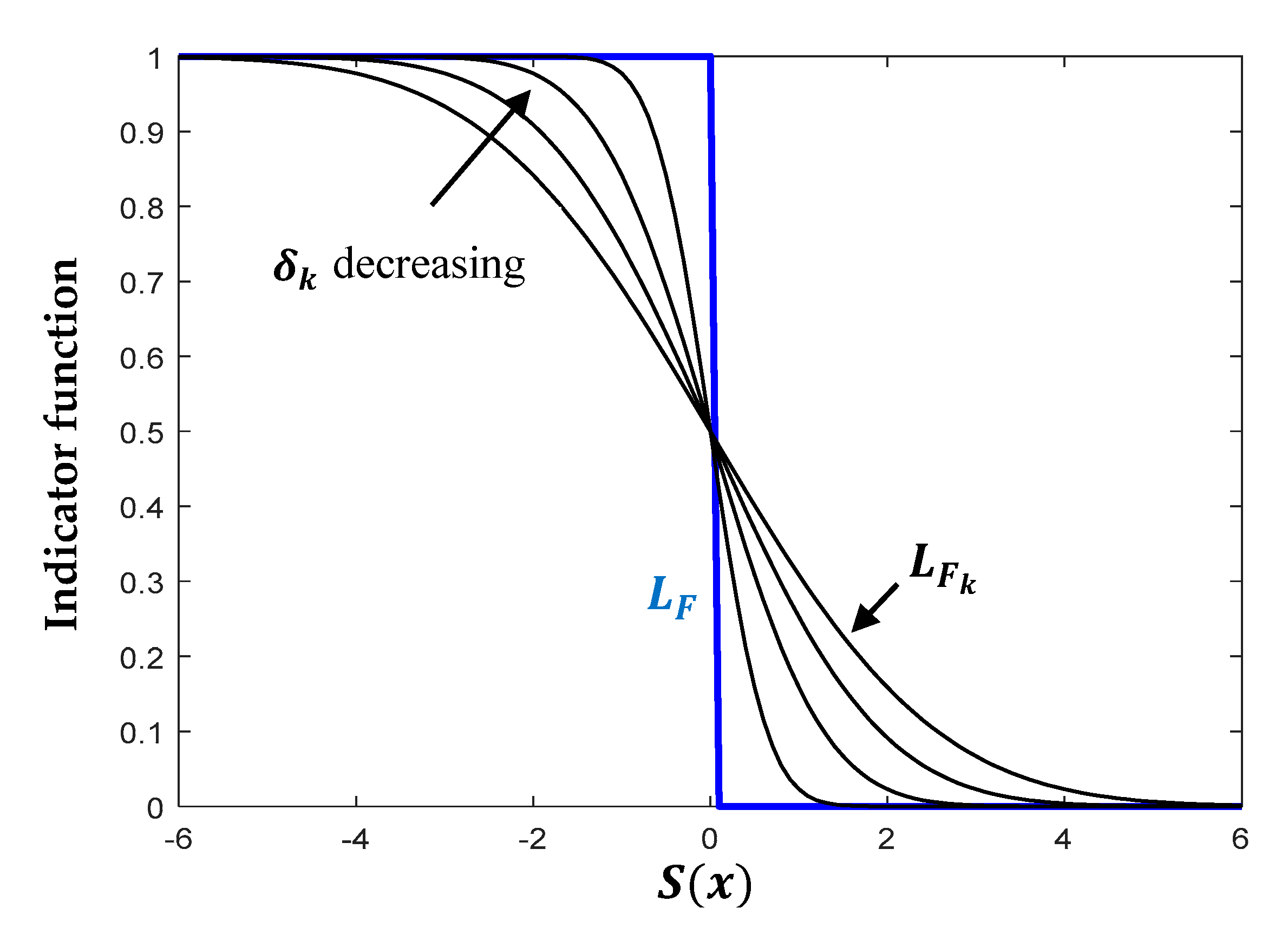

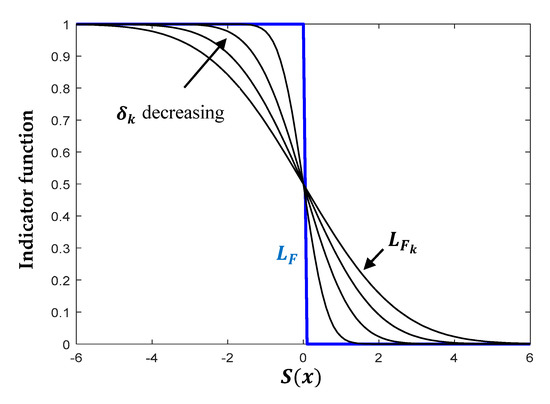

The indicator function is approximated by the standard normal CDF evaluated at the limit-state function value scaled by a bandwidth parameter, which controls the width of the transition. As the bandwidth parameter approaches zero, the smooth function approaches the target step indicator function. Hence, the method defines a decreasing series of bandwidths, as shown in Figure 3.
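The behavior of the smooth approximation can be sketched in a few lines. The exact expression is not reproduced here, so the form used below, the standard normal CDF of the negated limit-state value divided by the bandwidth, is an assumption consistent with the description above and with failure defined as a limit-state value at or below zero.

```python
import math

def standard_normal_cdf(z):
    """Standard normal CDF expressed through the complementary error function."""
    return 0.5 * math.erfc(-z / math.sqrt(2.0))

def smooth_indicator(g_value, bandwidth):
    """Smooth stand-in for the step indicator 1[g <= 0] (assumed form)."""
    return standard_normal_cdf(-g_value / bandwidth)

# A decreasing series of bandwidths sharpens the transition around g = 0.
for bandwidth in (2.0, 0.5, 0.05):
    values = [round(smooth_indicator(g, bandwidth), 3) for g in (-1.0, 0.0, 1.0)]
    print(bandwidth, values)
```

For a large bandwidth every sample receives a weight close to 0.5, so all samples contribute to the parameter update; as the bandwidth shrinks, the weights of non-failing samples decay towards zero.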

Figure 3.

Indicator function approximation.

Using the smooth approximation of the indicator function, the optimization problem (20) becomes:

The bandwidth parameter is determined such that the smooth approximation of the optimal ISD is represented well enough by the samples drawn from the current parametric density, i.e., such that the variance of the importance weights is small. This is done by minimizing the difference between the coefficient of variation of the weights and a specified target value at each intermediate event, as written in (22).

As shown in Algorithm 1, starting with an initial bandwidth and the nominal parameter vector, this procedure is repeated and is stopped when the coefficient of variation of the weights calculated in (24), i.e., the weights of the target optimal ISD with respect to the current smooth approximation of the optimal ISD of the intermediate failure events, is lower than the target value. The iteration then ends at the current intermediate event, and the optimal ISD is approximated well enough by the current parametric density. Using the coefficient of variation of these weights as the stopping criterion, rather than a criterion based on the parametric density, improves the robustness of the method's convergence, as shown in the results section.
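The bandwidth selection and the stopping rule described above can be sketched together on a one-dimensional toy problem. This is a sketch under several assumptions and not a transcription of Algorithm 1: the nominal density is N(0, 1), the family is N(mean, 1) with only the mean updated, the smooth indicator has the assumed form Phi(-g / bandwidth), the bandwidth is found by bisection on a log scale instead of a general minimizer, and the target coefficient of variation of 1.5 is an illustrative choice.

```python
import math
import random

def phi_cdf(z):
    return 0.5 * math.erfc(-z / math.sqrt(2.0))

def cov(values):
    """Sample coefficient of variation; infinite when the mean is zero."""
    mean = sum(values) / len(values)
    if mean == 0.0:
        return math.inf
    var = sum((v - mean) ** 2 for v in values) / (len(values) - 1)
    return math.sqrt(var) / mean

def pick_bandwidth(gs, ratios, sigma_prev, target_cov):
    """Largest bandwidth (below the previous one) whose weights meet the target CoV."""
    def weight_cov(sigma):
        return cov([phi_cdf(-g / sigma) * r for g, r in zip(gs, ratios)])
    hi = min(sigma_prev, 10.0 * max(abs(g) for g in gs))
    lo = 1e-6 * hi
    if weight_cov(hi) >= target_cov:
        return hi  # cannot shrink the bandwidth without exceeding the target
    for _ in range(60):
        mid = math.sqrt(lo * hi)
        if weight_cov(mid) > target_cov:
            lo = mid  # bandwidth too small: weights too uneven
        else:
            hi = mid
    return hi

def ece_is(limit_state, n_per_level, target_cov, rng, max_levels=50):
    mean, sigma = 0.0, math.inf  # nominal density, flat initial indicator
    for _ in range(max_levels):
        xs = [rng.gauss(mean, 1.0) for _ in range(n_per_level)]
        gs = [limit_state(x) for x in xs]
        ratios = [math.exp(0.5 * mean * mean - mean * x) for x in xs]  # N(0,1)/N(mean,1)
        # Stopping rule: CoV of (step indicator / current smooth indicator).
        step_over_smooth = [1.0 / phi_cdf(-g / sigma) if g <= 0.0 else 0.0 for g in gs]
        if cov(step_over_smooth) <= target_cov:
            p_hat = sum(r for r, g in zip(ratios, gs) if g <= 0.0) / n_per_level
            return p_hat, mean
        sigma = pick_bandwidth(gs, ratios, sigma, target_cov)
        ws = [phi_cdf(-g / sigma) * r for g, r in zip(gs, ratios)]
        mean = sum(w * x for w, x in zip(ws, xs)) / sum(ws)  # every sample contributes
    raise RuntimeError("ECE-IS sketch did not converge")

rng = random.Random(3)
p_ece, mean_ece = ece_is(lambda x: 4.0 - x, 2000, 1.5, rng)
p_exact = 0.5 * math.erfc(4.0 / math.sqrt(2.0))
print(f"estimate {p_ece:.3e}, exact {p_exact:.3e}, final mean {mean_ece:.2f}")
```

In contrast to the step-indicator update, the weighted mean here is computed over all samples of a level, with the bandwidth controlling how strongly the non-failing samples are down-weighted.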

The samples from the final density are used in the second stage, as shown in Algorithm 1, to compute the adequacy indices (LOLP and EPNS) for each node and for the system. The system LOLP and EPNS can be expressed as follows:

For nodes, the LOLP and EPNS can be rewritten as follows:

in which

Algorithm 1. Procedure of adequacy indices assessment using ECE-IS.
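The second-stage computation of the adequacy indices can be sketched as weighted sample averages. The sketch assumes the standard IS form, in which the LOLP averages the importance weights of the curtailed states and the EPNS averages the weighted power not supplied; the function name and the sample values are hypothetical, and the same function applies to a node or to the whole system depending on which PNS values are passed in.

```python
def adequacy_indices(pns_values, weights):
    """Return (LOLP, EPNS) from per-sample power not supplied and importance weights.

    pns_values: power not supplied in MW for each sampled state (0 if none).
    weights: importance weight of each sampled state (all 1.0 for crude MCS).
    """
    n = len(pns_values)
    lolp = sum(w for pns, w in zip(pns_values, weights) if pns > 0.0) / n
    epns = sum(pns * w for pns, w in zip(pns_values, weights)) / n
    return lolp, epns

# Four hypothetical states drawn from a fitted ISD, two of them curtailed.
lolp, epns = adequacy_indices([0.0, 10.0, 0.0, 20.0], [1.0, 0.01, 1.0, 0.02])
print(f"LOLP = {lolp:.4f}, EPNS = {epns:.3f} MW")
```

Setting all weights to one recovers the crude MCS estimators, which makes the two methods directly comparable on the same code path.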

4. Results

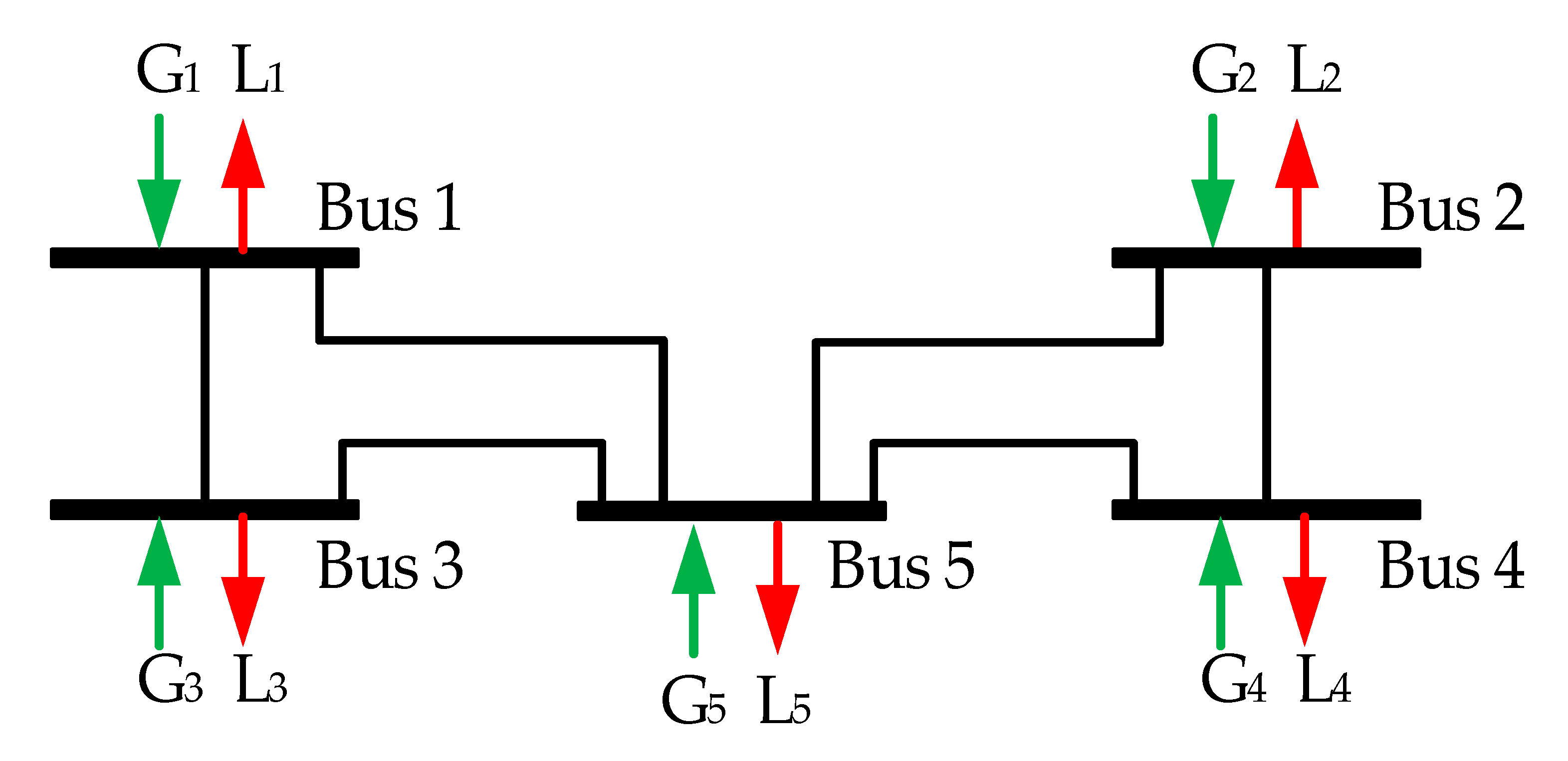

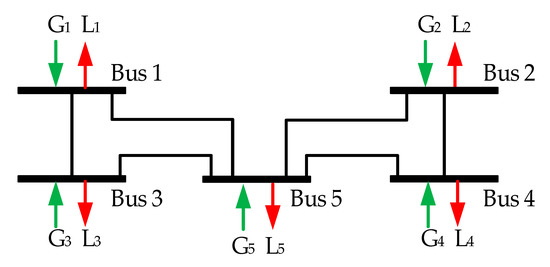

The proposed method is tested and evaluated on the five-bus test scheme presented in Figure 4. The data for the buses and transmission lines are presented in Table 1. In terms of the mean values of the generation and demand capacities, the test scheme includes two surplus buses, one balanced bus, and two deficient buses. The probabilistic nature of the generation and demand can result in different combinations of operational states of the power system buses. All the buses are connected by transmission lines of the same transmission capacity but different resistance and reactance. All tests are carried out using MATLAB 2017 on an Intel Core i5 personal computer with 8 GB of memory. The standard MCS method is used as the benchmark. The maximum number of simulation samples for MCS is 5 × 10^4. A coefficient of variation (convergence) of 5% for both system adequacy indices (LOLP and EPNS) is used as the stopping criterion. The rare-event simulation techniques (CE-IS [33], SIS [35], SS [16], ECE-IS) use, among others, the following parameter values: maximum number of iterations = 50 and number of samples per iteration = 2000.

Figure 4.

Test scheme.

Table 1.

Test scheme data.

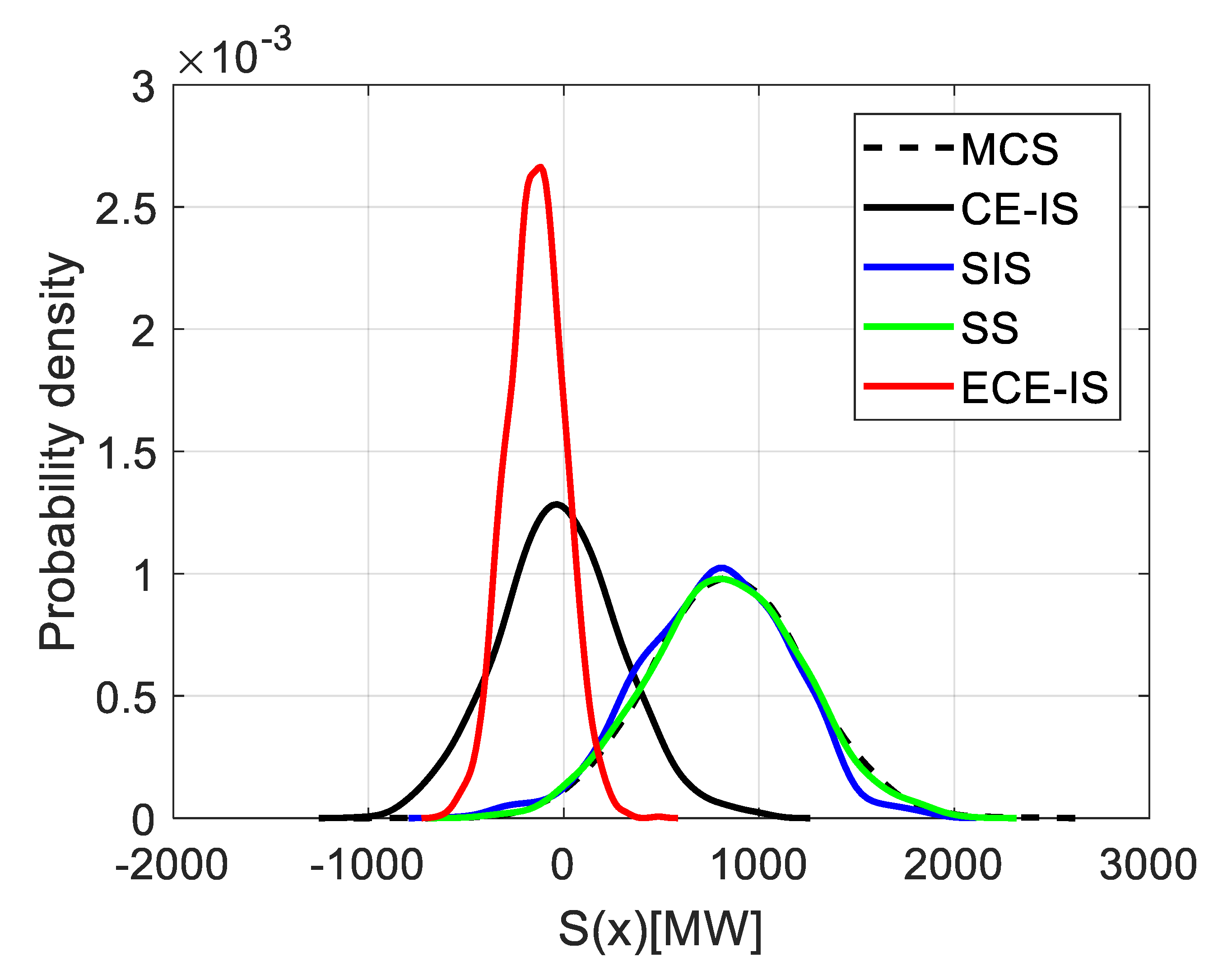

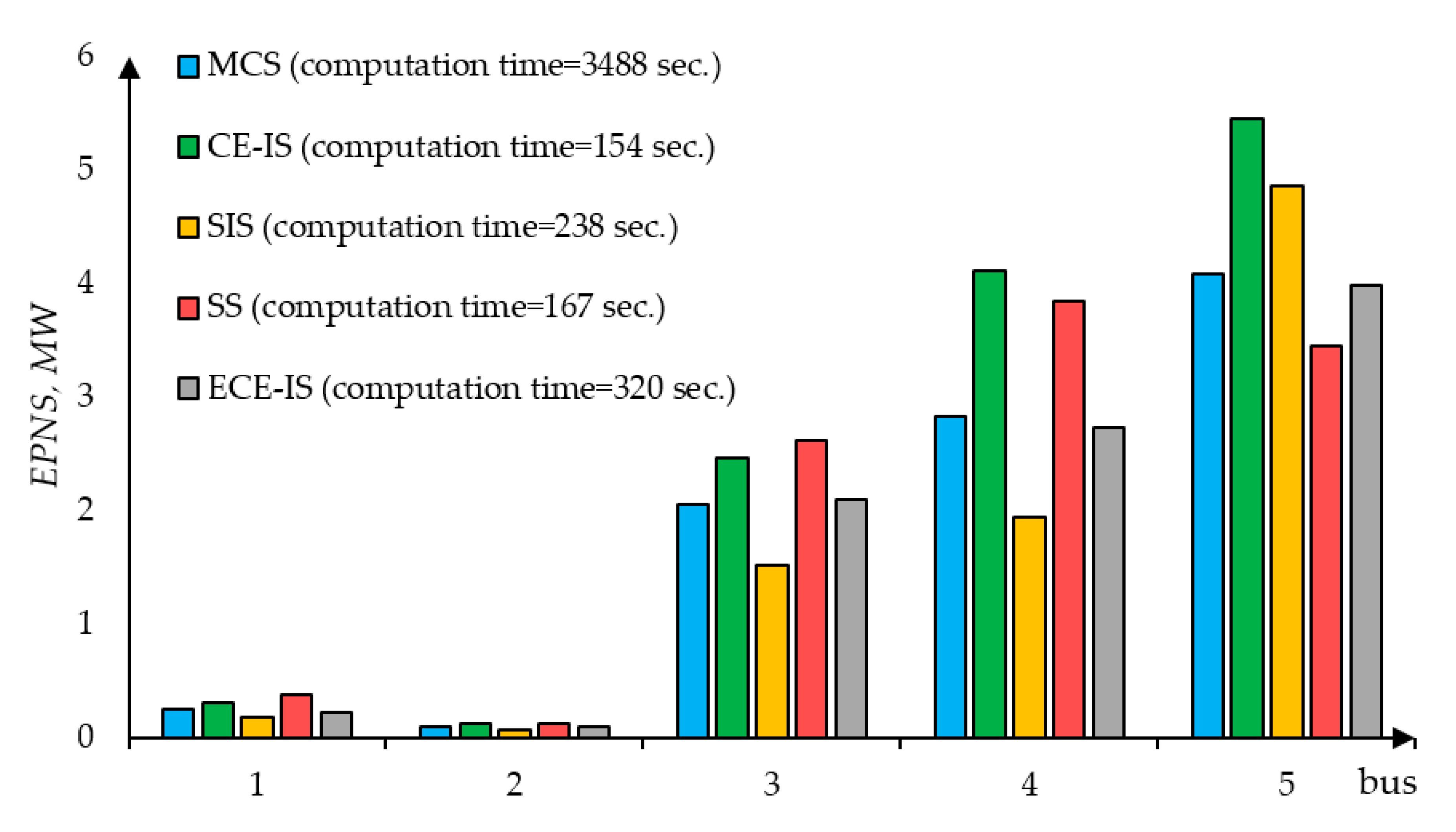

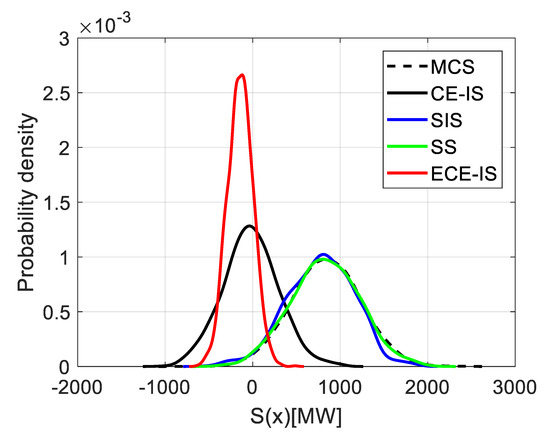

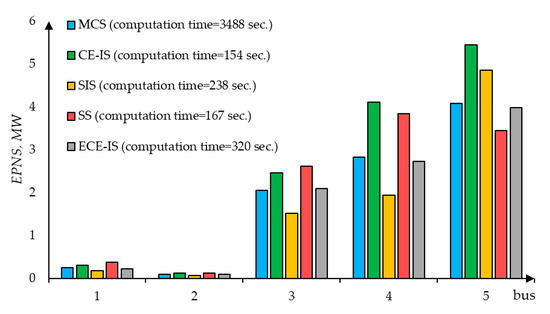

In Figure 5, the PDFs of the system limit-state function are shown for the different rare-event methods (CE-IS, SIS, SS, ECE-IS) and for MCS. The proposed ECE-IS method yields the largest probability of a limit-state value in the load curtailment domain: 80%, compared with 23, 55, 32, and 20% for MCS, CE-IS, SIS, and SS, respectively. This means that the samples extracted by the ECE-IS method are more likely to cause load curtailment events; they represent 80% of the total sampled system states. Once the samples from the optimal distributions of nodal generations and loads are obtained, the adequacy indices (EPNS, LOLP) can be evaluated. For test purposes, the EPNS and LOLP are calculated for all the buses. The histogram in Figure 6 shows the computation time and the EPNS values obtained by the different methods. The computation time includes the time spent in the first stage for extracting the rare load curtailment events.

Figure 5.

Probability distribution of system limit-state function for different methods.

Figure 6.

Test scheme buses—expected power not supplied (EPNS).

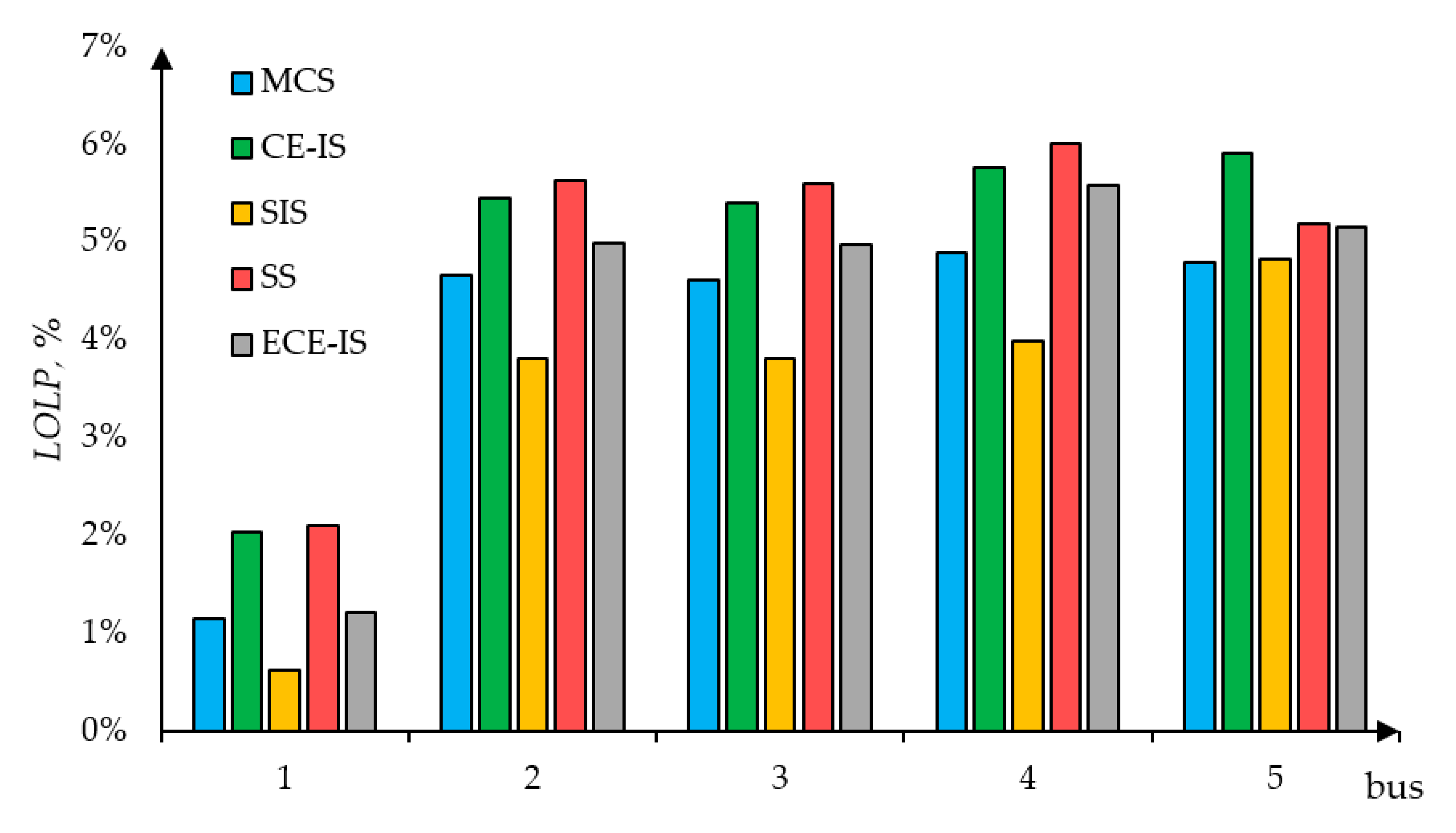

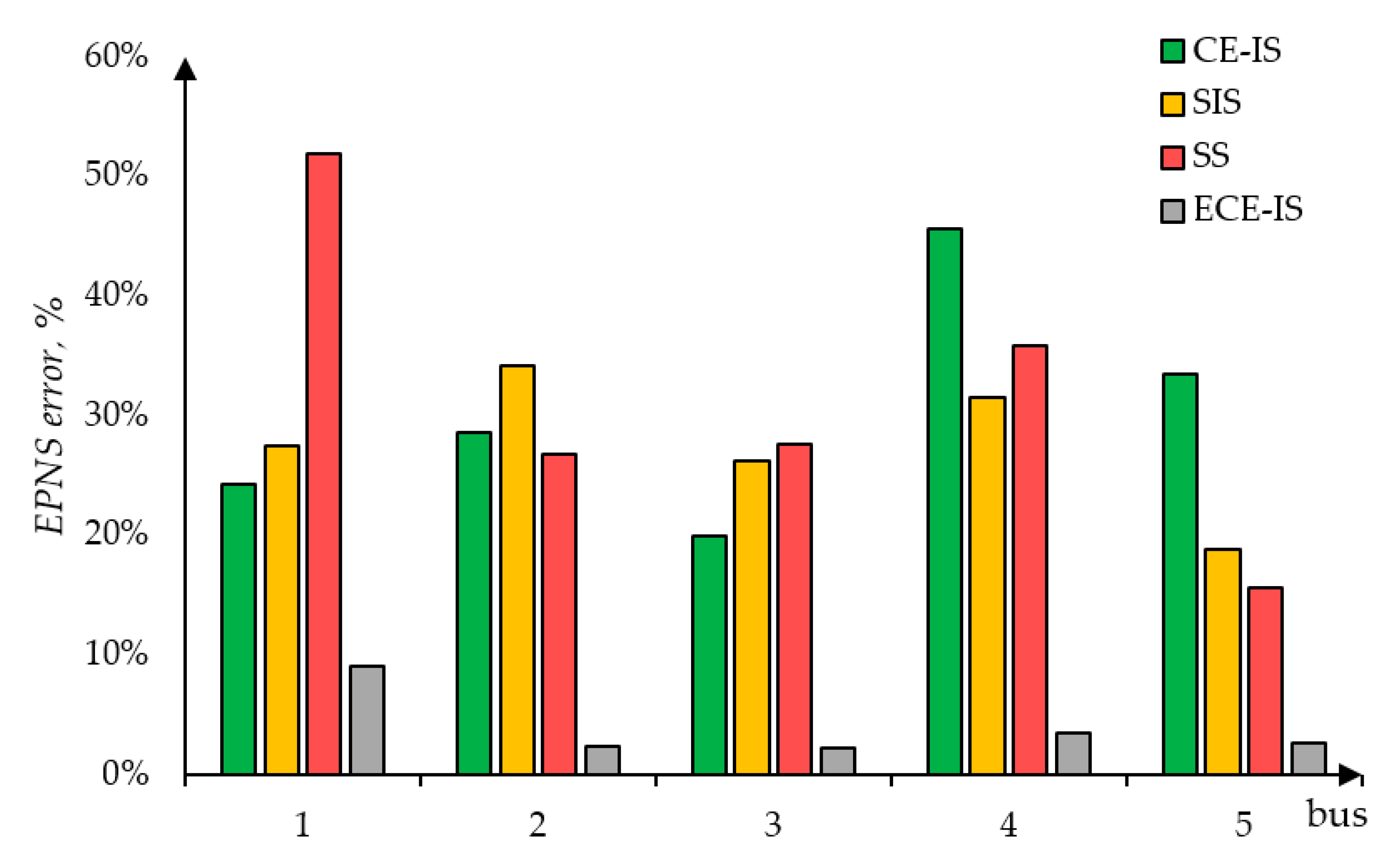

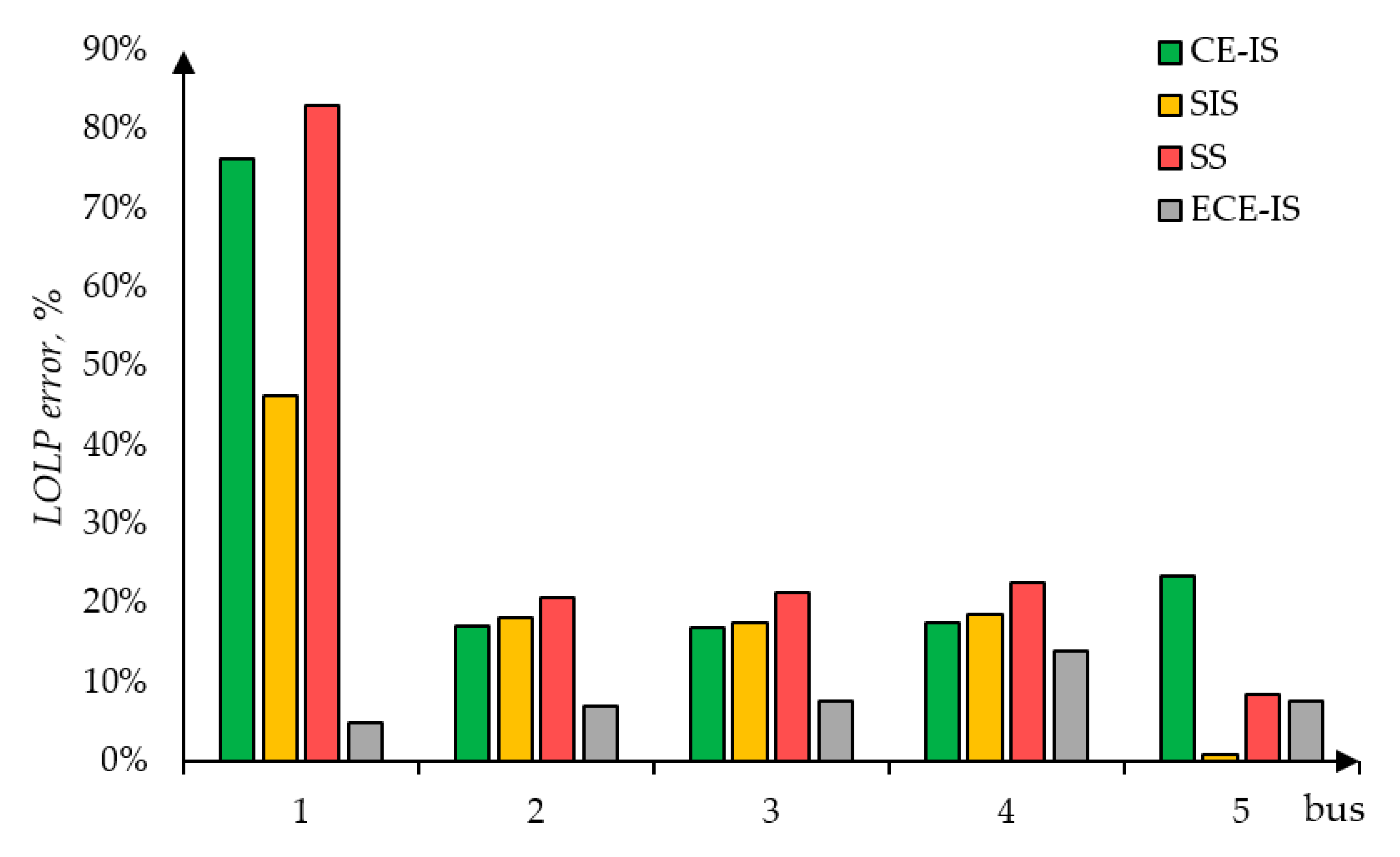

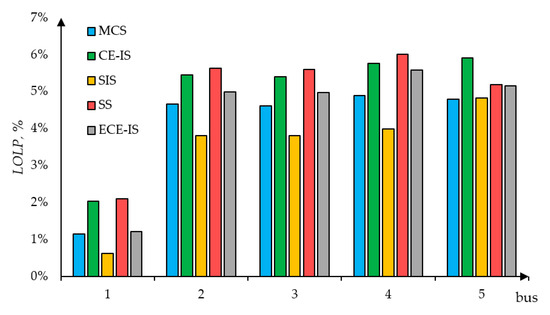

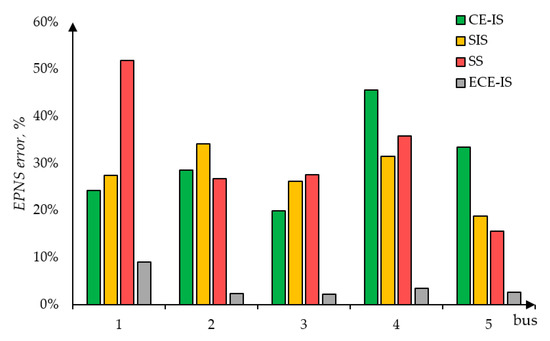

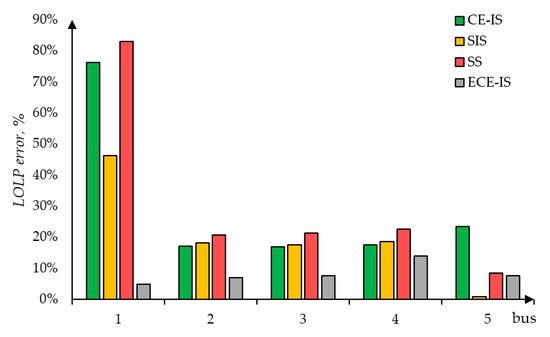

The load curtailment sharing philosophy results in relatively small EPNS values at the potentially deficient buses 4 and 5. At the same time, the limited transmission line capacity does not allow an unlimited power supply from the surplus buses, so the greatest EPNS is at bus 5. The simulation results show that all the discussed methods significantly reduce the computational burden and that the most computationally efficient is the CE-IS method. They also show that the method used to extract the load curtailment events can significantly affect the results. Figure 7 shows the LOLP values for all the buses of the test scheme. Theoretically, with unlimited transmission line capacity, the objective function of Section 2.1 must result in an equal LOLP at all the buses. However, in terms of mean values, the power surplus of bus 1 is 10% higher than the transmission capacity of the connected lines. Part of the possible power supply from bus 1 is therefore locked in, and as a result, in some deficient states of the test scheme, the demand at this bus is not curtailed. The histogram shows that the LOLP of bus 1 is relatively small in comparison with the other buses. Figure 8 and Figure 9 show the errors of the methods in comparison with the standard MCS method. For almost all the buses, the EPNS values computed using the proposed method are accurate to within 8%. Even though the ECE-IS method is less computationally efficient than the other rare-event methods, it provides significantly more accurate results. In comparison with the traditionally used MCS approach, the proposed method is nearly eleven times faster. The smallest LOLP errors are generally also obtained with the ECE-IS approach. Note that, in the case of LOLP, even small deviations introduce a large error, as can be seen.

Figure 7.

Test scheme buses—loss of load probability (LOLP).

Figure 8.

EPNS error compared to the standard Monte Carlo simulation (MCS) method.

Figure 9.

LOLP error compared to the standard MCS method.

5. Discussion

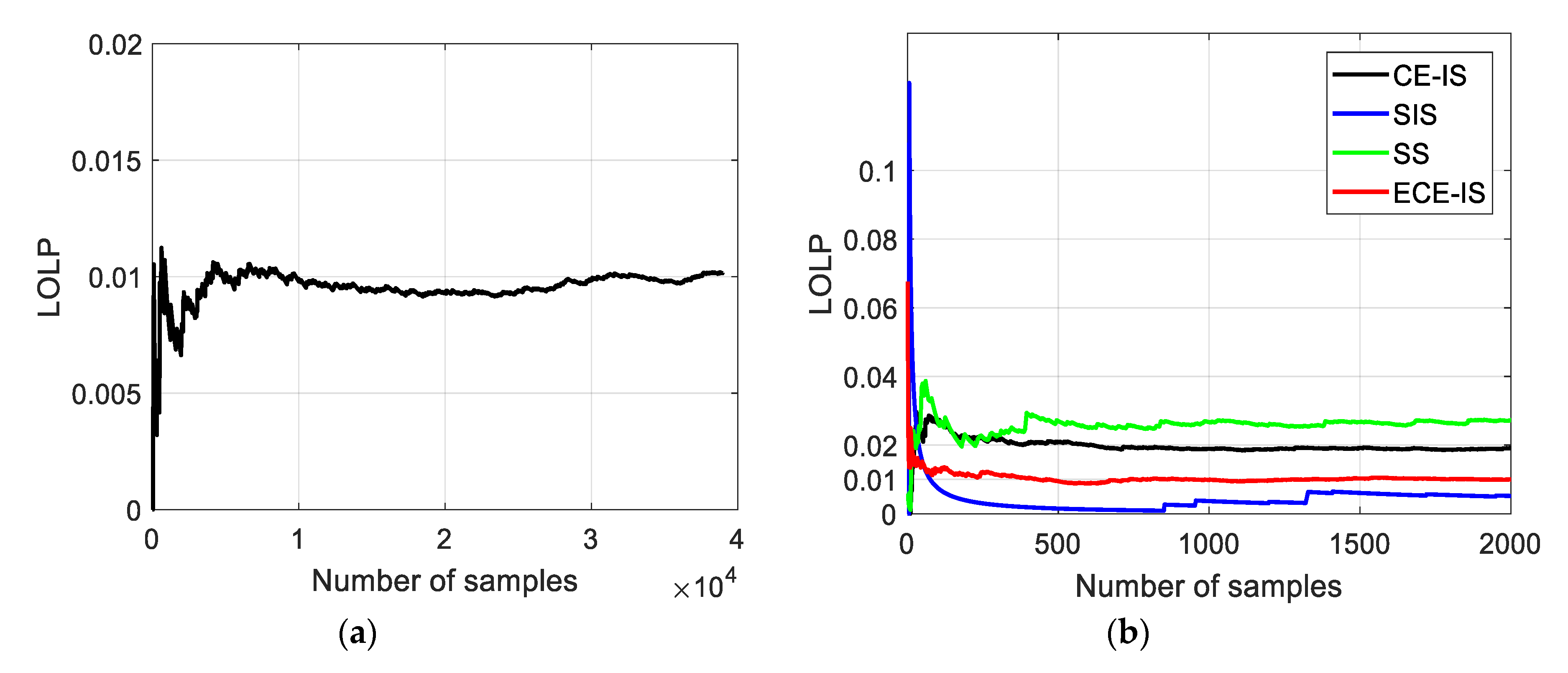

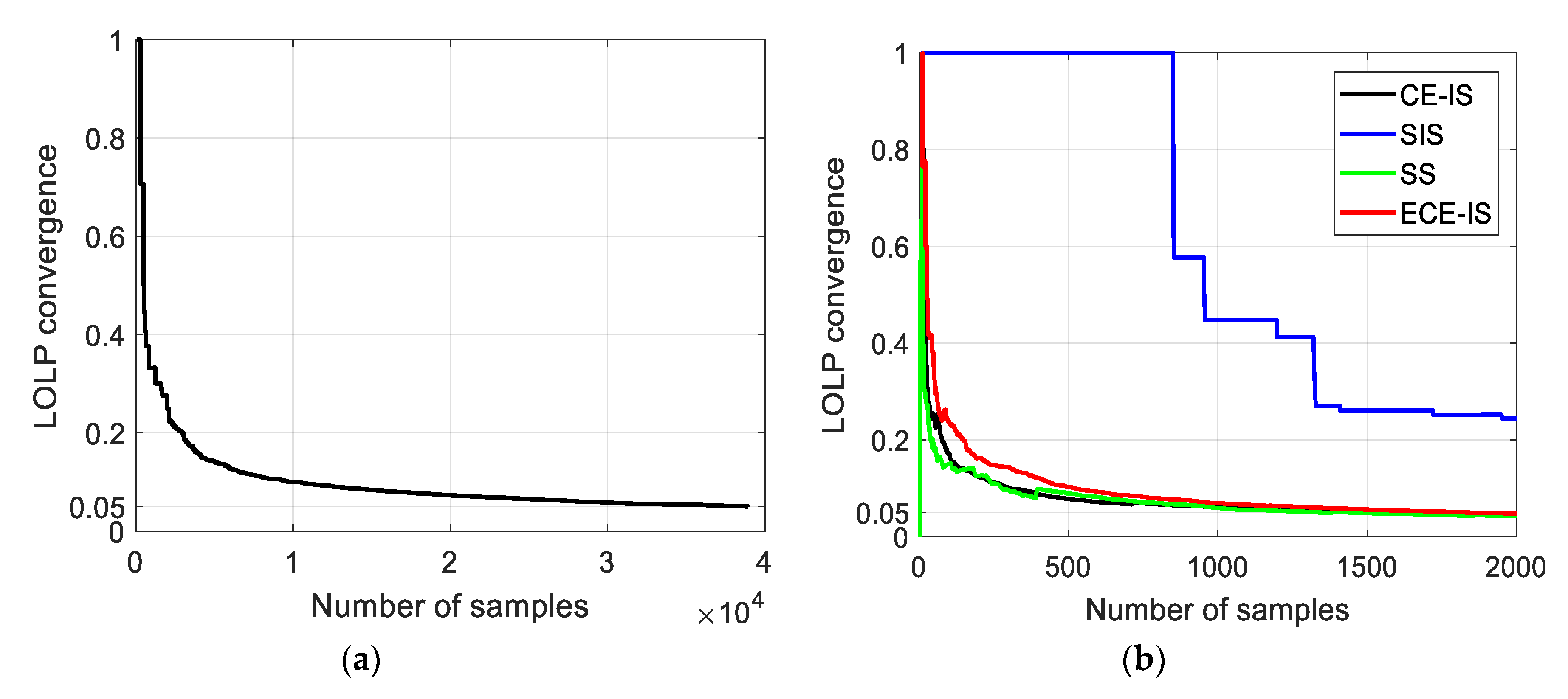

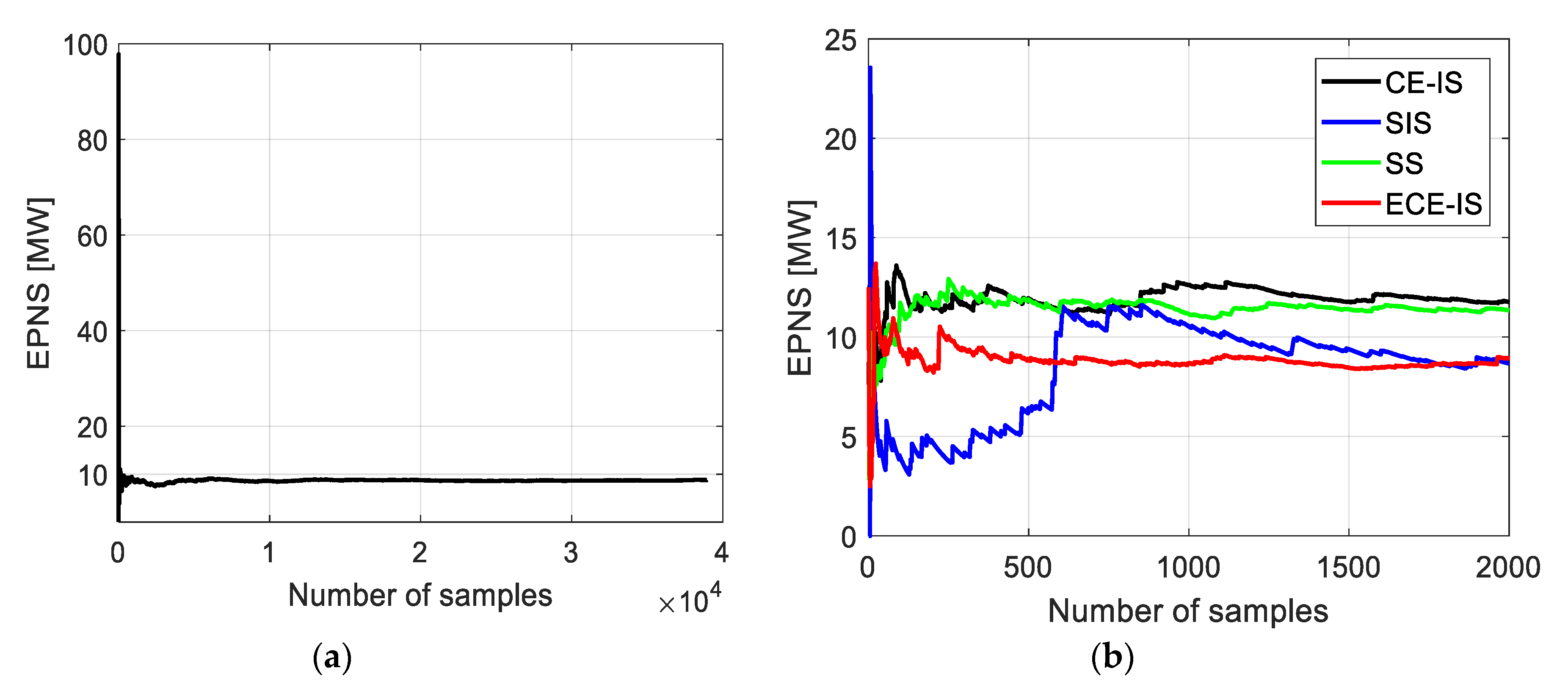

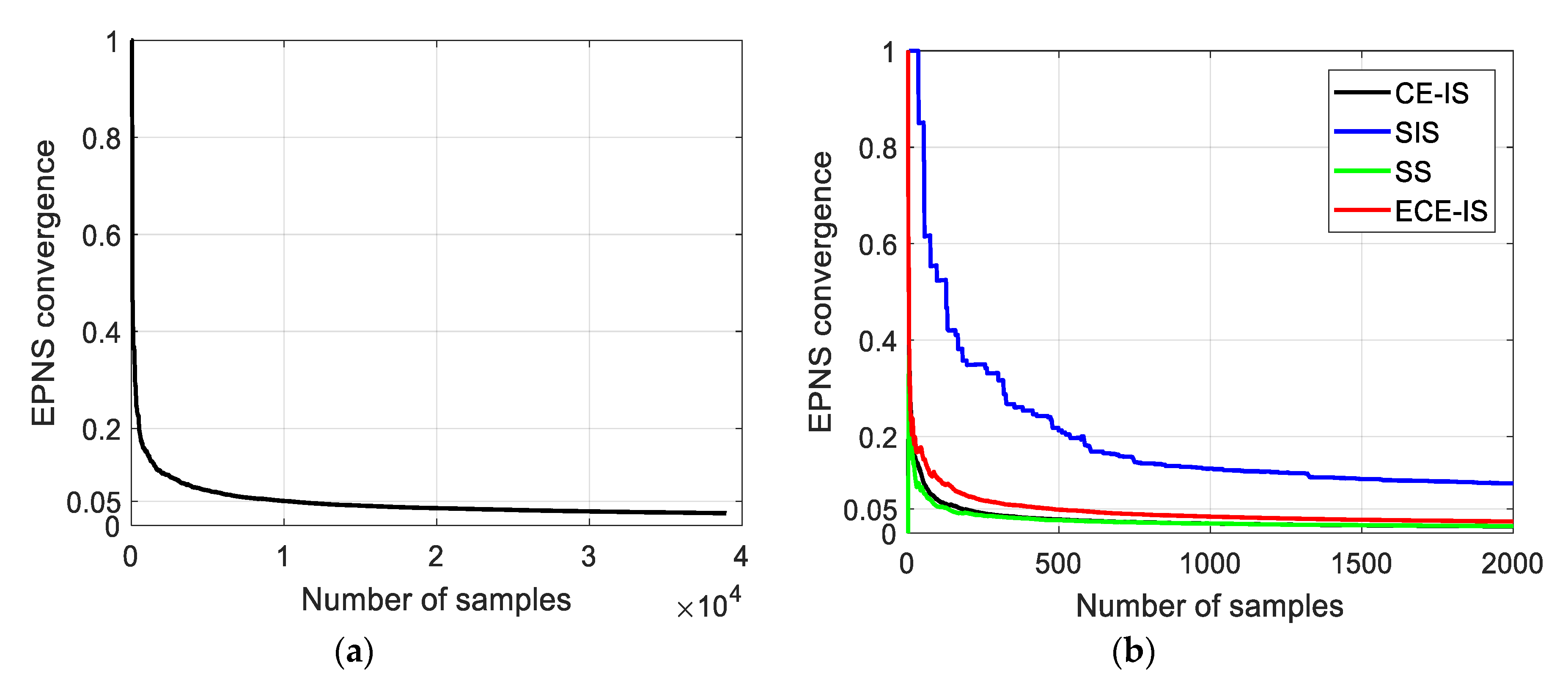

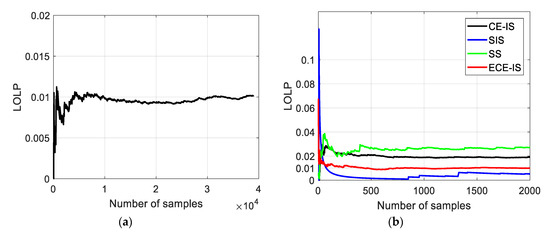

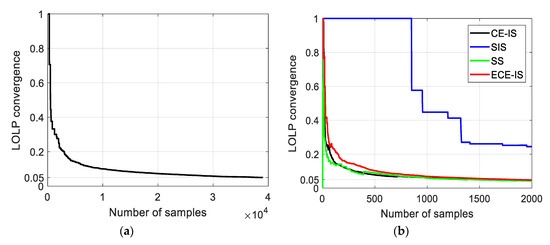

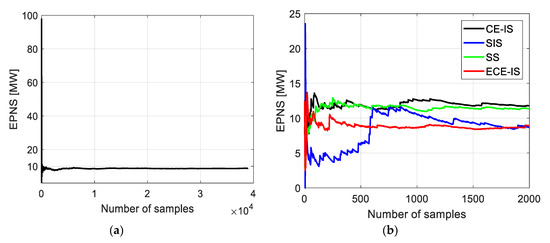

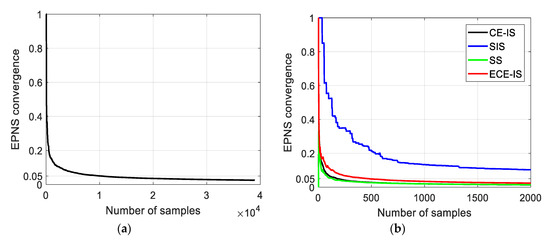

The trade-off between computational accuracy and computational efficiency has led to a wide range of approaches to power system adequacy evaluation. Therefore, a comparison among methods must include the number of samples or the computation time required to reach accurate adequacy indices with acceptable convergence. In terms of the number of samples, the system LOLP and EPNS indices and their convergence using the standard MCS method and the different rare-event simulation approaches are shown in Figure 10, Figure 11, Figure 12 and Figure 13. For MCS, 38,945 samples are needed to reach a convergence of 5% for both the system LOLP and EPNS indices. The CE-IS and SS methods have the fastest convergence rate, but they do not yield accurate LOLP values. The ECE-IS performs better, reaching an LOLP value of 0.0099 with 4.7% convergence and an EPNS of 8.95 MW with 2.4% convergence. By comparison, the standard MCS achieves 0.0102 with 5% and 8.8 MW with 2.5% for LOLP and EPNS, respectively. Therefore, the ECE-IS can achieve the same accuracy as the standard MCS method for both the nodal indices, as shown in Figure 8 and Figure 9, and the system indices, as shown in Figure 10 and Figure 12. In terms of computational efficiency, the ECE-IS needs seven iterations to extract 2000 samples representing the load curtailment events, so the total number of samples is 14,000. Hence, the ECE-IS can achieve accurate results with a smaller number of samples and a shorter computation time. As shown in Figure 6, an 11-times speedup is achieved.

Figure 10.

(a) System LOLP in case of using standard MCS method; (b) system LOLP in case of using CE-IS, SIS, SS, and ECE-IS approaches.

Figure 11.

(a) System LOLP convergence in case of using standard MCS method; (b) system LOLP convergence in case of using CE-IS, SIS, SS, and ECE-IS approaches.

Figure 12.

(a) System EPNS in case of using standard MCS method; (b) system EPNS in case of using CE-IS, SIS, SS, and ECE-IS approaches.

Figure 13.

(a) System EPNS convergence in case of using MCS method; (b) system EPNS convergence in case of using CE-IS, SIS, SS, and ECE-IS approaches.

To compare the robustness of the ECE-IS method with that of other rare-event simulation techniques, the system LOLP, the LOLP relative bias, and the LOLP convergence values are listed in Table 2 for different numbers of samples per iteration. Because the time needed to analyze a sample differs among the methods, the computation time is also included in Table 2. Taking the LOLP value (0.0102) obtained by the standard MCS method as the reference, the relative bias of each LOLP value is computed as its deviation from this reference, normalized by the reference value. The results in Table 2 show that convergence improves for all methods as the number of samples increases. However, the CE-IS and SS methods lose significant accuracy with a small number of samples, while the SIS achieves an acceptable LOLP bias at 1000 samples but poor convergence. In contrast, the ECE-IS remains effective for a small number of samples: it achieves a small LOLP bias (17%) with 21% convergence at 250 samples.
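The relative bias described above can be sketched as follows. The exact expression is not reproduced in this text, so the form used here (absolute deviation divided by the reference, in percent) is an assumption, and the function name is hypothetical.

```python
def relative_bias(estimate: float, reference: float) -> float:
    """Absolute deviation from the reference, as a percentage of the reference.

    Assumed definition; the paper's own expression is not available here.
    """
    if reference == 0:
        raise ValueError("reference must be non-zero")
    return abs(estimate - reference) / abs(reference) * 100.0


lolp_reference = 0.0102   # standard MCS value used as the reference
lolp_ece_is = 0.0099      # ECE-IS system LOLP quoted in the Discussion
print(f"{relative_bias(lolp_ece_is, lolp_reference):.1f}%")
```

With the system values quoted earlier in the Discussion, this gives a bias of about 2.9% for the ECE-IS estimate.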

Table 2.

Methods comparison for different numbers of samples.

To verify the accuracy and efficiency of the proposed method as the size of the power network and the number of random variables grow, adequacy indices are also computed for the IEEE-RTS 79 system. The system includes 24 buses and 32 generators distributed among 14 generating stations, totaling 3405 MW of installed capacity. The annual system peak load is 2850 MW. More information on the IEEE-RTS 79 can be found in [40]. The mean of each load was taken at its peak value, and the standard deviation as a fraction of the peak value. A coefficient of variation (convergence) of 5% for both system adequacy indices (LOLP and EPNS) is used as the stopping criterion. For the ECE-IS and MCS methods, Table 3 shows the system LOLP and EPNS indices, the number of samples, and the computation time. The computation time includes the time spent in the first stage extracting the rare load curtailment events. The ECE-IS method yields 0.00121 and 0.16 MW for LOLP and EPNS, respectively, whereas the standard MCS yields 0.00119 and 0.154 MW. Therefore, the ECE-IS attains the same accuracy as the standard MCS method for both system indices. Moreover, the ECE-IS method is considerably more efficient: it needs only a small fraction (23%) of the samples needed by the MCS. However, in contrast to MCS, the ECE-IS method, like other rare-event simulation techniques, comes with its own set of tuning parameters, namely the target coefficient of variation of the importance weight function and the number of samples per iteration. The tuning of these parameters affects the efficiency of the technique.
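To illustrate why shifting the sampling density makes rare events cheaper to estimate, the following toy sketch applies plain importance sampling to a one-dimensional Gaussian tail probability. It is not the ECE-IS algorithm and involves no power system model; the threshold, the shifted density N(threshold, 1), and all names are assumptions chosen for illustration only.

```python
import math
import random


def is_tail_probability(threshold: float, n_samples: int, seed: int = 0):
    """Estimate P(X > threshold) for X ~ N(0, 1) by importance sampling.

    Samples are drawn from N(threshold, 1) (an assumed, hand-picked density)
    and reweighted by the likelihood ratio phi(x) / phi(x - threshold).
    Returns the estimate and its coefficient of variation.
    """
    rng = random.Random(seed)
    total = 0.0
    total_sq = 0.0
    for _ in range(n_samples):
        x = rng.gauss(threshold, 1.0)
        if x > threshold:
            # phi(x) / phi(x - t) simplifies to exp(-t * x + t^2 / 2)
            w = math.exp(-threshold * x + 0.5 * threshold ** 2)
        else:
            w = 0.0  # sample lies outside the rare-event domain
        total += w
        total_sq += w * w
    mean = total / n_samples
    if mean == 0.0:
        return 0.0, float("inf")
    variance = max(total_sq / n_samples - mean ** 2, 0.0)
    cov = math.sqrt(variance / n_samples) / mean
    return mean, cov


threshold = 3.0
estimate, cov = is_tail_probability(threshold, 20_000)
exact = 0.5 * math.erfc(threshold / math.sqrt(2.0))
print(f"IS estimate: {estimate:.6f}  COV: {cov:.3%}  exact: {exact:.6f}")
```

In this toy case the coefficient of variation falls well below the 5% stopping criterion with 20,000 samples, because about half of the draws land in the rare-event domain. How well a shifted density works depends on how it is chosen, which is the role the tuning parameters mentioned above play in adaptive schemes.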

Table 3.

System adequacy indices for IEEE-RTS 79.

6. Conclusions

In this paper, a framework for evaluating adequacy indices in composite generation and transmission power systems has been proposed. The main purpose of the framework is to obtain accurate adequacy indices while improving on the computational efficiency of the standard MCS method. This is achieved by integrating rare-event simulation methods into the framework’s first stage to identify an approximately optimal distortion of the nodal generation and load distributions, which makes rare load curtailment events more likely to be drawn. Building on these methods, a new approach named ECE-IS is proposed that is more efficient and robust than other methods, such as the traditional CE-IS, SIS, and SS, in extracting the optimal distortion. The results of the framework’s second stage show that the proposed method evaluates the adequacy indices (LOLP and EPNS) accurately and improves their convergence in comparison with the other methods. Moreover, a substantial speed-up in computation time was obtained with respect to the standard MCS method.

Applying the proposed method to adequacy evaluation could make it feasible to use more detailed power system models that reflect real power system operation more accurately. The power system model could then include the impact of different network topologies (i.e., transmission network contingencies), the non-linearity of the power flow equations, and chronological characteristics of power systems, such as temporally and spatially correlated load models and the time dependency of renewable energy resources. The method can also be applied to many problems in both the operation and planning phases, such as the evaluation of spinning reserve margins and the integration of renewable energy resources. Using adequacy index assessments and knowledge of the critical nodes during system disturbances, planners can better manage the penetration of renewable sources, ensuring sustainable and reliable operation at both the system and nodal levels. In the context of power system operation, operators schedule the generating units and allocate sufficient generation reserve to keep the adequacy index (the probability of load curtailment) below the maximum allowed. These issues will be addressed in future work.

Author Contributions

Conceptualization, V.O. and T.S.H.; Data curation, A.A.M.; Formal analysis, V.O. and A.A.M.; Investigation, R.V. and M.N.I.; Methodology, V.O.; Software, A.A.M.; Supervision, V.O., M.N.I., and H.R.; Validation, M.N.I.; Visualization, V.O. and H.R.; Writing—original draft, A.A.M.; Writing—review & editing, V.O., T.S.H., M.N.I., and H.R. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Conflicts of Interest

The authors declare no conflict of interest.

Abbreviations

| CDF | – | Cumulative distribution function |

| CE-IS | – | Cross entropy-based importance sampling |

| ECE-IS | – | Enhanced cross entropy-based importance sampling |

| EPNS | – | Expected power not supplied |

| HL1 (2,3) | – | Hierarchical level 1 (2,3) |

| IS | – | Importance sampling |

| ISD | – | Importance sampling density |

| KL | – | Kullback–Leibler |

| LOEE | – | Loss of energy expectation |

| LOLE | – | Loss of load expectation |

| LOLH | – | Loss of load hours |

| LOLP | – | Loss of load probability |

| MCS | – | Monte Carlo simulation |

| OPF | – | Optimal power flow |

| PDF | – | Probability distribution function |

| SS | – | Subset simulation |

| Notations | | |

| | – | Probability of failure of m generators |

| q | – | Unavailability factor |

| | – | Binomial coefficient |

| | – | Load weight factor for the load of the node |

| | – | Curtailed load at the node |

| n | – | Number of nodes in the power system |

| | – | Power injection at the node |

| | – | Power flow from the node to the node |

| | – | Used generation at the node |

| | – | Load at the node |

| | – | Sum of power injections at all the nodes of the power system |

| | – | Sum of transmission lines power losses |

| | – | Power flows vector |

| | – | Power system incidence matrix reduced by one row |

| | – | Bus susceptance matrix |

| | – | Diagonal matrix made of transmission lines susceptance |

| | – | Vector of power injections |

| | – | Transmission line resistance [Ohm] |

| | – | Nominal voltage of a power system [kV] |

| | – | Power flow through line [MW] |

| | – | Maximum power flow from the node to the node |

| | – | Maximum power flow from the node d to the node |

| | – | Available generation at the node |

References

- Billinton, R.; Allan, R.N. Reliability Evaluation of Power Systems, 2nd ed.; Springer Science + Business Media New York: New York, NY, USA, 1996; ISBN 9788578110796.

- Poncela-Blanco, M.; Spisto, A.; Hrelja, N.; Gianluca, F. Generation Adequacy Methodologies Review; EUR 27944 EN; Joint Research Centre (JRC), European Union: Luxembourg, 2016.

- Tómasson, E. Impact of High Levels of Variable Renewable Energy on Power System Generation Adequacy. Ph.D. Thesis, KTH Royal Institute of Technology School of Electrical Engineering and Computer Science, Stockholm, Sweden, 2020.

- ENTSO-E. Mid-Term Adequacy Forecast 2019 Appendix 2 Methodology; ENTSO-E: Brussels, Belgium, 2019.

- Rubino, G.; Tuffin, B. Rare Event Simulation using Monte Carlo Methods; John Wiley & Sons, Ltd.: Chichester, UK, 2009; ISBN 9780470772690.

- Giuntoli, M.; Pelacchi, P.; Poli, D. Parallel computing of sequential MonteCarlo techniques for reliable operation of Smart Grids. In Proceedings of the IEEE EUROCON 2015 - International Conference on Computer as a Tool (EUROCON), Salamanca, Spain, 8–11 September 2015.

- Martinez, J.A.; Guerra, G. A parallel Monte Carlo method for optimum allocation of distributed generation. IEEE Trans. Power Syst. 2014, 29, 2926–2933.

- Ge, H.; Asgarpoor, S. Parallel Monte Carlo simulation for reliability and cost evaluation of equipment and systems. Electr. Power Syst. Res. 2011, 81, 347–356.

- Dias, J.A.S.; Borges, C.L.T. Object oriented model for composite reliability evaluation including time varying load and wind generation. In Proceedings of the 2010 IEEE 11th International Conference on Probabilistic Methods Applied to Power Systems, Singapore, Singapore, 14–17 June 2010.

- Da Rosa, M.A.; Leite Da Silva, A.M.; Miranda, V. Multi-agent systems applied to reliability assessment of power systems. Int. J. Electr. Power Energy Syst. 2012.

- Augusto da Rosa, M.; Henrique Barrosa Pinto de Miranda, V.; Martins Leite da Silva, A.; Porto, I. Agent-based Technology Applied to Power Systems Reliability; University of Porto: Porto, Portugal, 2009.

- Singh, C.; Wang, L. Role of artificial intelligence in the reliability evaluation of electric power systems. Turk. J. Electr. Eng. Comput. Sci. 2008, 16, 189–200.

- Leite da Silva, A.M.; de Resende, L.C.; da Fonseca Manso, L.A.; Miranda, V. Composite reliability assessment based on Monte Carlo simulation and artificial neural networks. IEEE Trans. Power Syst. 2007.

- Cai, J.; Xu, Q.; Cao, M.; Yang, B. A novel importance sampling method of power system reliability assessment considering multi-state units and correlation between wind speed and load. Int. J. Electr. Power Energy Syst. 2019.

- Zhao, Y.; Tang, Y.; Li, W.; Yu, J. Composite Power System Reliability Evaluation Based on Enhanced Sequential Cross-Entropy Monte Carlo Simulation. IEEE Trans. Power Syst. 2019, 34, 3891–3901.

- Hua, B.; Bie, Z.; Au, S.K.; Li, W.; Wang, X. Extracting rare failure events in composite system reliability evaluation via subset simulation. IEEE Trans. Power Syst. 2015, 30, 753–762.

- Kroese, D.P.; Taimre, T.; Botev, Z.I. Handbook of Monte Carlo Methods; John Wiley & Sons, Inc: Hoboken, NJ, USA, 2011; ISBN 9781118014967.

- Billinton, R.; Li, W. Reliability Assessment of Electric Power Systems Using Monte Carlo Methods, 1st ed.; Springer: New York, NY, USA, 1994; ISBN 9781489913487.

- Wang, Y.; Guo, C.; Wu, Q.; Dong, S. Adaptive sequential importance sampling technique for short-term composite power system adequacy evaluation. IET Gener. Transm. Distrib. 2014, 8, 730–741.

- Pindoriya, N.M.; Jirutitijaroen, P.; Srinivasan, D.; Singh, C. Composite reliability evaluation using Monte Carlo simulation and least squares support vector classifier. IEEE Trans. Power Syst. 2011.

- Urgun, D.; Singh, C.; Vittal, V. Importance Sampling Using Multilabel Radial Basis Classification for Composite Power System Reliability Evaluation. IEEE Syst. J. 2020.

- Urgun, D.; Singh, C. A Hybrid Monte Carlo Simulation and Multi Label Classification Method for Composite System Reliability Evaluation. IEEE Trans. Power Syst. 2019.

- Nannapaneni, S.; Mahadevan, S. Probability-space surrogate modeling for fast multidisciplinary optimization under uncertainty. Reliab. Eng. Syst. Saf. 2020.

- Schneider, F.; Papaioannou, I.; Ehre, M.; Straub, D. Polynomial chaos based rational approximation in linear structural dynamics with parameter uncertainties. Comput. Struct. 2020, 233, 106223.

- Kalinina, A.; Spada, M.; Vetsch, D.F.; Marelli, S.; Whealton, C.; Burgherr, P.; Sudret, B. Metamodeling for Uncertainty Quantification of a Flood Wave Model for Concrete Dam Breaks. Energies 2020, 13, 3685.

- Moustapha, M.; Sudret, B. Surrogate-assisted reliability-based design optimization: A survey and a unified modular framework. Struct. Multidiscip. Optim. 2019, 60, 2157–2176.

- Pereira, M.V.F.; Maceira, M.E.P.; Oliveira, G.C.; Pinto, L.M.V.G. Combining analytical models and monte-carlo techniques in probabilistic power system analysis. IEEE Trans. Power Syst. 1992.

- Billinton, R.; Jonnavithula, A. Composite system adequacy assessment using sequential Monte Carlo simulation with variance reduction techniques. IEE Proc. Gener. Transm. Distrib. 1997.

- Zhang, F.; Lu, Z.; Cui, L.; Song, S. Reliability sensitivity algorithm based on stratified importance sampling method for multiple failure modes systems. Chin. J. Aeronaut. 2010.

- Papaioannou, I.; Straub, D. Combination line sampling for structural reliability analysis. Struct. Saf. 2020, 88, 102025.

- Song, J.; Wei, P.; Valdebenito, M.; Beer, M. Active learning line sampling for rare event analysis. Mech. Syst. Signal. Process. 2020, 147, 107113.

- Song, S.; Lu, Z.; Song, Z. Reliability sensitivity analysis involving correlated random variables by directional sampling. In Proceedings of the 2011 International Conference on Quality, Reliability, Risk, Maintenance, and Safety Engineering (ICQR2MSE 2011), Xi’an, China, 17–19 June 2011.

- González-Fernández, R.A.; Leite Da Silva, A.M.; Resende, L.C.; Schilling, M.T. Composite systems reliability evaluation based on Monte Carlo simulation and cross-entropy methods. IEEE Trans. Power Syst. 2013, 28, 4598–4606.

- Cao, Q.D.; Choe, Y. Cross-entropy based importance sampling for stochastic simulation models. Reliab. Eng. Syst. Saf. 2019.

- Papaioannou, I.; Papadimitriou, C.; Straub, D. Sequential importance sampling for structural reliability analysis. Struct. Saf. 2016, 62, 66–75.

- Wagner, F.; Latz, J.; Papaioannou, I.; Ullmann, E. Multilevel Sequential Importance Sampling for Rare Event Estimation. SIAM J. Sci. Comput. 2020.

- Valiev, R.; Gusev, S.; Oboskalov, V. Mathematical models and optimal load shedding strategies for power system generation adequacy problem. In Proceedings of the 2017 14th International Conference on Engineering of Modern Electric Systems (EMES), Oradea, Romania, 1–2 June 2017; pp. 41–46.

- Zhang, Z.; Yang, H.; Yin, X.; Han, J.; Wang, Y.; Chen, G. A load-shedding model based on sensitivity analysis in on-line power system operation risk assessment. Energies 2018, 11, 727.

- Pourbeik, P.; Hiskens, I.A.; Gou, B.; Huang, J.; Boyer, R.; Illian, H.F.; Orths, A.; Eriksen, P.B.; Hansen, A.B.; Massucco, S.; et al. Review of the Current Status of Tools and Techniques for Risk-Based and Probabilistic Planning in Power Systems, 1st ed.; Cigre: Paris, France, 2010; ISBN 978-2-85873-122-0.

- Probability Methods Subcommittee. IEEE reliability test system. IEEE Trans. Power Appar. Syst. 1979, 98, 2047–2054.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).