1. Introduction

Large volumes of multivariate data are being collected by monitoring natural and social processes. IBM estimates that we all generate 175 zettabytes of data every day. Moreover, data are being collected at a rapidly increasing rate: it is estimated that 90% of data has been generated in the last two years. The need to extract useful information from continuously generated data sets drives demand for data specialists and the development of robust analysis methods.

Data analytics is inconceivable without testing the goodness of fit hypothesis. The primary task of a data analyst is to become familiar with the data sets received. This usually starts by identifying the distribution of the data. Then, the assumption that the data follow a normal distribution is usually tested. Since 1990, many tests have been developed to test this assumption, mostly for univariate data.

It is important to use powerful goodness of fit tests to check the assumption of normality because the alternative distribution is generally not known. Based on the outcome of normality verification, one can choose suitable analysis methods (parametric or non-parametric) for further investigation. From the end of the 20th century to the present day, multivariate goodness of fit tests have been developed by a number of authors [1,2,3,4,5,6,7,8,9,10,11,12,13,14]. Some of the most popular and commonly used multivariate tests are Chi-Square [8], Cramer von Mises [2], Anderson-Darling [2], and Royston [3].

Checking the assumption of normality is more complex for multivariate data than for univariate data, and additional data processing is required (e.g., standardization). The development of multivariate tests is also more complex because the properties of invariance and consistency must be checked, whereas for univariate tests the invariance property is always satisfied. The properties of invariance and consistency are presented in Section 2 and are discussed in more detail in [2,12,15].

The study aims to perform a power analysis of multivariate goodness of fit tests for the assumption of normality, to compare the performance of the proposed test with that of other well-known tests, and to apply the multivariate tests to real data. The power estimation procedure is discussed in [16].

Scientific novelty. A power analysis of multivariate goodness of fit tests was performed for different data sets. The tests were selected as representatives of popular techniques that have been analyzed experimentally by other researchers. In addition, we propose a new multivariate test based on the mean absolute deviation of the empirical distribution density from the theoretical distribution density. In this test, the density estimate is derived using an inversion formula, which is presented in Section 3.

The rest of the paper is organized as follows. Section 2 defines the tests for the comparative multivariate test power study. Section 3 presents details of our proposed test. Section 4 presents the data distributions used for experimental test power evaluation. Section 5 presents and discusses the results of simulation modeling. Section 6 discusses the application of multivariate goodness of fit hypothesis tests to real data. Finally, the conclusions and recommendations are given in Section 7.

2. Multivariate Tests for Normality

We denote the $p$-variate normal distribution as $N_p(\mu, \Sigma)$, where $\mu$ is an expectation vector and $\Sigma$ is the nonsingular covariance matrix. $\mathcal{N}_p$ indicates the set of all possible $p$-variate normal distributions. Let $X_1, \dots, X_n$, where $X_j = (X_{1j}, \dots, X_{pj})^T$ and $j = 1, \dots, n$, be a finite sample generated by a random $p$-variate (column) vector $X$ with distribution function $F_X$. The mean vector $\bar{X}$ is given by

$$\bar{X} = \frac{1}{n}\sum_{j=1}^{n} X_j,$$

where $n$ is the sample size, and the sample covariance matrix is

$$S = \frac{1}{n}\sum_{j=1}^{n} (X_j - \bar{X})(X_j - \bar{X})^T.$$

To assess multivariate normality of $X$ (based on the observed sample $X_1, \dots, X_n$), many statistical tests have been developed. Before reviewing the specific tests selected for this study, let us consider two essential properties. The set $\mathcal{N}_p$ is closed with respect to affine transformations, i.e.,

$$F_X \in \mathcal{N}_p \;\Rightarrow\; F_{AX+b} \in \mathcal{N}_p$$

for any translation vector $b \in \mathbb{R}^p$ and any nonsingular matrix $A \in \mathbb{R}^{p \times p}$. Thus, a reasonable statistic $T$ for checking the null hypothesis ($H_0 : F_X \in \mathcal{N}_p$) of multivariate normality should have the same value for a sample and its affine transforms, that is

$$T(AX_1 + b, \dots, AX_n + b) = T(X_1, \dots, X_n). \quad (1)$$

An invariant test has a statistic which satisfies condition (1). It might seem that a test based on a standardized sample $Z_j = S^{-1/2}(X_j - \bar{X})$, $j = 1, \dots, n$, is invariant; however, Henze and Zirkler [2] note that this is not always the case. In practice, for a given sample $X_1, \dots, X_n$ the alternative distribution is not known. In such a case it is important to use a test for which the probability of correctly rejecting $H_0$ tends to one as $n \to \infty$. Such a test is said to be consistent. For a more elaborate discussion of these properties we refer the reader to [2]. Other important notation is given in Appendix A.
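The invariance condition can be checked numerically for a simple statistic. The following is a minimal sketch, assuming the squared Mahalanobis radii of the sample as the statistic and a covariance estimate normalised by $1/n$; the function name `squared_radii` and the particular matrix `A` and vector `b` are illustrative choices, not taken from the paper.

```python
import numpy as np

def squared_radii(X):
    """Squared Mahalanobis radii of the rows of X (n x p) about the sample mean."""
    Xc = X - X.mean(axis=0)
    S = Xc.T @ Xc / X.shape[0]          # covariance with 1/n normalisation (assumption)
    return np.einsum("ij,ij->i", Xc @ np.linalg.inv(S), Xc)

rng = np.random.default_rng(0)
X = rng.normal(size=(50, 3))

# Illustrative nonsingular matrix A and translation vector b
A = rng.normal(size=(3, 3)) + 3.0 * np.eye(3)
b = rng.normal(size=3)
Y = X @ A.T + b                          # rows are y_j = A x_j + b

r2_X = squared_radii(X)
r2_Y = squared_radii(Y)
max_diff = np.max(np.abs(r2_X - r2_Y))   # zero up to rounding error
print(max_diff)
```

Any statistic that depends on the sample only through these radii inherits the invariance shown here.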

2.1. Tests Based on Squared Radii

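This section reviews the properties of several measures of squared radii concerning their use for assessing multivariate normality. The squared radii, defined as

$$r_j^2 = (X_j - \bar{X})^T S^{-1} (X_j - \bar{X}), \quad j = 1, \dots, n,$$

have a distribution which, under normality and with $S$ normalised by $1/n$, is $(n-1)$ times a $\mathrm{Beta}(p/2, (n-p-1)/2)$ distribution [9]. Under $H_0$, the distribution of $r_j^2$ is approximately $\chi^2_p$ for large $n$.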

2.1.1. Chi-Squared (CHI2)

In 1981, Moore and Stubblebine presented a multivariate Chi-Squared goodness of fit test based on order statistics [8]. The statistic of the test is defined as

where

Since

takes the equivalent form [

8]:

where

is the probability distribution function of

.

.

2.1.2. Cramer-Von Mises (CVM)

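In 1982, Koziol proposed a Cramer-von Mises-type multivariate goodness of fit test based on order statistics [2]. This test statistic is defined as

$$CVM = \frac{1}{12n} + \sum_{j=1}^{n} \left( G_p\big(r_{(j)}^2\big) - \frac{2j-1}{2n} \right)^2,$$

where $G_p$ is the distribution function of the $\chi^2_p$ distribution and $r_{(1)}^2 \le \dots \le r_{(n)}^2$ are the order statistics of the squared radii.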

2.1.3. Anderson-Darling (AD)

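In 1987, Paulson, Roohan and Sullo proposed an Anderson-Darling-type multivariate goodness of fit test based on order statistics [2]. The test statistic is defined as

$$AD = -n - \frac{1}{n} \sum_{j=1}^{n} (2j-1) \left[ \ln G_p\big(r_{(j)}^2\big) + \ln\Big(1 - G_p\big(r_{(n+1-j)}^2\big)\Big) \right],$$

where $G_p$ is the distribution function of the $\chi^2_p$ distribution and $r_{(1)}^2 \le \dots \le r_{(n)}^2$ are the order statistics of the squared radii.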
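The two radii-based statistics of this subsection can be sketched in a few lines. The code below is an illustration only: it assumes the standard computing formulas for Cramer-von Mises and Anderson-Darling statistics applied to the ordered squared radii transformed by the $\chi^2_p$ distribution function, and a covariance estimate normalised by $1/n$. The function name `radii_gof_statistics` is hypothetical.

```python
import numpy as np
from scipy.stats import chi2

def radii_gof_statistics(X):
    """Return (CvM, AD) type statistics computed from ordered squared radii."""
    X = np.asarray(X, dtype=float)
    n, p = X.shape
    Xc = X - X.mean(axis=0)
    S = Xc.T @ Xc / n                                  # 1/n normalisation (assumption)
    r2 = np.einsum("ij,ij->i", Xc @ np.linalg.inv(S), Xc)
    u = np.sort(chi2.cdf(r2, df=p))                    # transformed, ordered radii
    u = np.clip(u, 1e-12, 1.0 - 1e-12)                 # guard the logarithms
    j = np.arange(1, n + 1)
    cvm = 1.0 / (12 * n) + np.sum((u - (2 * j - 1) / (2 * n)) ** 2)
    ad = -n - np.mean((2 * j - 1) * (np.log(u) + np.log1p(-u[::-1])))
    return cvm, ad

rng = np.random.default_rng(1)
cvm, ad = radii_gof_statistics(rng.normal(size=(100, 2)))
print(cvm, ad)
```

Critical values are not included; in a power study they would typically be obtained by simulation under the null hypothesis.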

2.2. Tests Based on Skewness and Kurtosis

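This section reviews the properties of several measures of multivariate skewness and kurtosis regarding their use as statistics for assessing multivariate normality [2]. The skewness and kurtosis are defined as

$$b_{1,p} = \frac{1}{n^2}\sum_{i=1}^{n}\sum_{j=1}^{n} m_{ij}^3, \qquad b_{2,p} = \frac{1}{n}\sum_{i=1}^{n} m_{ii}^2,$$

where $m_{ij} = (X_i - \bar{X})^T S^{-1} (X_j - \bar{X})$.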

2.2.1. Doornik-Hansen (DH)

In 2008, Doornik and Hansen proposed a new multivariate goodness of fit test based on the skewness and kurtosis of multivariate data transformed to ensure independence [6]. The Doornik-Hansen test statistic is defined as the sum of squared transformations of the skewness and kurtosis. Approximately, the test statistic follows a $\chi^2_{2p}$ distribution

where

and

are defined as

where

2.2.2. Royston (Roy)

In 1982, Royston proposed a test that uses the Shapiro-Wilk/Shapiro-Francia statistic to test multivariate normality. If the kurtosis of the sample is greater than 3, then it uses the Shapiro-Francia test for leptokurtic distributions. Otherwise it uses the Shapiro-Wilk test for platykurtic distributions [3,5]. Let

be the

Shapiro-Wilk/

Shapiro-Francia test statistic for the

th variable (

) and

be the values obtained from the normality transformation [

3,

5].

Thus, it is observed that

and

change with the sample size. The transformed values of each random variable are obtained by [

3,

5]

where

,

and

are derived from the polynomial approximations. The polynomial coefficients are provided for different [

3,

5]:

The Royston’s test statistic for multivariate normality is defined as

where

is the equivalent degrees of freedom,

is the cumulative distribution function for the standard normal distribution such that,

Let $R$ be the correlation matrix and $r_{ij}$ be the correlation between the $i$th and $j$th variables. Then, the extra term $\bar{c}$ is found by

where

When

and

, then

can be defined as

where

,

and

are the unknown parameters, which are estimated by Ross modeling [

4]. It was found that

and

for sample size

and

is a cubic function

2.2.3. Mardia (Mar1 and Mar2)

In 1970, K.V. Mardia proposed a new multivariate goodness of fit test based on skewness and kurtosis. The statistic for this test is defined as [

17]
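As an illustration, Mardia's two tests can be sketched as follows. The sketch assumes the usual large-sample forms: $n b_{1,p}/6$ compared with a $\chi^2$ distribution with $p(p+1)(p+2)/6$ degrees of freedom for skewness (Mar1), and $(b_{2,p} - p(p+2))/\sqrt{8p(p+2)/n}$ compared with the standard normal distribution for kurtosis (Mar2). The function name `mardia_tests` and the $1/n$ covariance normalisation are assumptions made for this example.

```python
import numpy as np
from scipy.stats import chi2, norm

def mardia_tests(X):
    """Return (Mar1, p-value, Mar2, p-value) for the rows of X (n x p)."""
    X = np.asarray(X, dtype=float)
    n, p = X.shape
    Xc = X - X.mean(axis=0)
    S = Xc.T @ Xc / n                          # 1/n normalisation (assumption)
    M = Xc @ np.linalg.inv(S) @ Xc.T           # matrix of m_ij
    b1 = np.sum(M ** 3) / n ** 2               # multivariate skewness
    b2 = np.mean(np.diag(M) ** 2)              # multivariate kurtosis
    mar1 = n * b1 / 6.0
    df = p * (p + 1) * (p + 2) / 6.0
    p_skew = chi2.sf(mar1, df)
    mar2 = (b2 - p * (p + 2)) / np.sqrt(8.0 * p * (p + 2) / n)
    p_kurt = 2.0 * norm.sf(abs(mar2))          # two-sided
    return mar1, p_skew, mar2, p_kurt

rng = np.random.default_rng(3)
X_skewed = rng.exponential(size=(500, 3))      # clearly asymmetric data
mar1, p_skew, mar2, p_kurt = mardia_tests(X_skewed)
print(mar1, p_skew, mar2, p_kurt)
```

Small-sample corrections to these statistics exist but are not included in this sketch.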

2.3. Other Tests

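This section reviews the properties of several measures of non-negative functional distance, a covariance matrix and Energy distance concerning their use as statistics for assessing multivariate normality. A non-negative functional distance that measures the distance between two functions is defined as

$$D_\beta = \int_{\mathbb{R}^p} \left| \psi_n(t) - \phi(t) \right|^2 \varphi_\beta(t)\, dt,$$

where $\phi(t) = \exp(-\|t\|^2/2)$ is the characteristic function of the multivariate standard normal, $\psi_n(t) = \frac{1}{n}\sum_{j=1}^{n} \exp(i t^T Z_j)$ is the empirical characteristic function of the standardised observations $Z_j$, and $\varphi_\beta(t)$ is a kernel (weighting) function

$$\varphi_\beta(t) = (2\pi\beta^2)^{-p/2} \exp\left( -\frac{\|t\|^2}{2\beta^2} \right),$$

where $t \in \mathbb{R}^p$ and $\beta > 0$ is a smoothing parameter that needs to be selected [10].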

2.3.1. Energy (Energy)

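In 2013, G. Szekely and M. Rizzo introduced a new multivariate goodness of fit test based on the Energy distance between multivariate distributions. The statistic for this test is defined as [18]

$$E = n\left( \frac{2}{n}\sum_{j=1}^{n} \mathbb{E}\|y_j - Z\| - \mathbb{E}\|Z - Z'\| - \frac{1}{n^2}\sum_{j=1}^{n}\sum_{k=1}^{n} \|y_j - y_k\| \right),$$

where $y_j = S^{-1/2}(X_j - \bar{X})$, $j = 1, \dots, n$, are called the scaled residuals. $Z$ and $Z'$ are independent random vectors distributed according to the standard normal distribution. $\mathbb{E}\|Z - Z'\| = 2\,\Gamma\big(\tfrac{p+1}{2}\big)/\Gamma\big(\tfrac{p}{2}\big)$, where $\Gamma(\cdot)$ is the Gamma function. The null hypothesis is rejected when $E$ acquires large values.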
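A possible way to evaluate an energy-type statistic for normality is sketched below. It assumes the form $n\,(2\,\overline{\mathbb{E}\|y_j - Z\|} - \mathbb{E}\|Z - Z'\| - \overline{\|y_j - y_k\|})$ on the standardised sample, and it evaluates $\mathbb{E}\|a - Z\|$ through the confluent hypergeometric function (the mean of a noncentral chi distribution). That route, the $1/n$ covariance normalisation and both function names are choices made for this illustration rather than the procedure of [18].

```python
import numpy as np
from scipy.special import gammaln, hyp1f1

def mean_dist_to_std_normal(a_norm2, p):
    """E||a - Z|| for Z ~ N_p(0, I), as a function of ||a||^2."""
    c = np.sqrt(2.0) * np.exp(gammaln((p + 1) / 2.0) - gammaln(p / 2.0))
    return c * hyp1f1(-0.5, p / 2.0, -0.5 * np.asarray(a_norm2, dtype=float))

def energy_statistic(X):
    """Energy-type statistic for multivariate normality of the rows of X."""
    X = np.asarray(X, dtype=float)
    n, p = X.shape
    Xc = X - X.mean(axis=0)
    S = Xc.T @ Xc / n                                   # 1/n normalisation (assumption)
    G = Xc @ np.linalg.inv(S) @ Xc.T
    d2 = np.diag(G)                                     # ||y_j||^2
    D2 = np.maximum(d2[:, None] + d2[None, :] - 2.0 * G, 0.0)
    np.fill_diagonal(D2, 0.0)                           # ||y_j - y_k||^2
    term_yz = 2.0 * np.mean(mean_dist_to_std_normal(d2, p))
    term_zz = 2.0 * np.exp(gammaln((p + 1) / 2.0) - gammaln(p / 2.0))
    term_yy = np.sqrt(D2).sum() / n ** 2
    return n * (term_yz - term_zz - term_yy)

rng = np.random.default_rng(5)
X_energy = rng.normal(size=(80, 3))
e_value = energy_statistic(X_energy)
print(e_value)
```

Because the statistic uses only Mahalanobis-type distances, the particular square root of $S^{-1}$ used for standardisation does not affect its value.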

2.3.2. Lobato-Velasco (LV)

In 2004, I. Lobato and C. Velasco improved the Jarque and Bera test and applied it to stationary processes. The statistic for this test is defined as [

19]

where

is an auto-covariance function.

2.3.3. Henze-Zirkler (HZ)

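In 1990, Henze and Zirkler introduced the HZ test [1]. The statistic for this test is defined as

$$HZ_\beta = \frac{1}{n}\sum_{i=1}^{n}\sum_{j=1}^{n} e^{-\frac{\beta^2}{2} D_{ij}} - 2(1+\beta^2)^{-p/2}\sum_{i=1}^{n} e^{-\frac{\beta^2}{2(1+\beta^2)} D_i} + n(1+2\beta^2)^{-p/2},$$

where $D_{ij} = (X_i - X_j)^T S^{-1}(X_i - X_j)$, $D_i = (X_i - \bar{X})^T S^{-1}(X_i - \bar{X})$. $D_i$ gives the squared Mahalanobis distance of the $i$th observation to the centroid and $D_{ij}$ gives the squared Mahalanobis distance between the $i$th and $j$th observations. If the sample follows a multivariate normal distribution, the test statistic is approximately log-normally distributed with mean [1]

$$\mu = 1 - a^{-p/2}\left(1 + \frac{p\beta^2}{a} + \frac{p(p+2)\beta^4}{2a^2}\right)$$

and variance [1]

$$\sigma^2 = 2(1+4\beta^2)^{-p/2} + 2a^{-p}\left(1 + \frac{2p\beta^4}{a^2} + \frac{3p(p+2)\beta^8}{4a^4}\right) - 4w_\beta^{-p/2}\left(1 + \frac{3p\beta^4}{2w_\beta} + \frac{p(p+2)\beta^8}{2w_\beta^2}\right),$$

where $a = 1 + 2\beta^2$ and $w_\beta = (1+\beta^2)(1+3\beta^2)$. Henze and Zirkler also proposed an optimal choice of the parameter $\beta$ in using $HZ_\beta$ in the $p$-variate case as [1]

$$\beta^* = \frac{1}{\sqrt{2}}\left(\frac{n(2p+1)}{4}\right)^{1/(p+4)}.$$

A drawback of the Henze-Zirkler test is that, when $H_0$ is rejected, the nature of the violation of normality is generally not straightforward to identify. Thus, many biomedical researchers would prefer a more informative and equally or more powerful test than the Henze-Zirkler test [5].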
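The Henze-Zirkler procedure can be sketched as follows. This is a minimal illustration that assumes the commonly used computing form of the statistic, the smoothing parameter $\beta = \frac{1}{\sqrt{2}}\big(n(2p+1)/4\big)^{1/(p+4)}$, a covariance estimate normalised by $1/n$, and a log-normal approximation matched to the null mean and variance. The function name `henze_zirkler` is hypothetical.

```python
import numpy as np
from scipy.stats import lognorm

def henze_zirkler(X):
    """Return (HZ statistic, approximate p-value) for the rows of X (n x p)."""
    X = np.asarray(X, dtype=float)
    n, p = X.shape
    Xc = X - X.mean(axis=0)
    S = Xc.T @ Xc / n                                   # 1/n normalisation (assumption)
    G = Xc @ np.linalg.inv(S) @ Xc.T
    Di = np.diag(G)                                     # squared distances to centroid
    Dij = Di[:, None] + Di[None, :] - 2.0 * G           # squared pairwise distances
    beta = (1.0 / np.sqrt(2.0)) * ((2 * p + 1) * n / 4.0) ** (1.0 / (p + 4))
    b2 = beta ** 2
    hz = (np.exp(-0.5 * b2 * Dij).sum() / n
          - 2.0 * (1 + b2) ** (-p / 2) * np.exp(-b2 / (2 * (1 + b2)) * Di).sum()
          + n * (1 + 2 * b2) ** (-p / 2))
    a = 1 + 2 * b2
    w = (1 + b2) * (1 + 3 * b2)
    mu = 1 - a ** (-p / 2) * (1 + p * b2 / a + p * (p + 2) * b2 ** 2 / (2 * a ** 2))
    var = (2 * (1 + 4 * b2) ** (-p / 2)
           + 2 * a ** (-p) * (1 + 2 * p * b2 ** 2 / a ** 2
                              + 3 * p * (p + 2) * b2 ** 4 / (4 * a ** 4))
           - 4 * w ** (-p / 2) * (1 + 3 * p * b2 ** 2 / (2 * w)
                                  + p * (p + 2) * b2 ** 4 / (2 * w ** 2)))
    # Log-normal parameters matched to the null mean and variance
    s2 = np.log1p(var / mu ** 2)
    m = np.log(mu) - 0.5 * s2
    p_value = lognorm.sf(hz, s=np.sqrt(s2), scale=np.exp(m))
    return hz, p_value

rng = np.random.default_rng(7)
X_hz = rng.exponential(size=(300, 2))                   # non-normal data
hz_value, hz_p = henze_zirkler(X_hz)
print(hz_value, hz_p)
```

The $n \times n$ matrix of pairwise distances makes this sketch quadratic in memory, which is acceptable for the moderate sample sizes typical of power studies.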

2.3.4. Nikulin-Rao-Robson (NRR) and Dzhaparidze-Nikulin (DN)

In 1981, Moore and Stubblebine suggested a multivariate Nikulin-Rao-Robson (NRR) goodness of fit test [

7,

8]. This test statistic for a covariance matrix of any dimension is defined as

where

is a vector of standardized cell frequencies with components

where

is the number of random vectors

falling into

. Then the limiting covariance matrix of standardized frequencies is

, where

is the

matrix with elements

where

is a

r-vector with its entries as

, 𝕞

is the number of unknown parameters,

is the Fisher information matrix of size

for one observation, which is evaluated as

where

is the

covariance matrix of

(a vector of the entries of

arranged column-wise by taking the upper triangular elements) [

7]:

The second term of

recovers information lost due to data grouping. Another useful decomposition of

is defined as

where

is the multivariate statistic defined by Dzhaparidze and Nikulin (1974) [

7]. It is defined as

and in 1985, McCulloch presented a multivariate test statistic [

7]:

If rank , then and are asymptotically independent and distributed in the limit as and , respectively.

3. The New Test

Our test is based on a distribution distance and has been derived using an inversion formula. The estimation of the sample distribution density is based on the application of the characteristic function and the inversion formula. This method is known for its good properties (i.e., low sensitivity) and was introduced in [20]. Marron and Wand [21] carried out an extensive comparison of density estimation methods (including the adapted kernel method) and concluded that density estimation based on the application of the characteristic function and inversion is more accurate for non-Gaussian data sets.

The random

-variate vector

, which follows a distribution of a mixture model has a density function

where

is the number of clusters (i.e., components, classes) of the mixture, and

is the a priori probability which satisfy

The is a distribution of the th class and is a set of parameters . We denote the -variate sample of independent and identically distributed random values .

When examining approximations of parametric methods, it should be emphasized that as the data dimension increases, the number of model parameters increases rapidly, making it more difficult to find accurate parameter estimates. It is much easier to find density of univariate data projections

than multivariate data density

because of mutually unambiguous compliance.

It is quite natural to try to find the multivariate density

using the density estimates

of univariate observational projections [

20]. In case of Gaussian mixture model, the projection of the observations (15) is also distributed according to the Gaussian mixture model:

where

is univariate Gaussian density. The parameter set

θ of the multivariate mixture and the distribution parameters of the data projections

,

are related by equations:

The inversion formula is used

where

where

denotes the characteristic function of the random variable

. Given that

,

and by changing the variables to a spherical coordinate system we obtain

where the first integral is the surface integral of the unit sphere. The characteristic function of the projection of the observed random variable is

and has the property

By selecting the set

of uniform distributed directions on the sphere and replacing the characteristic function with its estimate, a density estimate is obtained [

20,

22]:

where

denotes a size of set

. Using the

p-variate ball volume formula

the constant

defined as

Computer simulation studies have shown that the density estimates obtained using the inversion formula are not smooth. Therefore, in Formula (24), an additional multiplier

is used. This multiplier smoothes the estimate

with the Gaussian kernel function. Moreover, this form of the multiplier allows the integral value to be calculated analytically. Monte Carlo studies have shown that its use significantly reduces the error of estimates. Formula (24) can be used to estimate the characteristic function of the projected data. Let us consider two approaches. The first one is based on the density approximation of the Gaussian distribution mixture model. In this case, the parametric estimate of the characteristic function is used:

By substituting

in (24) by (27), we get

where

We note that only the real part of the expression is considered here (the sum of the imaginary parts must be equal to zero); in other words, the density estimate can take only real values. The chosen form of the smoothing multiplier allows relating the smoothing parameter to the variances of the projection clusters, i.e., in the calculations the variances are simply increased by . Next, the expression (29) is evaluated.

Let

then (29) can be written as

By integrating in parts, we get

is expressed analogously. With respect to the limitations of the

j index, the following recursive equations are obtained:

The initial function

is found by starting with the relation

From (35) and (38) it follows that

satisfies the differential equation

which is solved by writing down

as the Taylor series:

By equating the coefficients of the same powers, its values are obtained:

which gives us

is found from expression (30):

The value of the integral (24) then is

One of the disadvantages of the inversion formula method (defined by (24)) is that the Gaussian distribution mixture model (13) described by this estimate (for ) does not represent the density accurately, except around observations. When approximating the density under study with a mixture of Gaussian distributions, the estimation of the density using the inversion formula often becomes complicated due to a large number of components. Thus, we merge components with small a priori probabilities into one noise cluster.
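The role of the Gaussian smoothing multiplier in an inversion formula can be illustrated in one dimension. The sketch below is not the projection-based multivariate estimator of this section. It only shows, under the assumption of a univariate sample and a multiplier of the form $e^{-h^2 t^2/2}$, that inverting the smoothed empirical characteristic function numerically reproduces a Gaussian kernel density estimate with bandwidth $h$. All names (`density_by_inversion`, `gaussian_kde_direct`, `h`, `t_max`) are hypothetical.

```python
import numpy as np

def density_by_inversion(x, sample, h, t_max=60.0, n_t=6001):
    """Invert the smoothed empirical characteristic function on a grid of t."""
    t = np.linspace(-t_max, t_max, n_t)
    ecf = np.exp(1j * np.outer(t, sample)).mean(axis=1)    # empirical char. function
    damp = np.exp(-0.5 * (h * t) ** 2)                      # Gaussian smoothing multiplier
    integrand = np.exp(-1j * np.outer(x, t)) * (ecf * damp)
    dt = t[1] - t[0]
    integral = (integrand[:, 1:] + integrand[:, :-1]).sum(axis=1) * dt / 2.0  # trapezoid
    return integral.real / (2.0 * np.pi)                    # imaginary part vanishes

def gaussian_kde_direct(x, sample, h):
    """Gaussian kernel density estimate with bandwidth h, evaluated directly."""
    u = (x[:, None] - sample[None, :]) / h
    return np.exp(-0.5 * u ** 2).mean(axis=1) / (h * np.sqrt(2.0 * np.pi))

rng = np.random.default_rng(11)
sample = rng.normal(size=40)
x_grid = np.linspace(-3.0, 3.0, 25)
f_inv = density_by_inversion(x_grid, sample, h=0.4)
f_kde = gaussian_kde_direct(x_grid, sample, h=0.4)
print(np.max(np.abs(f_inv - f_kde)))
```

The agreement reflects the fact that multiplying a characteristic function by a Gaussian factor corresponds to adding a fixed amount to the variance of each component, in line with the remark above about increasing the cluster variances.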

We have developed and examined a modification of the algorithm which is based on the use of a multivariate Gaussian distribution mixture model. The parametric estimate of the characteristic function of uniform distribution density is defined as

in the inversion Formula (19). In the density estimate calculation Formula (24), the estimation of the characteristic function is constructed as a union of the characteristic functions of a mixture of Gaussian distributions and uniform distribution with corresponding a priori probabilities:

where the second member describes uniformly distributed noise cluster,

—noise cluster weight,

,

. Based on the established estimates of the parameters of the uniform distribution and data projections, it is possible to define the range

By inserting (46) to (24) we obtain

Using notations such as (28), we define the density estimate as

where

is given in (29) which is evaluated by (44) and

By integrating, we get

where

and

are defined in (30) and (31). Then the integral (51) evaluates to

The above procedure is called a modified inversion formula density estimate. Our proposed normality test is based on the distance function

where

is a standardized value,

is an estimate of density function.

The choice of G(z) in (54) is influenced by three aspects [23]:

G(z) assigns high weight where |f(z)−| is large, f(z) being the distribution density under the alternative hypothesis.

G(z) gives high weight where the density estimate is a relatively precise estimator of f(z).

G(z) is such that the integral (54) has a closed form.

For the

distribution free method, the first two aspects are fulfilled by adequately selecting the smoothness parameter

, in addition it yields a closed (54) integral form

does not depend on a moderate sample volume (

32) but depends on the data dimension. It is convenient to use the test statistics

which had the lowest sensitivity based on the exploratory study. Under the null hypothesis statistic

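approximately follows the Johnson $S_U$ distribution, which is specified by the shape ($\gamma$, $\delta$), scale ($\lambda$), location ($\xi$) parameters and has the density function

$$f(x) = \frac{\delta}{\lambda\sqrt{2\pi}} \frac{1}{\sqrt{1 + \left(\frac{x-\xi}{\lambda}\right)^2}} \exp\left( -\frac{1}{2}\left[ \gamma + \delta \sinh^{-1}\left(\frac{x-\xi}{\lambda}\right) \right]^2 \right),$$

where $\delta > 0$, $\lambda > 0$.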
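Once the Johnson $S_U$ parameters are known, a p-value for an observed statistic can be read off the fitted distribution. The sketch below assumes that large values of the statistic indicate departure from normality, and it uses `scipy.stats.johnsonsu`, whose shape arguments `a` and `b` correspond to $\gamma$ and $\delta$, with `loc` $= \xi$ and `scale` $= \lambda$. The parameter values are made up for illustration and are not the estimates from Table 1.

```python
import numpy as np
from scipy.stats import johnsonsu

def johnson_su_pdf(x, gamma, delta, xi, lam):
    """Johnson S_U density written out explicitly."""
    z = (np.asarray(x, dtype=float) - xi) / lam
    return (delta / (lam * np.sqrt(2.0 * np.pi) * np.sqrt(1.0 + z ** 2))
            * np.exp(-0.5 * (gamma + delta * np.arcsinh(z)) ** 2))

def upper_tail_p_value(t, gamma, delta, xi, lam):
    """P(T >= t) under a Johnson S_U null distribution."""
    return johnsonsu.sf(t, gamma, delta, loc=xi, scale=lam)

# Hypothetical parameter values, for illustration only
params = dict(gamma=-1.5, delta=2.0, xi=0.1, lam=0.5)

x_check = np.linspace(-3.0, 3.0, 13)
pdf_manual = johnson_su_pdf(x_check, **params)
pdf_scipy = johnsonsu.pdf(x_check, params["gamma"], params["delta"],
                          loc=params["xi"], scale=params["lam"])
p_val = upper_tail_p_value(1.2, **params)
print(p_val)
```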

In the middle of the twentieth century, N. L. Johnson [24] proposed certain systems of curves derived by the method of translation, which retain most of the advantages and eliminate some of the drawbacks of the systems first based on this method. Johnson introduced the log-normal ($S_L$), bounded ($S_B$), and unbounded ($S_U$) systems. The bounded system range of variation covers the area between the bounding line $\beta_2 - \beta_1 - 1 = 0$ and the Pearson Type III distribution, where the ($\beta_1$, $\beta_2$) points are obtained from the distribution moments defined by Wicksell [25]:

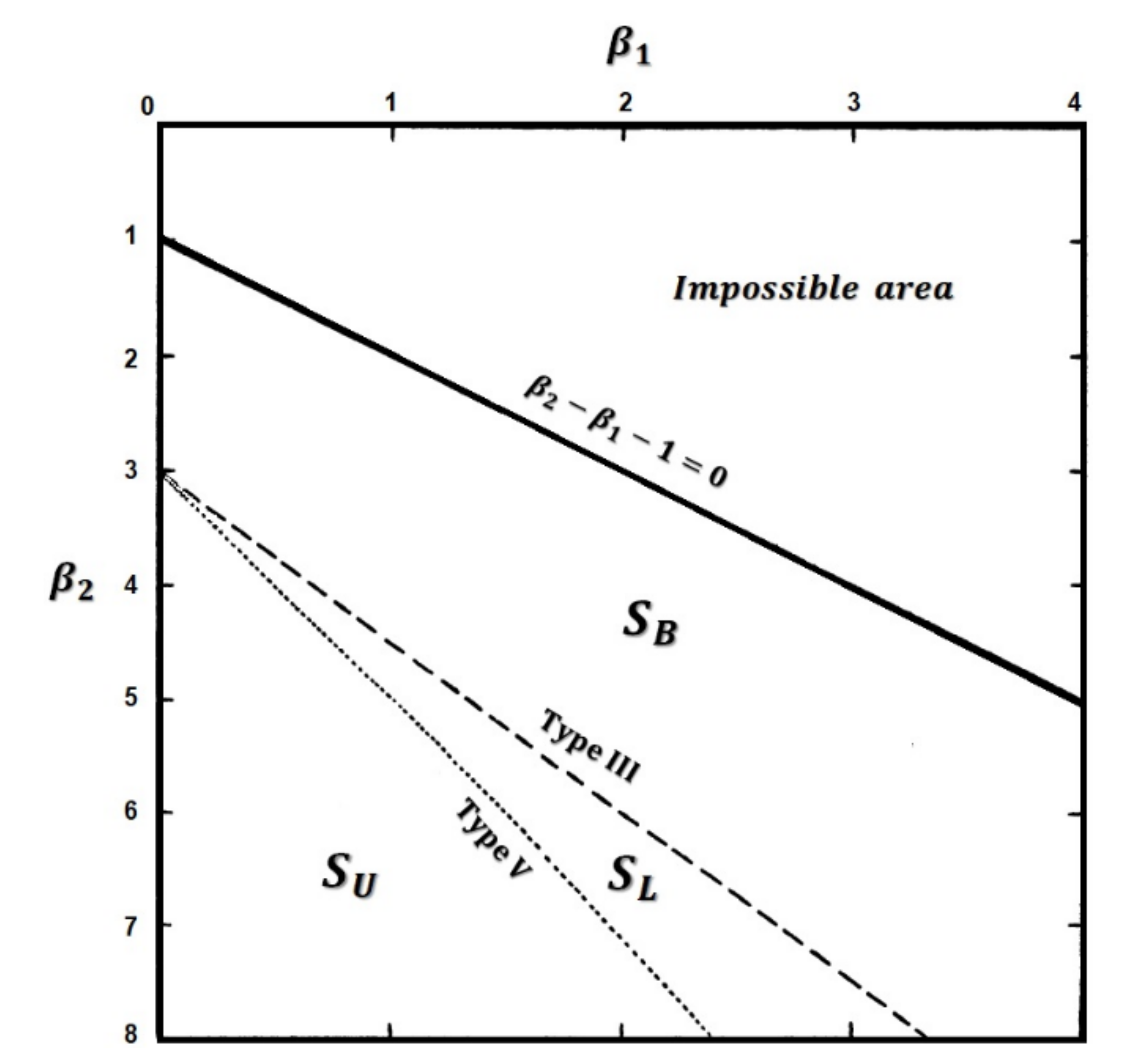

The $S_U$ system is bounded at one end only (Pearson Type V). The $S_L$ system lies between the $S_B$ and $S_U$ systems. These regions are indicated in Figure 1. The $S_U$ system is presented in detail in [24].

Estimates of

statistic Johnson $S_U$ distribution parameters for different dimensions are given in Table 1.

For statistic

, the invariance and consistency properties were checked. The invariance property is confirmed because standardized data were used. The consistency property is confirmed experimentally (see Section 5).

5. Simulation Study and Discussion

This section provides a simulation study that evaluates the power of selected multivariate normality tests. We used the Monte Carlo method to compare our proposed test with the 12 multivariate tests described above for dimensions , with sample sizes at significance level . Power was estimated by applying the tests to 1,000,000 randomly drawn samples from each alternative distribution (Beta, Cauchy, Laplace, Logistic, Student, Standard normal, Chi-Square, Gamma, Gumbel, Lognormal, Weibull, Mixed, Normal mixture).
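The Monte Carlo power estimate described here amounts to counting rejections over repeated samples from an alternative distribution. The following sketch illustrates the idea with far fewer replications than the study uses and with Mardia's kurtosis test standing in for any of the compared tests. The helper names (`estimate_power`, `mardia_kurtosis_p_value`, the sampler functions), the sample size, the dimension and the replication count are all assumptions made for this example.

```python
import numpy as np
from scipy.stats import norm

def mardia_kurtosis_p_value(X):
    """Two-sided p-value of Mardia's kurtosis test (stand-in test for this sketch)."""
    n, p = X.shape
    Xc = X - X.mean(axis=0)
    S = Xc.T @ Xc / n
    d2 = np.einsum("ij,ij->i", Xc @ np.linalg.inv(S), Xc)
    z = (np.mean(d2 ** 2) - p * (p + 2)) / np.sqrt(8.0 * p * (p + 2) / n)
    return 2.0 * norm.sf(abs(z))

def estimate_power(sampler, test_p_value, n, p, alpha=0.05, reps=500, seed=0):
    """Share of samples for which the test rejects normality at level alpha."""
    rng = np.random.default_rng(seed)
    rejections = 0
    for _ in range(reps):
        X = sampler(rng, n, p)
        if test_p_value(X) < alpha:
            rejections += 1
    return rejections / reps

def laplace_sampler(rng, n, p):
    return rng.laplace(size=(n, p))        # symmetric, heavy-tailed alternative

def normal_sampler(rng, n, p):
    return rng.normal(size=(n, p))         # null distribution, gives the empirical size

power_laplace = estimate_power(laplace_sampler, mardia_kurtosis_p_value, n=100, p=2)
size_normal = estimate_power(normal_sampler, mardia_kurtosis_p_value, n=100, p=2)
print(power_laplace, size_normal)
```

Running the same loop under the null distribution gives the empirical size of the test, which is a useful check that the nominal significance level is respected before powers are compared.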

The values of the test smoothness parameter () were selected experimentally: from 0.1 to 5 with a step of 0.1. The value of the test parameter was determined for each dimension considered. It was found that the best results are obtained (i.e., maximum statistical value) for with , for with , and for with . These smoothness parameter values were used to carry out the numerical experiments.

The power of 13 multivariate goodness of fit tests (including our proposed test) was estimated for different sample sizes, distributions and mixtures. The mean power values for the groups of distributions (given in Section 4), for each test and sample size, have been computed and are presented in Table 2, Table 3, Table 4 and Table 5. The new test is the most powerful one for the groups of symmetric and mixed distributions. In the group of asymmetric distributions, the new (for

) and Roy (for

and

) tests are the most powerful ones. The new (for

and

) and Roy (for

with sample sizes

) tests are also the most powerful in the group of normal distribution mixtures. Comparing the Mardia (Mar1 and Mar2) tests, based on asymmetry and excess coefficients, it has been found that Mar1 is the most powerful only for the group of asymmetric distributions. For the group of symmetric distributions the power of this test is the lowest (compared to other tests).

In order to supplement and emphasize the results presented in Table 2, Table 3, Table 4 and Table 5, generalized line diagrams were drawn using the Trellis display [26] multivariate data visualization method. The resulting graph is shown in Figure 2, which shows that the New test is significantly more powerful than the other tests. The power of the Mar1 test is the lowest compared with the other tests.

Figure 2 indicates that the power of the tests increases as the sample size increases. As the dimension increases, the power of 8 tests (AD, CHI2, CVM, Energy, HZ, New, Mar1 and NRR) decreases, while the power of the other tests (DH, DN, LV, Mar2 and Roy) increases slightly. For small sample sizes, the most powerful tests are New, Roy and DH. For large sample sizes, the most powerful tests are New, Energy, HZ and LV.