1. Introduction

Internal Audit (IA) is a dynamic function that has evolved over the years from a means of fraud detection and compliance to encompass all elements of organisational processes, with the aim of obtaining a level of assurance as to the proper functioning of all entities within an organisation. With the added focus on risk management (RM), internal auditors now plan and establish internal control systems to enhance all processes, including organisational governance systems. Functional, effective IA systems add value to institutions. In fact, Phornlaphatrachakorn’s (2020) study identified a positive relationship between internal audit effectiveness (IAE) and superior financial reporting, both of which contribute positively to organisational success.

Cohen and Sayag (2010) took an interesting approach to the definition of internal audit, describing its aim as “to improve organisational efficiency and effectiveness through constructive criticism.” The modern definition of internal audit, appearing in most IA-related literature, is as follows:

“Internal auditing is an independent, objective assurance and consulting activity designed to add value and improve an organisation’s operations. It helps an organisation accomplish its objectives by bringing a systematic, disciplined approach to evaluate and improve the effectiveness of risk management, control, and governance processes.”

The rise of large corporate scandals and the disasters they left behind has induced a surge in the awareness of good corporate governance in recent years, emphasised through regulatory reforms such as the Sarbanes-Oxley Act in 2002. The internal audit function fits into this system as an integral piece of the ‘governance mosaic,’ providing assurance of internal controls, risk management and compliance (Soh and Martinov-Bennie 2011). This ties back to the increased value-adding purpose of the function, with Arena and Azzone (2009) reiterating the points above and further adding the benefits an IA team would provide to external auditors (EA) and the audit committee (AC).

There is a wealth of literature further detailing the relevance of the IA function. However, little exists on the characteristics that make IA practices effective. Cohen and Sayag (2010) point out that IAE is “an important concept rarely examined in scientific literature.” Turetken et al. (2019) and Karagiorgos et al. (2011) explain that although we have a solid grasp of the importance of the IA process, there is still significant difficulty in measuring its effectiveness and assigning a metric to the benefits it creates, including its alignment with the organisation’s aims of sustainable, long-term success. The research emphasises the necessity for “accurate, realistic and simple indicators that would help organisations to measure the effectiveness of the IAs”.

The study is of noteworthy significance for IA teams, as the specific characteristics that improve IA processes will be identified through a convergent, parallel mixed-methods approach, making use of the strengths of both quantitative and qualitative methods to provide comprehensive, well-rounded research (Creswell and Clark 2011). If the drivers of effective IA practices are identified and the unrelated factors are removed, modern IA teams may focus on the influential characteristics to improve their own unit, enhancing their service and further aligning with the entity’s goals of organisational success. The reasonable amount of previous literature provides evidence of the viability of the research; however, each study seems to be lacking in one form or another. Arena and Azzone (2009) explain that “unfortunately, there is still a lack of knowledge about the organisational dimensions a company should act on and how it should modify them in order to improve the ‘added value’ of IA.” It is for that reason that the Authors intend to use the previous literature as a foundation for the new study, adding to these works and evaluating them from a fresh perspective. In this case, self-completed surveys were deemed preferable to interviews, as they are easier to distribute and may provide a substantially higher response rate than physical or virtual interviews. Moreover, interviews provide mainly qualitative data, and the study aims for a mixed-methods approach which also gathers quantitative data, as there seems to be a lack of similar studies in the field.

There have been significant advancements regarding the IA function in recent years, mainly over the last two decades. In Malta, this was observed in Attard (2014), whose research related to IA barriers and who performed a study parallel to that of Farrugia (2006). Semi-structured interviews were carried out with Maltese listed firms, and it was noted that IA had gained significant importance since 2006; the findings demonstrated an overall increase in IA effectiveness since then. These studies were, however, limited to Maltese listed firms, of which only 11 were interviewed in 2014.

On the other hand, this may provide a reasonable baseline for several expectations for this study. In Kosovo, IA has its roots mainly in the public sector, dating back to 2000, where it was a centralised function aimed at auditing all public institutions in the country. Its principal target was to identify the possible misuse of public funds, ensuring they were “economically, effectively and efficiently used” (Rudhani et al. 2017).

The research undertaken involved developing various factors or metrics which would quantify IA’s effectiveness, and furthermore identifying characteristics of the IA process which would influence IAE. The aim of the Authors with this study is to determine factors that impact IA effectiveness and address specific objectives related to such. The two specific objectives are (1) to determine the factors that impact internal audit effectiveness based on the prior literature; and (2) to confirm these identified factors in the context of internal audit effectiveness.

This will be tackled by isolating the objective into more manageable goals, making it simpler to visualise and achieve. These would take the form of the following research questions:

Research Question 1: What are the factors/latent constructs deemed to influence internal audit effectiveness?

Following the theoretical framework proposed by Yin (2002) and Stake (1995) and scanning the literature above using the thematic approach suggested by Braun and Clarke (2006), the propositions shown in Table 1 were determined and used as characteristics/latent constructs:

However, one must maintain an open mind to allow for any new variables that were not identified through our literature review, and for any new underlying constructs which may be identified through the literature or other preliminary analysis.

Research Question 2: What variable/s may be used as measures of effectiveness? (Dependent variable)

Research Question 3: Which variables/constructs have any statistically significant relationship with internal audit effectiveness?

The methodology section will elaborate on the objectives and how they are to be tackled in a structured approach.

The secondary objectives are the following:

To explore perceptions and viewpoints of IA professionals, departments and possibly individuals on IAE.

To provide general recommendations and suggestions based on the IAE findings in the research.

Figure 1 below provides an outline of the conceptual framework and structure of the statistical analysis to be carried out. As evidenced, the graphic is purposely divided into four distinct sections. First are the raw variables to be tested in the questionnaire, such as years of experience. These then form a latent construct, which is a grouping of variables forming a factor, a characteristic that exists but cannot be directly observed, only inferred through the other variables. These latent constructs are then analysed for any statistical relationship with the dependent variable, being IAE, which then has its own variables to be tested to quantify effectiveness, noted as the measures of effectiveness. The latent variables represent the hypotheses formed, comprising the effectiveness factors listed in the objectives. The variables, latent construct and measure are considered the influencing factors. The variables are the formative constructs, and the measures are the reflective constructs.

3. Methodology

A distinction must first be made between the terms ‘effective’ and ‘efficient’, as they occur commonly in many readings and may be misused. Arena and Azzone (2009) described the former as the “capacity to obtain results that are consistent with targets”, while Chambers explained it as “doing the right thing,” in comparison to the latter being “doing it well” (Chambers 1993, as cited in Turetken et al. 2019). Bednarek (2018) stated that although both are fundamental, efficiency is irrelevant if the IA process is ineffective, rendering it ‘useless’.

The first step of the research design in the preliminary stages involved developing the problem to be tackled. In the second stage, the objectives and research questions were formulated accordingly, in line with the problem in the previous step. The following step made use of the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) method (Page et al. 2021).

This involved identifying databases and registers and scanning them for specific search strings to arrive at the best possible set of results with the most relevant articles. The PRISMA flow diagram (Figure 2) was followed accordingly, in order to carry out the most comprehensive systematic review of the literature possible.

After conducting pilot searches, it was clear that the most common strings of text among all titles were ‘effectiveness’ and ‘internal audit’. When developing the advanced search criteria within the Hydi platform, Google Scholar, ResearchGate and Emerald Insight, both strings were required to be present in the title, in no specific order. This decreased the likelihood of being presented with articles relating solely to internal audit, or to effectiveness in any other realm of research, while also returning titles not strictly written as “internal audit effectiveness,” such as “effectiveness of internal audit.” Hence, the following statement was used for electronic libraries:

(“effectiveness”) AND (“internal audit”).

Following Turetken et al. (2019), and because some studies use ‘quality’ and ‘effectiveness’ interchangeably, the same search was carried out with the new string as follows:

(“quality”) AND (“internal audit”)
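For illustration only, the title-based search logic described above may be sketched in Python. The function names and sample titles are hypothetical and are not taken from any of the platforms used; the sketch assumes a simple case-insensitive phrase match on the title, which may differ from how each database implements its advanced search.

```python
def title_matches(title: str, required_phrases: tuple) -> bool:
    """Return True when every required phrase occurs in the title, in any order."""
    lowered = title.lower()
    return all(phrase.lower() in lowered for phrase in required_phrases)


# The two search statements quoted in the text.
EFFECTIVENESS_QUERY = ("effectiveness", "internal audit")
QUALITY_QUERY = ("quality", "internal audit")


def select_titles(titles: list) -> list:
    """Keep titles satisfying either statement, dropping exact duplicates."""
    seen = set()
    kept = []
    for title in titles:
        key = title.strip().lower()
        if key in seen:
            continue  # duplicate record returned by another database
        if title_matches(title, EFFECTIVENESS_QUERY) or title_matches(title, QUALITY_QUERY):
            seen.add(key)
            kept.append(title)
    return kept


# Hypothetical titles, used only to exercise the filter.
sample_titles = [
    "Internal audit effectiveness in the public sector",
    "The effectiveness of internal audit: a survey",
    "Effectiveness of marketing campaigns",
    "Internal audit quality and earnings management",
    "The effectiveness of internal audit: a survey",
]
selected = select_titles(sample_titles)
```

Requiring both phrases without fixing their order is what allows a title such as “effectiveness of internal audit” to be returned alongside “internal audit effectiveness.”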

Utilising these search criteria and removing duplicates reduced the records identified significantly, from 4422 to 141, as portrayed in Figure 2. Further exclusion and inclusion criteria, also adapted from Turetken et al. (2019), were then applied to these results. These were the following:

Inclusion Criteria:

- (1) Publications must be in the English language.

- (2) Research must be published after the year 2000.

- (3) Publications must relate to internal audit effectiveness and must include qualitative or quantitative metrics relating to factors influencing IA effectiveness.

Exclusion Criteria:

- (1) Publications analysing IA ‘efficiency’ rather than ‘effectiveness’.

- (2) Papers without bibliographic information, working papers and white papers.

- (3) Studies not specifically relating to internal audit effectiveness.

These measures reduced the results to 45, which were screened individually. Of those publications, 7 were not retrieved, as the studies were not available for viewing and the publishers did not grant permission to view them or send a copy. The remaining 38 articles were reduced to the final 33 through more detailed scanning of the literature, removing 5 articles which were still deemed out of scope. Each of the final publications was then thoroughly analysed for its themes, hypotheses and findings, specifically the causal relationship, if any, that was found between the tested factors and IAE.
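The screening counts reported above can be cross-checked with a short Python sketch. The counts are those stated in the text; the stage labels are paraphrased and the helper names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Stage:
    label: str
    remaining: int


# Counts as reported in the text; labels are paraphrased for readability.
stages = [
    Stage("records identified", 4422),
    Stage("after title search criteria and removal of duplicates", 141),
    Stage("after inclusion and exclusion criteria", 45),
    Stage("after 7 reports could not be retrieved", 45 - 7),
    Stage("after 5 out-of-scope articles were removed", 45 - 7 - 5),
]


def removed_per_stage(flow: list) -> list:
    """Return (stage label, number of records removed on entering that stage)."""
    return [
        (later.label, earlier.remaining - later.remaining)
        for earlier, later in zip(flow, flow[1:])
    ]


removed = removed_per_stage(stages)
final_count = stages[-1].remaining
```

The sketch only verifies that the stated removals (7 not retrieved, 5 out of scope) reconcile the 45 screened publications with the final 33.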

The questionnaire was devised using a deductive, thematic approach as explained by Braun and Clarke (2006) and sent out to internal audit professionals, making use of structural equation modelling (SEM) to validate the framework. This involves obtaining underlying variables for each construct to be tested, to reduce elements of error and increase reliability. However, complexity increases significantly when there are many different variables and factors which all have certain relationships with each other, which is why the use of SEM is so crucial in confirming the framework. A measurement framework is also required for IAE itself, as explained previously. This involves assigning variables such as perceived audit effectiveness or recommendation implementation rates to measure IAE, in order to allow the analysis of the causal relationship between an increase in one hypothesised factor and IAE. Some questions were subjective, while others were objective and without bias. For example, IA size is objective, whereas perceived competency is subjective in nature. In our methodology, we preferred covariance-based SEM to variance-based SEM, since we were interested in the measure of the directional relationship between two random variables (covariance) and not the spread of a data set around its mean value (variance) (Fan et al. 2016).

In order to identify whether the factors being tested hold true to the research, hypotheses were formed as displayed in Table 4, relating directly to the factors mentioned previously.

It was decided that questionnaires would be utilised for this research in order to obtain standardised replies. The majority of questions are statements relating to the various IA factors, to which the respondent may select a reply on a 5-point Likert scale indicating their level of agreement, ranging from “Strongly disagree” to “Strongly agree.” This results in quantitative data ranging from 1 to 5, as required for the specific analysis described above.
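A minimal sketch of how such replies might be coded numerically is given below. Only the two end-point labels are stated in the text; the three intermediate labels and the function name are assumptions made for illustration.

```python
# Only the end points are given in the text; the middle labels are assumed.
LIKERT_CODES = {
    "strongly disagree": 1,
    "disagree": 2,   # assumed label
    "neutral": 3,    # assumed label
    "agree": 4,      # assumed label
    "strongly agree": 5,
}


def code_response(label: str) -> int:
    """Map a Likert label to its 1-5 code, ignoring case and extra spaces."""
    key = " ".join(label.split()).lower()
    if key not in LIKERT_CODES:
        raise ValueError(f"Unrecognised Likert label: {label!r}")
    return LIKERT_CODES[key]


coded = [code_response(x) for x in ["Strongly disagree", "Agree", "Strongly Agree"]]
```

Normalising case and whitespace before the lookup means that “Strongly Agree” and “Strongly agree” receive the same code.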

To achieve the mixed-methods approach, a qualitative element is necessary within the research. This was carried out through an open-ended field within the survey itself, allowing the respondents to provide further feedback, bring forward alternative ideas or explain their rationale. This all-encompassing mixed-methods format aims to reap both the benefits of the structured, quantitative data analysis formerly mentioned and the qualitative data gathering similar to that of an interview.

Table 5 outlines the structure of the survey developed, divided into 5 distinct areas. Section 1 provides a brief introduction to the study at hand, what the Authors aim to achieve, GDPR guidelines and contact information, as standard procedure. In Section 2, the questions begin with the main demographics and general details such as gender, level of education and team size. Section 3 provides a table of statements allowing responses within 5 points as explained above. Table 3 lists each factor/hypothesis expected to be tested by that question. It is important to note that the EFA carried out on the findings may adjust or negate these factors and create its own latent constructs, with different assortments of questions linking to different factors or to entirely new ones. Section 4 assesses the measures of IAE, which will be used to quantify effectiveness. The final section leaves an open-ended question for the qualitative portion, accepting any form of feedback.

EFA is used to determine whether the factor structure underlying the data is sufficient for a hypothesis and to determine the number of factors. EFA is often thought of as a theory-generating technique rather than a theory-testing one (Stevens 2002). In this respect, EFA is used to generate hypotheses rather than to verify the information produced (Stapleton 1997).

CFA is a theory-testing model rather than a theory-generating model (Henson and Roberts 2006; Stevens 2002). In confirmatory factor analysis, the researcher establishes a hypothesis before the analysis. This hypothesis or model states which variables are related to which dimensions and which dimensions are related to each other. Thus, the model is based on a theoretical or experimental basis (Stevens 2002). CFA is a very broad technique used at a more advanced level to test theories about latent variables (Tabachnick and Fidell 2014). CFA is used to determine whether there is concordance between the factors determined in the exploratory factor analysis and the factors put forward theoretically. In other words, CFA tests whether there is compatibility between the variables that play a role in determining the theoretical factors and the original variables that make up the factors determined by exploratory factor analysis (Özdamar 2010; Brown 2015). In this context, CFA is used to evaluate the construct validity of the constructs obtained by EFA (Kline 2005; Stapleton 1997).

We applied the methodology used by Schmitt (2011), who applied CFA to the same data to confirm the structure obtained by first applying EFA. Although it seems reasonable, in terms of achieving theoretical robustness, to apply exploratory factor analysis first and then test the resulting model with confirmatory factor analysis, there is debate about how this process should be carried out. The controversy becomes especially evident when developing a new test, when there is no empirical information about the dimensions of the test and which items constitute these dimensions. In such cases, although a structure is initially reached with EFA, whether CFA can be applied to test this structure remains an important question.

4. Results

4.1. Data Gathered

A total of 402 final responses were gathered from circa 1500 requests to fill in the survey. The requests were at first administered using a non-probability purposive sampling technique directed towards the authors’ IA contacts found on LinkedIn, who were provided with an online link built on the Qualtrics XM application software. In turn, these contacts were asked to send the survey to their IA colleagues (i.e., non-probability snowball sampling). However, although the sampling method used was non-probability in the sense that focus was placed on IAs who were connected to the authors on LinkedIn, there was still some form of probability sampling since it was not known who the respondents were. All that was known is that they were IAs. Around 765 questionnaires were initiated; the difference is made up of responses which remained unfinished for over a two-week period, resulting in the specific uniquely identifiable response becoming invalidated. From a geographical perspective, no limitations were placed on the location of the respondent or the jurisdiction within which they carry out their IA activities. This decision was taken in order to encompass opinions from all possible geographical backgrounds and to maximise possible responses, arriving at a more accurate statistical analysis. This was possible through the sample size gathered, which may statistically cover any population size with a 95% confidence level and 5% margin of error, given that the threshold required would be 384 responses. Since, as noted above, we felt that there was an element of probability in our sample and we had no idea of the population, we used a sample size calculator to determine the optimal sample size. The 384 sample size figure was obtained using the formula $n = z^2 \cdot (p \cdot q) / d^2$, which is used to calculate the sample size of a qualitative variable in prevalence or cross-sectional studies of an unknown population size. Here, n is the sample size, p is the estimated proportion of the study variable or construct based on previous studies or pilot studies (50%), q = 1 − p (50%), and d is the margin of error (5%). z is the Z-score, or standard normal deviate, corresponding to a 5% type 1 error (p < 0.05), which is 1.96. Therefore, $n = [1.96^2 \times 0.50 \times (1 - 0.50)] / 0.05^2 \approx 384$ (Charan and Biswas 2013). Moreover, we were confident that we reached qualitative saturation with our sample, since it also follows the rule of thumb suggested by Sekaran (2003): “Sample sizes larger than 30 and less than 500 are appropriate for most research” and “in multivariate research (including multiple regression analyses), the sample size should be several times (preferably between 4 and 10 times or more) as large as the number of variables in the study”.
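The sample size calculation above can be reproduced with a short Python sketch. The function name is hypothetical; the inputs (z = 1.96, p = 0.50, d = 0.05) and the 402 valid responses are those stated in the text. The result is rounded to the nearest whole number to match the reported figure of 384; a stricter convention of rounding up would give 385.

```python
def sample_size_unknown_population(z: float, p: float, d: float) -> float:
    """Return n = z^2 * p * q / d^2 with q = 1 - p, before rounding."""
    q = 1.0 - p
    return (z ** 2) * p * q / (d ** 2)


# Inputs as stated in the text: 95% confidence, 50% proportion, 5% margin of error.
n_exact = sample_size_unknown_population(z=1.96, p=0.50, d=0.05)
n_threshold = round(n_exact)

# Valid responses reported in the text.
valid_responses = 402
threshold_met = valid_responses >= n_threshold
```

Setting p to 0.50 maximises the product p · q, so this choice yields the most conservative (largest) required sample for a given margin of error.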

For the exploratory factor analysis to be carried out, it is generally recommended to have 5–10 responses per question or an arbitrary amount such as 100 or 200 to arrive at a successful analysis, although there are more complex methods to arrive at a specific sample size (Hair et al. 2010; Fabrigar et al. 1999). This research has gathered just over 10 responses per question (assuming 40 total questions from the statements). Although Child (2006) warned against collecting samples from many diverse populations for EFA, it should be noted that the factors being analysed are of a universal nature, and any variance within the responses which is clearly influenced by location or jurisdiction is simply information which may further support the research’s endeavours.

As an explanatory example, an understaffed IA department should result in lower effectiveness regardless of the jurisdiction in which it operates. However, the finding that certain jurisdictions tend to operate with understaffed departments is certainly a fruitful secondary discovery.

The sample of valid responses came mainly from Europe (77%), with the largest portion from the United Kingdom (19%), then Malta (14%), Turkey (13%), Italy (6%), Latvia (5%) and other EU countries (20%). A further 13% came from the United States, 3% from Asia, 3% from Australia and the rest from Africa. Of the respondents, 66% were male and 32% female. Their experience varied, with the largest portion having 0 to 5 years of experience (31%), 26% having 6 to 10 years, 21% having 10 to 15 years and the rest (22%) having 15 to 30+ years. The level of education of respondents also varied, with the largest portion holding a master’s degree (72%), 22% holding a bachelor’s degree and 6% holding a doctorate. Most worked in large companies with over 500 employees (65%), with the second-most common being medium-sized entities with 201–500 employees (15%) and the remainder employed by companies staffing 200 people or fewer.

4.2. Data Classification

As previously described, the questionnaire was split into four distinct sections, being the demographics, the statements to be tested, the measures of effectiveness and finally the qualitative comments section. For data analysis purposes and further reference, these will be classified according to Table 6, while maintaining the same numbering as Table 4.

4.3. Exploratory Factor Analysis

The data gathered was inputted into IBM® SPSS® version 26 to perform EFA, in order to reduce the variables tested (S1–S40) into latent factors. The Kaiser-Meyer-Olkin (KMO) test to measure sampling adequacy arrived at a value of 0.902, with a significance of 0.000 under Bartlett’s Test of Sphericity. The former falls within the marvellous range (above 0.9), highlighting the suitability of the data for EFA (Kaiser 1975). The latter, being less than 0.05, rejects the null hypothesis that the correlation matrix is an identity matrix, indicating that the variables are indeed related and prime candidates for EFA (Dodge 2008).

The procedure loaded 7 factors from 35 statements, omitting 5 of the 40 initial statements. Table 7 lists the excluded statements, discarded either due to explaining little variance, being explained by other variables, being loaded with little correlation onto too many factors (cross-loading), being loaded onto no factors with significance, or being loaded onto factors with too few variables (one or two), resulting in their unreliability (Tabachnick and Fidell 2007).

Principal component analysis was the preferred method to reduce the statements into their latent components, carried out on the remaining 35 variables. From the 35 components which emerged, 7 were valid for selection, meeting the criteria of having eigenvalues greater than Kaiser’s criterion of 1 and covering 60.15% of the variance. This threshold for factor retention is perhaps the most widely adopted, although it is often criticised for its oversimplification and tendency to retain more factors than are truly valid, often warranting the use of Horn’s (1965) Parallel Analysis (PA) or Cattell’s Scree plot graphical interpretation test (Fabrigar et al. 1999; Cattell and Vogelmann 1977). On the other hand, it is generally required that the factors explain a minimum of 60% of the cumulative variance, slightly disregarding the arbitrary eigenvalue threshold of 1 (Hair et al. 2010). This is further emphasised in Hooper’s (2012) paper, which explains that stringent methods such as PA or the Scree test might result in under-factoring, where the variables are reduced into too few factors, which she strongly advises against. It is deemed much safer to load more factors than needed, as under-factoring may result in common factors combining and “obfuscating the true factor structure” (Hooper 2012). Therefore, although these methods may be omitted, the 60% threshold was met with factors having eigenvalues greater than 1, as depicted in Table 8 below.

Figure 3 provides a Scree Plot depicting the eigenvalues of each factor accordingly. Although the Scree Test would aim to identify the ‘breaking point’ or ‘elbow’ as the cut-off for factor selection, it may be observed that the seven factors already chosen are suitable for loading.
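The two retention rules discussed above (eigenvalues greater than 1 and a cumulative explained variance of at least 60%) may be sketched as follows. The eigenvalues below are hypothetical and are not the values reported in Table 8; the function name is likewise an assumption. The sketch relies on the fact that, for a principal component analysis of a correlation matrix, the eigenvalues sum to the number of variables.

```python
def kaiser_retention(eigenvalues: list) -> tuple:
    """Return (count of eigenvalues > 1, cumulative % of variance they explain)."""
    total = sum(eigenvalues)  # equals the number of variables for a correlation matrix
    retained = [ev for ev in eigenvalues if ev > 1.0]
    cumulative_pct = 100.0 * sum(retained) / total
    return len(retained), cumulative_pct


# Hypothetical eigenvalues for six variables (NOT the study's Table 8 values).
example_eigenvalues = [2.7, 1.5, 0.8, 0.5, 0.3, 0.2]
n_retained, pct_explained = kaiser_retention(example_eigenvalues)
meets_60_pct_rule = pct_explained >= 60.0
```

In the study itself, the same two checks correspond to the 7 retained components and the 60.15% of variance reported above.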

4.4. Factor Rotation Results

Promax rotation with Kaiser normalisation was the preferred method alongside principal components analysis, displayed in Table 10. This form of oblique rotation allows for correlation between factors, which is a more realistic solution than orthogonal rotations; however, interpreting the results of an oblique rotation is often more complex and tedious. Although Hetzel (1996) states that, where possible, an orthogonal solution should be utilised, Tabachnick and Fidell (2007) state that one should first request an oblique rotation and analyse the component correlation matrix for any values surpassing 0.32, which would warrant retaining the oblique rotation. This was indeed the case, as various components were observed to have correlations over this threshold, as evidenced in Table 9 below.
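The decision rule attributed to Tabachnick and Fidell (2007) can be illustrated with a brief Python sketch. The correlation matrix below is hypothetical and does not reproduce Table 9; the function names are assumptions, and the sketch assumes that the threshold of 0.32 is applied to the absolute value of the off-diagonal correlations.

```python
def max_offdiagonal(corr: list) -> float:
    """Largest absolute correlation between two different components."""
    n = len(corr)
    return max(abs(corr[i][j]) for i in range(n) for j in range(i + 1, n))


def oblique_rotation_warranted(corr: list, threshold: float = 0.32) -> bool:
    """True when at least one component correlation exceeds the threshold."""
    return max_offdiagonal(corr) > threshold


# Hypothetical component correlation matrix (NOT the values in Table 9).
example_corr = [
    [1.00, 0.41, 0.12],
    [0.41, 1.00, 0.28],
    [0.12, 0.28, 1.00],
]
use_oblique = oblique_rotation_warranted(example_corr)
```

Only the upper triangle is inspected, since a correlation matrix is symmetric and its diagonal is always 1.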

Thurstone (1947) proposed five criteria that make up a ‘simple structure,’ which, according to Gorsuch (1983), would make the researcher indifferent to the choice of rotation as they would “lead to the same interpretations.” Various researchers view these criteria strictly, while others, such as Kline (2002), state that the most crucial criterion is that each factor has a few variables with high loadings and the rest with zero or close to zero loadings. Kim and Mueller (1978) explained that as long as variables are placed into “theoretically meaningful subdimensions,” any popular rotation method will achieve the same results.

The constraints placed on the software were as follows:

- (1) Five variables were initially removed,

- (2) The dimensions were reduced to seven factors,

- (3) Principal Components Analysis extraction was selected,

- (4) Promax rotation was selected,

- (5) Factor loading was suppressed to >0.4, removing any loadings lower than 0.4 from the table for simpler analysis since they are not significant.

The Pattern Matrix outputted in the originally requested Promax rotation in Table 10 provides a clear indication that it follows a ‘simple structure,’ with all factors comprising 3 or more variables each, no significant cross-loadings between factors and each variable having one significant loading on one factor, with zero loading on the rest. This further confirms the choice of Promax rotation, where the correlation between components requires an oblique method while the simple structure should make us indifferent in other respects, leaving no reason to necessitate orthogonal rotations.
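The checks described above may be sketched in Python as follows. This is a simplified illustration, assuming a pattern matrix stored as a nested dictionary; it tests only the three properties named in the text (at least 3 variables per factor, no cross-loadings, and exactly one salient loading per variable) rather than Thurstone’s full set of criteria. The loadings are hypothetical and do not reproduce Table 10.

```python
SALIENT = 0.4  # suppression cut-off stated in the text


def suppress_small_loadings(pattern: dict, cutoff: float = SALIENT) -> dict:
    """Drop loadings whose absolute value is below the cut-off."""
    return {
        var: {f: v for f, v in row.items() if abs(v) >= cutoff}
        for var, row in pattern.items()
    }


def follows_simple_structure(pattern: dict, cutoff: float = SALIENT,
                             min_vars_per_factor: int = 3) -> bool:
    """Check one salient loading per variable and enough variables per factor."""
    visible = suppress_small_loadings(pattern, cutoff)
    if any(len(row) != 1 for row in visible.values()):
        return False  # a variable cross-loads or loads on nothing
    counts = {}
    for row in visible.values():
        factor = next(iter(row))
        counts[factor] = counts.get(factor, 0) + 1
    factors = {f for row in pattern.values() for f in row}
    return all(counts.get(f, 0) >= min_vars_per_factor for f in factors)


# Hypothetical loadings for six variables on two factors (NOT Table 10).
example_pattern = {
    "V1": {"F1": 0.78, "F2": 0.05},
    "V2": {"F1": 0.71, "F2": -0.10},
    "V3": {"F1": 0.66, "F2": 0.21},
    "V4": {"F1": 0.03, "F2": 0.81},
    "V5": {"F1": 0.12, "F2": 0.69},
    "V6": {"F1": -0.08, "F2": 0.58},
}
is_simple = follows_simple_structure(example_pattern)
```

Suppressing loadings below 0.4 before the check mirrors the fifth constraint placed on the software, so that only salient loadings are considered when judging cross-loading.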

Reliability tests were carried out on all factors to ensure the reliability and consistency of the factors in relation to what was being measured. All factors surpassed the Cronbach’s Alpha threshold of >0.7 to ensure high reliability and consistency, aside from factor F7, which was described as having acceptable reliability, most likely due to it consisting of the fewest variables, yet still surpassing the >0.5 threshold (Nunnally 1978). These are listed in Table 11 below, utilising the descriptors laid out by Taber (2018).

Taber (2018) notes that alpha values are to be described as excellent (0.93–0.94), strong (0.91–0.93), reliable (0.84–0.90), robust (0.81), fairly high (0.76–0.95), high (0.73–0.95), good (0.71–0.91), relatively high (0.70–0.77), slightly low (0.68), reasonable (0.67–0.87), adequate (0.64–0.85), moderate (0.61–0.65), satisfactory (0.58–0.97), acceptable (0.45–0.98), sufficient (0.45–0.96), not satisfactory (0.4–0.55) and low (0.11). Tests are, however, considered robust if, when any two or three items are removed from the test, the Cronbach’s alpha reliability does not drop below the acceptable value of 0.70. A critical alpha value of 0.70 thus offers evidence of the reliability/internal consistency of an instrument across different contexts, modes, and levels of representation.
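For reference, Cronbach’s alpha for a factor with k items is computed as k / (k − 1) multiplied by one minus the ratio of the sum of the item variances to the variance of the respondents’ total scores. A minimal Python sketch is given below; the function name and the small data set are hypothetical and serve only to illustrate the calculation, which in the study was performed in SPSS.

```python
from statistics import variance


def cronbach_alpha(items: list) -> float:
    """Cronbach's alpha; items[i] holds every respondent's score on item i."""
    k = len(items)
    if k < 2:
        raise ValueError("At least two items are required.")
    n_respondents = len(items[0])
    if any(len(item) != n_respondents for item in items):
        raise ValueError("Every item needs one score per respondent.")
    # Total score of each respondent across all items in the factor.
    totals = [sum(item[r] for item in items) for r in range(n_respondents)]
    item_variance_sum = sum(variance(item) for item in items)
    return (k / (k - 1)) * (1 - item_variance_sum / variance(totals))


# Hypothetical 5-point responses: three items, five respondents.
example_items = [
    [1, 2, 3, 4, 5],
    [2, 2, 3, 4, 5],
    [1, 3, 3, 5, 4],
]
alpha = cronbach_alpha(example_items)
passes_threshold = alpha > 0.7
```

Because alpha depends on the number of items k, a factor with few variables, such as F7, tends to produce a lower value even when its items are reasonably correlated.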

4.5. New Factor Labelling

The new factors produced are available in Table 12, listing the variables in each component, the hypothesised factors covered and the new labelling for each. Interestingly, most variables were grouped similarly to what was hypothesised, with the exception that the 16 hypothesised factors were reduced to 7 (excluding the factors tested through the demographics such as IA size, insourced/co-sourced/outsourced and public/private organisations).

As anticipated, independence and objectivity were grouped together due to the expectation of having very similar characteristics, while F4 contains the sole factor relating to Big Data and F5 that for external audit cooperation, confirming the validity of their groupings as singular latent variables. F1 covers cooperation with the AC and the development of a follow-up process. F2 relates to the organisational environment providing appropriate resources, with an emphasis on knowledge resources, while promoting good corporate governance and incentivising communication and positive performance. F3 groups the largest number of hypothesised components, covering mainly the support from management and external audit, with a risk-based approach. F6 relates to the overall independence of the IA team and scope limitations, while the final factor F7 relates principally to the establishment of risk assessment and response measures, including adherence to ISO 19011, which has recently added risk measurements as part of the standard.

4.6. EFA Procedure on Effectiveness Measures

It was decided to perform EFA not only on the statements S1–S40, but also on the measures of effectiveness M1–M10 in order to group them into latent factors where possible and reduce any variables which explain little variance. The following matrix emerged, visible in

Table 13, after placing the following constraints:

Variable M4 (“Audit findings are solved in a timely manner”) was removed due to no significant factor loadings.

Principal Components Analysis extraction was selected.

The dimensions were reduced to 2 factors, both with eigenvalues over 1.

Direct Oblimin (oblique) rotation was selected with Kaiser normalisation.

Factor loadings below 0.4 were suppressed, removing them from the table for simpler analysis since they are not significant.

The Kaiser-Meyer-Olkin Measure of Sampling Adequacy produced a value of 0.798, surpassing the 0.7 validity threshold and interpreted as “middling”, at a significance of 0.000, accumulating a total explained variance of 1.723 from the two components selected, each with eigenvalues over 1 (

Kaiser 1975).
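The suppression and removal rules listed above can be sketched as follows. This is an illustrative example only: the loading values are hypothetical, and the use of the absolute value (so that negative loadings from an oblique rotation are treated symmetrically) is an assumption rather than something stated in the study.

```python
def suppress_small_loadings(loadings, cutoff=0.4):
    """Replace loadings whose absolute value is below the cutoff with None."""
    return {
        variable: [value if abs(value) >= cutoff else None for value in row]
        for variable, row in loadings.items()
    }


def unloaded_variables(loadings, cutoff=0.4):
    """Variables with no loading at or above the cutoff on any component."""
    return [
        variable
        for variable, row in loadings.items()
        if all(abs(value) < cutoff for value in row)
    ]


# Hypothetical pattern-matrix values for two components.
toy_loadings = {
    "M1": [0.72, 0.10],
    "M4": [0.31, 0.22],
    "M10": [-0.05, 0.81],
}
shown = suppress_small_loadings(toy_loadings)
dropped = unloaded_variables(toy_loadings)
```

In this toy matrix, the variable labelled "M4" has no loading reaching 0.4 on either component, which is the situation that led to the removal of M4 in the list of constraints.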

As expected, component 2 loaded up solely in questions relating to the hypothesised measure 10, confirming Perceived IA Effectiveness as a valid sole latent variable, therefore retaining the same label. The first component loaded the remaining measures into a singular factor, comprising objective measures, M8 relating to stakeholder satisfaction and M9 relating to added value. Although various statements still maintain a level of subjectivity within them, for the purposes of this study the more objective variables were labelled as “objective” in component 1. Despite M8 and M9 being listed under perceived measures, the specific survey questions were worded more closely with the objective measures in contrast with the Perceived IA Effectiveness questions, which involved substantial personal interpretation.

When performing reliability tests on both latent constructs, Component 1 achieved a Cronbach’s alpha score of 0.759, interpreted as “relatively high” using the previous scales (

Taber 2018). The second component achieved an “acceptable” score of 0.557, supporting the reliability of both factors.

4.7. SEM: Model Design and Development

IBM® SPSS® Amos version 21 was utilised as the SEM software of choice, allowing the model to be designed, developed, and calculated accordingly for further analysis. Due to the measures of effectiveness being divided into two latent constructs of their own, two separate models were developed to test the factors on the objective and perceived effectiveness components.

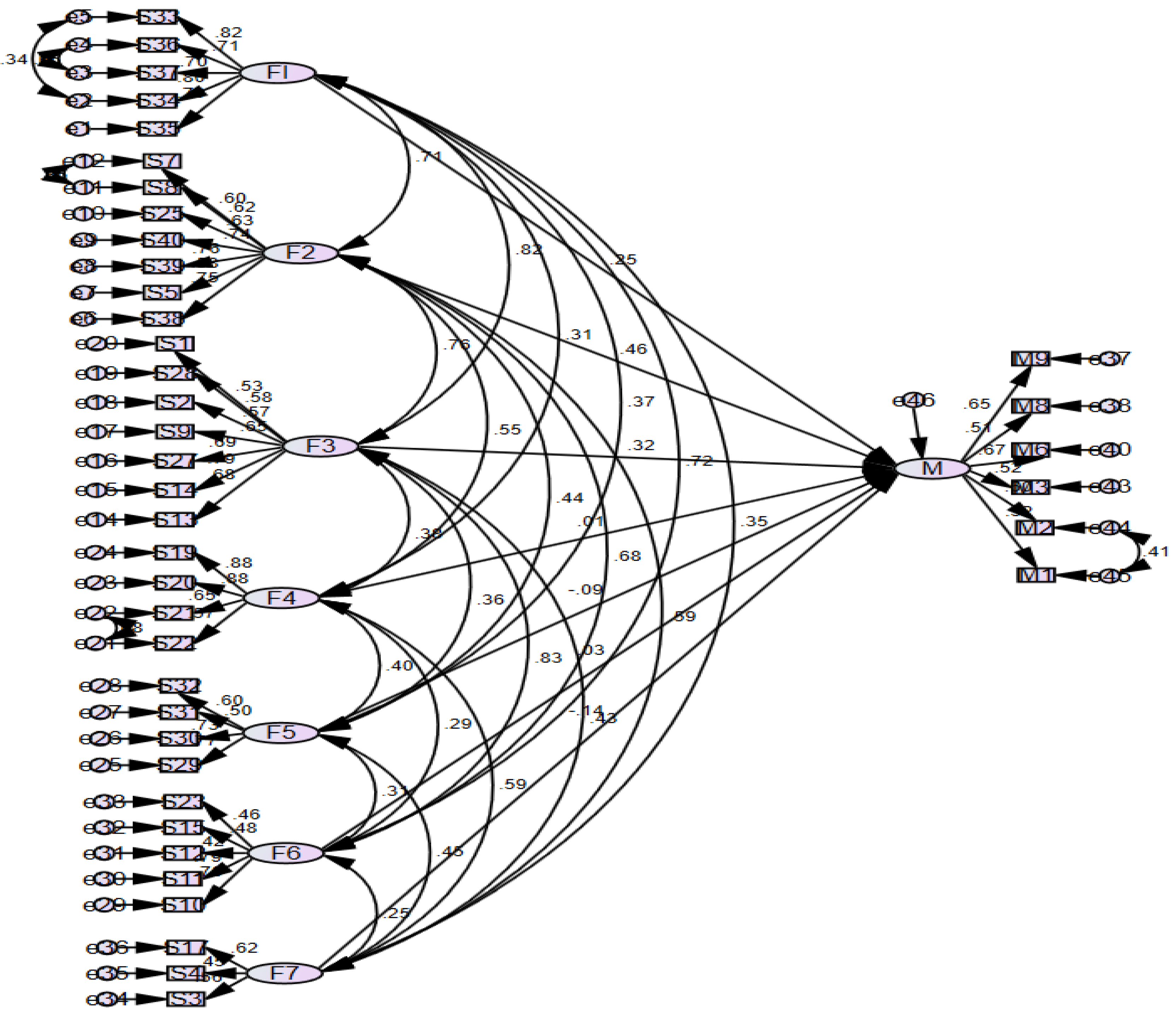

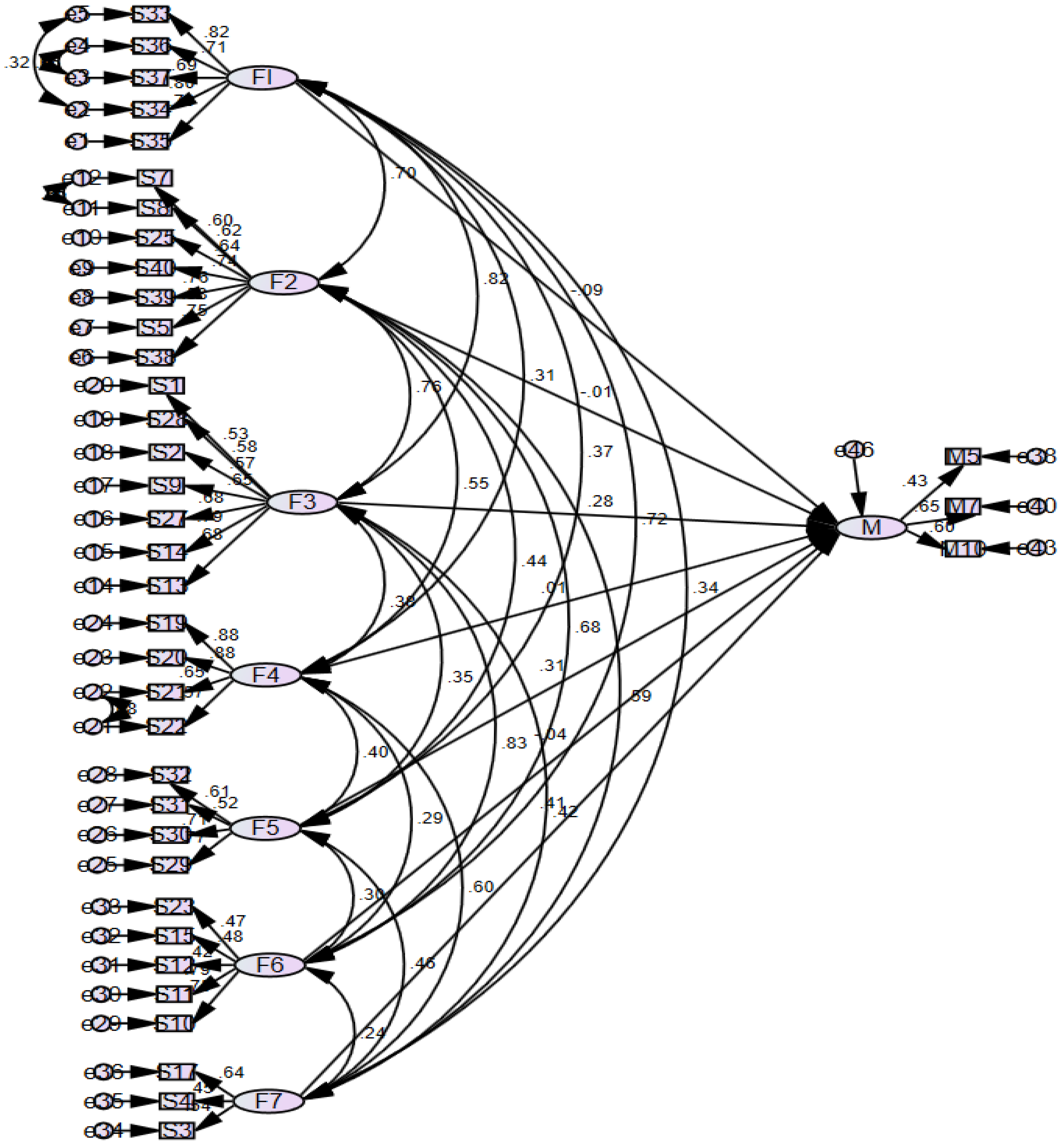

Figure 4 and

Figure 5 represent the two models, with each oval figure representing the unobserved variables or ‘factors’, the rectangular figures representing the 35 observed variables or statements and the circular figures representing the errors of measurement. All arrows represent the paths between variables, with each unobserved variable, such as the factors, being explained by its underlying observed variables; F1, for example, is explained by S33–S37, each of which has its own measurement error term.

F1–F7 are then linked through single-headed arrow paths to M, the factor measuring effectiveness, made up of a different set of measures for each model as explained previously. The components F1–F7 are also linked with each other with double-headed arrows representing the covariances allowed between each factor, maintaining consistency with the previous decision of utilising oblique rotation for EFA to allow for correlation between factors. Covariances were also drawn between error terms where the modification indices from the initial runs of the model suggested them, ensuring the overall model fit is sufficient.

4.8. Structural Equation Model 1

Figure 4 represents the first model developed, analysing the relationship of factors F1–F7 on ‘M’, comprising the variables making up the “Objective IA Effectiveness and Added Value” component.

Univariate and multivariate tests were carried out in order to determine multivariate normality, using

Mardia’s (

1970) skewness and kurtosis coefficients (Refer to

Appendix B for the assessment of normality). With 41 observed variables, the dataset was taken to satisfy the assumption of multivariate normality, since the value of 41 × (41 + 2) = 1763 given by the formula p × (p + 2) was greater than the calculated Mardia kurtosis value of 405.196 displayed at the bottom of the table in

Appendix B.
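The comparison described above is simple arithmetic and can be sketched as below. The function name `kurtosis_within_bound` is a hypothetical helper; the inputs are the figures reported in the text for the two models (41 and 38 observed variables, with Mardia kurtosis values of 405.196 and 345.142).

```python
def kurtosis_within_bound(mardia_kurtosis, n_observed):
    """Compare a Mardia kurtosis value with the p * (p + 2) bound."""
    bound = n_observed * (n_observed + 2)
    return bound, mardia_kurtosis < bound


# Model 1: 41 observed variables, reported kurtosis 405.196.
bound_1, ok_1 = kurtosis_within_bound(405.196, 41)
# Model 2: 38 observed variables, reported kurtosis 345.142.
bound_2, ok_2 = kurtosis_within_bound(345.142, 38)
```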

After drawing and developing the structure, the maximum likelihood method was utilised in order to estimate the model parameters. The regression weights of the predictor variables and the regression coefficients were then checked to identify the significance of the independent variables as predictors of effectiveness. Estimates, standard errors, and critical ratios are extracted in this calculation. The critical ratio was found by dividing the parameter estimate by the standard error, where an absolute value greater than 1.96 is statistically significant at the 0.05 significance level (

Khine 2013).
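As a brief illustration of the critical ratio rule just described, the sketch below divides an estimate by its standard error and compares the absolute result with 1.96. The function names and the example estimates are hypothetical and do not correspond to any row of the study's tables.

```python
def critical_ratio(estimate, standard_error):
    """Critical ratio: parameter estimate divided by its standard error."""
    if standard_error <= 0:
        raise ValueError("standard error must be positive")
    return estimate / standard_error


def is_significant(estimate, standard_error, threshold=1.96):
    """True when |C.R.| exceeds the threshold (1.96 for the 0.05 level)."""
    return abs(critical_ratio(estimate, standard_error)) > threshold


# Hypothetical (estimate, standard error) pairs for three paths.
strong_path = is_significant(0.30, 0.10)    # C.R. about 3.0
weak_path = is_significant(0.05, 0.04)      # C.R. about 1.25
negative_path = is_significant(-0.30, 0.10) # sign does not matter
```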

The widely used Chi-square analysis returned a value of 1585.584 with 746 degrees of freedom (p = 0.000). The Chi-square/d.o.f. ratio was 2.125, supporting the compatibility and fit of the model. The RMSEA calculation returned a value of 0.053, remaining within the required 0–0.08 range, while the SRMR value was 0.06, remaining within the 0–0.10 range.
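The fit statistics quoted for the two models can be re-derived with a few lines of code. This is a sketch under stated assumptions: the RMSEA upper bound of 0.08 is the conventional cut-off and is assumed here, the SRMR bound of 0.10 is the one quoted in the text, and `fit_summary` is a hypothetical helper rather than Amos output.

```python
def fit_summary(chi_square, dof, rmsea, srmr, rmsea_max=0.08, srmr_max=0.10):
    """Chi-square/d.o.f. ratio plus simple range checks on RMSEA and SRMR."""
    return {
        "chi2_df": round(chi_square / dof, 3),
        "rmsea_ok": 0 <= rmsea <= rmsea_max,
        "srmr_ok": 0 <= srmr <= srmr_max,
    }


# Values reported in the text for SEM models 1 and 2.
model_1 = fit_summary(1585.584, 746, rmsea=0.053, srmr=0.06)
model_2 = fit_summary(1429.091, 633, rmsea=0.056, srmr=0.0631)
```

Both ratios reproduce the reported values of 2.125 and 2.258, and both RMSEA values fall below the assumed 0.08 bound.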

Table 14 describes the estimates outputted by Amos®, displaying the regression weights and other figures for each structural relationship.

The factors deemed to correlate significantly with M are highlighted in the table, being F1, F2 and F3. The “***” symbol in the p column indicates a significance value less than 0.001 as evidenced for F2, while F1 and F3 have p values lower than 0.05. The R² value for the model was calculated as 0.77, meaning that 77% of the variance in M is explained by these factors. The remaining structural relationships are visible in

Appendix B.

4.9. Structural Equation Model 2

Figure 5 represents the second model developed, analysing the relationship of factors F1–F7 on ‘M’, comprising the variables making up the “Perceived IA Effectiveness” component.

The previous tests were repeated for the new variables respectively (

Table 15), beginning with

Mardia’s (

1970) skewness and kurtosis coefficients. With 38 observed variables, the value of 38*(38 + 2) = 1520 was once again larger than the Mardia kurtosis value of 345.142.

The Chi-square analysis returned a value of 1429.091 with 633 degrees of freedom (p = 0.000), surpassing the minimum. The Chi-square/d.o.f. ratio was 2.258, further supporting the compatibility and fit of the model. The RMSEA calculation returned a value of 0.056, remaining within the required 0–0.08 range, while the SRMR value was 0.0631, remaining within the 0–0.10 range.

Table 15 describes the estimates outputted by Amos®, displaying the regression weights and other figures for each structural relationship.

The factors deemed to correlate significantly with M are shown in bold in the table, being F5 and F7. The “***” symbol in the p column indicates a significance value less than 0.001 as evidenced for F5, while F7 has a p value lower than 0.05. The R² value for the model was calculated as 0.88, meaning that 88% of the variance in M is explained by these factors. The remaining structural relationships and variable descriptions are visible in

Appendix B.

4.10. Confirmed Factors

From the previous confirmatory factor analysis (CFA) procedure carried out as part of the structural equation modelling, 5 factors have emerged as significantly correlated, with F1–F3 identified from model 1 while F5 and F7 were identified from model 2. This implies that for factors F4 and F6, inadequate statistical evidence of their reliability as latent variables was obtained, resulting in them no longer being considered during the following stages of analysis. This is done as a prudent effort to make use solely of factors confirmed through the CFA process in order to ensure the statistical reliability and integrity of the constructs being formed and their relationships with effectiveness. Therefore, F4 relating to the use of Big Data and F6 relating to Independence and Scope will be disregarded and all further analyses will be carried out on the remaining 5 factors.

4.11. Linear Regression and Pearson Correlation Test

Although EFA was carried out on all the survey statements, the demographics variables D1–D7 were not within the scope of grouping into latent factors and hence not included within the respective measurements. However, it is essential to perform regression analysis to identify the causal relationships, if any, between these variables and effectiveness.

Three new variables were computed prior to carrying out the necessary calculations, visible in

Table 16. The first was ‘IA_Ratio’, being the demographics variable measuring the number of internal auditors in a team divided by the total number of employees in the organisation. This was carried out in order to analyse not only the relationship of IA team size or total organisation size with effectiveness, but also the IA size in relation to the firm’s total capacity, as explained and hypothesised. The second variable calculated was labelled ‘Confirmed_Factors’, being the average of the five confirmed factors, while the last variable is ‘Measures’, taking the average of the two factors comprising the measures of effectiveness.
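The three computed variables can be sketched for a single respondent as follows. The record layout, field names and scores are hypothetical, and the denominator of the ratio is assumed to be the organisation's total headcount, in keeping with the stated aim of relating IA size to the firm's total capacity.

```python
from statistics import mean


def ia_ratio(n_internal_auditors, n_employees):
    """Number of internal auditors relative to total employees."""
    if n_employees <= 0:
        raise ValueError("number of employees must be positive")
    return n_internal_auditors / n_employees


# Hypothetical respondent record; factor scores use the confirmed factors only.
respondent = {
    "auditors": 5,
    "employees": 500,
    "factors": {"F1": 4.0, "F2": 3.5, "F3": 4.5, "F5": 3.0, "F7": 4.0},
    "measures": {"objective": 4.0, "perceived": 3.0},
}

ratio = ia_ratio(respondent["auditors"], respondent["employees"])
confirmed_factors = mean(respondent["factors"].values())
measures = mean(respondent["measures"].values())
```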

The regression analysis carried out for the demographics against the dependent variable ‘Measures’ outputted the following coefficient results in

Table 17, with 0.052 significance value in the ANOVA table, achieving reliability at the 90% confidence level.

As may be observed, no clear causal relationship is apparent between any variable and the dependent variable aside from the demographic relating to insourcing and outsourcing of the IA process, with a −9.4% standardised beta indicating, at a 0.1 level of significance, that insourced teams perform more effectively. The process was then repeated with ‘Confirmed_Factors’ replacing ‘Measures’ as the dependent variable. This was done since the latter represents effectiveness, while the former is statistically proven to influence effectiveness. Therefore, any variable sustaining a causal relationship with ‘Confirmed_Factors’ should in turn also influence ‘Measures’ and ultimately impact effectiveness. This yielded similar results, with the exception being that ‘No. of IAors’ obtained a standardised beta value of 10.6% at 0.056 significance, indicating that an increase in internal auditors is deemed to influence effectiveness. The regression test for ‘IA_Ratio’ was carried out separately due to it not being a categorical variable, yielding no statistically conclusive results.

Pearson Correlation was then carried out on all the demographics variables, ‘IA_Ratio’, ‘Confirmed_Factors’ and ‘Measures’.

Table 18 lists the correlations of all the variables against ‘Confirmed_Factors’ and ‘Measures’.

As evidenced by the first three rows of results, no statistically significant outcomes were present to show that gender, education, or experience correlate with effectiveness. On the other hand, a 9.9% correlation was found between the number of internal auditors and ‘Confirmed_Factors’, while a 12.7% correlation was observed between the number of employees and ‘Measures’, suggesting that larger IA teams and larger organisations tend to have more effective IA procedures and further supporting the IA size hypothesis. The negative correlation observed for the type of IA team further corroborates the previous findings that insourced teams tend to perform more effectively than their co-sourced or outsourced counterparts, while no evidence was found regarding public/private entities. The ‘IA_Ratio’ calculation yielded no significant results, while, as expected, ‘Confirmed_Factors’ was strongly associated with ‘Measures’ at the 0.000 significance level with a 64.2% Pearson correlation, seeing that it is made up of the five factors already confirmed through other statistical methods to influence effectiveness.
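For readers unfamiliar with the statistic behind these percentages, the following is a minimal sketch of the Pearson correlation coefficient. The function `pearson_r` and the paired scores are illustrative assumptions; they are not the study's data and do not reproduce the reported 64.2% figure.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson product-moment correlation between two equal-length samples."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("samples must have equal length of at least 2")
    mean_x, mean_y = sum(x) / n, sum(y) / n
    # Sums of cross-products and squared deviations from the means.
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


# Hypothetical composite scores for five respondents.
toy_confirmed_factors = [3.0, 3.5, 4.0, 4.5, 2.5]
toy_measures = [3.2, 3.4, 4.1, 4.4, 2.9]
r = pearson_r(toy_confirmed_factors, toy_measures)
```

A coefficient reported as a percentage in the text (for example 12.7%) corresponds to r = 0.127 on this scale.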

4.12. Confirmed Hypotheses

Table 19 depicts the original hypotheses laid out in the literature review chapter, alongside the study’s findings relating to each. All rows marked with a green tick ‘✓’ represent hypotheses where significant statistical evidence was found to confirm and substantiate the factor’s positive influence on internal audit effectiveness, while the red tick ‘✓’ represents the negative influence for H8, concluding from the previous findings that insourced entities are more effective. Yellow ticks ‘✓’ represent partially confirmed positive influence, describing factors where evidence was obtained but was of a limited nature. H5, for example, comprised two statements from the original questionnaire, of which one was grouped with confirmed factor F3, while the other was grouped with F6, which was disregarded due to not achieving statistical significance. Similar results occurred with H4 relating to independence, resulting in their partial confirmation. For the remaining hypotheses, no evidence of their influence on effectiveness was found, either because the specific statements were removed in the EFA phase, because they formed factors F4 or F6, which were ruled out, or because the statistical evidence of their influence was not significant enough to conclude with reasonable reliability.

5. Discussion of Findings

In line with the literature, hypothesis H1 (Risk-Based Audit Approach) maintained a positive influence on effectiveness. The statements relating to risk-based audit approaches loaded onto factors F3 and F7, both of which were confirmed to influence effectiveness through the SEM process. In contrast to the initial literature, these interestingly did not group into one latent variable but were separated: the statements relating to the incorporation of risk management and risk assessments within the IA process loaded onto F3, while S3 and S4 focus more on risk-based frameworks and policies to be adhered to, including risk response. This confirms the recent shift of the IA process to one which now incorporates a risk-based approach as discussed by

Arena and Azzone (

2009),

Erasmus and Coetzee (

2018) and

Dinh et al. (

2021) among others, with the added concept that RM should not only be implemented and risk assessments carried out, but popular RM frameworks should be adhered to accordingly to provide a stronger process overall.

The evidence relating to H2 (IA Size) also concurs with the literature discussed, in that more internal auditors do increase effectiveness. This was discernible when carrying out the regression analysis between the number of internal auditors and the confirmed factors, where it was concluded that an increase in the audit team size is associated with an increase in the confirmed factors, which in turn indirectly impacts effectiveness. This result was expected, as a logical conclusion would be that more human resources would allow for more IA activities and higher quality work to be carried out, impacting IAE accordingly as outlined by

Alzeban and Gwilliam (

2014) and

Ahmad et al. (

2012). Although it is also logical to assume

Arena and Azzone’s (

2009) argument to be true, namely that the number of internal auditors should be considered relative to the total number of employees, the ‘IA_Ratio’ variable yielded no statistically significant support for this statement, arriving at no conclusion. However, larger IAUs and firms in general tend to have more effective IA processes, as observed in the Pearson correlation carried out (Refer to

Table 18). It is interesting to note, however, that roughly 20% of organisations with over 500 employees still employ three or fewer internal auditors, echoing

Attard’s (

2014) statement that Maltese organisations are still understaffed when it comes to IA; this finding indicates that this may also be a worldwide phenomenon.

H3 (IA Competence) yielded interesting results, hypothesising competency as another plausibly crucial factor influencing effectiveness. Although the study confirmed this factor as positively impacting effectiveness by loading up the specific statements onto F2, the demographic variables relating to the respondent’s own level of education and experience yielded no statistically significant results. This means that there may have been a level of bias when it came to responding to this area, as it is surely reasonable to assume that more competent individuals will result in more effective IA processes as outlined by

Turetken et al. (

2019);

Rudhani et al. (

2017) and the

IIA (

2017) itself.

Although it would be nonsensical to assume that competency plays no role in increasing effectiveness, perhaps the outcome of this study was not purely a chance occurrence, but in fact yielded results suggesting that IAUs should not over-rely on measuring competency solely through years of experience or level of education, emphasising quality of experience and competency over quantity.

In fact, there has also been a shift away from traditional education institutions, such as the common pathway of obtaining a CPA qualification through universities or the ACCA equivalent prior to entering the IA environment. Although an accounting qualification would undoubtedly assist an internal auditor in their work, it is debatable whether a 5-year route including an undergraduate and postgraduate degree in accountancy is necessary, especially since it disincentivises many students from venturing into internal audit owing to the lengthy requirements of formal education. In fact, whereas this model, as explained above, results in most respondents having a master’s degree, the USA and UK have an almost even distribution between those with bachelor’s and master’s degrees. This further points to a decreased need to run postgraduate programs for qualifying in IA. This would instead be compensated for with more highly focused training relating specifically to IA and to its related tasks, such as data security, audit system training and technology, rather than broad-scope learning through tertiary education.

Although most variables were loaded onto F6, which was not statistically confirmed through SEM, two statements relating specifically to the independence from management loaded onto F3, warranting solely partial confirmation of H4 (IA Independence) with the results obtained by

Briffa (

2016),

Onay (

2021),

Dinh et al. (

2021) and

Alzeban and Gwilliam (

2014). Upon reflection, the loading onto separate factors indicates that independence is a multi-faceted concept which needs to be regarded from both angles: that of having no limitations or access restrictions which may impair judgements, and that of being independent from management by reporting operationally to the audit committee and being able to report on both the lowest and highest levels of management within the organisation. That being said, S10–S12, which loaded onto the unconfirmed F6, correlated with effectiveness (‘Measures’) at the 0.000 significance level, highlighting the possible positive influence of such statements.

Similar to independence, the H5 (IA Objectivity) statements split between F6 and F3, warranting partial confirmation. It is clear, as anticipated in the literature review, that significant overlap would be present between objectivity and independence, since the statements gathered from the survey may also be used to describe independence, with the confirmed statement discussing the importance of an unbiased mental attitude when carrying out activities, similar to

Dejnaronk et al.’s (

2016) study. Although S15 was loaded onto F6, a 23.9% correlation was observed with effectiveness at the 0.000 significance level, highlighting that, although only partially confirmed, objectivity should still be taken into consideration. F6 (IA Independence and Scope) had the majority of statements relating to independence loaded alongside a statement for objectivity and one for scope. The findings substantiate the idea that independence should be viewed from two different aspects, being independence from management (as explained in F3) and independence arising from unimpaired influence and unrestricted access placed on the IAU at all levels of the organisation, allowing them to carry out their duties effectively. This may suggest that unrestricted and unlimited access is not entirely necessary and that, although generally not recommended, it is not imperative for IAs never to carry out any form of managerial functions. The singular statement relating to scope was also loaded onto this factor, possibly implying that it may be unreasonable to apply absolutely no boundaries or limits to an IA.

No statistical evidence was found regarding H6 (audit quality), as the sole statement testing this factor was removed entirely from the analysis during the EFA process, thus resulting in no impact on effectiveness being observed. The question relating to the creation and upkeep of a quality assurance and improvement program garnered a mean response of around 4, thus indicating overall respondent agreement, and 34% correlation with effectiveness, thus highlighting possible positive influence. The broad definition of quality creates a level of ambiguity which brought considerable overlap with other factors, as it is generally considered that higher quality audits are more effective, with the reverse also holding true. As discussed above ‘quality’ and ‘effectiveness’ are sometimes even interchanged, resulting in a lack of clarity when it comes to investigating this factor alone because all other factors would be deemed to affect quality too. For these reasons, no statistical confirmation was possible against the claims of

Cohen and Sayag (

2010) or

Badara and Saidin (

2014) among others, signifying that rather than hypothesising “IA Quality” it may be more suitable to assess IA quality assurance programs instead.

The partial confirmation of H7 (Adherence to Standards), in line with

Dejnaronk et al. (

2016), was attributable to the fact that, of the two statements relating to this factor, S18 was removed in the EFA process. S18 had a mean value of 4.58, indicating strong compliance with standards, and a 49% correlation with ‘Measures’. S17 related solely to the ISO 19011 audit standard and was loaded onto the confirmed F7. Although it is reasonable to assume that standard adherence positively influences effectiveness (with the added indicator that S18 correlates with effectiveness), it was deemed prudent to conclude with partial confirmation, as only one statement for the original hypothesis was fully tested.

The literature put forward by

Dellai and Omri (

2016) and

Briffa (

2016) posed a mixed viewpoint regarding H8 (Insourcing/Co-sourcing/Outsourcing), highlighting the benefits and drawbacks of both insourcing and outsourcing and ultimately ending with inconclusive results. The results of this study concluded a negative impact of outsourcing, with the evidence indicating insourced entities prove to be more effective. This is likely in line with the previous authors’ explanations that insourced teams are solely focused on the single entity, gaining a deeper understanding of its risks, environment, and circumstantial affairs. However, this hypothesis is not entirely straightforward, as there are surely legitimate reasons to outsource the process, including for small organisations, which cannot afford the resources required to hire and train an IA team, or perhaps specifically skilled IA professionals are necessary who form part of an external team. Therefore, although insourcing the IA process was deemed to increase effectiveness, specific circumstances may require co-sourcing or outsourcing respectively.

No statistical confirmation was obtained for H9 (Use of Big Data), as although all questions loaded as expected onto a singular factor, it was not deemed statistically significant in either of the two SEM processes. Therefore, the claims portrayed by

Joshi (

2020,

2021),

Yoon et al. (

2015),

Kaya et al. (

2018) and

Deloitte (

2018) could not be corroborated as no statistical conclusions were arrived at. It is interesting to note, however, that there was a 32.4% correlation between factor F4, relating exclusively to this hypothesis, and effectiveness (‘Measures’), possibly indicating a positive influence of Big Data Analytics. F4 (Use of Big Data) also loaded one hypothesis entirely onto the newly created factor, therefore relating solely to the use of Big Data and analytical techniques. Although the use of whole datasets for analysis rather than samples should in theory reduce any possibility of sampling risk and the extra efforts required to obtain a correct sample, the findings do not validate these claims with significance, possibly indicating that the IA process is still a while away from making these procedures common practice, or perhaps that the knowledge and understanding of the benefits of analytical techniques are not yet in place. This may also be an indication that, in practice, the use of Big Data is not yet viable or feasible, either due to the computing power required or due to the datasets still being too large to process entirely. This method may also not yet be widely accepted by IAs, possibly due to more mature auditors being reluctant to change and sceptical of the practicability of the procedure and the skills required to carry it out effectively, preferring to work with tried and tested mechanisms.

No statistical evidence was obtained for H10 (Limitation of Scope) due to the singular statement loading onto factor F6, which was not confirmed in the SEM process. Although the findings from

Erasmus and Coetzee (

2018) and the

ISO 19011:2011 (

2011) standard plausibly argue that no scope limitations should be placed, this study could not conclude similar results. However, 21.2% correlation at 0.000 significance with effectiveness was observed, once again possibly highlighting the validity of the literature.

A positive influence was concluded for H11 (Management Support) after two of the four statements relating to support from management were loaded onto separate confirmed factors F2 and F3. The other two statements were ruled out in the EFA process despite both correlating at the 0.000 level of significance with effectiveness, with 46.1% and 30.6% for S24 and S26 respectively. Interestingly, S25 loaded onto F2 alongside competency and control environment. This may be explained since the question was not directly related to management support, but rather to whether IA has the resources required (presumably from management, reflecting their support) to fulfil its tasks. This resulted in F2 comprising statements relating to various types of resources required for internal audits, being knowledge, management and environmental resources. S27 was loaded onto F3 relating to communication with the CAE, overlapping with independence and objectivity since open lines of communication would allow internal auditors to work independently and with increased objectivity. It is for these reasons that management support clearly assists IA effectiveness from multiple perspectives, thus confirming that it positively influences effectiveness, in line with the findings of

Al-Twaijry et al. (

2003);

Alzeban and Gwilliam (

2014) and

Onay (

2021).

A clear positive influence was observed for H12 (Cooperation with External Audit), with S28, relating to attitude and communication with EA, loading onto confirmed F3 and the remaining statements S29–S32 loading exclusively onto F5, once again creating a singular factor for cooperation with external audit. The factor confirmation through the SEM process leaves no doubt as to the positive impact of external audit cooperation on IAE. This validates the claims made by

Alzeban and Gwilliam (

2014), arguing that the sharing of work papers, joint planning, and information exchange between the two teams may prove a positive influence on effectiveness. Regular meetings and discussions may result in less overlap of work between the two, resulting in reduced time and resource wastage in both IA and EA.

All statements relating to H13 (Cooperation with the Audit Committee) loaded onto confirmed F1, corroborating with

Bednarek’s (

2018) study proving that cooperation with the Audit Committee does indeed positively influence effectiveness. This would generally entail regular meetings between IA and AC, and cooperation and assistance with risk signalling by the AC, allowing constructive and strategic plans to be put in place in order to tackle these risks. F1 (Audit Follow-up and Audit Committee Cooperation) loaded statements S33–S37 with a regression weight of 25.3% at 0.05 significance, relating to the two hypothesised factors, being the follow-up process of an audit (H14) and cooperation with the audit committee (H13). It should be noted that since the questions for these followed each other in the survey, there may have been an element of influence or bias within the replies, resulting in the answers relating to the follow-up process possibly being subconsciously prejudiced and assimilated with the previous questions measuring AC cooperation. This may have resulted in similar replies on the degree of agreement despite the distinctness of the statements, which may have indirectly contributed to the loading of both constructs onto a singular factor. However, this phenomenon was not evident in any other factor, possibly indicating this event as coincidental.

The amalgamation of the two factors may highlight the importance of their overlapping qualities, being the risk identification and constant monitoring. Just as audit committees should “signal significant risks” to prioritise IA activities for strategic IA plans, the follow-up process is crucial in also identifying unrectified deficiencies and risks which require amending (

Bednarek 2018;

Ahmed et al. 2022). These reasons create a strong argument for the joining of these factors into one, clearly emphasising the true significance of both processes and the ultimate intention being risk identification and recommendation implementation through these two distinct methods (

Salmasi et al. 2022;

Zybin and Bielozorova 2021).

F5 (Cooperation with External Audit) loaded a singular hypothesis precisely as anticipated, affirming the positive influence on effectiveness that cooperation with EA may have, as explained by the literature and the findings.

F7 (Adherence to Audit, Risk Assessment and Response Frameworks) gathered three statements from two hypothesised factors, with the common characteristic being the adherence to standards, frameworks, policies, guidelines or any publicly available or privately created procedures. Public models and guidelines from reputable institutions such as the IIA or ISO standards provide extensively detailed guidance from industry professionals on all aspects pertaining to the IA profession. When complied with, the standards ensure that the IA process is functioning to an exceptional level, as validated by the findings. It is not always simple to carry out IA activities without the instructions or direction from these standards, which provide a very solid foundation for each IA team to build upon, including methods, concepts, and other key aspects to look out for. Among the statements tested, control risk self-assessment (CRSA) techniques and ISO 19011 were mentioned due to their significance in the IA sphere.

Both statements relating to a follow-up process (H14) were loaded onto confirmed F1 alongside the statements relating to H13, once again in agreement with

Mihret and Yismaw (

2007) and the

IIA’s (

2017) standard requirements. The research concludes that this method of evaluating the actions taken (or not) in order to rectify the audit findings and implement the recommendations does indeed positively influence effectiveness, highlighting the importance of a follow-up process being in place and utilised.

The findings substantiate the claims made by Barisic and Tusek (2016) and Turetken et al. (2019), emphasising the relevance of working within an environment which promotes prospective thinking, accountability and creativity, and which rewards positive efforts and actions accordingly (H15). All statements loaded onto the confirmed F2, highlighting the organisational environment as a key player in increasing effectiveness. F2 (Organisational Environment and Knowledge Resources) loaded statements from three hypothesised factors and had the largest standardised regression weighting, 46% in SEM model 1 (significant at p < 0.001), highlighting its strength as a factor. Although the statements tested were diverse in nature, their overlapping characteristics were the various resources and the environment required by an IA unit to increase effectiveness. Interestingly, this factor did not relate directly to monetary resources or the number of employees, but instead to knowledge resources and the competency of personnel: having the professional know-how, the right training and skills in all aspects, including audit, taxation, IT/data security and other modern technologies. Furthermore, the idea of viewing the organisational environment as a resource in itself, one which may encourage and increase effectiveness, is a compelling concept. It highlights corporate culture as a resource which may be exploited to the company’s advantage, utilising the elements discussed in H15.

No statistical evidence was obtained to support either argument put forward in H16 (Private vs. Public Organisation), as there are clearly inherent advantages and disadvantages to both organisational forms. It is reasonable to assume that, as Goodwin (2004) and Cohen and Sayag (2010) explained, the rigid legislation and scrutiny imposed on public entities may result in higher performing IAUs. The counterargument, that private entities are heavily pressured into functioning effectively by the intense competition in the private sector, is likely also valid. The authors’ arguments may also hold true that public entities may focus more on service delivery than on cost minimisation, while private entities would focus on the latter and on overall profit maximisation, seeing that they are for-profit unlike their public counterparts. Therefore, no conclusion could be reached as to whether either organisational form is more effective than the other.

Of the 402 total respondents, 61 filled in the text field, with 38 providing suitable material other than words of appreciation or well-wishing. The first issue concerned the statements relating to Big Data and Analytics. Several respondents commented that Big Data and analytical techniques are separate, non-homogeneous functions which should not be amalgamated, and their amalgamation possibly resulted in decreased relevance and the subsequent non-confirmation of this factor as significant. Although the former does involve analytical techniques, the latter may be performed for other functions that do not involve Big Data, and are likely more frequently used and simpler to carry out. Another respondent agreed on their importance but added that the organisation’s data must be fully digitised, accurate and complete, which is not always the case.

Several respondents strongly disagreed with the idea of allowing IA full access to systems and data, explaining the extensive risks involved in doing so, especially in entities which deal with highly sensitive information such as health data. However, they held that internal auditors should be allowed to access the data through permissions from the correct personnel or legal written consent, depending on specific requirements. This is in contrast with statements such as S10, “direct and unlimited access […] can freely audit all sections without any ad hoc permissions being necessary,” stressing the important distinction that needs to be made. This may explain why the independence statements loaded onto two factors, with the specific statements relating to access restrictions loading onto the unconfirmed factor F6 due to non-agreement.

Another crucial point raised is that the development of controls should never be carried out by IA, despite IA being highly qualified to do so, as it would severely hinder independence and objectivity. One respondent in particular highlighted that, even though IA should only provide recommendations, the auditee must produce a management action plan (MAP) which is approved by IA and published, allowing IA to provide its input at all stages. For this organisation, 100% of the MAP must be implemented with all the agreed-upon changes, with senior management facing the risk of losing their jobs if it is not carried out accordingly. Although this approach may seem severe, it allows the executives to ensure that management do not ignore IA and give it the consideration needed. Generally, the risk management team is tasked with control development.

One respondent described the idea of setting targets relating to the number or severity of findings as controversial, as it may even impede independence or effectiveness. When management is already aware of certain control issues, it should not wait for IA to diagnose the problem separately and propose corrective action; rather, management should personally ‘own’ the issue and seek a remedy. Another point relating to independence was the approval of budgets: IA should not be allowed to approve its own budgets, as it is no different from any other organisational unit. Instead, the respondent suggested questioning whether the IAU achieved an approved budget which met the IA objectives.

Various respondents described the leading purposes of IA as assurance, insight, and fraud and waste prevention, all achieved by focusing on operational rather than financial risks. One respondent explained that assessing financial statement accuracy is an ancillary objective, although that is generally carried out by external auditors. Another explained that the mere existence of IA greatly improves internal controls and governance, with its level of maturity assisting its ability to carry out functions accordingly.

Lack of competency seems to be a common concern among respondents, who stressed the significance of finding and employing individuals with the appropriate competencies, in line with the findings and F2. One respondent explained that the IA process is outsourced at times when the Group Internal Auditor deems that there are insufficient competencies within the in-house team. Another described the lack of expertise in fields such as compliance, tax, RM, GDPR and others, highlighting the need for IAs with sufficient knowledge in various fields in order to maintain an agile and responsive IAU. Two respondents, from the UK and Malta, expressed their trouble attracting and hiring quality internal auditors, explaining that reliance is then placed on junior auditors who significantly lack the skills and experience required, resulting in an adverse impact on audit quality. This is an alarming statement, given that the UK is generally viewed as one of the more advanced societies in IA. It highlights the increased need not only for IAs, but for sufficiently trained ones. This is also substantiated by a Turkish respondent’s advice that specific IA training and courses should be provided at university level with an emphasis on hands-on learning, rather than providing broad-scope teaching and relying on fresh graduates learning as they enter employment. One respondent claimed that the IIA has no presence in Malta and that very few Maltese have obtained the Certified Internal Auditor (CIA) qualification, which is deemed crucial for any internal auditor.

Independence was a topic commonly brought up, with a Turkish respondent explaining that, if independence is breached, IA will be unable to provide transparent data not only to management but even to external audit. It was also noted that independence may be thwarted when risks are highlighted to clients or management and they respond defensively or threateningly, in disagreement with IA’s findings and recommendations, thus impeding the value-adding process. Another explained that some key audit directors involved in IA projects impair the process significantly due to their inability to operate independently. However, one pointed out that there must be a degree of flexibility: IA should not distance itself too much from management and create an “us and them” culture, but should function as a “critical friend” who shares the goal of improving organisational systems yet is still willing to act “critically” and scrutinise where necessary. This was supported by another statement that the provision of management advisory services by the IAU is increasingly becoming a core contributor to IAE and the value-adding process.

Various respondents emphasised the relevance of AC support to ensure focus on risk and governance, while another maintained that IA is only effective when the CAE, who reports to the AC, is capable of successfully understanding and assessing audit risks. Several respondents described the importance of communication and exchanges between internal and external auditors. However, they highlighted that the objectives of each type of auditor were different, and the methodologies utilised were therefore also different.