Journal Description

Sensors

Sensors is an international, peer-reviewed, open access journal on the science and technology of sensors. Sensors is published semimonthly online by MDPI. The Polish Society of Applied Electromagnetics (PTZE), the Japan Society of Photogrammetry and Remote Sensing (JSPRS), the Spanish Society of Biomedical Engineering (SEIB), and the International Society for the Measurement of Physical Behaviour (ISMPB) are affiliated with Sensors, and their members receive a discount on the article processing charges.

- Open Access — free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, SCIE (Web of Science), PubMed, MEDLINE, PMC, Ei Compendex, Inspec, Astrophysics Data System, and other databases.

- Journal Rank: JCR - Q2 (Instruments & Instrumentation) / CiteScore - Q1 (Instrumentation)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 17 days after submission; acceptance to publication is undertaken in 2.8 days (median values for papers published in this journal in the second half of 2023).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

- Companion journals for Sensors include: Chips, Automation, JCP and Targets.

Impact Factor: 3.9 (2022); 5-Year Impact Factor: 4.1 (2022)

Latest Articles

Adaptive Super-Twisting Sliding Mode Control for Robot Manipulators with Input Saturation

Sensors 2024, 24(9), 2783; https://doi.org/10.3390/s24092783 - 26 Apr 2024

Abstract

The paper investigates a modified adaptive super-twisting sliding mode control (ASTSMC) for robotic manipulators with input saturation. To avoid singular perturbation while increasing the convergence rate, a modified sliding mode surface (SMS) is developed. Using the proposed SMS, an ASTSMC is developed for robot manipulators that not only achieves strong robustness but also ensures finite-time convergence. Because the bounds of the lumped uncertainties are difficult to obtain, a modified adaptive law is developed so that the bounds of the time-varying disturbance and its derivative are not required. To account for input saturation in practical cases, an ASTSMC with saturation compensation is proposed to reduce the effect of input saturation on the tracking performance of robot manipulators. The finite-time convergence of the proposed scheme is analyzed. Comparative simulations against two other sliding mode control schemes show that the proposed method adapts its control gains effectively and is robust against disturbances and uncertainties.

(This article belongs to the Topic Industrial Control Systems)
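As an illustration of the super-twisting structure this abstract builds on, the sketch below simulates the standard (non-adaptive) super-twisting algorithm for a scalar sliding variable s with ds/dt = sat(u) + d(t). It is not the authors' ASTSMC: the modified sliding surface, the adaptive law, and the saturation compensation are all omitted, and the gains k1 and k2, the disturbance d(t) = 0.5 sin(t), the saturation limit u_max, and the Euler step are assumptions chosen for the example.

```python
import math


def sign(x: float) -> int:
    """Return -1, 0 or 1 according to the sign of x."""
    return (x > 0) - (x < 0)


def simulate_super_twisting(k1=3.0, k2=1.5, u_max=2.0, s0=1.0, dt=1e-3, t_end=10.0):
    """Euler simulation of ds/dt = sat(u) + d(t) under the super-twisting law.

    u = -k1 * |s|^(1/2) * sign(s) + v,   dv/dt = -k2 * sign(s)
    Returns the history of the sliding variable s.
    """
    s, v = s0, 0.0
    history = []
    for i in range(int(round(t_end / dt))):
        t = i * dt
        sgn = sign(s)
        u_raw = -k1 * math.sqrt(abs(s)) * sgn + v
        u = max(-u_max, min(u_max, u_raw))  # actuator saturation (no compensation here)
        d = 0.5 * math.sin(t)  # assumed disturbance with |dd/dt| <= 0.5
        s += dt * (u + d)
        v += dt * (-k2 * sgn)  # integral (twisting) term
        history.append(s)
    return history


if __name__ == "__main__":
    s_hist = simulate_super_twisting()
    print(f"final |s| = {abs(s_hist[-1]):.2e}")
```

With these values the command is clipped at the start of the reaching phase; after s reaches a neighborhood of zero, the integral term v takes over the job of cancelling the disturbance, which is what lets the super-twisting law avoid a discontinuous control signal.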

Open Access Article

Performance Assessment for the Validation of Wireless Communication Engines in an Innovative Wearable Monitoring Platform

by Alessio Serrani and Andrea Aliverti

Sensors 2024, 24(9), 2782; https://doi.org/10.3390/s24092782 - 26 Apr 2024

Abstract

In today’s health-monitoring applications, there is a growing demand for wireless and wearable acquisition platforms capable of simultaneously gathering multiple bio-signals from multiple body areas. These systems require well-structured software architectures, both to keep different wireless sensing nodes synchronized with each other and to flush collected data towards an external gateway. This paper presents a quantitative analysis aimed at validating both the wireless synchronization task (implemented with a custom protocol) and the data transmission task (implemented with the BLE protocol) in a prototype wearable monitoring platform. We evaluated seven frequencies for exchanging synchronization packets (10 Hz, 20 Hz, 30 Hz, 40 Hz, 50 Hz, 60 Hz, and 70 Hz) as well as two BLE configurations (with and without dynamic adaptation of the BLE Connection Interval parameter). Additionally, we tested BLE data transmission performance in five use case scenarios. The best synchronization performance (a median synchronization delay of 1.18 ticks, with a min-max range of 1.60 ticks and an interquartile range (IQR) of 0.42 ticks) was achieved with a synchronization frequency of 40 Hz and dynamic adaptation of the Connection Interval. Moreover, BLE data transmission proved to be significantly more efficient over shorter distances between the communicating nodes, degrading by 30.5% beyond 8 m. In summary, this study identifies the best-performing network configurations for the synchronization task of the prototype platform under analysis and provides quantitative guidance on the placement of data collectors.

(This article belongs to the Special Issue Selected Papers from the 2023 IEEE International Conference on Metrology for eXtended Reality, Artificial Intelligence and Neural Engineering (IEEE MetroXRAINE 2023))
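The synchronization results above are summary statistics of a delay distribution (median, min-max range, and IQR). The helper below is a minimal sketch of how such a summary could be computed from a list of per-packet delays expressed in ticks. The function name and the "inclusive" quartile convention are assumptions; the abstract does not state which quartile method was used.

```python
import statistics


def delay_summary(delays_ticks):
    """Median, min-max range and interquartile range (IQR) of synchronization delays."""
    if len(delays_ticks) < 2:
        raise ValueError("at least two delay samples are needed")
    # 'inclusive' treats the samples as the whole population; this choice is an assumption.
    q1, _, q3 = statistics.quantiles(delays_ticks, n=4, method="inclusive")
    return {
        "median": statistics.median(delays_ticks),
        "range": max(delays_ticks) - min(delays_ticks),
        "iqr": q3 - q1,
    }
```

Different quartile conventions give slightly different IQR values on small samples, so results computed this way are only comparable when the same convention is used throughout.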

Open Access Article

A Performance Analysis of Security Protocols for Distributed Measurement Systems Based on Internet of Things with Constrained Hardware and Open Source Infrastructures

by Antonio Francesco Gentile, Davide Macrì, Domenico Luca Carnì, Emilio Greco and Francesco Lamonaca

Sensors 2024, 24(9), 2781; https://doi.org/10.3390/s24092781 - 26 Apr 2024

Abstract

The widespread adoption of Internet of Things (IoT) devices in home, industrial, and business environments has enabled the deployment of innovative distributed measurement systems (DMS). This paper considers constrained hardware and a security-oriented virtual local area network (VLAN) approach that uses local message queuing telemetry transport (MQTT) brokers, transport layer security (TLS) tunnels for local sensor data, and secure socket layer (SSL) tunnels to transmit TLS-encrypted data to a cloud-based central broker. At the same time, the recent literature reports a correlated exponential increase in cyberattacks, mainly aimed at destroying critical infrastructure and creating hazards or at retrieving sensitive data about individuals, industrial or business companies, and many other entities. Much progress has been made in developing security protocols and guaranteeing quality of service (QoS), but these measures tend to reduce network throughput. From a measurement science perspective, lower throughput can reduce the frequency with which phenomena can be observed, again leading to misevaluation. This paper does not propose a new approach to protecting measurement data; instead, it tests the network performance of the commonly used approaches that can run on constrained hardware, a more general scenario typical of IoT-based DMS. The proposal takes into account a security-oriented VLAN approach for hardware-constrained solutions; since this is a worst-case scenario, the achieved results can be generalized. In particular, all OpenSSL cipher suites are considered for compatibility with the Mosquitto server. The most commonly used key metrics are evaluated for each cipher suite and QoS level: total ratio, total runtime, average runtime, message time, average bandwidth, and total bandwidth.

Numerical and experimental results confirm the proposal’s effectiveness in predicting the minimum network throughput for the selected QoS and security level. Operating systems yield different performance metric values depending on their configuration. The primary objective is to identify algorithms that ensure suitable data transmission and encryption ratios. Another aim is to explore algorithms that ensure wider compatibility with existing infrastructures supporting MQTT technology, facilitating secure connections for geographically dispersed DMS IoT networks, particularly in challenging environments such as suburban or rural areas. Additionally, leveraging open firmware on constrained devices compatible with various MQTT protocols enables customization of the software components, a crucial necessity for DMS.

(This article belongs to the Section Internet of Things)
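Benchmarking per cipher suite, as described above, starts from a list of suites that the local OpenSSL build offers. The sketch below uses only Python's standard `ssl` module to enumerate TLS 1.2 suites and build one client context per suite. The helper names and the CA-file argument are hypothetical, and the MQTT publishing and timing against a Mosquitto broker are left out. TLS 1.2 is used because TLS 1.3 suites cannot be selected with `SSLContext.set_ciphers`; note also that the default context lists only a secure subset, so a survey of every suite would need a broader OpenSSL cipher string.

```python
import ssl


def tls12_cipher_names():
    """Names of the TLS 1.2 cipher suites enabled by Python's default context."""
    ctx = ssl.create_default_context()
    return sorted({c["name"] for c in ctx.get_ciphers() if c["protocol"] == "TLSv1.2"})


def context_for_suite(name, cafile=None):
    """Client context restricted to TLS 1.2 and a single cipher suite."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_CLIENT)
    ctx.maximum_version = ssl.TLSVersion.TLSv1_2  # TLS 1.3 suites ignore set_ciphers()
    ctx.set_ciphers(name)
    if cafile is not None:
        ctx.load_verify_locations(cafile)  # hypothetical broker CA certificate
    return ctx


if __name__ == "__main__":
    for suite in tls12_cipher_names():
        context_for_suite(suite)  # a benchmark would connect and publish here
        print(suite)
```

A context built this way could then be handed to an MQTT client (for example via paho-mqtt's `Client.tls_set_context`) for each benchmark run, so that every measurement uses exactly one negotiated suite.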

Open Access Communication

Design of a Negative Temperature Coefficient Temperature Measurement System Based on a Resistance Ratio Model

by Ziang Liu, Peng Huo, Yuquan Yan, Chenyu Shi, Fanlin Kong, Shiyu Cao, Aimin Chang, Junhua Wang and Jincheng Yao

Sensors 2024, 24(9), 2780; https://doi.org/10.3390/s24092780 - 26 Apr 2024

Abstract

In this paper, a temperature measurement system with NTC (Negative Temperature Coefficient) thermistors was designed. An MCU (Microcontroller Unit) converts the voltage collected by an ADC (Analog-to-Digital Converter) into a resistance value. The temperature value is then calculated, and a DAC (Digital-to-Analog Converter) outputs a 4 to 20 mA current that is linearly related to the temperature value. The nonlinear characteristics of NTC thermistors pose a challenging problem, which was largely solved by using a resistance ratio model. High precision is obtained by fitting the thermistor characteristic with the Hoge equation. Actual measurements suggest that each module works properly, and a temperature measurement accuracy of 0.067 °C was achieved over the range from −40 °C to 120 °C. The uncertainty of the output current was analyzed and calculated to be 0.0014 mA. This type of system has broad potential applications in industrial fields such as the petrochemical industry.

(This article belongs to the Section Industrial Sensors)
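The conversion chain described above (ADC reading, resistance, temperature, 4 to 20 mA output) can be sketched in a few lines. This is a minimal sketch under stated assumptions: the thermistor sits on the low side of a divider with a 10 kΩ reference resistor and a 12-bit ratiometric ADC; the Steinhart–Hart equation with commonly quoted 10 kΩ coefficients stands in for the authors' resistance ratio model and Hoge-equation fit; and the current output is assumed to span exactly −40 °C to 120 °C.

```python
import math

# Hypothetical Steinhart-Hart coefficients (commonly quoted for a 10 kOhm NTC);
# a real system would use coefficients fitted to its own calibration data.
A, B, C = 1.009249522e-3, 2.378405444e-4, 2.019202697e-7


def adc_to_resistance(code, r_ref=10_000.0, full_scale=4095):
    """Thermistor resistance for a ratiometric divider: Vref - R_ref - ADC node - R_ntc - GND."""
    if not 0 < code < full_scale:
        raise ValueError("ADC code out of range")
    ratio = code / full_scale  # V_adc / V_ref = R_ntc / (R_ref + R_ntc)
    return r_ref * ratio / (1.0 - ratio)


def resistance_to_celsius(r_ohm):
    """Steinhart-Hart: 1/T = A + B ln R + C (ln R)^3, with T in kelvin."""
    ln_r = math.log(r_ohm)
    inv_t = A + B * ln_r + C * ln_r ** 3
    return 1.0 / inv_t - 273.15


def celsius_to_loop_current(t_c, t_min=-40.0, t_max=120.0):
    """Linear 4-20 mA mapping over [t_min, t_max], clamped at the ends."""
    t = min(max(t_c, t_min), t_max)
    return 4.0 + 16.0 * (t - t_min) / (t_max - t_min)


if __name__ == "__main__":
    r = adc_to_resistance(2048)
    t = resistance_to_celsius(r)
    print(f"R = {r:.1f} ohm, T = {t:.2f} C, I = {celsius_to_loop_current(t):.3f} mA")
```

With these coefficients a mid-scale reading corresponds to roughly 10 kΩ and about 25 °C, and 40 °C maps to the middle of the loop range, 12 mA.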

Open Access Article

A Multi-Agent RL Algorithm for Dynamic Task Offloading in D2D-MEC Network with Energy Harvesting

by Xin Mi, Huaiwen He and Hong Shen

Sensors 2024, 24(9), 2779; https://doi.org/10.3390/s24092779 - 26 Apr 2024

Abstract

Delay-sensitive task offloading in a device-to-device assisted mobile edge computing (D2D-MEC) system with energy harvesting devices is a critical challenge due to the dynamic load level at edge nodes and the variability in harvested energy. In this paper, we propose a joint dynamic task offloading and CPU frequency control scheme for delay-sensitive tasks in a D2D-MEC system, taking into account multi-slot tasks characterized by diverse processing speeds and data transmission rates. We model the task arrival and service processes using queuing systems and use D2D communication to relieve the edge server load and prevent network congestion. Central to our solution is the formulation of average task delay optimization as a challenging nonlinear integer programming problem, which requires deciding how to offload each task generated at active mobile devices and how to adjust CPU frequencies at discrete time slots. To handle the large discrete action space, we design an efficient multi-agent deep reinforcement learning (DRL) algorithm named MAOC, based on MAPPO, which minimizes the average task delay by dynamically determining task-offloading decisions and CPU frequencies. MAOC operates within a centralized training with decentralized execution (CTDE) framework, allowing individual mobile devices to make decisions autonomously based on their own system states. Experimental results demonstrate that MAOC converges quickly, operates efficiently, and outperforms other baseline algorithms.

(This article belongs to the Section Communications)
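The queuing view of task arrival and service mentioned above can be illustrated with a slot-based FIFO queue whose per-slot service capacity follows from the CPU cycles available in that slot (CPU frequency times slot length). This is a toy sketch under loud assumptions: it models a single queue, uses a constant number of cycles per task, and ignores D2D offloading, energy harvesting, and multi-slot tasks; the function and parameter names are hypothetical.

```python
from collections import deque


def average_task_delay(arrivals, cycles_per_slot, cycles_per_task):
    """Average sojourn time (in slots) of tasks in a FIFO queue.

    arrivals[t]        -- number of tasks arriving in slot t
    cycles_per_slot[t] -- CPU cycles available in slot t (CPU frequency x slot length)
    cycles_per_task    -- CPU cycles needed to complete one task (assumed constant)
    A task served in its arrival slot counts as a delay of one slot.
    Returns (average delay of served tasks, number of tasks still queued).
    """
    queue = deque()  # arrival slot of each waiting task
    delays = []
    for slot, (n_new, cycles) in enumerate(zip(arrivals, cycles_per_slot)):
        queue.extend([slot] * n_new)
        capacity = cycles // cycles_per_task  # tasks that fit in this slot
        for _ in range(min(capacity, len(queue))):
            delays.append(slot - queue.popleft() + 1)
    avg = sum(delays) / len(delays) if delays else float("nan")
    return avg, len(queue)
```

For example, a 1 GHz CPU with 1 ms slots provides 1,000,000 cycles per slot; raising the frequency in a slot raises its capacity, which is the lever a CPU frequency controller acts on. Tasks still queued at the end of the horizon are returned separately rather than averaged in, which biases the average downward when the queue is unstable.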

Open Access Article

Research on Tire Surface Damage Detection Method Based on Image Processing

by Jiaqi Chen, Aijuan Li, Fei Zheng, Shanshan Chen, Weikai He and Guangping Zhang

Sensors 2024, 24(9), 2778; https://doi.org/10.3390/s24092778 - 26 Apr 2024

Abstract

Tire performance has a very important impact on driving safety, and in actual use, complex road and operating conditions inevitably cause wear, scratches, and other damage to the tire. To effectively detect damage in the key parts of the tire, a tire surface damage detection method based on image processing was proposed. In this method, an image of the tire sidewall is first captured by a camera. Next, the collected images are preprocessed with an optimized multi-scale bilateral filtering algorithm, which noticeably enhances the detail of the damaged area. Third, the image is segmented with a clustering algorithm. Finally, the Harris corner detection method is used to capture the “salt and pepper” corners of the target region, the segmented binary image is screened and matched based on histogram correlation, and the target region is obtained. The experimental results show that the similarity detection is accurate and that the damaged area is identified accurately enough to meet the requirements.

(This article belongs to the Special Issue Sensor Fusion and Advanced Controller for Connected and Automated Vehicles (Volume II))
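Histogram correlation, as used in the final screening step above, is commonly defined as the Pearson correlation between two histograms (the same definition as OpenCV's `HISTCMP_CORREL`). The NumPy sketch below compares a candidate region with a reference patch in this way. The bin count, the 0.8 acceptance threshold, and the helper names are assumptions, and the filtering, clustering, and Harris corner steps are not reproduced.

```python
import numpy as np


def gray_histogram(img, bins=32):
    """Histogram of 8-bit grey levels with `bins` equal-width bins."""
    hist, _ = np.histogram(img, bins=bins, range=(0, 256))
    return hist.astype(np.float64)


def histogram_correlation(h1, h2):
    """Pearson correlation between two histograms (1 = identical shape)."""
    d1 = h1 - h1.mean()
    d2 = h2 - h2.mean()
    denom = np.sqrt(np.sum(d1 * d1) * np.sum(d2 * d2))
    return float(np.sum(d1 * d2) / denom) if denom > 0 else 0.0


def matches_reference(region, reference, threshold=0.8):
    """Accept a candidate region whose histogram correlates with the reference patch."""
    return histogram_correlation(gray_histogram(region), gray_histogram(reference)) >= threshold
```

Because the measure compares histogram shape rather than pixel positions, it tolerates small shifts and rotations of the damaged area between the candidate and the reference, at the cost of ignoring spatial structure.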

Open Access Review

Recent Development in Intelligent Compaction for Asphalt Pavement Construction: Leveraging Smart Sensors and Machine Learning

by Yudan Wang, Jue Li, Xinqiang Zhang, Yongsheng Yao and Yi Peng

Sensors 2024, 24(9), 2777; https://doi.org/10.3390/s24092777 - 26 Apr 2024

Abstract

Intelligent compaction (IC) has emerged as a breakthrough technology that utilizes advanced sensing, data transmission, and control systems to optimize asphalt pavement compaction quality and efficiency. However, accurate assessment of compaction status remains challenging under real construction conditions. This paper reviewed recent progress and applications of smart sensors and machine learning (ML) to address existing limitations in IC. The principles and components of various advanced sensors deployed in IC systems were introduced, including SmartRock, fiber Bragg grating, and integrated circuit piezoelectric acceleration sensors. Case studies on using these sensors for particle behavior monitoring, strain measurement, and impact data collection were reviewed. Meanwhile, common ML algorithms, including regression, classification, clustering, and artificial neural networks, were discussed. Practical examples of applying ML to estimate mechanical properties, evaluate overall compaction quality, and predict soil firmness through supervised and unsupervised models were examined. The results indicated that smart sensors have enhanced compaction monitoring capabilities but require robustness improvements. ML provides a data-driven approach to complement traditional empirical methods but requires extensive field validation. Potential integration with digital construction technologies such as building information modeling and augmented reality was also explored. In conclusion, leveraging emerging sensing and artificial intelligence technologies presents opportunities to optimize the IC process and address key challenges. However, cooperation across disciplines will be vital to test and refine these technologies under real-world conditions. This study serves to advance understanding and highlight priority areas for future research toward realizing IC’s full potential.

(This article belongs to the Special Issue Feature Review Papers in Intelligent Sensors)

Open Access Article

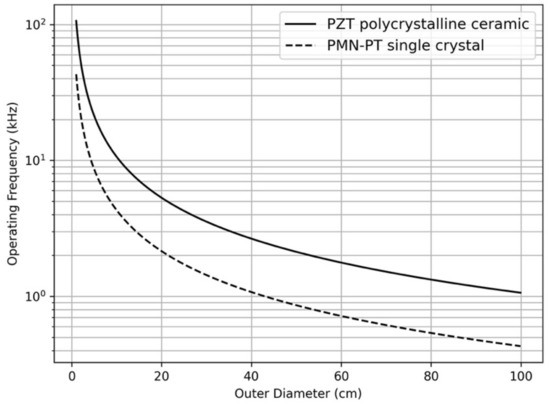

Through-Ice Acoustic Communication for Ocean Worlds Exploration

by Hyeong Jae Lee, Yoseph Bar-Cohen, Mircea Badescu, Stewart Sherrit, Benjamin Hockman, Scott Bryant, Samuel M. Howell, Elodie Lesage and Miles Smith

Sensors 2024, 24(9), 2776; https://doi.org/10.3390/s24092776 - 26 Apr 2024

Abstract

Subsurface exploration of ice-covered planets and moons presents communication challenges because of the need to communicate through kilometers of ice. The objective of this task is to develop the capability to communicate wirelessly through kilometers of ice and thus complement the potentially failure-prone tethers deployed behind an ice-penetrating probe on Ocean Worlds. In this paper, preliminary work on the development of wireless deep-ice communication is presented and discussed. Communication tests and acoustic attenuation measurements were made by embedding acoustic transceivers in glacial ice at the Matanuska Glacier, northeast of Anchorage, Alaska. Field test results show that acoustic communication through ice is viable, demonstrating the transmission of data and image files in the 13–18 kHz band over 100 m. The results suggest that communication through many kilometers of ice could be feasible using lower transmitting frequencies around 1 kHz, although future work is needed to better constrain the likely acoustic attenuation properties through a refrozen borehole.

(This article belongs to the Topic Techniques and Science Exploitations for Earth Observation and Planetary Exploration)

Open Access Article

A Versatile Approach for Adaptive Grid Mapping and Grid Flex-Graph Exploration with a Field-Programmable Gate Array-Based Robot Using Hardware Schemes

by Mudasar Basha, Munuswamy Siva Kumar, Mangali Chinna Chinnaiah, Siew-Kei Lam, Thambipillai Srikanthan, Gaddam Divya Vani, Narambhatla Janardhan, Dodde Hari Krishna and Sanjay Dubey

Sensors 2024, 24(9), 2775; https://doi.org/10.3390/s24092775 - 26 Apr 2024

Abstract

Robotic exploration in dynamic and complex environments requires advanced adaptive mapping strategies to ensure accurate representation of the environments. This paper introduces an innovative grid flex-graph exploration (GFGE) algorithm designed for single-robot mapping. This hardware-scheme-based algorithm combines quad-grid and graph structures to enhance the efficiency of both local and global mapping and is implemented on a field-programmable gate array (FPGA). The work uses sensor fusion to analyze the robot’s behavior and flexibility in the presence of static and dynamic objects. A behavior-based grid construction algorithm was proposed for constructing a quad-grid that represents the occupancy of frontier cells. The next exploration target is selected in a graph-like structure using partial-reconfiguration-based frontier-graph exploration approaches. The complete exploration method handles the data when updating the local map to reduce redundant exploration of previously explored nodes. Together, these components handle the quadtree-like structure efficiently under dynamic and uncertain conditions with a parallel processing architecture. Because integrating several algorithms into indoor robotics is complex, a Xilinx-based partial reconfiguration approach was used to avoid computing difficulties when running many algorithms simultaneously. These algorithms were developed, simulated, and synthesized using the Verilog hardware description language on a Zynq SoC. Experiments were carried out using an FPGA-based robot, and the resource utilization and power consumption of the device were analyzed.

(This article belongs to the Special Issue Environment Perception for Industrial Robotics, Connected and Autonomous Vehicles and Beyond)
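Frontier cells, as mentioned in the abstract, are usually defined in frontier-based exploration as known free cells that border unknown space. The sketch below finds them on a small occupancy grid and picks the closest one as the next exploration target. The cell encoding (−1 unknown, 0 free, 1 occupied), the 4-neighborhood, and the Manhattan-distance target choice are assumptions for illustration; this is plain software, not the authors' FPGA hardware scheme, and its distance measure ignores obstacles.

```python
UNKNOWN, FREE, OCCUPIED = -1, 0, 1  # hypothetical cell encoding


def frontier_cells(grid):
    """Free cells with at least one unknown 4-neighbor, in row-major order."""
    rows, cols = len(grid), len(grid[0])
    frontiers = []
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] != FREE:
                continue
            for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
                nr, nc = r + dr, c + dc
                if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == UNKNOWN:
                    frontiers.append((r, c))
                    break
    return frontiers


def nearest_frontier(grid, robot):
    """Closest frontier cell to the robot by Manhattan distance, or None if fully explored."""
    candidates = frontier_cells(grid)
    if not candidates:
        return None
    return min(candidates, key=lambda p: abs(p[0] - robot[0]) + abs(p[1] - robot[1]))


if __name__ == "__main__":
    grid = [
        [0, 0, -1],
        [0, 1, -1],
        [0, 0, 0],
    ]
    print(frontier_cells(grid), nearest_frontier(grid, (2, 0)))
```

When no frontier remains, the map contains no reachable boundary with unknown space and exploration can stop; a hierarchical structure such as a quad-grid mainly speeds up finding these cells on large maps.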

Open Access Communication

Grating (Moiré) Microinterferometric Displacement/Strain Sensor with Polarization Phase Shift

by Leszek Sałbut, Dariusz Łukaszewski and Aleksandra Piekarska

Sensors 2024, 24(9), 2774; https://doi.org/10.3390/s24092774 - 26 Apr 2024

Abstract

Grating (moiré) interferometry is one of the well-known methods for full-field in-plane displacement and strain measurement. There are many design solutions for grating interferometers, including systems with a microinterferometric waveguide head. This article proposes a modification to the conventional waveguide interferometer head, enabling the implementation of a polarization fringe phase shift for automatic fringe pattern analysis. This article presents both the theoretical considerations associated with the proposed solution and its experimental verification, along with the concept of in-plane displacement/strain sensing using the described head.

(This article belongs to the Section Physical Sensors)
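A phase shift enables automatic fringe analysis because the fringe phase can then be computed pixel by pixel from several frames recorded at known phase offsets. The sketch below uses the standard four-frame algorithm with π/2 steps, I_k = a + b·cos(φ + kπ/2), for which φ = atan2(I3 − I1, I0 − I2), and converts phase to in-plane displacement with the classical grating-interferometry relation u = N/(2f_s), where N is the fringe order and f_s is the specimen grating frequency. The number of frames and the grating frequency used by the authors are not stated in the abstract, so both are assumptions here; the recovered phase is wrapped and would still need unwrapping across the field.

```python
import math


def four_step_phase(i0, i1, i2, i3):
    """Wrapped phase (rad) from intensities recorded at shifts 0, pi/2, pi, 3pi/2."""
    # I_k = a + b*cos(phi + k*pi/2)  =>  I3 - I1 = 2b*sin(phi),  I0 - I2 = 2b*cos(phi)
    return math.atan2(i3 - i1, i0 - i2)


def displacement_um(phase_rad, grating_lines_per_mm):
    """In-plane displacement (um) from unwrapped phase, using u = N / (2 f_s)."""
    fringe_order = phase_rad / (2.0 * math.pi)
    return fringe_order / (2.0 * grating_lines_per_mm) * 1000.0  # mm -> um
```

With an assumed f_s of 1200 lines/mm, one full fringe (2π of phase) corresponds to about 0.417 µm of in-plane displacement. The background a and modulation b cancel in the ratio, which is why the method is insensitive to uneven illumination.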

Open Access Article

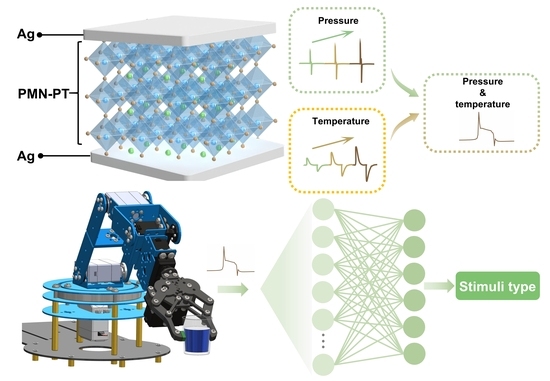

Self-Powered Pressure–Temperature Bimodal Sensing Based on the Piezo-Pyroelectric Effect for Robotic Perception

by Xiang Yu, Yun Ji, Xinyi Shen and Xiaoyun Le

Sensors 2024, 24(9), 2773; https://doi.org/10.3390/s24092773 - 26 Apr 2024

Abstract

Multifunctional sensors have played a crucial role in constructing high-integration electronic networks. Most current multifunctional sensors rely on multiple materials to simultaneously detect different physical stimuli. Here, we demonstrate the large piezo-pyroelectric effect in ferroelectric Pb(Mg1/3Nb2/3)O3-PbTiO3 (PMN-PT) single crystals for simultaneous pressure and temperature sensing. The outstanding piezoelectric and pyroelectric properties of PMN-PT result in rapid response speed and high sensitivity, with values of 46 ms and 28.4 nA/kPa for pressure sensing and 1.98 s and 94.66 nC/°C for temperature detection, respectively. By exploiting the distinct difference between the response speeds of the piezoelectric and pyroelectric responses, the piezo-pyroelectric effect of PMN-PT can effectively detect pressure and temperature from mixed force–thermal stimuli, enabling a robotic hand to classify stimuli. With appealing multifunctionality, fast speed, high sensitivity, and a compact structure, the proposed self-powered bimodal sensor holds significant potential for high-performance artificial perception.

(This article belongs to the Section Sensors and Robotics)

Open Access Article

Identifying Characteristic Fire Properties with Stationary and Non-Stationary Fire Alarm Systems

by Michał Wiśnios, Sebastian Tatko, Michał Mazur, Jacek Paś, Jarosław Mateusz Łukasiak and Tomasz Klimczak

Sensors 2024, 24(9), 2772; https://doi.org/10.3390/s24092772 - 26 Apr 2024

Abstract

The article reviews issues associated with the operation of stationary and non-stationary electronic fire alarm systems (FASs). These systems are employed for the fire protection of selected buildings (stationary) or to monitor vast areas, e.g., forests, airports, and logistics hubs (non-stationary). An FAS is operated under various environmental conditions, indoor and outdoor, that may be favourable or unfavourable to the operation process. Therefore, an FAS has to exhibit a reliable structure in terms of power supply and operation. To this end, the paper discusses a representative FAS monitoring a facility and presents basic tactical and technical assumptions for a non-stationary system. The authors reviewed fire detection methods in terms of the fire characteristic values (FCVs) that affect detector sensors. Another part of the article focuses on the causes of false alarms. Assumptions behind the use of unmanned aerial vehicles (UAVs) with visible-range cameras (e.g., Aviotec) and thermal imaging were presented for non-stationary FASs. The FAS operation process model was defined, and a computer simulation of its operation was conducted. Analysing the FAS operation process with models and graphs, together with the computer simulation, enabled conclusions to be drawn that may be applied to the design, ongoing maintenance, and operation of an FAS. As part of the paper, the authors conducted a reliability analysis of a selected FAS based on original performance tests of an actual system in operation. They formulated basic technical and tactical requirements applicable to stationary and mobile FASs detecting so-called vast fires.

(This article belongs to the Section Environmental Sensing)

Open Access Article

USV Trajectory Tracking Control Based on Receding Horizon Reinforcement Learning

by Yinghan Wen, Yuepeng Chen and Xuan Guo

Sensors 2024, 24(9), 2771; https://doi.org/10.3390/s24092771 - 26 Apr 2024

Abstract

We present a novel approach for achieving high-precision trajectory tracking control of an unmanned surface vehicle (USV) using receding horizon reinforcement learning (RHRL). The USV control architecture combines feedforward and feedback components. The feedforward component is derived directly from the curvature of the reference path and the dynamic model. The feedback component is obtained by applying the RHRL algorithm, which addresses the optimal tracking control problem. The proposed method adopts a rolling (receding) horizon optimization mechanism, converting the infinite-horizon optimal control problem into a sequence of solvable finite-horizon control problems. In contrast to Lyapunov model predictive control (LMPC) and sliding mode control (SMC), the proposed RHRL controller yields an explicit state feedback control law, which allows it to be learned and deployed directly either offline or online. Within each prediction horizon, a time-independent actor–critic (executive–evaluator) network structure is used to learn the optimal value function and control policy. Furthermore, the convergence of the RHRL algorithm within each prediction horizon is proven theoretically, and the stability of the closed-loop system is analyzed. Finally, USV trajectory tracking tests are carried out in a simulated environment.

(This article belongs to the Section Fault Diagnosis & Sensors)
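The feedforward term described above is derived from the curvature of the reference path. Its kinematic core can be sketched as a reference yaw rate r = U·κ, with κ estimated from three consecutive waypoints via the Menger curvature (the inverse radius of the circle through them, signed positive for left turns). The constant surge speed U and the waypoint-based curvature estimate are assumptions; the dynamic-model part of the authors' feedforward and the RHRL feedback are not shown.

```python
import math


def menger_curvature(p1, p2, p3):
    """Signed curvature of the circle through three points (positive = counter-clockwise)."""
    (x1, y1), (x2, y2), (x3, y3) = p1, p2, p3
    cross = (x2 - x1) * (y3 - y1) - (y2 - y1) * (x3 - x1)  # twice the signed triangle area
    d12 = math.hypot(x2 - x1, y2 - y1)
    d23 = math.hypot(x3 - x2, y3 - y2)
    d13 = math.hypot(x3 - x1, y3 - y1)
    denom = d12 * d23 * d13
    return 0.0 if denom == 0.0 else 2.0 * cross / denom


def feedforward_yaw_rate(path, speed):
    """Reference yaw rate r = U * kappa at each interior waypoint of the path."""
    return [
        speed * menger_curvature(path[i - 1], path[i], path[i + 1])
        for i in range(1, len(path) - 1)
    ]
```

On a circular path of radius 10 m followed at 2 m/s, every interior waypoint gives κ = 0.1 1/m and a feedforward yaw rate of 0.2 rad/s, leaving the feedback part to correct only the residual tracking error.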

Open Access Article

Transient Interference Excision and Spectrum Reconstruction with Partial Samples Using Modified Alternating Direction Method of Multipliers-Net for the Over-the-Horizon Radar

by Zhang Man, Quan Huang and Jia Duan

Sensors 2024, 24(9), 2770; https://doi.org/10.3390/s24092770 - 26 Apr 2024

Abstract

Transient interference often submerges the actual targets when over-the-horizon radar (OTHR) is employed to detect targets. In addition, modern OTHR needs to perform multi-target detection from sea to air, which results in sparse sampling of the echo data. With conventional methods, the sparse OTHR signal produces severe grating lobes, which degrade target detection performance. This article proposes a modified Alternating Direction Method of Multipliers (ADMM)-Net to reconstruct the target and clutter spectrum of sparse OTHR signals so that target detection can be performed normally. First, transient interference is identified based on a sparse basis representation and then excised, so the processed signal can be treated as a sparse OTHR signal. By solving the Doppler sparsity-constrained optimization problem with the trained network, the complete Doppler spectrum is effectively reconstructed for target detection. Compared with traditional sparse solution methods, the presented approach balances the efficiency and accuracy of OTHR signal spectrum reconstruction. Results on both simulated and real measured OTHR data demonstrate the performance of the proposed approach.

(This article belongs to the Special Issue Signal Processing in Radar Systems)

Open Access Article

Design, Construction, and Validation of an Experimental Electric Vehicle with Trajectory Tracking

by

Joel Artemio Morales Viscaya, Alejandro Israel Barranco Gutiérrez and Gilberto González Gómez

Sensors 2024, 24(9), 2769; https://doi.org/10.3390/s24092769 - 26 Apr 2024

Abstract

This research presents an experimental electric vehicle developed at the Tecnológico Nacional de México, Celaya campus. A gasoline-powered golf cart was used as the starting point. First, the body was removed and the vehicle was electrified by replacing its gasoline engine with an electric motor. Next, sensors for measuring the vehicle states were installed, calibrated, and instrumented. A mathematical model of the vehicle was also developed, together with a strategy for identifying its parameters. The communication scheme consists of four slave devices responsible for controlling the accelerator, brake, and steering wheel and for reading the odometry-related sensors. The master device communicates with the slaves, displays information on a screen, creates a log, and implements trajectory tracking techniques based on classical, geometric, and predictive control. Finally, the control algorithms implemented on the experimental prototype were compared in terms of tracking error and control input across three types of trajectories: lane change, right-angle curve, and U-turn.
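As an illustration of the geometric family of trackers mentioned above, the classic pure pursuit law steers a kinematic bicycle model toward a look-ahead point on the reference path. This is a generic sketch: the abstract does not say which geometric controller the authors implemented, and the path representation, the look-ahead search, the wheelbase value, and the function names are assumptions.

```python
import math


def lookahead_point(path, x, y, lookahead):
    """Return the first path point, starting from the nearest one, at least `lookahead` m away."""
    dists = [math.hypot(px - x, py - y) for px, py in path]
    start = dists.index(min(dists))  # search forward from the closest point only
    for i in range(start, len(path)):
        if dists[i] >= lookahead:
            return path[i]
    return path[-1]  # near the end of the path: aim at the final point


def pure_pursuit_steering(x, y, yaw, target, wheelbase):
    """Front-wheel steering angle (rad): delta = atan(2 * L * sin(alpha) / l_d)."""
    dx, dy = target[0] - x, target[1] - y
    alpha = math.atan2(dy, dx) - yaw  # bearing of the target relative to the heading
    l_d = math.hypot(dx, dy)          # distance to the look-ahead point
    return math.atan2(2.0 * wheelbase * math.sin(alpha), l_d)


if __name__ == "__main__":
    path = [(0.5 * i, 0.0) for i in range(40)]  # straight reference line along x
    target = lookahead_point(path, 2.0, 0.3, 3.0)
    print(target, pure_pursuit_steering(2.0, 0.3, 0.0, target, wheelbase=1.6))
```

The look-ahead distance is the main tuning knob: a longer distance gives smoother but slower convergence, which matters most on the right-angle curve and U-turn cases.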

(This article belongs to the Special Issue Advanced Research in Intelligent Autonomous Mobile Robots System, Learning and Control)

Open Access Article

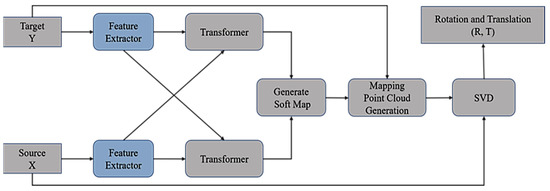

HALNet: Partial Point Cloud Registration Based on Hybrid Attention and Deep Local Features

by

Deling Wang, Huadan Hao and Jinsong Zhang

Sensors 2024, 24(9), 2768; https://doi.org/10.3390/s24092768 - 26 Apr 2024

Abstract

Point cloud registration is an important task in computer vision and robotics, widely used in 3D reconstruction, target recognition, and other fields. Many current deep-learning-based registration methods achieve good accuracy on complete point clouds but perform poorly on partial ones. We therefore propose HALNet, a partial point cloud registration network. First, a feature extraction network composed mainly of adaptive graph convolution (AGConv), two-dimensional convolution, and a convolutional block attention module (CBAM) learns the features of the initial point clouds. Overlap estimation then removes the non-overlapping points of the two point clouds, and a hybrid attention mechanism combining self-attention and cross-attention fuses their geometric information. Finally, a fully connected layer outputs the rigid transformation. HALNet was compared with five methods with strong registration performance. Relative to SCANet, the best of these five, HALNet reduces RMSE(R) and MAE(R) by 10.67% and 12.05%, respectively. Ablation experiments further confirm that the hybrid attention mechanism and the fully connected layer improve registration performance.
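RMSE(R) and MAE(R) are rotation errors. A common protocol in learning-based registration benchmarks computes them over the three Euler angles in degrees; the sketch below follows that convention, which is an assumption here because the abstract does not define the metrics. It also shows how a relative reduction such as the reported 10.67% is obtained from a baseline error and a proposed error. The function names are illustrative.

```python
import numpy as np


def wrap_deg(angle):
    """Wrap angles (degrees) to [-180, 180) so that 179 vs -179 counts as a 2 degree error."""
    return (np.asarray(angle, dtype=float) + 180.0) % 360.0 - 180.0


def rotation_rmse_mae(pred_euler_deg, gt_euler_deg):
    """RMSE(R) and MAE(R) over all samples and all three Euler angles, in degrees."""
    diff = wrap_deg(np.asarray(pred_euler_deg, dtype=float) - np.asarray(gt_euler_deg, dtype=float))
    rmse = float(np.sqrt(np.mean(diff ** 2)))
    mae = float(np.mean(np.abs(diff)))
    return rmse, mae


def relative_reduction(baseline_error, proposed_error):
    """Percentage by which the proposed error is lower than the baseline error."""
    return 100.0 * (baseline_error - proposed_error) / baseline_error
```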

(This article belongs to the Section Sensing and Imaging)

Open Access Article

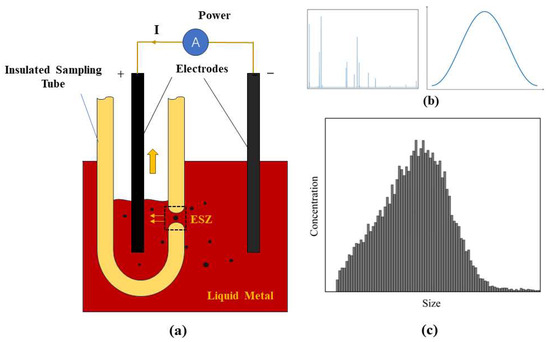

An Online Monitoring System for In Situ and Real-Time Analyzing of Inclusions within the Molten Metal

by

Yunfei Wu, Hao Yan, Jiahao Wang, Xianzhao Na, Xiaodong Wang and Jincan Zheng

Sensors 2024, 24(9), 2767; https://doi.org/10.3390/s24092767 - 26 Apr 2024

Abstract

Traditional methods for assessing the cleanliness of liquid metal suffer from long detection times, delays, and sensitivity to variations in sampling conditions. To address these limitations, an online cleanliness analysis system based on the electrical sensing zone method has been developed. The system enables real-time, in situ, quantitative analysis of the size and number of inclusions in liquid metal. Comprising pneumatic, embedded, and host computer modules, it supports continuous online evaluation of metal cleanliness across various high-temperature metallurgical processes. Tests with liquid gallium at 90 °C and an aluminum melt at 800 °C validated the system’s ability to detect inclusions in molten metal precisely and quantitatively in real time. The detection procedure is stable and reliable, and its immediate data feedback captures fluctuations in the number of inclusions, meeting the metallurgical industry’s demand for real-time analysis and control of inclusion cleanliness in liquid metal. The system was also used to analyze the inclusion size distribution during the hot-dip galvanizing process. At a zinc melt temperature of 500 °C, it achieved a detection limit of 21 μm while providing real-time data on the size and number distribution of inclusions. This represents a novel strategy for the online monitoring and quality control of zinc slag throughout the hot-dip galvanizing process.
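The electrical sensing zone principle can be sketched as follows: a non-conducting inclusion passing through a current-carrying orifice raises its resistance, and for inclusions much smaller than the orifice the classic approximation is ΔR ≈ 4ρd³/(πD⁴), so under a constant current I the voltage pulse ΔV = IΔR grows with the cube of the inclusion diameter d. This minimal sketch inverts that relation to size each pulse and bins the results above a detection limit. It assumes the small-particle approximation without correction factors; the orifice diameter D, melt resistivity ρ, and current I are placeholders rather than the authors' settings, and the function names are hypothetical.

```python
import math


def pulse_from_diameter(d, orifice_d, resistivity, current):
    """Voltage pulse (V) for a non-conducting sphere of diameter d (m): I * 4*rho*d^3 / (pi*D^4)."""
    return current * 4.0 * resistivity * d ** 3 / (math.pi * orifice_d ** 4)


def diameter_from_pulse(dv, orifice_d, resistivity, current):
    """Invert the small-particle approximation: d = (pi*D^4*dV / (4*rho*I))^(1/3)."""
    return (math.pi * orifice_d ** 4 * dv / (4.0 * resistivity * current)) ** (1.0 / 3.0)


def size_distribution(pulses, orifice_d, resistivity, current, bin_edges, detection_limit):
    """Count inclusions per diameter bin, ignoring non-positive pulses and sizes below the limit."""
    counts = [0] * (len(bin_edges) - 1)
    for dv in pulses:
        if dv <= 0:
            continue  # not a resistance increase; cannot be sized with this model
        d = diameter_from_pulse(dv, orifice_d, resistivity, current)
        if d < detection_limit:
            continue
        for i in range(len(counts)):
            if bin_edges[i] <= d < bin_edges[i + 1]:
                counts[i] += 1
                break
    return counts
```

Because d scales with the cube root of ΔV, halving the smallest resolvable pulse lowers the size detection limit only by a factor of about 0.79, which is why noise floor and orifice diameter dominate the achievable limit.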

(This article belongs to the Section Industrial Sensors)

Open Access Article

Optical Camera Communications in Healthcare: A Wearable LED Transmitter Evaluation during Indoor Physical Exercise

by

Eleni Niarchou, Vicente Matus, Jose Rabadan, Victor Guerra and Rafael Perez-Jimenez

Sensors 2024, 24(9), 2766; https://doi.org/10.3390/s24092766 - 26 Apr 2024

Abstract

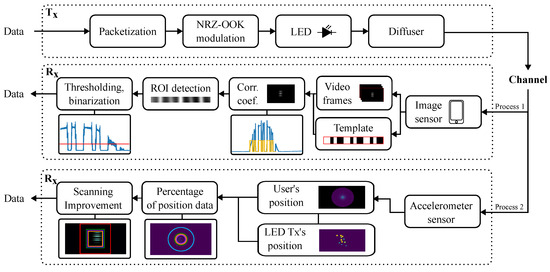

This paper presents an experimental evaluation of a wearable light-emitting diode (LED) transmitter in an optical camera communications (OCC) system. The evaluation is conducted under conditions of controlled user movement during indoor physical exercise, encompassing both mild and intense exercise scenarios. We introduce

[...] Read more.

This paper presents an experimental evaluation of a wearable light-emitting diode (LED) transmitter in an optical camera communications (OCC) system. The evaluation is conducted with controlled user movement during indoor physical exercise, covering both mild and intense exercise scenarios. We introduce an image processing algorithm that identifies a template signal transmitted by the LED and detected within the image. To make this process more efficient, we exploit the dynamics of the controlled, exercise-induced motion to restrict tracking to a smaller region of the image. We demonstrate that the transmitting source can be detected within the frames and that tracking can therefore be restricted to this smaller region, reducing the tracked image region by 87.3% for mild exercise and 79.0% for intense exercise.
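Restricting the search to a region of interest (ROI) predicted from the expected motion can be sketched with brute-force zero-mean normalized cross-correlation (NCC) template matching. This is a minimal sketch, not the authors' algorithm: the template, the ROI centre (which would come from a motion model), the ROI half-size, and the function names are assumptions, and the returned area reduction is simply the fraction of the frame that no longer has to be searched.

```python
import numpy as np


def ncc_match(image, template):
    """Brute-force zero-mean NCC; returns (row, col, score) of the best match, or None."""
    th, tw = template.shape
    t = template - template.mean()
    t_norm = np.sqrt((t ** 2).sum())
    best = None
    for r in range(image.shape[0] - th + 1):
        for c in range(image.shape[1] - tw + 1):
            w0 = image[r:r + th, c:c + tw]
            w0 = w0 - w0.mean()
            denom = np.sqrt((w0 ** 2).sum()) * t_norm
            if denom == 0:
                continue  # flat window: correlation undefined
            score = float((w0 * t).sum() / denom)
            if best is None or score > best[2]:
                best = (r, c, score)
    return best


def match_in_roi(frame, template, center, half_size):
    """Search only a square ROI around `center`; return (match in frame coordinates, area reduction)."""
    r0, r1 = max(center[0] - half_size, 0), min(center[0] + half_size, frame.shape[0])
    c0, c1 = max(center[1] - half_size, 0), min(center[1] + half_size, frame.shape[1])
    best = ncc_match(frame[r0:r1, c0:c1], template)
    area_reduction = 1.0 - (r1 - r0) * (c1 - c0) / frame.size
    if best is None:
        return None, area_reduction  # ROI smaller than the template
    r, c, score = best
    return (r + r0, c + c0, score), area_reduction
```

A larger ROI tolerates faster or less predictable motion at the cost of a smaller area reduction, which is consistent with the intense-exercise case reporting the smaller reduction.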

(This article belongs to the Special Issue Recent Trends and Advances in Telecommunications and Sensing)

Open Access Article

Developing a Novel Prosthetic Hand with Wireless Wearable Sensor Technology Based on User Perspectives: A Pilot Study

by

Yukiyo Shimizu, Takahiko Mori, Kenichi Yoshikawa, Daisuke Katane, Hiroyuki Torishima, Yuki Hara, Arito Yozu, Masashi Yamazaki, Yasushi Hada and Hirotaka Mutsuzaki

Sensors 2024, 24(9), 2765; https://doi.org/10.3390/s24092765 - 26 Apr 2024

Abstract

Myoelectric hands are beneficial tools in the daily activities of people with upper-limb deficiencies. Because traditional myoelectric hands rely on detecting muscle activity in the residual limb, they are not suitable for individuals with short stumps or paralyzed limbs. We therefore developed a novel electric prosthetic hand that functions without myoelectricity, using wearable wireless sensor technology for control. As a preliminary evaluation, our prototype hand with wireless button sensors was compared with a conventional myoelectric hand (Ottobock). Ten healthy therapists were enrolled in this study. The hands were fixed to the participants’ forearms, the muscle activity sensors of the myoelectric hand were attached over the wrist extensor and flexor muscles, and the wireless button sensors were attached to each user’s trunk. Clinical evaluations were performed using the Simple Test for Evaluating Hand Function and the Action Research Arm Test, and the degree of fatigue was evaluated using the modified Borg scale before and after the tests. Although no statistically significant differences were observed between the two hands in these tests, the change in the Borg scale was notably smaller for our prosthetic hand (p = 0.045). These results suggest that, compared with the Ottobock hand, the proposed prosthesis has potential for widespread use among people with upper-limb deficiencies.

(This article belongs to the Special Issue Challenges and Future Trends of Wearable Robotics—2nd Edition)

Open Access Article

Deep Learning Models to Reduce Stray Light in TJ-II Thomson Scattering Diagnostic

by

Ricardo Correa, Gonzalo Farias, Ernesto Fabregas, Sebastián Dormido-Canto, Ignacio Pastor and Jesus Vega

Sensors 2024, 24(9), 2764; https://doi.org/10.3390/s24092764 - 26 Apr 2024

Abstract

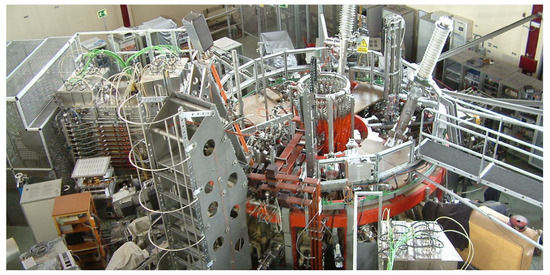

Nuclear fusion is a potential source of energy that could supply the growing needs of the world population for millions of years. Several experimental thermonuclear fusion devices try to understand and control the nuclear fusion process. A very interesting diagnostic called Thomson scattering

[...] Read more.

Nuclear fusion is a potential source of energy that could supply the growing needs of the world population for millions of years. Several experimental thermonuclear fusion devices aim to understand and control the nuclear fusion process. A particularly interesting diagnostic, Thomson scattering (TS), is performed on the Spanish fusion device TJ-II. This diagnostic captures images to measure the temperature and density profiles of the plasma, which is heated to very high temperatures to produce fusion plasma. Each image captures spectra of laser light scattered by the plasma under different conditions. Unfortunately, some images are corrupted by a type of noise called stray light, which affects the measurement of the profiles. In this work, we propose the use of deep learning models to reduce the stray light that appears in the diagnostic. The proposed approach uses a Pix2Pix neural network, an image-to-image translation model based on a generative adversarial network (GAN). This network learns to translate images affected by stray light into images without stray light, effectively removing the noise that affects the TS measurements without the need for manual image processing adjustments. The proposed method shows better performance, reducing the noise up to

(This article belongs to the Section Physical Sensors)
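For reference, the objective of the standard Pix2Pix model (Isola et al.) combines a conditional GAN loss with an L1 reconstruction term. In the setting above, x would be a TS image affected by stray light, y the corresponding clean image, G the generator, D the discriminator, and z the generator noise; how the authors built training pairs and chose the weight λ is not stated in this abstract.

$$
\mathcal{L}_{\mathrm{cGAN}}(G,D)=\mathbb{E}_{x,y}\left[\log D(x,y)\right]+\mathbb{E}_{x,z}\left[\log\left(1-D\left(x,G(x,z)\right)\right)\right]
$$

$$
\mathcal{L}_{L1}(G)=\mathbb{E}_{x,y,z}\left[\lVert y-G(x,z)\rVert_{1}\right]
$$

$$
G^{*}=\arg\min_{G}\max_{D}\;\mathcal{L}_{\mathrm{cGAN}}(G,D)+\lambda\,\mathcal{L}_{L1}(G)
$$

The L1 term keeps the translated image close to the clean target pixel by pixel, so the adversarial term mainly has to suppress the structured stray-light pattern rather than reproduce the scattered spectrum itself.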

Topics

Topic in

Agriculture, Forests, Sensors

Metrology-Assisted Production in Agriculture and Forestry

Topic Editors: Heye Bogena, Cosimo Brogi, Christof Huebner, Andreas Panagopoulos

Deadline: 30 April 2024

Topic in

Materials, Nanomaterials, Photonics, Polymers, Applied Sciences, Sensors

Optical and Optoelectronic Properties of Materials and Their Applications

Topic Editors: Zhiping Luo, Gibin George, Navadeep Shrivastava

Deadline: 20 May 2024

Topic in

Remote Sensing, Sensors, Smart Cities, Vehicles, Geomatics

Information Sensing Technology for Intelligent/Driverless Vehicle, 2nd Volume

Topic Editors: Yan Huang, Yi Ren, Penghui Huang, Jun Wan, Zhanye Chen, Shiyang Tang

Deadline: 31 May 2024

Topic in

Applied Sciences, Electricity, Electronics, Energies, Sensors

Power System Protection

Topic Editors: Seyed Morteza Alizadeh, Akhtar Kalam

Deadline: 20 June 2024

Special Issues

Special Issue in

Sensors

Radar Remote Sensing and Applications

Guest Editor: Shunjun Wei

Deadline: 27 April 2024

Special Issue in

Sensors

Smart Sensors for Remotely Operated Robots

Guest Editors: Liviu C. Miclea, Ovidiu P. Stan, Vlad Muresan, Florin Pop

Deadline: 30 April 2024

Special Issue in

Sensors

Meta-User Interfaces for Ambient Environments

Guest Editors: Marco Romano, Phillip C-Y. Sheu, Giuliana Vitiello

Deadline: 20 May 2024

Special Issue in

Sensors

Selected Papers from 20th World Conference on Non-Destructive Testing (WCNDT 2022)

Guest Editor: Seunghee Park

Deadline: 31 May 2024

Topical Collections

Topical Collection in

Sensors

Robotic and Sensor Technologies in Environmental Exploration and Monitoring

Collection Editors: Jacopo Aguzzi, Corrado Costa, Sergio Stefanni, Valerio Funari

Topical Collection in

Sensors

Microfluidic Sensors

Collection Editors: Sabina Merlo, Klaus Stefan Drese