Abstract

The discounted {0-1} knapsack problem (D{0-1}KP) is a multi-constrained optimization and an extended form of the 0-1 knapsack problem. The DKP is composed of a set of item batches where each batch has three items and the objective is to maximize profit by selecting at most one item from each batch. Therefore, the D{0-1}KP is complex and has found many applications in real economic problems and other areas where the concept of promotional discounts exists. As DKP belongs to a binary class problem, so the novel binary particle swarm optimization variant with modifications is proposed in this paper. The acceleration coefficients are important parameters of the particle swarm optimization algorithm that keep the balance between exploration and exploitation. In conventional binary particle swarm optimization (BPSO), the acceleration coefficients of each particle remain the same in iteration, whereas in the proposed variant, fitness-based acceleration coefficient binary particle swarm optimization (FACBPSO), values of acceleration coefficients are based on the fitness of each particle. This modification enforces the least fit particles to move fast and best fit accordingly, which accelerates the convergence speed and reduces the computing time. Experiments were conducted on four instances of DKP having 10 datasets of each instance and the results of FACBPSO were compared with conventional BPSO and the new exact algorithm using a greedy repair strategy. The results demonstrate that the proposed algorithm outperforms PSO-GRDKP and the new exact algorithm in solving four instances of D{0-1}KP, with improved convergence speed and feasible solution time.

1. Introduction

The knapsack problem (KP) is a well-known combinatorial optimization problem that belongs to classical NP problems [1]. For classical optimization problems, KP is modeled in such a way that total profit among selected items should be maximized within a given capacity. Additionally, KPs became popular from their emergence in many real-world applications [2] including investment decisions, cargo loading problems [3], energy minimization [4,5], resource allocation [6,7], computer memory [8], project portfolio selection [8,9,10], adaptive multimedia systems [11], housing problems [12], cutting-stock problems [13] and many others [7,10,14,15]. There are many variants of KPs such as the bounded knapsack problem (BKP), the unbounded knapsack problem (UKP), the multidimensional knapsack problem (MDKP), the multiple knapsack problem (MKP), the quadratic knapsack problem (QKP), the set-union knapsack problem (SUKP), the randomized time-varying knapsack problem (RTVKP), the quadratic multiple knapsack problem (QMKP), the multiple-choice multidimensional knapsack problem (MMKP) and the discounted knapsack problem (DKP) [16,17,18,19,20,21].

The D{0-1}KP is an extended and more complex form of KP that addresses real-world practical problems with the concept of promotional discounts for budget control, investment and decision-making projects [17,22]. To solve D{0-1}KP the authors proposed several heuristic ways using fixation techniques [23]. In the literature, two main approaches have been adopted for solving D{0–1}KP, the dynamic programming-based algorithms and evolutionary strategy-based randomized algorithms [24,25]. Yi et al. in [26] presented a systematic study on the exact and approximate algorithms inspired by developing performance with low computational complexity algorithms, NS-DKP to solve D{0-1}KP. In [18,26], experiments were conducted to evaluate different dynamic programming-based algorithms on different types of DKP situations. A greedy repair optimization algorithm based on the particle swarm optimization algorithm has been proposed to give an efficient solution using exact and approximate algorithms [26]. The impact of Levy flights operator and fly straight operator has been established in binary moth searched algorithm by proposing nine MS-based algorithms for D{0-1}KP [27]. To enlarge the population diversity and strengthen the global search ability of moth search, a binary moth search algorithm based on self-learning (SLMS) has been proposed to work out an NP-hard combinatorial optimization problem using 0–1 multidimensional knapsack problem (MKP) [28]. A new monarch butterfly optimization algorithm with multiple strategies (MMBO) has been presented to detect the best possible solutions for a given problem with greater convergence speed [29]. The discrete hybrid teaching–learning-based optimization algorithm (HTLBO) solved the D{0-1}KP with high accuracy [24]. The binary slap swarm algorithm adopted a greedy repair strategy to find the global optimal solution of the discounted {0-1} knapsack problem proposed by Dang and Truong. In the literature, various evolutionary techniques for D{0-1}KP are presented [29,30,31].

The latest studies show that swarm-intelligence-based optimization techniques have shown promising results for solving D{0-1}KP [27]. The DKP is a binary problem in nature. The binary variants of PSO are shown to be efficient for solving binary optimization problems. However, the issues with all variants of PSO are its local trapping dilemma and slow convergence rate. A number of studies have been performed to resolve the issues of PSO [25,32,33,34,35,36]. In this paper, our proposed technique is inspired by the technique adopted in [26], in which the BPSO algorithm with a greedy repair algorithm was used to solve the DKP. In existing BPSO variants, the velocity of each particle is updated using the same acceleration coefficients, which leads to slow convergence. To overcome this problem, we have proposed fitness-based acceleration coefficient binary particle swarm optimization (FACBPSO) to solve the D{0-1}KP. The proposed technique modifies the acceleration coefficients based on the fitness of each particle in terms of fitness rank. The particles with high fitness get a higher rank, whereas the particles with low fitness are assigned a lower rank; this accelerates convergence speed. Experiments were conducted on four instances of D{0-1}KP with 10 datasets of each instance and results of FACBPSO were compared with conventional BPSO and the new exact algorithm using a greedy repair strategy. The results demonstrate that the proposed algorithm outperforms the PSO-GRDKP and the exact algorithm in solving four instances of D{0-1}KP with improved convergence speed.

The rest of the paper is organized as follows: Section 2 describes the core concept of D{0-1}KP with its detailed description and mathematical model. In Section 3, fitness-based acceleration coefficient binary particle swarm optimization for D{0-1}KP (FACBPSO) is presented. Section 4 demonstrates the parameters of the experimental setup. The experimental results and discussions are summarized in Section 5. The paper is concluded in Section 6.

2. The Core Concept Related to Discounted {0-1} KP

The D{0-1}KP is a multidimensional problem [17] in which a set of n item groups N = {0, 1, 2,…, n − 1} having three items 3i, 3i + 1, and 3i + 2 in each group and one knapsack with capacity ‘C’. All of the three items in each group have their value and weight which are specified in Table 1. The purpose of D{0-1}KP is to get maximum profit by choosing the maximum value profit item from the three items from each group to be put into the knapsack so that the overall weight remains less than the knapsack capacity ‘C’.

Table 1.

The Profit and Weight Coefficient.

Where w3i+2 (w3i+2 < w3i + w3i+1) is the discount weight of the third item. In a comparison with the other two items in each group, the third item provides more benefit due to its profit and weight. The third item has the maximum profit and minimum weight when compared with the other two items, that is why the third item in the group is selected to put in the knapsack.

All the profit and weight coefficients, and knapsack capacity ‘C’ may be assumed as positive integers deprived of the loss of generality, and all the weight coefficients will remain less than knapsack capacity C .

2.1. Mathematical Model of D{0-1}KP

The formulation of D{0-1}KP is given below [27]:

where, s3i, s3i+1, and s3i+2 specify whether the items 3i, 3i+1, and 3i+2 are placed in the knapsack. Based on binary decision variables, an item is not put into the knapsack when sk = 0, (k = 0, 1, …, 3n − 1), whereas an item is put into the knapsack when sk = 1. The potential solution of the binary vector S is the feasible solution of D{0-1}KP only when S meets Equations (2) and (3) at the same time.

2.1.1. Greedy Repair Optimization Algorithm

To solve multi-constrained optimization problems two common methods are used to avoid an infeasible solution. The first is the penalty function and the second is the repair function [26].

We can achieve a feasible solution of DKP for any binary vector only if it meets the conditions of Equations (2) and (3). At first, the algorithm ensures that S meets the conditions of Equation (2), which means a maximum of one item from each item group can be packed in the knapsack. After that, the algorithms ensure S meets Equation (3), which means that the weight coefficient of the selected item to put into the knapsack must be less than the knapsack capacity. The Algorithm 1 sorts the items in non-increasing order using quicksort according to their profit and weight ratio. After that, the items with their index values are kept in an array H= [0, 1, 2, …, 3n − 1]. Let flag [0, …, n − 1] be a Boolean array that keeps a record of items from item group q to be placed into the knapsack. When flag[i] =1 this represents that there must be an item to place into the knapsack, whereas when flag[i] = 0 it represents there is no item to place into the knapsack from group j.

| Algorithm 1 Structure of Greedy Repair Algorithm for D{0-1}KP |

| START |

|

| END |

2.1.2. PSO-DRGKP

This section presents a PSO-based Greedy Repair Algorithm 2 to solve D{0-1}KP [26]. Suppose

be the t-th generation population, where

is the i-th generation, the position of the t-th particle is presented because it is the potential solution for PSO-GRDKP.

And in the t-th generation, the velocity of an i-th particle is presented as:

here N is the total number of populations; t > 0 is the total number of iterations; L and U represent the upper and lower bounds of velocity.

The local feasible solution of i-th particle and global feasible solution is represented as:

and

Each particle’s velocity and position are updated using the following equations:

where Rd1 and Rd2 are random numbers, w is the inertia weight, and c1 and c2 are constants known as acceleration coefficients.

| Algorithm 2 Structure of PSO-GRDKP |

| START |

| INPUT: Instance I of D{0-1}KP, N = Population Size, T = Iterations, L = Lower bound, U = Upper bound, W = Inertia Weight, c1, c2 = Acceleration Coefficients. |

|

| END |

3. Fitness-Based Acceleration Coefficient Binary Particle Swarm Optimization Algorithm for DKP (FACBPSO)

The problem with PSO-GRDKP [26] is that it uses fixed acceleration coefficients for all particles in a swarm and the velocity of each particle is updated using the same acceleration coefficients, which leads to slow convergence. To overcome this problem, we have proposed an adaptive acceleration coefficient scheme with BPSO using a greedy repair algorithm called the fitness-based acceleration coefficient binary particle swarm optimization algorithm (FACBPSO) to solve D{0-1}KP. The proposed technique modifies the acceleration coefficients based on the fitness of each particle in terms of fitness rank. A particle with high fitness gets a higher rank, whereas a particle with low fitness is assigned a lower rank and this accelerates its convergence speed and efficiently improves the feasible solution time.

The BPSO adopts a sigmoid function (Sg) to map the velocity to the probability in the range of [0, 1].

The particle’s new position based on Equation (6) is calculated as:

where Rd is a random number chosen for the range of [0, 1].

The acceleration coefficients of the proposed FACBPSO are modified based on the fitness of each particle. The purpose of increasing c1 and decreasing c2 for each particle with higher fitness in the population is to enhance the feasible solution time.

The FACBPSO modifies c1 and c2 adaptively for each particle in terms of fitness rank for each particle as:

where the ‘FR’ is the fitness rank, 1.5, 0.01, and 1 are the empirical constants. The FACBPSO sorts all the particles in the population with respect to their fitness and then a fitness rank is assigned to each particle. The FACBPSO first ranks the particles with a greater fitness value and then later ranks the particles with smaller fitness values. Acceleration coefficients are important parameters that have influenced the performance of the proposed FACBPSO algorithm as compared to the existing algorithms. The proposed FACBPSO has been proved robust and efficient in solving D {0-1} KP with improved convergence time, accurate approximate ratios and feasible solution time.

c1 = 1.5 − (1 − FR)2

c2 = 0.01 × (1 − FR)3

3.1. Procedure of FACBPSO

The algorithm of FACBPSO is presented as:

- Sorting: Sort the items with respect to their profit–weight ratio in descending order. All the sorted items are kept in an array H [0, 1, 2, …, 3n − 1] with their index value.

- Initialization: Initialize the populationrandomly.

- Fitness Calculation: Compute the fitness of every individual utilizing its present position. ,

- while doing (stopping criterion)

- Determine the pbest and gbest with respect to their fitness.

- Apply the greedy repair algorithm to repair the solution.

- Evaluate the fitness of the particles and record the gbest.

- Get the pbest by comparison of the fitness of each particle to its best fitness.

- Get the gbest by comparing of fitness of each particle to its best fitness within the population.

- Sort and rank all particles according to their fitness.

- Calculate the acceleration coefficients for each particle in terms of fitness using Equation (9).

- Update velocity and position of the particles using Equations (5) and (6).

- end while

- Output: The approximate solution G (T): The best results.

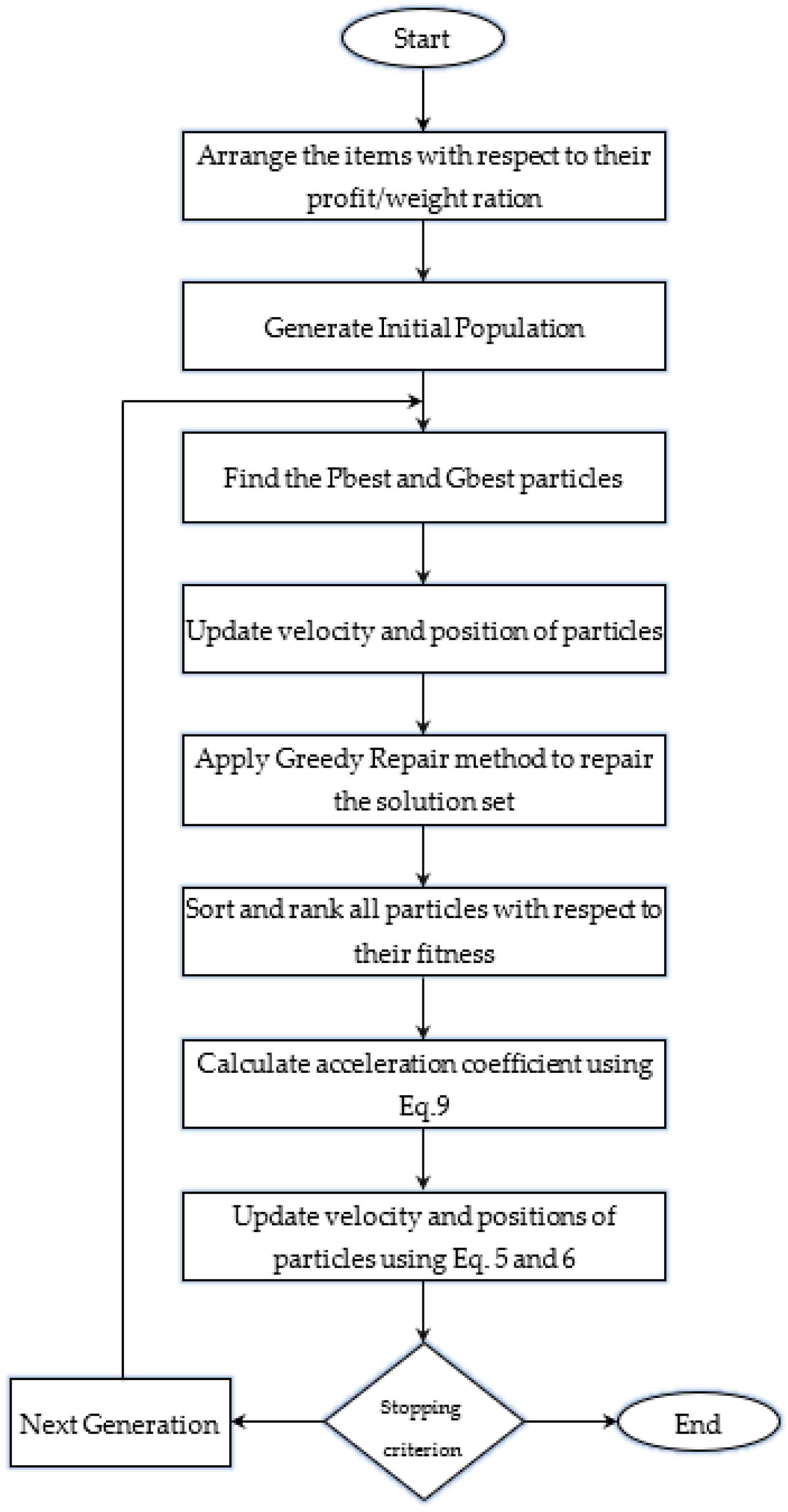

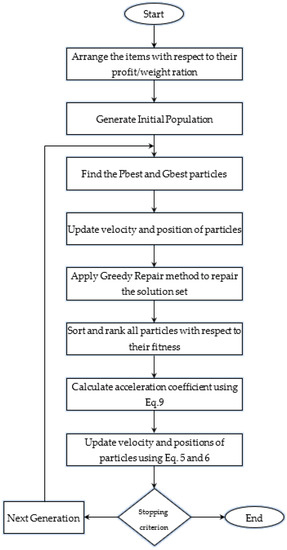

3.2. Flowchart of FACBPSO

Figure 1 describes the flowchart of the proposed FACBPSO algorithm.

Figure 1.

Flowchart of FACBPSO.

4. Experimental Setup

The performance of the proposed algorithm is compared with the binary particle swarm optimization-based greedy repair algorithm for discounted {0-1} KP (PSO-GRDKP) and a new exact algorithm for discounted {0-1} KP (NE-DKP). In this section, the benchmark D{0-1}KP instances and contender algorithms are presented.

4.1. Benchmark D{0-1}KP Instances

This section presents four kinds of large-scale benchmark D{0-1}KP instances used to conduct experiments as given below [26]:

- Uncorrelated Instances (UDKP) used 10 datasets from UDKP1 to UDKP10, in which the value and weight coefficients are not related at all

- Weakly Correlated Instances (WDKP) used 10 datasets from WDKP1 to WDKP10, in which the value and weight coefficients of items are weakly correlated.

- Strongly Correlated Instances (SDKP) used 10 datasets from SDKP1 to SDKP10, in which the value and weight coefficients of items are strongly correlated.

- Inverse Correlated Instances (IDKP) used 10 datasets from IDKP1 to IDKP10, in which the value and weight coefficients of items are inversely correlated.

Each D{0-1}KP instance computes the results using 10 different datasets with different knapsack capacity and dimensions (i.e., 3*n = 300, 600, …, 3000, n = (1, 2, …, 10)).

4.2. Parameters Settings

The parameters used in applied algorithms are presented in Table 2. For a fair comparison, we must use the same parameters for the algorithms under consideration. In PSO-GRDKP, c1 and c2 are kept fixed whereas in our proposed algorithm c1 and c2 are calculated in terms of fitness rank ‘FR’. The population size and number of iterations are the same in both algorithms. Dimensions remain the same for all algorithms ranging from 100 to 1000 from dataset 1 to the last dataset.

Table 2.

Parameter setting.

The benchmark D{0-1}KP instances are utilized to validate and compare the performance of optimization algorithms based on the feasible optimal solution, convergence rate, robustness, precision, and general performance. The performance of the FACBPSO algorithm is evaluated by modifying its acceleration coefficients measured by comparing it with a PSO-GRDKP and a new exact algorithm for D{0-1}KP NEDKP [32] algorithm. All the simulations are conducted in a Dev C++ environment using Haier Intel® CORE m3 with Windows 10.

5. Experimental Results and Discussions

The experimental results of the proposed FACBSPSO algorithm and its contenders PSO-GRDKP and NE-DKP are presented in this section. The performance of the proposed FACBSPSO algorithm is evaluated by conducting experiments on four D{0-1}KP instances over 3*D iterations. Based on the same datasets all algorithms were run 20 times and the average results were reported. AppMean is the mean value, AppStd is the standard deviation and Exetime is the feasible solution time in seconds.

5.1. Comparison of FACBSPSO with PSO-GRDKP and NE-DKP on Four D{0-1}KP Instances

Experimental results are shown in Table 3, Table 4, Table 5 and Table 6, the FACBPSO shows promising results on all instances of D{0-1}KP with a significantly improved convergence rate and feasible solution time compared with the contender algorithms.

Table 3.

Experimental results of feasible solution time on SDKP instances.

Table 4.

Experimental results of feasible solution time on WDKP instances.

Table 5.

Experimental results of feasible solution time on UDKP instances.

Table 6.

Experimental results of feasible solution time on IDKP instances.

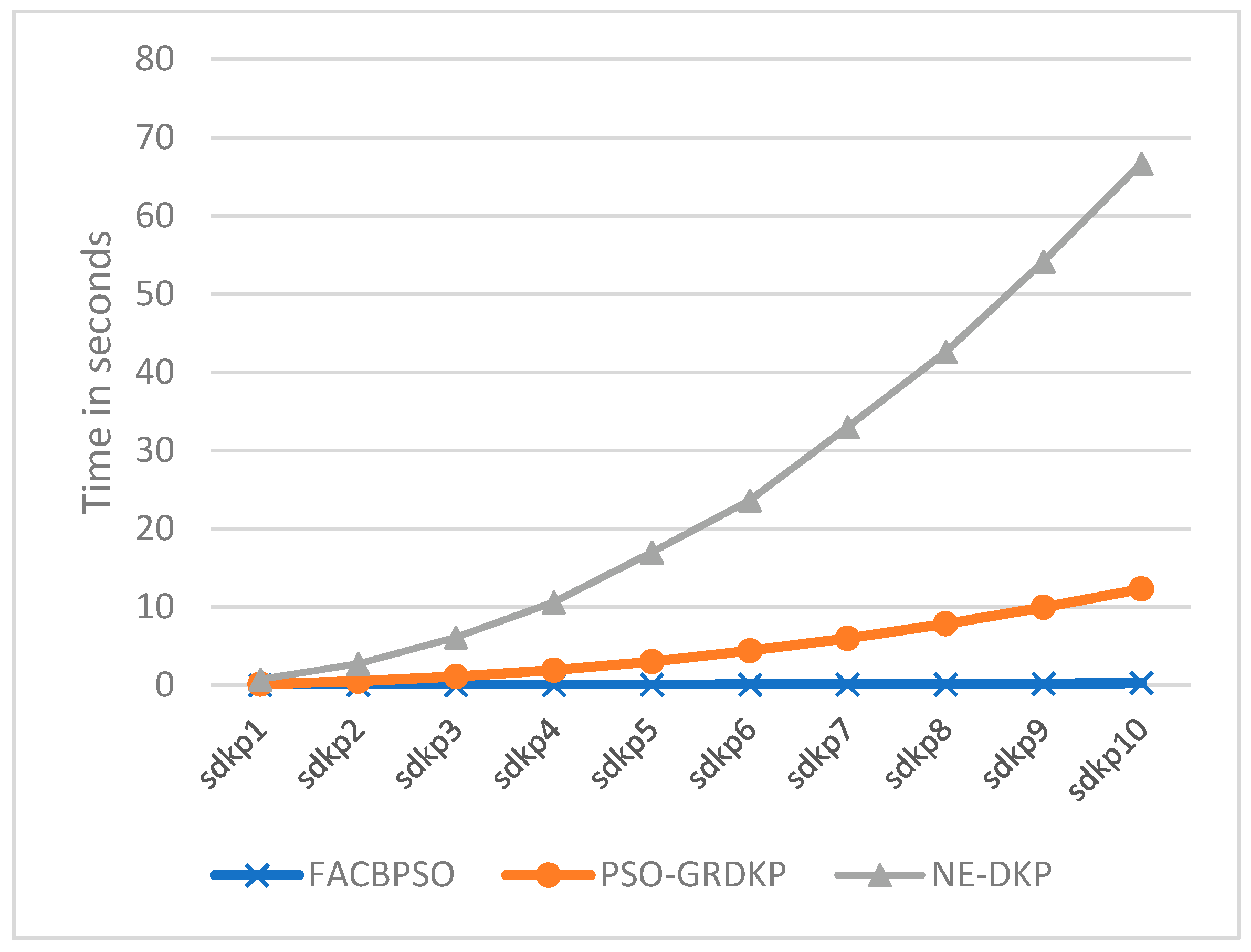

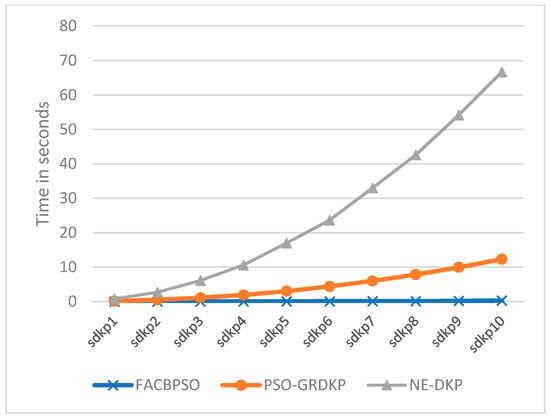

5.1.1. Experimental Results of Strongly Correlated Instances (SDKP)

To evaluate the performance of the proposed technique, experiments were carried out on different instances of DKP. The first experiment was conducted using strongly correlated instances of DKP on its 10 datasets ranging from sdkp1 to sdkp10. The mean, standard deviation, and execution time of each algorithm are presented in Table 3. The experimental results show that the proposed algorithm based on the greedy repair algorithm outperforms the compared techniques. The experimental results show that the FACBPSO has improved the feasible solution time when compared with PSO-GRDKP and NE-DKP on 10 SDKP instances.

Figure 2 demonstrates the feasible solution time in seconds for three compared algorithms. The datasets sdkp1 to sdkp10 are shown on the x-axis and the corresponding execution time is shown the on the y-axis. The results show that the FACBSPSO takes the least feasible solution time and converges quickly. The PSOGR-DKP takes more feasible time than the proposed FACBSPSO, but less feasible solution time compared with NE-DKP. NE-DKP takes more feasible solution time and converges slowly. Hence, from the comparative analysis, we can conclude that the proposed algorithm outperforms the others on all instances of SDKP, even with increased dimensions.

Figure 2.

Comparative analysis of feasible solution time on SDKP instances.

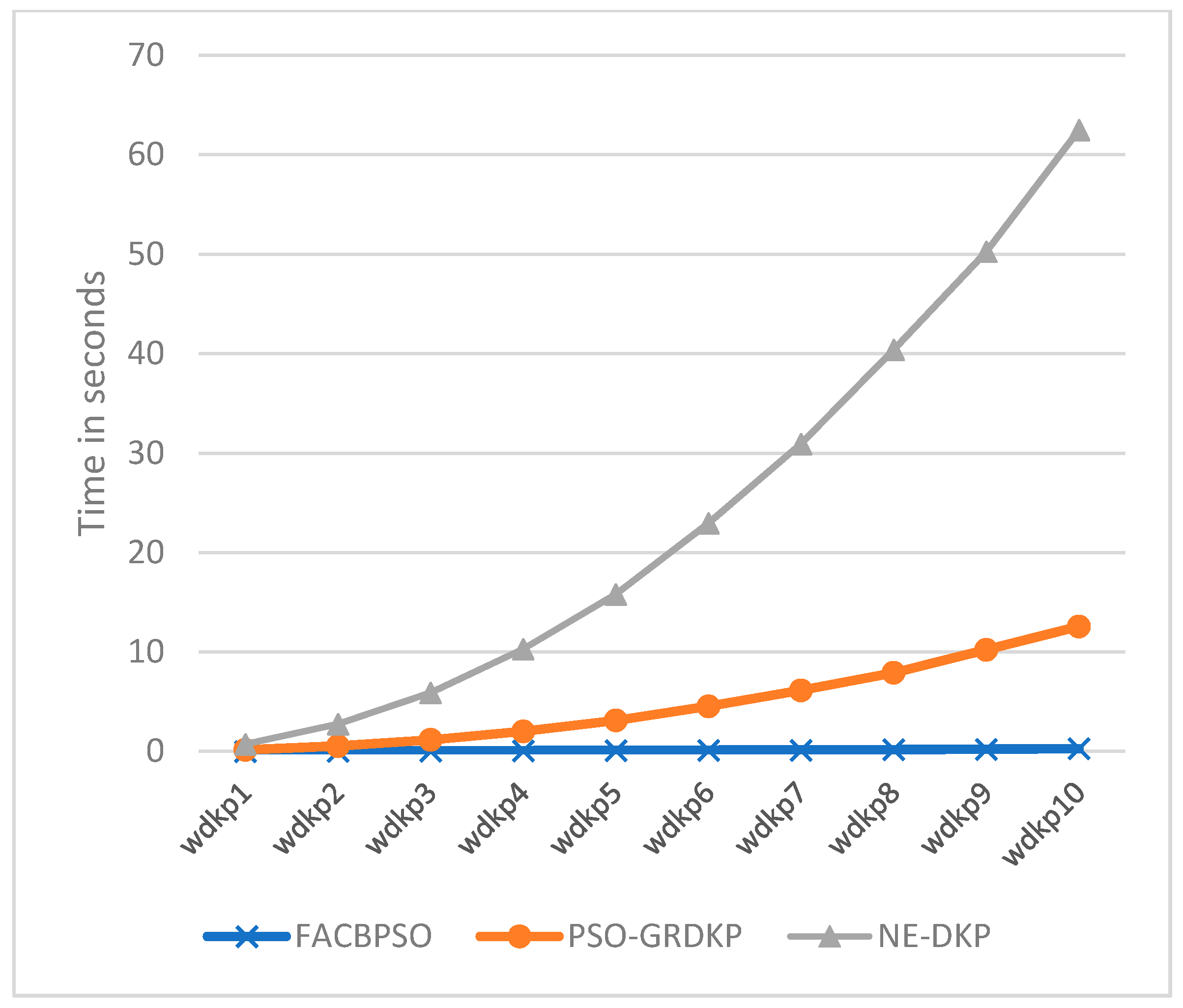

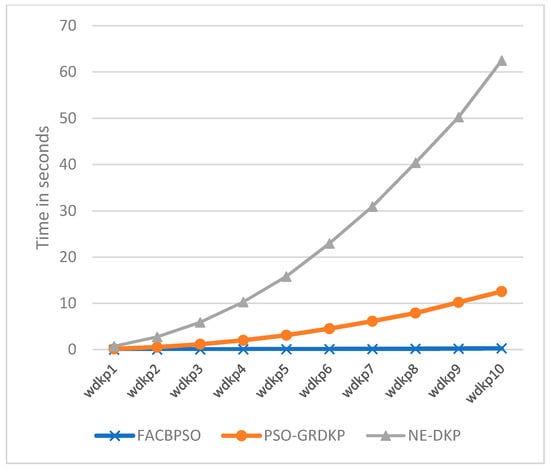

5.1.2. Experimental Results of Weakly Correlated Instances (WDKP)

In weakly correlated instances (WDKP), the value and weight coefficients of items are weakly correlated. The experimental results presented in Table 4 show the mean, standard deviation, and feasible solution time of FACBPSO, PSO-GRDKP, and NEDKP. The results reflect that the proposed algorithm gives better performance with an efficient feasible solution with quick convergence time and more accurate values of approximate ratios.

Figure 3 demonstrates the feasible solution time of three algorithms. The FACBSPSO takes the least feasible solution time and converges quickly when compared with the other algorithms. There is a significant improvement in computation time to solve all the instances of WDKP. Hence, from the comparative analysis, we can conclude that the proposed algorithm outperforms both existing algorithms on 10 WDKP instances.

Figure 3.

Comparative analysis of feasible solution time on WDKP instances.

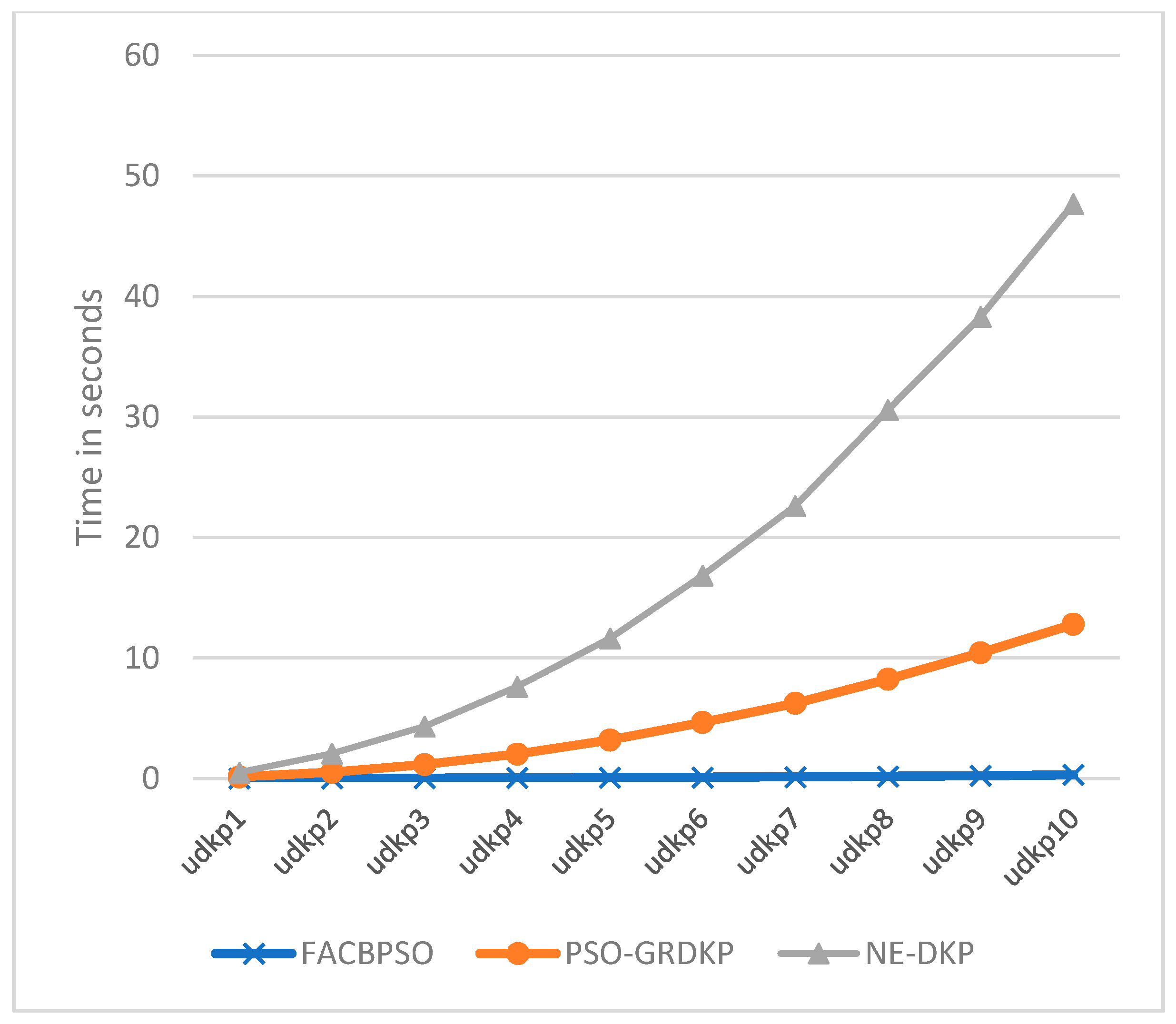

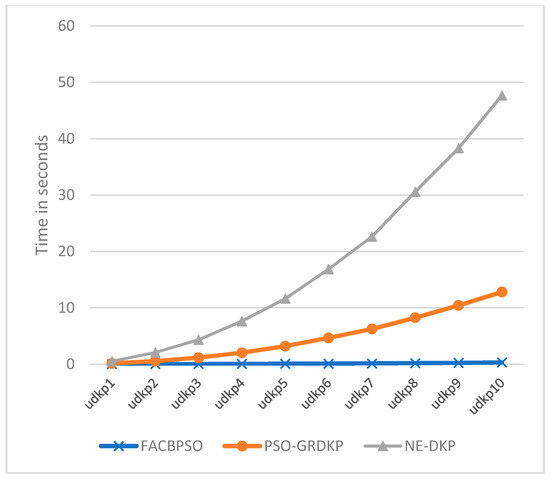

5.1.3. Experimental Results of Uncorrelated Instances (UDKP)

The mean, standard deviation, and the feasible solution time of FACBSPSO, PSOGR-DKP, and NE-DKP are presented in Table 5. As in the instances for UDKP, the weight coefficient and the value of items are not correlated. Therefore, it is a difficult case of D{0-1}KP. The experimental results show that FACBPSO has improved approximate ratios with the least standard deviation and reduced feasible solution time compared with the other methods.

The feasible solution time presented in Figure 4 shows that the FACBPSO has a smaller feasible solution time than the other algorithms. NE-DKP has a much higher feasible solution time than FACBSPO and PSOGR-DKP.

Figure 4.

Comparative analysis of feasible solution time on UDKP instances.

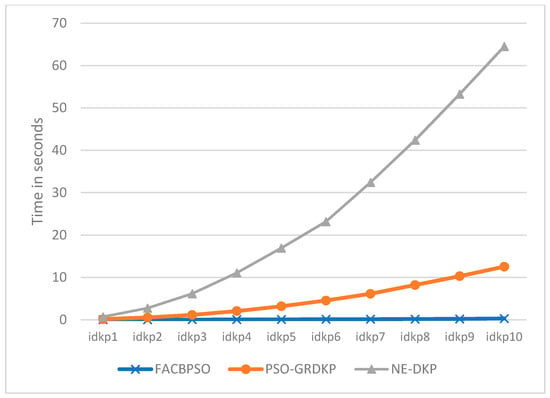

5.1.4. Experimental Results of Inverse Correlated Instances

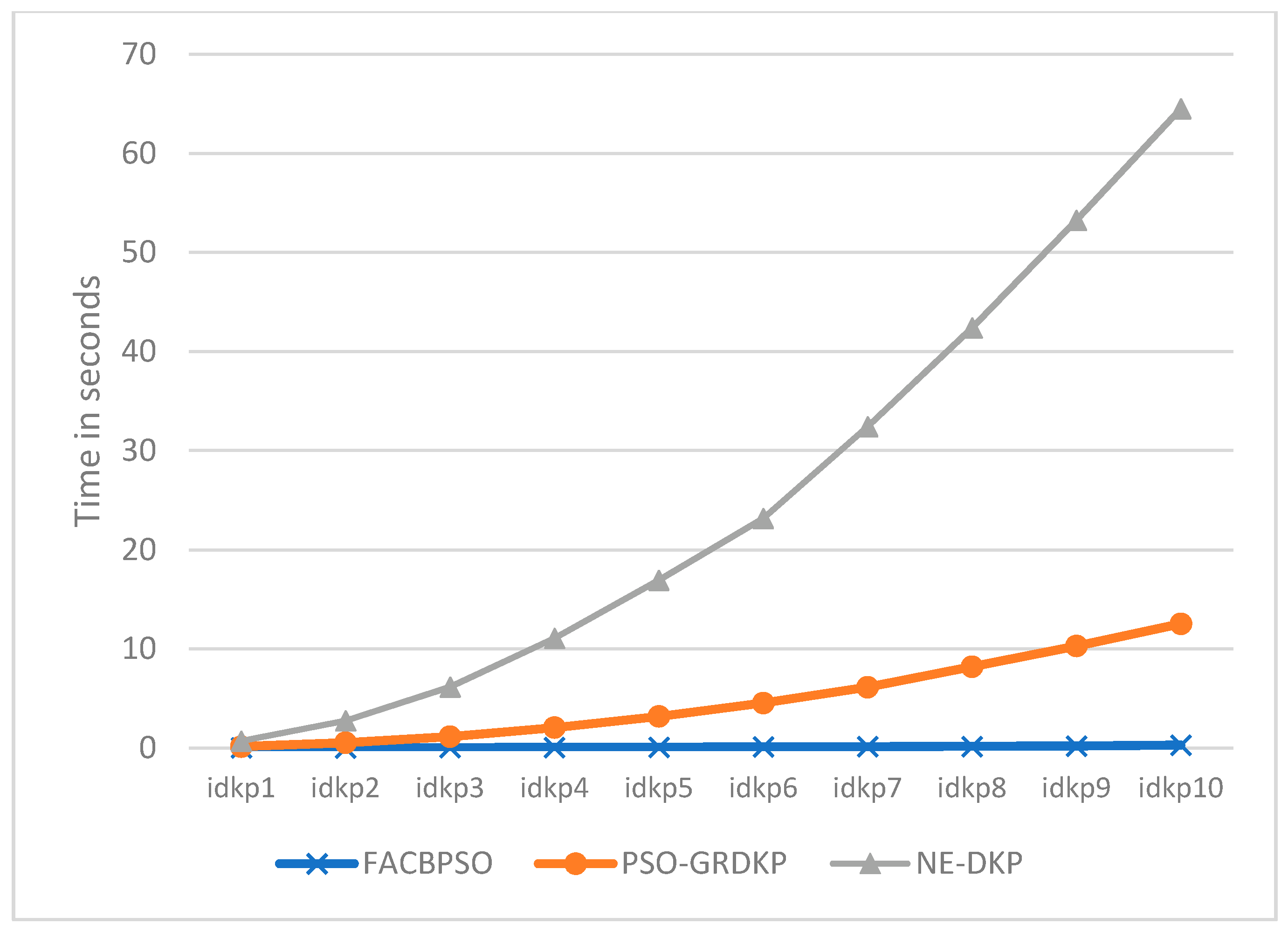

The experimental results presented in Table 6 reflect that, when compared with PSO-GRDKP and NE-DKP, the proposed algorithm shows improved execution time for the dataset of 10 IDKP instances. The results demonstrated that the FACBPSO has a significantly improved feasible solution time, convergence time and approximate ratios on all instances of the IDKP dataset.

Figure 5 demonstrates that among all three compared algorithms, the proposed FACBSPSO takes the least feasible solution time and converges quickly. The PSOGR-DKP takes more feasible time compared with FACBSPSO, whereas NE-DKP takes the longest feasible solution time and converges slowly. Hence, from the comparative analysis, we can conclude that the proposed algorithm outperforms both existing algorithms on 10 IDKP instances.

Figure 5.

Comparative analysis of feasible solution time on IDKP instances.

After analyzing the performance of the proposed and existing algorithms, using 10 values of each instance, we compared the average results of all algorithms (Table 7). The results in Table 7 show that the average feasible solution time of the proposed FACBSPSO is less than for PSOGR-DKP and NE-DKP in all four instances.

Table 7.

Average feasible solution time on all four instances.

6. Conclusions

The discounted {0-1} knapsack problem is a combinatorial and multi-constrained optimization problem, and its objective is to maximize total profit among selected items. As D{0-1}KP is a complex optimization problem that has four instances having 10 datasets of each type with a varying number of dimensions. To address this problem, dynamic programming-based algorithms and evolutionary algorithms have been adopted. As the problem is binary in nature, the binary PSO variant was modified to improve the convergence speed and feasible solution time of D{0-1}KP. The proposed FACBPSO modified the acceleration coefficients in terms of fitness rank. To accelerate the convergence speed, the fitness of each particle is used to modify the cognitive and social components of each particle such that, the particles with high fitness get a higher rank, whereas the particles with low fitness are ranked accordingly. Table 3, Table 4, Table 5 and Table 6 indicate that the proposed FACBSPSO is efficient, exhibiting a better feasible solution time with quick convergence, which makes it more suitable to solve D{0-1}KP efficiently and accurately compared with the existing PSO-GRDKP and NE-DKP methods, by evaluating four D{0-1}KP instances for different dimensions. The experimental results reflect that the proposed FACBPSO also improved the approximate ratios, which makes the proposed algorithm better than the others. The graphical comparison also indicates that the proposed fitness-based BPSO with greedy repair outperforms the existing PSO-GRDKP and NE-DKP. Hence, it is concluded that our proposed fitness-based algorithm with a greedy repair strategy is more suitable to solve D{0-1}KP efficiently and accurately and it could be of interest to researchers who need a quick response decision with the least amount of computation resources.

Author Contributions

Conceptualization, Y.M.; Methodology, M.S., Y.M., M.A. and G.A.A.; Formal analysis, M.S., Y.M. and M.A.; Data curation, A.S. and M.A.; Investigation, M.S.; Writing—original draft, M.S. and Y.M.; Writing—review & editing, M.A. and G.A.A.; Supervision, G.A.A.; Funding acquisition, A.S. and G.A.A. All authors have read and agreed to the published version of the manuscript.

Funding

The authors would like to express their gratitude to the ministry of education and the deanship of scientific research—Najran University—Kingdom of Saudi Arabia for their financial and technical support under code number NU/-/SERC/10/639.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

Not applicable.

Conflicts of Interest

The authors declare no conflict of interest.

References

- Wang, R.; Zhang, Z.; Ng, W.W.; Wu, W. An improved group theory-based optimization algorithm for discounted 0-1 knapsack problem. Adv. Comput. Intell. 2021, 1, 9. [Google Scholar] [CrossRef]

- Abdel-Basset, M.; Mohamed, R.; Mirjalili, S. A Binary Equilibrium Optimization Algorithm for 0–1 Knapsack Problems. Comput. Ind. Eng. 2021, 151, 106946. [Google Scholar] [CrossRef]

- Cho, M. The knapsack problem and its applications to the cargo loading problem. Anal. Appl. Math. 2019, 13, 48–63. [Google Scholar]

- Müller, S.; Al-Shatri, H.; Wichtlhuber, M.; Hausheer, D.; Klein, A. Computation offloading in wireless multi-hop networks: Energy minimization via multi-dimensional knapsack problem. In Proceedings of the 2015 IEEE 26th Annual International Symposium on Personal, Indoor, and Mobile Radio Communications (PIMRC), Hong Kong, China, 30 August–2 September 2015; pp. 1717–1722. [Google Scholar]

- Karaboghossian, T.; Zito, M. Easy knapsacks and the complexity of energy allocation problems in the smart grid. Optim. Lett. 2018, 12, 1553–1568. [Google Scholar] [CrossRef]

- Jacko, P. Resource capacity allocation to stochastic dynamic competitors: Knapsack problem for perishable items and index-knapsack heuristic. Ann. Oper. Res. 2016, 241, 83–107. [Google Scholar] [CrossRef]

- Zhang, X.; Chen, Y. Admissibility and robust stabilization of continuous linear singular fractional order systems with the fractional order α: The 0 <α<1 case. ISA Trans. 2018, 82, 42–50. [Google Scholar]

- Oppong, E.O.; Oppong, S.O.; Asamoah, D.; Abiew, N.A.K. Meta-heuristics approach to knapsack problem in memory management. Asian J. Res. Comput. Sci. 2019, 3, 1–10. [Google Scholar] [CrossRef]

- Tavana, M.; Keramatpour, M.; Santos-Arteaga, F.J.; Ghorbaniane, E. A fuzzy hybrid project portfolio selection method using data envelopment analysis, TOPSIS and integer programming. Expert Syst. Appl. 2015, 42, 8432–8444. [Google Scholar] [CrossRef]

- Zhang, J.-X.; Yang, G.-H. Low-complexity tracking control of strict-feedback systems with unknown control directions. IEEE Trans. Autom. Control 2019, 64, 5175–5182. [Google Scholar] [CrossRef]

- Khan, S.; Li, K.F.; Manning, E.G.; Akbar, M.M. Solving the knapsack problem for adaptive multimedia systems. Stud. Inform. Univ. 2002, 2, 157–178. [Google Scholar]

- Chan, H.; Tran-Thanh, L.; Wilder, B.; Rice, E.; Vayanos, P.; Tambe, M. Utilizing housing resources for homeless youth through the lens of multiple multi-dimensional knapsacks. In Proceedings of the 2018 AAAI/ACM Conference on AI, Ethics, and Society, New Orleans, LA, USA, 1–3 February 2018; pp. 41–47. [Google Scholar]

- Alfares, H.K.; Alsawafy, O.G. A Least-Loss Algorithm for a Bi-Objective One-Dimensional Cutting-Stock Problem. Int. J. Appl. Ind. Eng. (IJAIE) 2019, 6, 1–19. [Google Scholar] [CrossRef]

- Du, Y.; Xu, F. A hybrid multi-step probability selection particle swarm optimization with dynamic chaotic inertial weight and acceleration coefficients for numerical function optimization. Symmetry 2020, 12, 922. [Google Scholar] [CrossRef]

- Wang, R.; Zhang, Z. Set Theory Based Operator Design in Evolutionary Algorithms for Solving Knapsack Problems. IEEE Trans. Evol. Comput. 2021, 25, 1133–1147. [Google Scholar] [CrossRef]

- Kellerer, H.; Pferschy, U.; Pisinger, D. Multidimensional knapsack problems. In Knapsack Problems; Springer: Berlin/Heidleberg, Germany, 2004; pp. 235–283. [Google Scholar]

- Guldan, B. Heuristic and Exact Algorithms for Discounted Knapsack Problems. Master’s Thesis, University of Erlangen-Nürnberg, Erlangen, Germany, 2007. [Google Scholar]

- Rong, A.; Figueira, J.R.; Klamroth, K. Dynamic programming based algorithms for the discounted {0–1} knapsack problem. Appl. Math. Comput. 2012, 218, 6921–6933. [Google Scholar] [CrossRef]

- Saraç, T.; Sipahioglu, A. A genetic algorithm for the quadratic multiple knapsack problem. In International Symposium on Brain, Vision, and Artificial Intelligence; Springer: Berlin/Heidleberg, Germany, 2007; pp. 490–498. [Google Scholar]

- He, Y.; Zhang, X.; Li, W.; Li, X.; Wu, W.; Gao, S. Algorithms for randomized time-varying knapsack problems. J. Comb. Optim. 2016, 31, 95–117. [Google Scholar] [CrossRef]

- Ren, Z.-G.; Feng, Z.-R.; Zhang, A.-M. Fusing ant colony optimization with Lagrangian relaxation for the multiple-choice multidimensional knapsack problem. Inf. Sci. 2012, 182, 15–29. [Google Scholar] [CrossRef]

- Li, Y.; He, Y.; Liu, X.; Guo, X.; Li, Z. A novel discrete whale optimization algorithm for solving knapsack problems. Appl. Intell. 2020, 50, 3350–3366. [Google Scholar] [CrossRef]

- Wilbaut, C.; Todosijevic, R.; Hanafi, S.; Fréville, A. Heuristic and exact fixation-based approaches for the discounted 0-1 knapsack problem. arXiv 2021, arXiv:2106.03438. [Google Scholar]

- Wu, C.; Zhao, J.; Feng, Y.; Lee, M. Solving discounted {0-1} knapsack problems by a discrete hybrid teaching-learning-based optimization algorithm. Appl. Intell. 2020, 50, 1872–1888. [Google Scholar] [CrossRef]

- Mehmood, Y.; Shahzad, W. An accelerated convergent particle swarm optimizer (ACPSO) of multimodal functions. Intell. Autom. Soft Comput. 2019, 25, 91–103. [Google Scholar] [CrossRef]

- He, Y.-C.; Wang, X.-Z.; He, Y.-L.; Zhao, S.-L.; Li, W.-B. Exact and approximate algorithms for discounted {0-1} knapsack problem. Inf. Sci. 2016, 369, 634–647. [Google Scholar] [CrossRef]

- Feng, Y.-H.; Wang, G.-G. Binary moth search algorithm for discounted {0-1} knapsack problem. IEEE Access 2018, 6, 10708–10719. [Google Scholar] [CrossRef]

- Feng, Y.; Wang, G.-G. A binary moth search algorithm based on self-learning for multidimensional knapsack problems. Future Gener. Comput. Syst. 2022, 126, 48–64. [Google Scholar] [CrossRef]

- Feng, Y.; Wang, G.-G.; Li, W.; Li, N. Multi-strategy monarch butterfly optimization algorithm for discounted {0-1} knapsack problem. Neural Comput. Appl. 2018, 30, 3019–3036. [Google Scholar] [CrossRef]

- Yang, Y.; Dazhi, P.; Yi, L.; Dailun, T. New simplified model of discounted {0-1} knapsack problem and solution by genetic algorithm. J. Comput. Appl. 2019, 39, 656. [Google Scholar]

- Zhu, H.; He, Y.; Wang, X.; Tsang, E.C. Discrete differential evolutions for the discounted {0-1} knapsack problem. Int. J. Bio-Inspired Comput. 2017, 10, 219–238. [Google Scholar] [CrossRef]

- Zhou, H.; Wei, X. Particle swarm optimization based on a novel evaluation of diversity. Algorithms 2021, 14, 29. [Google Scholar] [CrossRef]

- Gómez-Montoya, R.A.; Cano, J.A.; Cortés, P.; Salazar, F. A discrete particle swarm optimization to solve the put-away routing problem in distribution centres. Computation 2020, 8, 99. [Google Scholar] [CrossRef]

- Mehmood, Y.; Sadiq, M.; Shahzad, W.; Amin, F. Fitness-based acceleration coefficients to enhance the convergence speed of novel binary particle swarm optimization. In Proceedings of the 2018 International Conference on Frontiers of Information Technology (FIT), Islamabad, Pakistan, 17–19 December 2018; pp. 355–360. [Google Scholar]

- Cipriani, E.; Fusco, G.; Patella, S.M.; Petrelli, M. A particle swarm optimization algorithm for the solution of the transit network design problem. Smart Cities 2020, 3, 541–555. [Google Scholar] [CrossRef]

- Kiani, A.T.; Nadeem, M.F.; Ahmed, A.; Khan, I.A.; Alkhammash, H.I.; Sajjad, I.A. An Improved Particle Swarm Optimization with Chaotic Inertia Weight and Acceleration Coefficients for Optimal Extraction of PV Models Parameters. Energies 2021, 14, 2980. [Google Scholar] [CrossRef]

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations. |

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).