1. Introduction

Deep learning has revolutionized a wide range of computer vision tasks like image classification, image segmentation, and object detection [

1,

2]. However, despite these significant advancements, overfitting remains a challenge [

3]. The data distribution shifts between the training set and test set may cause model degradation. This is also particularly exacerbated when working with limited labeled data or with corrupted data. Numerous mitigation strategies have been proposed, and among these, data augmentation has proven to be remarkably effective [

4,

5]. Data augmentation techniques increase the diversity of training data by applying various transformations to input images in the model training. The model can be trained with a wider slice of the underlying data distribution, which improves model generalization and robustness to unseen inputs. Of particular interest among these techniques are mixup-based methods, which create synthetic training examples through the combination of pairs of training examples and their labels [

6].

Subsequent to mixup, an array of innovative strategies were developed which go beyond the simple linear weighted blending of mixup, and instead apply more intricate ways to fuse image pairs. Notable among these are CutMix and FMix methods [

7,

8]. The CutMix technique [

7] formulates a novel approach where parts of an image are cut and pasted onto another, thereby merging the images in a region-based manner. On the other hand, FMix [

8] applies a binary mask to the frequency spectrum of images for fusion, hence achieving an enhanced mixup process that can take on a wide range of mask shapes, rather than just the square mask in CutMix. These methods have been successful in preserving local spatial information while introducing more extensive variations into the training data.

While mixup-based methods have shown promising results, there remains ample room for innovation and improvement. These mixup techniques utilize little to no prior knowledge, which simplifies their integration into training pipelines and incurs only a modest increase in training costs. To further enhance performance, some methodologies have leveraged intrinsic image features to boost the impact of mixup-based methods [

9]. Recently, following this approach, some methods employ the model-generated feature to guide image mixing [

10]. Furthermore, some researchers have also incorporated image labels and model outputs in the training process as prior knowledge, introducing another dimension to improve these methods’ performance [

11]. The utilization of these methods often introduces a considerable increase in training costs to extract the prior knowledge and construct a mixing mask dynamically. This added complexity not only impacts the speed and efficiency of the training process but can also act as a barrier to deployment in resource-constrained environments. Despite their theoretical simplicity, in practice, these methods might pose integration challenges. The necessity to adjust the existing pipeline to accommodate these techniques could complicate the training process and hinder their adoption in a broader range of applications. Given this, we are driven to ponder an important question about the evolution of mixed sample data augmentation methods: How can we fully unleash the potential of MSDA while avoiding extra computational cost and facilitating seamless integration into existing training pipelines?

Considering the RandAugment [

4] and other image augmentation policies, we are actually applying multiple layers of data augmentation to the input images, and those works have shown that a multi-layered and diversified data augmentation strategy can significantly improve the generalization and performance of deep learning models. The work RandomMix [

12] starts ensembling the MSDA methods by randomly choosing one from a set of methods. However, by restricting to only one mixing mask being applied, RandomMix imposes some unnecessary limitations. Firstly, the variety of mixing methods can be highly improved if multiple mask methods can be applied together. Secondly, the diversity of possible mixing shapes can be greater if we can further augment the mixing masks. Thirdly, we draw insights from AUGMIX, an innovative approach that applies different random sampled augmentations on the same input image and mixes those augmented images. With the help of customized loss function design, it achieved substantial improvements in robustness. Inspired by this, we propose to remove a limitation in conventional MSDA methods and allow a sample to mix with itself with an assigned probability. It is essential to note that during this mixing process, the input data must undergo two distinct random data augmentations.

In this paper, we propose the MiAMix: Multi-layered Augmented Mixup. MiAMix alleviates the previously mentioned restrictions. Our contributions can be summarized as follows:

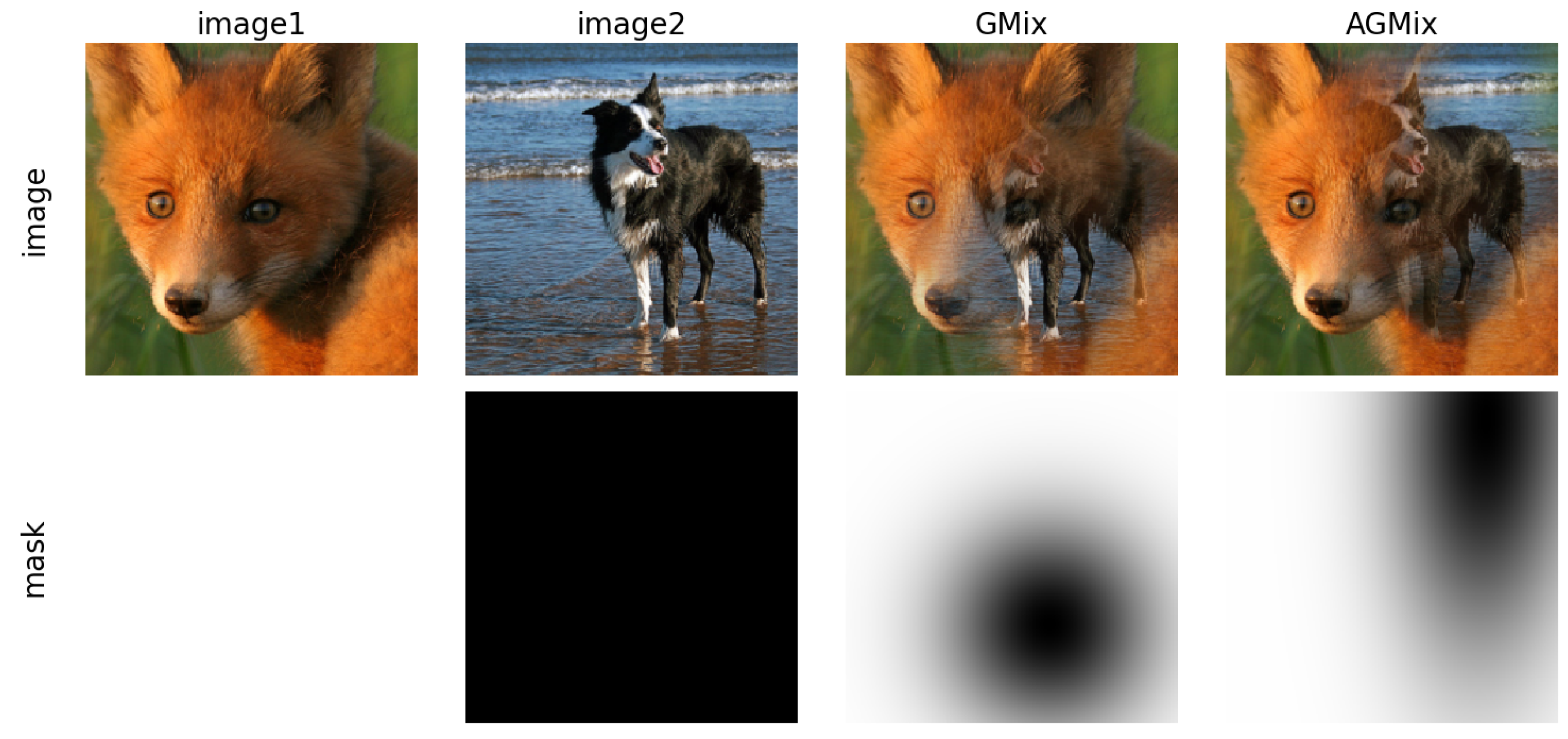

We firstly revisit the design of GMix [

13], leading to an augmented form called AGMix. This novel form fully capitalizes the flexibility of the Gaussian kernel to generate a more diversified mixing output.

A novel sampling method of the mixing ratio is designed for multiple mixing masks.

We define a new MSDA method with multiple stages: random sample paring, mixing methods and ratios sampling, generation and augmentation of mixing masks, and finally, the mixed sample output stage. We consolidate these stages into a comprehensive framework named MiAMix and establish a search space with multiple hyper-parameters.

To assess the performance of our proposed AGmix and MiAMix method, we conducted a series of rigorous evaluations across CIFAR-10/100, and Tiny-ImageNet [

14] datasets. The outcomes of these experiments substantiate that MiAMix consistently outperforms the leading mixed sample data augmentation methods, establishing a new benchmark in this realm. In addition to measuring the generalization performance, we also evaluated the robustness of our model in the presence of natural noises. The experiments demonstrated that the application of RandomMix during training considerably enhances the model’s robustness against such perturbations. Moreover, to scrutinize the effectiveness of our multi-stage design, we implemented an extensive ablation study using the ResNet18 [

1] model on the Tiny-ImageNet dataset.

2. Related Works

Mixup-based data augmentation methods have played an important role in deep neural network training [

15]. Mixup generates mixed samples via linear interpolation between two images and their labels [

6]. The mixed input

and label

are generated as:

where

,

are raw input vectors.

where

,

are one-hot label encodings.

and

are two examples drawn at random from our training data, and

. The

, for

. Following the development of Mixup, an assortment of techniques has been proposed that focuses on merging two images as part of the augmentation process. Among these, CutMix [

7] has emerged as a particularly compelling method.

In the CutMix approach, instead of creating a linear combination of two images as Mixup does, it generates a mixing mask with a square-shaped area, and the targeted area of the image is replaced by corresponding parts from a different image. This method is considered a cutting technique due to its method of fusing two images. The cutting and replacement idea has also been used in FMix [

8] and GridMix [

16].

The paper [

13] unified the design of different MSDA masks and proposed GMix. The Gaussian Mixup (GMix) generates mixed samples by combining two images using a Gaussian mixing mask. GMix first randomly selects a center point

c in the input image. It then generates a Gaussian mask centered at

c, where the mask values follow:

where

is set based on the mixing ratio

and image size

N as

This results in a smooth Gaussian mix of the two images, transitioning from one image to the other centered around the point

c. There are numerous other outstanding works in the realm of MSDA that we cannot enumerate exhaustively. In

Table 1, we have summarized some of the most representative MSDA methods and those that will be related to our subsequent experiments.

4. Results

In order to examine the benefits of MiAMix, we conduct experiments on fundamental tasks in image classification. Specifically, we chose the CIFAR-10, CIFAR-100, and Tiny-ImageNet datasets for comparison with prior work. We replicate the corresponding methods on all those datasets to demonstrate the relative improvement of employing this method over previous mixup-based methods.

4.1. Tiny-ImageNet, CIFAR-10, and CIFAR-100 Classification

For our image classification experiments, we utilize the Tiny-ImageNet [

14] dataset, which consists of 200 classes with 500 training images and 50 testing images per class. Each image in this dataset has been downscaled to a resolution of

pixels. We also evaluate our methods (AGMix and MiAMix) against those mixing methods on CIFAR-10 and CIFAR-100 datasets. The CIFAR-10 dataset consists of 60,000 32 × 32 pixel images distributed across 10 distinct classes, and the CIFAR-100 dataset, mirrors the structure of the CIFAR-10 but encompasses 100 distinct classes, each holding 600 images. Both datasets include 50,000 training images and 10,000 for testing.

Training is performed using the ResNet-18 and ResNeXt-50 network architecture over the course of 400 epochs, with a batch size of 100. Our optimization strategy employs Stochastic Gradient Descent (SGD) with a momentum of 0.9 and weight decay set to . The initial learning rate is set to 0.1 and decays according to a cosine annealing schedule.

In our investigation of various mixup methods, we select a set of methods . Each of these methods was given a weight, represented as a vector . The mixing parameter, , was set to 1 throughout the experiments.

As shown in

Table 3, we compare the performance and training cost of several MSDA methods. The training cost is measured as the ratio of the training time of the method to the training time of the vanilla training. From the results, it is clear that our proposed method, MiAMix, shows a state-of-the-art performance among those low-cost MSDA methods. The test results even surpass the AutoMix, which embeds the mixing mask generation into the training pipeline to take more advantage of injecting dynamic prior knowledge into the sample mixing. Notably, the MiAMix method only incurs an 11% increase in training cost over the vanilla model, making it a cost-effective solution for data augmentation. In contrast, the AutoMix takes approximately 70% more training costs.

4.2. Experiment on Image Transformer Model

Image transformers [

20,

21] have reshaped the landscape of computer vision by achieving remarkable performance across a wide range of tasks. Given their structural differences from traditional CNN architectures, it is crucial to ensure that the data augmentation methods, especially the mixed-sample ones, generalize well with them. In this section, we present an evaluation of various mixed-sample data augmentation (MSDA) methods on the CIFAR-100 dataset using the DeiT-S Image Transformer Model. The models are trained from scratch in 200 epochs, with a batch size of 100. The Adam optimizer is applied with learning rate warm-up and decay.

As shown in

Table 4, the incorporation of MSDA methods offers substantial improvements. Among the methods listed, MiAMix stands out by achieving a Top-1 accuracy of

. MiAMix demonstrates its robustness and adaptability when paired with the transformer architecture. In contrast to other mixup variants, a shift in architecture tends to induce more pronounced discrepancies in performance outcomes. This is particularly impressive, considering that transformers have intricacies that are different from traditional CNNs, emphasizing MiAMix’s wide applicability and effectiveness.

4.3. Transfer Learning

Transfer learning is a prevalent technique in modern deep learning practices. In our experiments, we utilized the CUB-200 [

22] dataset, which comprises 11,788 images spanning 200 bird subcategories, with a division of 5994 for training and 5794 for testing. Images are presented at a resolution of

.

We employed a ResNet-18, which was pre-trained on the ImageNet-1k dataset, as our initialization checkpoint. The training was conducted over 200 epochs using the SGD optimizer with a learning rate of , momentum set to 0.9, and a batch size of 32.

From the results showcased in

Table 5, MiAMix demonstrates commendable efficacy even under conditions of limited data availability. Moreover, its performance under the transfer learning paradigm further underscores its robustness and adaptability across diverse learning scenarios.

4.4. Robustness

To assess robustness, we set up an evaluation on the CIFAR-100-C dataset, explicitly designed for corruption robustness testing and providing 19 distinct corruptions such as noise, blur, and digital corruption. Our model architecture and parameter settings used for this evaluation are consistent with those applied to the original CIFAR-100 dataset in our above experiments. According to

Table 6, our proposed MiAMix method demonstrated exemplary performance, achieving the highest accuracy. This provides compelling evidence that our multi-stage and diversified mixing approach contributes significantly to the improvement of model robustness.

4.5. Ablation Study

The MiAMix method involves multiple stages of randomization and augmentation, which introduce many parameters into the process. It is essential to clearly articulate whether each stage is necessary and how much it contributes to the final result. Furthermore, understanding the influence of each major parameter on the outcome is also crucial. To further demonstrate the effectiveness of our method, we conducted several ablation experiments on the CIFAR-10, CIFAR-100-C, and Tiny-ImageNet datasets.

4.5.1. GMix, AGMix, and Mixing Mask Augmentation

A particular comparison of interest is between the GMix and our augmented version, AGMix, in

Table 3 and

Table 6. The primary difference between these two methods lies in the inclusion of additional randomization in the Gaussian Kernel. The experiment results reveal that this simple yet effective augmentation strategy indeed brings about a significant improvement in the performance of the mixup method across all three datasets and one corrupted dataset, despite maintaining almost the same training cost as GMix. As the results in

Table 7 illustrate, the introduction of various forms of augmentation progressively improves model performance. These experiment results underscore the importance and effectiveness of augmenting mixing masks during the training process; furthermore, they validate the approach taken in the design of our MiAMix method.

4.5.2. The Effectiveness of Multiple Mixing Layers

The data presented in

Table 8 demonstrates the substantial impact of multiple mixing layers on the model’s performance. As the table shows, a discernible improvement in Top-1 accuracy is observed when more layers of masks are added, emphasizing the effectiveness of this approach in enhancing the diversity and complexity of the training data. Most notably, the model’s performance is further amplified when the number of layers is not constant but rather sampled randomly from a set of values, as indicated by the bracketed entries in the table. This observation suggests that introducing variability into the number of mixing layers could potentially be an effective approach for extracting more comprehensive and robust features from the data.

However, it is important to note that there are diminishing returns as we further increase the layers of mixing. The experimental results reveal certain limitations; particularly, an overly diversified mixing mask does not always guarantee significant performance enhancements. As the number of layers continues to grow, the increase in Top-1 accuracy begins to plateau. This phenomenon might be attributed to the potential over-complexification of the data, which may inadvertently introduce noise or ambiguities detrimental to the learning process. Therefore, based on our findings, it seems prudent to adopt a balanced approach, where the optimal configuration employs a mix of one or two layers with a sampling weight of [0.5, 0.5]. This setup offers a judicious blend of diversity and clarity, ensuring the model extracts meaningful patterns without being overwhelmed by excessive variability.

4.5.3. The Effectiveness of MSDA Ensemble

In the study, the ensemble’s efficacy was tested by systematically removing individual mixup-based data augmentation methods from the ensemble and observing the impact on Top-1 accuracy. The results, as shown in

Table 9, clearly exhibit the vital contributions each method provides to the overall performance. Eliminating any single method from the ensemble led to a decrease in accuracy, underscoring the value of the diverse mixup-based data augmentation techniques employed. This demonstrates the strength of our MiAMix approach in harnessing the collective contributions of these diverse techniques, optimizing their integration, and achieving superior performance results.

Furthermore, it is noteworthy to mention the consistent performance trends observed across both Tiny-ImageNet and CIFAR-10 datasets. Despite their intrinsic differences, the similar patterns of accuracy drop upon the removal of individual methods, highlight the strong transferability of our MiAMix ensemble approach. This consistency is particularly encouraging as it suggests that the benefits reaped from integrating diverse mixup-based data augmentation techniques are not bound to a particular dataset. Rather, when the dataset changes, the ensemble still maintains its robustness and performance. Such strong transferability is invaluable, allowing for the seamless application of our approach across different tasks and datasets without the need for extensive retuning or adaptation. This underscores the versatility and broad applicability of the MiAMix ensemble in real-world machine-learning scenarios.

4.5.4. Comparison between Mask Merging Methods and Mixing Ratio Merging Methods

As shown in

Table 10, the combination of multiplication for mask merging and the “out” method for

merging yields the highest accuracy for both Top-1 (67.95%) and Top-5 (87.26%). On the other hand, when using the sum operation for mask merging or reusing the original

(the “orig” method), the performance degrades. This suggests that reusing the original

might not provide a sufficiently adaptive mixing ratio for the model’s learning process. Moreover, compared with the multiplication operation, the lower flexibility of the sum operation does impede the performance. These results reaffirm the superiority of the (mul, out) method in our multi-stage data augmentation framework.

4.5.5. The Effectiveness of Mixing with an Augmented Version of the Image Itself

In our experiments, we also explore the concept of self-mixing, which refers to a particular case where an image does not undergo the usual mixup operation with another randomly paired image but instead blends with an augmented version of itself. This process can be controlled by the self-mixing ratio, denoting the percentage of images subject to self-mixing.

Table 11 showcases the impact of the self-mixing ratio on the classification accuracy of both CIFAR-100 and CIFAR-100-C datasets when employing the ResNeXt-50 model. The results illustrate a notable trend: a 10% self-mixing ratio leads to improvements in the classification performance, especially on the CIFAR-100-C dataset, which consists of corrupted versions of the original images. The improvement in CIFAR-100-C indicates that self-mixing contributes significantly to the model’s robustness against various corruptions and perturbations. By incorporating self-mixing, our model becomes exposed to a form of noise, thereby mimicking the potential real-world scenarios more effectively and enhancing the model’s ability to generalize. The noise introduced via self-mixing could be viewed as another unique variant of the data augmentation, further justifying the importance of diverse augmentation strategies in improving the performance and robustness of the model.

4.5.6. Parameter

The role of is pivotal in determining the mixing ratio, as it governs the sampling of from the Beta or Dirichlet Distribution. Specifically:

Impact on Mixing: A larger will lead to more “extreme" mixing ratios more often. This means that, on average, the mixed samples will look more like one of the original images than a 50–50 mix. Conversely, a smaller generally tends to produce mixed samples that are closer to an even blend of the two original images.

Special Case of : An set to 1 implies uniform sampling of over the interval [0, 1].

The results presented in

Figure 3 offer several insights into the behavior of MiAMix with respect to the hyperparameter

. Firstly, it is evident that MiAMix performs optimally with

as the default across various datasets. This showcases the method’s inherent flexibility and adaptability.

Another key observation is the method’s good transferability across different datasets when the underlying model architectures are similar. To evaluate the transferability of the optimal hyperparameters found on Tiny-ImageNet with the ResNet-18 model, we use the same on the CIFAR-100 dataset with ResNet-18 and ResNext-50 models. Such a trait is highly desirable, especially when deploying models in different datasets without the need for extensive retuning.

However, when switching between fundamentally different model architectures, like the Vision Transformer (ViT) and traditional Convolutional Neural Networks (CNNs), there is a slight divergence in optimal values. Due to the inherent differences between these two architectures, our experiments suggest that ViT requires a larger value.

In the context of transfer learning, a more conservative is favorable. Specifically, an value nearing seems to yield superior results. This preference toward mixing samples enables the generation of a more diverse dataset, particularly valuable when the available data are limited.

In summary, the model exhibits a moderate level of sensitivity to the parameter, yet its transferability remains well within a manageable range. This emphasizes the method’s strong generalizability.

4.6. MiAMix vs. Other MSDA Methods

In this work, we have also conducted a comprehensive comparison between MiAMix and other prevalent MSDA methods, as detailed in

Table 12. The first comparison lies in the manner of mixing, which generally falls under three primary categories: linear interpolation, cropping of images, and the ensemble of MSDA approaches adopted by MiAMix. Secondly, we summarized the improvements for each method and examined them across different experimental settings. Moreover, the training cost is added to the comparison as well. It is essential to highlight the significance of concurrent execution of data preparation on the CPU and GPU computation. For instance, while methods like AutoMix show promise in terms of performance, their reliance on GPU-intensive operations, such as feature extraction for mask generation, inherently lengthens the training process. Contrarily, methods designed for parallel execution, akin to MiAMix, might seem computationally intensive, but due to the design, the real-world overhead is negligible. Based on that comparison and experiments, our experiments shed light on the strengths and potential pitfalls of each approach. MiAMix offers considerable improvements across scenarios but demands more work on parameter tuning, highlighting the need for a balance between performance and ease of use. Therefore, in the following section, we will delve deeper into this topic to assist readers in effectively applying this method to their applications.

4.7. Delving into Parameter Tuning

While

Table 2 enumerates several tunable parameters for our MiAMix method, we have previously detailed the effectiveness of

,

M,

, and

in the ablation studies. Additionally, we have conducted an in-depth evaluation and analysis focusing specifically on the parameters

and

M in terms of their transferability across different tasks and model architectures in the ablation studies. Our findings suggest that, when transitioning between tasks with similar objectives or employing models with similar architecture, the need for extensive parameter tuning is limited. The MiAMix method exhibits a commendable degree of stability in these scenarios, often achieving satisfactory results with minimal adjustments to parameters. In this section, our attention shifts to the

W,

, and

parameters. Furthermore, we will give some results on different mixing mask augmentation methods and augmentation levels.

For the sampling weight

W, on datasets like Tiny-ImageNet, CIFAR-10, and CIFAR-100 with the Resnet model, we identified the optimal ratio as

for methods

. There exists a relatively straightforward approach for tuning these weights. One can preliminarily evaluate the impact of each candidate method on the final model’s performance. If a specific method significantly enhances performance, it is advisable to amplify its weight. Conversely, if its improvement is marginal, one might allocate a lesser weight or even set it to zero. For instance, compared to traditional CNNs, the DeiT transformer-based model excels in capturing long-range pixel interactions. Consequently, in our tests, the MixUp technique did not manifest a pronounced boost. However, methods like CutMix, which prioritize the understanding of adjacent pixels, proved to be of greater assistance. As illustrated in

Table 10, we also noted that FMix’s performance was subpar, leading us to ultimately select a weight distribution of [1, 3, 0, 1, 1].

The experimental results presented in

Table 13 offer intriguing insights into the interplay between

and

on the performance of ResNet-18 on the Cifar-10 dataset. When

increases (i.e., when there is more emphasis on the application of the Gaussian filter for mask smoothing), the performance peaks at

, regardless of the value of

. On the other hand, for the parameter

, which controls the probability of applying rotation and distortion augmentation to the mixing mask, a value of 0.25 in combination with

yields the highest Top-1 accuracy of

. The method requires a lower probability of shape augmentation

primarily because Fmix can already generate highly flexible masks, and the application of multiple layers of mixing masks inherently presents a diversified shape. To harness the full potential of the method, the result suggests that applying a moderate level of augmentation and smoothing on the mixing mask offers the best performance.

For our mixing mask augmentation, especially when considering rotation, we set the maximum rotation angle to range from to . This range essentially covers all the orientations. For the smoothing process, Gaussian blurring is applied with a window size chosen randomly from the set for images and for images.

5. Future Work

While MiAMix has showcased compelling results, as is evident from our rigorous evaluations against existing state-of-the-art mixed sample data augmentation techniques, it also opens avenues for intriguing future work. One such avenue is delving deeper into the interpretability of the method. With the increasing complexity and diversity brought about by MiAMix, understanding the exact nature of the transformations and their impact on the neural network’s internal representations becomes crucial.

Another promising direction would be better parameterization. The current approach has several parameters, and while the paper identifies their optimal values from our experiments, a more extensive empirical foundation would allow us to devise a simpler strategy to adjust the level of diversification instead of tuning each hyperparameter. This can potentially lead to a more streamlined approach similar to the AutoMix [

11] method. Furthermore, there is potential in exploring adaptive algorithms that could dynamically adjust the mixup strategy based on the model’s performance or the complexity of the data. This could ensure that the augmentation strategy evolves in tandem with the learning process, optimizing for both generalization and computational efficiency.

Moreover, the proposed methodology is primarily optimized for image data, but it presents potential applications beyond its current domain. It would be particularly intriguing to explore its efficacy on audio data. Given the distinct nature of audio signals and their intricate temporal dependencies, adapting and refining MiAMix for such datasets can provide a fresh perspective on its versatility. Such exploration may necessitate modifications in the mixing masks or even the introduction of new augmentation strategies tailored to audio’s unique challenges.

6. Conclusions

In conclusion, our work in this paper has provided a significant contribution toward the development and understanding of Multi-layered Augmented Mixup (MiAMix). By reimagining the design of GMix, we have introduced an augmented form, AGMix, that leverages the Gaussian kernel’s flexibility to produce a diversified range of mixing outputs. Additionally, we have devised an innovative method for sampling the mixing ratio when dealing with multiple mixing masks. Most crucially, we have proposed a novel approach for MSDA that incorporates various stages, namely: random sample pairing, mixing methods and ratios sampling, the generation and augmentation of mixing masks, and the output of mixed samples. By unifying these stages into a cohesive framework—MiAMix—we have constructed a search space replete with diverse hyper-parameters. This multi-stage approach offers a more diversified and dynamic way to apply data augmentation, potentially leading to improved model performance and better generalization on unseen data. Importantly, our methods do not incur excessive computational costs and can be seamlessly integrated into established training pipelines, making them practically viable. Furthermore, the versatile nature of MiAMix allows for future adaptations and improvements, promising an exciting path for the continuous evolution of data augmentation techniques. Given these advantages, we are optimistic about the potential of MiAMix to significantly influence and shape the field of machine learning, thereby enabling more robust and efficient model training processes.