Journal Description

Entropy

Entropy is an international and interdisciplinary peer-reviewed open access journal of entropy and information studies, published monthly online by MDPI. The International Society for the Study of Information (IS4SI) and the Spanish Society of Biomedical Engineering (SEIB) are affiliated with Entropy, and their members receive a discount on the article processing charge.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, SCIE (Web of Science), Inspec, PubMed, PMC, Astrophysics Data System, and other databases.

- Journal Rank: JCR - Q2 (Physics, Multidisciplinary) / CiteScore - Q1 (Mathematical Physics)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 20.8 days after submission; acceptance to publication is undertaken in 2.9 days (median values for papers published in this journal in the second half of 2023).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

- Testimonials: See what our editors and authors say about Entropy.

- Companion journals for Entropy include: Foundations, Thermo and MAKE.

Impact Factor: 2.7 (2022); 5-Year Impact Factor: 2.6 (2022)

Latest Articles

Memory Corrections to Markovian Langevin Dynamics

Entropy 2024, 26(5), 425; https://doi.org/10.3390/e26050425 (registering DOI) - 16 May 2024

Abstract

Analysis of non-Markovian systems and memory-induced phenomena poses an everlasting challenge in the realm of physics. As a paradigmatic example, we consider a classical Brownian particle of mass M subjected to an external force and exposed to correlated thermal fluctuations. We show that the recently developed approach to this system, in which its non-Markovian dynamics given by the Generalized Langevin Equation is approximated by its memoryless counterpart but with the effective particle mass

(This article belongs to the Collection Foundations of Statistical Mechanics)

Open Access Article

Non-Negative Decomposition of Multivariate Information: From Minimum to Blackwell-Specific Information

by

Tobias Mages, Elli Anastasiadi and Christian Rohner

Entropy 2024, 26(5), 424; https://doi.org/10.3390/e26050424 - 15 May 2024

Abstract

Partial information decompositions (PIDs) aim to categorize how a set of source variables provides information about a target variable redundantly, uniquely, or synergetically. The original proposal for such an analysis used a lattice-based approach and gained significant attention. However, finding a suitable underlying decomposition measure is still an open research question at an arbitrary number of discrete random variables. This work proposes a solution with a non-negative PID that satisfies an inclusion–exclusion relation for any f-information measure. The decomposition is constructed from a pointwise perspective of the target variable to take advantage of the equivalence between the Blackwell and zonogon order in this setting. Zonogons are the Neyman–Pearson region for an indicator variable of each target state, and f-information is the expected value of quantifying its boundary. We prove that the proposed decomposition satisfies the desired axioms and guarantees non-negative partial information results. Moreover, we demonstrate how the obtained decomposition can be transformed between different decomposition lattices and that it directly provides a non-negative decomposition of Rényi-information at a transformed inclusion–exclusion relation. Finally, we highlight that the decomposition behaves differently depending on the information measure used and how it can be used for tracing partial information flows through Markov chains.

(This article belongs to the Special Issue Synergy and Redundancy Measures: Theory and Applications to Characterize Complex Systems and Shape Neural Network Representations)

Open Access Article

Landauer Bound in the Context of Minimal Physical Principles: Meaning, Experimental Verification, Controversies and Perspectives

by

Edward Bormashenko

Entropy 2024, 26(5), 423; https://doi.org/10.3390/e26050423 - 15 May 2024

Abstract

The physical roots, interpretation, controversies, and precise meaning of the Landauer principle are surveyed. The Landauer principle is a physical principle defining the lower theoretical limit of energy consumption necessary for computation. It states that an irreversible change in information stored in a computer, such as merging two computational paths, dissipates a minimum amount of heat

(This article belongs to the Special Issue The Landauer Principle and Its Implementations in Physics, Chemistry and Biology: Current Status, Critics and Controversies)

Open Access Article

Model Selection for Exponential Power Mixture Regression Models

by

Yunlu Jiang, Jiangchuan Liu, Hang Zou and Xiaowen Huang

Entropy 2024, 26(5), 422; https://doi.org/10.3390/e26050422 - 15 May 2024

Abstract

Finite mixture of linear regression (FMLR) models are among the most exemplary statistical tools to deal with various heterogeneous data. In this paper, we introduce a new procedure to simultaneously determine the number of components and perform variable selection for the different regressions for FMLR models via an exponential power error distribution, which includes normal distributions and Laplace distributions as special cases. Under some regularity conditions, the consistency of order selection and the consistency of variable selection are established, and the asymptotic normality for the estimators of non-zero parameters is investigated. In addition, an efficient modified expectation-maximization (EM) algorithm and a majorization-maximization (MM) algorithm are proposed to implement the proposed optimization problem. Furthermore, we use the numerical simulations to demonstrate the finite sample performance of the proposed methodology. Finally, we apply the proposed approach to analyze a baseball salary data set. Results indicate that our proposed method obtains a smaller BIC value than the existing method.

(This article belongs to the Section Information Theory, Probability and Statistics)

Open Access Article

Optimal Decoding Order and Power Allocation for Sum Throughput Maximization in Downlink NOMA Systems

by

Zhuo Han, Wanming Hao, Zhiqing Tang and Shouyi Yang

Entropy 2024, 26(5), 421; https://doi.org/10.3390/e26050421 - 15 May 2024

Abstract

In this paper, we consider a downlink non-orthogonal multiple access (NOMA) system over Nakagami-m channels. The single-antenna base station serves two single-antenna NOMA users based on statistical channel state information (CSI). We derive the closed-form expression of the exact outage probability under a given decoding order, and we also deduce the asymptotic outage probability and diversity order in a high-SNR regime. Then, we analyze all the possible power allocation ranges and theoretically prove the optimal power allocation range under the corresponding decoding order. The demarcation points of the optimal power allocation ranges are affected by target data rates and total power, without an effect from the CSI. In particular, the values of the demarcation points are proportional to the total power. Furthermore, we formulate a joint decoding order and power allocation optimization problem to maximize the sum throughput, which is solved by efficiently searching in our obtained optimal power allocation ranges. Finally, Monte Carlo simulations are conducted to confirm the accuracy of our derived exact outage probability. Numerical results show the accuracy of our deduced demarcation points of the optimal power allocation ranges. And the optimal decoding order is not constant at different total transmit power levels.

(This article belongs to the Special Issue Advanced New Physical Layer Technologies for Next-Generation Wireless Communications)

Open Access Review

The SU(3)C × SU(3)L × U(1)X (331) Model: Addressing the Fermion Families Problem within Horizontal Anomalies Cancellation

by

Claudio Corianò and Dario Melle

Entropy 2024, 26(5), 420; https://doi.org/10.3390/e26050420 - 14 May 2024

Abstract

One of the most important and unanswered problems in particle physics is the origin of the three generations of quarks and leptons. The Standard Model does not provide any hint regarding its sequential charge assignments, which remain a fundamental mystery of Nature. One possible solution of the puzzle is to look for charge assignments, in a given gauge theory, that are inter-generational, by employing the cancellation of the gravitational and gauge anomalies horizontally. The 331 model, based on an

(This article belongs to the Special Issue Particle Theory and Theoretical Cosmology—Dedicated to Professor Paul Howard Frampton on the Occasion of His 80th Birthday)

Open Access Article

Capacity Analysis of Hybrid Satellite–Terrestrial Systems with Selection Relaying

by

Predrag Ivaniš, Jovan Milojković, Vesna Blagojević and Srđan Brkić

Entropy 2024, 26(5), 419; https://doi.org/10.3390/e26050419 - 13 May 2024

Abstract

A hybrid satellite–terrestrial relay network is a simple and flexible solution that can be used to improve the performance of land mobile satellite systems, where the communication links between satellite and mobile terrestrial users can be unstable due to the multipath effect, obstacles, as well as the additional atmospheric losses. Motivated by these facts, in this paper, we analyze a system where the satellite–terrestrial links undergo shadowed Rice fading, and, following this, terrestrial relay applies the selection relaying protocol and forwards the information to the final destination using the communication link subjected to Nakagami-m fading. For the considered relaying protocol, we derive the exact closed-form expressions for the outage probability, outage capacity, and ergodic capacity, presented in polynomial–exponential form for the integer-valued fading parameters. The presented numerical results illustrate the usefulness of the selection relaying for various propagation scenarios and system geometry parameters. The obtained analytical results are corroborated by an independent simulation method, based on the originally developed fading simulator.

(This article belongs to the Special Issue Information Theory and Coding for Wireless Communications II)

Open Access Article

In Our Mind’s Eye: Thinkable and Unthinkable, and Classical and Quantum in Fundamental Physics, with Schrödinger’s Cat Experiment

by

Arkady Plotnitsky

Entropy 2024, 26(5), 418; https://doi.org/10.3390/e26050418 - 13 May 2024

Abstract

This article reconsiders E. Schrödinger’s cat paradox experiment from a new perspective, grounded in the interpretation of quantum mechanics that belongs to the class of interpretations designated as “reality without realism” (RWR) interpretations. These interpretations assume that the reality ultimately responsible for quantum phenomena is beyond conception, an assumption designated as the Heisenberg postulate. Accordingly, in these interpretations, quantum physics is understood in terms of the relationships between what is thinkable and what is unthinkable, with, physical, classical, and quantum, corresponding to thinkable and unthinkable, respectively. The role of classical physics becomes unavoidable in quantum physics, the circumstance designated as the Bohr postulate, which restores to classical physics its position as part of fundamental physics, a position commonly reserved for quantum physics and relativity. This view of quantum physics and relativity is maintained by this article as well but is argued to be sufficient for understanding fundamental physics. Establishing this role of classical physics is a distinctive contribution of the article, which allows it to reconsider Schrödinger’s cat experiment, but has a broader significance for understanding fundamental physics. RWR interpretations have not been previously applied to the cat experiment, including by N. Bohr, whose interpretation, in its ultimate form (he changed it a few times), was an RWR interpretation. The interpretation adopted in this article follows Bohr’s interpretation, based on the Heisenberg and Bohr postulates, but it adds the Dirac postulate, stating that the concept of a quantum object only applies at the time of observation and not independently.

(This article belongs to the Special Issue Quantum Information and Probability: From Foundations to Engineering II)

Open Access Article

Aging Intensity for Step-Stress Accelerated Life Testing Experiments

by

Francesco Buono and Maria Kateri

Entropy 2024, 26(5), 417; https://doi.org/10.3390/e26050417 - 13 May 2024

Abstract

The aging intensity (AI), defined as the ratio of the instantaneous hazard rate and a baseline hazard rate, is a useful tool for describing the reliability properties of a random variable corresponding to a lifetime. In this work, the concept of AI is introduced in step-stress accelerated life testing (SSALT) experiments, providing new insights into the model and enabling the further clarification of the differences between the two commonly employed cumulative exposure (CE) and tampered failure rate (TFR) models. New AI-based estimators for the parameters of a SSALT model are proposed and compared to the MLEs in terms of examples and a simulation study.
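
To make the AI definition concrete, the following is a minimal sketch and not the authors' estimator. It assumes that the baseline is the time-averaged hazard rate H(t)/t, where H is the cumulative hazard, so that AI(t) = t h(t) / H(t). This choice of baseline, the Weibull example, and all function names are illustrative assumptions that do not come from the abstract.

```python
def weibull_hazard(t, shape, scale):
    """Instantaneous hazard rate h(t) of a Weibull lifetime (illustrative choice)."""
    return (shape / scale) * (t / scale) ** (shape - 1)


def weibull_cumulative_hazard(t, shape, scale):
    """Cumulative hazard H(t), the integral of h from 0 to t, for a Weibull lifetime."""
    return (t / scale) ** shape


def aging_intensity(t, hazard, cumulative_hazard):
    """AI(t) = h(t) / (H(t) / t), assuming the baseline is the average hazard on (0, t]."""
    if t <= 0:
        raise ValueError("t must be positive")
    return t * hazard(t) / cumulative_hazard(t)


if __name__ == "__main__":
    shape, scale = 2.5, 4.0
    for t in (0.5, 3.0, 10.0):
        ai = aging_intensity(
            t,
            lambda u: weibull_hazard(u, shape, scale),
            lambda u: weibull_cumulative_hazard(u, shape, scale),
        )
        # Under the assumed definition, the Weibull AI equals the shape parameter for every t.
        print(t, ai)
```

Under this assumed definition, a Weibull lifetime has a constant AI equal to its shape parameter, which makes it a convenient sanity check before applying the same function to other hazard models.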

(This article belongs to the Special Issue Information-Theoretic Criteria for Statistical Model Selection)

Open Access Article

Towards Multi-Objective Object Push-Grasp Policy Based on Maximum Entropy Deep Reinforcement Learning under Sparse Rewards

by

Tengteng Zhang and Hongwei Mo

Entropy 2024, 26(5), 416; https://doi.org/10.3390/e26050416 - 12 May 2024

Abstract

In unstructured environments, robots need to deal with a wide variety of objects with diverse shapes, and often, the instances of these objects are unknown. Traditional methods rely on training with large-scale labeled data, but in environments with continuous and high-dimensional state spaces, the data become sparse, leading to weak generalization ability of the trained models when transferred to real-world applications. To address this challenge, we present an innovative maximum entropy Deep Q-Network (ME-DQN), which leverages an attention mechanism. The framework solves complex and sparse reward tasks through probabilistic reasoning while eliminating the trouble of adjusting hyper-parameters. This approach aims to merge the robust feature extraction capabilities of Fully Convolutional Networks (FCNs) with the efficient feature selection of the attention mechanism across diverse task scenarios. By integrating an advantage function with the reasoning and decision-making of deep reinforcement learning, ME-DQN propels the frontier of robotic grasping and expands the boundaries of intelligent perception and grasping decision-making in unstructured environments. Our simulations demonstrate a remarkable grasping success rate of 91.6%, while maintaining excellent generalization performance in the real world.

(This article belongs to the Topic AI and Computational Methods for Modelling, Simulations and Optimizing of Advanced Systems: Innovations in Complexity)

(This article belongs to the Section Multidisciplinary Applications)

Open Access Feature Paper Article

Quantum Synchronization and Entanglement of Dissipative Qubits Coupled to a Resonator

by

Alexei D. Chepelianskii and Dima L. Shepelyansky

Entropy 2024, 26(5), 415; https://doi.org/10.3390/e26050415 - 11 May 2024

Abstract

In a dissipative regime, we study the properties of several qubits coupled to a driven resonator in the framework of a Jaynes–Cummings model. The time evolution and the steady state of the system are numerically analyzed within the Lindblad master equation, with up to several million components. Two semi-analytical approaches, at weak and strong (semiclassical) dissipations, are developed to describe the steady state of this system and determine its validity by comparing it with the Lindblad equation results. We show that the synchronization of several qubits with the driving phase can be obtained due to their coupling to the resonator. We establish the existence of two different qubit synchronization regimes: In the first one, the semiclassical approach describes well the dynamics of qubits and, thus, their quantum features and entanglement are suppressed by dissipation and the synchronization is essentially classical. In the second one, the entangled steady state of a pair of qubits remains synchronized in the presence of dissipation and decoherence, corresponding to the regime non-existent in classical synchronization.

(This article belongs to the Section Quantum Information)

Open Access Feature Paper Article

Quantum Tunneling and Complex Dynamics in the Suris’s Integrable Map

by

Yasutaka Hanada and Akira Shudo

Entropy 2024, 26(5), 414; https://doi.org/10.3390/e26050414 - 11 May 2024

Abstract

Quantum tunneling in a two-dimensional integrable map is studied. The orbits of the map are all confined to the curves specified by the one-dimensional Hamiltonian. It is found that the behavior of tunneling splitting for the integrable map and the associated Hamiltonian system is qualitatively the same, with only a slight difference in magnitude. However, the tunneling tails of the wave functions, obtained by superposing the eigenfunctions that form the doublet, exhibit significant differences. To explore the origin of the difference, we observe the classical dynamics in the complex plane and find that the existence of branch points appearing in the potential function of the integrable map could play the role of yielding non-trivial behavior in the tunneling tail. The result highlights the subtlety of quantum tunneling, which cannot be captured in nature only by the dynamics in the real plane.

(This article belongs to the Special Issue Tunneling in Complex Systems)

Open Access Article

Efficient Quantum Private Comparison Based on GHZ States

by

Min Hou, Yue Wu and Shibin Zhang

Entropy 2024, 26(5), 413; https://doi.org/10.3390/e26050413 - 10 May 2024

Abstract

Quantum private comparison (QPC) is a fundamental cryptographic protocol that allows two parties to compare the equality of their private inputs without revealing any information about those inputs to each other. In recent years, QPC protocols utilizing various quantum resources have been proposed. However, these QPC protocols have lower utilization of quantum resources and qubit efficiency. To address this issue, we propose an efficient QPC protocol based on GHZ states, which leverages the unique properties of GHZ states and rotation operations to achieve secure and efficient private comparison. The secret information is encoded in the rotation angles of rotation operations performed on the received quantum sequence transmitted along the circular mode. This results in the multiplexing of quantum resources and enhances the utilization of quantum resources. Our protocol does not require quantum key distribution (QKD) for sharing a secret key to ensure the security of the inputs, resulting in no consumption of quantum resources for key sharing. One GHZ state can be compared to three bits of classical information in each comparison, leading to qubit efficiency reaching 100%. Compared with the existing QPC protocol, our protocol does not require quantum resources for sharing a secret key. It also demonstrates enhanced performance in qubit efficiency and the utilization of quantum resources.

(This article belongs to the Special Issue Quantum Computation, Communication and Cryptography)

Open Access Article

Finite-Temperature Correlation Functions Obtained from Combined Real- and Imaginary-Time Propagation of Variational Thawed Gaussian Wavepackets

by

Jens Aage Poulsen and Gunnar Nyman

Entropy 2024, 26(5), 412; https://doi.org/10.3390/e26050412 - 10 May 2024

Abstract

We apply the so-called variational Gaussian wavepacket approximation (VGA) for conducting both real- and imaginary-time dynamics to calculate thermal correlation functions. By considering strongly anharmonic systems, such as a quartic potential and a double-well potential at high and low temperatures, it is shown that this method is partially able to account for tunneling. This is contrary to other popular many-body methods, such as ring polymer molecular dynamics and the classical Wigner method, which fail in this respect. It is a historical peculiarity that no one has considered the VGA method for representing both the Boltzmann operator and the real-time propagation. This method should be well suited for molecular systems containing many atoms.

(This article belongs to the Special Issue Tunneling in Complex Systems)

Open Access Article

Does Quantum Mechanics Require “Conspiracy”?

by

Ovidiu Cristinel Stoica

Entropy 2024, 26(5), 411; https://doi.org/10.3390/e26050411 - 9 May 2024

Abstract

Quantum states containing records of incompatible outcomes of quantum measurements are valid states in the tensor-product Hilbert space. Since they contain false records, they conflict with the Born rule and with our observations. I show that excluding them requires a fine-tuning to an extremely restricted subspace of the Hilbert space that seems “conspiratorial”, in the sense that (1) it seems to depend on future events that involve records (including measurement settings) and on the dynamical law (normally thought to be independent of the initial conditions), and (2) it violates Statistical Independence, even when it is valid in the context of Bell’s theorem. To solve the puzzle, I build a model in which, by changing the dynamical law, the same initial conditions can lead to different histories in which the validity of records is relative to the new dynamical law. This relative validity of the records may restore causality, but the initial conditions still must depend, at least partially, on the dynamical law. While violations of Statistical Independence are often seen as non-scientific, they turn out to be needed to ensure the validity of records and our own memories and, by this, of science itself. A Past Hypothesis is needed to ensure the existence of records and turns out to require violations of Statistical Independence. It is not excluded that its explanation, still unknown, ensures such violations in the way needed by local interpretations of quantum mechanics. I suggest that an as-yet unknown law or superselection rule may restrict the full tensor-product Hilbert space to the very special subspace required by the validity of records and the Past Hypothesis.

(This article belongs to the Section Quantum Information)

Open Access Article

Geometric Algebra Jordan–Wigner Transformation for Quantum Simulation

by

Grégoire Veyrac and Zeno Toffano

Entropy 2024, 26(5), 410; https://doi.org/10.3390/e26050410 - 8 May 2024

Abstract

Quantum simulation qubit models of electronic Hamiltonians rely on specific transformations in order to take into account the fermionic permutation properties of electrons. These transformations (principally the Jordan–Wigner transformation (JWT) and the Bravyi–Kitaev transformation) correspond in a quantum circuit to the introduction of a supplementary circuit level. In order to include the fermionic properties in a more straightforward way in quantum computations, we propose to use methods issued from Geometric Algebra (GA), which, due to its commutation properties, are well adapted for fermionic systems. First, we apply the Witt basis method in GA to reformulate the JWT in this framework and use this formulation to express various quantum gates. We then rewrite the general one and two-electron Hamiltonian and use it for building a quantum simulation circuit for the Hydrogen molecule. Finally, the quantum Ising Hamiltonian, widely used in quantum simulation, is reformulated in this framework.
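
As a point of reference for the transformation named in this abstract, the sketch below builds the standard matrix form of the JWT, not the Geometric Algebra reformulation that the article proposes. It assumes the common convention in which mode j is mapped to a string of Pauli Z factors on modes 0 to j-1 followed by a single-qubit lowering operator; the helper names and the pure-Python matrix routines are illustrative assumptions.

```python
from functools import reduce

I2 = [[1, 0], [0, 1]]
Z = [[1, 0], [0, -1]]
LOWER = [[0, 1], [0, 0]]  # single-qubit lowering operator: maps |1> (occupied) to |0> (empty)


def kron(a, b):
    """Kronecker product of two matrices stored as nested lists."""
    return [[x * y for x in row_a for y in row_b] for row_a in a for row_b in b]


def matmul(a, b):
    """Matrix product of two nested-list matrices."""
    cols_b = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols_b] for row in a]


def transpose(a):
    """Transpose; equals the Hermitian adjoint here because all entries are real."""
    return [list(col) for col in zip(*a)]


def add(a, b):
    """Entrywise sum of two matrices of equal shape."""
    return [[x + y for x, y in zip(row_a, row_b)] for row_a, row_b in zip(a, b)]


def annihilation(j, n):
    """Standard Jordan-Wigner image of the annihilation operator for mode j of n modes."""
    factors = [Z] * j + [LOWER] + [I2] * (n - j - 1)
    return reduce(kron, factors)


def anticommutator(a, b):
    """Anticommutator {a, b} = ab + ba."""
    return add(matmul(a, b), matmul(b, a))


if __name__ == "__main__":
    n = 3
    ops = [annihilation(j, n) for j in range(n)]
    dim = 2 ** n
    identity = [[int(r == c) for c in range(dim)] for r in range(dim)]
    zero = [[0] * dim for _ in range(dim)]
    # Check the fermionic relations {a_i, a_j} = 0 and {a_i, a_j^dagger} = delta_ij * I.
    ok = all(
        anticommutator(ops[i], ops[j]) == zero
        and anticommutator(ops[i], transpose(ops[j])) == (identity if i == j else zero)
        for i in range(n)
        for j in range(n)
    )
    print("anticommutation relations hold:", ok)
```

The Z strings are what enforce the sign changes between different modes, and they are the source of the additional circuit overhead that such transformations introduce.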

Open Access Article

Entropy-Aided Meshing-Order Modulation Analysis for Wind Turbine Planetary Gear Weak Fault Detection under Variable Rotational Speed

by

Shaodan Zhi, Hengshan Wu, Haikuo Shen, Tianyang Wang and Hongfei Fu

Entropy 2024, 26(5), 409; https://doi.org/10.3390/e26050409 - 8 May 2024

Abstract

As one of the most vital energy conversion systems, the safe operation of wind turbines is very important; however, weak faults and time-varying speed may challenge the conventional monitoring strategies. Thus, an entropy-aided meshing-order modulation method is proposed for detecting the optimal frequency band, which contains the weak fault-related information. Specifically, the variable rotational frequency trend is first identified and extracted based on the time–frequency representation of the raw signal by constructing a novel scaling-basis local reassigning chirplet transform (SLRCT). A new entropy-aided meshing-order modulation (EMOM) indicator is then constructed to locate the most sensitive modulation frequency area according to the extracted fine speed trend with the help of the order tracking technique. Finally, the raw vibration signal is bandpass filtered via the corresponding optimal frequency band with the highest EMOM indicator. The order components resulting from the weak fault can be highlighted to accomplish weak fault detection. The effectiveness of the proposed EMOM analysis-based method has been tested using the experimental data of three different gear fault types of different fault levels from a planetary test rig.

(This article belongs to the Special Issue Signal Processing for Fault Detection and Diagnosis in Electric Machines and Energy Conversion Systems)

Open Access Article

Investigation of Thermo-Hydraulics in a Lid-Driven Square Cavity with a Heated Hemispherical Obstacle at the Bottom

by

Farhan Lafta Rashid, Abbas Fadhil Khalaf, Arman Ameen and Mudhar A. Al-Obaidi

Entropy 2024, 26(5), 408; https://doi.org/10.3390/e26050408 - 8 May 2024

Abstract

Lid-driven cavity (LDC) flow is a significant area of study in fluid mechanics due to its common occurrence in engineering challenges. However, using numerical simulations (ANSYS Fluent) to accurately predict fluid flow and mixed convective heat transfer features, incorporating both a moving top wall and a heated hemispherical obstruction at the bottom, has not yet been attempted. This study aims to numerically demonstrate forced convection in a lid-driven square cavity (LDSC) with a moving top wall and a heated hemispherical obstacle at the bottom. The cavity is filled with a Newtonian fluid and subjected to a specific set of velocities (5, 10, 15, and 20 m/s) at the moving wall. The finite volume method is used to solve the governing equations using the Boussinesq approximation and the parallel flow assumption. The impact of various cavity geometries, as well as the influence of the moving top wall on fluid flow and heat transfer within the cavity, are evaluated. The results of this study indicate that the movement of the wall significantly disrupts the flow field inside the cavity, promoting excellent mixing between the flow field below the moving wall and within the cavity. The static pressure exhibits fluctuations, with the highest value observed at the top of the cavity of 1 m width (adjacent to the moving wall) and the lowest at 0.6 m. Furthermore, dynamic pressure experiences a linear increase until reaching its peak at 0.7 m, followed by a steady decrease toward the moving wall. The velocity of the internal surface fluctuates unpredictably along its length while other parameters remain relatively stable.

(This article belongs to the Special Issue Modern Trends in Multi-Phase Flow and Heat Transfer)

Open Access Article

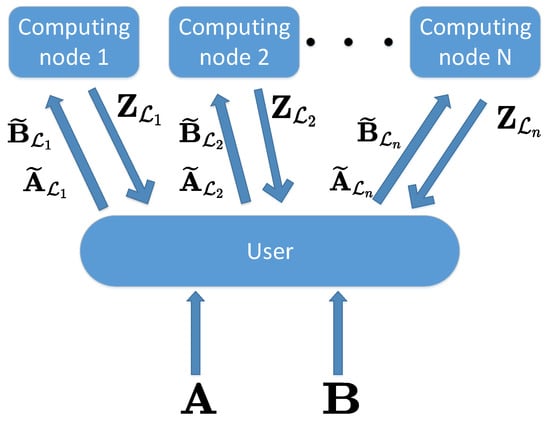

Minimizing Computation and Communication Costs of Two-Sided Secure Distributed Matrix Multiplication under Arbitrary Collusion Pattern

by

Jin Li, Nan Liu and Wei Kang

Entropy 2024, 26(5), 407; https://doi.org/10.3390/e26050407 - 8 May 2024

Abstract

This paper studies the problem of minimizing the total cost, including computation cost and communication cost, in the system of two-sided secure distributed matrix multiplication (SDMM) under an arbitrary collusion pattern. To perform SDMM, the two input matrices are split into blocks, blocks of random matrices are appended to protect the security of the two input matrices, and encoded copies of the blocks are distributed to all computing nodes for matrix multiplication. Our aim is to minimize the total cost over all matrix splitting factors, the number of appended random matrices, and the distribution vector, while satisfying the security constraint of the two input matrices, the decodability constraint of the desired result of the multiplication, the storage capacity of the computing nodes, and the delay constraint. First, a strategy of appending zeros to the input matrices is proposed to overcome the divisibility problem of matrix splitting. Next, the optimization problem is divided into two subproblems with the aid of alternating optimization (AO), where a feasible solution can be obtained. In addition, some necessary conditions for the problem to be feasible are provided. Simulation results demonstrate the superiority of our proposed scheme compared to the scheme without appending zeros and the scheme with no alternating optimization.

(This article belongs to the Special Issue Information-Theoretic Cryptography and Security)

Open Access Feature Paper Article

Relativistic Roots of κ-Entropy

by

Giorgio Kaniadakis

Entropy 2024, 26(5), 406; https://doi.org/10.3390/e26050406 - 7 May 2024

Abstract

The axiomatic structure of the

(This article belongs to the Special Issue Twenty Years of Kaniadakis Entropy: Current Trends and Future Perspectives)

Topics

Topic in

Algorithms, Diagnostics, Entropy, Information, J. Imaging

Application of Machine Learning in Molecular Imaging

Topic Editors: Allegra Conti, Nicola Toschi, Marianna Inglese, Andrea Duggento, Matthew Grech-Sollars, Serena Monti, Giancarlo Sportelli, Pietro Carra

Deadline: 31 May 2024

Topic in

Education Sciences, Entropy, JAL, Societies, Sustainability

Sustainability in Aging and Depopulation Societies

Topic Editors: Shiro Horiuchi, Gregor Wolbring, Takeshi Matsuda

Deadline: 15 June 2024

Topic in

Buildings, Energies, Entropy, Resources, Sustainability

Advances in Solar Heating and Cooling

Topic Editors: Salvatore Vasta, Sotirios Karellas, Marina Bonomolo, Alessio Sapienza, Uli Jakob

Deadline: 30 June 2024

Topic in

Actuators, Applied Sciences, Entropy

Thermodynamics and Heat Transfers in Vacuum Tube Trains (Hyperloop)

Topic Editors: Suyong Choi, Minki Cho, Jungyoul Lim

Deadline: 30 July 2024

Conferences

22–26 November 2024

2024 International Conference on Science and Engineering of Electronics (ICSEE'2024) Wuhan, China

28–31 May 2024

XXII Conference on Non-equilibrium Statistical Mechanics and Nonlinear Physics—MEDYFINOL 2024

Special Issues

Special Issue in

Entropy

Signal Processing for Fault Detection and Diagnosis in Electric Machines and Energy Conversion Systems

Guest Editor: Epaminondas D. Mitronikas

Deadline: 20 May 2024

Special Issue in

Entropy

Entropy, Statistical Evidence, and Scientific Inference: Evidence Functions in Theory and Applications

Guest Editors: Brian Dennis, Mark L. Taper, Jose Miguel Ponciano

Deadline: 31 May 2024

Special Issue in

Entropy

Nonlinear Dynamics in Cardiovascular Signals

Guest Editor: Claudia Lerma

Deadline: 15 June 2024

Special Issue in

Entropy

Non-equilibrium Thermodynamics

Guest Editors: Duc Nguyen-Manh, Abraham Marmur

Deadline: 30 June 2024

Topical Collections

Topical Collection in

Entropy

Algorithmic Information Dynamics: A Computational Approach to Causality from Cells to Networks

Collection Editors: Hector Zenil, Felipe Abrahão

Topical Collection in

Entropy

Wavelets, Fractals and Information Theory

Collection Editor: Carlo Cattani

Topical Collection in

Entropy

Entropy in Image Analysis

Collection Editor: Amelia Carolina Sparavigna