Journal Description

AI

AI is an international, peer-reviewed, open access journal on artificial intelligence (AI), including broad aspects of cognition and reasoning, perception and planning, machine learning, intelligent robotics, and applications of AI, published monthly online by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within ESCI (Web of Science), Scopus, EBSCO, and other databases.

- Journal Rank: JCR - Q2 (Computer Science, Artificial Intelligence) / CiteScore - Q2 (Artificial Intelligence)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 18.9 days after submission; acceptance to publication is undertaken in 4.9 days (median values for papers published in this journal in the second half of 2024).

- Recognition of Reviewers: APC discount vouchers, optional signed peer review, and reviewer names published annually in the journal.

Impact Factor: 3.1 (2023); 5-Year Impact Factor: 3.3 (2023)

Latest Articles

Should We Reconsider RNNs for Time-Series Forecasting?

AI 2025, 6(5), 90; https://doi.org/10.3390/ai6050090 - 25 Apr 2025

Abstract


(1) Background: In recent years, Transformer-based models have dominated the time-series forecasting domain, overshadowing recurrent neural networks (RNNs) such as Long Short-Term Memory (LSTM) and Gated Recurrent Unit (GRU). While Transformers demonstrate superior performance, their high computational cost limits their practical application in resource-constrained settings. (2) Methods: In this paper, we reconsider RNNs—specifically the GRU architecture—as an efficient alternative to time-series forecasting by leveraging this architecture’s sequential representation capability to capture cross-channel dependencies effectively. Our model also utilizes a feed-forward layer right after the GRU module to represent temporal dependencies, and aggregates it with the GRU layers to predict future values of a given time-series. (3) Results and conclusions: Our extensive experiments conducted on different real-world datasets show that our inverted GRU (iGRU) model achieves promising results in terms of error metrics and memory efficiency, challenging or surpassing state-of-the-art models on various benchmarks.


(This article belongs to the Section AI Systems: Theory and Applications)


Open Access Article

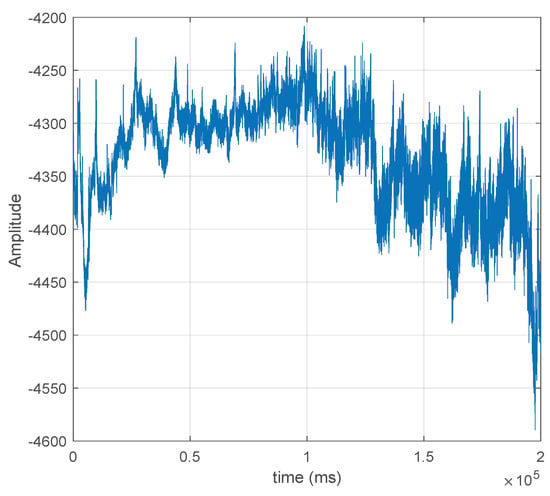

Non-Linear Synthetic Time Series Generation for Electroencephalogram Data Using Long Short-Term Memory Models

by

Bakr Rashid Alqaysi, Manuel Rosa-Zurera and Ali Abdulameer Aldujaili

AI 2025, 6(5), 89; https://doi.org/10.3390/ai6050089 - 25 Apr 2025

Abstract


Background/Objectives: The implementation of artificial intelligence-based systems for disease detection using biomedical signals is challenging due to the limited availability of training data. This paper deals with the generation of synthetic EEG signals using deep learning-based models, to be used in future research for training Parkinson’s disease detection systems. Methods: Linear models, such as AR, MA, and ARMA, are often inadequate due to the inherent non-linearity of time series. To overcome this drawback, long short-term memory (LSTM) networks are proposed to learn long-term dependencies in non-linear EEG time series and subsequently generate synthetic signals to enhance the training of detection systems. To learn the forward and backward time dependencies in the EEG signals, a Bidirectional LSTM model has been implemented. The LSTM model was trained on the UC San Diego Resting State EEG Dataset, which includes samples from two groups: individuals with Parkinson’s disease and a healthy control group. Results: To determine the optimal number of cells in the model, we evaluated the mean squared error (MSE) and cross-correlation between the original and synthetic signals. This method was also applied to select the length of the hidden state vector. The number of hidden cells was set to 14, and the length of the hidden state vector for each cell was fixed at 4. Increasing these values did not improve MSE or cross-correlation and unnecessarily increased computational complexity. The proposed model’s performance was evaluated using the mean-squared error (MSE), Pearson’s correlation coefficient, and the power spectra of the synthetic and original signals, demonstrating the suitability of the proposed method for this application. Conclusions: The proposed model was compared to Autoregressive Moving Average (ARMA) models, demonstrating superior performance. 
This confirms that deep learning-based models, such as LSTM, are strong alternatives to statistical models like ARMA for handling non-linear, multifrequency, and non-stationary signals.


(This article belongs to the Topic Mathematical Applications and Computational Intelligence in Medicine and Biology)


Open Access Article

Evaluating the Efficacy of Deep Learning Models for Identifying Manipulated Medical Fundus Images

by

Ho-Jung Song, Ju-Hyuck Han, You-Sang Cho and Yong-Suk Kim

AI 2025, 6(5), 88; https://doi.org/10.3390/ai6050088 - 24 Apr 2025

Abstract


(1) Background: The misuse of transformation technology using medical images is a critical problem that can endanger patients’ lives, and detecting manipulation via a deep learning model is essential to address issues of manipulated medical images that may arise in the healthcare field. (2) Methods: The dataset was divided into a real fundus dataset and a manipulated dataset. The fundus image manipulation detection model uses a deep learning model based on a Convolution Neural Network (CNN) structure that applies a concatenate operation for fast computation speed and reduced loss of input image weights. (3) Results: For real data, the model achieved an average sensitivity of 0.98, precision of 1.00, F1-score of 0.99, and AUC of 0.988. For manipulated data, the model recorded sensitivity of 1.00, precision of 0.84, F1-score of 0.92, and AUC of 0.988. Comparatively, five ophthalmologists achieved lower average scores on manipulated data: sensitivity of 0.71, precision of 0.61, F1-score of 0.65, and AUC of 0.822. (4) Conclusions: This study presents the possibility of addressing and preventing problems caused by manipulated medical images in the healthcare field. The proposed approach for detecting manipulated fundus images through a deep learning model demonstrates higher performance than that of ophthalmologists, making it an effective method.
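As a quick check on how the reported metrics relate to one another, the F1-score is the harmonic mean of precision and sensitivity (recall). The sketch below recomputes F1 from the rounded values quoted in the abstract. The function name `f1_score` and the dictionary layout are illustrative assumptions, not part of the study.

```python
def f1_score(precision: float, sensitivity: float) -> float:
    """Harmonic mean of precision and sensitivity (recall)."""
    if precision + sensitivity == 0:
        return 0.0  # avoid division by zero when both inputs are zero
    return 2 * precision * sensitivity / (precision + sensitivity)


# Rounded (precision, sensitivity) pairs quoted in the abstract.
reported = {
    "model, real data": (1.00, 0.98),
    "model, manipulated data": (0.84, 1.00),
    "ophthalmologists, manipulated data": (0.61, 0.71),
}

for label, (precision, sensitivity) in reported.items():
    print(f"{label}: F1 = {f1_score(precision, sensitivity):.3f}")
```

Because the abstract reports averaged and rounded figures, an F1 recomputed from averaged precision and sensitivity need not match the averaged F1 exactly, so small differences from the reported scores are to be expected.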


(This article belongs to the Section Medical & Healthcare AI)


Open Access Review

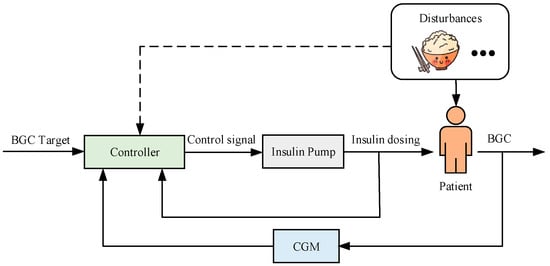

Deep Reinforcement Learning for Automated Insulin Delivery Systems: Algorithms, Applications, and Prospects

by

Xia Yu, Zi Yang, Xiaoyu Sun, Hao Liu, Hongru Li, Jingyi Lu, Jian Zhou and Ali Cinar

AI 2025, 6(5), 87; https://doi.org/10.3390/ai6050087 - 23 Apr 2025

Abstract


Advances in continuous glucose monitoring (CGM) technologies and wearable devices are enabling the enhancement of automated insulin delivery systems (AIDs) towards fully automated closed-loop systems, aiming to achieve secure, personalized, and optimal blood glucose concentration (BGC) management for individuals with diabetes. While model predictive control provides a flexible framework for developing AIDs control algorithms, models that capture inter- and intra-patient variability and perturbation uncertainty are needed for accurate and effective regulation of BGC. Advances in artificial intelligence present new opportunities for developing data-driven, fully closed-loop AIDs. Among them, deep reinforcement learning (DRL) has attracted much attention due to its potential resistance to perturbations. To this end, this paper conducts a literature review on DRL-based BGC control algorithms for AIDs. First, this paper systematically analyzes the benefits of utilizing DRL algorithms in AIDs. Then, it presents a comprehensive review of the various DRL techniques and extensions that have been proposed to address challenges arising from their integration with AIDs, including low sample availability, personalization, and security. Additionally, the paper provides an application-oriented investigation of DRL-based AIDs control algorithms, emphasizing significant challenges in practical implementations. Finally, the paper discusses solutions to relevant BGC control problems, outlines prospects for practical applications, and suggests future research directions.


(This article belongs to the Special Issue Artificial Intelligence for Future Healthcare: Advancement, Impact, and Prospect in the Field of Cancer)


Open Access Article

Evaluating the Societal Impact of AI: A Comparative Analysis of Human and AI Platforms Using the Analytic Hierarchy Process

by

Bojan Srđević

AI 2025, 6(4), 86; https://doi.org/10.3390/ai6040086 - 20 Apr 2025

Abstract

►▼

Show Figures

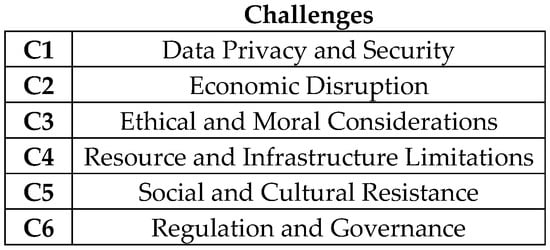

A central focus of this study was the methodology used to evaluate both humans and AI platforms, particularly in terms of their competitiveness and the implications of six key challenges to society resulting from the development and increasing use of artificial intelligence (AI)

[...] Read more.

A central focus of this study was the methodology used to evaluate both humans and AI platforms, particularly in terms of their competitiveness and the implications of six key challenges to society resulting from the development and increasing use of artificial intelligence (AI) technologies. The list of challenges was compiled by consulting various online sources and cross-referencing with academics from 15 countries across Europe and the USA. Professors, scientific researchers, and PhD students were invited to independently and remotely evaluate the challenges. Rather than contributing another discussion based solely on social arguments, this paper seeks to provide a logical evaluation framework, moving beyond qualitative discourse by incorporating numerical values. The pairwise comparison of AI challenges was conducted by two groups of participants using the multicriteria decision-making model known as the analytic hierarchy process (AHP). Thirty-eight humans performed pairwise comparisons of the six challenges after they were listed in a distributed questionnaire. The same procedure was carried out by four AI platforms, namely ChatGPT, Gemini (BardAI), Perplexity, and DedaAI, which responded to the same requests as the human participants. The results from both groups were aggregated and compared, revealing interesting differences in the prioritization of AI challenges’ impact on society. Both groups agreed on the highest importance of data privacy and security, as well as the lowest importance of social and cultural resistance, specifically the clash of AI with existing cultural norms and societal values.
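To make the AHP step concrete, the sketch below turns a pairwise comparison matrix into priority weights. It is a minimal illustration under stated assumptions: the abstract does not say which prioritization method was used, so the row geometric mean method (a common approximation to the principal eigenvector approach) is assumed here, and the 3×3 matrix is hypothetical, whereas the study compared six challenges.

```python
import math


def ahp_weights(matrix):
    """Approximate AHP priority weights with the row geometric mean method."""
    n = len(matrix)
    geo_means = [math.prod(row) ** (1.0 / n) for row in matrix]
    total = sum(geo_means)
    return [g / total for g in geo_means]  # normalize so the weights sum to 1


# Hypothetical reciprocal matrix: entry [i][j] states how much more important
# challenge i is than challenge j, and entry [j][i] is its reciprocal.
comparisons = [
    [1.0, 2.0, 6.0],
    [1 / 2, 1.0, 3.0],
    [1 / 6, 1 / 3, 1.0],
]

weights = ahp_weights(comparisons)
print([round(w, 3) for w in weights])
```

For a perfectly consistent matrix such as this one, the geometric mean method and the eigenvector method give the same weights. Real questionnaire data are rarely fully consistent, which is why AHP studies usually also report a consistency ratio.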


Open Access Article

CacheFormer: High-Attention-Based Segment Caching

by

Sushant Singh and Ausif Mahmood

AI 2025, 6(4), 85; https://doi.org/10.3390/ai6040085 - 18 Apr 2025

Abstract

►▼

Show Figures

Efficiently handling long contexts in transformer-based language models with low perplexity is an active area of research. Numerous recent approaches like Linformer, Longformer, Performer, and Structured state space models (SSMs), have not fully resolved this problem. All these models strive to reduce the

[...] Read more.

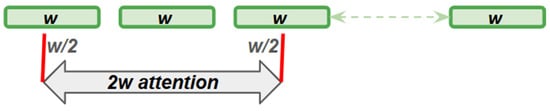

Efficiently handling long contexts in transformer-based language models with low perplexity is an active area of research. Numerous recent approaches, such as Linformer, Longformer, Performer, and structured state space models (SSMs), have not fully resolved this problem. All these models strive to reduce the quadratic time complexity of the attention mechanism while minimizing the loss in quality due to the effective compression of the long context. Inspired by the cache and virtual memory principle in computers, where, in case of a cache miss, not only the needed data are retrieved from memory but the adjacent data are also obtained, we apply this concept to handling long contexts by dividing them into small segments. In our design, we retrieve the nearby segments in an uncompressed form when high segment-level attention occurs at the compressed level. Our enhancements for handling long contexts include aggregating four attention mechanisms: short sliding window attention, long compressed segmented attention, dynamic retrieval of the top-k high-attention uncompressed segments, and overlapping segments in long segment attention to avoid segment fragmentation. These enhancements result in an architecture that outperforms existing SOTA architectures with an average perplexity improvement of 8.5% over similar model sizes.
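The cache-inspired retrieval idea can be sketched in a few lines: pick the segments with the highest segment-level attention, then also fetch their neighbours, much as a cache miss pulls in adjacent data. This is only an illustration of the principle described in the abstract, not the authors' implementation. The function `select_segments`, its `neighbors` parameter, and the scores are hypothetical.

```python
def select_segments(segment_scores, k, neighbors=1):
    """Return sorted indices of the top-k segments plus their adjacent segments."""
    n = len(segment_scores)
    # Indices of the k segments with the highest segment-level attention.
    top = sorted(range(n), key=lambda i: segment_scores[i], reverse=True)[:k]
    selected = set()
    for i in top:
        first = max(0, i - neighbors)      # clamp at the start of the context
        last = min(n - 1, i + neighbors)   # clamp at the end of the context
        selected.update(range(first, last + 1))
    return sorted(selected)


# Made-up attention mass per compressed segment.
scores = [0.05, 0.10, 0.60, 0.05, 0.02, 0.15, 0.03]
print(select_segments(scores, k=2))
```

In a real model, the selected indices would determine which uncompressed segments are attended to in addition to the sliding window and compressed attention paths.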


Open Access Systematic Review

Artificial Intelligence in Ovarian Cancer: A Systematic Review and Meta-Analysis of Predictive AI Models in Genomics, Radiomics, and Immunotherapy

by

Mauro Francesco Pio Maiorano, Gennaro Cormio, Vera Loizzi and Brigida Anna Maiorano

AI 2025, 6(4), 84; https://doi.org/10.3390/ai6040084 - 18 Apr 2025

Abstract

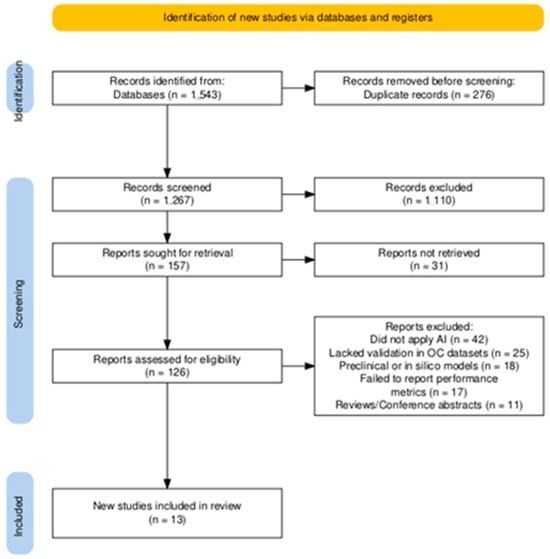

Background/Objectives: Artificial intelligence (AI) is increasingly influencing oncological research by enabling precision medicine in ovarian cancer through enhanced prediction of therapy response and patient stratification. This systematic review and meta-analysis was conducted to assess the performance of AI-driven models across three key

[...] Read more.

Background/Objectives: Artificial intelligence (AI) is increasingly influencing oncological research by enabling precision medicine in ovarian cancer through enhanced prediction of therapy response and patient stratification. This systematic review and meta-analysis was conducted to assess the performance of AI-driven models across three key domains: genomics and molecular profiling, radiomics-based imaging analysis, and prediction of immunotherapy response. Methods: Relevant studies were identified through a systematic search across multiple databases (2020–2025), adhering to PRISMA guidelines. Results: Thirteen studies met the inclusion criteria, involving over 10,000 ovarian cancer patients and encompassing diverse AI models such as machine learning classifiers and deep learning architectures. Pooled AUCs indicated strong predictive performance for genomics-based (0.78), radiomics-based (0.88), and immunotherapy-based (0.77) models. Notably, radiogenomics-based AI integrating imaging and molecular data yielded the highest accuracy (AUC = 0.975), highlighting the potential of multi-modal approaches. Heterogeneity and risk of bias were assessed, and evidence certainty was graded. Conclusions: Overall, AI demonstrated promise in predicting therapeutic outcomes in ovarian cancer, with radiomics and integrated radiogenomics emerging as leading strategies. Future efforts should prioritize explainability, prospective multi-center validation, and integration of immune and spatial transcriptomic data to support clinical implementation and individualized treatment strategies. Unlike earlier reviews, this study synthesizes a broader range of AI applications in ovarian cancer and provides pooled performance metrics across diverse models. It examines the methodological soundness of the selected studies and highlights current gaps and opportunities for clinical translation, offering a comprehensive and forward-looking perspective in the field.


(This article belongs to the Special Issue Artificial Intelligence for Future Healthcare: Advancement, Impact, and Prospect in the Field of Cancer)


Open Access Article

Efficient Detection of Mind Wandering During Reading Aloud Using Blinks, Pitch Frequency, and Reading Rate

by

Amir Rabinovitch, Eden Ben Baruch, Maor Siton, Nuphar Avital, Menahem Yeari and Dror Malka

AI 2025, 6(4), 83; https://doi.org/10.3390/ai6040083 - 18 Apr 2025

Abstract

►▼

Show Figures

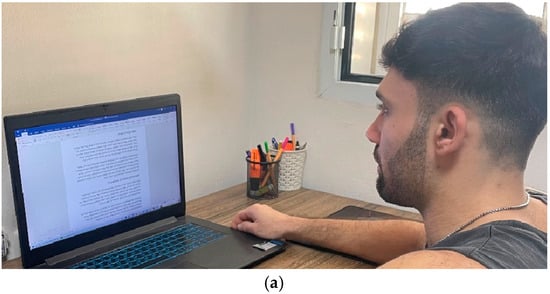

Mind wandering is a common issue among schoolchildren and academic students, often undermining the quality of learning and teaching effectiveness. Current detection methods mainly rely on eye trackers and electrodermal activity (EDA) sensors, focusing on external indicators such as facial movements but neglecting

[...] Read more.

Mind wandering is a common issue among schoolchildren and academic students, often undermining the quality of learning and teaching effectiveness. Current detection methods mainly rely on eye trackers and electrodermal activity (EDA) sensors, focusing on external indicators such as facial movements but neglecting voice detection. These methods are often cumbersome, uncomfortable for participants, and invasive, requiring specialized, expensive equipment that disrupts the natural learning environment. To overcome these challenges, a new algorithm has been developed to detect mind wandering during reading aloud. Based on external indicators like the blink rate, pitch frequency, and reading rate, the algorithm integrates these three criteria to ensure the accurate detection of mind wandering using only a standard computer camera and microphone, making it easy to implement and widely accessible. An experiment with ten participants validated this approach. Participants read aloud a text of 1304 words while the algorithm, incorporating the Viola–Jones model for face and eye detection and pitch-frequency analysis, monitored for signs of mind wandering. A voice activity detection (VAD) technique was also used to recognize human speech. The algorithm achieved 76% accuracy in predicting mind wandering during specific text segments, demonstrating the feasibility of using noninvasive physiological indicators. This method offers a practical, non-intrusive solution for detecting mind wandering through video and audio data, making it suitable for educational settings. Its ability to integrate seamlessly into classrooms holds promise for enhancing student concentration, improving the teacher–student dynamic, and boosting overall teaching effectiveness. By leveraging standard, accessible technology, this approach could pave the way for more personalized, technology-enhanced education systems.


Open Access Article

BEV-CAM3D: A Unified Bird’s-Eye View Architecture for Autonomous Driving with Monocular Cameras and 3D Point Clouds

by

Daniel Ayo Oladele, Elisha Didam Markus and Adnan M. Abu-Mahfouz

AI 2025, 6(4), 82; https://doi.org/10.3390/ai6040082 - 18 Apr 2025

Abstract

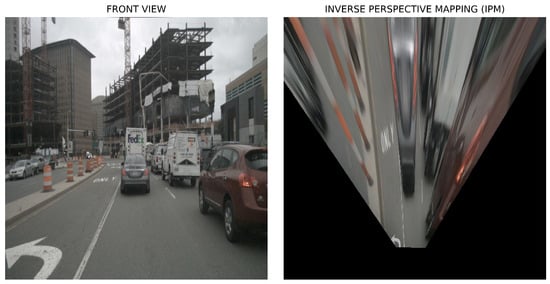

Three-dimensional (3D) visual perception is pivotal for understanding surrounding environments in applications such as autonomous driving and mobile robotics. While LiDAR-based models dominate due to accurate depth sensing, their cost and sparse outputs have driven interest in camera-based systems. However, challenges like cross-domain

[...] Read more.

Three-dimensional (3D) visual perception is pivotal for understanding surrounding environments in applications such as autonomous driving and mobile robotics. While LiDAR-based models dominate due to accurate depth sensing, their cost and sparse outputs have driven interest in camera-based systems. However, challenges like cross-domain degradation and depth estimation inaccuracies persist. This paper introduces BEVCAM3D, a unified bird’s-eye view (BEV) architecture that fuses monocular cameras and LiDAR point clouds to overcome single-sensor limitations. BEVCAM3D integrates a deformable cross-modality attention module for feature alignment and a fast ground segmentation algorithm to reduce computational overhead by 40%. Evaluated on the nuScenes dataset, BEVCAM3D achieves state-of-the-art performance, with a 73.9% mAP and a 76.2% NDS, outperforming existing LiDAR-camera fusion methods like SparseFusion (72.0% mAP) and IS-Fusion (73.0% mAP). Notably, it excels in detecting pedestrians (91.0% AP) and traffic cones (89.9% AP), addressing the class imbalance in autonomous driving scenarios. The framework supports real-time inference at 11.2 FPS with an EfficientDet-B3 backbone and demonstrates robustness under low-light conditions (62.3% nighttime mAP).


(This article belongs to the Section AI in Autonomous Systems)


Open Access Article

The Impact of Ancient Greek Prompts on Artificial Intelligence Image Generation: A New Educational Paradigm

by

Anna Kalargirou, Dimitrios Kotsifakos and Christos Douligeris

AI 2025, 6(4), 81; https://doi.org/10.3390/ai6040081 - 18 Apr 2025

Abstract


Background/Objectives: This article explores the use of Ancient Greek as a prompt language in DALL·E 3, an Artificial Intelligence software for image generation. The research investigates three dimensions of Artificial Intelligence’s ability: (a) the sense and visualization of the concept of distance, (b) the mixing of representational as well as mythic contents, and (c) the visualization of emotions. More specifically, the research not only investigates AI’s potentialities in processing and representing Ancient Greek texts but also attempts to assess its interpretative boundaries. The key question is whether AI can faithfully represent the underlying conceptual and narrative structures of ancient literature or whether its representations are superficial and constrained by algorithmic procedures. Methods: This is a mixed-methods experimental research design examining whether a specified Artificial Intelligence software can sense, understand, and graphically represent linguistic and conceptual structures in the Ancient Greek language. Results: The study highlights Artificial Intelligence’s possibility in classical language education as well as digital humanities regarding linguistic complexity versus AI’s power in interpretation.
Conclusions: The research is a step toward a more extensive discussion on Artificial Intelligence in historical linguistics, digital pedagogy, as well as aesthetic representation by Artificial Intelligence environments.


Open Access Article

Radiomics-Based Machine Learning Models Improve Acute Pancreatitis Severity Prediction

by

Ahmet Yasin Karkas, Gorkem Durak, Onder Babacan, Timurhan Cebeci, Emre Uysal, Halil Ertugrul Aktas, Mehmet Ilhan, Alpay Medetalibeyoglu, Ulas Bagci, Mehmet Semih Cakir and Sukru Mehmet Erturk

AI 2025, 6(4), 80; https://doi.org/10.3390/ai6040080 - 18 Apr 2025

Abstract

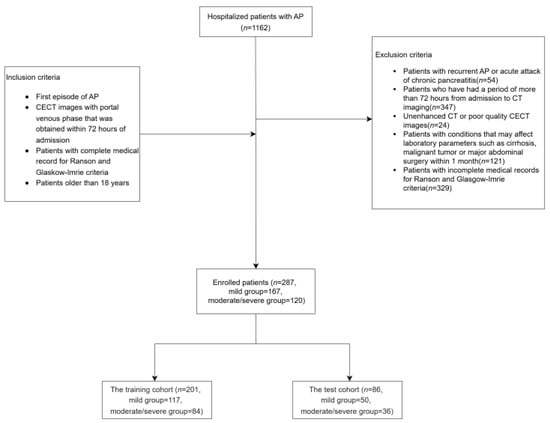

(1) Acute pancreatitis (AP) is a medical emergency associated with high mortality rates. Early and accurate prognosis assessment during admission is crucial for optimizing patient management and outcomes. This study seeks to develop robust radiomics-based machine learning (ML) models to classify the severity

[...] Read more.

(1) Background: Acute pancreatitis (AP) is a medical emergency associated with high mortality rates. Early and accurate prognosis assessment during admission is crucial for optimizing patient management and outcomes. This study seeks to develop robust radiomics-based machine learning (ML) models to classify the severity of AP using contrast-enhanced computed tomography (CECT) scans. (2) Methods: A retrospective cohort of 287 AP patients with CECT scans was analyzed, and clinical data were collected within 72 h of admission. Patients were classified as mild or moderate/severe based on the Revised Atlanta classification. Two radiologists manually segmented the pancreas and peripancreatic regions on CECT scans, and 234 radiomic features were extracted. The performance of the ML algorithms was compared with that of traditional scoring systems, including Ranson and Glasgow-Imrie scores. (3) Results: Traditional severity scoring systems produced AUC values of 0.593 (Ranson, Admission), 0.696 (Ranson, 48 h), 0.677 (Ranson, Cumulative), and 0.663 (Glasgow-Imrie). Using LASSO regression, 12 radiomic features were selected for the ML classifiers. Among these, the best-performing ML classifier achieved an AUC of 0.876 in the training set and 0.777 in the test set. (4) Conclusions: Radiomics-based ML classifiers significantly enhanced the prediction of AP severity in patients undergoing CECT scans within 72 h of admission, outperforming traditional severity scoring systems. This research is the first to successfully predict prognosis by analyzing radiomic features from both pancreatic and peripancreatic tissues using multiple ML algorithms applied to early CECT images.


(This article belongs to the Section Medical & Healthcare AI)


Open Access Article

Cross-Context Stress Detection: Evaluating Machine Learning Models on Heterogeneous Stress Scenarios Using EEG Signals

by

Omneya Attallah, Mona Mamdouh and Ahmad Al-Kabbany

AI 2025, 6(4), 79; https://doi.org/10.3390/ai6040079 - 14 Apr 2025

Abstract

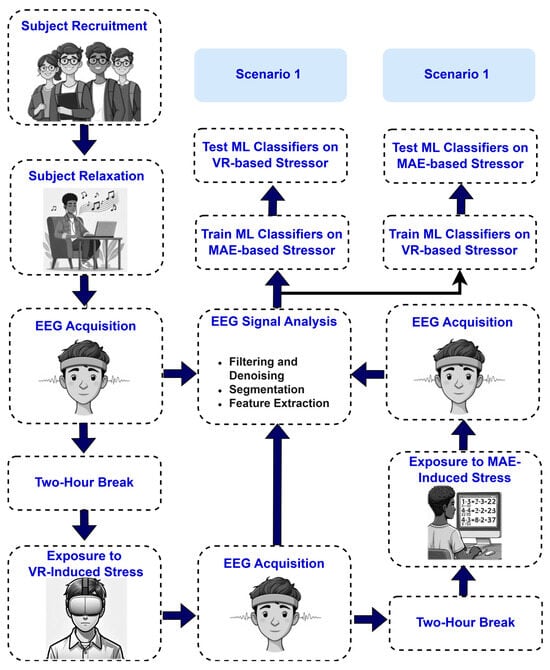

Background/Objectives: This article addresses the challenge of stress detection across diverse contexts. Mental stress is a worldwide concern that substantially affects human health and productivity, rendering it a critical research challenge. Although numerous studies have investigated stress detection through machine learning (ML) techniques,

[...] Read more.

Background/Objectives: This article addresses the challenge of stress detection across diverse contexts. Mental stress is a worldwide concern that substantially affects human health and productivity, rendering it a critical research challenge. Although numerous studies have investigated stress detection through machine learning (ML) techniques, there has been limited research on assessing ML models trained in one context and utilized in another. The objective of ML-based stress detection systems is to create models that generalize across various contexts. Methods: This study examines the generalizability of ML models employing EEG recordings from two stress-inducing contexts: mental arithmetic evaluation (MAE) and virtual reality (VR) gaming. We present a data collection workflow and publicly release a portion of the dataset. Furthermore, we evaluate classical ML models and their generalizability, offering insights into the influence of training data on model performance, data efficiency, and related expenses. EEG data were acquired leveraging MUSE-STM hardware during stressful MAE and VR gaming scenarios. The methodology entailed preprocessing EEG signals using wavelet denoising mother wavelets, assessing individual and aggregated sensor data, and employing three ML models—linear discriminant analysis (LDA), support vector machine (SVM), and K-nearest neighbors (KNN)—for classification purposes. Results: In Scenario 1, where MAE was employed for training and VR for testing, the TP10 electrode attained an average accuracy of 91.42% across all classifiers and participants, whereas the SVM classifier achieved the highest average accuracy of 95.76% across all participants. 
In Scenario 2, adopting VR data as the training data and MAE data as the testing data, the maximum average accuracy achieved was 88.05% with the combination of TP10, AF8, and TP9 electrodes across all classifiers and participants, whereas the LDA model attained the peak average accuracy of 90.27% among all participants. The optimal performance was achieved with Symlets 4 and Daubechies-2 for Scenarios 1 and 2, respectively. Conclusions: The results demonstrate that although ML models exhibit generalization capabilities across stressors, their performance is significantly influenced by the alignment between training and testing contexts, as evidenced by systematic cross-context evaluations using an 80/20 train–test split per participant and quantitative metrics (accuracy, precision, recall, and F1-score) averaged across participants. The observed variations in performance across stress scenarios, classifiers, and EEG sensors provide empirical support for this claim.


(This article belongs to the Special Issue Artificial Intelligence in Biomedical Engineering: Challenges and Developments)


Open Access Article

On the Integration of Social Context for Enhanced Fake News Detection Using Multimodal Fusion Attention Mechanism

by

Hachemi Nabil Dellys, Halima Mokeddem and Layth Sliman

AI 2025, 6(4), 78; https://doi.org/10.3390/ai6040078 - 11 Apr 2025

Abstract

►▼

Show Figures

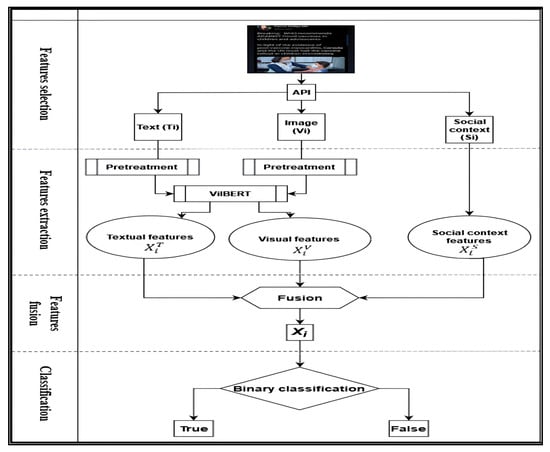

Detecting fake news has become a critical challenge in today’s information-dense society. Existing research on fake news detection predominantly emphasizes multi-modal approaches, focusing primarily on textual and visual features. However, despite its clear importance, the integration of social context has received limited attention

[...] Read more.

Detecting fake news has become a critical challenge in today’s information-dense society. Existing research on fake news detection predominantly emphasizes multi-modal approaches, focusing primarily on textual and visual features. However, despite its clear importance, the integration of social context has received limited attention in the literature. To address this gap, this study proposes a novel three-dimensional multimodal fusion framework that integrates textual, visual, and social context features for effective fake news detection on social media platforms. The proposed methodology leverages an advanced Vision-and-Language Bidirectional Encoder Representations from Transformers multi-task model to extract fused attention features from text and images concurrently, capturing intricate inter-modal correlations. Comprehensive experiments validate the efficacy of the proposed approach. The results demonstrate that the proposed solution achieves the highest balanced accuracy of 77%, surpassing other baseline models. Furthermore, the incorporation of social context features significantly enhances model performance. The proposed multimodal architecture also outperforms state-of-the-art approaches, providing a robust and scalable framework for fake news detection using artificial intelligence. This study contributes to advancing the field by offering a comprehensive and practical engineering solution for combating fake news.

Full article

Figure 1

Open AccessArticle

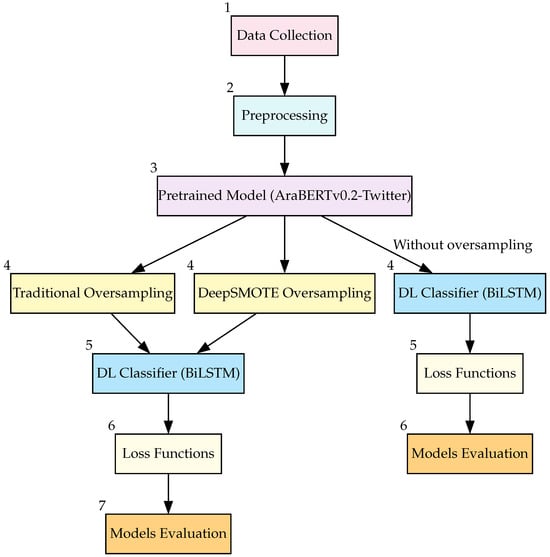

Enhancing the Classification of Imbalanced Arabic Medical Questions Using DeepSMOTE

by

Bushra Al-Smadi, Bassam Hammo, Hossam Faris and Pedro A. Castillo

AI 2025, 6(4), 77; https://doi.org/10.3390/ai6040077 - 11 Apr 2025

Abstract

The growing demand for telemedicine has highlighted the need for automated healthcare services, particularly in medical question classification. This study presents a deep learning model designed to address key challenges in telemedicine, including class imbalance and accurate routing of Arabic medical questions to the correct specialties. The model combines AraBERTv0.2-Twitter, fine-tuned for informal Arabic, with Bidirectional Long Short-Term Memory (BiLSTM) networks to capture deep semantic relationships in medical text. We used a labeled dataset of 5000 Arabic consultation records from Altibbi, covering five key medical specialties selected for their clinical relevance and frequency. The data underwent preprocessing to remove noise and normalize text. We employed stratified sampling to ensure representative distribution across the selected medical specialties. We evaluate multiple models using macro precision, macro recall, macro F1-score, weighted F1-score, and G-Mean. Our results demonstrate that DeepSMOTE combined with cross-entropy loss achieves the best performance. The findings offer statistically significant improvements and have practical implications for improving screening and patient routing in telemedicine platforms.
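
The abstract above lists macro recall and G-Mean among its metrics without defining them. The following is a minimal sketch, assuming the common multi-class definition of G-Mean as the geometric mean of per-class recall; the paper may use a different variant. The labels and predictions are made-up illustration data, not results from the study.

```python
from math import prod


def per_class_recall(y_true, y_pred):
    """Return {label: recall} for every label that occurs in y_true."""
    recalls = {}
    for label in sorted(set(y_true)):
        support = sum(1 for t in y_true if t == label)
        hits = sum(1 for t, p in zip(y_true, y_pred) if t == label and p == label)
        recalls[label] = hits / support
    return recalls


def macro_recall(y_true, y_pred):
    """Unweighted mean of per-class recall: every class counts equally."""
    recalls = per_class_recall(y_true, y_pred)
    return sum(recalls.values()) / len(recalls)


def g_mean(y_true, y_pred):
    """Geometric mean of per-class recall (assumed definition)."""
    recalls = per_class_recall(y_true, y_pred)
    return prod(recalls.values()) ** (1 / len(recalls))


# Hypothetical imbalanced toy data: class "a" dominates class "b".
y_true = ["a", "a", "a", "a", "b", "b"]
y_pred = ["a", "a", "a", "a", "a", "b"]

print(macro_recall(y_true, y_pred))      # 0.75
print(round(g_mean(y_true, y_pred), 4))  # 0.7071
```

Because the geometric mean drops to zero when any class is never recalled, it penalizes a model that ignores a minority class more strongly than plain accuracy does, which is one reason it is often reported for imbalanced data.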

(This article belongs to the Section Medical & Healthcare AI)

►▼

Show Figures

Figure 1

Open AccessArticle

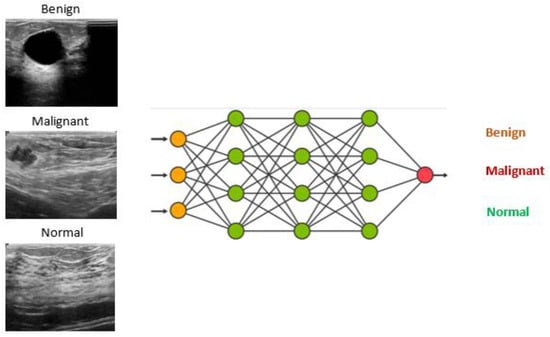

FPGA Hardware Acceleration of AI Models for Real-Time Breast Cancer Classification

by

Ayoub Mhaouch, Wafa Gtifa and Mohsen Machhout

AI 2025, 6(4), 76; https://doi.org/10.3390/ai6040076 - 11 Apr 2025

Abstract

Breast cancer detection is a critical task in healthcare, requiring fast, accurate, and efficient diagnostic tools. However, the high computational demands and latency of deep learning models in medical imaging present significant challenges, especially in resource-constrained environments. This paper addresses these challenges by presenting an FPGA hardware accelerator tailored for breast cancer classification, leveraging the Zynq XC7Z020 SoC. The system integrates FPGA-accelerated layers with an ARM Cortex-A9 processor to optimize both performance and resource efficiency. We developed modular IP cores, including Conv2D, Average Pooling, and ReLU, using Vivado HLS to maximize FPGA resource utilization. By adopting 8-bit fixed-point arithmetic, the design achieves a 15.8% reduction in execution time compared to traditional CPU-based implementations while maintaining high classification accuracy. Additionally, our optimized approach significantly enhances energy efficiency, reducing power consumption from 3.8 W to 1.4 W, a 63.15% reduction. This improvement makes our design highly suitable for real-time, power-sensitive applications, particularly in embedded and edge computing environments. Furthermore, it underscores the scalability and efficiency of FPGA-based AI solutions for healthcare diagnostics, enabling faster and more energy-efficient deep learning inference on resource-constrained devices.

(This article belongs to the Section Medical & Healthcare AI)

►▼

Show Figures

Figure 1

Open AccessArticle

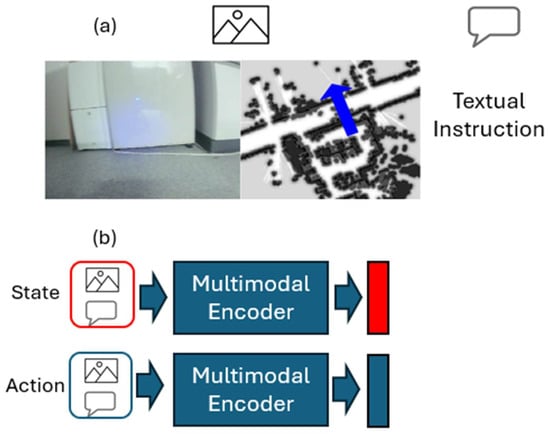

History-Aware Multimodal Instruction-Oriented Policies for Navigation Tasks

by

Renas Mukhametzianov and Hidetaka Nambo

AI 2025, 6(4), 75; https://doi.org/10.3390/ai6040075 - 11 Apr 2025

Abstract

The rise of large-scale language models and multimodal transformers has enabled instruction-based policies, such as vision-and-language navigation. To leverage their general world knowledge, we propose multimodal annotations for action options and support selection from a dynamic, describable action space. Our framework employs a multimodal transformer that processes front-facing camera images, light detection and ranging (LIDAR) point clouds, and tasks expressed as textual instructions to produce a history-aware decision policy for mobile robot navigation. Our approach leverages a pretrained vision–language encoder and integrates it with a custom causal generative pretrained transformer (GPT) decoder to predict action sequences within a state–action history. We propose a trainable attention score mechanism to efficiently select the most suitable action from a variable set of possible options. Action options are text–image pairs encoded using the same multimodal encoder employed for environment states. This approach of annotating and dynamically selecting actions is applicable to broader multidomain decision-making tasks. We compared two baseline models, ViLT (vision-and-language transformer) and FLAVA (foundational language and vision alignment), and found that FLAVA achieves superior performance within an 8 GB video memory budget during training. Experiments were conducted in both simulated and real-world environments using our custom datasets for instructed task completion episodes, demonstrating strong prediction accuracy. These results highlight the potential of multimodal, dynamic action spaces for instruction-based robot navigation and beyond.

(This article belongs to the Section AI in Autonomous Systems)

Beautimeter: Harnessing GPT for Assessing Architectural and Urban Beauty Based on the 15 Properties of Living Structure

by

Bin Jiang

AI 2025, 6(4), 74; https://doi.org/10.3390/ai6040074 - 10 Apr 2025

Abstract

Beautimeter is a new tool powered by generative pre-trained transformer (GPT) technology, designed to evaluate architectural and urban beauty. Rooted in Christopher Alexander’s theory of centers, this work builds on the idea that all environments possess, to varying degrees, an innate sense of life. Alexander identified 15 fundamental properties, such as levels of scale and thick boundaries, that characterize living structure, which Beautimeter uses as a basis for its analysis. By integrating GPT’s advanced natural language processing capabilities, Beautimeter assesses the extent to which a structure embodies these 15 properties, enabling a nuanced evaluation of architectural and urban aesthetics. Using ChatGPT4o, the tool helps users generate insights into the perceived beauty and coherence of spaces. We conducted a series of case studies, evaluating images of architectural and urban environments, as well as carpets, paintings, and other artifacts. The results demonstrate Beautimeter’s effectiveness in analyzing aesthetic qualities across diverse contexts. Our findings suggest that by leveraging GPT technology, Beautimeter offers architects, urban planners, and designers a powerful tool to create spaces that resonate deeply with people. This paper also explores the implications of such technology for architecture and urban design, highlighting its potential to enhance both the design process and the assessment of built environments.

Full article

Figure 1

Open AccessArticle

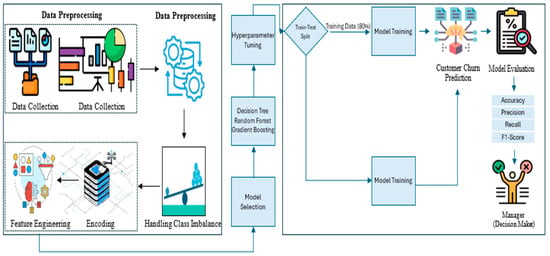

Bridging Predictive Insights and Retention Strategies: The Role of Account Balance in Banking Churn Prediction

by

Tahsien Al-Quraishi, Osamah Albahri, Ahmed Albahri, Abdullah Alamoodi and Iman Mohammed Sharaf

AI 2025, 6(4), 73; https://doi.org/10.3390/ai6040073 - 10 Apr 2025

Abstract

The banking industry faces significant challenges from high customer churn rates, which threaten long-term revenue generation. Traditionally, churn models assess service quality using customer satisfaction metrics; however, these subjective variables often yield low predictive accuracy. This study examines the relationship between customer attrition and account balance using decision trees (DT), random forests (RF), and gradient-boosting machines (GBM). This research utilises a customer churn dataset and applies synthetic oversampling to balance class distribution during the preprocessing of financial variables. Account balance service is the primary factor in predicting customer churn, as it yields more accurate predictions compared to traditional subjective assessment methods. Among the tested models, boosting methods achieved the highest predictive performance. The evaluation of research data highlights the critical role of financial indicators in shaping effective customer retention strategies. By leveraging machine learning intelligence, banks can make more informed decisions, attract new clients, and mitigate churn risk, ultimately enhancing long-term financial results.

(This article belongs to the Section AI Systems: Theory and Applications)

Multimodal Data Fusion for Tabular and Textual Data: Zero-Shot, Few-Shot, and Fine-Tuning of Generative Pre-Trained Transformer Models

by

Shadi Jaradat, Mohammed Elhenawy, Richi Nayak, Alexander Paz, Huthaifa I. Ashqar and Sebastien Glaser

AI 2025, 6(4), 72; https://doi.org/10.3390/ai6040072 - 7 Apr 2025

Abstract

In traffic safety analysis, previous research has often focused on tabular data or textual crash narratives in isolation, neglecting the potential benefits of a hybrid multimodal approach. This study introduces the Multimodal Data Fusion (MDF) framework, which fuses tabular data with textual narratives

[...] Read more.

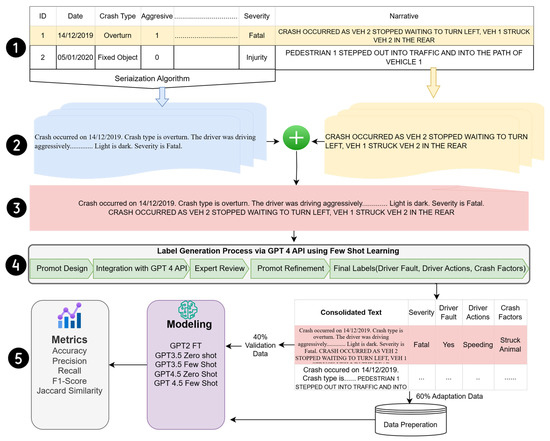

In traffic safety analysis, previous research has often focused on tabular data or textual crash narratives in isolation, neglecting the potential benefits of a hybrid multimodal approach. This study introduces the Multimodal Data Fusion (MDF) framework, which fuses tabular data with textual narratives by leveraging advanced Large Language Models (LLMs), such as GPT-2, GPT-3.5, and GPT-4.5, using zero-shot (ZS), few-shot (FS), and fine-tuning (FT) learning strategies. We employed few-shot learning with GPT-4.5 to generate new labels for traffic crash analysis, such as driver fault, driver actions, and crash factors, alongside the existing label for severity. Our methodology was tested on crash data from the Missouri State Highway Patrol, demonstrating significant improvements in model performance. GPT-2 (fine-tuned) was used as the baseline model, against which more advanced models were evaluated. GPT-4.5 few-shot learning achieved 98.9% accuracy for crash severity prediction and 98.1% accuracy for driver fault classification. In crash factor extraction, GPT-4.5 few-shot achieved the highest Jaccard score (82.9%), surpassing GPT-3.5 and fine-tuned GPT-2 models. Similarly, in driver actions extraction, GPT-4.5 few-shot attained a Jaccard score of 73.1%, while fine-tuned GPT-2 closely followed with 72.2%, demonstrating that task-specific fine-tuning can achieve performance close to state-of-the-art models when adapted to domain-specific data. These findings highlight the superior performance of GPT-4.5 few-shot learning, particularly in classification and information extraction tasks, while also underscoring the effectiveness of fine-tuning on domain-specific datasets to bridge performance gaps with more advanced models. The MDF framework’s success demonstrates its potential for broader applications beyond traffic crash analysis, particularly in domains where labeled data are scarce and predictive modeling is essential.
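
The abstract above reports Jaccard scores for the extraction tasks but does not say how they are computed. The following is a minimal sketch, assuming each record carries a set of extracted labels and the reported figure is the per-record Jaccard index averaged over records; that averaging scheme is an assumption, and the label strings are hypothetical illustrations rather than data from the study.

```python
def jaccard(predicted, reference):
    """Jaccard index |A ∩ B| / |A ∪ B| between two collections of labels."""
    pred, ref = set(predicted), set(reference)
    if not pred and not ref:
        # Convention chosen here: two empty label sets count as a perfect match.
        return 1.0
    return len(pred & ref) / len(pred | ref)


def mean_jaccard(pred_lists, ref_lists):
    """Average the per-record Jaccard index over all records."""
    scores = [jaccard(p, r) for p, r in zip(pred_lists, ref_lists)]
    return sum(scores) / len(scores)


# Hypothetical crash-factor labels for one record.
predicted = ["speeding", "wet road"]
reference = ["speeding", "wet road", "distraction"]

print(round(jaccard(predicted, reference), 3))  # 0.667
```

Unlike exact-match accuracy, this score gives partial credit when a model recovers some but not all of the reference labels, which suits extraction tasks where each record can have several correct labels.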

(This article belongs to the Section AI Systems: Theory and Applications)

From Camera Image to Active Target Tracking: Modelling, Encoding and Metrical Analysis for Unmanned Underwater Vehicles

by

Samuel Appleby, Giacomo Bergami and Gary Ushaw

AI 2025, 6(4), 71; https://doi.org/10.3390/ai6040071 - 7 Apr 2025

Abstract

Marine mammal monitoring, a growing field of research, is critical to cetacean conservation. Traditional ‘tagging’ attaches sensors such as GPS to such animals, though these are intrusive and susceptible to infection and, ultimately, death. A less intrusive approach exploits UUV commanded by a

[...] Read more.

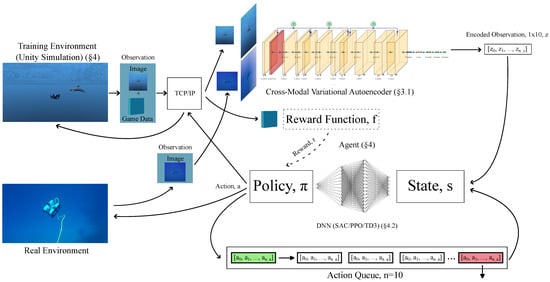

Marine mammal monitoring, a growing field of research, is critical to cetacean conservation. Traditional ‘tagging’ attaches sensors such as GPS to such animals, though these are intrusive and susceptible to infection and, ultimately, death. A less intrusive approach exploits UUV commanded by a human operator above ground. The development of AI for autonomous underwater vehicle navigation models training environments in simulation, providing visual and physical fidelity suitable for sim-to-real transfer. Previous solutions, including UVMS and L2D, provide only satisfactory results, due to poor environment generalisation while sensors including sonar create environmental disturbances. Though rich in features, image data suffer from high dimensionality, providing a state space too great for many machine learning tasks. Underwater environments, susceptible to image noise, further complicate this issue. We propose SWiMM2.0, coupling a Unity simulation modelling of a BLUEROV UUV with a DRL backend. A pre-processing step exploits a state-of-the-art CMVAE, reducing dimensionality while minimising data loss. Sim-to-real generalisation is validated by prior research. Custom behaviour metrics, unbiased to the naked eye and unprecedented in current ROV simulators, link our objectives ensuring successful ROV behaviour while tracking targets. Our experiments show that SAC maximises the former, achieving near-perfect behaviour while exploiting image data alone.

(This article belongs to the Special Issue Artificial Intelligence-Based Object Detection and Tracking: Theory and Applications)

Topics

Topic in

AI, Buildings, Computers, Drones, Entropy, Symmetry

Applications of Machine Learning in Large-Scale Optimization and High-Dimensional Learning

Topic Editors: Jeng-Shyang Pan, Junzo Watada, Vaclav Snasel, Pei Hu

Deadline: 30 April 2025

Topic in

AI, Applied Sciences, Education Sciences, Electronics, Information

Explainable AI in Education

Topic Editors: Guanfeng Liu, Karina Luzia, Luke Bozzetto, Tommy Yuan, Pengpeng Zhao

Deadline: 30 June 2025

Topic in

Applied Sciences, Energies, Buildings, Smart Cities, AI

Smart Electric Energy in Buildings

Topic Editors: Daniel Villanueva Torres, Ali Hainoun, Sergio Gómez Melgar

Deadline: 15 July 2025

Topic in

AI, BDCC, Fire, GeoHazards, Remote Sensing

AI for Natural Disasters Detection, Prediction and Modeling

Topic Editors: Moulay A. Akhloufi, Mozhdeh Shahbazi

Deadline: 25 July 2025

Special Issues

Special Issue in

AI

Artificial Intelligence in Agriculture

Guest Editor: Arslan Munir

Deadline: 30 April 2025

Special Issue in

AI

AI Bias in the Media and Beyond

Guest Editors: Venetia Papa, Theodoros Kouros, Savvas A. Chatzichristofis

Deadline: 30 June 2025

Special Issue in

AI

Machine Learning in Bioinformatics: Current Research and Development

Guest Editors: Yinghao Wu, Kalyani Dhusia, Zhaoqian Su

Deadline: 30 June 2025

Special Issue in

AI

Artificial Intelligence-Based Object Detection and Tracking: Theory and Applications

Guest Editors: Di Yuan, Xiu Shu

Deadline: 30 June 2025